mirror of

https://github.com/LCTT/TranslateProject.git

synced 2025-03-27 02:30:10 +08:00

commit

f62f6c2ab7

published

20150716 A Week With GNOME As My Linux Desktop--What They Get Right & Wrong - Page 1 - Introduction.md20150716 A Week With GNOME As My Linux Desktop--What They Get Right & Wrong - Page 2 - The GNOME Desktop.md20150716 A Week With GNOME As My Linux Desktop--What They Get Right & Wrong - Page 3 - GNOME Applications.md20150716 A Week With GNOME As My Linux Desktop--What They Get Right & Wrong - Page 4 - GNOME Settings.md20150716 A Week With GNOME As My Linux Desktop--What They Get Right & Wrong - Page 5 - Conclusion.md20150728 How to Update Linux Kernel for Improved System Performance.md20150728 Tips to Create ISO from CD, Watch User Activity and Check Memory Usages of Browser.md20150806 Linux FAQs with Answers--How to fix 'ImportError--No module named wxversion' on Linux.md20150811 Darkstat is a Web Based Network Traffic Analyzer--Install it on Linux.md20150811 How to download apk files from Google Play Store on Linux.md20150813 Ubuntu Want To Make It Easier For You To Install The Latest Nvidia Linux Driver.md20150816 Ubuntu NVIDIA Graphics Drivers PPA Is Ready For Action.md20150816 shellinabox--A Web based AJAX Terminal Emulator.md

sources

news

20150818 Linux Without Limits--IBM Launch LinuxONE Mainframes.md20150818 Ubuntu Linux is coming to IBM mainframes.md

share

20150610 Tickr Is An Open-Source RSS News Ticker for Linux Desktops.md20150817 Top 5 Torrent Clients For Ubuntu Linux.md20150821 Top 4 open source command-line email clients.md

talk

20150818 A Linux User Using Windows 10 After More than 8 Years--See Comparison.md20150818 Debian GNU or Linux Birthday-- A 22 Years of Journey and Still Counting.md20150818 Docker Working on Security Components Live Container Migration.md20150819 Linuxcon--The Changing Role of the Server OS.md20150820 A Look at What's Next for the Linux Kernel.md20150820 LinuxCon's surprise keynote speaker Linus Torvalds muses about open-source software.md20150820 Which Open Source Linux Distributions Would Presidential Hopefuls Run.md20150820 Why did you start using Linux.md20150821 Linux 4.3 Kernel To Add The MOST Driver Subsystem.md

tech

20150205 Install Strongswan - A Tool to Setup IPsec Based VPN in Linux.md20150527 Howto Manage Host Using Docker Machine in a VirtualBox.md20150717 How to monitor NGINX with Datadog - Part 3.md20150730 Howto Configure Nginx as Rreverse Proxy or Load Balancer with Weave and Docker.md20150803 Managing Linux Logs.md20150812 Linux Tricks--Play Game in Chrome Text-to-Speech Schedule a Job and Watch Commands in Linux.md20150813 Howto Run JBoss Data Virtualization GA with OData in Docker Container.md20150813 Linux and Unix Test Disk I O Performance With dd Command.md20150813 Linux file system hierarchy v2.0.md20150813 Ubuntu Want To Make It Easier For You To Install The Latest Nvidia Linux Driver.md20150817 How to Install OsTicket Ticketing System in Fedora 22 or Centos 7.md20150817 Linux FAQs with Answers--How to fix Wireshark GUI freeze on Linux desktop.md20150818 How to monitor stock quotes from the command line on Linux.md20150821 How to Install Visual Studio Code in Linux.md20150821 Linux FAQs with Answers--How to check MariaDB server version.md

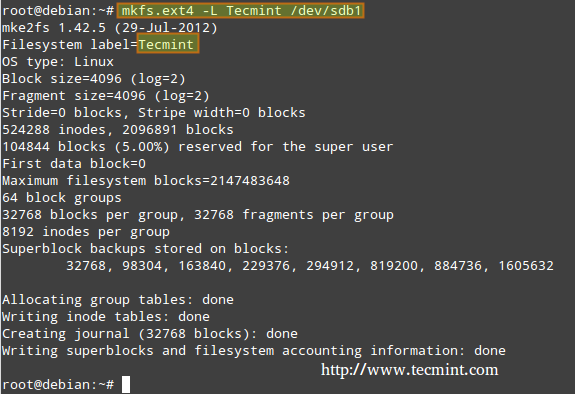

LFCS

Part 1 - LFCS--How to use GNU 'sed' Command to Create Edit and Manipulate files in Linux.mdPart 10 - LFCS--Understanding and Learning Basic Shell Scripting and Linux Filesystem Troubleshooting.mdPart 2 - LFCS--How to Install and Use vi or vim as a Full Text Editor.mdPart 3 - LFCS--How to Archive or Compress Files and Directories Setting File Attributes and Finding Files in Linux.mdPart 4 - LFCS--Partitioning Storage Devices Formatting Filesystems and Configuring Swap Partition.mdPart 5 - LFCS--How to Mount or Unmount Local and Network Samba and NFS Filesystems in Linux.mdPart 6 - LFCS--Assembling Partitions as RAID Devices – Creating & Managing System Backups.mdPart 7 - LFCS--Managing System Startup Process and Services SysVinit Systemd and Upstart.mdPart 8 - LFCS--Managing Users and Groups File Permissions and Attributes and Enabling sudo Access on Accounts.mdPart 9 - LFCS--Linux Package Management with Yum RPM Apt Dpkg Aptitude and Zypper.md

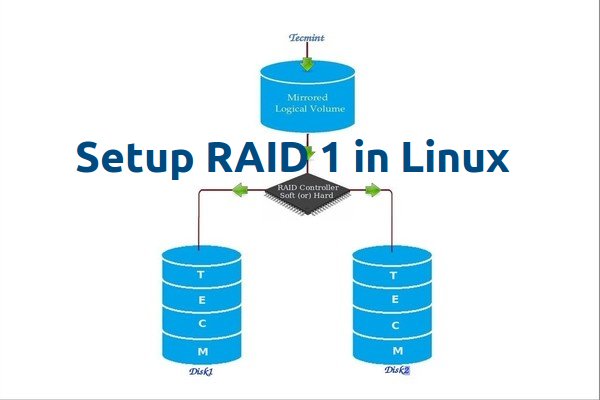

RAID

Part 3 - Setting up RAID 1 (Mirroring) using 'Two Disks' in Linux.mdPart 4 - Creating RAID 5 (Striping with Distributed Parity) in Linux.md

RHCE

Part 1 - RHCE Series--How to Setup and Test Static Network Routing.mdPart 2 - How to Perform Packet Filtering Network Address Translation and Set Kernel Runtime Parameters.mdPart 3 - How to Produce and Deliver System Activity Reports Using Linux Toolsets.mdPart 4 - Using Shell Scripting to Automate Linux System Maintenance Tasks.md

RHCSA Series

translated

share

tech

20150527 Howto Manage Host Using Docker Machine in a VirtualBox.md20150716 A Week With GNOME As My Linux Desktop--What They Get Right & Wrong - Page 1 - Introduction.md20150716 A Week With GNOME As My Linux Desktop--What They Get Right & Wrong - Page 2 - The GNOME Desktop.md20150716 A Week With GNOME As My Linux Desktop--What They Get Right & Wrong - Page 3 - GNOME Applications.md20150716 A Week With GNOME As My Linux Desktop--What They Get Right & Wrong - Page 4 - GNOME Settings.md20150716 A Week With GNOME As My Linux Desktop--What They Get Right & Wrong - Page 5 - Conclusion.md20150717 How to monitor NGINX with Datadog - Part 3.md20150728 How to Update Linux Kernel for Improved System Performance.md20150730 Howto Configure Nginx as Rreverse Proxy or Load Balancer with Weave and Docker.md20150803 Managing Linux Logs.md20150811 How to Install Snort and Usage in Ubuntu 15.04.md20150812 Linux Tricks--Play Game in Chrome Text-to-Speech Schedule a Job and Watch Commands in Linux.md20150816 How to migrate MySQL to MariaDB on Linux.md20150817 Linux FAQs with Answers--How to count the number of threads in a process on Linux.mdLinux and Unix Test Disk IO Performance With dd Command.MD

RHCE

Part 1 - RHCE Series--How to Setup and Test Static Network Routing.mdPart 2 - How to Perform Packet Filtering Network Address Translation and Set Kernel Runtime Parameters.mdPart 3 - How to Produce and Deliver System Activity Reports Using Linux Toolsets.md

RHCSA

@ -0,0 +1,56 @@

|

||||

一周 GNOME 之旅:品味它和 KDE 的是是非非(第一节 介绍)

|

||||

================================================================================

|

||||

|

||||

*作者声明: 如果你是因为某种神迹而在没看标题的情况下点开了这篇文章,那么我想再重申一些东西……这是一篇评论文章,文中的观点都是我自己的,不代表 Phoronix 网站和 Michael 的观点。它们完全是我自己的想法。*

|

||||

|

||||

另外,没错……这可能是一篇引战的文章。我希望 KDE 和 Gnome 社团变得更好一些,因为我想发起一个讨论并反馈给他们。为此,当我想指出(我所看到的)一个瑕疵时,我会尽量地做到具体而直接。这样,相关的讨论也能做到同样的具体和直接。再次声明:本文另一可选标题为“死于成千上万的[纸割][1]”(LCTT 译注:paper cuts——纸割,被纸片割伤——指易修复但烦人的缺陷。Ubuntu 从 9.10 开始,发起了 [One Hundred Papercuts][1] 项目,用于修复那些小而烦人的易用性问题)。

|

||||

|

||||

现在,重申完毕……文章开始。

|

||||

|

||||

|

||||

|

||||

当我把[《评价 Fedora 22 KDE 》][2]一文发给 Michael 时,感觉很不是滋味。不是因为我不喜欢 KDE,或者不待见 Fedora,远非如此。事实上,我刚开始想把我的 T450s 的系统换为 Arch Linux 时,马上又决定放弃了,因为我很享受 fedora 在很多方面所带来的便捷性。

|

||||

|

||||

我感觉很不是滋味的原因是 Fedora 的开发者花费了大量的时间和精力在他们的“工作站”产品上,但是我却一点也没看到。在使用 Fedora 时,我并没用采用那些主要开发者希望用户采用的那种使用方式,因此我也就体验不到所谓的“ Fedora 体验”。它感觉就像一个人评价 Ubuntu 时用的却是 Kubuntu,评价 OS X 时用的却是 Hackintosh,或者评价 Gentoo 时用的却是 Sabayon。根据论坛里大量读者对 Michael 的说法,他们在评价各种发行版时都是使用的默认设置——我也不例外。但是我还是认为这些评价应该在“真实”配置下完成,当然我也知道在给定的情况下评论某些东西也的确是有价值的——无论是好是坏。

|

||||

|

||||

正是在怀着这种态度的情况下,我决定跳到 Gnome 这个水坑里来泡泡澡。

|

||||

|

||||

但是,我还要在此多加一个声明……我在这里所看到的 KDE 和 Gnome 都是打包在 Fedora 中的。OpenSUSE、 Kubuntu、 Arch等发行版的各个桌面可能有不同的实现方法,使得我这里所说的具体的“痛点”跟你所用的发行版有所不同。还有,虽然用了这个标题,但这篇文章将会是一篇“很 KDE”的重量级文章。之所以这样称呼,是因为我在“使用” Gnome 之后,才知道 KDE 的“纸割”到底有多么的多。

|

||||

|

||||

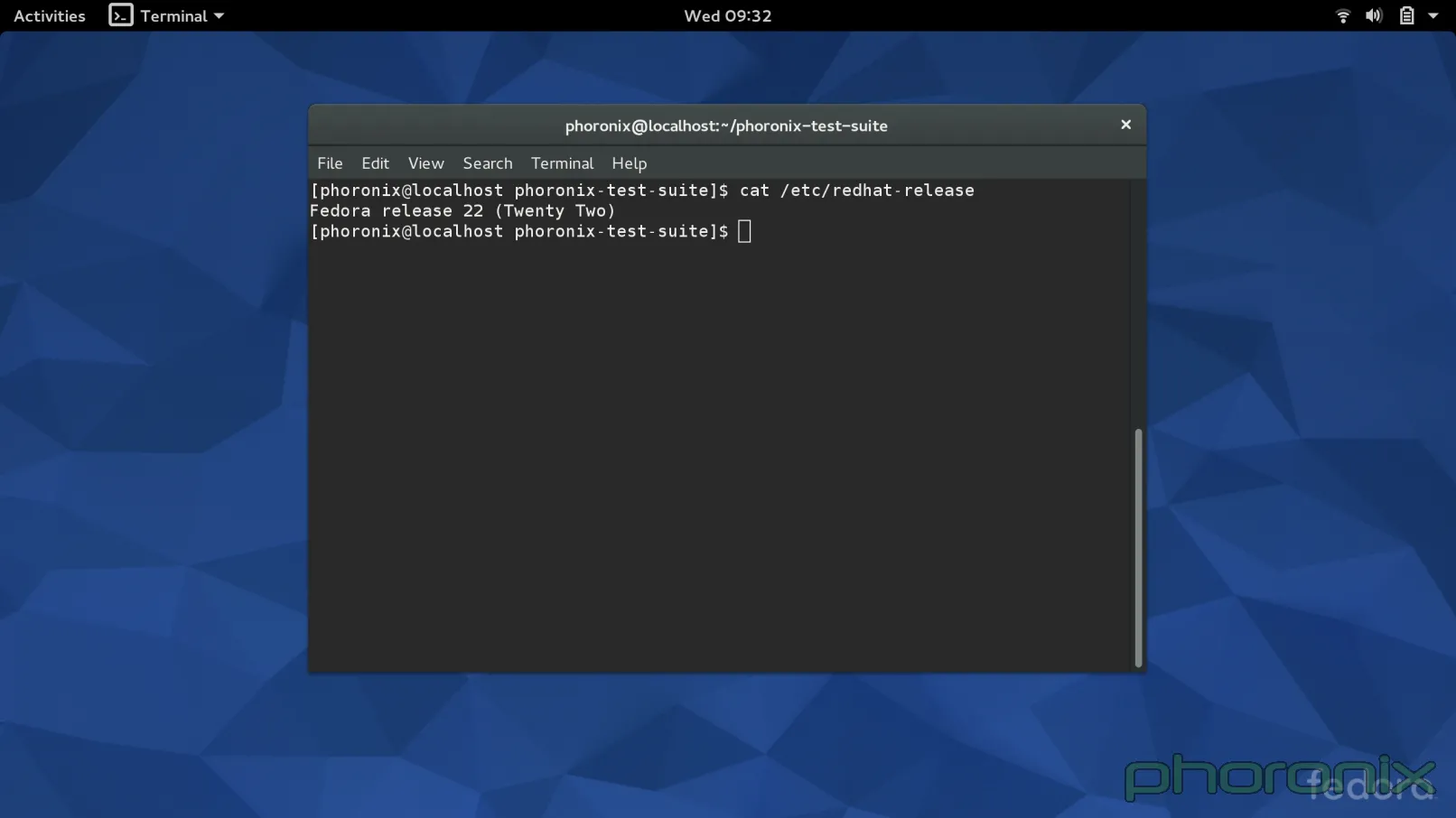

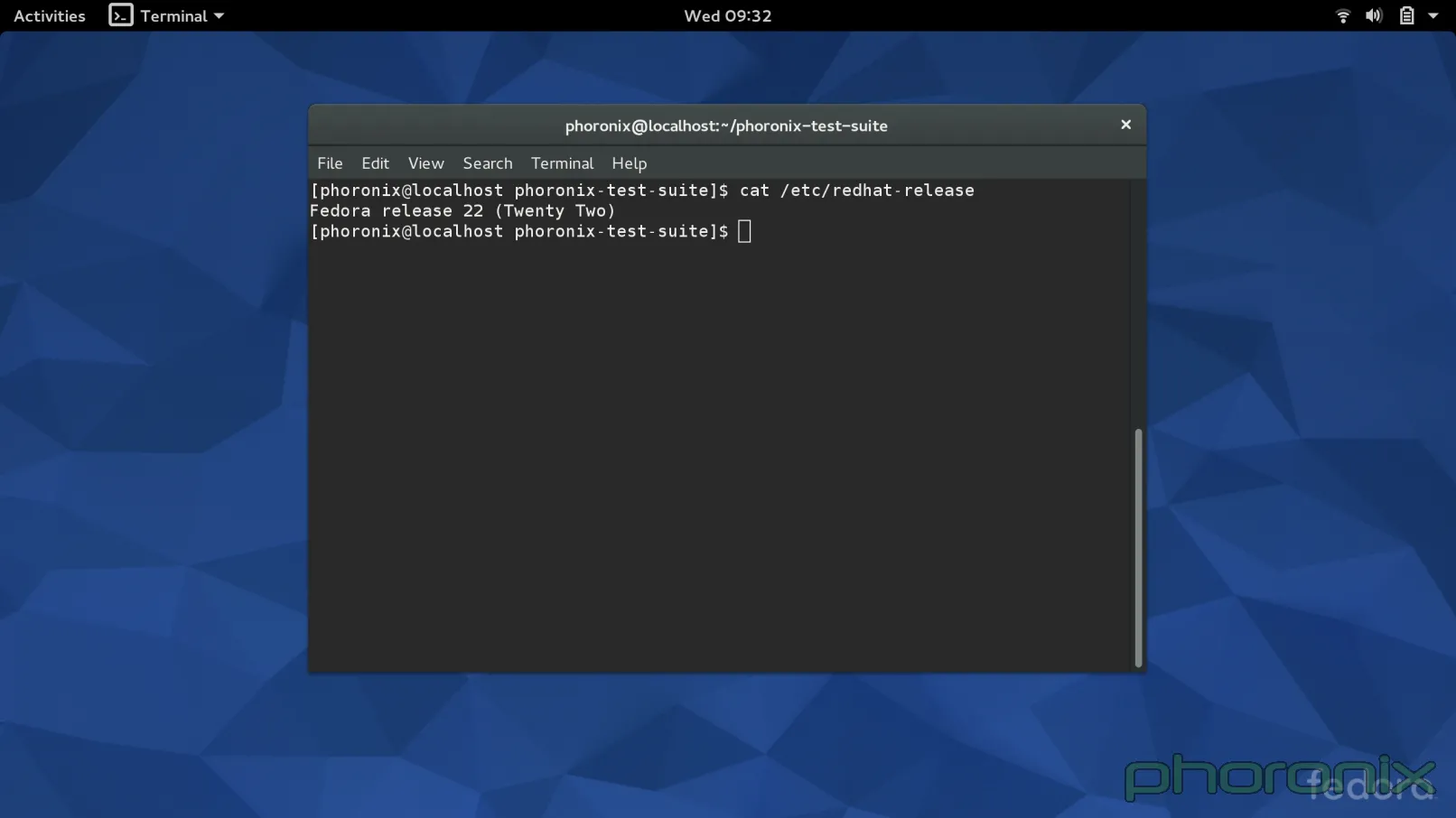

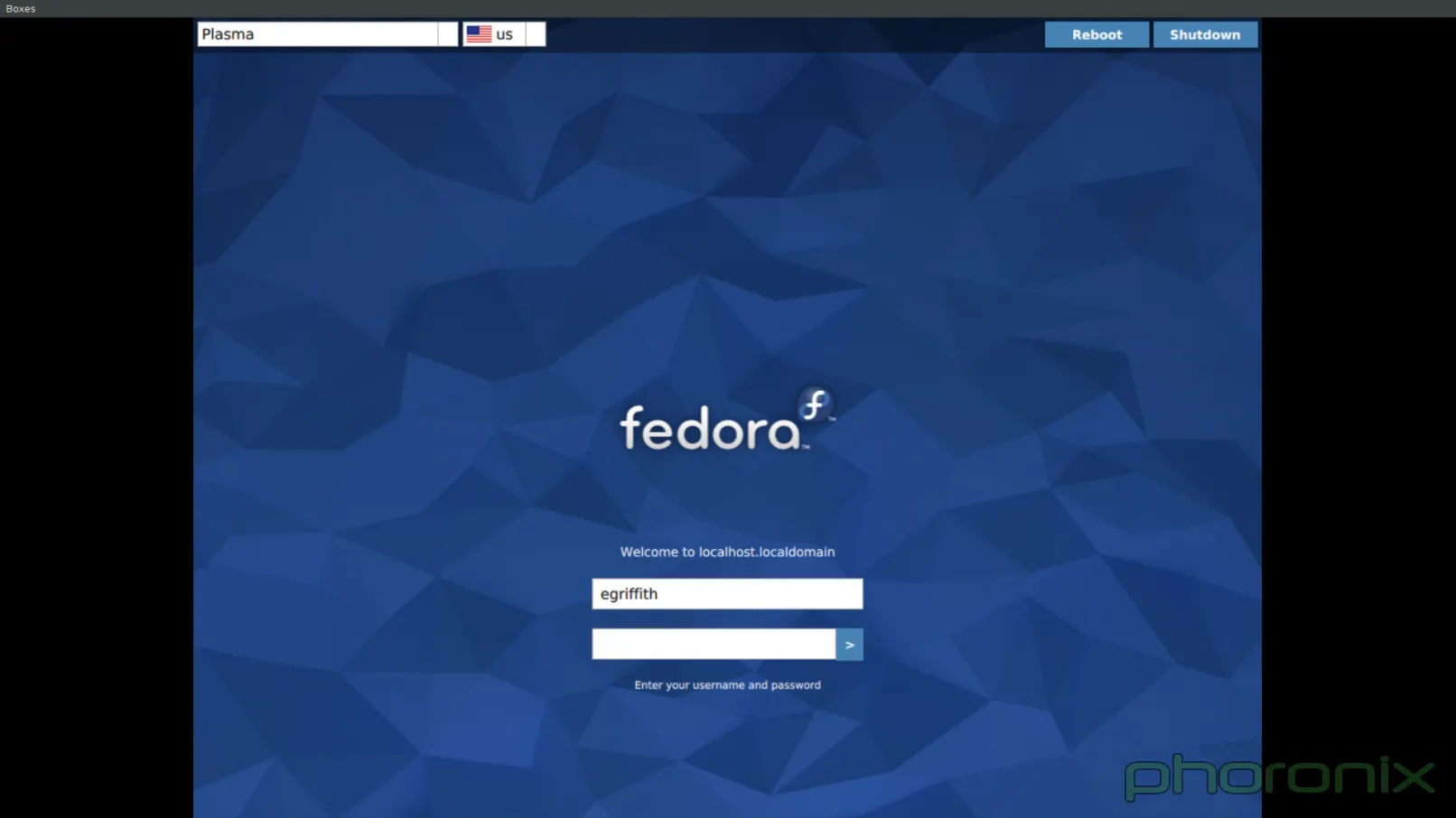

### 登录界面 ###

|

||||

|

||||

|

||||

|

||||

我一般情况下都不会介意发行版带着它们自己的特别主题,因为一般情况下桌面看起来会更好看。可我今天可算是找到了一个例外。

|

||||

|

||||

第一印象很重要,对吧?那么,GDM(LCTT 译注: Gnome Display Manage:Gnome 显示管理器。)绝对干得漂亮。它的登录界面看起来极度简洁,每一部分都应用了一致的设计风格。使用通用图标而不是文本框为它的简洁加了分。

|

||||

|

||||

|

||||

|

||||

这并不是说 Fedora 22 KDE ——现在已经是 SDDM 而不是 KDM 了——的登录界面不好看,但是看起来绝对没有它这样和谐。

|

||||

|

||||

问题到底出来在哪?顶部栏。看看 Gnome 的截图——你选择一个用户,然后用一个很小的齿轮简单地选择想登入哪个会话。设计很简洁,一点都不碍事,实话讲,如果你没注意的话可能完全会看不到它。现在看看那蓝色( LCTT 译注:blue,有忧郁之意,一语双关)的 KDE 截图,顶部栏看起来甚至不像是用同一个工具渲染出来的,它的整个位置的安排好像是某人想着:“哎哟妈呀,我们需要把这个选项扔在哪个地方……”之后决定下来的。

|

||||

|

||||

对于右上角的重启和关机选项也一样。为什么不单单用一个电源按钮,点击后会下拉出一个菜单,里面包括重启,关机,挂起的功能?按钮的颜色跟背景色不同肯定会让它更加突兀和显眼……但我可不觉得这样子有多好。同样,这看起来可真像“苦思”后的决定。

|

||||

|

||||

从实用观点来看,GDM 还要远远实用的多,再看看顶部一栏。时间被列了出来,还有一个音量控制按钮,如果你想保持周围安静,你甚至可以在登录前设置静音,还有一个可用性按钮来实现高对比度、缩放、语音转文字等功能,所有可用的功能通过简单的一个开关按钮就能得到。

|

||||

|

||||

|

||||

|

||||

切换到上游(KDE 自带)的 Breeve 主题……突然间,我抱怨的大部分问题都被解决了。通用图标,所有东西都放在了屏幕中央,但不是那么重要的被放到了一边。因为屏幕顶部和底部都是同样的空白,在中间也就酝酿出了一种美好的和谐。还是有一个文本框来切换会话,但既然电源按钮被做成了通用图标,那么这点还算可以原谅。当前时间以一种漂亮的感觉呈现,旁边还有电量指示器。当然 gnome 还是有一些很好的附加物,例如音量小程序和可用性按钮,但 Breeze 总归要比 Fedora 的 KDE 主题进步。

|

||||

|

||||

到 Windows(Windows 8和10之前)或者 OS X 中去,你会看到类似的东西——非常简洁的,“不碍事”的锁屏与登录界面,它们都没有文本框或者其它分散视觉的小工具。这是一种有效的不分散人注意力的设计。Fedora……默认带有 Breeze 主题。VDG 在 Breeze 主题设计上干得不错。可别糟蹋了它。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.phoronix.com/scan.php?page=article&item=gnome-week-editorial&num=1

|

||||

|

||||

作者:Eric Griffith

|

||||

译者:[XLCYun](https://github.com/XLCYun)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[1]:https://wiki.ubuntu.com/One%20Hundred%20Papercuts

|

||||

[2]:http://www.phoronix.com/scan.php?page=article&item=fedora-22-kde&num=1

|

||||

[3]:https://launchpad.net/hundredpapercuts

|

||||

@ -0,0 +1,32 @@

|

||||

一周 GNOME 之旅:品味它和 KDE 的是是非非(第二节 GNOME桌面)

|

||||

================================================================================

|

||||

|

||||

### 桌面 ###

|

||||

|

||||

|

||||

|

||||

在我这一周的前五天中,我都是直接手动登录进 Gnome 的——没有打开自动登录功能。在第五天的晚上,每一次都要手动登录让我觉得很厌烦,所以我就到用户管理器中打开了自动登录功能。下一次我登录的时候收到了一个提示:“你的密钥链(keychain)未解锁,请输入你的密码解锁”。在这时我才意识到了什么……Gnome 以前一直都在自动解锁我的密钥链(KDE 中叫做我的钱包),每当我通过 GDM 登录时 !当我绕开 GDM 的登录程序时,Gnome 才不得不介入让我手动解锁。

|

||||

|

||||

现在,鄙人的陋见是如果你打开了自动登录功能,那么你的密钥链也应当自动解锁——否则,这功能还有何用?无论如何,你还是需要输入你的密码,况且在 GDM 登录界面你还有机会选择要登录的会话,如果你想换的话。

|

||||

|

||||

但是,这点且不提,也就是在那一刻,我意识到要让这桌面感觉就像它在**和我**一起工作一样是多么简单的一件事。当我通过 SDDM 登录 KDE 时?甚至连启动界面都还没加载完成,就有一个窗口弹出来遮挡了启动动画(因此启动动画也就被破坏了),它提示我解锁我的 KDE 钱包或 GPG 钥匙环。

|

||||

|

||||

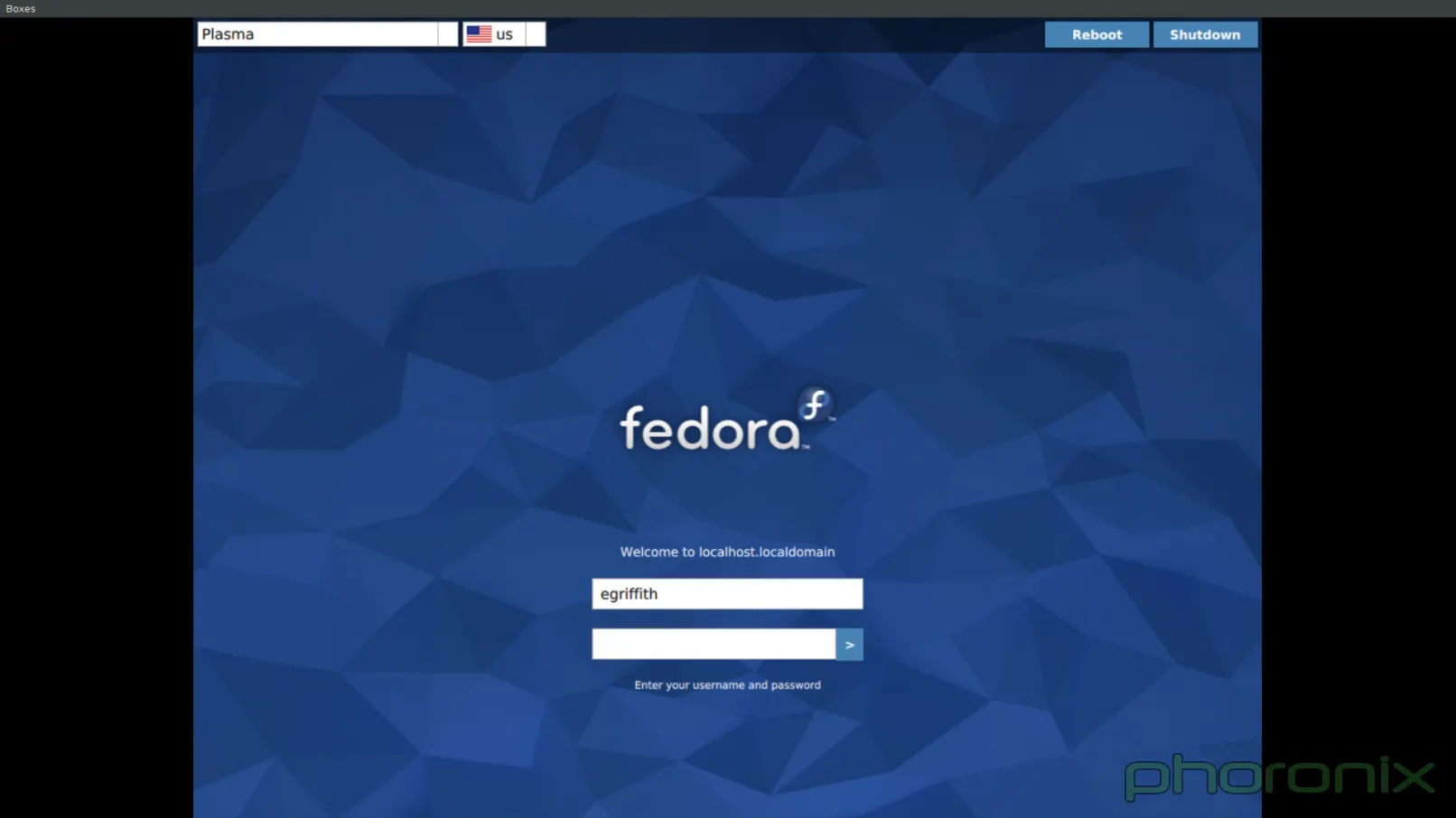

如果当前还没有钱包,你就会收到一个创建钱包的提醒——就不能在创建用户的时候同时为我创建一个吗?接着它又让你在两种加密模式中选择一种,甚至还暗示我们其中一种(Blowfish)是不安全的,既然是为了安全,为什么还要我选择一个不安全的东西?作者声明:如果你安装了真正的 KDE spin 版本而不是仅仅安装了被 KDE 搞过的版本,那么在创建用户时,它就会为你创建一个钱包。但很不幸的是,它不会帮你自动解锁,并且它似乎还使用了更老的 Blowfish 加密模式,而不是更新而且更安全的 GPG 模式。

|

||||

|

||||

|

||||

|

||||

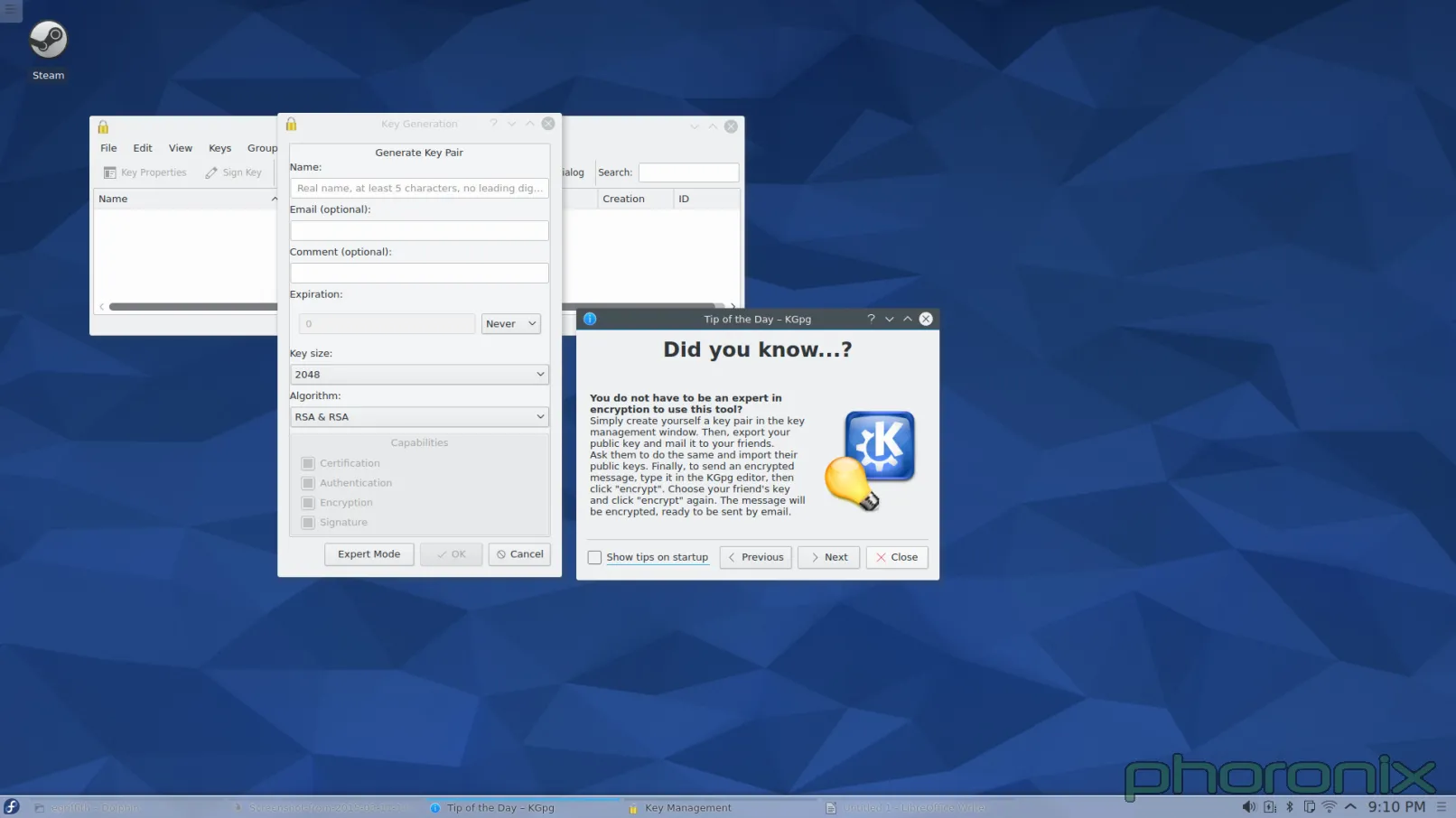

如果你选择了那个安全的加密模式(GPG),那么它会尝试加载 GPG 密钥……我希望你已经创建过一个了,因为如果你没有,那么你可又要被指责一番了。怎么样才能创建一个?额……它不帮你创建一个……也不告诉你怎么创建……假如你真的搞明白了你应该使用 KGpg 来创建一个密钥,接着在你就会遇到一层层的菜单和一个个的提示,而这些菜单和提示只能让新手感到困惑。为什么你要问我 GPG 的二进制文件在哪?天知道在哪!如果不止一个,你就不能为我选择一个最新的吗?如果只有一个,我再问一次,为什么你还要问我?

|

||||

|

||||

为什么你要问我要使用多大的密钥大小和加密算法?你既然默认选择了 2048 和 RSA/RSA,为什么不直接使用?如果你想让这些选项能够被修改,那就把它们扔在下面的“Expert mode(专家模式)” 按钮里去。这里不仅仅是说让配置可被用户修改的问题,而是说根本不需要默认把多余的东西扔在了用户面前。这种问题将会成为这篇文章剩下的主要内容之一……KDE 需要更理智的默认配置。配置是好的,我很喜欢在使用 KDE 时的配置,但它还需要知道什么时候应该,什么时候不应该去提示用户。而且它还需要知道“嗯,它是可配置的”不能做为默认配置做得不好的借口。用户最先接触到的就是默认配置,不好的默认配置注定要失去用户。

|

||||

|

||||

让我们抛开密钥链的问题,因为我想我已经表达出了我的想法。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.phoronix.com/scan.php?page=article&item=gnome-week-editorial&num=2

|

||||

|

||||

作者:Eric Griffith

|

||||

译者:[XLCYun](https://github.com/XLCYun)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

@ -0,0 +1,66 @@

|

||||

一周 GNOME 之旅:品味它和 KDE 的是是非非(第三节 GNOME应用)

|

||||

================================================================================

|

||||

|

||||

### 应用 ###

|

||||

|

||||

|

||||

|

||||

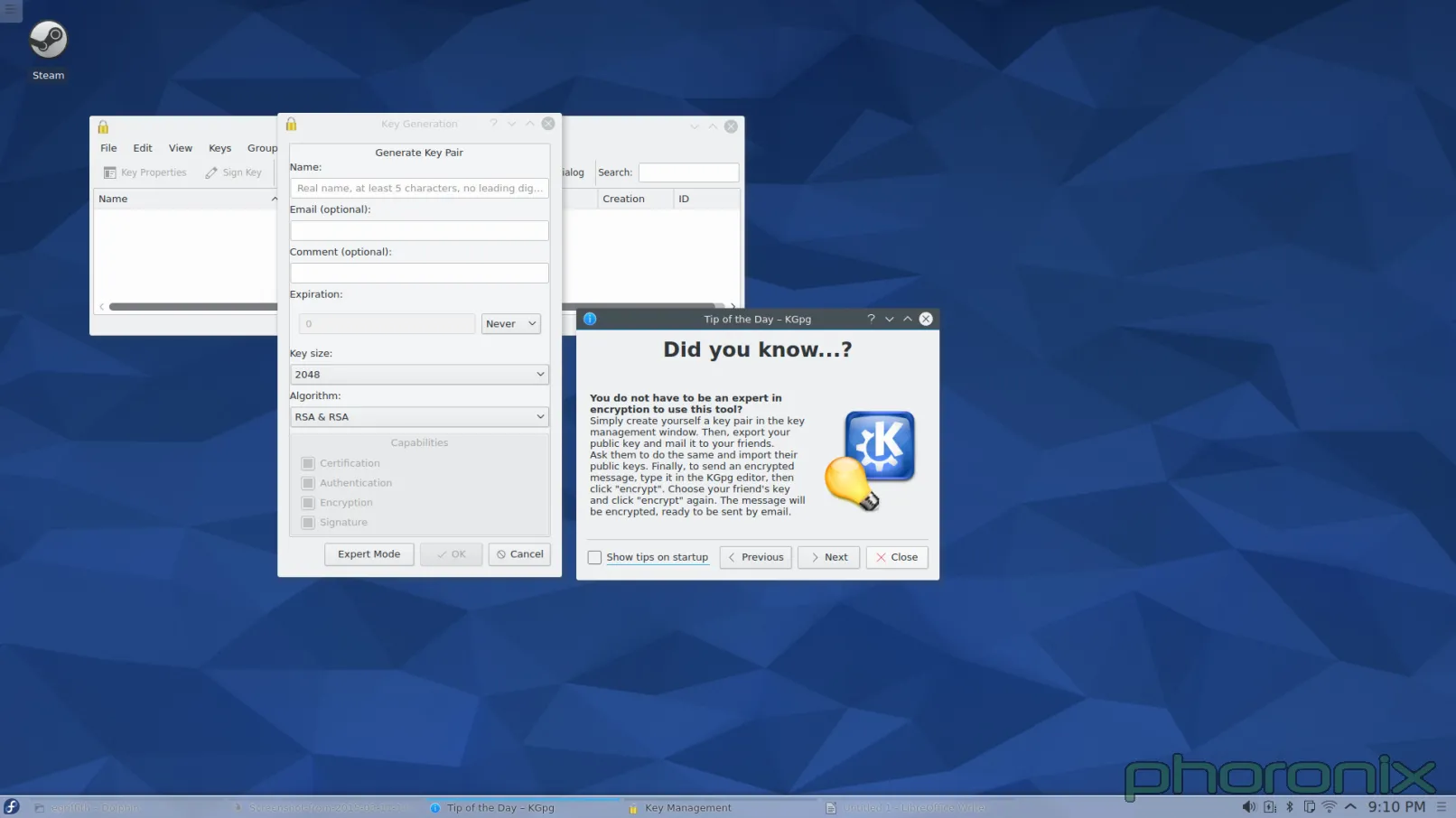

这是一个基本扯平的方面。每一个桌面环境都有一些非常好的应用,也有一些不怎么样的。再次强调,Gnome 把那些 KDE 完全错失的小细节给做对了。我不是想说 KDE 中有哪些应用不好。他们都能工作,但仅此而已。也就是说:它们合格了,但确实还没有达到甚至接近100分。

|

||||

|

||||

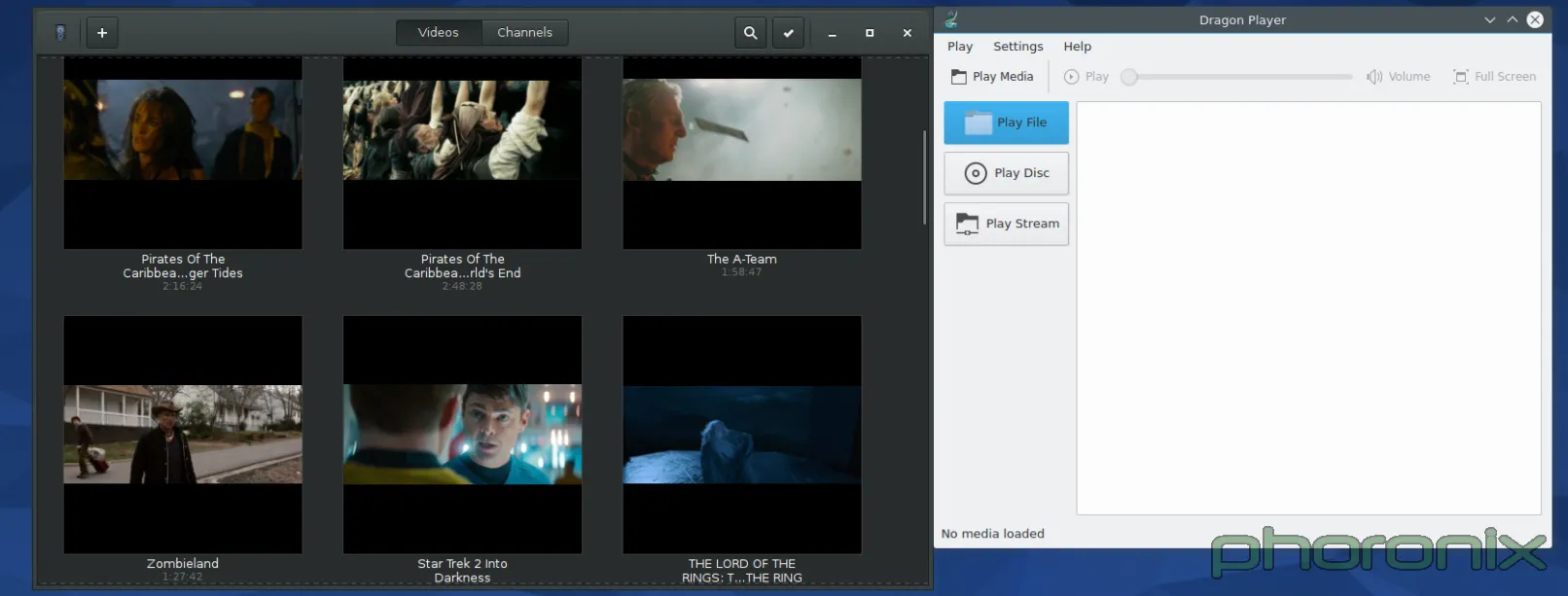

Gnome 在左,KDE 在右。Dragon 播放器运行得很好,清晰的标出了播放文件、URL或和光盘的按钮,正如你在 Gnome Videos 中能做到的一样……但是在便利的文件名和用户的友好度方面,Gnome 多走了一小步。它默认显示了在你的电脑上检测到的所有影像文件,不需要你做任何事情。KDE 有 [Baloo][](正如之前的 [Nepomuk][2],LCTT 译注:这是 KDE 中一种文件索引服务框架)为什么不使用它们?它们能列出可读取的影像文件……但却没被使用。

|

||||

|

||||

下一步……音乐播放器

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

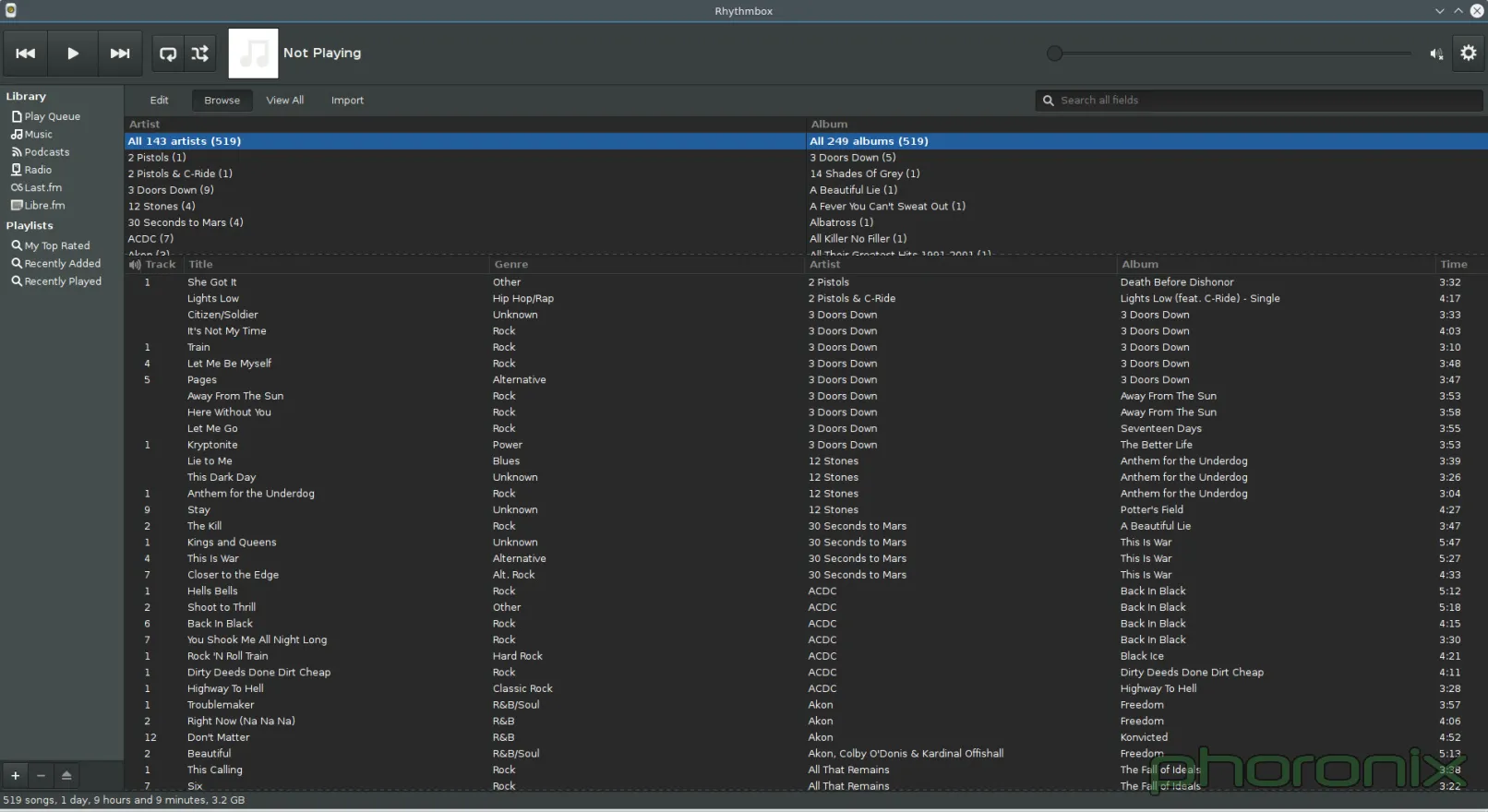

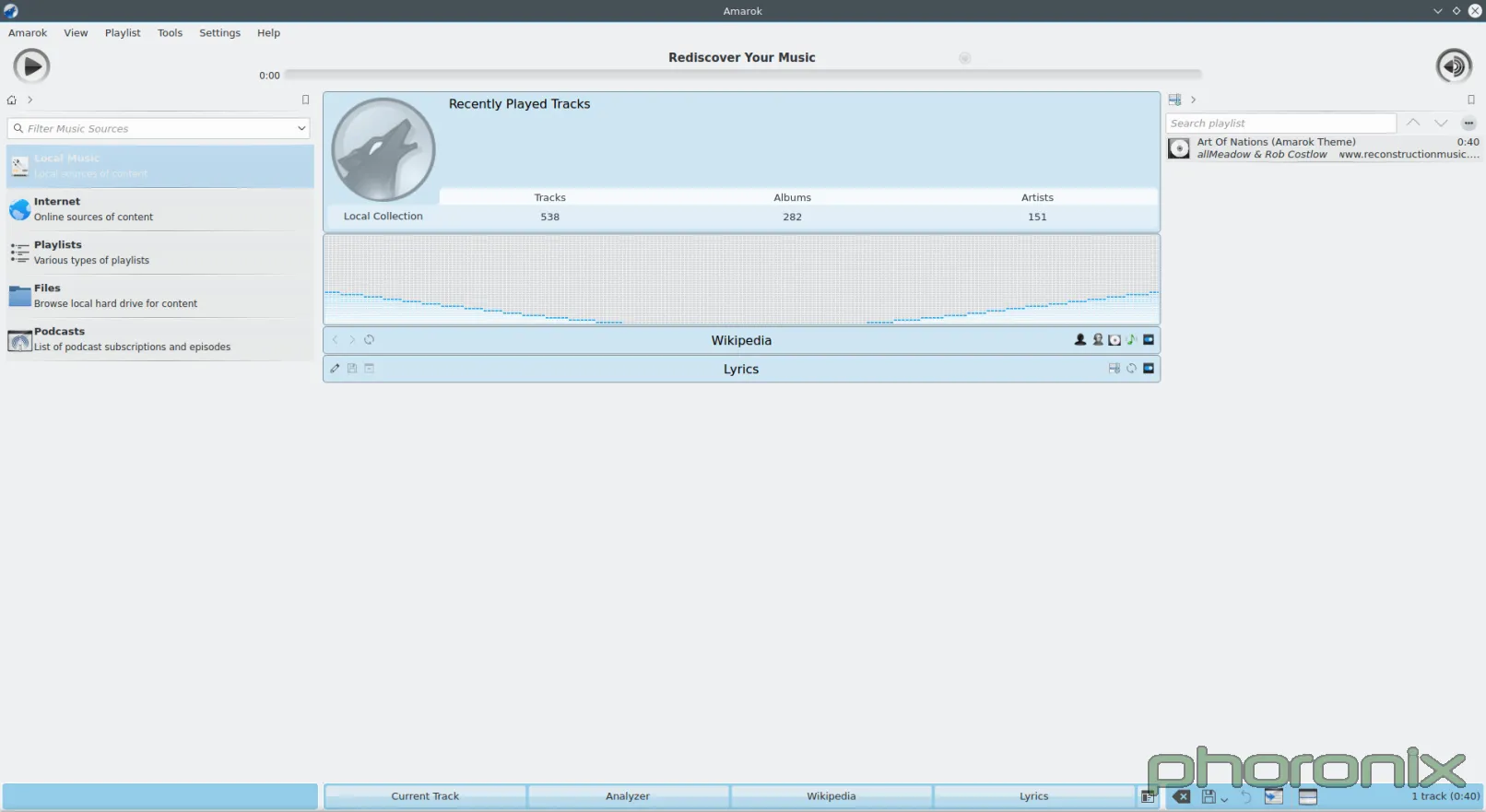

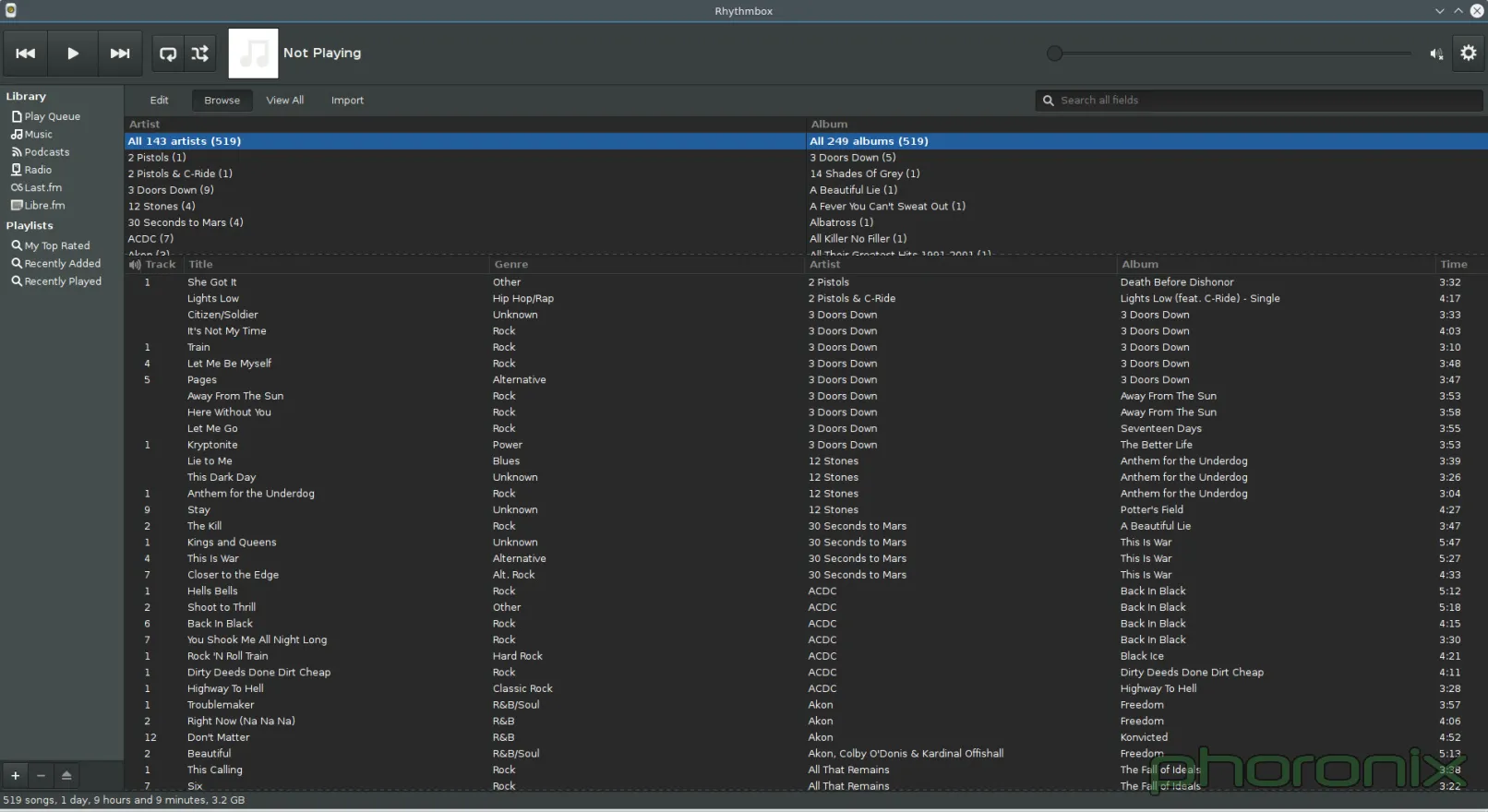

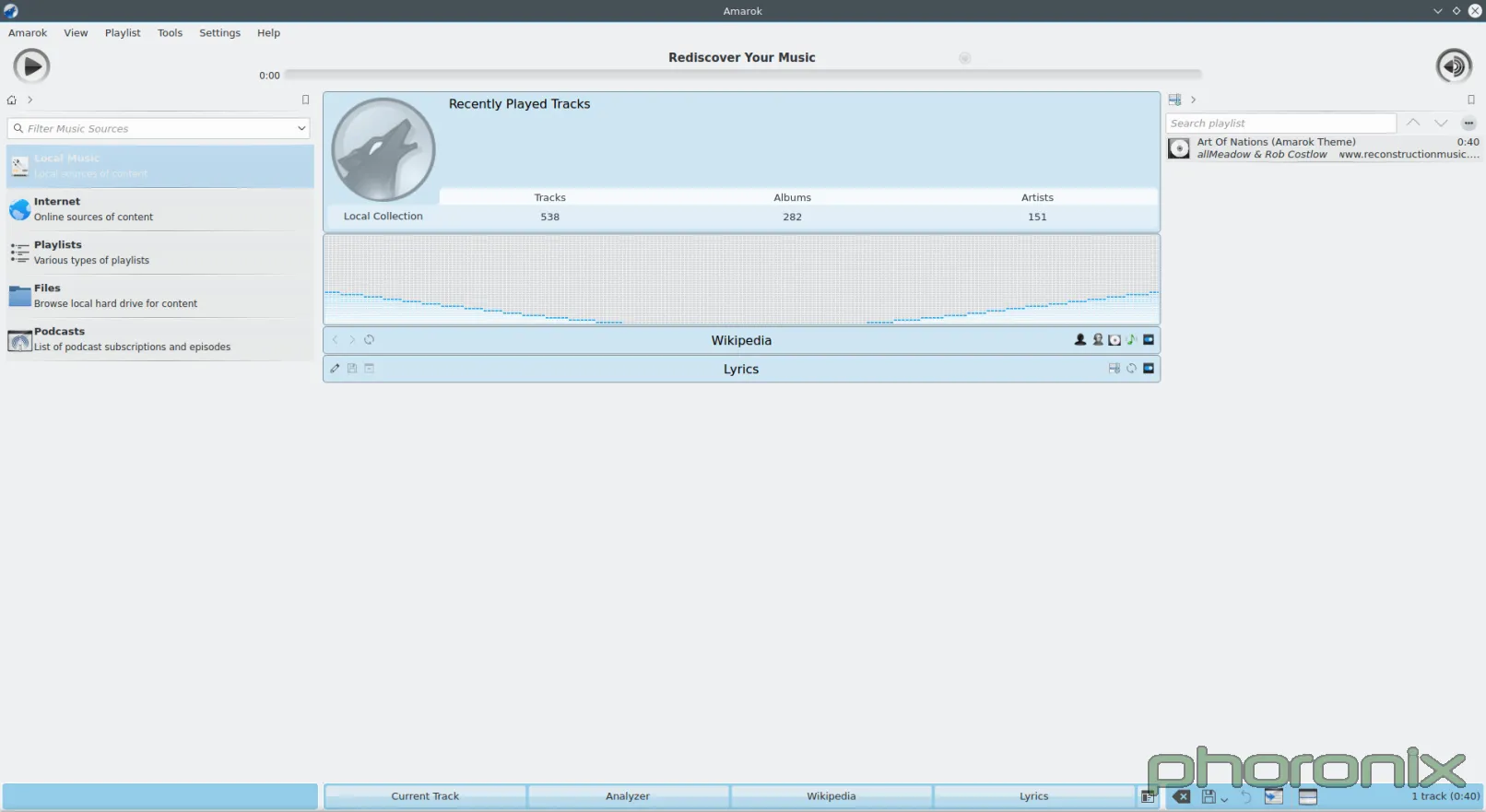

这两个应用,左边的是 Rhythmbox ,右边的是 Amarok,都是打开后没有做任何修改直接截屏的。看到差别了吗?Rhythmbox 看起来像个音乐播放器,直接了当,排序文件的方法也很清晰,它知道它应该是什么样的,它的工作是什么:就是播放音乐。

|

||||

|

||||

Amarok 感觉就像是某个人为了展示而把所有的扩展和选项都尽可能地塞进一个应用程序中去,而做出来的一个技术演示产品(tech demos),或者一个库演示产品(library demos)——而这些是不应该做为产品装进去的,它只应该展示其中一点东西。而 Amarok 给人的感觉却是这样的:好像是某个人想把每一个感觉可能很酷的东西都塞进一个媒体播放器里,甚至都不停下来想“我想写啥来着?一个播放音乐的应用?”

|

||||

|

||||

看看默认布局就行了。前面和中心都呈现了什么?一个可视化工具和集成了维基百科——占了整个页面最大和最显眼的区域。第二大的呢?播放列表。第三大,同时也是最小的呢?真正的音乐列表。这种默认设置对于一个核心应用来说,怎么可能称得上理智?

|

||||

|

||||

软件管理器!它在最近几年当中有很大的进步,而且接下来的几个月中,很可能只能看到它更大的进步。不幸的是,这是另一个 KDE 做得差一点点就能……但还是在终点线前以脸戗地了。

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

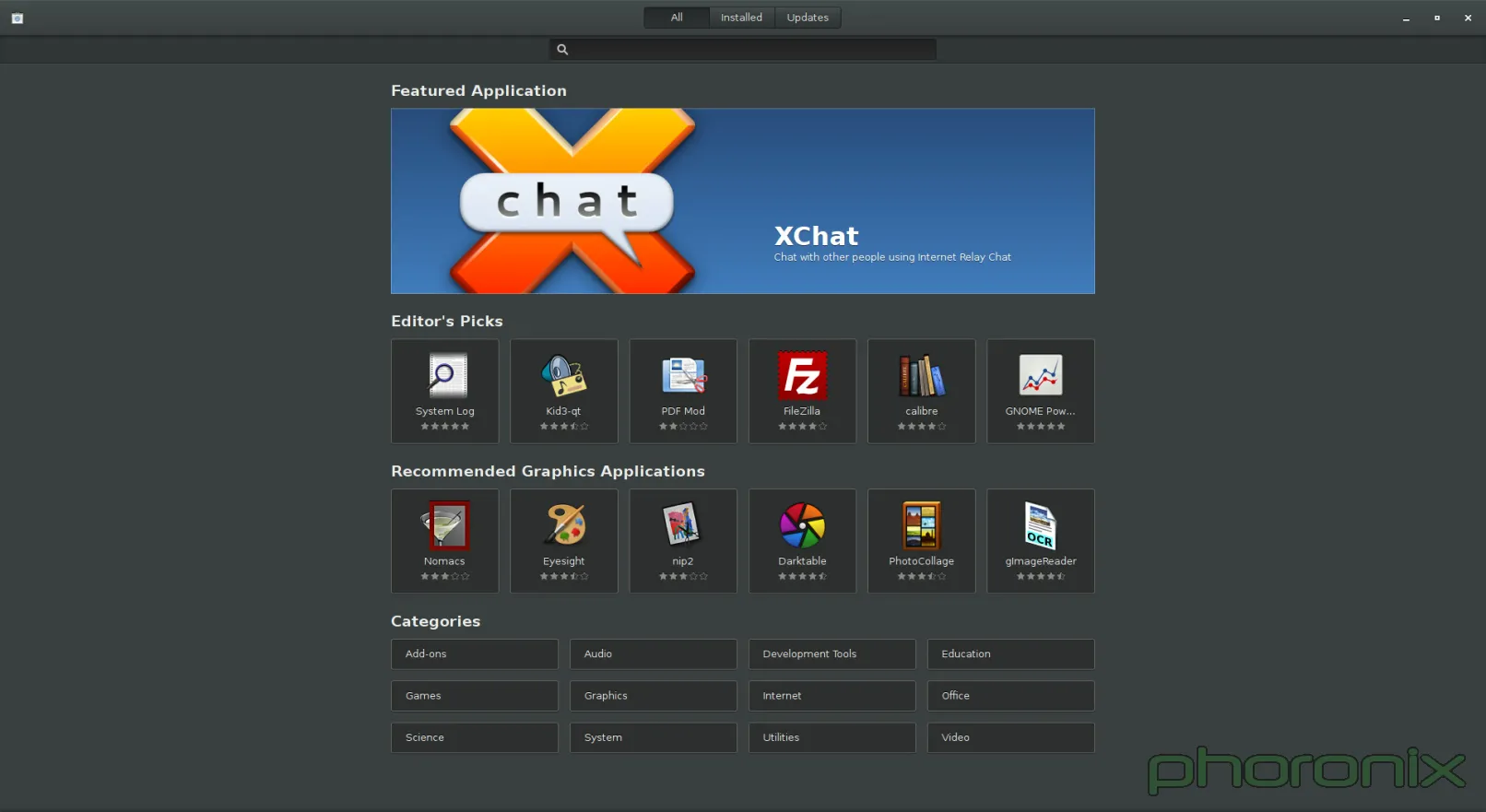

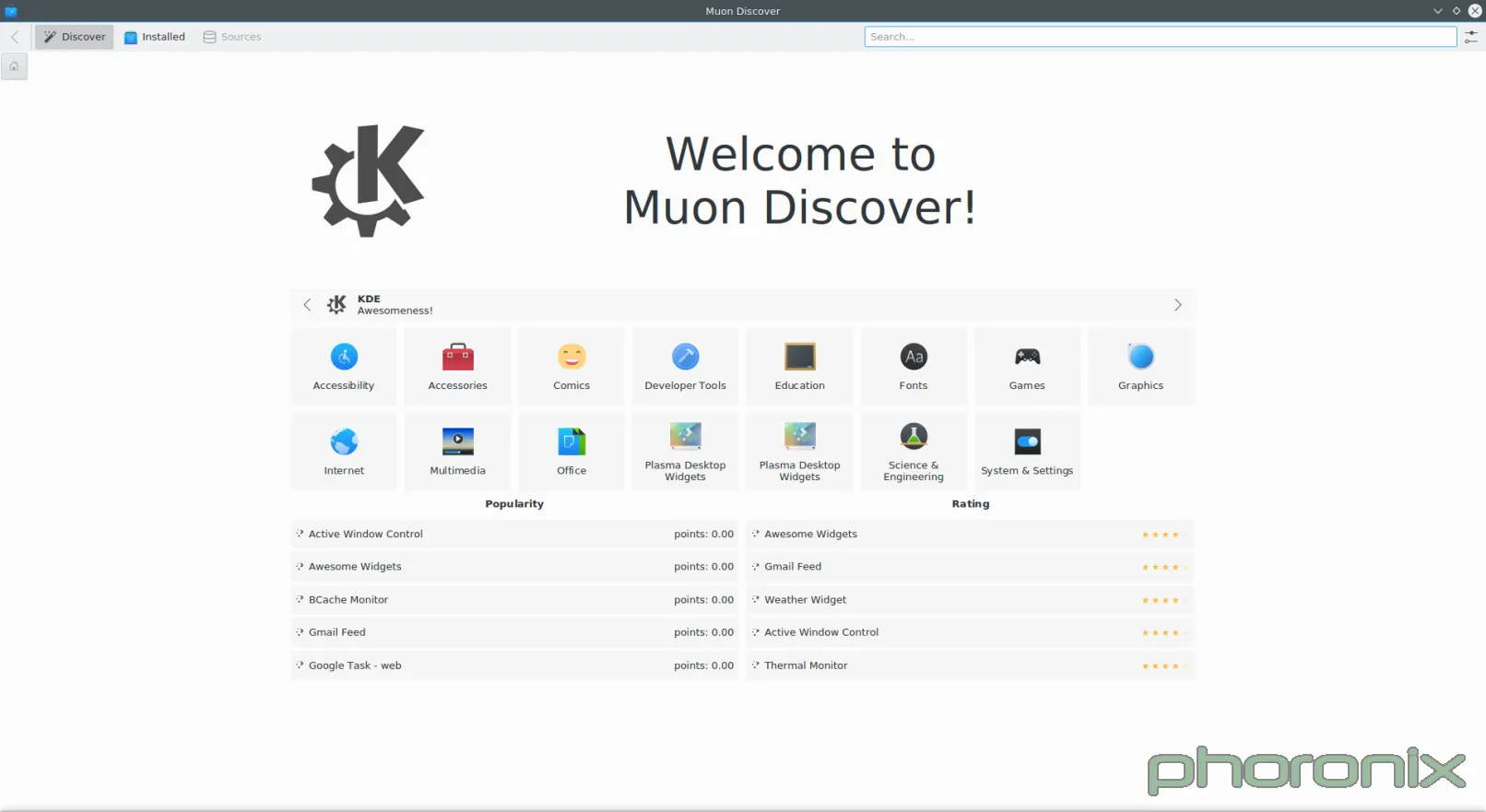

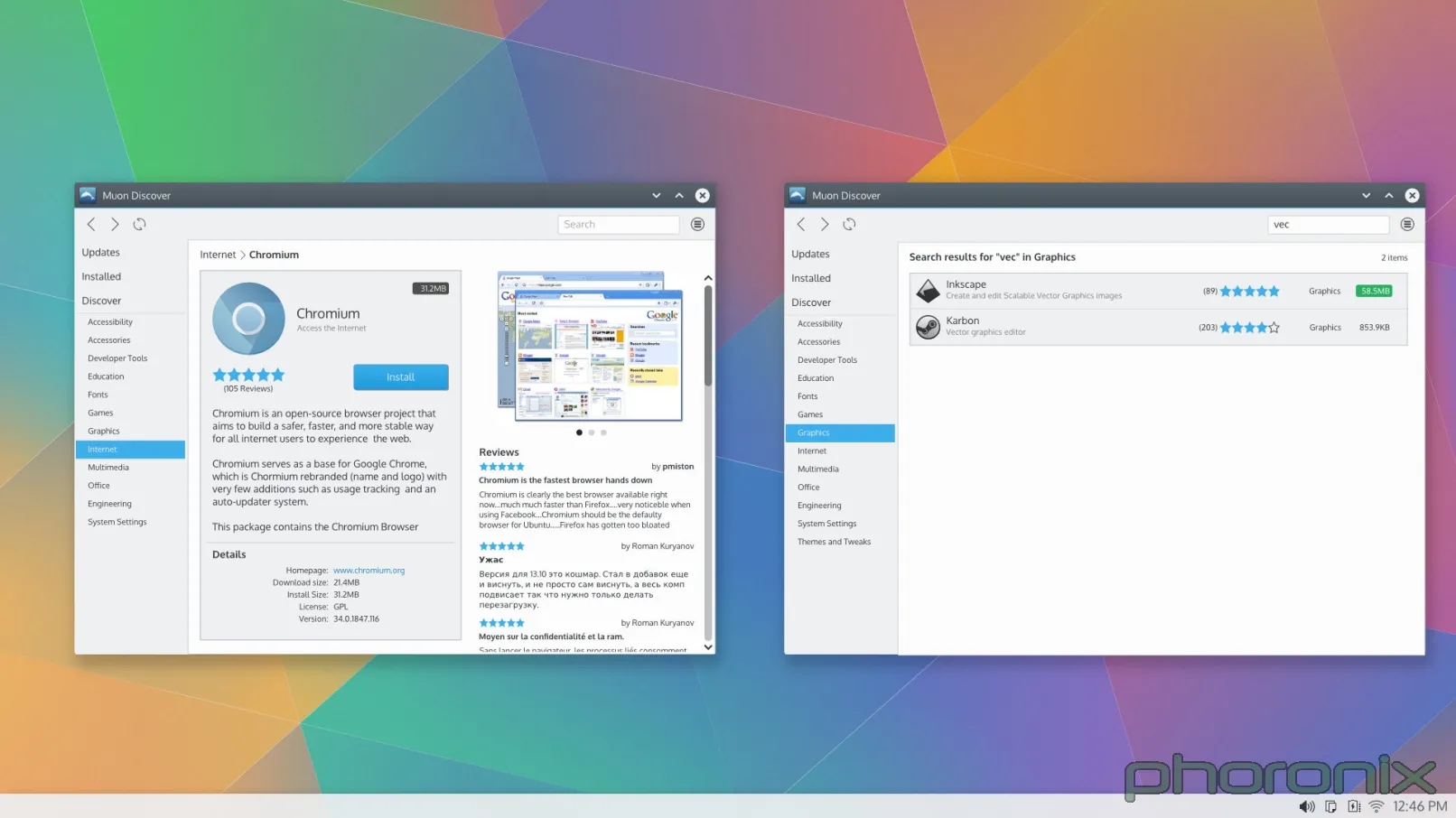

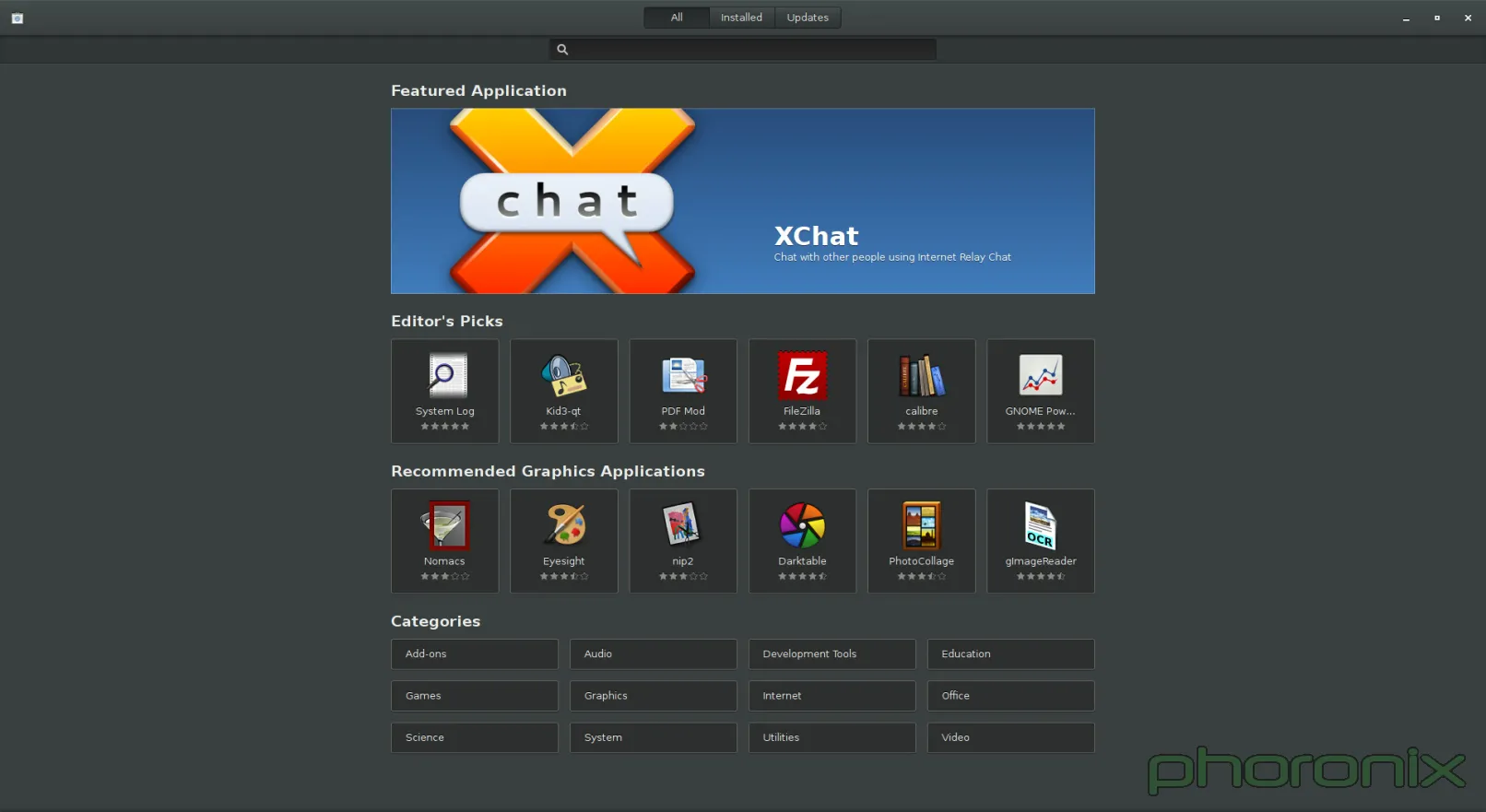

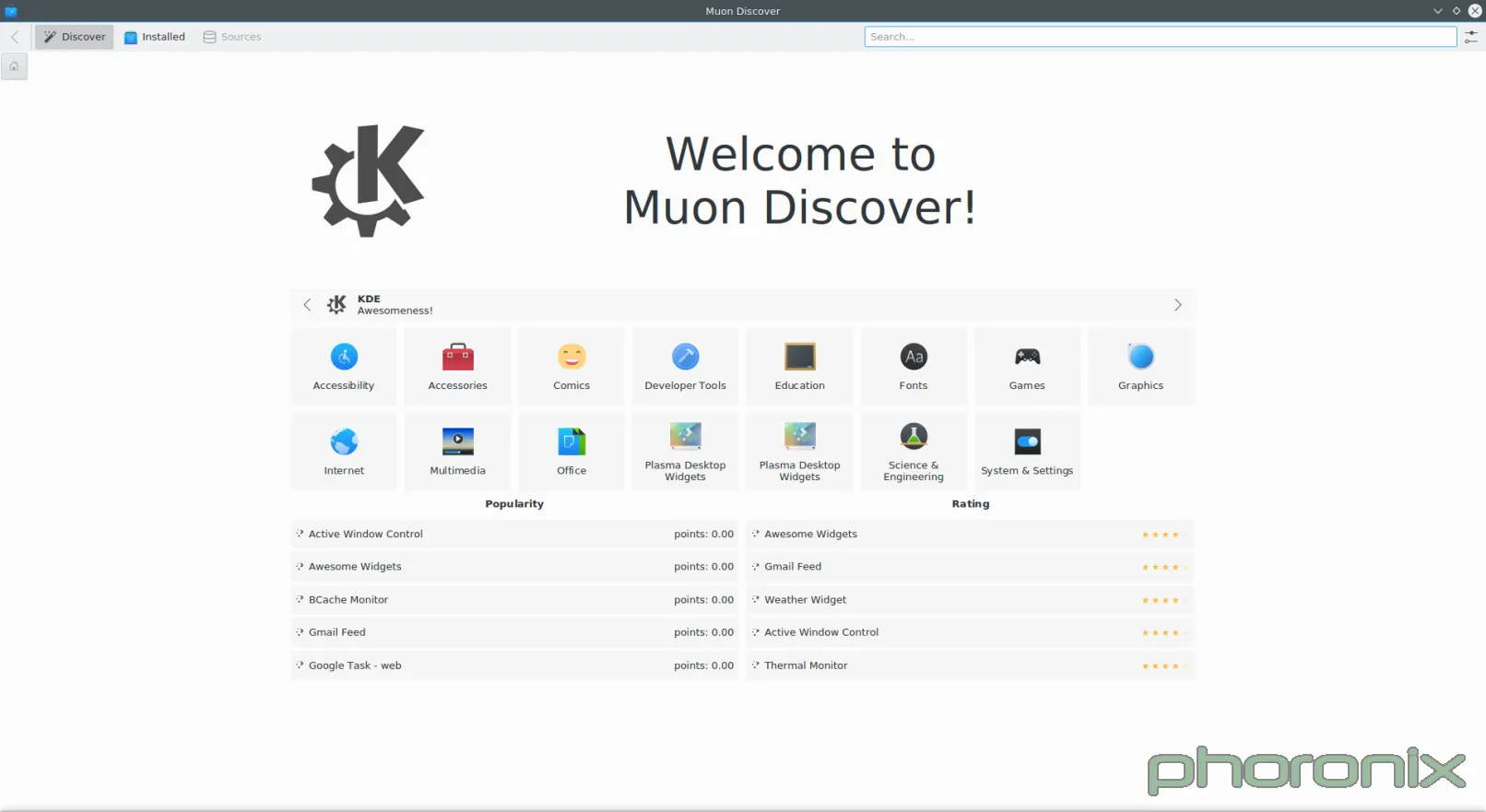

Gnome 软件中心可能是我的新的最爱的软件中心,先放下牢骚等下再发。Muon, 我想爱上你,真的。但你就是个设计上的梦魇。当 VDG 给你画设计草稿时(草图如下),你看起来真漂亮。白色空间用得很好,设计简洁,类别列表也很好,你的整个“不要分开做成两个应用程序”的设计都很不错。

|

||||

|

||||

|

||||

|

||||

接着就有人为你写代码,实现真正的UI,但是,我猜这些家伙当时一定是喝醉了。

|

||||

|

||||

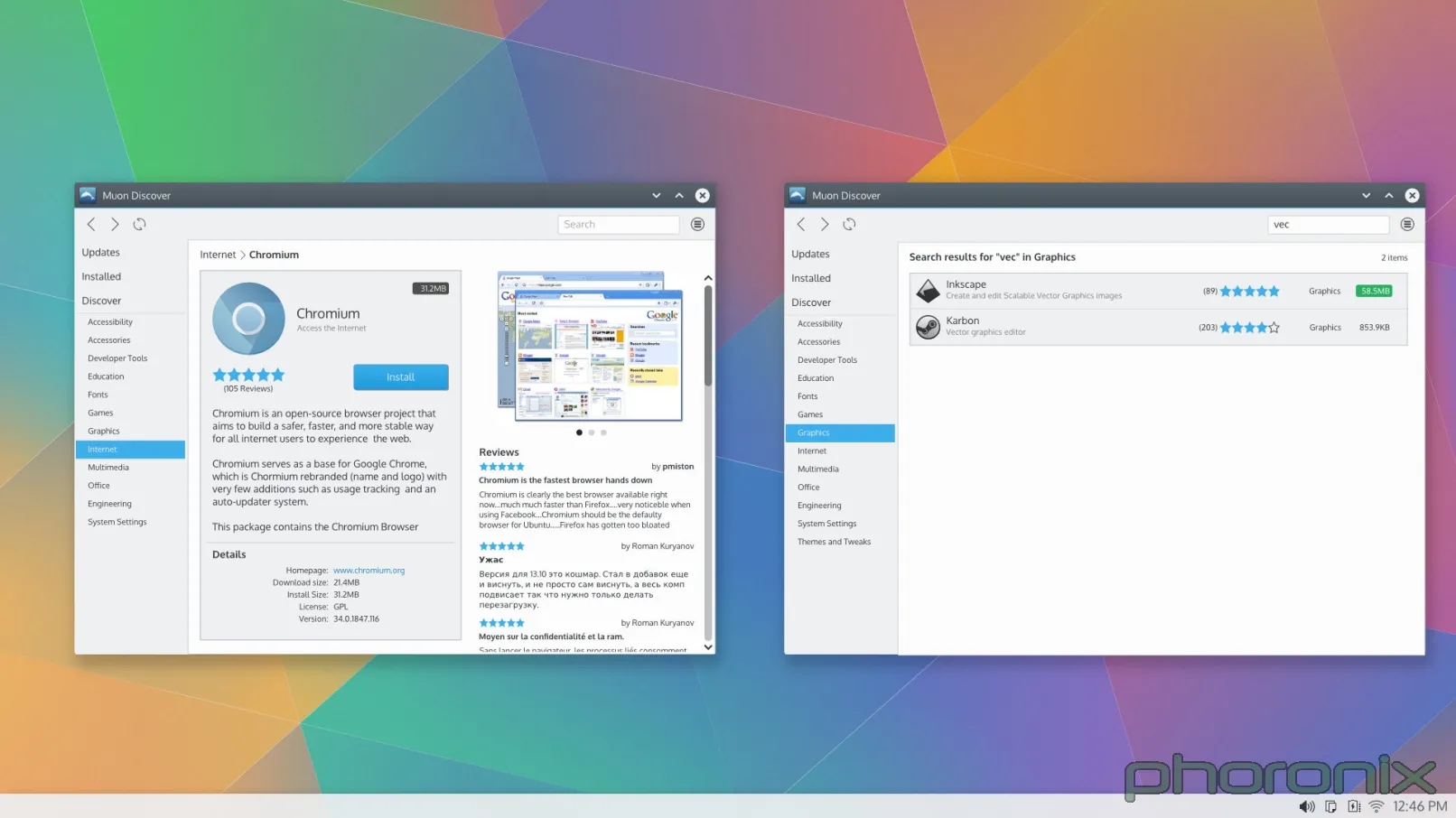

我们来看看 Gnome 软件中心。正中间是什么?软件,软件截图和软件描述等等。Muon 的正中心是什么?白白浪费的大块白色空间。Gnome 软件中心还有一个贴心便利特点,那就是放了一个“运行”的按钮在那儿,以防你已经安装了这个软件。便利性和易用性很重要啊,大哥。说实话,仅仅让 Muon 把东西都居中对齐了可能看起来的效果都要好得多。

|

||||

|

||||

Gnome 软件中心沿着顶部的东西是什么,像个标签列表?所有软件,已安装软件,软件升级。语言简洁,直接,直指要点。Muon,好吧,我们有个“发现”,这个语言表达上还算差强人意,然后我们又有一个“已安装软件”,然后,就没有然后了。软件升级哪去了?

|

||||

|

||||

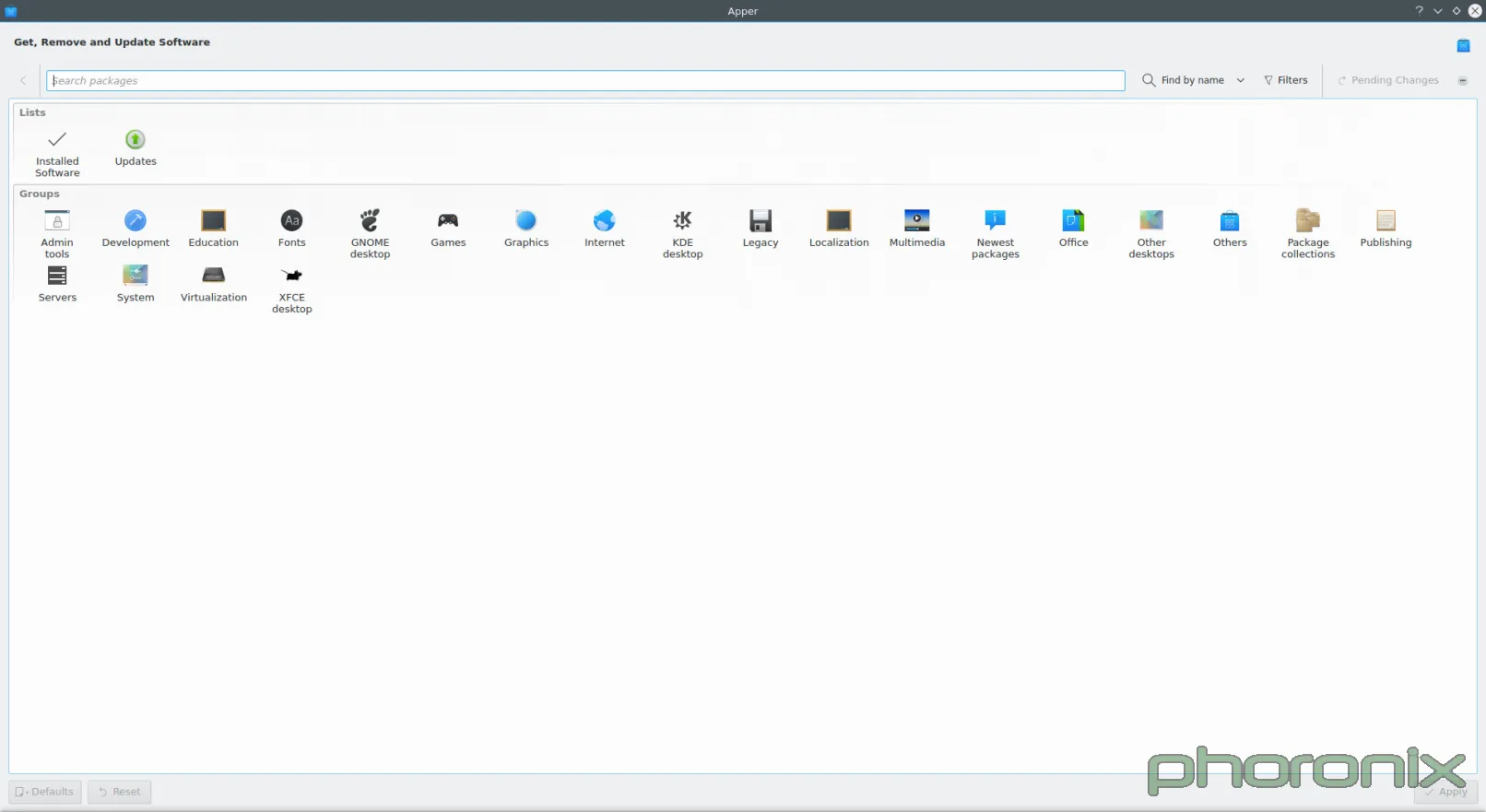

好吧……开发者决定把升级独立分开成一个应用程序,这样你就得打开两个应用程序才能管理你的软件——一个用来安装,一个用来升级——自从有了新立得图形软件包管理器以来,首次有这种破天荒的设计,与任何已存的软件中心的设计范例相违背。

|

||||

|

||||

我不想贴上截图给你们看,因为我不想等下还得清理我的电脑,如果你进入 Muon 安装了什么,那么它就会在屏幕下方根据安装的应用名创建一个标签,所以如果你一次性安装很多软件的话,那么下面的标签数量就会慢慢的增长,然后你就不得不手动检查清除它们,因为如果你不这样做,当标签增长到超过屏幕显示时,你就不得不一个个找过去来才能找到最近正在安装的软件。想想:在火狐浏览器中打开50个标签是什么感受。太烦人,太不方便!

|

||||

|

||||

我说过我会给 Gnome 一点打击,我是认真的。Muon 有一点做得比 Gnome 软件中心做得好。在 Muon 的设置栏下面有个“显示技术包”,即:编辑器,软件库,非图形应用程序,无 AppData 的应用等等(LCTT 译注:AppData 是软件包中的一个特殊文件,用于专门存储软件的信息)。Gnome 则没有。如果你想安装其中任何一项你必须跑到终端操作。我想这是他们做得不对的一点。我完全理解他们推行 AppData 的心情,但我想他们太急了(LCTT 译注:推行所有软件包带有 AppData 是 Gnome 软件中心的目标之一)。我是在想安装 PowerTop,而 Gnome 不显示这个软件时我才发现这点的——因为它没有 AppData,也没有“显示技术包”设置。

|

||||

|

||||

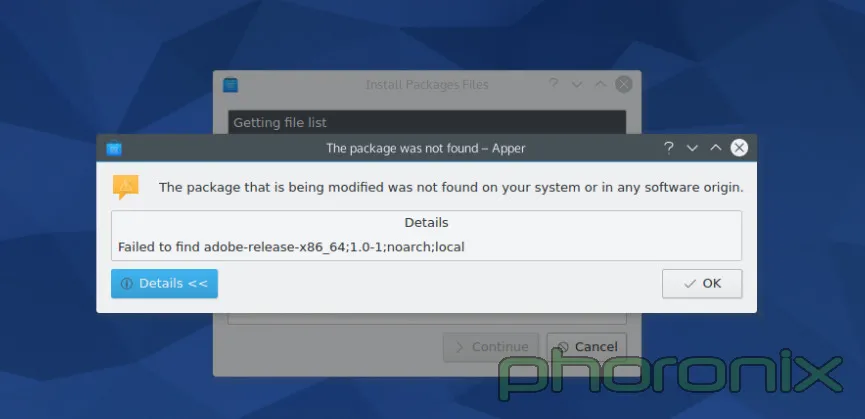

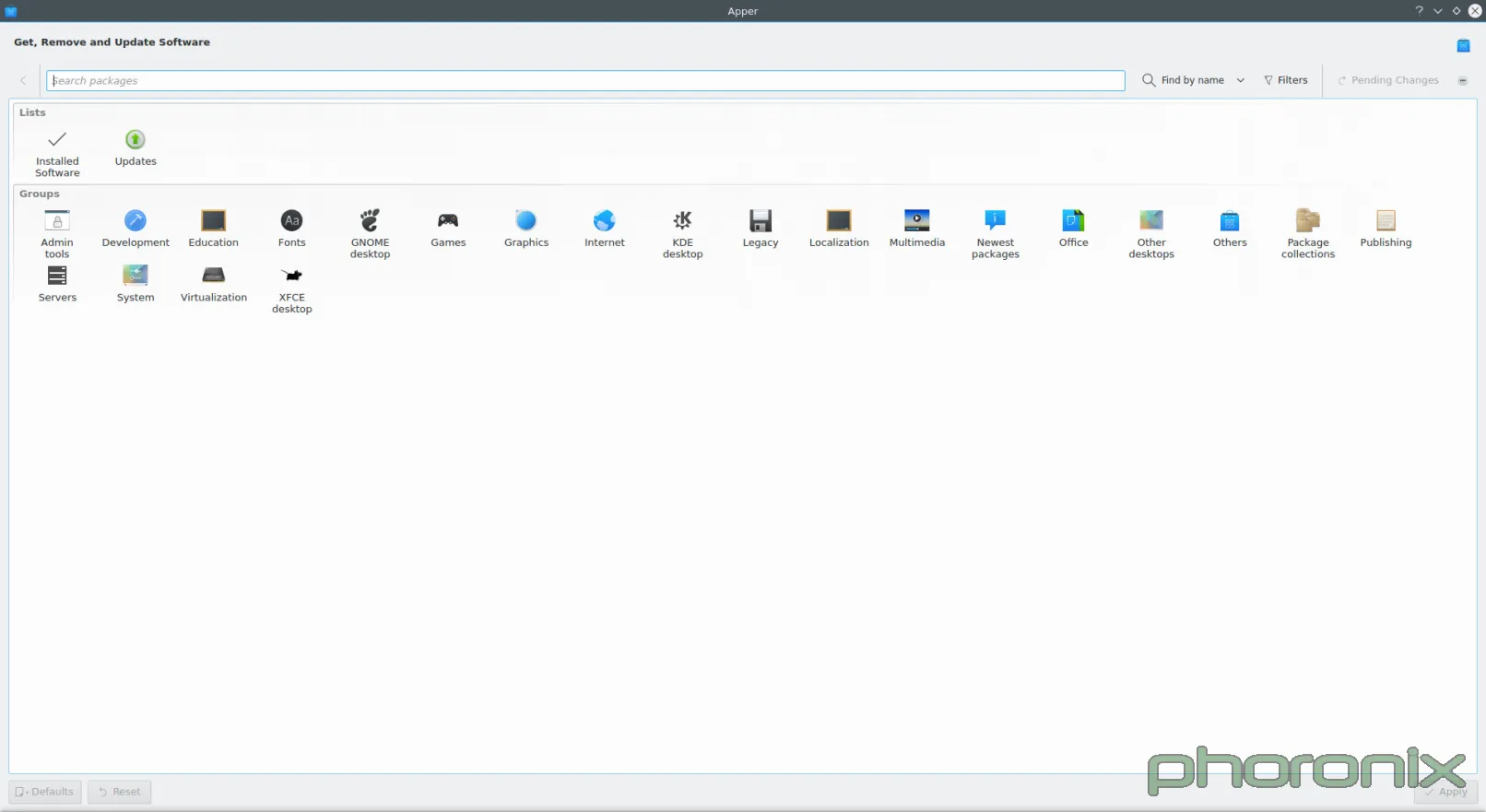

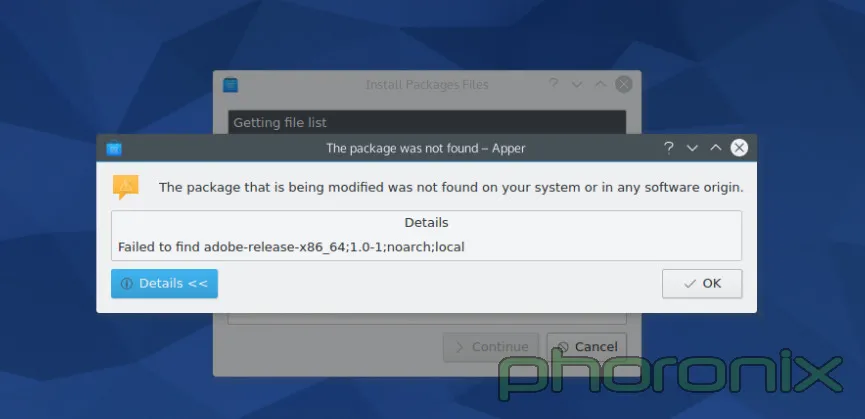

更不幸的事实是,如果你在 KDE 下你不能说“用 [Apper][3] 就行了”,因为……

|

||||

|

||||

|

||||

|

||||

Apper 对安装本地软件包的支持大约在 Fedora 19 时就中止了,几乎两年了。我喜欢关注细节与质量。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.phoronix.com/scan.php?page=article&item=gnome-week-editorial&num=3

|

||||

|

||||

作者:Eric Griffith

|

||||

译者:[XLCYun](https://github.com/XLCYun)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[1]:https://community.kde.org/Baloo

|

||||

[2]:http://www.ikde.org/tech/kde-tech-nepomuk/

|

||||

[3]:https://en.wikipedia.org/wiki/Apper

|

||||

@ -0,0 +1,52 @@

|

||||

一周 GNOME 之旅:品味它和 KDE 的是是非非(第四节 GNOME设置)

|

||||

================================================================================

|

||||

|

||||

### 设置 ###

|

||||

|

||||

在这我要挑一挑几个特定 KDE 控制模块的毛病,大部分原因是因为相比它们的对手GNOME来说,糟糕得太可笑,实话说,真是悲哀。

|

||||

|

||||

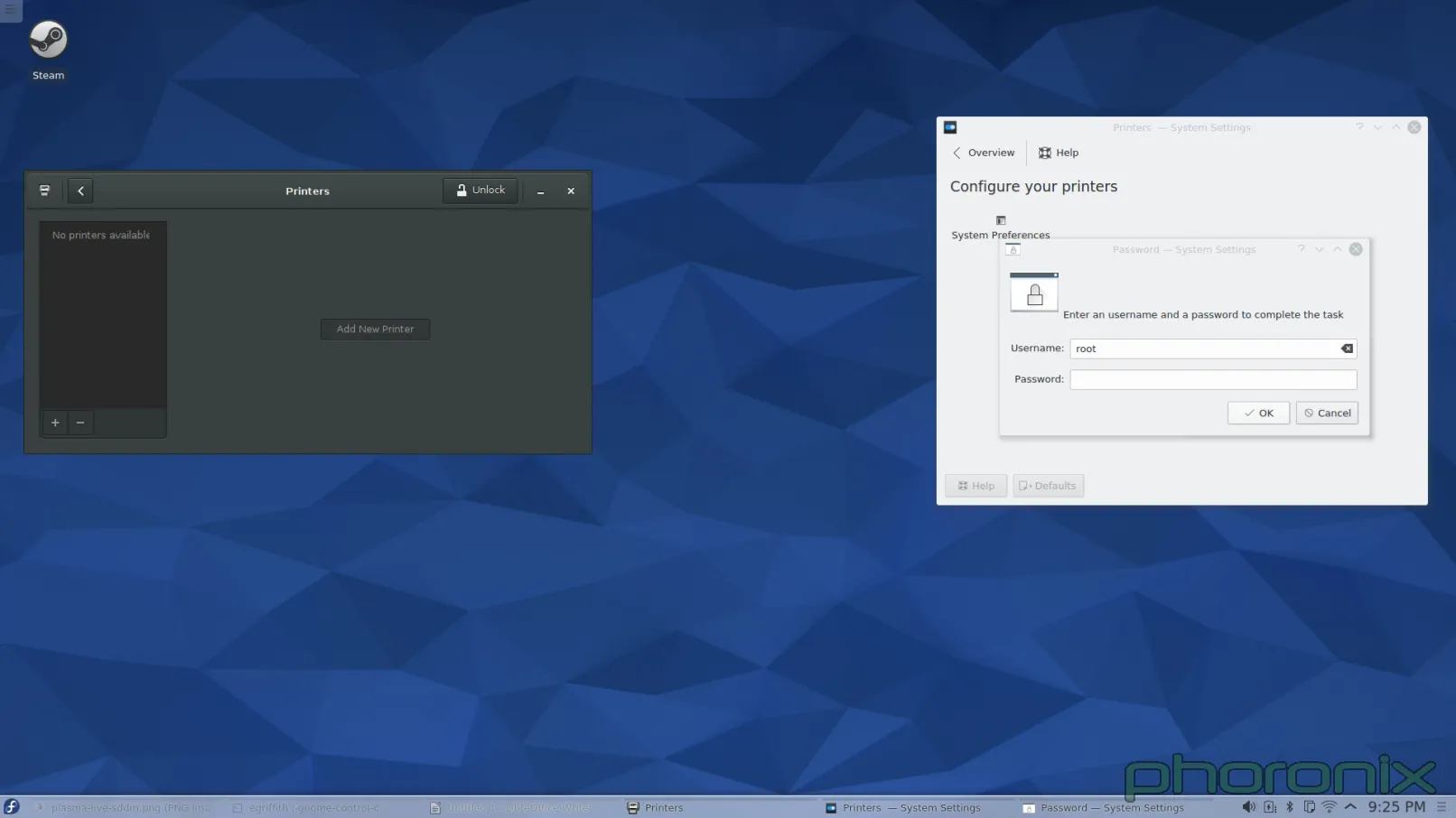

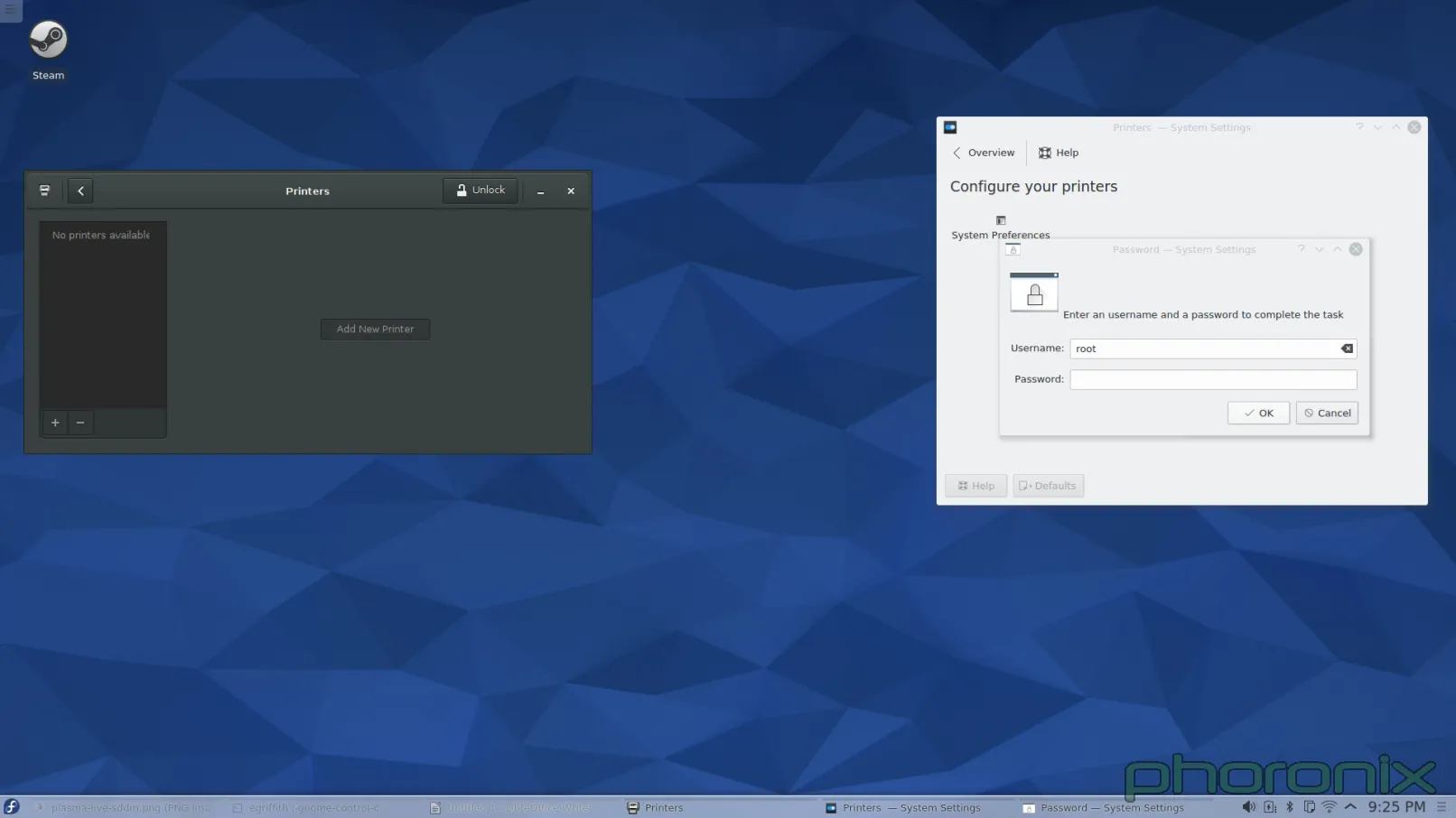

第一个接招的?打印机。

|

||||

|

||||

|

||||

|

||||

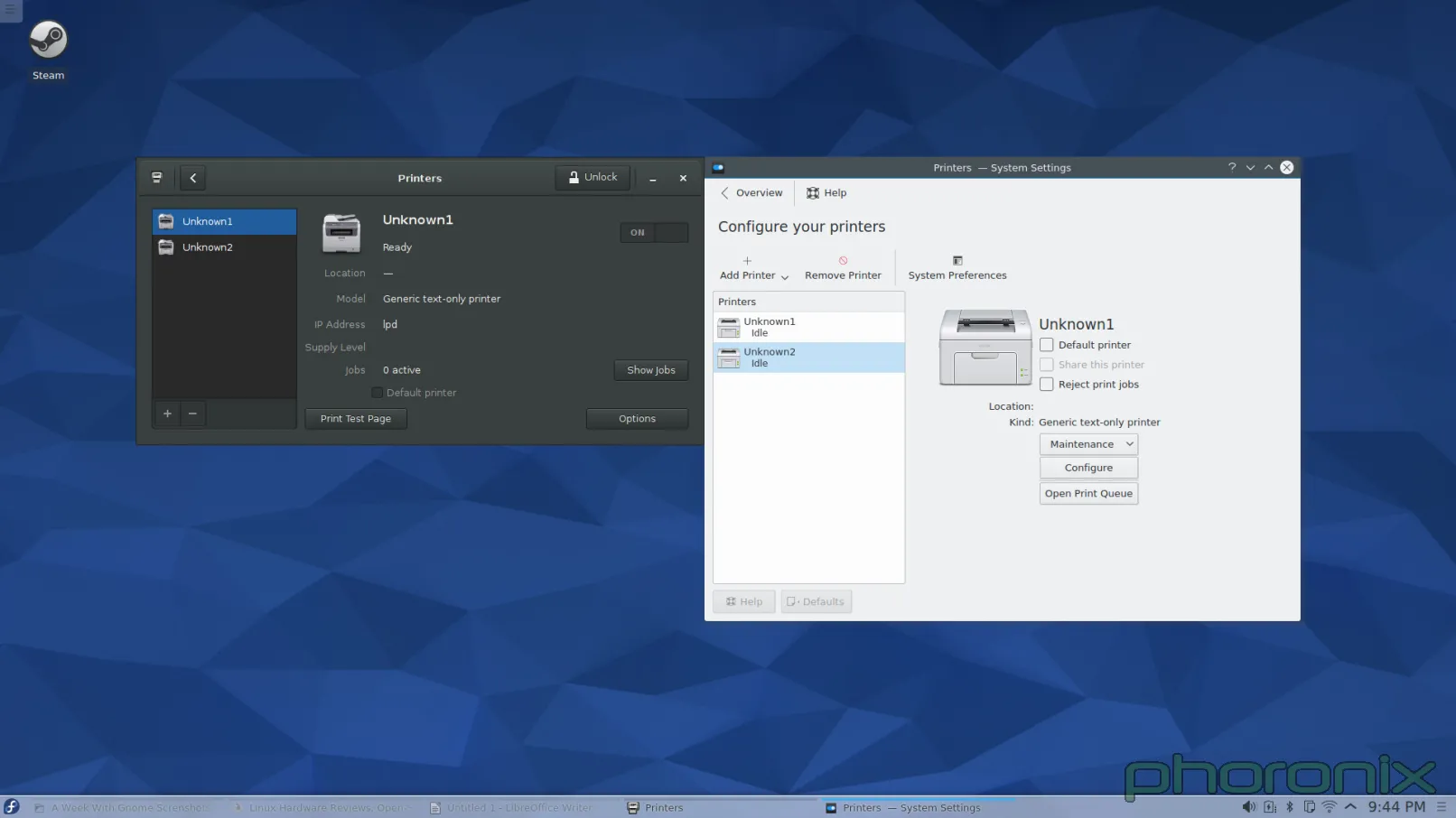

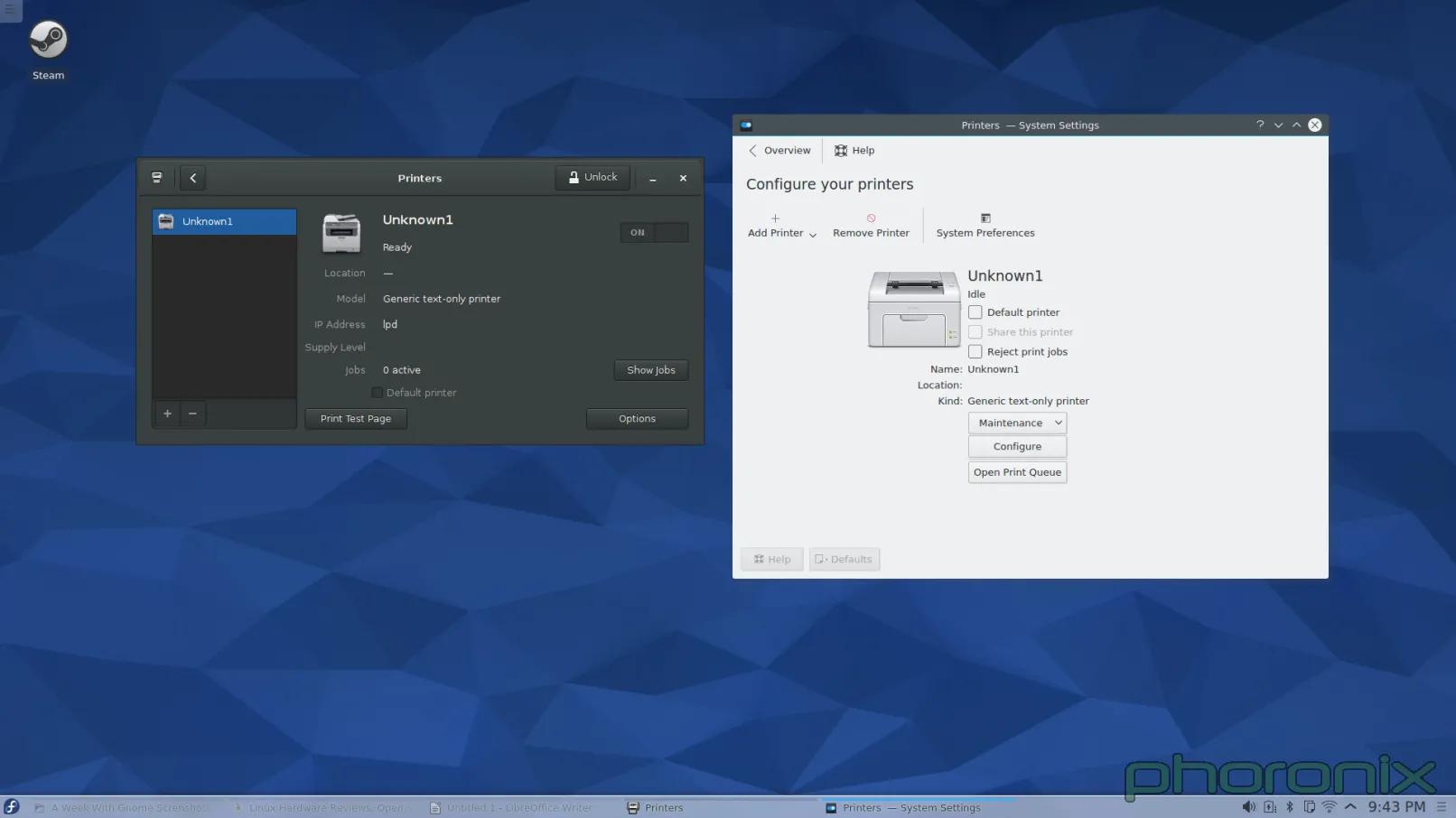

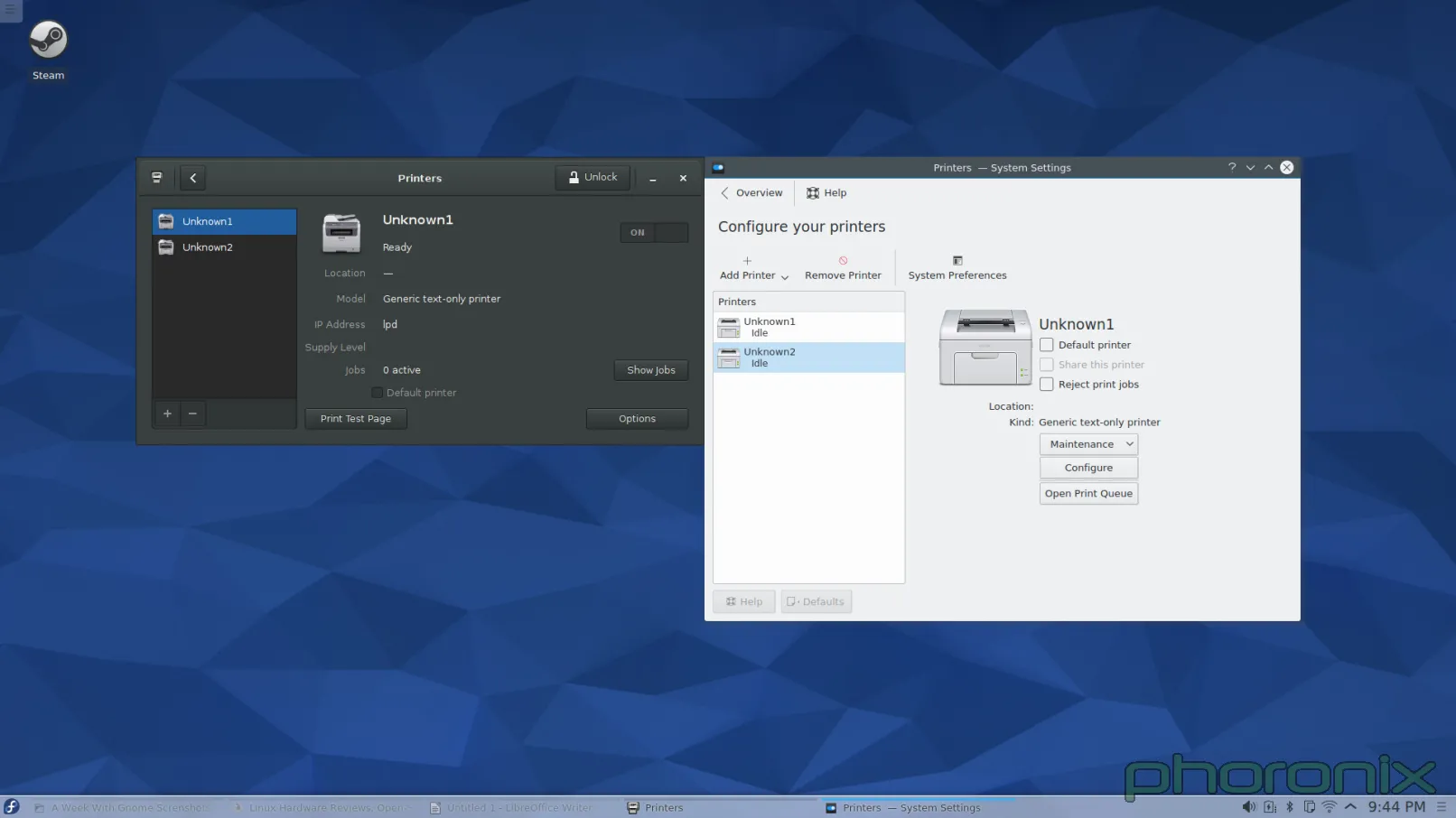

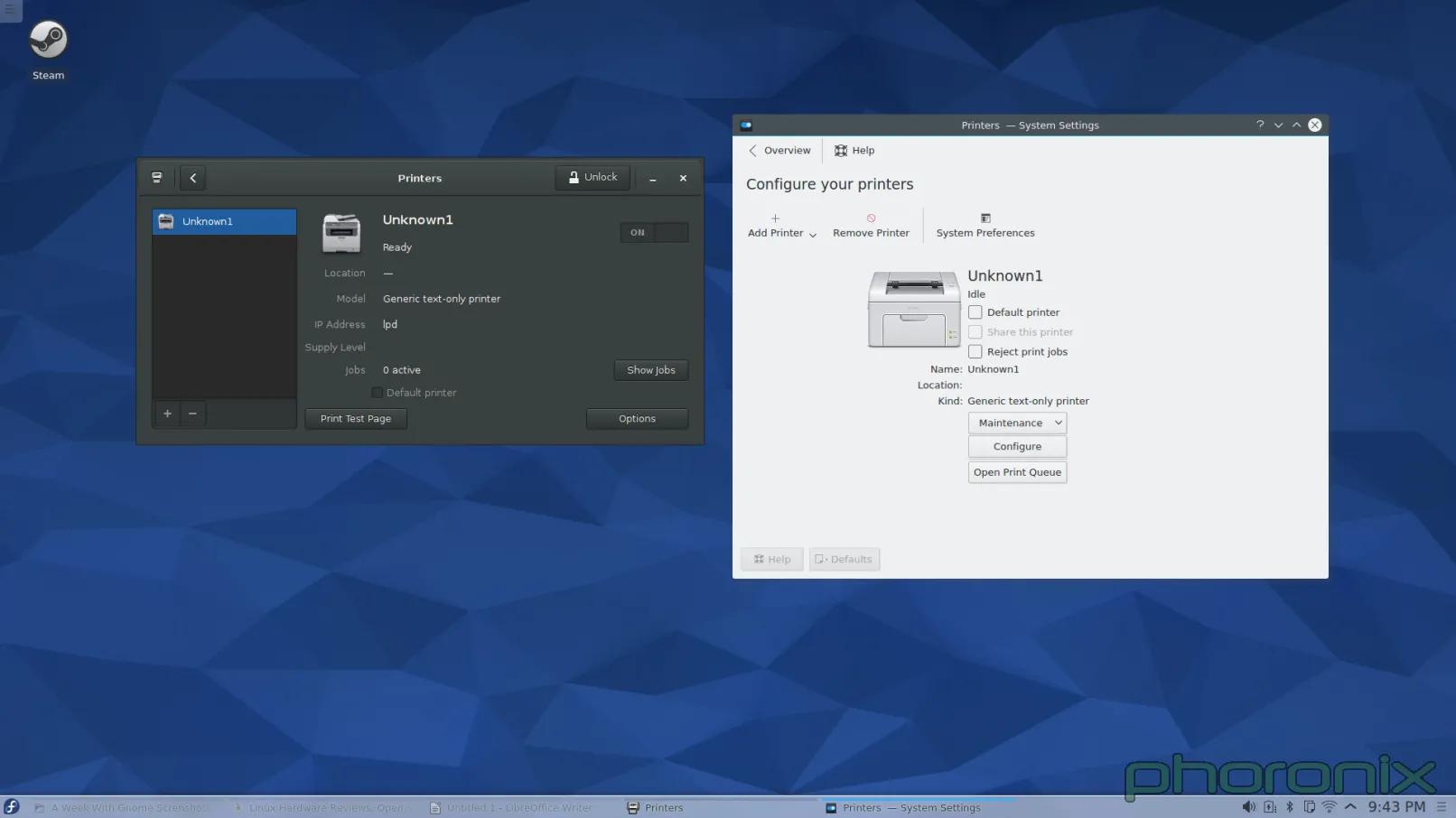

GNOME 在左,KDE 在右。你知道左边跟右边的打印程序有什么区别吗?当我在 GNOME 控制中心打开“打印机”时,程序窗口弹出来了,然后这样就可以使用了。而当我在 KDE 系统设置打开“打印机”时,我得到了一条密码提示。甚至我都没能看一眼打印机呢,我就必须先交出 ROOT 密码。

|

||||

|

||||

让我再重复一遍。在今天这个有了 PolicyKit 和 Logind 的日子里,对一个应该是 sudo 的操作,我依然被询问要求 ROOT 的密码。我安装系统的时候甚至都没设置 root 密码。所以我必须跑到 Konsole 去,接着运行 'sudo passwd root' 命令,这样我才能给 root 设一个密码,然后我才能回到系统设置中的打印程序,再交出 root 密码,然后仅仅是看一看哪些打印机可用。完成了这些工作后,当我点击“添加打印机”时,我再次得到请求 ROOT 密码的提示,当我解决了它后再选择一个打印机和驱动时,我再次得到请求 ROOT 密码的提示。仅仅是为了添加一个打印机到系统我就收到三次密码请求!

|

||||

|

||||

而在 GNOME 下添加打印机,在点击打印机程序中的“解锁”之前,我没有得到任何请求 SUDO 密码的提示。整个过程我只被请求过一次,仅此而已。KDE,求你了……采用 GNOME 的“解锁”模式吧。不到一定需要的时候不要发出提示。还有,不管是哪个库,只要它允许 KDE 应用程序绕过 PolicyKit/Logind(如果有的话)并直接请求 ROOT 权限……那就把它封进箱里吧。如果这是个多用户系统,那我要么必须交出 ROOT 密码,要么我必须时时刻刻待命,以免有一个用户需要升级、更改或添加一个新的打印机。而这两种情况都是完全无法接受的。

|

||||

|

||||

有还一件事……

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

这个问题问大家:怎么样看起来更简洁?我在写这篇文章时意识到:当有任何附加的打印机准备好时,Gnome 打印机程序会把过程做得非常简洁,它们在左边上放了一个竖直栏来列出这些打印机。而我在 KDE 中添加第二台打印机时,它突然增加出一个左边栏来。而在添加之前,我脑海中已经有了一个恐怖的画面,它会像图片文件夹显示预览图一样直接在界面里插入另外一个图标。我很高兴也很惊讶的看到我是错的。但是事实是它直接“长出”另外一个从未存在的竖直栏,彻底改变了它的界面布局,而这样也称不上“好”。终究还是一种令人困惑,奇怪而又不直观的设计。

|

||||

|

||||

打印机说得够多了……下一个接受我公开石刑的 KDE 系统设置是?多媒体,即 Phonon。

|

||||

|

||||

|

||||

|

||||

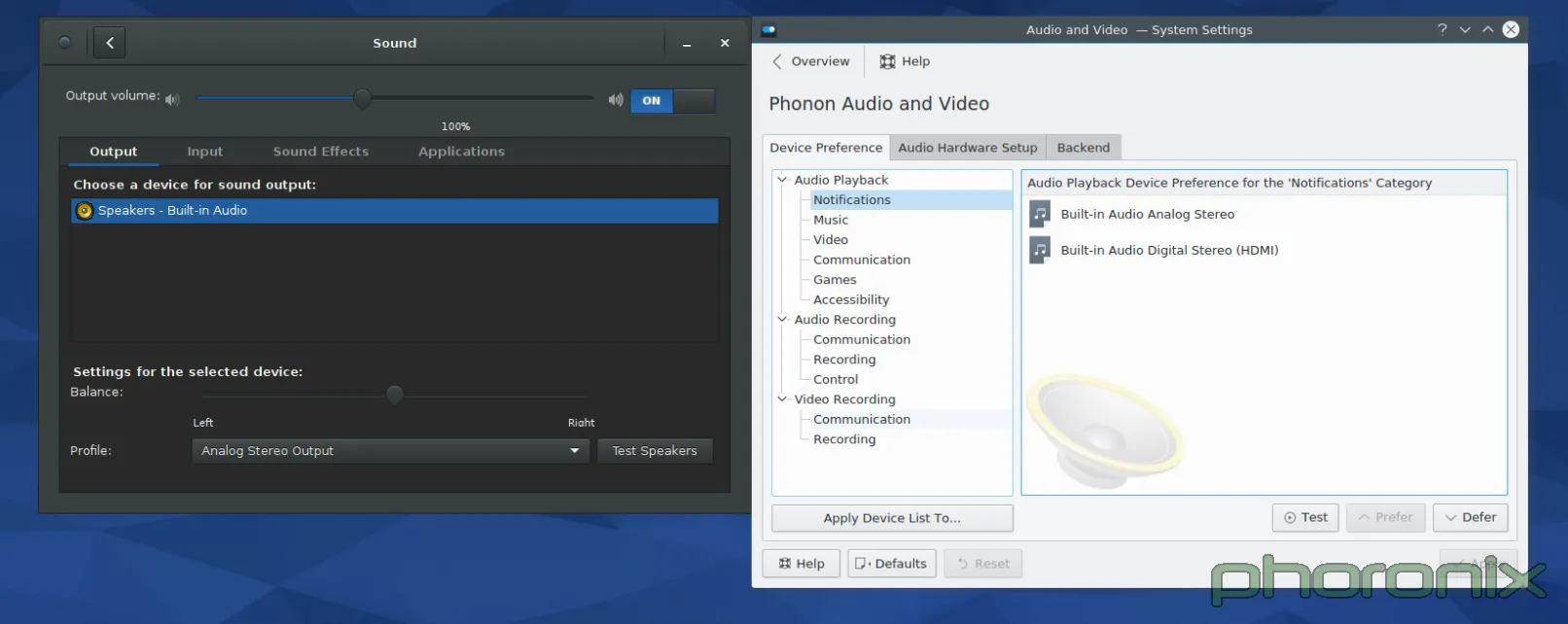

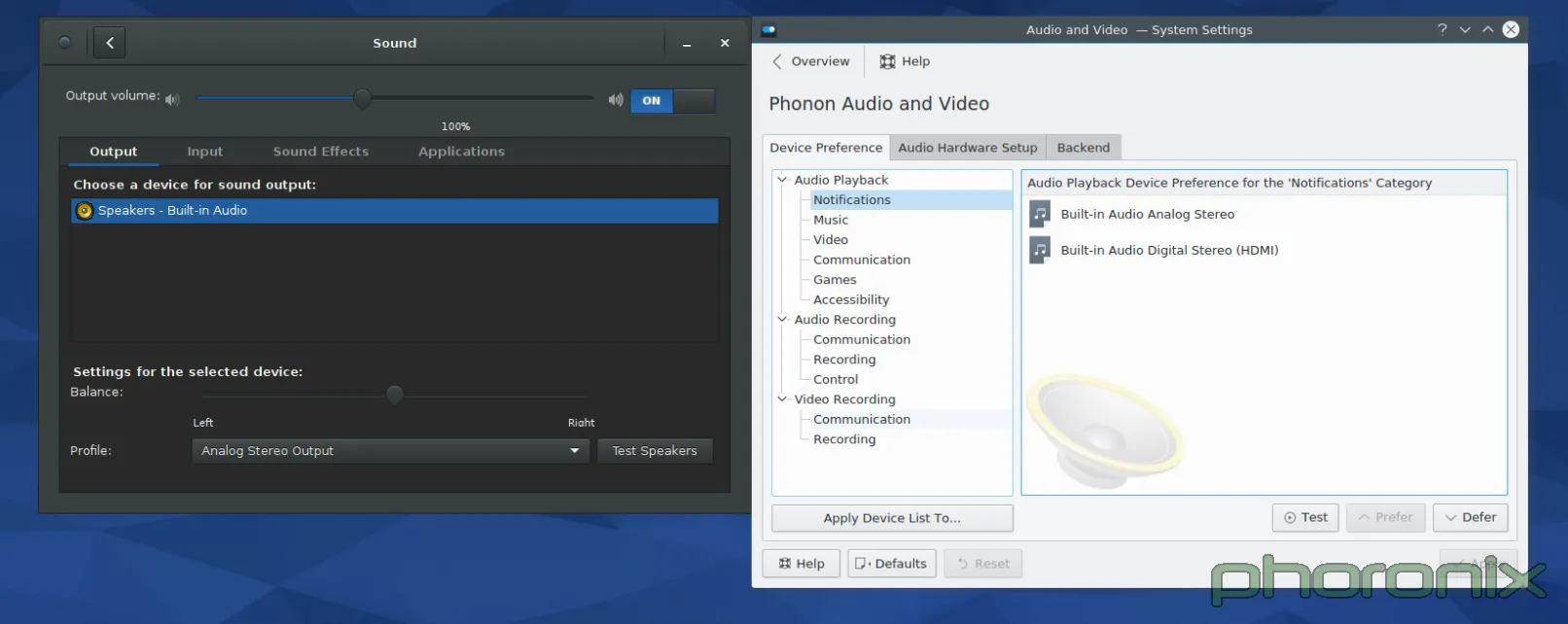

一如既往,GNOME 在左边,KDE 在右边。让我们先看看 GNOME 的系统设置先……眼睛移动是从左到右,从上到下,对吧?来吧,就这样做。首先:音量控制滑条。滑条中的蓝色条与空条百分百清晰地消除了哪边是“音量增加”的困惑。在音量控制条后马上就是一个 On/Off 开关,用来开关静音功能。Gnome 的再次得分在于静音后能记住当前设置的音量,而在点击音量增加按钮取消静音后能回到原来设置的音量中来。Kmixer,你个健忘的垃圾,我真的希望我能多讨论你一下。

|

||||

|

||||

继续!输入输出和应用程序的标签选项?每一个应用程序的音量随时可控?Gnome,每过一秒,我爱你越深。音量均衡选项、声音配置、和清晰地标上标志的“测试麦克风”选项。

|

||||

|

||||

我不清楚它能否以一种更干净更简洁的设计实现。是的,它只是一个 Gnome 化的 Pavucontrol,但我想这就是重要的地方。Pavucontrol 在这方面几乎完全做对了,Gnome 控制中心中的“声音”应用程序的改善使它向完美更进了一步。

|

||||

|

||||

Phonon,该你上了。但开始前我想说:我 TM 看到的是什么?!我知道我看到的是音频设备的优先级列表,但是它呈现的方式有点太坑。还有,那些用户可能关心的那些东西哪去了?拥有一个优先级列表当然很好,它也应该存在,但问题是优先级列表属于那种用户乱搞一两次之后就不会再碰的东西。它还不够重要,或者说不够常用到可以直接放在正中间位置的程度。音量控制滑块呢?对每个应用程序的音量控制功能呢?那些用户使用最频繁的东西呢?好吧,它们在 Kmix 中,一个分离的程序,拥有它自己的配置选项……而不是在系统设置下……这样真的让“系统设置”这个词变得有点用词不当。

|

||||

|

||||

|

||||

|

||||

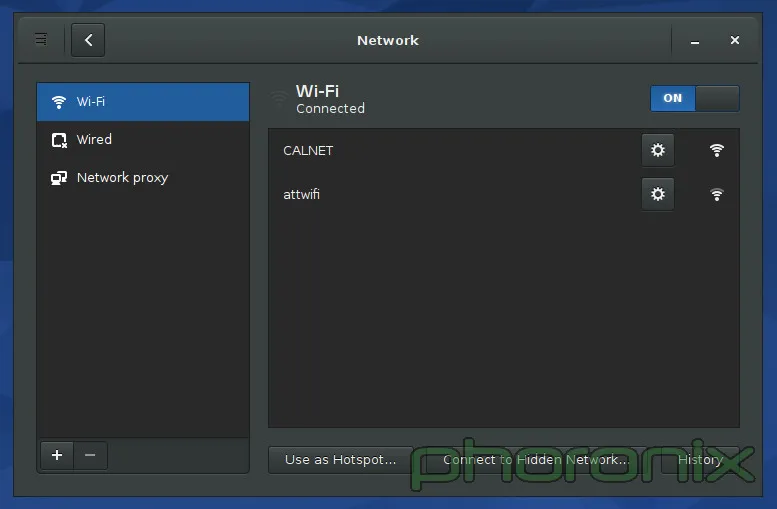

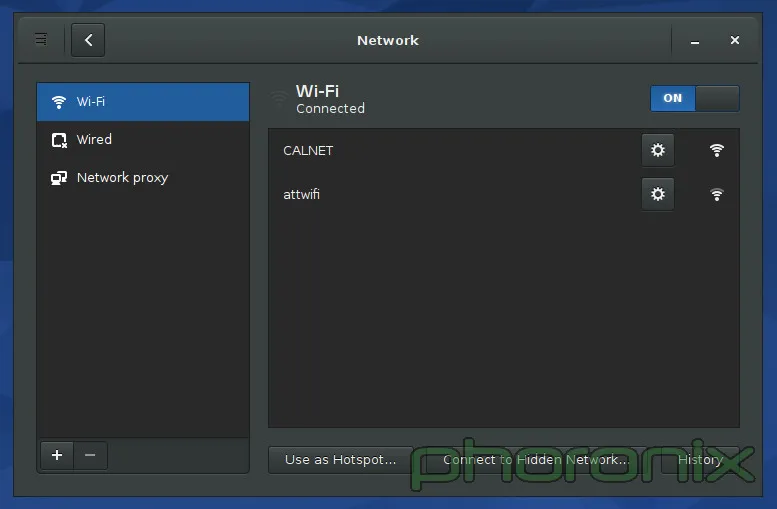

上面展示的 Gnome 的网络设置。KDE 的没有展示,原因就是我接下来要吐槽的内容了。如果你进入 KDE 的系统设置里,然后点击“网络”区域中三个选项中的任何一个,你会得到一大堆的选项:蓝牙设置、Samba 分享的默认用户名和密码(说真的,“连通性(Connectivity)”下面只有两个选项:SMB 的用户名和密码。TMD 怎么就配得上“连通性”这么大的词?),浏览器身份验证控制(只有 Konqueror 能用……一个已经倒闭的项目),代理设置,等等……我的 wifi 设置哪去了?它们没在这。哪去了?好吧,它们在网络应用程序的设置里面……而不是在网络设置里……

|

||||

|

||||

KDE,你这是要杀了我啊,你有“系统设置”当凶器,拿着它动手吧!

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.phoronix.com/scan.php?page=article&item=gnome-week-editorial&num=4

|

||||

|

||||

作者:Eric Griffith

|

||||

译者:[XLCYun](https://github.com/XLCYun)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

@ -0,0 +1,40 @@

|

||||

一周 GNOME 之旅:品味它和 KDE 的是是非非(第三节 总结)

|

||||

================================================================================

|

||||

|

||||

### 用户体验和最后想法 ###

|

||||

|

||||

当 Gnome 2.x 和 KDE 4.x 要正面交锋时……我在它们之间左右逢源。我对它们爱恨交织,但总的来说它们使用起来还算是一种乐趣。然后 Gnome 3.x 来了,带着一场 Gnome Shell 的戏剧。那时我就放弃了 Gnome,我尽我所能的避开它。当时它对用户是不友好的,而且不直观,它打破了原有的设计典范,只为平板的统治世界做准备……而根据平板下跌的销量来看,这样的未来不可能实现。

|

||||

|

||||

在 Gnome 3 后续发布了八个版本后,奇迹发生了。Gnome 变得对对用户友好了,变得直观了。它完美吗?当然不。我还是很讨厌它想推动的那种设计范例,我讨厌它总想给我强加一种工作流(work flow),但是在付出时间和耐心后,这两都能被接受。只要你能够回头去看看 Gnome Shell 那外星人一样的界面,然后开始跟 Gnome 的其它部分(特别是控制中心)互动,你就能发现 Gnome 绝对做对了:细节,对细节的关注!

|

||||

|

||||

人们能适应新的界面设计范例,能适应新的工作流—— iPhone 和 iPad 都证明了这一点——但真正让他们操心的一直是“纸割”——那些不完美的细节。

|

||||

|

||||

它带出了 KDE 和 Gnome 之间最重要的一个区别。Gnome 感觉像一个产品,像一种非凡的体验。你用它的时候,觉得它是完整的,你要的东西都触手可及。它让人感觉就像是一个拥有 Windows 或者 OS X 那样桌面体验的 Linux 桌面版:你要的都在里面,而且它是被同一个目标一致的团队中的同一个人写出来的。天,即使是一个应用程序发出的 sudo 请求都感觉是 Gnome 下的一个特意设计的部分,就像在 Windows 下的一样。而在 KDE 下感觉就是随便一个应用程序都能创建的那种各种外观的弹窗。它不像是以系统本身这样的正式身份停下来说“嘿,有个东西要请求管理员权限!你要给它吗?”。

|

||||

|

||||

KDE 让人体验不到有凝聚力的体验。KDE 像是在没有方向地打转,感觉没有完整的体验。它就像是一堆东西往不同的的方向移动,只不过恰好它们都有一个共同享有的工具包而已。如果开发者对此很开心,那么好吧,他们开心就好,但是如果他们想提供最好体验的话,那么就需要多关注那些小地方了。用户体验跟直观应当做为每一个应用程序的设计中心,应当有一个视野,知道 KDE 要提供什么——并且——知道它看起来应该是什么样的。

|

||||

|

||||

是不是有什么原因阻止我在 KDE 下使用 Gnome 磁盘管理? Rhythmbox 呢? Evolution 呢? 没有,没有,没有。但是这样说又错过了关键。Gnome 和 KDE 都称它们自己为“桌面环境”。那么它们就应该是完整的环境,这意味着他们的各个部件应该汇集并紧密结合在一起,意味着你应该使用它们环境下的工具,因为它们说“您在一个完整的桌面中需要的任何东西,我们都支持。”说真的?只有 Gnome 看起来能符合完整的要求。KDE 在“汇集在一起”这一方面感觉就像个半成品,更不用说提供“完整体验”中你所需要的东西。Gnome 磁盘管理没有相应的对手—— kpartionmanage 要求 ROOT 权限。KDE 不运行“首次用户注册”的过程(原文:No 'First Time User' run through。可能是指系统安装过程中KDE没有创建新用户的过程,译注) ,现在也不过是在 Kubuntu 下引入了一个用户管理器。老天,Gnome 甚至提供了地图、笔记、日历和时钟应用。这些应用都是百分百要紧的吗?不,当然不了。但是正是这些应用帮助 Gnome 推动“Gnome 是一种完整丰富的体验”的想法。

|

||||

|

||||

我吐槽的 KDE 问题并非不可能解决,决对不是这样的!但是它需要人去关心它。它需要开发者为他们的作品感到自豪,而不仅仅是为它们实现的功能而感到自豪——组织的价值可大了去了。别夺走用户设置选项的能力—— GNOME 3.x 就是因为缺乏配置选项的能力而为我所诟病,但别把“好吧,你想怎么设置就怎么设置”作为借口而不提供任何理智的默认设置。默认设置是用户将看到的东西,它们是用户从打开软件的第一刻开始进行评判的关键。给用户留个好印象吧。

|

||||

|

||||

我知道 KDE 开发者们知道设计很重要,这也是为什么VDG(Visual Design Group 视觉设计组)存在的原因,但是感觉好像他们没有让 VDG 充分发挥,所以 KDE 里存在组织上的缺陷。不是 KDE 没办法完整,不是它没办法汇集整合在一起然后解决衰败问题,只是开发者们没做到。他们瞄准了靶心……但是偏了。

|

||||

|

||||

还有,在任何人说这句话之前……千万别说“欢迎给我们提交补丁啊"。因为当我开心的为某个人提交补丁时,只要开发者坚持以他们喜欢的却不直观的方式干事,更多这样的烦人事就会不断发生。这不关 Muon 有没有中心对齐。也不关 Amarok 的界面太丑。也不关每次我敲下快捷键后,弹出的音量和亮度调节窗口占用了我一大块的屏幕“地皮”(说真的,有人会把这些东西缩小)。

|

||||

|

||||

这跟心态的冷漠有关,跟开发者们在为他们的应用设计 UI 时根本就不多加思考有关。KDE 团队做的东西都工作得很好。Amarok 能播放音乐。Dragon 能播放视频。Kwin 或 Qt 和 kdelibs 似乎比 Mutter/gtk 更有力更效率(仅根据我的电池电量消耗计算。非科学性测试)。这些都很好,很重要……但是它们呈现的方式也很重要。甚至可以说,呈现方式是最重要的,因为它是用户看到的并与之交互的东西。

|

||||

|

||||

KDE 应用开发者们……让 VDG 参与进来吧。让 VDG 审查并核准每一个“核心”应用,让一个 VDG 的 UI/UX 专家来设计应用的使用模式和使用流程,以此保证其直观性。真见鬼,不管你们在开发的是啥应用,仅仅把它的模型发到 VDG 论坛寻求反馈甚至都可能都能得到一些非常好的指点跟反馈。你有这么好的资源在这,现在赶紧用吧。

|

||||

|

||||

我不想说得好像我一点都不懂感恩。我爱 KDE,我爱那些志愿者们为了给 Linux 用户一个可视化的桌面而付出的工作与努力,也爱可供选择的 Gnome。正是因为我关心我才写这篇文章。因为我想看到更好的 KDE,我想看到它走得比以前更加遥远。而这样做需要每个人继续努力,并且需要人们不再躲避批评。它需要人们对系统互动及系统崩溃的地方都保持诚实。如果我们不能直言批评,如果我们不说“这真垃圾!”,那么情况永远不会变好。

|

||||

|

||||

这周后我会继续使用 Gnome 吗?可能不。应该不。Gnome 还在试着强迫我接受其工作流,而我不想追随,也不想遵循,因为我在使用它的时候感觉变得不够高效,因为它并不遵循我的思维模式。可是对于我的朋友们,当他们问我“我该用哪种桌面环境?”我可能会推荐 Gnome,特别是那些不大懂技术,只要求“能工作”就行的朋友。根据目前 KDE 的形势来看,这可能是我能说出的最狠毒的评估了。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.phoronix.com/scan.php?page=article&item=gnome-week-editorial&num=5

|

||||

|

||||

作者:Eric Griffith

|

||||

译者:[XLCYun](https://github.com/XLCYun)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

@ -0,0 +1,120 @@

|

||||

如何更新 Linux 内核来提升系统性能

|

||||

================================================================================

|

||||

|

||||

|

||||

目前的 [Linux 内核][1]的开发速度是前所未有的,大概每2到3个月就会有一个主要的版本发布。每个发布都带来几个的新的功能和改进,可以让很多人的处理体验更快、更有效率、或者其它的方面更好。

|

||||

|

||||

问题是,你不能在这些内核发布的时候就用它们,你要等到你的发行版带来新内核的发布。我们先前讲到[定期更新内核的好处][2],所以你不必等到那时。让我们来告诉你该怎么做。

|

||||

|

||||

> 免责声明: 我们先前的一些文章已经提到过,升级内核有(很小)的风险可能会破坏你系统。如果发生这种情况,通常可以通过使用旧内核来使系统保持工作,但是有时还是不行。因此我们对系统的任何损坏都不负责,你得自己承担风险!

|

||||

|

||||

### 预备工作 ###

|

||||

|

||||

要更新你的内核,你首先要确定你使用的是32位还是64位的系统。打开终端并运行:

|

||||

|

||||

uname -a

|

||||

|

||||

检查一下输出的是 x86\_64 还是 i686。如果是 x86\_64,你就运行64位的版本,否则就运行32位的版本。千万记住这个,这很重要。

|

||||

|

||||

|

||||

|

||||

接下来,访问[官方的 Linux 内核网站][3],它会告诉你目前稳定内核的版本。愿意的话,你可以尝试下发布预选版(RC),但是这比稳定版少了很多测试。除非你确定想要需要发布预选版,否则就用稳定内核。

|

||||

|

||||

|

||||

|

||||

### Ubuntu 指导 ###

|

||||

|

||||

对 Ubuntu 及其衍生版的用户而言升级内核非常简单,这要感谢 Ubuntu 主线内核 PPA。虽然,官方把它叫做 PPA,但是你不能像其他 PPA 一样将它添加到你软件源列表中,并指望它自动升级你的内核。实际上,它只是一个简单的网页,你应该浏览并下载到你想要的内核。

|

||||

|

||||

|

||||

|

||||

现在,访问这个[内核 PPA 网页][4],并滚到底部。列表的最下面会含有最新发布的预选版本(你可以在名字中看到“rc”字样),但是这上面就可以看到最新的稳定版(说的更清楚些,本文写作时最新的稳定版是4.1.2。LCTT 译注:这里虽然 4.1.2 是当时的稳定版,但是由于尚未进入 Ubuntu 发行版中,所以文件夹名称为“-unstable”)。点击文件夹名称,你会看到几个选择。你需要下载 3 个文件并保存到它们自己的文件夹中(如果你喜欢的话可以放在下载文件夹中),以便它们与其它文件相隔离:

|

||||

|

||||

1. 针对架构的含“generic”(通用)的头文件(我这里是64位,即“amd64”)

|

||||

2. 放在列表中间,在文件名末尾有“all”的头文件

|

||||

3. 针对架构的含“generic”内核文件(再说一次,我会用“amd64”,但是你如果用32位的,你需要使用“i686”)

|

||||

|

||||

你还可以在下面看到含有“lowlatency”(低延时)的文件。但最好忽略它们。这些文件相对不稳定,并且只为那些通用文件不能满足像音频录制这类任务想要低延迟的人准备的。再说一次,首选通用版,除非你有特定的任务需求不能很好地满足。一般的游戏和网络浏览不是使用低延时版的借口。

|

||||

|

||||

你把它们放在各自的文件夹下,对么?现在打开终端,使用`cd`命令切换到新创建的文件夹下,如

|

||||

|

||||

cd /home/user/Downloads/Kernel

|

||||

|

||||

接着运行:

|

||||

|

||||

sudo dpkg -i *.deb

|

||||

|

||||

这个命令会标记文件夹中所有的“.deb”文件为“待安装”,接着执行安装。这是推荐的安装方法,因为不可以很简单地选择一个文件安装,它总会报出依赖问题。这这样一起安装就可以避免这个问题。如果你不清楚`cd`和`sudo`是什么。快速地看一下 [Linux 基本命令][5]这篇文章。

|

||||

|

||||

|

||||

|

||||

安装完成后,**重启**你的系统,这时应该就会运行刚安装的内核了!你可以在命令行中使用`uname -a`来检查输出。

|

||||

|

||||

### Fedora 指导 ###

|

||||

|

||||

如果你使用的是 Fedora 或者它的衍生版,过程跟 Ubuntu 很类似。不同的是文件获取的位置不同,安装的命令也不同。

|

||||

|

||||

|

||||

|

||||

查看 [最新 Fedora 内核构建][6]列表。选取列表中最新的稳定版并翻页到下面选择 i686 或者 x86_64 版。这取决于你的系统架构。这时你需要下载下面这些文件并保存到它们对应的目录下(比如“Kernel”到下载目录下):

|

||||

|

||||

- kernel

|

||||

- kernel-core

|

||||

- kernel-headers

|

||||

- kernel-modules

|

||||

- kernel-modules-extra

|

||||

- kernel-tools

|

||||

- perf 和 python-perf (可选)

|

||||

|

||||

如果你的系统是 i686(32位)同时你有 4GB 或者更大的内存,你需要下载所有这些文件的 PAE 版本。PAE 是用于32位系统上的地址扩展技术,它允许你使用超过 3GB 的内存。

|

||||

|

||||

现在使用`cd`命令进入文件夹,像这样

|

||||

|

||||

cd /home/user/Downloads/Kernel

|

||||

|

||||

接着运行下面的命令来安装所有的文件

|

||||

|

||||

yum --nogpgcheck localinstall *.rpm

|

||||

|

||||

最后**重启**你的系统,这样你就可以运行新的内核了!

|

||||

|

||||

#### 使用 Rawhide ####

|

||||

|

||||

另外一个方案是,Fedora 用户也可以[切换到 Rawhide][7],它会自动更新所有的包到最新版本,包括内核。然而,Rawhide 经常会破坏系统(尤其是在早期的开发阶段中),它**不应该**在你日常使用的系统中用。

|

||||

|

||||

### Arch 指导 ###

|

||||

|

||||

[Arch 用户][8]应该总是使用的是最新和最棒的稳定版(或者相当接近的版本)。如果你想要更接近最新发布的稳定版,你可以启用测试库提前2到3周获取到主要的更新。

|

||||

|

||||

要这么做,用[你喜欢的编辑器][9]以`sudo`权限打开下面的文件

|

||||

|

||||

/etc/pacman.conf

|

||||

|

||||

接着取消注释带有 testing 的三行(删除行前面的#号)。如果你启用了 multilib 仓库,就把 multilib-testing 也做相同的事情。如果想要了解更多参考[这个 Arch 的 wiki 界面][10]。

|

||||

|

||||

升级内核并不简单(有意这么做的),但是这会给你带来很多好处。只要你的新内核不会破坏任何东西,你可以享受它带来的性能提升,更好的效率,更多的硬件支持和潜在的新特性。尤其是你正在使用相对较新的硬件时,升级内核可以帮助到你。

|

||||

|

||||

|

||||

**怎么升级内核这篇文章帮助到你了么?你认为你所喜欢的发行版对内核的发布策略应该是怎样的?**。在评论栏让我们知道!

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.makeuseof.com/tag/update-linux-kernel-improved-system-performance/

|

||||

|

||||

作者:[Danny Stieben][a]

|

||||

译者:[geekpi](https://github.com/geekpi)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:http://www.makeuseof.com/tag/author/danny/

|

||||

[1]:http://www.makeuseof.com/tag/linux-kernel-explanation-laymans-terms/

|

||||

[2]:http://www.makeuseof.com/tag/5-reasons-update-kernel-linux/

|

||||

[3]:http://www.kernel.org/

|

||||

[4]:http://kernel.ubuntu.com/~kernel-ppa/mainline/

|

||||

[5]:http://www.makeuseof.com/tag/an-a-z-of-linux-40-essential-commands-you-should-know/

|

||||

[6]:http://koji.fedoraproject.org/koji/packageinfo?packageID=8

|

||||

[7]:http://www.makeuseof.com/tag/bleeding-edge-linux-fedora-rawhide/

|

||||

[8]:http://www.makeuseof.com/tag/arch-linux-letting-you-build-your-linux-system-from-scratch/

|

||||

[9]:http://www.makeuseof.com/tag/nano-vs-vim-terminal-text-editors-compared/

|

||||

[10]:https://wiki.archlinux.org/index.php/Pacman#Repositories

|

||||

@ -1,47 +1,52 @@

|

||||

|

||||

用 CD 创建 ISO,观察用户活动和检查浏览器内存的技巧

|

||||

一些 Linux 小技巧

|

||||

================================================================================

|

||||

我已经写过 [Linux 提示和技巧][1] 系列的一篇文章。写这篇文章的目的是让你知道这些小技巧可以有效地管理你的系统/服务器。

|

||||

|

||||

|

||||

|

||||

在Linux中创建 Cdrom ISO 镜像和监控用户

|

||||

*在Linux中创建 Cdrom ISO 镜像和监控用户*

|

||||

|

||||

在这篇文章中,我们将看到如何使用 CD/DVD 驱动器中加载到的内容来创建 ISO 镜像,打开随机手册页学习,看到登录用户的详细情况和查看浏览器内存使用量,而所有这些完全使用本地工具/命令无任何第三方应用程序/组件。让我们开始吧...

|

||||

在这篇文章中,我们将看到如何使用 CD/DVD 驱动器中载入的碟片来创建 ISO 镜像;打开随机手册页学习;看到登录用户的详细情况和查看浏览器内存使用量,而所有这些完全使用本地工具/命令,无需任何第三方应用程序/组件。让我们开始吧……

|

||||

|

||||

### 用 CD 中创建 ISO 映像 ###

|

||||

### 用 CD 碟片创建 ISO 映像 ###

|

||||

|

||||

我们经常需要备份/复制 CD/DVD 的内容。如果你是在 Linux 平台上,不需要任何额外的软件。所有需要的是进入 Linux 终端。

|

||||

|

||||

要从 CD/DVD 上创建 ISO 镜像,你需要做两件事。第一件事就是需要找到CD/DVD 驱动器的名称。要找到 CD/DVD 驱动器的名称,可以使用以下三种方法。

|

||||

|

||||

**1. 从终端/控制台上运行 lsblk 命令(单个驱动器).**

|

||||

**1. 从终端/控制台上运行 lsblk 命令(列出块设备)**

|

||||

|

||||

$ lsblk

|

||||

|

||||

|

||||

|

||||

找驱动器

|

||||

*找块设备*

|

||||

|

||||

**2.要查看有关 CD-ROM 的信息,可以使用以下命令。**

|

||||

从上图可以看到,sr0 就是你的 cdrom (即 /dev/sr0 )。

|

||||

|

||||

**2. 要查看有关 CD-ROM 的信息,可以使用以下命令**

|

||||

|

||||

$ less /proc/sys/dev/cdrom/info

|

||||

|

||||

|

||||

|

||||

检查 Cdrom 信息

|

||||

*检查 Cdrom 信息*

|

||||

|

||||

从上图可以看到, 设备名称是 sr0 (即 /dev/sr0)。

|

||||

|

||||

**3. 使用 [dmesg 命令][2] 也会得到相同的信息,并使用 egrep 来自定义输出。**

|

||||

|

||||

命令 ‘dmesg‘ 命令的输出/控制内核缓冲区信息。‘egrep‘ 命令输出匹配到的行。选项 -i 和 -color 与 egrep 连用时会忽略大小写,并高亮显示匹配的字符串。

|

||||

命令 ‘dmesg‘ 命令的输出/控制内核缓冲区信息。‘egrep‘ 命令输出匹配到的行。egrep 使用选项 -i 和 -color 时会忽略大小写,并高亮显示匹配的字符串。

|

||||

|

||||

$ dmesg | egrep -i --color 'cdrom|dvd|cd/rw|writer'

|

||||

|

||||

|

||||

|

||||

查找设备信息

|

||||

*查找设备信息*

|

||||

|

||||

一旦知道 CD/DVD 的名称后,在 Linux 上你可以用下面的命令来创建 ISO 镜像。

|

||||

从上图可以看到,设备名称是 sr0 (即 /dev/sr0)。

|

||||

|

||||

一旦知道 CD/DVD 的名称后,在 Linux 上你可以用下面的命令来创建 ISO 镜像(你看,只需要 cat 即可!)。

|

||||

|

||||

$ cat /dev/sr0 > /path/to/output/folder/iso_name.iso

|

||||

|

||||

@ -49,11 +54,11 @@

|

||||

|

||||

|

||||

|

||||

创建 CDROM 的 ISO 映像

|

||||

*创建 CDROM 的 ISO 映像*

|

||||

|

||||

### 随机打开一个手册页 ###

|

||||

|

||||

如果你是 Linux 新人并想学习使用命令行开关,这个修改是为你做的。把下面的代码行添加在`〜/ .bashrc`文件的末尾。

|

||||

如果你是 Linux 新人并想学习使用命令行开关,这个技巧就是给你的。把下面的代码行添加在`〜/ .bashrc`文件的末尾。

|

||||

|

||||

/use/bin/man $(ls /bin | shuf | head -1)

|

||||

|

||||

@ -63,17 +68,19 @@

|

||||

|

||||

|

||||

|

||||

LoadKeys 手册页

|

||||

*LoadKeys 手册页*

|

||||

|

||||

|

||||

|

||||

Zgrep 手册页

|

||||

*Zgrep 手册页*

|

||||

|

||||

希望你知道如何退出手册页浏览——如果你已经厌烦了每次都看到手册页,你可以删除你添加到 `.bashrc`文件中的那几行。

|

||||

|

||||

### 查看登录用户的状态 ###

|

||||

|

||||

了解其他用户正在共享服务器上做什么。

|

||||

|

||||

一般情况下,你是共享的 Linux 服务器的用户或管理员的。如果你担心自己服务器的安全并想要查看哪些用户在做什么,你可以使用命令 'w'。

|

||||

一般情况下,你是共享的 Linux 服务器的用户或管理员的。如果你担心自己服务器的安全并想要查看哪些用户在做什么,你可以使用命令 `w`。

|

||||

|

||||

这个命令可以让你知道是否有人在执行恶意代码或篡改服务器,让他停下或使用其他方法。'w' 是查看登录用户状态的首选方式。

|

||||

|

||||

@ -83,33 +90,33 @@ Zgrep 手册页

|

||||

|

||||

|

||||

|

||||

检查 Linux 用户状态

|

||||

*检查 Linux 用户状态*

|

||||

|

||||

### 查看浏览器的内存使用状况 ###

|

||||

|

||||

最近有不少谈论关于 Google-chrome 内存使用量。如果你想知道一个浏览器的内存用量,你可以列出进程名,PID 和它的使用情况。要检查浏览器的内存使用情况,只需在地址栏输入 “about:memory” 不要带引号。

|

||||

最近有不少谈论关于 Google-chrome 的内存使用量。如要检查浏览器的内存使用情况,只需在地址栏输入 “about:memory”,不要带引号。

|

||||

|

||||

我已经在 Google-Chrome 和 Mozilla 的 Firefox 网页浏览器进行了测试。你可以查看任何浏览器,如果它工作得很好,你可能会承认我们在下面的评论。你也可以杀死浏览器进程在 Linux 终端的进程/服务中。

|

||||

|

||||

在 Google Chrome 中,在地址栏输入 `about:memory`,你应该得到类似下图的东西。

|

||||

在 Google Chrome 中,在地址栏输入 `about:memory`,你应该得到类似下图的东西。

|

||||

|

||||

|

||||

|

||||

查看 Chrome 内存使用状况

|

||||

*查看 Chrome 内存使用状况*

|

||||

|

||||

在Mozilla Firefox浏览器,在地址栏输入 `about:memory`,你应该得到类似下图的东西。

|

||||

|

||||

|

||||

|

||||

查看 Firefox 内存使用状况

|

||||

*查看 Firefox 内存使用状况*

|

||||

|

||||

如果你已经了解它是什么,除了这些选项。要检查内存用量,你也可以点击最左边的 ‘Measure‘ 选项。

|

||||

|

||||

|

||||

|

||||

Firefox 主进程

|

||||

*Firefox 主进程*

|

||||

|

||||

它将通过浏览器树形展示进程内存使用量

|

||||

它将通过浏览器树形展示进程内存使用量。

|

||||

|

||||

目前为止就这样了。希望上述所有的提示将会帮助你。如果你有一个(或多个)技巧,分享给我们,将帮助 Linux 用户更有效地管理他们的 Linux 系统/服务器。

|

||||

|

||||

@ -122,7 +129,7 @@ via: http://www.tecmint.com/creating-cdrom-iso-image-watch-user-activity-in-linu

|

||||

|

||||

作者:[Avishek Kumar][a]

|

||||

译者:[strugglingyouth](https://github.com/strugglingyouth)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

@ -1,7 +1,7 @@

|

||||

Linux有问必答——如何修复Linux上的“ImportError: No module named wxversion”错误

|

||||

Linux有问必答:如何修复“ImportError: No module named wxversion”错误

|

||||

================================================================================

|

||||

|

||||

> **问题** 我试着在[你的Linux发行版]上运行一个Python应用,但是我得到了这个错误"ImportError: No module named wxversion."。我怎样才能解决Python程序中的这个错误呢?

|

||||

> **问题** 我试着在[某某 Linux 发行版]上运行一个 Python 应用,但是我得到了这个错误“ImportError: No module named wxversion.”。我怎样才能解决 Python 程序中的这个错误呢?

|

||||

|

||||

Looking for python... 2.7.9 - Traceback (most recent call last):

|

||||

File "/home/dev/playonlinux/python/check_python.py", line 1, in

|

||||

@ -10,7 +10,8 @@ Linux有问必答——如何修复Linux上的“ImportError: No module named wx

|

||||

failed tests

|

||||

|

||||

该错误表明,你的Python应用是基于GUI的,依赖于一个名为wxPython的缺失模块。[wxPython][1]是一个用于wxWidgets GUI库的Python扩展模块,普遍被C++程序员用来设计GUI应用。该wxPython扩展允许Python开发者在任何Python应用中方便地设计和整合GUI。

|

||||

To solve this import error, you need to install wxPython on your Linux, as described below.

|

||||

|

||||

摇解决这个 import 错误,你需要在你的 Linux 上安装 wxPython,如下:

|

||||

|

||||

### 安装wxPython到Debian,Ubuntu或Linux Mint ###

|

||||

|

||||

@ -40,10 +41,10 @@ via: http://ask.xmodulo.com/importerror-no-module-named-wxversion.html

|

||||

|

||||

作者:[Dan Nanni][a]

|

||||

译者:[GOLinux](https://github.com/GOLinux)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:http://ask.xmodulo.com/author/nanni

|

||||

[1]:http://wxpython.org/

|

||||

[2]:http://xmodulo.com/how-to-set-up-epel-repository-on-centos.html

|

||||

[2]:https://linux.cn/article-2324-1.html

|

||||

@ -1,6 +1,7 @@

|

||||

Darkstat一个基于网络的流量分析器 - 在Linux中安装

|

||||

在 Linux 中安装 Darkstat:基于网页的流量分析器

|

||||

================================================================================

|

||||

Darkstat是一个简易的,基于网络的流量分析程序。它可以在主流的操作系统如Linux、Solaris、MAC、AIX上工作。它以守护进程的形式持续工作在后台并不断地嗅探网络数据并以简单易懂的形式展现在网页上。它可以为主机生成流量报告,鉴别特定主机上哪些端口打开并且兼容IPv6。让我们看下如何在Linux中安装和配置它。

|

||||

|

||||

Darkstat是一个简易的,基于网页的流量分析程序。它可以在主流的操作系统如Linux、Solaris、MAC、AIX上工作。它以守护进程的形式持续工作在后台,不断地嗅探网络数据,以简单易懂的形式展现在它的网页上。它可以为主机生成流量报告,识别特定的主机上哪些端口是打开的,它兼容IPv6。让我们看下如何在Linux中安装和配置它。

|

||||

|

||||

### 在Linux中安装配置Darkstat ###

|

||||

|

||||

@ -20,14 +21,15 @@ Darkstat是一个简易的,基于网络的流量分析程序。它可以在主

|

||||

|

||||

### 配置 Darkstat ###

|

||||

|

||||

为了正确运行这个程序,我恩需要执行一些基本的配置。运行下面的命令用gedit编辑器打开/etc/darkstat/init.cfg文件。

|

||||

为了正确运行这个程序,我们需要执行一些基本的配置。运行下面的命令用gedit编辑器打开/etc/darkstat/init.cfg文件。

|

||||

|

||||

sudo gedit /etc/darkstat/init.cfg

|

||||

|

||||

|

||||

编辑 Darkstat

|

||||

|

||||

修改START_DARKSTAT这个参数为yes,并在“INTERFACE”中提供你的网络接口。确保取消了DIR、PORT、BINDIP和LOCAL这些参数的注释。如果你希望绑定Darkstat到特定的IP,在BINDIP中提供它

|

||||

*编辑 Darkstat*

|

||||

|

||||

修改START_DARKSTAT这个参数为yes,并在“INTERFACE”中提供你的网络接口。确保取消了DIR、PORT、BINDIP和LOCAL这些参数的注释。如果你希望绑定Darkstat到特定的IP,在BINDIP参数中提供它。

|

||||

|

||||

### 启动Darkstat守护进程 ###

|

||||

|

||||

@ -47,7 +49,7 @@ Darkstat是一个简易的,基于网络的流量分析程序。它可以在主

|

||||

|

||||

### 总结 ###

|

||||

|

||||

它是一个占用很少内存的轻量级工具。这个工具流行的原因是简易、易于配置和使用。这是一个对系统管理员而言必须拥有的程序

|

||||

它是一个占用很少内存的轻量级工具。这个工具流行的原因是简易、易于配置使用。这是一个对系统管理员而言必须拥有的程序。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

@ -55,7 +57,7 @@ via: http://linuxpitstop.com/install-darkstat-on-ubuntu-linux/

|

||||

|

||||

作者:[Aun][a]

|

||||

译者:[geekpi](https://github.com/geekpi)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](http://linux.cn/) 荣誉推出

|

||||

|

||||

@ -1,14 +1,15 @@

|

||||

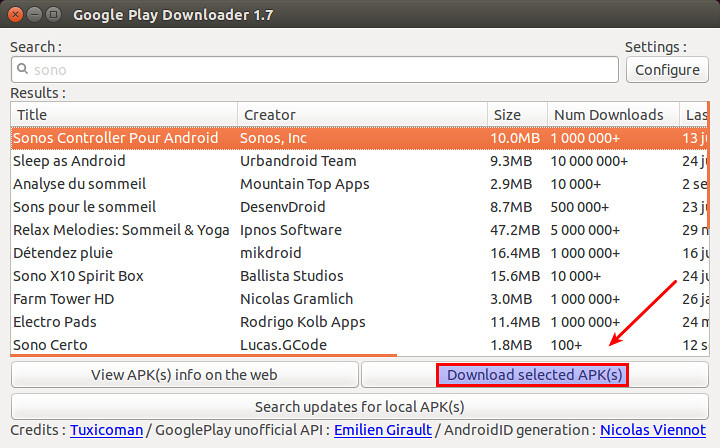

如何在 Linux 中从 Google Play 商店里下载 apk 文件

|

||||

如何在 Linux 上从 Google Play 商店里下载 apk 文件

|

||||

================================================================================

|

||||

假设你想在你的 Android 设备中安装一个 Android 应用,然而由于某些原因,你不能在 Andor 设备上访问 Google Play 商店。接着你该怎么做呢?在不访问 Google Play 商店的前提下安装应用的一种可能的方法是使用其他的手段下载该应用的 APK 文件,然后手动地在 Android 设备上 [安装 APK 文件][1]。

|

||||

|

||||

在非 Android 设备如常规的电脑和笔记本电脑上,有着几种方式来从 Google Play 商店下载到官方的 APK 文件。例如,使用浏览器插件(例如, 针对 [Chrome][2] 或针对 [Firefox][3] 的插件) 或利用允许你使用浏览器下载 APK 文件的在线的 APK 存档等。假如你不信任这些闭源的插件或第三方的 APK 仓库,这里有另一种手动下载官方 APK 文件的方法,它使用一个名为 [GooglePlayDownloader][4] 的开源 Linux 应用。

|

||||

假设你想在你的 Android 设备中安装一个 Android 应用,然而由于某些原因,你不能在 Andord 设备上访问 Google Play 商店(LCTT 译注:显然这对于我们来说是常态)。接着你该怎么做呢?在不访问 Google Play 商店的前提下安装应用的一种可能的方法是,使用其他的手段下载该应用的 APK 文件,然后手动地在 Android 设备上 [安装 APK 文件][1]。

|

||||

|

||||

GooglePlayDownloader 是一个基于 Python 的 GUI 应用,使得你可以从 Google Play 商店上搜索和下载 APK 文件。由于它是完全开源的,你可以放心地使用它。在本篇教程中,我将展示如何在 Linux 环境下,使用 GooglePlayDownloader 来从 Google Play 商店下载 APK 文件。

|

||||

在非 Android 设备如常规的电脑和笔记本电脑上,有着几种方式来从 Google Play 商店下载到官方的 APK 文件。例如,使用浏览器插件(例如,针对 [Chrome][2] 或针对 [Firefox][3] 的插件) 或利用允许你使用浏览器下载 APK 文件的在线的 APK 存档等。假如你不信任这些闭源的插件或第三方的 APK 仓库,这里有另一种手动下载官方 APK 文件的方法,它使用一个名为 [GooglePlayDownloader][4] 的开源 Linux 应用。

|

||||

|

||||

GooglePlayDownloader 是一个基于 Python 的 GUI 应用,它可以让你从 Google Play 商店上搜索和下载 APK 文件。由于它是完全开源的,你可以放心地使用它。在本篇教程中,我将展示如何在 Linux 环境下,使用 GooglePlayDownloader 来从 Google Play 商店下载 APK 文件。

|

||||

|

||||

### Python 需求 ###

|

||||

|

||||

GooglePlayDownloader 需要使用 Python 中 SSL 模块的扩展 SNI(服务器名称指示) 来支持 SSL/TLS 通信,该功能由 Python 2.7.9 或更高版本带来。这使得一些旧的发行版本如 Debian 7 Wheezy 及早期版本,Ubuntu 14.04 及早期版本或 CentOS/RHEL 7 及早期版本均不能满足该要求。假设你已经有了一个带有 Python 2.7.9 或更高版本的发行版本,可以像下面这样接着安装 GooglePlayDownloader。

|

||||

GooglePlayDownloader 需要使用带有 SNI(Server Name Indication 服务器名称指示)的 Python 来支持 SSL/TLS 通信,该功能由 Python 2.7.9 或更高版本引入。这使得一些旧的发行版本如 Debian 7 Wheezy 及早期版本,Ubuntu 14.04 及早期版本或 CentOS/RHEL 7 及早期版本均不能满足该要求。这里假设你已经有了一个带有 Python 2.7.9 或更高版本的发行版本,可以像下面这样接着安装 GooglePlayDownloader。

|

||||

|

||||

### 在 Ubuntu 上安装 GooglePlayDownloader ###

|

||||

|

||||

@ -16,7 +17,7 @@ GooglePlayDownloader 需要使用 Python 中 SSL 模块的扩展 SNI(服务器

|

||||

|

||||

#### 在 Ubuntu 14.10 上 ####

|

||||

|

||||

下载 [python-ndg-httpsclient][5] deb 软件包,这在旧一点的 Ubuntu 发行版本中是一个缺失的依赖。同时还要下载 GooglePlayDownloader 的官方 deb 软件包。

|

||||

下载 [python-ndg-httpsclient][5] deb 软件包,这是一个较旧的 Ubuntu 发行版本中缺失的依赖。同时还要下载 GooglePlayDownloader 的官方 deb 软件包。

|

||||

|

||||

$ wget http://mirrors.kernel.org/ubuntu/pool/main/n/ndg-httpsclient/python-ndg-httpsclient_0.3.2-1ubuntu4_all.deb

|

||||

$ wget http://codingteam.net/project/googleplaydownloader/download/file/googleplaydownloader_1.7-1_all.deb

|

||||

@ -64,7 +65,7 @@ GooglePlayDownloader 需要使用 Python 中 SSL 模块的扩展 SNI(服务器

|

||||

|

||||

### 使用 GooglePlayDownloader 从 Google Play 商店下载 APK 文件 ###

|

||||

|

||||

一旦你安装好 GooglePlayDownloader 后,你就可以像下面那样从 Google Play 商店下载 APK 文件。

|

||||

一旦你安装好 GooglePlayDownloader 后,你就可以像下面那样从 Google Play 商店下载 APK 文件。(LCTT 译注:显然你需要让你的 Linux 能爬梯子)

|

||||

|

||||

首先通过输入下面的命令来启动该应用:

|

||||

|

||||

@ -76,7 +77,7 @@ GooglePlayDownloader 需要使用 Python 中 SSL 模块的扩展 SNI(服务器

|

||||

|

||||

|

||||

|

||||

一旦你从搜索列表中找到了该应用,就选择该应用,接着点击 "下载选定的 APK 文件" 按钮。最后你将在你的家目录中找到下载的 APK 文件。现在,你就可以将下载到的 APK 文件转移到你所选择的 Android 设备上,然后手动安装它。

|

||||

一旦你从搜索列表中找到了该应用,就选择该应用,接着点击 “下载选定的 APK 文件” 按钮。最后你将在你的家目录中找到下载的 APK 文件。现在,你就可以将下载到的 APK 文件转移到你所选择的 Android 设备上,然后手动安装它。

|

||||

|

||||

希望这篇教程对你有所帮助。

|

||||

|

||||

@ -86,7 +87,7 @@ via: http://xmodulo.com/download-apk-files-google-play-store.html

|

||||

|

||||

作者:[Dan Nanni][a]

|

||||

译者:[FSSlc](https://github.com/FSSlc)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

@ -0,0 +1,63 @@

|

||||

Ubuntu 有望让你安装最新 Nvidia Linux 驱动更简单

|

||||

================================================================================

|

||||

|

||||

|

||||

*Ubuntu 上的游戏玩家在增长——因而需要最新版驱动*

|

||||

|

||||

**在 Ubuntu 上安装上游的 NVIDIA 图形驱动即将变得更加容易。**

|

||||

|

||||

Ubuntu 开发者正在考虑构建一个全新的'官方' PPA,以便为桌面用户分发最新的闭源 NVIDIA 二进制驱动。

|

||||

|

||||

该项改变会让 Ubuntu 游戏玩家收益,并且*不会*给其它人造成 OS 稳定性方面的风险。

|

||||

|

||||

**仅**当用户明确选择它时,新的上游驱动将通过这个新 PPA 安装并更新。其他人将继续得到并使用更近的包含在 Ubuntu 归档中的稳定版 NVIDIA Linux 驱动快照。

|

||||

|

||||

### 为什么需要该项目? ###

|

||||

|

||||

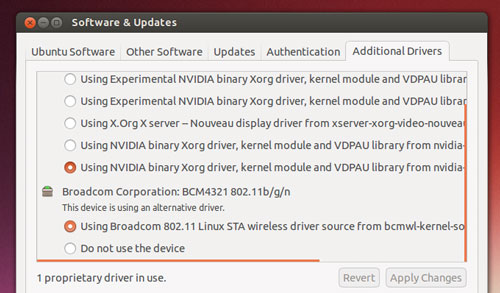

|

||||

|

||||

*Ubuntu 提供了驱动——但是它们不是最新的*

|

||||

|

||||

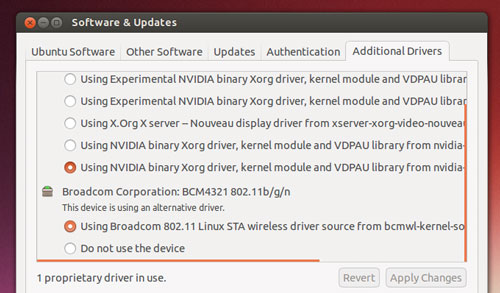

可以从归档中(使用命令行、synaptic,或者通过额外驱动工具)安装到 Ubuntu 上的闭源 NVIDIA 图形驱动在大多数情况下都能工作得很好,并且可以轻松地处理 Unity 桌面外壳的混染。

|

||||

|

||||

但对于游戏需求而言,那完全是另外一码事儿。

|

||||

|

||||

如果你想要将最高帧率和 HD 纹理从最新流行的 Steam 游戏中压榨出来,你需要最新的二进制驱动文件。

|

||||

|

||||

驱动越新,越可能支持最新的特性和技术,或者带有预先打包的游戏专门的优化和漏洞修复。

|

||||

|

||||

问题在于,在 Ubuntu 上安装最新 Nvidia Linux 驱动不是件容易的事儿,而且也不具安全保证。

|

||||

|

||||

要填补这个空白,许多由热心人维护的第三方 PPA 就出现了。由于许多这些 PPA 也发布了其它实验性的或者前沿软件,它们的使用**并不是毫无风险的**。添加一个前沿的 PPA 通常是搞崩整个系统的最快的方式!

|

||||

|

||||

一个解决方法是,让 Ubuntu 用户安装最新的专有图形驱动以满足对第三方 PPA 的需要,**但是**提供一个安全机制,如果有需要,你可以回滚到稳定版本。

|

||||

|

||||

### ‘对全新驱动的需求难以忽视’ ###

|

||||

|

||||

> '一个让Ubuntu用户安全地获得最新硬件驱动的解决方案出现了。'

|

||||

|

||||

‘在快速发展的市场中,对全新驱动的需求正变得难以忽视,用户将想要最新的上游软件,’卡斯特罗在一封给 Ubuntu 桌面邮件列表的电子邮件中解释道。

|

||||

|

||||

‘[NVIDIA] 可以毫不费力为 [Windows 10] 用户带来了不起的体验。直到我们可以说服 NVIDIA 在 Ubuntu 中做了同样的工作,这样我们就可以搞定这一切了。’

|

||||

|

||||

卡斯特罗的“官方的” NVIDIA PPA 方案就是最实现这一目的的最容易的方式。

|

||||

|

||||

游戏玩家将可以在 Ubuntu 的默认专有软件驱动工具中选择接收来自该 PPA 的新驱动,再也不需要它们从网站或维基页面拷贝并粘贴终端命令了。

|

||||

|

||||

该 PPA 内的驱动将由一个选定的社区成员组成的团队打包并维护,并受惠于一个名为**自动化测试**的半官方方式。

|

||||

|

||||

就像卡斯特罗自己说的那样:'人们想要最新的闪光的东西,而不管他们想要做什么。我们也许也要在其周围放置一个框架,因此人们可以获得他们所想要的,而不必破坏他们的计算机。'

|

||||

|

||||

**你想要使用这个 PPA 吗?你怎样来评估 Ubuntu 上默认 Nvidia 驱动的性能呢?在评论中分享你的想法吧,伙计们!**

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.omgubuntu.co.uk/2015/08/ubuntu-easy-install-latest-nvidia-linux-drivers

|

||||

|

||||

作者:[Joey-Elijah Sneddon][a]

|

||||

译者:[GOLinux](https://github.com/GOLinux)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:https://plus.google.com/117485690627814051450/?rel=author

|

||||

@ -0,0 +1,66 @@

|

||||

Ubuntu NVIDIA 显卡驱动 PPA 已经做好准备

|

||||

================================================================================

|

||||

|

||||

|

||||

加速你的帧率!

|

||||

|

||||

**嘿,各位,稍安勿躁,很快就好。**

|

||||

|

||||

就在提议开发一个[新的 PPA][1] 来给 Ubuntu 用户们提供最新的 NVIDIA 显卡驱动后不久,ubuntu 社区的人们又集结起来了,就是为了这件事。

|

||||

|

||||

顾名思义,‘**Graphics Drivers PPA**’ 包含了最新的 NVIDIA Linux 显卡驱动发布,已经打包好可供用户升级使用,没有让人头疼的二进制运行时文件!

|

||||

|

||||

这个 PPA 被设计用来让玩家们尽可能方便地在 Ubuntu 上运行最新款的游戏。

|

||||

|

||||

#### 万事俱备,只欠东风 ####

|

||||

|

||||

Jorge Castro 开发一个包含 NVIDIA 最新显卡驱动的 PPA 神器的想法得到了 Ubuntu 用户和广大游戏开发者的热烈响应。

|

||||

|

||||

就连那些致力于将“Steam平台”上的知名大作移植到 Linux 上的人们,也给了不少建议。

|

||||

|

||||

Edwin Smith,Feral Interactive 公司(‘Shadow of Mordor’) 的产品总监,对于“让用户更方便地更新驱动”的倡议表示非常欣慰。

|

||||

|

||||

### 如何使用最新的 Nvidia Drivers PPA###

|

||||

|

||||

虽然新的“显卡PPA”已经开发出来,但是现在还远远达不到成熟。开发者们提醒到:

|

||||

|

||||

> “这个 PPA 还处于测试阶段,在你使用它之前最好有一些打包的经验。请大家稍安勿躁,再等几天。”

|

||||

|

||||

将 PPA 试发布给 Ubuntu desktop 邮件列表的 Jorge,也强调说,使用现行的一些 PPA(比如 xorg-edgers)的玩家可能发现不了什么区别(因为现在的驱动只不过是把内容从其他那些现存驱动拷贝过来了)

|

||||

|

||||

“新驱动发布的时候,好戏才会上演呢,”他说。

|

||||

|

||||

截至写作本文时为止,这个 PPA 囊括了从 Ubuntu 12.04.1 到 15.10 各个版本的 Nvidia 驱动。注意这些驱动对所有的发行版都适用。

|

||||

|

||||

> **毫无疑问,除非你清楚自己在干些什么,并且知道如果出了问题应该怎么撤销,否则就不要进行下面的操作。**

|

||||

|

||||

新打开一个终端窗口,运行下面的命令加入 PPA:

|

||||

|

||||

sudo add-apt-repository ppa:graphics-drivers/ppa

|

||||

|

||||

安装或更新到最新的 Nvidia 显卡驱动:

|

||||

|

||||

sudo apt-get update && sudo apt-get install nvidia-355

|

||||

|

||||

记住:如果PPA把你的系统弄崩了,你可得自己去想办法,我们提醒过了哦。(译者注:切记!)

|

||||

|

||||

如果想要撤销对PPA的改变,使用 `ppa-purge` 命令。

|

||||

|

||||

有什么意见,想法,或者指正,就在下面的评论栏里写下来吧。(我没有 NVIDIA 的硬件来为我自己验证上面的这些东西,如果你可以验证的话,那就太感谢了。)

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.omgubuntu.co.uk/2015/08/ubuntu-nvidia-graphics-drivers-ppa-is-ready-for-action

|

||||

|

||||

作者:[Joey-Elijah Sneddon][a]

|

||||

译者:[DongShuaike](https://github.com/DongShuaike)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](http://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:https://plus.google.com/117485690627814051450/?rel=author

|

||||

[1]:https://linux.cn/article-6030-1.html

|

||||

|

||||

|

||||

|

||||

|

||||

@ -0,0 +1,158 @@

|

||||

shellinabox:一款使用 AJAX 的基于 Web 的终端模拟器

|

||||

================================================================================

|

||||

|

||||

### shellinabox简介 ###

|

||||

|

||||

通常情况下,我们在访问任何远程服务器时,会使用常见的通信工具如OpenSSH和Putty等。但是,有可能我们在防火墙后面不能使用这些工具访问远程系统,或者防火墙只允许HTTPS流量才能通过。不用担心!即使你在这样的防火墙后面,我们依然有办法来访问你的远程系统。而且,你不需要安装任何类似于OpenSSH或Putty的通讯工具。你只需要有一个支持JavaScript和CSS的现代浏览器,并且你不用安装任何插件或第三方应用软件。

|

||||

|

||||

这个 **Shell In A Box**,发音是**shellinabox**,是由**Markus Gutschke**开发的一款自由开源的基于Web的Ajax的终端模拟器。它使用AJAX技术,通过Web浏览器提供了类似原生的 Shell 的外观和感受。

|

||||

|

||||

这个**shellinaboxd**守护进程实现了一个Web服务器,能够侦听指定的端口。其Web服务器可以发布一个或多个服务,这些服务显示在用 AJAX Web 应用实现的VT100模拟器中。默认情况下,端口为4200。你可以更改默认端口到任意选择的任意端口号。在你的远程服务器安装shellinabox以后,如果你想从本地系统接入,打开Web浏览器并导航到:**http://IP-Address:4200/**。输入你的用户名和密码,然后就可以开始使用你远程系统的Shell。看起来很有趣,不是吗?确实 有趣!

|

||||

|

||||

**免责声明**:

|

||||

|

||||

shellinabox不是SSH客户端或任何安全软件。它仅仅是一个应用程序,能够通过Web浏览器模拟一个远程系统的Shell。同时,它和SSH没有任何关系。这不是可靠的安全地远程控制您的系统的方式。这只是迄今为止最简单的方法之一。无论如何,你都不应该在任何公共网络上运行它。

|

||||

|

||||

### 安装shellinabox ###

|

||||

|

||||

#### 在Debian / Ubuntu系统上: ####

|

||||

|

||||

shellinabox在默认库是可用的。所以,你可以使用命令来安装它:

|

||||

|

||||

$ sudo apt-get install shellinabox

|

||||

|

||||

#### 在RHEL / CentOS系统上: ####

|

||||

|

||||

首先,使用命令安装EPEL仓库:

|

||||

|

||||

# yum install epel-release

|

||||

|

||||

然后,使用命令安装shellinabox:

|

||||

|

||||

# yum install shellinabox

|

||||

|

||||

完成!

|

||||

|

||||

### 配置shellinabox ###

|

||||

|

||||

正如我之前提到的,shellinabox侦听端口默认为**4200**。你可以将此端口更改为任意数字,以防别人猜到。

|

||||

|

||||

在Debian/Ubuntu系统上shellinabox配置文件的默认位置是**/etc/default/shellinabox**。在RHEL/CentOS/Fedora上,默认位置在**/etc/sysconfig/shellinaboxd**。

|

||||

|

||||

如果要更改默认端口,

|

||||

|

||||

在Debian / Ubuntu:

|

||||

|

||||

$ sudo vi /etc/default/shellinabox

|

||||

|

||||

在RHEL / CentOS / Fedora:

|

||||

|

||||

# vi /etc/sysconfig/shellinaboxd

|

||||

|

||||

更改你的端口到任意数字。因为我在本地网络上测试它,所以我使用默认值。

|

||||

|

||||

# Shell in a box daemon configuration

|

||||

# For details see shellinaboxd man page

|

||||

|

||||

# Basic options

|

||||

USER=shellinabox

|

||||

GROUP=shellinabox

|

||||

CERTDIR=/var/lib/shellinabox

|

||||

PORT=4200

|

||||

OPTS="--disable-ssl-menu -s /:LOGIN"

|

||||

|

||||

# Additional examples with custom options:

|

||||

|

||||

# Fancy configuration with right-click menu choice for black-on-white:

|

||||

# OPTS="--user-css Normal:+black-on-white.css,Reverse:-white-on-black.css --disable-ssl-menu -s /:LOGIN"

|

||||

|

||||

# Simple configuration for running it as an SSH console with SSL disabled:

|

||||

# OPTS="-t -s /:SSH:host.example.com"

|

||||

|

||||

重启shelinabox服务。

|

||||

|

||||

**在Debian/Ubuntu:**

|

||||

|

||||

$ sudo systemctl restart shellinabox

|

||||

|

||||

或者

|

||||

|

||||

$ sudo service shellinabox restart

|

||||

|

||||

在RHEL/CentOS系统,运行下面的命令能在每次重启时自动启动shellinaboxd服务

|

||||

|

||||

# systemctl enable shellinaboxd

|

||||

|

||||

或者

|

||||

|

||||

# chkconfig shellinaboxd on

|

||||

|

||||

如果你正在运行一个防火墙,记得要打开端口**4200**或任何你指定的端口。

|

||||

|

||||

例如,在RHEL/CentOS系统,你可以如下图所示允许端口。

|

||||

|

||||

# firewall-cmd --permanent --add-port=4200/tcp

|

||||

|

||||

----------

|

||||

|

||||

# firewall-cmd --reload

|

||||

|

||||

### 使用 ###

|

||||

|

||||

现在,在你的客户端系统,打开Web浏览器并导航到:**https://ip-address-of-remote-servers:4200**。

|

||||

|

||||

**注意**:如果你改变了端口,请填写修改后的端口。

|

||||

|

||||

你会得到一个证书问题的警告信息。接受该证书并继续。

|

||||

|

||||

|

||||

|

||||

输入远程系统的用户名和密码。现在,您就能够从浏览器本身访问远程系统的外壳。

|

||||

|

||||

|

||||

|

||||

右键点击你浏览器的空白位置。你可以得到一些有很有用的额外菜单选项。

|

||||

|

||||

|

||||

|

||||

从现在开始,你可以通过本地系统的Web浏览器在你的远程服务器随意操作。

|

||||

|

||||

当你完成工作时,记得输入`exit`退出。

|

||||

|

||||

当再次连接到远程系统时,单击**连接**按钮,然后输入远程服务器的用户名和密码。

|

||||

|

||||

|

||||

|

||||

如果想了解shellinabox更多细节,在你的终端键入下面的命令:

|

||||

|

||||

# man shellinabox

|

||||

|

||||

或者

|

||||

|

||||

# shellinaboxd -help

|

||||

|

||||

同时,参考[shellinabox 在wiki页面的介绍][1],来了解shellinabox的综合使用细节。

|

||||

|

||||

### 结论 ###

|

||||

|

||||

正如我之前提到的,如果你在服务器运行在防火墙后面,那么基于web的SSH工具是非常有用的。有许多基于web的SSH工具,但shellinabox是非常简单而有用的工具,可以从的网络上的任何地方,模拟一个远程系统的Shell。因为它是基于浏览器的,所以你可以从任何设备访问您的远程服务器,只要你有一个支持JavaScript和CSS的浏览器。

|

||||

|

||||

就这些啦。祝你今天有个好心情!

|

||||

|

||||

#### 参考链接: ####

|

||||

|

||||

- [shellinabox website][2]

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.unixmen.com/shellinabox-a-web-based-ajax-terminal-emulator/

|

||||

|

||||

作者:[SK][a]

|

||||

译者:[xiaoyu33](https://github.com/xiaoyu33)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](http://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:http://www.unixmen.com/author/sk/

|

||||

[1]:https://code.google.com/p/shellinabox/wiki/shellinaboxd_man

|

||||

[2]:https://code.google.com/p/shellinabox/

|

||||

@ -0,0 +1,52 @@

|

||||

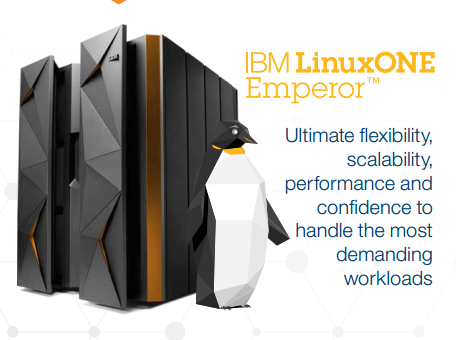

Linux Without Limits: IBM Launch LinuxONE Mainframes

|

||||

================================================================================

|

||||

|

||||

|

||||

LinuxONE Emperor MainframeGood news for Ubuntu’s server team today as [IBM launch the LinuxONE][1] a Linux-only mainframe that is also able to run Ubuntu.

|

||||

|

||||

The largest of the LinuxONE systems launched by IBM is called ‘Emperor’ and can scale up to 8000 virtual machines or tens of thousands of containers – a possible record for any one single Linux system.

|

||||

|

||||

The LinuxONE is described by IBM as a ‘game changer’ that ‘unleashes the potential of Linux for business’.

|

||||

|

||||

IBM and Canonical are working together on the creation of an Ubuntu distribution for LinuxONE and other IBM z Systems. Ubuntu will join RedHat and SUSE as ‘premier Linux distributions’ on IBM z.

|

||||

|

||||

Alongside the ‘Emperor’ IBM is also offering the LinuxONE Rockhopper, a smaller mainframe for medium-sized businesses and organisations.

|

||||

|

||||

IBM is the market leader in mainframes and commands over 90% of the mainframe market.

|

||||

|

||||

注:youtube 视频

|

||||

<iframe width="750" height="422" frameborder="0" allowfullscreen="" src="https://www.youtube.com/embed/2ABfNrWs-ns?feature=oembed"></iframe>

|

||||

|

||||

### What Is a Mainframe Computer Used For? ###

|

||||

|

||||

The computer you’re reading this article on would be dwarfed by a ‘big iron’ mainframe. They are large, hulking great cabinets packed full of high-end components, custom designed technology and dizzying amounts of storage (that is data storage, not ample room for pens and rulers).

|

||||

|

||||

Mainframes computers are used by large organizations and businesses to process and store large amounts of data, crunch through statistics, and handle large-scale transaction processing.

|

||||

|

||||

### ‘World’s Fastest Processor’ ###

|

||||

|

||||

IBM has teamed up with Canonical Ltd to use Ubuntu on the LinuxONE and other IBM z Systems.

|

||||

|

||||

The LinuxONE Emperor uses the IBM z13 processor. The chip, announced back in January, is said to be the world’s fastest microprocessor. It is able to deliver transaction response times in the milliseconds.

|

||||

|

||||

But as well as being well equipped to handle for high-volume mobile transactions, the z13 inside the LinuxONE is also an ideal cloud system.

|

||||

|

||||

It can handle more than 50 virtual servers per core for a total of 8000 virtual servers, making it a cheaper, greener and more performant way to scale-out to the cloud.

|

||||

|

||||

**You don’t have to be a CIO or mainframe spotter to appreciate this announcement. The possibilities LinuxONE provides are clear enough. **

|

||||

|

||||

Source: [Reuters (h/t @popey)][2]

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.omgubuntu.co.uk/2015/08/ibm-linuxone-mainframe-ubuntu-partnership

|

||||

|

||||

作者:[Joey-Elijah Sneddon][a]

|

||||

译者:[译者ID](https://github.com/译者ID)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:https://plus.google.com/117485690627814051450/?rel=author

|

||||

[1]:http://www-03.ibm.com/systems/z/announcement.html

|

||||

[2]:http://www.reuters.com/article/2015/08/17/us-ibm-linuxone-idUSKCN0QM09P20150817

|

||||

@ -0,0 +1,46 @@

|

||||

Ubuntu Linux is coming to IBM mainframes

|

||||

================================================================================

|

||||

SEATTLE -- It's finally happened. At [LinuxCon][1], IBM and [Canonical][2] announced that [Ubuntu Linux][3] will soon be running on IBM mainframes.

|

||||

|

||||

|

||||

|

||||

You'll soon to be able to get your IBM mainframe in Ubuntu Linux orange

|

||||

|

||||

According to Ross Mauri, IBM's General Manager of System z, and Mark Shuttleworth, Canonical and Ubuntu's founder, this move came about because of customer demand. For over a decade, [Red Hat Enterprise Linux (RHEL)][4] and [SUSE Linux Enterprise Server (SLES)][5] were the only supported IBM mainframe Linux distributions.

|

||||

|

||||

As Ubuntu matured, more and more businesses turned to it for the enterprise Linux, and more and more of them wanted it on IBM big iron hardware. In particular, banks wanted Ubuntu there. Soon, financial CIOs will have their wish granted.

|

||||

|

||||

In an interview Shuttleworth said that Ubuntu Linux will be available on the mainframe by April 2016 in the next long-term support version of Ubuntu: Ubuntu 16.04. Canonical and IBM already took the first move in this direction in late 2014 by bringing [Ubuntu to IBM's POWER][6] architecture.

|

||||

|

||||

Before that, Canonical and IBM almost signed the dotted line to bring [Ubuntu to IBM mainframes in 2011][7] but that deal was never finalized. This time, it's happening.

|

||||

|

||||

Jane Silber, Canonical's CEO, explained in a statement, "Our [expansion of Ubuntu platform][8] support to [IBM z Systems][9] is a recognition of the number of customers that count on z Systems to run their businesses, and the maturity the hybrid cloud is reaching in the marketplace.

|

||||

|

||||

**Silber continued:**

|

||||

|

||||

> With support of z Systems, including [LinuxONE][10], Canonical is also expanding our relationship with IBM, building on our support for the POWER architecture and OpenPOWER ecosystem. Just as Power Systems clients are now benefiting from the scaleout capabilities of Ubuntu, and our agile development process which results in first to market support of new technologies such as CAPI (Coherent Accelerator Processor Interface) on POWER8, z Systems clients can expect the same rapid rollout of technology advancements, and benefit from [Juju][11] and our other cloud tools to enable faster delivery of new services to end users. In addition, our collaboration with IBM includes the enablement of scale-out deployment of many IBM software solutions with Juju Charms. Mainframe clients will delight in having a wealth of 'charmed' IBM solutions, other software provider products, and open source solutions, deployable on mainframes via Juju.

|

||||

|

||||

Shuttleworth expects Ubuntu on z to be very successful. "It's blazingly fast, and with its support for OpenStack, people who want exceptional cloud region performance will be very happy.

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.zdnet.com/article/ubuntu-linux-is-coming-to-the-mainframe/#ftag=RSSbaffb68

|

||||

|

||||

作者:[Steven J. Vaughan-Nichols][a]

|

||||

译者:[译者ID](https://github.com/译者ID)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:http://www.zdnet.com/meet-the-team/us/steven-j-vaughan-nichols/

|

||||

[1]:http://events.linuxfoundation.org/events/linuxcon-north-america

|

||||

[2]:http://www.canonical.com/

|

||||

[3]:http://www.ubuntu.comj/

|

||||

[4]:http://www.redhat.com/en/technologies/linux-platforms/enterprise-linux

|

||||

[5]:https://www.suse.com/products/server/

|

||||

[6]:http://www.zdnet.com/article/ibm-doubles-down-on-linux/

|

||||

[7]:http://www.zdnet.com/article/mainframe-ubuntu-linux/

|

||||

[8]:https://insights.ubuntu.com/2015/08/17/ibm-and-canonical-plan-ubuntu-support-on-ibm-z-systems-mainframe/

|

||||

[9]:http://www-03.ibm.com/systems/uk/z/

|

||||

[10]:http://www.zdnet.com/article/linuxone-ibms-new-linux-mainframes/

|

||||

[11]:https://jujucharms.com/

|

||||

@ -1,97 +0,0 @@

|

||||

translating by xiaoyu33

|

||||

|

||||

Tickr Is An Open-Source RSS News Ticker for Linux Desktops

|

||||

================================================================================

|

||||

|

||||

|

||||

**Latest! Latest! Read all about it!**

|

||||

|

||||

Alright, so the app we’re highlighting today isn’t quite the binary version of an old newspaper seller — but it is a great way to have the latest news brought to you, on your desktop.

|

||||

|

||||

Tick is a GTK-based news ticker for the Linux desktop that scrolls the latest headlines and article titles from your favourite RSS feeds in horizontal strip that you can place anywhere on your desktop.

|

||||

|

||||

Call me Joey Calamezzo; I put mine on the bottom TV news station style.

|

||||

|

||||

“Over to you, sub-heading.”

|

||||

|

||||

### RSS — Remember That? ###

|

||||

|

||||

“Thanks paragraph ending.”

|

||||

|

||||

In an era of push notifications, social media, and clickbait, cajoling us into reading the latest mind-blowing, humanity saving listicle ASAP, RSS can seem a bit old hat.

|

||||

|

||||

For me? Well, RSS lives up to its name of Really Simple Syndication. It’s the easiest, most manageable way to have news come to me. I can manage and read stuff when I want; there’s no urgency to view lest the tweet vanish into the stream or the push notification vanish.

|

||||

|

||||

The beauty of Tickr is in its utility. You can have a constant stream of news trundling along the bottom of your screen, which you can passively glance at from time to time.

|

||||

|

||||

|

||||

|

||||

There’s no pressure to ‘read’ or ‘mark all read’ or any of that. When you see something you want to read you just click it to open it in a web browser.

|

||||

|

||||

### Setting it Up ###

|

||||

|

||||

|

||||

|

||||

Although Tickr is available to install from the Ubuntu Software Centre it hasn’t been updated for a long time. Nowhere is this sense of abandonment more keenly felt than when opening the unwieldy and unintuitive configuration panel.

|

||||

|

||||

To open it:

|

||||

|

||||

1. Right click on the Tickr bar

|

||||

1. Go to Edit > Preferences

|

||||

1. Adjust the various settings

|

||||

|

||||

Row after row of options and settings, few of which seem to make sense at first. But poke and prod around and you’ll controls for pretty much everything, including:

|

||||

|

||||

- Set scrolling speed

|

||||

- Choose behaviour when mousing over

|

||||

- Feed update frequency

|

||||

- Font, including font sizes and color

|

||||

- Separator character (‘delineator’)

|

||||

- Position of Tickr on screen

|

||||

- Color and opacity of Tickr bar

|

||||

- Choose how many articles each feed displays

|

||||

|

||||

One ‘quirk’ worth mentioning is that pressing the ‘Apply’ only updates the on-screen Tickr to preview changes. For changes to take effect when you exit the Preferences window you need to click ‘OK’.

|

||||

|

||||

Getting the bar to sit flush on your display can also take a fair bit of tweaking, especially on Unity.

|

||||

|

||||

Press the “full width button” to have the app auto-detect your screen width. By default when placed at the top or bottom it leaves a 25px gap (the app was created back in the days of GNOME 2.x desktops). After hitting the top or bottom buttons just add an extra 25 pixels to the input box compensate for this.

|

||||

|

||||

Other options available include: choose which browser articles open in; whether Tickr appears within a regular window frame; whether a clock is shown; and how often the app checks feed for articles.

|

||||

|

||||

#### Adding Feeds ####

|

||||

|

||||

Tickr comes with a built-in list of over 30 different feeds, ranging from technology blogs to mainstream news services.

|

||||

|

||||

|

||||

|

||||

You can select as many of these as you like to show headlines in the on screen ticker. If you want to add your own feeds you can: –

|

||||

|

||||

1. Right click on the Tickr bar

|

||||

1. Go to File > Open Feed

|

||||

1. Enter Feed URL

|

||||

1. Click ‘Add/Upd’ button

|

||||

1. Click ‘OK (select)’

|

||||

|

||||

To set how many items from each feed shows in the ticker change the “Read N items max per feed” in the other preferences window.

|

||||

|

||||

### Install Tickr in Ubuntu 14.04 LTS and Up ###

|

||||

|

||||

So that’s Tickr. It’s not going to change the world but it will keep you abreast of what’s happening in it.

|

||||

|

||||

To install it in Ubuntu 14.04 LTS or later head to the Ubuntu Software Centre but clicking the button below.

|

||||

|

||||

- [Click to install Tickr form the Ubuntu Software Center][1]

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.omgubuntu.co.uk/2015/06/tickr-open-source-desktop-rss-news-ticker

|

||||

|

||||

作者:[Joey-Elijah Sneddon][a]

|

||||

译者:[译者ID](https://github.com/译者ID)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:https://plus.google.com/117485690627814051450/?rel=author

|

||||

[1]:apt://tickr

|

||||

117

sources/share/20150817 Top 5 Torrent Clients For Ubuntu Linux.md

Normal file

117

sources/share/20150817 Top 5 Torrent Clients For Ubuntu Linux.md

Normal file

@ -0,0 +1,117 @@

|

||||

Top 5 Torrent Clients For Ubuntu Linux

|

||||

================================================================================

|

||||

|

||||

|

||||