Mirror of https://github.com/LCTT/TranslateProject.git (synced 2025-03-18 02:00:18 +08:00), commit ddf9652a3b

@ -0,0 +1,52 @@

|

||||

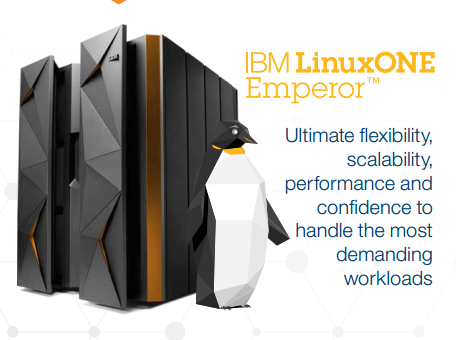

Linux Without Limits: IBM Launch LinuxONE Mainframes

|

||||

================================================================================

|

||||

|

||||

|

||||

LinuxONE Emperor MainframeGood news for Ubuntu’s server team today as [IBM launch the LinuxONE][1] a Linux-only mainframe that is also able to run Ubuntu.

|

||||

|

||||

The largest of the LinuxONE systems launched by IBM is called ‘Emperor’ and can scale up to 8000 virtual machines or tens of thousands of containers – a possible record for any one single Linux system.

|

||||

|

||||

The LinuxONE is described by IBM as a ‘game changer’ that ‘unleashes the potential of Linux for business’.

|

||||

|

||||

IBM and Canonical are working together on the creation of an Ubuntu distribution for LinuxONE and other IBM z Systems. Ubuntu will join RedHat and SUSE as ‘premier Linux distributions’ on IBM z.

|

||||

|

||||

Alongside the ‘Emperor’ IBM is also offering the LinuxONE Rockhopper, a smaller mainframe for medium-sized businesses and organisations.

|

||||

|

||||

IBM is the market leader in mainframes and commands over 90% of the mainframe market.

|

||||

|

||||

注:youtube 视频

|

||||

<iframe width="750" height="422" frameborder="0" allowfullscreen="" src="https://www.youtube.com/embed/2ABfNrWs-ns?feature=oembed"></iframe>

### What Is a Mainframe Computer Used For? ###

The computer you’re reading this article on would be dwarfed by a ‘big iron’ mainframe. Mainframes are large, hulking great cabinets packed full of high-end components, custom-designed technology and dizzying amounts of storage (that is, data storage, not ample room for pens and rulers).

Mainframe computers are used by large organizations and businesses to process and store large amounts of data, crunch through statistics, and handle large-scale transaction processing.

### ‘World’s Fastest Processor’ ###

IBM has teamed up with Canonical Ltd to use Ubuntu on the LinuxONE and other IBM z Systems.

The LinuxONE Emperor uses the IBM z13 processor. The chip, announced back in January, is said to be the world’s fastest microprocessor. It is able to deliver transaction response times measured in milliseconds.

But as well as being well equipped to handle high-volume mobile transactions, the z13 inside the LinuxONE is also an ideal cloud system.

It can handle more than 50 virtual servers per core for a total of 8000 virtual servers, making it a cheaper, greener and more performant way to scale out to the cloud.

**You don’t have to be a CIO or mainframe spotter to appreciate this announcement. The possibilities LinuxONE provides are clear enough.**

Source: [Reuters (h/t @popey)][2]

--------------------------------------------------------------------------------

via: http://www.omgubuntu.co.uk/2015/08/ibm-linuxone-mainframe-ubuntu-partnership

Author: [Joey-Elijah Sneddon][a]

Translator: [Translator ID](https://github.com/译者ID)

Proofreader: [Proofreader ID](https://github.com/校对者ID)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](https://linux.cn/)

[a]:https://plus.google.com/117485690627814051450/?rel=author
[1]:http://www-03.ibm.com/systems/z/announcement.html
[2]:http://www.reuters.com/article/2015/08/17/us-ibm-linuxone-idUSKCN0QM09P20150817

@ -0,0 +1,46 @@

Ubuntu Linux is coming to IBM mainframes
================================================================================

SEATTLE -- It's finally happened. At [LinuxCon][1], IBM and [Canonical][2] announced that [Ubuntu Linux][3] will soon be running on IBM mainframes.

You'll soon be able to get your IBM mainframe in Ubuntu Linux orange

According to Ross Mauri, IBM's General Manager of System z, and Mark Shuttleworth, Canonical and Ubuntu's founder, this move came about because of customer demand. For over a decade, [Red Hat Enterprise Linux (RHEL)][4] and [SUSE Linux Enterprise Server (SLES)][5] were the only supported IBM mainframe Linux distributions.

As Ubuntu matured, more and more businesses turned to it for enterprise Linux, and more and more of them wanted it on IBM big iron hardware. In particular, banks wanted Ubuntu there. Soon, financial CIOs will have their wish granted.

In an interview, Shuttleworth said that Ubuntu Linux will be available on the mainframe by April 2016 in the next long-term support version of Ubuntu: Ubuntu 16.04. Canonical and IBM already made the first move in this direction in late 2014 by bringing [Ubuntu to IBM's POWER][6] architecture.

Before that, Canonical and IBM almost signed on the dotted line to bring [Ubuntu to IBM mainframes in 2011][7], but that deal was never finalized. This time, it's happening.

Jane Silber, Canonical's CEO, explained in a statement, "Our [expansion of Ubuntu platform][8] support to [IBM z Systems][9] is a recognition of the number of customers that count on z Systems to run their businesses, and the maturity the hybrid cloud is reaching in the marketplace."

**Silber continued:**

> With support of z Systems, including [LinuxONE][10], Canonical is also expanding our relationship with IBM, building on our support for the POWER architecture and OpenPOWER ecosystem. Just as Power Systems clients are now benefiting from the scale-out capabilities of Ubuntu, and our agile development process which results in first to market support of new technologies such as CAPI (Coherent Accelerator Processor Interface) on POWER8, z Systems clients can expect the same rapid rollout of technology advancements, and benefit from [Juju][11] and our other cloud tools to enable faster delivery of new services to end users. In addition, our collaboration with IBM includes the enablement of scale-out deployment of many IBM software solutions with Juju Charms. Mainframe clients will delight in having a wealth of 'charmed' IBM solutions, other software provider products, and open source solutions, deployable on mainframes via Juju.

Shuttleworth expects Ubuntu on z to be very successful. "It's blazingly fast, and with its support for OpenStack, people who want exceptional cloud region performance will be very happy."

--------------------------------------------------------------------------------

via: http://www.zdnet.com/article/ubuntu-linux-is-coming-to-the-mainframe/#ftag=RSSbaffb68

Author: [Steven J. Vaughan-Nichols][a]

Translator: [Translator ID](https://github.com/译者ID)

Proofreader: [Proofreader ID](https://github.com/校对者ID)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](https://linux.cn/)

[a]:http://www.zdnet.com/meet-the-team/us/steven-j-vaughan-nichols/
[1]:http://events.linuxfoundation.org/events/linuxcon-north-america
[2]:http://www.canonical.com/
[3]:http://www.ubuntu.com/
[4]:http://www.redhat.com/en/technologies/linux-platforms/enterprise-linux
[5]:https://www.suse.com/products/server/
[6]:http://www.zdnet.com/article/ibm-doubles-down-on-linux/
[7]:http://www.zdnet.com/article/mainframe-ubuntu-linux/
[8]:https://insights.ubuntu.com/2015/08/17/ibm-and-canonical-plan-ubuntu-support-on-ibm-z-systems-mainframe/
[9]:http://www-03.ibm.com/systems/uk/z/
[10]:http://www.zdnet.com/article/linuxone-ibms-new-linux-mainframes/
[11]:https://jujucharms.com/

@ -0,0 +1,344 @@

A Linux User Using ‘Windows 10’ After More than 8 Years – See Comparison
================================================================================

Windows 10 is the newest member of the Windows NT family; it became generally available on July 29, 2015. It is the successor to Windows 8.1. Windows 10 is supported on 32-bit Intel architecture (IA-32), AMD64 and ARMv7 processors.

Windows 10 and Linux Comparison

As a Linux user for more than 8 continuous years, I thought I would test Windows 10, as it is making a lot of news these days. This article is a walkthrough of my observations. I will look at everything from the perspective of a Linux user, so you may find it a bit biased towards Linux, but it contains absolutely no false information.

1. I searched Google with the text “download windows 10” and clicked the first link.

Search Windows 10

You may also go directly to the link: [https://www.microsoft.com/en-us/software-download/windows10ISO][1]

2. I was then asked to select an edition: ‘Windows 10’, ‘Windows 10 KN’, ‘Windows 10 N’ or ‘Windows 10 Single Language’.

Select Windows 10 Edition

For those who want to know the differences between the editions of Windows 10, here are brief details:

- Windows 10 – Contains everything offered by Microsoft for this OS.
- Windows 10 N – This edition comes without Windows Media Player.
- Windows 10 KN – This edition comes without media playing capabilities.
- Windows 10 Single Language – Only one language pre-installed.

3. I selected the first option, ‘Windows 10’, and clicked ‘Confirm’. Then I was asked to select a product language. I chose ‘English’.

I was given two download links, one for 32-bit and the other for 64-bit. I clicked 64-bit, to match my architecture.

Download Windows 10

With my download speed (15Mbps), it took me 3 long hours to download it. Unfortunately there was no torrent file for the OS, which could otherwise have made the overall process smoother. The ISO image is 3.8 GB.
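
The size and speed quoted above can be sanity-checked with a little arithmetic. The following is a minimal POSIX shell sketch, assuming that "15Mbps" means 15 megabits per second and that 3.8 GB means 3800 decimal megabytes; `download_seconds` and `effective_kbit` are hypothetical helper names, not standard tools. If those assumptions hold, the ISO would take a little over half an hour at full line rate, so a 3-hour download corresponds to an average of roughly 2.8 Mbit/s.

```shell
#!/bin/sh
# Back-of-the-envelope download arithmetic (POSIX shell integer math,
# so every result is truncated toward zero).
# Assumptions: sizes in decimal megabytes, link speeds in megabits per second.

# download_seconds SIZE_MB SPEED_MBIT
# Ideal transfer time in seconds if the link ran at full speed the whole time.
download_seconds() {
    size_mb=$1
    speed_mbit=$2
    # 1 byte = 8 bits, so megabytes * 8 = megabits
    echo $(( size_mb * 8 / speed_mbit ))
}

# effective_kbit SIZE_MB SECONDS
# Average throughput actually achieved, in kilobits per second.
effective_kbit() {
    size_mb=$1
    secs=$2
    # megabytes * 8000 = kilobits (decimal units)
    echo $(( size_mb * 8000 / secs ))
}

# 3.8 GB at 15 Mbit/s, and the same file taking 3 hours (10800 s)
echo "ideal: $(( $(download_seconds 3800 15) / 60 )) minutes"
echo "achieved: $(effective_kbit 3800 10800) kbit/s"
```

A gap this large between line rate and achieved throughput usually points at the server or the route rather than the local connection, which is where a torrent or a nearby mirror would help.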

I could not find a smaller image; the truth is that nothing like a net-installer image exists for Windows. There is also no published hash value against which the downloaded ISO can be checked.

I wonder why Microsoft ignores such issues. To verify that the ISO downloaded correctly, I have to write the image to a disc or a USB flash drive, boot my system from it and keep my fingers crossed until the setup is finished.
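
On Linux, the local half of an integrity check is a single command: coreutils' `sha256sum` computes the hash of the downloaded file, which can then be compared with a checksum obtained from the publisher through a separate channel. Below is a minimal sketch; `verify_iso` is a hypothetical helper, and it is only meaningful when a trustworthy reference checksum exists, which was not available for this download.

```shell
#!/bin/sh
# verify_iso FILE EXPECTED_SHA256
# Hypothetical helper: hashes FILE with sha256sum (GNU coreutils) and compares
# the result with EXPECTED_SHA256, which must come from a trusted source.
# Returns 0 on a match and 1 on a mismatch or an unreadable file.
verify_iso() {
    file=$1
    # Normalise the expected hash to lowercase, since sha256sum prints lowercase hex.
    expected=$(printf '%s' "$2" | tr 'A-F' 'a-f')
    # sha256sum prints "<hash>  <filename>"; keep only the first field.
    actual=$(sha256sum "$file" 2>/dev/null | cut -d ' ' -f 1)
    if [ -n "$actual" ] && [ "$actual" = "$expected" ]; then
        echo "OK: $file"
        return 0
    fi
    echo "MISMATCH: $file" >&2
    echo "  expected: $expected" >&2
    echo "  actual:   ${actual:-<unreadable>}" >&2
    return 1
}
```

A call would look like `verify_iso /home/avi/Downloads/Win10_English_x64.iso EXPECTED_HASH`, where `EXPECTED_HASH` stands for a checksum published by the vendor. Checking the file before writing it to a USB drive avoids the boot-and-hope approach described above.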

|

||||

|

||||

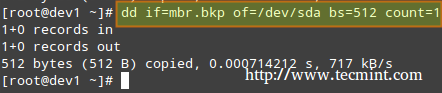

Lets start. I made my USB flash drive bootable with the windows 10 iso using dd command, as:

|

||||

|

||||

# dd if=/home/avi/Downloads/Win10_English_x64.iso of=/dev/sdb1 bs=512M; sync

|

||||

|

||||

It took a few minutes to complete the process. I then rebooted the system and choose to boot from USB flash Drive in my UEFI (BIOS) settings.

#### System Requirements ####

If you are upgrading:

- Upgrade supported only from Windows 7 SP1 or Windows 8.1

If you are doing a fresh install:

- Processor: 1GHz or faster
- RAM: 1GB or more (32-bit), 2GB or more (64-bit)
- HDD: 16GB or more (32-bit), 20GB or more (64-bit)
- Graphics card: DirectX 9 or later with a WDDM 1.0 driver
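
The fresh-install minimums are simple thresholds, so they can be checked mechanically before committing to a multi-gigabyte download. Below is a minimal POSIX shell sketch; `meets_win10_minimum` is a hypothetical name. It treats 1 GB as 1024 MB and 1 GHz as 1000 MHz (both assumptions), and it does not check the DirectX/WDDM graphics requirement, which cannot be derived from these numbers.

```shell
#!/bin/sh
# meets_win10_minimum ARCH RAM_MB DISK_GB CPU_MHZ
# Compares hardware figures against the Windows 10 fresh-install minimums
# listed above. ARCH is 32 or 64. Prints "ok" or the name of the first
# requirement that fails. Graphics (DirectX 9 / WDDM 1.0) is not checked.
meets_win10_minimum() {
    arch=$1 ram_mb=$2 disk_gb=$3 cpu_mhz=$4
    case $arch in
        32) min_ram_mb=1024 min_disk_gb=16 ;;   # 1GB RAM, 16GB disk
        64) min_ram_mb=2048 min_disk_gb=20 ;;   # 2GB RAM, 20GB disk
        *)  echo "unknown-arch"; return 2 ;;
    esac
    if [ "$cpu_mhz" -lt 1000 ]; then echo "cpu"; return 1; fi
    if [ "$ram_mb" -lt "$min_ram_mb" ]; then echo "ram"; return 1; fi
    if [ "$disk_gb" -lt "$min_disk_gb" ]; then echo "disk"; return 1; fi
    echo "ok"
}

# Example: a 64-bit machine with 4 GB RAM, 100 GB free disk and a 2.4 GHz CPU
meets_win10_minimum 64 4096 100 2400
```

On a Linux box, total RAM in MB can be read with `awk '/MemTotal/ {print int($2/1024)}' /proc/meminfo`. MemTotal is usually a little below the installed amount, so a machine with exactly 2 GB may be reported as failing the 64-bit RAM check; treat borderline results with care.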

|

||||

|

||||

### Installation of Windows 10 ###

|

||||

|

||||

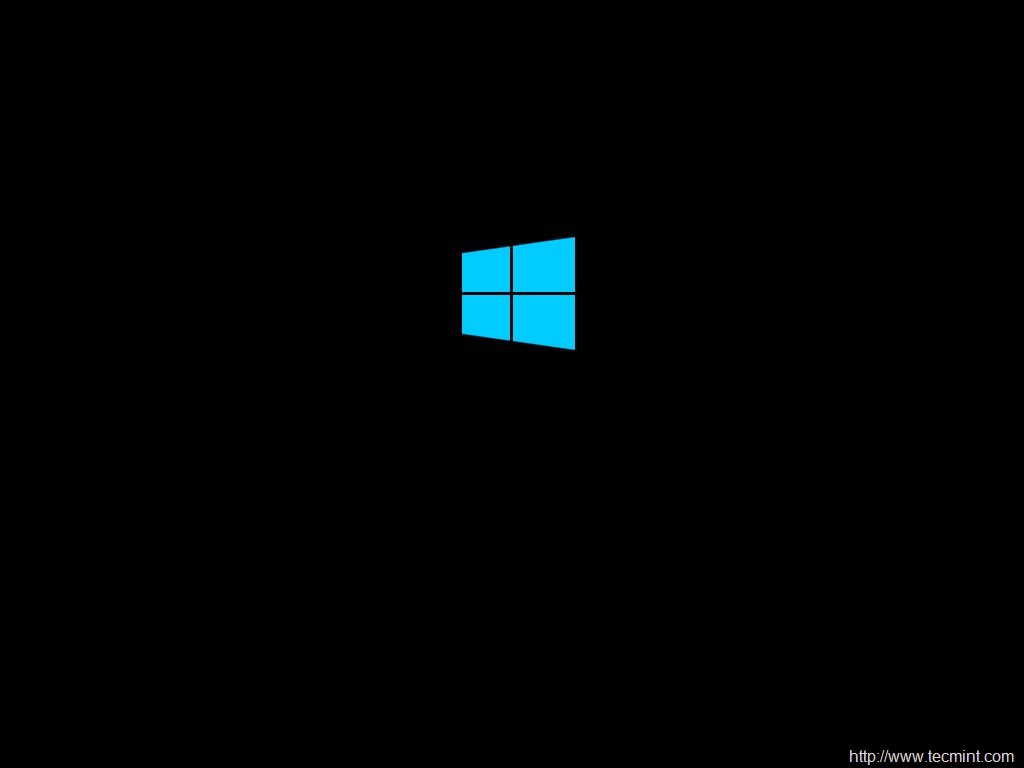

1. Windows 10 boots. Yet again they changed the logo. Also no information on whats going on.

|

||||

|

||||

|

||||

|

||||

Windows 10 Logo

|

||||

|

||||

2. Selected Language to install, Time & currency format and keyboard & Input methods before clicking Next.

|

||||

|

||||

|

||||

|

||||

Select Language and Time

|

||||

|

||||

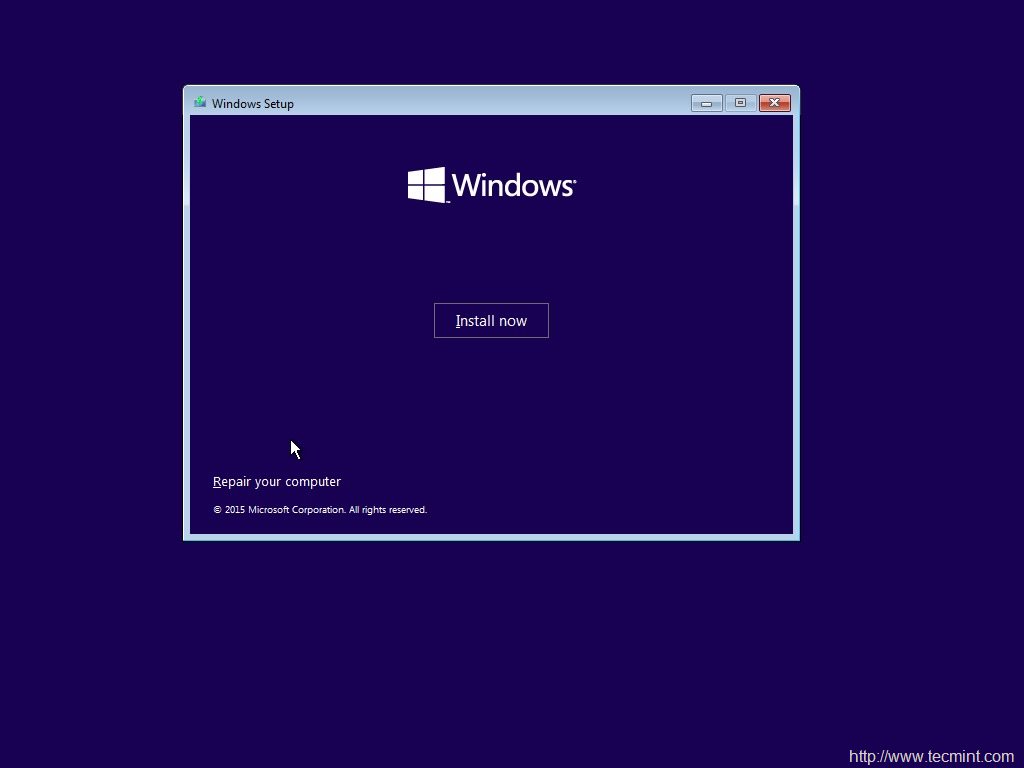

3. And then ‘Install Now‘ Menu.

|

||||

|

||||

|

||||

|

||||

Install Windows 10

|

||||

|

||||

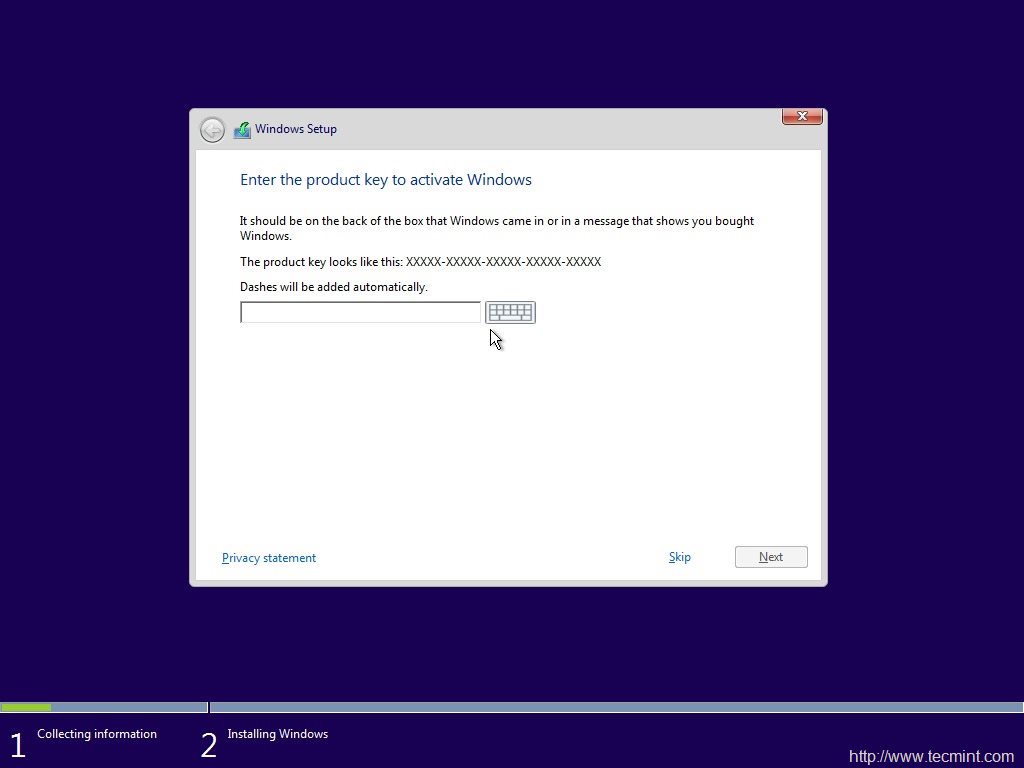

4. The next screen is asking for Product key. I clicked ‘skip’.

|

||||

|

||||

|

||||

|

||||

Windows 10 Product Key

|

||||

|

||||

5. Choose from a listed OS. I chose ‘windows 10 pro‘.

|

||||

|

||||

|

||||

|

||||

Select Install Operating System

|

||||

|

||||

6. oh yes the license agreement. Put a check mark against ‘I accept the license terms‘ and click next.

|

||||

|

||||

|

||||

|

||||

Accept License

|

||||

|

||||

7. Next was to upgrade (to windows 10 from previous versions of windows) and Install Windows. Don’t know why custom: Windows Install only is suggested as advanced by windows. Anyway I chose to Install windows only.

|

||||

|

||||

|

||||

|

||||

Select Installation Type

|

||||

|

||||

8. Selected the file-system and clicked ‘next’.

|

||||

|

||||

|

||||

|

||||

Select Install Drive

|

||||

|

||||

9. The installer started to copy files, getting files ready for installation, installing features, installing updates and finishing up. It would be better if the installer would have shown verbose output on the action is it taking.

|

||||

|

||||

|

||||

|

||||

Installing Windows

|

||||

|

||||

10. And then windows restarted. They said reboot was needed to continue.

Windows Installation Process

11. Then all I got was the screen below, which reads “Getting Ready”. It stayed there for 5+ minutes. No idea what was going on. No output.

Windows Getting Ready

12. Yet again, it was time to “Enter Product Key”. I clicked “Do this later” and then used Express Settings.

Enter Product Key

Select Express Settings

14. Then came three more screens, where I, as a Linux user, expected the installer to tell me what it was doing, but all in vain.

Loading Windows

Getting Updates

Still Loading Windows

15. Then the installer wanted to know who owns this machine: “My organization” or me. I chose “I own it” and then Next.

Select Organization

16. The installer prompted me to choose “Join Azure AD” or “Join a domain” before I could click ‘Continue’. I chose the latter option.

Connect Windows

17. The installer wanted me to create an account. So I entered a user name and clicked ‘Next’, expecting an error message saying that I must enter a password.

Create Account

18. To my surprise, Windows didn’t even show a warning or notification that I should create a password. Such negligence. Anyway, I got my desktop.

Windows 10 Desktop

#### Experience of a Linux user (myself) so far ####

- No net-installer image
- Image size too large
- No way to check the integrity of the downloaded ISO (no hash check)
- Booting and installation remain the same as they were in XP, and perhaps Windows 7 and 8.
- As usual, no output on what the Windows installer is doing: which file it is copying or which package it is installing.
- Installation was straightforward and easy compared to the installation of a Linux distribution.

### Windows 10 Testing ###

19. The default desktop is clean. It has a Recycle Bin icon, and you can search the web directly from the desktop itself. Additionally, there are icons for task view, Internet browsing, folder browsing and the Microsoft Store. As usual, the notification area sits at the bottom right, which sums up the desktop.

Desktop Shortcut Icons

20. Internet Explorer replaced by Microsoft Edge. Windows 10 has replaced the legacy web browser Internet Explorer, also known as IE, with Edge, aka Project Spartan.

Microsoft Edge Browser

It is fast, at least compared to IE (as it seemed in testing), with a familiar user interface. The home screen contains news feed updates. There is also a search bar titled ‘Where to next?’. The browser’s load time is considerably low, which improves overall speed and performance. Edge’s memory usage seems normal.

Windows Performance

Edge has Cortana (an intelligent personal assistant), support for Chrome extensions, Web Note (take notes while browsing) and Share (share right from the tab without opening any other tab).

#### Experience of a Linux user (myself) on this point ####

21. Microsoft has really improved web browsing. Let’s see how stable it remains. It doesn’t lag as of now.

22. Though Edge’s RAM usage was fine for me, a lot of users are complaining that Edge is notorious for excessive RAM usage.

23. It is difficult to say at this point whether Edge is ready to compete with Chrome and/or Firefox. Let’s see what the future holds.

#### A few more stops on the virtual tour ####

24. Start Menu redesigned – It seems clear and effective. Metro live tiles make it come alive. It is populated with the most commonly used applications, viz. Calendar, Mail, Edge, Photos, Contact, Temperature, Companion suite, OneNote, Store, Xbox, Music, Movies & TV, Money, News, etc.

Windows Look and Feel

On Linux with the Gnome desktop environment, I search for required applications simply by pressing the Windows key and then typing the name of the application.

Search Within Desktop

25. File Explorer – The design seems clean, and the edges are sharp. In the left pane there are links to Quick Access folders.

Windows File Explorer

The file explorer on the Gnome desktop environment on Linux is equally clear and effective. Removing unnecessary graphics and images from icons is a plus point.

File Browser on Gnome

26. Settings – The settings are a bit more refined on Windows 10; you may compare them with the settings on a Linux box.

**Settings on Windows**

Windows 10 Settings

**Settings on Linux Gnome**

Gnome Settings

27. List of Applications – The list of applications on Windows is better than what they used to provide (based on my memory from when I was a regular Windows user), but it still falls short of how Gnome 3 lists applications.

**Applications Listed by Windows**

Application List on Windows 10

**Applications Listed by Gnome3 on Linux**

Gnome Application List on Linux

28. Virtual Desktop – The virtual desktop feature of Windows 10 is one of the most talked-about topics these days.

Here is the virtual desktop in Windows 10.

Windows Virtual Desktop

And here is the virtual desktop on Linux, which we have been using for more than 2 decades.

Virtual Desktop on Linux

#### A few other features of Windows 10 ####

29. Windows 10 comes with Wi-Fi Sense, which shares your Wi-Fi password with others. Anyone who is within range of your Wi-Fi and connected to you over Skype, Outlook, Hotmail or Facebook can be granted access to your Wi-Fi network. And mind you, Microsoft added this deliberately, as a feature to save time and give a hassle-free connection.

In reply to a question raised by Tecmint, Microsoft said that the user has to agree to enable Wi-Fi Sense every time on a new network. Oh! What poor taste as far as security is concerned. I am not convinced.

30. Upgrading from Windows 7 and Windows 8.1 is free, though the retail prices of the Home and Pro editions are approximately $119 and $199 respectively.

31. Microsoft released the first cumulative update for Windows 10, which reportedly put some systems into an endless crash loop. Perhaps Microsoft doesn’t understand such problems, or doesn’t want to work on that part; I don’t know why.

32. Microsoft’s built-in utility to block/hide unwanted updates didn’t work in my case. This means that if an update is available, there is no way to block or hide it. Sorry, Windows users!

#### A few features native to Linux that Windows 10 has ####

Windows 10 has a lot of features that were taken directly from Linux. If Linux had not been released under the GNU license, perhaps Microsoft would never have had the features below.

33. Command-line package management – Yup! You heard it right. Windows 10 has built-in package management. It works only in Windows PowerShell. OneGet is the official package manager for Windows. Here is the Windows package manager in action.

Windows 10 Package Manager

- Borderless windows
- Flat icons
- Virtual desktop
- One search for both online and offline content
- Convergence of mobile and desktop OS

### Overall Conclusion ###

- Improved responsiveness
- Well-implemented animation
- Low on resources
- Improved battery life
- Microsoft Edge web browser is rock solid
- Supported on Raspberry Pi 2.
- It looks good because Windows 8/8.1 was not up to the mark and really bad.
- It is the same old wine in a new bottle: almost the same things with brushed-up icons.

My testing suggests that Windows 10 has improved on a few things, like look and feel (as Windows always did), with a +1 for Project Spartan, virtual desktops, command-line package management, and one search for online and offline content. Overall it is an improved product, but those who think that Windows 10 will prove to be the last nail in the coffin of Linux are mistaken.

Linux is years ahead of Windows, and their approaches are different. In the near future Windows won’t come anywhere near Linux, and there is nothing for which a Linux user needs to switch to Windows 10.

That’s all for now. I hope you liked the post. I will be back with another interesting post you will love to read. Please give us your valuable feedback in the comments below.

--------------------------------------------------------------------------------

via: http://www.tecmint.com/a-linux-user-using-windows-10-after-more-than-8-years-see-comparison/

Author: [Avishek Kumar][a]

Translator: [Translator ID](https://github.com/译者ID)

Proofreader: [Proofreader ID](https://github.com/校对者ID)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](https://linux.cn/)

[a]:http://www.tecmint.com/author/avishek/
[1]:https://www.microsoft.com/en-us/software-download/windows10ISO

@ -0,0 +1,109 @@

Debian GNU/Linux Birthday: A 22-Year Journey and Still Counting…
================================================================================

On 16th August 2015, the Debian project celebrated its 22nd anniversary, making it one of the oldest popular distributions in the open source world. The Debian project was conceived and founded in 1993 by Ian Murdock. By that time, Slackware had already made a remarkable presence as one of the earliest Linux distributions.

Happy 22nd Birthday to Debian Linux

Ian Ashley Murdock, an American software engineer by profession, conceived the idea of the Debian project when he was a student at Purdue University. He named the project Debian by combining the name of his then-girlfriend, Debra Lynn (Deb), with his own (Ian). He later married her, and they divorced in January 2008.

Debian Creator: Ian Murdock

Ian is currently serving as Vice President of Platform and Development Community at ExactTarget.

Debian (like Slackware) was the result of the lack of an up-to-the-mark Linux distribution at that time. In an interview Ian said: “Providing a first-class product without profit would be the sole aim of the Debian Project. Even Linux was not reliable and up to the mark at that time. I remember… moving files between file systems and dealing with large files would often result in a kernel panic. However, the Linux project was promising. The free availability of the source code, and the potential it seemed to have, stood out.”

“I remember… like everyone else, I wanted to solve a problem: run something like UNIX at home. But it was not possible, neither financially nor legally. Then I came to know about GNU kernel development and its freedom from any kind of legal issues,” he added. He was sponsored by the Free Software Foundation (FSF) in the early days when he was working on Debian. This also helped Debian take a giant step, though Ian needed to finish his degree and hence left the FSF after roughly one year of sponsorship.

### Debian Development History ###

- **Debian 0.01 – 0.09**: Released between August 1993 and December 1993.
- **Debian 0.91**: Released in January 1994 with a primitive package system and no dependencies.
- **Debian 0.93 rc5**: Released in March 1995. It was the first modern release of Debian; dpkg was used to install and maintain packages after the base system installation.
- **Debian 0.93 rc6**: Released in November 1995. It was the last a.out release, and dselect made its first appearance. 60 developers were maintaining packages at that time.
- **Debian 1.1**: Released in June 1996. Code name – Buzz, package count – 474, package manager – dpkg, kernel 2.0, ELF.
- **Debian 1.2**: Released in December 1996. Code name – Rex, package count – 848, developer count – 120.
- **Debian 1.3**: Released in July 1997. Code name – Bo, package count – 974, developer count – 200.
- **Debian 2.0**: Released in July 1998. Code name – Hamm, supported architectures – Intel i386 and Motorola 68000 series, package count – 1500+, developer count – 400+, glibc included.
- **Debian 2.1**: Released on March 09, 1999. Code name – slink, new architectures – Alpha and SPARC, apt came into the picture, package count – 2250.
- **Debian 2.2**: Released on August 15, 2000. Code name – Potato, supported architectures – Intel i386, Motorola 68000 series, Alpha, Sun SPARC, PowerPC and ARM. Package count – 3900+ (binary) and 2600+ (source), developer count – 450. A group of people studied this release and wrote an article called Counting Potatoes, which shows how a free software effort could lead to a modern operating system despite all the issues around it.
- **Debian 3.0**: Released on July 19th, 2002. Code name – woody, new architectures – HP PA-RISC, IA-64, MIPS and IBM S/390, first release on DVD, package count – 8500+, developer count – 900+, cryptography included.
- **Debian 3.1**: Released on June 6th, 2005. Code name – sarge, architecture support – same as woody plus an unofficial AMD64 port, kernel – 2.4 and 2.6 series, package count – 15000+, developer count – 1500+, packages like OpenOffice.org, Firefox, Thunderbird, Gnome 2.8 and KDE 3.3, advanced installation support – RAID, XFS, LVM, modular installer.
- **Debian 4.0**: Released on April 8th, 2007. Code name – etch, architecture support – same as sarge, including AMD64. Package count – 18,200+, developer count – 1030+, graphical installer.
- **Debian 5.0**: Released on February 14th, 2009. Code name – lenny, architecture support – same as before + ARM. Package count – 23000+, developer count – 1010+.
- **Debian 6.0**: Released on February 6th, 2011. Code name – squeeze, packages included – kernel 2.6.32, Gnome 2.30, Xorg 7.5, DKMS. Architecture support – same as previous + kfreebsd-i386 and kfreebsd-amd64, dependency-based booting.
- **Debian 7.0**: Released on May 4, 2013. Code name – wheezy, multiarch support, tools for private clouds, improved installer, full-featured multimedia codecs without the need for third-party repositories, kernel 3.2, Xen Hypervisor 4.1.4, package count – 37400+.
- **Debian 8.0**: Released on April 25, 2015. Code name – jessie, systemd as the default init system, kernel 3.16, fast booting, cgroups for services, possibility of isolating parts of the services, 43000+ packages. The sysvinit init system remains available in jessie.
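
Because jessie makes systemd the default while keeping sysvinit available, it can be useful to check which one a given machine actually booted with. Below is a minimal sketch, assuming the check that systemd documents for its own `sd_booted()` function: the directory `/run/systemd/system` exists only when systemd is running as the init system. `init_system` is a hypothetical helper name.

```shell
#!/bin/sh
# init_system: prints "systemd" if the running system was booted by systemd,
# otherwise "other" (for example sysvinit). Mirrors the sd_booted() test,
# which checks for the directory /run/systemd/system.
init_system() {
    if [ -d /run/systemd/system ]; then
        echo "systemd"
    else
        echo "other"
    fi
}

echo "init: $(init_system)"
```

On jessie, the alternative init is provided by the `sysvinit-core` package; the check above only reports what is running and changes nothing.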

**Note**: The Linux kernel’s initial release was on October 05, 1991, and Debian’s initial release was on September 15, 1993. So Debian has been around for 22 years, running the Linux kernel, which has been around for 24 years.

### Debian Facts ###

The year 1994 was spent organizing and managing the Debian project so that it would be easy for others to contribute. Hence no releases for users were made that year, though there were some internal releases.

Debian 1.0 was never released. A CD-ROM manufacturer mistakenly labelled an unreleased version as Debian 1.0. Hence, to avoid confusion, the next release was numbered Debian 1.1, and only since then has the concept of official CD-ROM images existed.

Each Debian release is named after a character from Toy Story.

Debian is always available as oldstable, stable, testing, unstable and experimental.

The Debian Project continues to work on the unstable distribution (codenamed sid, after the evil kid next door in Toy Story). Sid is the permanent name for the unstable distribution, and it always remains ‘Still In Development’. The testing release is intended to become the next stable release and is currently codenamed stretch.
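
Suite names such as stable and testing are aliases that move forward with every stable release, while codenames stay attached to one release. Below is a minimal sketch of that mapping as it stood in August 2015, when this article was written; `debian_codename` is a hypothetical helper, experimental is left out, and the table goes stale with the next release.

```shell
#!/bin/sh
# debian_codename SUITE
# Snapshot of Debian suite -> codename aliases as of August 2015.
# oldstable/stable/testing shift at each release; unstable is always sid.
debian_codename() {
    case $1 in
        oldstable) echo "wheezy"  ;;  # Debian 7
        stable)    echo "jessie"  ;;  # Debian 8
        testing)   echo "stretch" ;;  # will become Debian 9
        unstable)  echo "sid"     ;;  # permanent name, never released as stable
        *) echo "unknown suite: $1" >&2; return 1 ;;
    esac
}

for suite in oldstable stable testing unstable; do
    echo "$suite -> $(debian_codename "$suite")"
done
```

Pointing APT at a codename keeps a machine on one release, while pointing it at a suite alias means it will follow that alias to the next release when it moves.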

The official Debian distribution includes only free and open source software and nothing else. However, the contrib and non-free areas make it possible to install packages that are free but whose dependencies are not (contrib), as well as packages released under non-free licenses (non-free).
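
In practice, these archive areas are selected per APT source. Each `deb` line in `/etc/apt/sources.list` names a mirror, a suite and one or more components, where `main` is the official free distribution and `contrib` and `non-free` are the extra areas described above. Below is a minimal sketch that builds such a line. `sources_line` is a hypothetical helper, the mirror URL is only an example, and the component check reflects the jessie-era component names.

```shell
#!/bin/sh
# sources_line MIRROR SUITE COMPONENT...
# Prints one APT sources.list entry, rejecting unknown component names.
sources_line() {
    mirror=$1
    suite=$2
    shift 2
    [ $# -gt 0 ] || { echo "no components given" >&2; return 1; }
    for component in "$@"; do
        case $component in
            main|contrib|non-free) ;;    # the three jessie-era archive areas
            *) echo "unknown component: $component" >&2; return 1 ;;
        esac
    done
    # "$*" joins the remaining arguments with single spaces
    echo "deb $mirror $suite $*"
}

sources_line http://ftp.debian.org/debian jessie main contrib non-free
```

Appending such a line to `/etc/apt/sources.list` as root and running `apt-get update` makes packages from those areas visible to APT. Leaving out `contrib` and `non-free` keeps a system to the official, free-only distribution.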

|

||||

|

||||

Debian is the mother of a lot of Linux distribution. Some of these Includes:

|

||||

|

||||

- Damn Small Linux

|

||||

- KNOPPIX

|

||||

- Linux Advanced

|

||||

- MEPIS

|

||||

- Ubuntu

|

||||

- 64studio (No more active)

|

||||

- LMDE

|

||||

|

||||

Debian is the world’s largest non-commercial Linux distribution. The largest share of its code is written in C (32.1%), with the rest spread across 70 other languages.

Debian Contribution

Image Source: [Xmodulo][1]

The Debian project contains 68.5 million actual lines of code (LOC), plus 4.5 million lines of comments and whitespace.

The International Space Station dropped Windows and Red Hat in favour of Debian. The astronauts use the release one version behind the latest (“squeeze” at the time) for its stability and the strength of its community.

Thank God! Who would have heard the screams from space at the Windows Metro screen? :P

#### The Black Wednesday ####

On November 20th, 2002, the University of Twente Network Operation Center (NOC) caught fire. The fire department gave up protecting the server area. The NOC hosted satie.debian.org, which included the security and non-US archives, the New Maintainer service, quality assurance and databases. Everything was turned to ashes. Debian later rebuilt these services.

#### The Future Distro ####

Next in line is Debian 9, codenamed Stretch; what it will include is yet to be revealed. The best is yet to come, just wait for it!

Many distributions have appeared in the Linux world and then disappeared. In most cases, managing the project as it grew bigger was the problem. That is certainly not the case with Debian. It has a large community of developers and maintainers all across the globe, and it has been around since the early days of Linux.

Debian’s contribution to the Linux ecosystem can’t be measured in words. Without Debian, Linux would not be as rich and user-friendly as it is. Debian is one of the distros considered highly reliable, secure and stable, and it is a perfect choice for web servers.

That’s the beginning of Debian. It has come a long way and is still going. The future is here! The world is here! If you have not used Debian until now, what are you waiting for? Just download your image and get started; we will be here if you get into trouble.

- [Debian Homepage][2]

--------------------------------------------------------------------------------

via: http://www.tecmint.com/happy-birthday-to-debian-gnu-linux/

Author: [Avishek Kumar][a]

Translator: [Translator ID](https://github.com/译者ID)

Proofreader: [Proofreader ID](https://github.com/校对者ID)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and proudly presented by [Linux中国](https://linux.cn/)

[a]:http://www.tecmint.com/author/avishek/

[1]:http://xmodulo.com/2013/08/interesting-facts-about-debian-linux.html

[2]:https://www.debian.org/

@ -0,0 +1,53 @@

Docker Working on Security Components, Live Container Migration
================================================================================

**Docker developers take the stage at Containercon and discuss their work on future container innovations for security and live migration.**

SEATTLE – Containers are one of the hottest topics in IT today, and at the Linuxcon USA event here there is a co-located event called Containercon, dedicated to this virtualization technology.

Docker Inc., the lead commercial sponsor of the open-source Docker effort, brought three of its top people to the keynote stage today, but not Docker founder Solomon Hykes.

Hykes, who delivered a Linuxcon keynote in 2014, was in the audience, though, as Senior Vice President of Engineering Marianna Tessel, Docker security chief Diogo Mónica and Docker chief maintainer Michael Crosby presented what's new and what's coming in Docker.

Tessel emphasized that Docker is very real today and used in production environments at some of the largest organizations on the planet, including the U.S. Government. Docker is also working in small environments, including the Raspberry Pi, a small-form-factor ARM computer, which can now support up to 2,300 containers on a single device.

"We're getting more powerful and at the same time Docker will also get simpler to use," Tessel said.

As a metaphor, Tessel said that the whole Docker experience is much like a cruise ship, where there is powerful and complex machinery that powers the ship, yet the experience for passengers is all smooth sailing.

One area that Docker is trying to make easier is security. Tessel said that security is mind-numbingly complex for most people, as organizations constantly try to avoid network breaches.

That's where Docker Content Trust, a configurable feature in the recent Docker 1.8 release, comes into play. Diogo Mónica, security lead for Docker, joined Tessel on stage and said that security is a hard topic, which is why Docker Content Trust is being developed.

With Docker Content Trust, there is a verifiable way to make sure that a given Docker application image is authentic. There are also controls to limit fraud and potential malicious code injection by verifying application freshness.
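
For readers who want to try this, the Docker client of that era turns Content Trust on through an environment variable. The sketch below is a minimal example, assuming the `DOCKER_CONTENT_TRUST` variable documented for Docker 1.8 and a working `docker` client; the wrapper name `trusted_pull` is hypothetical and not part of Docker.

```bash
#!/bin/bash
# Hypothetical helper: pull an image with Docker Content Trust enabled
# for this one command only. With DOCKER_CONTENT_TRUST=1 the Docker
# client refuses to pull image tags that are not signed.
trusted_pull() {
    if [ $# -eq 0 ]; then
        echo "usage: trusted_pull IMAGE[:TAG]" >&2
        return 2
    fi
    # The prefix assignment applies only to this docker invocation.
    DOCKER_CONTENT_TRUST=1 docker pull "$@"
}
```

Usage: `trusted_pull ubuntu:latest` applies the trust check to that single pull; running `export DOCKER_CONTENT_TRUST=1` instead enables it for the rest of the shell session.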

To prove his point, Mónica did a live demonstration of what could happen if Content Trust is not enabled. In one instance, a website update was manipulated to allow the demo web app to be defaced. When Content Trust was enabled, the hack didn't work and was blocked.

"Don't let the simple demo fool you," Tessel said. "You have seen the best security possible."

One area where containers haven't been put to use before is live migration, which for VMware virtual machines is provided by a technology called vMotion. It's an area that Docker is currently working on.

Docker chief maintainer Michael Crosby did an onstage demonstration of a live migration of Docker containers. Crosby referred to the approach as checkpoint and restore, where a running container gets a checkpoint snapshot and is then restored to another location.

A container can also be cloned and then run in another location. Crosby humorously referred to his cloned container as "Dolly," a reference to Dolly the sheep, the first mammal cloned from an adult cell.

Tessel also took time to talk about runC, the container runtime component that is now being developed by the Open Container Initiative as a multi-stakeholder process. With runC, containers expand beyond Linux to multiple operating systems, including Windows and Solaris.

Overall, Tessel said that she can't predict the future of Docker, though she is very optimistic.

"I'm not sure what the future is, but I'm sure it'll be out of this world," Tessel said.

Sean Michael Kerner is a senior editor at eWEEK and InternetNews.com. Follow him on Twitter @TechJournalist.

--------------------------------------------------------------------------------

via: http://www.eweek.com/virtualization/docker-working-on-security-components-live-container-migration.html

Author: [Sean Michael Kerner][a]

Translator: [Translator ID](https://github.com/译者ID)

Proofreader: [Proofreader ID](https://github.com/校对者ID)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and proudly presented by [Linux中国](https://linux.cn/)

[a]:http://www.eweek.com/cp/bio/Sean-Michael-Kerner/

@ -1,145 +0,0 @@

Vic020

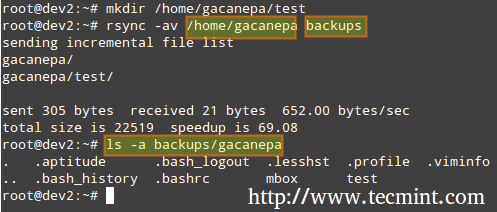

Linux Tricks: Play Game in Chrome, Text-to-Speech, Schedule a Job and Watch Commands in Linux
================================================================================

Here again, I have compiled a list of four things under the [Linux Tips and Tricks][1] series that you can do to stay more productive and entertained in a Linux environment.

Linux Tips and Tricks Series

The topics covered include Google Chrome’s built-in mini game, text-to-speech in the Linux terminal, quick job scheduling using the ‘at’ command, and watching a command at a regular interval.

### 1. Play A Game in Google Chrome Browser ###

Very often, when there is a power cut (load shedding) or no network for some other reason, I don’t put my Linux box into maintenance mode. Instead, I keep myself engaged with a fun little game built into Google Chrome. I am not a gamer, so I have not installed creepy third-party games; security is another concern.

So when there is an Internet-related issue and my web page looks something like this:

Unable to Connect Internet

You can play Google Chrome’s built-in game simply by hitting the space bar. There is no limit on the number of times you can play. The best thing is that you need not break a sweat installing and using it.

No third-party application or plugin is required. It should work well on other platforms like Windows and Mac, but our niche is Linux, so I’ll talk about Linux only, and mind you, it works well on Linux. It is a very simple game (a kind of time-pass).

Use the space bar or the up arrow key to jump. Here is a glimpse of the game in action.

Play Game in Google Chrome

### 2. Text to Speech in Linux Terminal ###

For those who may not be aware of it, espeak is a Linux command-line text-to-speech converter. Write anything in a variety of languages and espeak will read it aloud for you.

espeak may already be installed on your system; if it is not, you can install it with:

    # apt-get install espeak (Debian)
    # yum install espeak (CentOS)
    # dnf install espeak (Fedora 22 onwards)

You can ask espeak to accept input interactively from the standard input device and convert it to speech. To do so, run:

    $ espeak [Hit Return Key]

Alternatively, espeak can write the audio as WAV data to standard output, which you can pipe to aplay for playback (useful if espeak cannot play sound directly on your system):

    $ espeak --stdout | aplay [Hit Return Key]

espeak is flexible: you can also ask it to read input from a text file and speak it aloud. All you need to do is:

    $ espeak -f /path/to/text/file/file_name.txt --stdout | aplay

You can ask espeak to speak faster or slower. The default speed is 160 words per minute; set your preferred speed with the ‘-s’ switch.

To make espeak speak at 30 words per minute, run:

    $ espeak -s 30 -f /path/to/text/file/file_name.txt --stdout | aplay

To make espeak speak at 200 words per minute, run:

    $ espeak -s 200 -f /path/to/text/file/file_name.txt --stdout | aplay
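
The options above can be combined into a small helper for reading files aloud. This is a sketch, assuming espeak's `-s`, `-f` and `--stdout` options as used above and ALSA's `aplay` for playback; the function name `speak_file` and its 160 wpm fallback (the default quoted above) are illustrative choices.

```bash
#!/bin/bash
# Hypothetical helper: read a text file aloud at a chosen speed.
# Usage: speak_file FILE [WORDS_PER_MINUTE]
speak_file() {
    local file=$1
    local speed=${2:-160}

    # Refuse to run on a missing or unreadable file.
    if [ ! -r "$file" ]; then
        echo "speak_file: cannot read '$file'" >&2
        return 1
    fi

    # The speed must be a whole number.
    case $speed in
        ''|*[!0-9]*) echo "speak_file: speed must be a number" >&2; return 1 ;;
    esac

    # -f reads the text from FILE, -s sets the speed, --stdout writes WAV
    # data to standard output, which aplay plays (-q: no status messages).
    espeak -s "$speed" -f "$file" --stdout | aplay -q
}
```

Usage: `speak_file notes.txt 200` reads notes.txt at 200 words per minute.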

To use another language, say Hindi (my mother tongue), run:

    $ espeak -v hindi --stdout 'टेकमिंट विश्व की एक बेहतरीन लाइंक्स आधारित वेबसाइट है|' | aplay

(The Hindi sentence means “Tecmint is one of the best Linux-based websites in the world.”)

You can choose any language you prefer and have espeak speak it as shown above. To get the list of all the languages (voices) supported by espeak, run:

    $ espeak --voices

### 3. Quickly Schedule a Job ###

Most of us are already familiar with [cron][2], a daemon that executes scheduled commands.

Cron is an advanced tool often used by Linux sysadmins to schedule a job, such as a backup or practically anything else, at a certain time or interval.

Are you aware of the ‘at’ command in Linux, which lets you schedule a job or command to run at a specific time? You tell ‘at’ what to do and when to do it, and ‘at’ takes care of everything else.

For example, say you want to save the output of the uptime command at 11:02 AM. All you need to do is:

    $ at 11:02
    uptime >> /home/$USER/uptime.txt
    Ctrl+D

Schedule Job in Linux

To check whether the command, script or job has been scheduled by the ‘at’ command, run:

    $ at -l

View Scheduled Jobs

You can schedule more than one command in one go with at, simply as:

    $ at 12:30
    Command – 1
    Command – 2
    …
    Command – 50
    …
    Ctrl + D
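
Because at reads the commands to run from standard input, you can also schedule a job without the interactive prompt, for example from another script. A minimal sketch, assuming the standard `at` command; the wrapper `schedule_at` is hypothetical:

```bash
#!/bin/bash
# Hypothetical helper: schedule a one-off command without the at> prompt.
# Usage: schedule_at TIME COMMAND...
schedule_at() {
    if [ $# -lt 2 ]; then
        echo "usage: schedule_at TIME COMMAND..." >&2
        return 2
    fi
    local when=$1
    shift
    # at reads the job's commands from standard input; here they come
    # from printf instead of being typed at the at> prompt.
    printf '%s\n' "$*" | at "$when"
}

# Example: the uptime job from above, scheduled non-interactively.
#   schedule_at 11:02 'uptime >> "$HOME/uptime.txt"'
```

Afterwards, `atq` lists pending jobs (like `at -l`), and `atrm JOB_NUMBER` removes one.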

### 4. Watch a Command at a Specific Interval ###

Sometimes we need to run a command at a regular interval for some amount of time. For example, say we want to print the current time and watch the output every 3 seconds.

To see the current time, run the command below in a terminal.

    $ date +"%H:%M:%S"

Check Date and Time in Linux

To check the output of this command every three seconds, run the following command in a terminal.

    $ watch -n 3 'date +"%H:%M:%S"'

Watch Command in Linux

The ‘-n’ switch of the watch command sets the interval. In the above example we set the interval to 3 seconds; you can set yours as required. You can also pass any command or script to watch to run it at the defined interval.
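
watch keeps refreshing until you press Ctrl+C. If you want to run a command at a fixed interval only a limited number of times, a plain Bash loop is enough. A minimal sketch; `run_every` is a hypothetical helper, not a watch option:

```bash
#!/bin/bash
# Hypothetical helper: run COMMAND every INTERVAL seconds, COUNT times.
# Usage: run_every INTERVAL COUNT COMMAND [ARGS...]
run_every() {
    local interval=$1 count=$2 i
    shift 2
    for (( i = 1; i <= count; i++ )); do
        "$@"
        # Don't sleep after the last run.
        if (( i < count )); then
            sleep "$interval"
        fi
    done
}
```

Usage: `run_every 3 5 date +"%H:%M:%S"` prints the time five times, three seconds apart. Unlike watch, it prints each result below the previous one instead of redrawing the screen.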

That’s all for now. I hope you like this series, which aims to make you more productive with Linux while having some fun too. All suggestions are welcome in the comments below. Stay tuned for more such posts. Keep connected and enjoy…

--------------------------------------------------------------------------------

via: http://www.tecmint.com/text-to-speech-in-terminal-schedule-a-job-and-watch-commands-in-linux/

Author: [Avishek Kumar][a]

Translator: [Translator ID](https://github.com/译者ID)

Proofreader: [Proofreader ID](https://github.com/校对者ID)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and proudly presented by [Linux中国](https://linux.cn/)

[a]:http://www.tecmint.com/author/avishek/

[1]:http://www.tecmint.com/tag/linux-tricks/

[2]:http://www.tecmint.com/11-cron-scheduling-task-examples-in-linux/

@ -0,0 +1,99 @@

How to monitor stock quotes from the command line on Linux
================================================================================

If you are a stock investor or trader, monitoring the stock market is probably one of your daily routines. Most likely you use an online trading platform that comes with fancy real-time charts and all sorts of advanced stock analysis and tracking tools. While such sophisticated market research tools are a must for any serious stock investor to read the market, monitoring the latest stock quotes still goes a long way toward building a profitable portfolio.

If you are a full-time system admin constantly sitting in front of terminals while trading stocks as a hobby during the day, a simple command-line tool that shows the latest stock quotes will be a blessing for you.

In this tutorial, let me introduce a neat command-line tool that allows you to monitor stock quotes from the command line on Linux.

This tool is called [Mop][1]. Written in Go, this lightweight command-line tool is extremely handy for tracking the latest stock quotes from the U.S. markets. You can easily customize the list of stocks to monitor, and it shows the latest stock quotes in an ncurses-based, easy-to-read interface.

**Note**: Mop obtains the latest stock quotes via the Yahoo! Finance API. Be aware that these quotes are known to be delayed by 15 minutes. So if you are looking for "real-time" stock quotes with zero delay, Mop is not the tool for you. Such "live" stock quote feeds are usually available for a fee via some proprietary closed-door interface. With that being said, let's see how you can use Mop in a Linux environment.

### Install Mop on Linux ###

Since Mop is implemented in Go, you will need to install the Go language first. If you don't have Go installed, follow [this guide][2] to install Go on your Linux platform. Make sure to set the GOPATH environment variable as described in the guide.

Once Go is installed, proceed to install Mop as follows.

**Debian, Ubuntu or Linux Mint**

    $ sudo apt-get install git
    $ go get github.com/michaeldv/mop
    $ cd $GOPATH/src/github.com/michaeldv/mop
    $ make install

**Fedora, CentOS, RHEL**

    $ sudo yum install git
    $ go get github.com/michaeldv/mop
    $ cd $GOPATH/src/github.com/michaeldv/mop
    $ make install

The above commands will install Mop under $GOPATH/bin.

Now edit your .bashrc to include $GOPATH/bin in your PATH variable:

    export PATH="$PATH:$GOPATH/bin"

Then reload your .bashrc:

    $ source ~/.bashrc
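
Sourcing .bashrc more than once with the plain export line adds $GOPATH/bin to PATH again each time. A small guard avoids the duplicates. This is a sketch, assuming GOPATH is already set as in the Go installation guide; `path_append` is a hypothetical helper name:

```bash
#!/bin/bash
# Hypothetical helper: append a directory to PATH only if it is not
# already one of PATH's colon-separated entries.
path_append() {
    local dir=$1
    case ":$PATH:" in
        *":$dir:"*) ;;                      # already present: do nothing
        *) PATH="${PATH:+$PATH:}$dir" ;;    # append, adding ':' only if PATH is non-empty
    esac
}

# In ~/.bashrc, instead of the plain export line:
#   path_append "$GOPATH/bin"
#   export PATH
```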

### Monitor Stock Quotes from the Command Line with Mop ###

To launch Mop, simply run the command called cmd.

    $ cmd

At first launch, you will see a few stock tickers that Mop comes preconfigured with.

The quotes show information like the latest price, % change, daily low/high, 52-week low/high, dividend, and annual yield. Mop obtains market overview information from [CNN][3], and individual stock quotes from [Yahoo Finance][4]. The stock quote information updates itself within the terminal periodically.

### Customize Stock Quotes in Mop ###

Let's try customizing the stock list. Mop provides easy-to-remember shortcuts for this: '+' to add a new stock, and '-' to remove a stock.

To add a new stock, press '+' and type the ticker symbol of the stock to add (e.g., MSFT). You can add more than one stock at once by typing a comma-separated list of tickers (e.g., "MSFT, AMZN, TSLA").

Removing stocks from the list can be done similarly by pressing '-'.

### Sort Stock Quotes in Mop ###

You can sort the stock quote list by any column. To sort, press 'o' and use the left/right arrow keys to choose the column to sort by. Once a column is chosen, you can sort the list in either increasing or decreasing order by pressing ENTER.

By pressing 'g', you can group your stocks based on whether they are advancing or declining for the day. Advancing issues are shown in green, while declining issues are shown in white.

To access the help page, simply press '?'.

### Conclusion ###

As you can see, Mop is a lightweight yet extremely handy stock monitoring tool. Of course, you can easily access stock quote information elsewhere, from websites, your smartphone, etc. However, if you spend a great deal of your time in a terminal environment, Mop can easily fit into your workspace, hopefully without distracting you much from your workflow. Just let it run and continuously update market data in one of your terminals, and be done with it.

Happy trading!

--------------------------------------------------------------------------------

via: http://xmodulo.com/monitor-stock-quotes-command-line-linux.html

Author: [Dan Nanni][a]

Translator: [Translator ID](https://github.com/译者ID)

Proofreader: [Proofreader ID](https://github.com/校对者ID)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and proudly presented by [Linux中国](https://linux.cn/)

[a]:http://xmodulo.com/author/nanni

[1]:https://github.com/michaeldv/mop

[2]:http://ask.xmodulo.com/install-go-language-linux.html

[3]:http://money.cnn.com/data/markets/

[4]:http://finance.yahoo.com/

@ -0,0 +1,315 @@

Part 10 - LFCS: Understanding & Learning Basic Shell Scripting and Linux Filesystem Troubleshooting
================================================================================

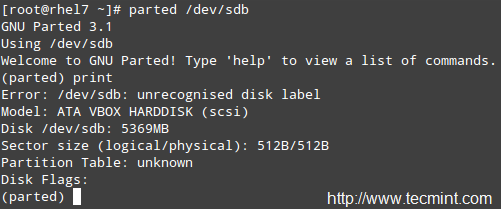

The Linux Foundation launched the LFCS certification (Linux Foundation Certified Sysadmin), a brand-new initiative whose purpose is to allow individuals everywhere (and anywhere) to get certified in basic to intermediate operational support for Linux systems, which includes supporting running systems and services, along with overall monitoring and analysis, plus smart decision-making when it comes to raising issues to upper support teams.

Linux Foundation Certified Sysadmin – Part 10

Check out the following video, which gives an introduction to the Linux Foundation Certification Program.

Note: YouTube video

<iframe width="720" height="405" frameborder="0" allowfullscreen="allowfullscreen" src="//www.youtube.com/embed/Y29qZ71Kicg"></iframe>

This is the last article (Part 10) of this 10-tutorial series. In this article we will focus on basic shell scripting and troubleshooting Linux file systems. Both topics are required for the LFCS certification exam.

### Understanding Terminals and Shells ###

Let’s clarify a few concepts first.

- A shell is a program that takes commands and gives them to the operating system to be executed.
- A terminal is a program that allows us as end users to interact with the shell. One example of a terminal is GNOME Terminal, as shown in the image below.

Gnome Terminal

When we first start a shell, it presents a command prompt (also known as the command line), which tells us that the shell is ready to start accepting commands from its standard input device, which is usually the keyboard.

You may want to refer to another article in this series ([Use Command to Create, Edit, and Manipulate files – Part 1][1]) to review some useful commands.

Linux provides a range of options for shells, the following being the most common:

**bash Shell**

Bash stands for Bourne Again SHell and is the GNU Project’s default shell. It incorporates useful features from the Korn shell (ksh) and C shell (csh), offering several improvements at the same time. This is the default shell used by the distributions covered in the LFCS certification, and it is the shell that we will use in this tutorial.

**sh Shell**

The Bourne SHell is the oldest of these shells and was therefore the default shell of many UNIX-like operating systems for many years.

**ksh Shell**

The Korn SHell is a Unix shell which was developed by David Korn at Bell Labs in the early 1980s. It is backward-compatible with the Bourne shell and includes many features of the C shell.

A shell script is nothing more and nothing less than a text file turned into an executable program that combines commands that are executed by the shell one after another.

### Basic Shell Scripting ###

As mentioned earlier, a shell script is born as a plain text file. Thus, it can be created and edited using our preferred text editor. You may want to consider using vi/m (refer to [Usage of vi Editor – Part 2][2] of this series), which features syntax highlighting for your convenience.

Type the following command to create a file named myscript.sh and press Enter.

    # vim myscript.sh

The very first line of a shell script must be as follows (this line is known as a shebang).

    #!/bin/bash

It “tells” the operating system the name of the interpreter that should be used to run the text that follows.

Now it’s time to add our commands. We can clarify the purpose of each command, or the entire script, by adding comments as well. Note that the shell ignores those lines beginning with a pound sign # (explanatory comments).

    #!/bin/bash
    # Print a short introduction and today's date
    echo This is Part 10 of the 10-article series about the LFCS certification
    echo Today is $(date +%Y-%m-%d)

Once the script has been written and saved, we need to make it executable.

    # chmod 755 myscript.sh

Before running our script, we need to say a few words about the $PATH environment variable. If we run

    echo $PATH

from the command line, we will see the contents of $PATH: a colon-separated list of directories that are searched when we enter the name of an executable program. It is called an environment variable because it is part of the shell environment, a set of information that becomes available to the shell and its child processes when the shell is first started.

When we type a command and press Enter, the shell searches the directories listed in the $PATH variable, in order, and executes the first instance that is found. Let’s see an example:

Environment Variables

If there are two executable files with the same name, one in /usr/local/bin and another in /usr/bin, the one in the directory listed first in $PATH (usually /usr/local/bin) will be executed, whereas the other will be disregarded.
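
The search order just described can be spelled out in a few lines of Bash. This is only an illustration of the directory lookup (the shell also checks aliases, functions, builtins and its own hash table before searching $PATH); in practice `command -v NAME` or `type -P NAME` answers the same question. The function `first_in_path` is hypothetical:

```bash
#!/bin/bash
# Hypothetical helper: print the first executable file named NAME found
# in a colon-separated list of directories (defaults to $PATH), in the
# same left-to-right order the shell uses when searching $PATH.
first_in_path() {
    local name=$1 dirs=${2-$PATH} dir
    local -a entries
    # Split the directory list on colons into an array.
    IFS=: read -r -a entries <<< "$dirs"
    for dir in "${entries[@]}"; do
        # An empty entry in PATH stands for the current directory.
        [ -n "$dir" ] || dir=.
        if [ -f "$dir/$name" ] && [ -x "$dir/$name" ]; then
            printf '%s\n' "$dir/$name"
            return 0
        fi
    done
    return 1    # not found in any directory
}
```

For example, with copies of a script in both directories, `first_in_path myscript.sh "/usr/local/bin:/usr/bin"` would print the /usr/local/bin copy, and swapping the two directories would print the /usr/bin one.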

If we haven’t saved our script inside one of the directories listed in the $PATH variable, we need to prepend ./ to the file name in order to execute it. Otherwise, we can run it just as we would do with a regular command.

    # pwd
    # ./myscript.sh
    # cp myscript.sh ../bin
    # cd ../bin
    # pwd
    # myscript.sh

Execute Script

#### Conditionals ####

Whenever you need to specify different courses of action to be taken in a shell script as a result of the success or failure of a command, you will use the if construct to define such conditions. Its basic syntax is:

    if CONDITION; then
        COMMANDS;
    else
        OTHER-COMMANDS
    fi

Where CONDITION can be one of the following (only the most frequent conditions are cited here) and evaluates to true when:

- [ -a file ] → file exists (-e is an equivalent test).
- [ -d file ] → file exists and is a directory.
- [ -f file ] → file exists and is a regular file.
- [ -u file ] → file exists and its SUID (set user ID) bit is set.
- [ -g file ] → file exists and its SGID bit is set.
- [ -k file ] → file exists and its sticky bit is set.
- [ -r file ] → file exists and is readable.
- [ -s file ] → file exists and is not empty.
- [ -w file ] → file exists and is writable.
- [ -x file ] → file exists and is executable.
- [ string1 = string2 ] → the strings are equal.
- [ string1 != string2 ] → the strings are not equal.
- [ int1 op int2 ] → the integer comparison holds, where op is one of the following comparison operators:
    - -eq → int1 is equal to int2.
    - -ne → int1 is not equal to int2.
    - -lt → int1 is less than int2.
    - -le → int1 is less than or equal to int2.
    - -gt → int1 is greater than int2.
    - -ge → int1 is greater than or equal to int2.
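
To see a few of these tests inside the if construct, here is a minimal sketch. The functions `describe` and `check_usage` and their messages are hypothetical; `elif`, which chains additional conditions, is standard Bash syntax not covered above:

```bash
#!/bin/bash
# Hypothetical helper: report what kind of file a path is, using the
# -d, -f and -x tests from the list above.
describe() {
    local path=$1
    if [ -d "$path" ]; then
        echo "$path is a directory"
    elif [ -f "$path" ] && [ -x "$path" ]; then
        echo "$path is an executable regular file"
    elif [ -f "$path" ]; then
        echo "$path is a regular file"
    else
        echo "$path does not exist (or is a special file)"
    fi
}

# Hypothetical helper: integer comparison with -ge against a fixed limit.
check_usage() {
    local used=$1 limit=90
    if [ "$used" -ge "$limit" ]; then
        echo "WARNING: ${used}% used"
    else
        echo "OK: ${used}% used"
    fi
}
```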

#### For Loops ####

This loop allows you to execute one or more commands for each value in a list of values. Its basic syntax is:

    for item in SEQUENCE; do
        COMMANDS;
    done

Where item is a generic variable that represents each value in SEQUENCE during each iteration.
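
Two small illustrations of SEQUENCE follow: a literal list of words, and a glob that expands to file names. A minimal sketch; `count_lines` is a hypothetical helper:

```bash
#!/bin/bash
# SEQUENCE given as a literal list: "shell" takes each value in turn.
for shell in bash sh ksh; do
    echo "Shell: $shell"
done

# Hypothetical helper: SEQUENCE produced by a glob, one .txt file per iteration.
count_lines() {
    local dir=$1 file lines
    for file in "$dir"/*.txt; do
        # With no match, the pattern stays unexpanded; skip it.
        [ -e "$file" ] || continue
        lines=$(wc -l < "$file")
        echo "$(basename "$file"): $((lines))"
    done
}
```

Usage: `count_lines /etc` prints one `name: count` line for each .txt file in /etc, and nothing if there are none.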

#### While Loops ####

This loop allows you to execute a series of repetitive commands as long as the control command executes with an exit status equal to zero (successfully). Its basic syntax is:

    while EVALUATION_COMMAND; do
        EXECUTE_COMMANDS;
    done

Where EVALUATION_COMMAND can be any command(s) that can exit with a success (0) or failure (other than 0) status, and EXECUTE_COMMANDS can be any program, script or shell construct, including other nested loops.
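
A common use of while is reading a file line by line: the `read` builtin exits with a non-zero status at end of file, which ends the loop. A minimal sketch; `number_lines` is a hypothetical helper, and the countdown at the end shows the test command `[ ]` used as EVALUATION_COMMAND:

```bash
#!/bin/bash
# Hypothetical helper: print each line of FILE prefixed with its number.
number_lines() {
    local file=$1 line n=0
    # IFS= keeps leading/trailing spaces; -r keeps backslashes literal.
    # The || [ -n "$line" ] part also keeps a last line that has no
    # trailing newline (read fails there but still fills $line).
    while IFS= read -r line || [ -n "$line" ]; do
        n=$((n + 1))
        printf '%d: %s\n' "$n" "$line"
    done < "$file"
}

# Counter-controlled loop: runs while the test command succeeds.
count=3
while [ "$count" -gt 0 ]; do
    echo "$count..."
    count=$((count - 1))
done
```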

#### Putting It All Together ####

We will demonstrate the use of the if construct and the for loop with the following examples.

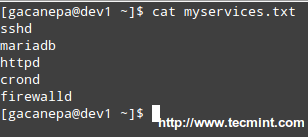

**Determining if a service is running in a systemd-based distro**

Let’s create a file with a list of services that we want to monitor at a glance.

    # cat myservices.txt
    sshd
    mariadb
    httpd
    crond
    firewalld

Script to Monitor Linux Services

Our shell script should look like this:

    #!/bin/bash

    # This script iterates over a list of services and
    # is used to determine whether they are running or not.

    for service in $(cat myservices.txt); do
        systemctl status $service | grep --quiet "running"
        if [ $? -eq 0 ]; then
            echo $service "is [ACTIVE]"
        else
            echo $service "is [INACTIVE or NOT INSTALLED]"
        fi
    done

Linux Service Monitoring Script

**Let’s explain how the script works.**

1). The for loop reads the myservices.txt file one element of LIST at a time. That single element is denoted by the generic variable named service. The LIST is populated with the output of:

    # cat myservices.txt

2). The above command is enclosed in parentheses and preceded by a dollar sign (command substitution) to indicate that it should be evaluated to populate the LIST that we will iterate over.

3). For each element of LIST (meaning every instance of the service variable), the following command will be executed.

    # systemctl status $service | grep --quiet "running"

This time we need to precede our generic variable (which represents each element in LIST) with a dollar sign to indicate it’s a variable and thus its value in each iteration should be used. The output is then piped to grep.

The --quiet flag prevents grep from printing the matching lines (those containing the word running) to the screen. When the word is found, the above command returns an exit status of 0 (represented by $? in the if construct), thus verifying that the service is running.

An exit status other than 0 (meaning the word running was not found in the output of systemctl status $service) indicates that the service is not running.

Services Monitoring Script

We could go one step further and check for the existence of myservices.txt before even attempting to enter the for loop.

    #!/bin/bash

    # This script iterates over a list of services and
    # is used to determine whether they are running or not.

    if [ -f myservices.txt ]; then
        for service in $(cat myservices.txt); do
            systemctl status $service | grep --quiet "running"
            if [ $? -eq 0 ]; then
                echo $service "is [ACTIVE]"
            else
                echo $service "is [INACTIVE or NOT INSTALLED]"
            fi
        done
    else
        echo "myservices.txt is missing"
    fi
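
A variation on the same script is sketched below. It assumes a systemd version whose `systemctl is-active --quiet` reports the unit state through its exit status (so grep is not needed), and it reads myservices.txt with a while loop, which also skips blank lines. `check_services` is a hypothetical name, not part of the original script:

```bash
#!/bin/bash
# Hypothetical variant of the script above. Usage: check_services [FILE]
check_services() {
    local list=${1:-myservices.txt} service
    if [ ! -f "$list" ]; then
        echo "$list is missing" >&2
        return 1
    fi
    while IFS= read -r service || [ -n "$service" ]; do
        # Skip empty lines.
        [ -z "$service" ] && continue
        # is-active exits with status 0 only if the unit is active.
        if systemctl is-active --quiet "$service"; then
            echo "$service is [ACTIVE]"
        else
            echo "$service is [INACTIVE or NOT INSTALLED]"
        fi
    done < "$list"
}
```

Run it as `check_services myservices.txt`. Note that is-active also counts units in the active (exited) state, such as finished oneshot services, as active, whereas grepping for running would not.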

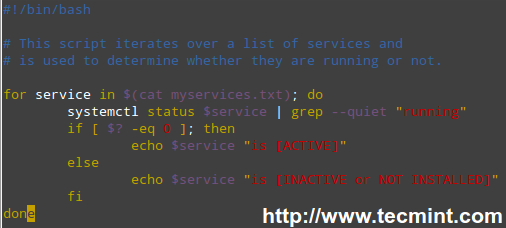

**Pinging a series of network or internet hosts for reply statistics**

You may want to maintain a list of hosts in a text file and use a script to determine every now and then whether they’re pingable or not (feel free to replace the contents of myhosts and try for yourself).