mirror of

https://github.com/LCTT/TranslateProject.git

synced 2025-01-25 23:11:02 +08:00

Merge remote-tracking branch 'LCTT/master'

This commit is contained in:

commit

c004c0a5a4

@ -4,9 +4,9 @@ script:

|

||||

- 'if [ "$TRAVIS_PULL_REQUEST" = "false" ]; then sh ./scripts/badge.sh; fi'

|

||||

branches:

|

||||

only:

|

||||

- master

|

||||

- master

|

||||

except:

|

||||

- gh-pages

|

||||

- gh-pages

|

||||

git:

|

||||

submodules: false

|

||||

deploy:

|

||||

|

||||

@ -1,4 +1,4 @@

|

||||

#!/bin/bash

|

||||

#!/bin/sh

|

||||

# PR 检查脚本

|

||||

set -e

|

||||

|

||||

|

||||

@ -22,7 +22,12 @@ do_analyze() {

|

||||

# 统计每个类别的每个操作

|

||||

REGEX="$(get_operation_regex "$STAT" "$TYPE")"

|

||||

OTHER_REGEX="${OTHER_REGEX}|${REGEX}"

|

||||

eval "${TYPE}_${STAT}=\"\$(grep -Ec '$REGEX' /tmp/changes)\"" || true

|

||||

CHANGES_FILE="/tmp/changes_${TYPE}_${STAT}"

|

||||

eval "grep -E '$REGEX' /tmp/changes" \

|

||||

| sed 's/^[^\/]*\///g' \

|

||||

| sort > "$CHANGES_FILE" || true

|

||||

sed 's/^.*\///g' "$CHANGES_FILE" > "${CHANGES_FILE}_basename"

|

||||

eval "${TYPE}_${STAT}=$(wc -l < "$CHANGES_FILE")"

|

||||

eval echo "${TYPE}_${STAT}=\$${TYPE}_${STAT}"

|

||||

done

|

||||

done

|

||||

|

||||

@ -1,4 +1,4 @@

|

||||

#!/bin/bash

|

||||

#!/bin/sh

|

||||

# 检查脚本状态

|

||||

set -e

|

||||

|

||||

|

||||

@ -1,4 +1,4 @@

|

||||

#!/bin/bash

|

||||

#!/bin/sh

|

||||

# PR 文件变更收集

|

||||

set -e

|

||||

|

||||

@ -31,7 +31,16 @@ git --no-pager show --summary "${MERGE_BASE}..HEAD"

|

||||

|

||||

echo "[收集] 写出文件变更列表……"

|

||||

|

||||

git diff "$MERGE_BASE" HEAD --no-renames --name-status > /tmp/changes

|

||||

RAW_CHANGES="$(git diff "$MERGE_BASE" HEAD --no-renames --name-status -z \

|

||||

| tr '\0' '\n')"

|

||||

[ -z "$RAW_CHANGES" ] && {

|

||||

echo "[收集] 无变更,退出……"

|

||||

exit 1

|

||||

}

|

||||

echo "$RAW_CHANGES" | while read -r STAT; do

|

||||

read -r NAME

|

||||

echo "${STAT} ${NAME}"

|

||||

done > /tmp/changes

|

||||

echo "[收集] 已写出文件变更列表:"

|

||||

cat /tmp/changes

|

||||

{ [ -z "$(cat /tmp/changes)" ] && echo "(无变更)"; } || true

|

||||

|

||||

@ -10,9 +10,10 @@ export TSL_DIR='translated' # 已翻译

|

||||

export PUB_DIR='published' # 已发布

|

||||

|

||||

# 定义匹配规则

|

||||

export CATE_PATTERN='(news|talk|tech)' # 类别

|

||||

export CATE_PATTERN='(talk|tech)' # 类别

|

||||

export FILE_PATTERN='[0-9]{8} [a-zA-Z0-9_.,() -]*\.md' # 文件名

|

||||

|

||||

# 获取用于匹配操作的正则表达式

|

||||

# 用法:get_operation_regex 状态 类型

|

||||

#

|

||||

# 状态为:

|

||||

@ -26,5 +27,50 @@ export FILE_PATTERN='[0-9]{8} [a-zA-Z0-9_.,() -]*\.md' # 文件名

|

||||

get_operation_regex() {

|

||||

STAT="$1"

|

||||

TYPE="$2"

|

||||

|

||||

echo "^${STAT}\\s+\"?$(eval echo "\$${TYPE}_DIR")/"

|

||||

}

|

||||

|

||||

# 确保两个变更文件一致

|

||||

# 用法:ensure_identical X类型 X状态 Y类型 Y状态 是否仅比较文件名

|

||||

#

|

||||

# 状态为:

|

||||

# - A:添加

|

||||

# - M:修改

|

||||

# - D:删除

|

||||

# 类型为:

|

||||

# - SRC:未翻译

|

||||

# - TSL:已翻译

|

||||

# - PUB:已发布

|

||||

ensure_identical() {

|

||||

TYPE_X="$1"

|

||||

STAT_X="$2"

|

||||

TYPE_Y="$3"

|

||||

STAT_Y="$4"

|

||||

NAME_ONLY="$5"

|

||||

SUFFIX=

|

||||

[ -n "$NAME_ONLY" ] && SUFFIX="_basename"

|

||||

|

||||

X_FILE="/tmp/changes_${TYPE_X}_${STAT_X}${SUFFIX}"

|

||||

Y_FILE="/tmp/changes_${TYPE_Y}_${STAT_Y}${SUFFIX}"

|

||||

|

||||

cmp "$X_FILE" "$Y_FILE" 2> /dev/null

|

||||

}

|

||||

|

||||

# 检查文章分类

|

||||

# 用法:check_category 类型 状态

|

||||

#

|

||||

# 状态为:

|

||||

# - A:添加

|

||||

# - M:修改

|

||||

# - D:删除

|

||||

# 类型为:

|

||||

# - SRC:未翻译

|

||||

# - TSL:已翻译

|

||||

check_category() {

|

||||

TYPE="$1"

|

||||

STAT="$2"

|

||||

|

||||

CHANGES="/tmp/changes_${TYPE}_${STAT}"

|

||||

! grep -Eqv "^${CATE_PATTERN}/" "$CHANGES"

|

||||

}

|

||||

|

||||

@ -1,4 +1,4 @@

|

||||

#!/bin/bash

|

||||

#!/bin/sh

|

||||

# 匹配 PR 规则

|

||||

set -e

|

||||

|

||||

@ -27,31 +27,39 @@ rule_bypass_check() {

|

||||

# 添加原文:添加至少一篇原文

|

||||

rule_source_added() {

|

||||

[ "$SRC_A" -ge 1 ] \

|

||||

&& check_category SRC A \

|

||||

&& [ "$TOTAL" -eq "$SRC_A" ] && echo "匹配规则:添加原文 ${SRC_A} 篇"

|

||||

}

|

||||

|

||||

# 申领翻译:只能申领一篇原文

|

||||

rule_translation_requested() {

|

||||

[ "$SRC_M" -eq 1 ] \

|

||||

&& check_category SRC M \

|

||||

&& [ "$TOTAL" -eq 1 ] && echo "匹配规则:申领翻译"

|

||||

}

|

||||

|

||||

# 提交译文:只能提交一篇译文

|

||||

rule_translation_completed() {

|

||||

[ "$SRC_D" -eq 1 ] && [ "$TSL_A" -eq 1 ] \

|

||||

&& ensure_identical SRC D TSL A \

|

||||

&& check_category SRC D \

|

||||

&& check_category TSL A \

|

||||

&& [ "$TOTAL" -eq 2 ] && echo "匹配规则:提交译文"

|

||||

}

|

||||

|

||||

# 校对译文:只能校对一篇

|

||||

rule_translation_revised() {

|

||||

[ "$TSL_M" -eq 1 ] \

|

||||

&& check_category TSL M \

|

||||

&& [ "$TOTAL" -eq 1 ] && echo "匹配规则:校对译文"

|

||||

}

|

||||

|

||||

# 发布译文:发布多篇译文

|

||||

rule_translation_published() {

|

||||

[ "$TSL_D" -ge 1 ] && [ "$PUB_A" -ge 1 ] && [ "$TSL_D" -eq "$PUB_A" ] \

|

||||

&& [ "$TOTAL" -eq $(($TSL_D + $PUB_A)) ] \

|

||||

&& ensure_identical SRC D TSL A 1 \

|

||||

&& check_category TSL D \

|

||||

&& [ "$TOTAL" -eq $((TSL_D + PUB_A)) ] \

|

||||

&& echo "匹配规则:发布译文 ${PUB_A} 篇"

|

||||

}

|

||||

|

||||

|

||||

@ -1,3 +1,6 @@

|

||||

Translating by MjSeven

|

||||

|

||||

|

||||

For your first HTML code, let’s help Batman write a love letter

|

||||

============================================================

|

||||

|

||||

@ -553,360 +556,4 @@ We want to apply our styles to the specific div and img that we are using right

|

||||

<div id="letter-container">

|

||||

```

|

||||

|

||||

and here’s how to use this id in our embedded style as a selector:

|

||||

|

||||

```

|

||||

#letter-container{

|

||||

...

|

||||

}

|

||||

```

|

||||

|

||||

Notice the “#” symbol. It indicates that it is an id, and the styles inside {…} should apply to the element with that specific id only.

|

||||

|

||||

Let’s apply this to our code:

|

||||

|

||||

```

|

||||

<style>

|

||||

#letter-container{

|

||||

width:550px;

|

||||

}

|

||||

#header-bat-logo{

|

||||

width:100%;

|

||||

}

|

||||

</style>

|

||||

```

|

||||

|

||||

```

|

||||

<div id="letter-container">

|

||||

<h1>Bat Letter</h1>

|

||||

<img id="header-bat-logo" src="bat-logo.jpeg">

|

||||

<p>

|

||||

After all the battles we faught together, after all the difficult times we saw together, after all the good and bad moments we've been through, I think it's time I let you know how I feel about you.

|

||||

</p>

|

||||

```

|

||||

|

||||

```

|

||||

<h2>You are the light of my life</h2>

|

||||

<p>

|

||||

You complete my darkness with your light. I love:

|

||||

</p>

|

||||

<ul>

|

||||

<li>the way you see good in the worse</li>

|

||||

<li>the way you handle emotionally difficult situations</li>

|

||||

<li>the way you look at Justice</li>

|

||||

</ul>

|

||||

<p>

|

||||

I have learned a lot from you. You have occupied a special place in my heart over the time.

|

||||

</p>

|

||||

<h2>I have a confession to make</h2>

|

||||

<p>

|

||||

It feels like my chest <em>does</em> have a heart. You make my heart beat. Your smile brings smile on my face, your pain brings pain to my heart.

|

||||

</p>

|

||||

<p>

|

||||

I don't show my emotions, but I think this man behind the mask is falling for you.

|

||||

</p>

|

||||

<p><strong>I love you Superman.</strong></p>

|

||||

<p>

|

||||

Your not-so-secret-lover, <br>

|

||||

Batman

|

||||

</p>

|

||||

</div>

|

||||

```

|

||||

|

||||

Our HTML is ready with embedded styling.

|

||||

|

||||

However, you can see that as we include more styles, the <style></style> will get bigger. This can quickly clutter our main html file. So let’s go one step further and use linked styling by copying the content inside our style tag to a new file.

|

||||

|

||||

Create a new file in the project root directory and save it as style.css:

|

||||

|

||||

```

|

||||

#letter-container{

|

||||

width:550px;

|

||||

}

|

||||

#header-bat-logo{

|

||||

width:100%;

|

||||

}

|

||||

```

|

||||

|

||||

We don’t need to write `<style>` and `</style>` in our CSS file.

|

||||

|

||||

We need to link our newly created CSS file to our HTML file using the `<link>`tag in our html file. Here’s how we can do that:

|

||||

|

||||

```

|

||||

<link rel="stylesheet" type="text/css" href="style.css">

|

||||

```

|

||||

|

||||

We use the link element to include external resources inside your HTML document. It is mostly used to link Stylesheets. The three attributes that we are using are:

|

||||

|

||||

* rel: Relation. What relationship the linked file has to the document. The file with the .css extension is called a stylesheet, and so we keep rel=“stylesheet”.

|

||||

|

||||

* type: the Type of the linked file; it’s “text/css” for a CSS file.

|

||||

|

||||

* href: Hypertext Reference. Location of the linked file.

|

||||

|

||||

There is no </link> at the end of the link element. So, <link> is also a self-closing tag.

|

||||

|

||||

```

|

||||

<link rel="gf" type="cute" href="girl.next.door">

|

||||

```

|

||||

|

||||

If only getting a Girlfriend was so easy :D

|

||||

|

||||

Nah, that’s not gonna happen, let’s move on.

|

||||

|

||||

Here’s the content of our loveletter.html:

|

||||

|

||||

```

|

||||

<link rel="stylesheet" type="text/css" href="style.css">

|

||||

<div id="letter-container">

|

||||

<h1>Bat Letter</h1>

|

||||

<img id="header-bat-logo" src="bat-logo.jpeg">

|

||||

<p>

|

||||

After all the battles we faught together, after all the difficult times we saw together, after all the good and bad moments we've been through, I think it's time I let you know how I feel about you.

|

||||

</p>

|

||||

<h2>You are the light of my life</h2>

|

||||

<p>

|

||||

You complete my darkness with your light. I love:

|

||||

</p>

|

||||

<ul>

|

||||

<li>the way you see good in the worse</li>

|

||||

<li>the way you handle emotionally difficult situations</li>

|

||||

<li>the way you look at Justice</li>

|

||||

</ul>

|

||||

<p>

|

||||

I have learned a lot from you. You have occupied a special place in my heart over the time.

|

||||

</p>

|

||||

<h2>I have a confession to make</h2>

|

||||

<p>

|

||||

It feels like my chest <em>does</em> have a heart. You make my heart beat. Your smile brings smile on my face, your pain brings pain to my heart.

|

||||

</p>

|

||||

<p>

|

||||

I don't show my emotions, but I think this man behind the mask is falling for you.

|

||||

</p>

|

||||

<p><strong>I love you Superman.</strong></p>

|

||||

<p>

|

||||

Your not-so-secret-lover, <br>

|

||||

Batman

|

||||

</p>

|

||||

</div>

|

||||

```

|

||||

|

||||

and our style.css:

|

||||

|

||||

```

|

||||

#letter-container{

|

||||

width:550px;

|

||||

}

|

||||

#header-bat-logo{

|

||||

width:100%;

|

||||

}

|

||||

```

|

||||

|

||||

Save both the files and refresh, and your output in the browser should remain the same.

|

||||

|

||||

### A Few Formalities

|

||||

|

||||

Our love letter is almost ready to deliver to Batman, but there are a few formal pieces remaining.

|

||||

|

||||

Like any other programming language, HTML has also gone through many versions since its birth year(1990). The current version of HTML is HTML5.

|

||||

|

||||

So, how would the browser know which version of HTML you are using to code your page? To tell the browser that you are using HTML5, you need to include `<!DOCTYPE html>` at top of the page. For older versions of HTML, this line used to be different, but you don’t need to learn that because we don’t use them anymore.

|

||||

|

||||

Also, in previous HTML versions, we used to encapsulate the entire document inside `<html></html>` tag. The entire file was divided into two major sections: Head, inside `<head></head>`, and Body, inside `<body></body>`. This is not required in HTML5, but we still do this for compatibility reasons. Let’s update our code with `<Doctype>`, `<html>`, `<head>` and `<body>`:

|

||||

|

||||

```

|

||||

<!DOCTYPE html>

|

||||

<html>

|

||||

<head>

|

||||

<link rel="stylesheet" type="text/css" href="style.css">

|

||||

</head>

|

||||

<body>

|

||||

<div id="letter-container">

|

||||

<h1>Bat Letter</h1>

|

||||

<img id="header-bat-logo" src="bat-logo.jpeg">

|

||||

<p>

|

||||

After all the battles we faught together, after all the difficult times we saw together, after all the good and bad moments we've been through, I think it's time I let you know how I feel about you.

|

||||

</p>

|

||||

<h2>You are the light of my life</h2>

|

||||

<p>

|

||||

You complete my darkness with your light. I love:

|

||||

</p>

|

||||

<ul>

|

||||

<li>the way you see good in the worse</li>

|

||||

<li>the way you handle emotionally difficult situations</li>

|

||||

<li>the way you look at Justice</li>

|

||||

</ul>

|

||||

<p>

|

||||

I have learned a lot from you. You have occupied a special place in my heart over the time.

|

||||

</p>

|

||||

<h2>I have a confession to make</h2>

|

||||

<p>

|

||||

It feels like my chest <em>does</em> have a heart. You make my heart beat. Your smile brings smile on my face, your pain brings pain to my heart.

|

||||

</p>

|

||||

<p>

|

||||

I don't show my emotions, but I think this man behind the mask is falling for you.

|

||||

</p>

|

||||

<p><strong>I love you Superman.</strong></p>

|

||||

<p>

|

||||

Your not-so-secret-lover, <br>

|

||||

Batman

|

||||

</p>

|

||||

</div>

|

||||

</body>

|

||||

</html>

|

||||

```

|

||||

|

||||

The main content goes inside `<body>` and meta information goes inside `<head>`. So we keep the div inside `<body>` and load the stylesheets inside `<head>`.

|

||||

|

||||

Save and refresh, and your HTML page should display the same as earlier.

|

||||

|

||||

### Title in HTML

|

||||

|

||||

This is the last change. I promise.

|

||||

|

||||

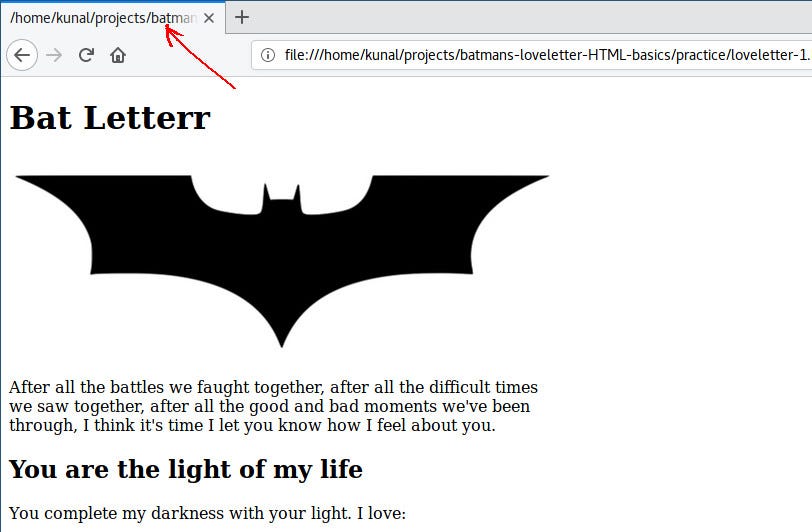

You might have noticed that the title of the tab is displaying the path of the HTML file:

|

||||

|

||||

|

||||

|

||||

|

||||

We can use `<title>` tag to define a title for our HTML file. The title tag also, like the link tag, goes inside head. Let’s put “Bat Letter” in our title:

|

||||

|

||||

```

|

||||

<!DOCTYPE html>

|

||||

<html>

|

||||

<head>

|

||||

<title>Bat Letter</title>

|

||||

<link rel="stylesheet" type="text/css" href="style.css">

|

||||

</head>

|

||||

<body>

|

||||

<div id="letter-container">

|

||||

<h1>Bat Letter</h1>

|

||||

<img id="header-bat-logo" src="bat-logo.jpeg">

|

||||

<p>

|

||||

After all the battles we faught together, after all the difficult times we saw together, after all the good and bad moments we've been through, I think it's time I let you know how I feel about you.

|

||||

</p>

|

||||

<h2>You are the light of my life</h2>

|

||||

<p>

|

||||

You complete my darkness with your light. I love:

|

||||

</p>

|

||||

<ul>

|

||||

<li>the way you see good in the worse</li>

|

||||

<li>the way you handle emotionally difficult situations</li>

|

||||

<li>the way you look at Justice</li>

|

||||

</ul>

|

||||

<p>

|

||||

I have learned a lot from you. You have occupied a special place in my heart over the time.

|

||||

</p>

|

||||

<h2>I have a confession to make</h2>

|

||||

<p>

|

||||

It feels like my chest <em>does</em> have a heart. You make my heart beat. Your smile brings smile on my face, your pain brings pain to my heart.

|

||||

</p>

|

||||

<p>

|

||||

I don't show my emotions, but I think this man behind the mask is falling for you.

|

||||

</p>

|

||||

<p><strong>I love you Superman.</strong></p>

|

||||

<p>

|

||||

Your not-so-secret-lover, <br>

|

||||

Batman

|

||||

</p>

|

||||

</div>

|

||||

</body>

|

||||

</html>

|

||||

```

|

||||

|

||||

Save and refresh, and you will see that instead of the file path, “Bat Letter” is now displayed on the tab.

|

||||

|

||||

Batman’s Love Letter is now complete.

|

||||

|

||||

Congratulations! You made Batman’s Love Letter in HTML.

|

||||

|

||||

|

||||

|

||||

|

||||

### What we learned

|

||||

|

||||

We learned the following new concepts:

|

||||

|

||||

* The structure of an HTML document

|

||||

|

||||

* How to write elements in HTML (<p></p>)

|

||||

|

||||

* How to write styles inside the element using the style attribute (this is called inline styling, avoid this as much as you can)

|

||||

|

||||

* How to write styles of an element inside <style>…</style> (this is called embedded styling)

|

||||

|

||||

* How to write styles in a separate file and link to it in HTML using <link> (this is called a linked stylesheet)

|

||||

|

||||

* What is a tag name, attribute, opening tag, and closing tag

|

||||

|

||||

* How to give an id to an element using id attribute

|

||||

|

||||

* Tag selectors and id selectors in CSS

|

||||

|

||||

We learned the following HTML tags:

|

||||

|

||||

* <p>: for paragraphs

|

||||

|

||||

* <br>: for line breaks

|

||||

|

||||

* <ul>, <li>: to display lists

|

||||

|

||||

* <div>: for grouping elements of our letter

|

||||

|

||||

* <h1>, <h2>: for heading and sub heading

|

||||

|

||||

* <img>: to insert an image

|

||||

|

||||

* <strong>, <em>: for bold and italic text styling

|

||||

|

||||

* <style>: for embedded styling

|

||||

|

||||

* <link>: for including external an stylesheet

|

||||

|

||||

* <html>: to wrap the entire HTML document

|

||||

|

||||

* <!DOCTYPE html>: to let the browser know that we are using HTML5

|

||||

|

||||

* <head>: to wrap meta info, like <link> and <title>

|

||||

|

||||

* <body>: for the body of the HTML page that is actually displayed

|

||||

|

||||

* <title>: for the title of the HTML page

|

||||

|

||||

We learned the following CSS properties:

|

||||

|

||||

* width: to define the width of an element

|

||||

|

||||

* CSS units: “px” and “%”

|

||||

|

||||

That’s it for the day friends, see you in the next tutorial.

|

||||

|

||||

* * *

|

||||

|

||||

Want to learn Web Development with fun and engaging tutorials?

|

||||

|

||||

[Click here to get new Web Development tutorials every week.][4]

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

|

||||

作者简介:

|

||||

|

||||

Developer + Writer | supersarkar.com | twitter.com/supersarkar

|

||||

|

||||

-------------

|

||||

|

||||

|

||||

via: https://medium.freecodecamp.org/for-your-first-html-code-lets-help-batman-write-a-love-letter-64c203b9360b

|

||||

|

||||

作者:[Kunal Sarkar][a]

|

||||

译者:[译者ID](https://github.com/译者ID)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:https://medium.freecodecamp.org/@supersarkar

|

||||

[1]:https://www.pexels.com/photo/batman-black-and-white-logo-93596/

|

||||

[2]:https://code.visualstudio.com/

|

||||

[3]:https://www.pexels.com/photo/batman-black-and-white-logo-93596/

|

||||

[4]:http://supersarkar.com/

|

||||

and here’s how to use th

|

||||

|

||||

@ -1,258 +0,0 @@

|

||||

translating by Flowsnow

|

||||

|

||||

Build a bikesharing app with Redis and Python

|

||||

======

|

||||

|

||||

|

||||

|

||||

I travel a lot on business. I'm not much of a car guy, so when I have some free time, I prefer to walk or bike around a city. Many of the cities I've visited on business have bikeshare systems, which let you rent a bike for a few hours. Most of these systems have an app to help users locate and rent their bikes, but it would be more helpful for users like me to have a single place to get information on all the bikes in a city that are available to rent.

|

||||

|

||||

To solve this problem and demonstrate the power of open source to add location-aware features to a web application, I combined publicly available bikeshare data, the [Python][1] programming language, and the open source [Redis][2] in-memory data structure server to index and query geospatial data.

|

||||

|

||||

The resulting bikeshare application incorporates data from many different sharing systems, including the [Citi Bike][3] bikeshare in New York City. It takes advantage of the General Bikeshare Feed provided by the Citi Bike system and uses its data to demonstrate some of the features that can be built using Redis to index geospatial data. The Citi Bike data is provided under the [Citi Bike data license agreement][4].

|

||||

|

||||

### General Bikeshare Feed Specification

|

||||

|

||||

The General Bikeshare Feed Specification (GBFS) is an [open data specification][5] developed by the [North American Bikeshare Association][6] to make it easier for map and transportation applications to add bikeshare systems into their platforms. The specification is currently in use by over 60 different sharing systems in the world.

|

||||

|

||||

The feed consists of several simple [JSON][7] data files containing information about the state of the system. The feed starts with a top-level JSON file referencing the URLs of the sub-feed data:

|

||||

```

|

||||

{

|

||||

|

||||

"data": {

|

||||

|

||||

"en": {

|

||||

|

||||

"feeds": [

|

||||

|

||||

{

|

||||

|

||||

"name": "system_information",

|

||||

|

||||

"url": "https://gbfs.citibikenyc.com/gbfs/en/system_information.json"

|

||||

|

||||

},

|

||||

|

||||

{

|

||||

|

||||

"name": "station_information",

|

||||

|

||||

"url": "https://gbfs.citibikenyc.com/gbfs/en/station_information.json"

|

||||

|

||||

},

|

||||

|

||||

. . .

|

||||

|

||||

]

|

||||

|

||||

}

|

||||

|

||||

},

|

||||

|

||||

"last_updated": 1506370010,

|

||||

|

||||

"ttl": 10

|

||||

|

||||

}

|

||||

|

||||

```

|

||||

|

||||

The first step is loading information about the bikesharing stations into Redis using data from the `system_information` and `station_information` feeds.

|

||||

|

||||

The `system_information` feed provides the system ID, which is a short code that can be used to create namespaces for Redis keys. The GBFS spec doesn't specify the format of the system ID, but does guarantee it is globally unique. Many of the bikeshare feeds use short names like coast_bike_share, boise_greenbike, or topeka_metro_bikes for system IDs. Others use familiar geographic abbreviations such as NYC or BA, and one uses a universally unique identifier (UUID). The bikesharing application uses the identifier as a prefix to construct unique keys for the given system.

|

||||

|

||||

The `station_information` feed provides static information about the sharing stations that comprise the system. Stations are represented by JSON objects with several fields. There are several mandatory fields in the station object that provide the ID, name, and location of the physical bike stations. There are also several optional fields that provide helpful information such as the nearest cross street or accepted payment methods. This is the primary source of information for this part of the bikesharing application.

|

||||

|

||||

### Building the database

|

||||

|

||||

I've written a sample application, [load_station_data.py][8], that mimics what would happen in a backend process for loading data from external sources.

|

||||

|

||||

### Finding the bikeshare stations

|

||||

|

||||

Loading the bikeshare data starts with the [systems.csv][9] file from the [GBFS repository on GitHub][5].

|

||||

|

||||

The repository's [systems.csv][9] file provides the discovery URL for registered bikeshare systems with an available GBFS feed. The discovery URL is the starting point for processing bikeshare information.

|

||||

|

||||

The `load_station_data` application takes each discovery URL found in the systems file and uses it to find the URL for two sub-feeds: system information and station information. The system information feed provides a key piece of information: the unique ID of the system. (Note: the system ID is also provided in the systems.csv file, but some of the identifiers in that file do not match the identifiers in the feeds, so I always fetch the identifier from the feed.) Details on the system, like bikeshare URLs, phone numbers, and emails, could be added in future versions of the application, so the data is stored in a Redis hash using the key `${system_id}:system_info`.

|

||||

|

||||

### Loading the station data

|

||||

|

||||

The station information provides data about every station in the system, including the system's location. The `load_station_data` application iterates over every station in the station feed and stores the data about each into a Redis hash using a key of the form `${system_id}:station:${station_id}`. The location of each station is added to a geospatial index for the bikeshare using the `GEOADD` command.

|

||||

|

||||

### Updating data

|

||||

|

||||

On subsequent runs, I don't want the code to remove all the feed data from Redis and reload it into an empty Redis database, so I carefully considered how to handle in-place updates of the data.

|

||||

|

||||

The code starts by loading the dataset with information on all the bikesharing stations for the system being processed into memory. When information is loaded for a station, the station (by key) is removed from the in-memory set of stations. Once all station data is loaded, we're left with a set containing all the station data that must be removed for that system.

|

||||

|

||||

The application iterates over this set of stations and creates a transaction to delete the station information, remove the station key from the geospatial indexes, and remove the station from the list of stations for the system.

|

||||

|

||||

### Notes on the code

|

||||

|

||||

There are a few interesting things to note in [the sample code][8]. First, items are added to the geospatial indexes using the `GEOADD` command but removed with the `ZREM` command. As the underlying implementation of the geospatial type uses sorted sets, items are removed using `ZREM`. A word of caution: For simplicity, the sample code demonstrates working with a single Redis node; the transaction blocks would need to be restructured to run in a cluster environment.

|

||||

|

||||

If you are using Redis 4.0 (or later), you have some alternatives to the `DELETE` and `HMSET` commands in the code. Redis 4.0 provides the [`UNLINK`][10] command as an asynchronous alternative to the `DELETE` command. `UNLINK` will remove the key from the keyspace, but it reclaims the memory in a separate thread. The [`HMSET`][11] command is [deprecated in Redis 4.0 and the `HSET` command is now variadic][12] (that is, it accepts an indefinite number of arguments).

|

||||

|

||||

### Notifying clients

|

||||

|

||||

At the end of the process, a notification is sent to the clients relying on our data. Using the Redis pub/sub mechanism, the notification goes out over the `geobike:station_changed` channel with the ID of the system.

|

||||

|

||||

### Data model

|

||||

|

||||

When structuring data in Redis, the most important thing to think about is how you will query the information. The two main queries the bikeshare application needs to support are:

|

||||

|

||||

* Find stations near us

|

||||

* Display information about stations

|

||||

|

||||

|

||||

|

||||

Redis provides two main data types that will be useful for storing our data: hashes and sorted sets. The [hash type][13] maps well to the JSON objects that represent stations; since Redis hashes don't enforce a schema, they can be used to store the variable station information.

|

||||

|

||||

Of course, finding stations geographically requires a geospatial index to search for stations relative to some coordinates. Redis provides [several commands][14] to build up a geospatial index using the [sorted set][15] data structure.

|

||||

|

||||

We construct keys using the format `${system_id}:station:${station_id}` for the hashes containing information about the stations and keys using the format `${system_id}:stations:location` for the geospatial index used to find stations.

|

||||

|

||||

### Getting the user's location

|

||||

|

||||

The next step in building out the application is to determine the user's current location. Most applications accomplish this through built-in services provided by the operating system. The OS can provide applications with a location based on GPS hardware built into the device or approximated from the device's available WiFi networks.

|

||||

|

||||

|

||||

|

||||

### Finding stations

|

||||

|

||||

After the user's location is found, the next step is locating nearby bikesharing stations. Redis' geospatial functions can return information on stations within a given distance of the user's current coordinates. Here's an example of this using the Redis command-line interface.

|

||||

|

||||

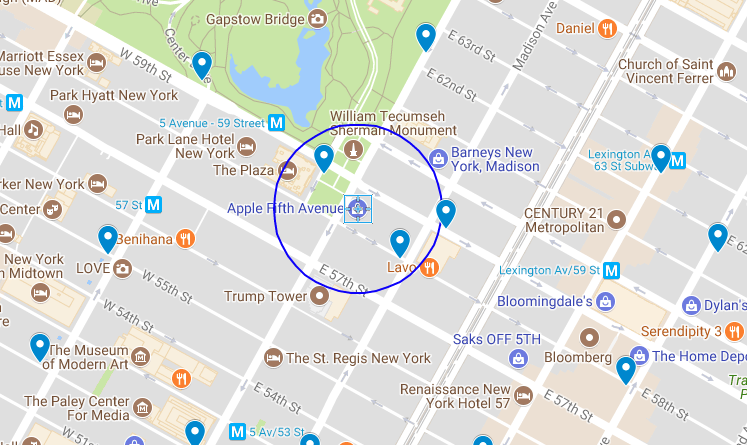

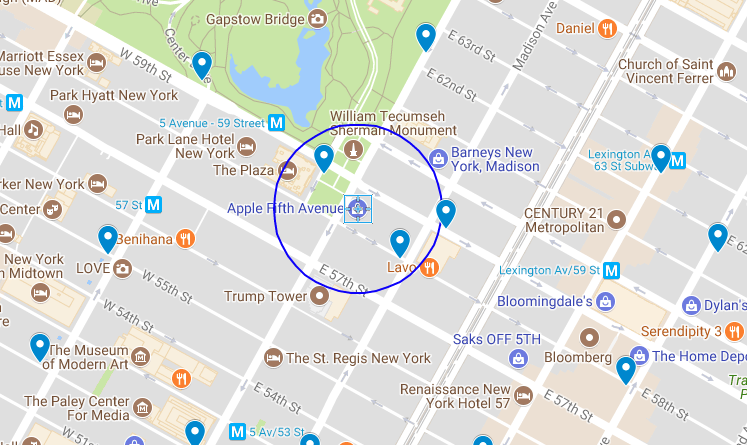

Imagine I'm at the Apple Store on Fifth Avenue in New York City, and I want to head downtown to Mood on West 37th to catch up with my buddy [Swatch][16]. I could take a taxi or the subway, but I'd rather bike. Are there any nearby sharing stations where I could get a bike for my trip?

|

||||

|

||||

The Apple store is located at 40.76384, -73.97297. According to the map, two bikeshare stations—Grand Army Plaza & Central Park South and East 58th St. & Madison—fall within a 500-foot radius (in blue on the map above) of the store.

|

||||

|

||||

I can use Redis' `GEORADIUS` command to query the NYC system index for stations within a 500-foot radius:

|

||||

```

|

||||

127.0.0.1:6379> GEORADIUS NYC:stations:location -73.97297 40.76384 500 ft

|

||||

|

||||

1) "NYC:station:3457"

|

||||

|

||||

2) "NYC:station:281"

|

||||

|

||||

```

|

||||

|

||||

Redis returns the two bikeshare locations found within that radius, using the elements in our geospatial index as the keys for the metadata about a particular station. The next step is looking up the names for the two stations:

|

||||

```

|

||||

127.0.0.1:6379> hget NYC:station:281 name

|

||||

|

||||

"Grand Army Plaza & Central Park S"

|

||||

|

||||

|

||||

|

||||

127.0.0.1:6379> hget NYC:station:3457 name

|

||||

|

||||

"E 58 St & Madison Ave"

|

||||

|

||||

```

|

||||

|

||||

Those keys correspond to the stations identified on the map above. If I want, I can add more flags to the `GEORADIUS` command to get a list of elements, their coordinates, and their distance from our current point:

|

||||

```

|

||||

127.0.0.1:6379> GEORADIUS NYC:stations:location -73.97297 40.76384 500 ft WITHDIST WITHCOORD ASC

|

||||

|

||||

1) 1) "NYC:station:281"

|

||||

|

||||

2) "289.1995"

|

||||

|

||||

3) 1) "-73.97371262311935425"

|

||||

|

||||

2) "40.76439830559216659"

|

||||

|

||||

2) 1) "NYC:station:3457"

|

||||

|

||||

2) "383.1782"

|

||||

|

||||

3) 1) "-73.97209256887435913"

|

||||

|

||||

2) "40.76302702144496237"

|

||||

|

||||

```

|

||||

|

||||

Looking up the names associated with those keys generates an ordered list of stations I can choose from. Redis doesn't provide directions or routing capability, so I use the routing features of my device's OS to plot a course from my current location to the selected bike station.

|

||||

|

||||

The `GEORADIUS` function can be easily implemented inside an API in your favorite development framework to add location functionality to an app.

|

||||

|

||||

### Other query commands

|

||||

|

||||

In addition to the `GEORADIUS` command, Redis provides three other commands for querying data from the index: `GEOPOS`, `GEODIST`, and `GEORADIUSBYMEMBER`.

|

||||

|

||||

The `GEOPOS` command can provide the coordinates for a given element from the geohash. For example, if I know there is a bikesharing station at West 38th and 8th and its ID is 523, then the element name for that station is NYC🚉523. Using Redis, I can find the station's longitude and latitude:

|

||||

```

|

||||

127.0.0.1:6379> geopos NYC:stations:location NYC:station:523

|

||||

|

||||

1) 1) "-73.99138301610946655"

|

||||

|

||||

2) "40.75466497634030105"

|

||||

|

||||

```

|

||||

|

||||

The `GEODIST` command provides the distance between two elements of the index. If I wanted to find the distance between the station at Grand Army Plaza & Central Park South and the station at East 58th St. & Madison, I would issue the following command:

|

||||

```

|

||||

127.0.0.1:6379> GEODIST NYC:stations:location NYC:station:281 NYC:station:3457 ft

|

||||

|

||||

"671.4900"

|

||||

|

||||

```

|

||||

|

||||

Finally, the `GEORADIUSBYMEMBER` command is similar to the `GEORADIUS` command, but instead of taking a set of coordinates, the command takes the name of another member of the index and returns all the members within a given radius centered on that member. To find all the stations within 1,000 feet of the Grand Army Plaza & Central Park South, enter the following:

|

||||

```

|

||||

127.0.0.1:6379> GEORADIUSBYMEMBER NYC:stations:location NYC:station:281 1000 ft WITHDIST

|

||||

|

||||

1) 1) "NYC:station:281"

|

||||

|

||||

2) "0.0000"

|

||||

|

||||

2) 1) "NYC:station:3132"

|

||||

|

||||

2) "793.4223"

|

||||

|

||||

3) 1) "NYC:station:2006"

|

||||

|

||||

2) "911.9752"

|

||||

|

||||

4) 1) "NYC:station:3136"

|

||||

|

||||

2) "940.3399"

|

||||

|

||||

5) 1) "NYC:station:3457"

|

||||

|

||||

2) "671.4900"

|

||||

|

||||

```

|

||||

|

||||

While this example focused on using Python and Redis to parse data and build an index of bikesharing system locations, it can easily be generalized to locate restaurants, public transit, or any other type of place developers want to help users find.

|

||||

|

||||

This article is based on [my presentation][17] at Open Source 101 in Raleigh this year.

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: https://opensource.com/article/18/2/building-bikesharing-application-open-source-tools

|

||||

|

||||

作者:[Tague Griffith][a]

|

||||

译者:[译者ID](https://github.com/译者ID)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:https://opensource.com/users/tague

|

||||

[1]:https://www.python.org/

|

||||

[2]:https://redis.io/

|

||||

[3]:https://www.citibikenyc.com/

|

||||

[4]:https://www.citibikenyc.com/data-sharing-policy

|

||||

[5]:https://github.com/NABSA/gbfs

|

||||

[6]:http://nabsa.net/

|

||||

[7]:https://www.json.org/

|

||||

[8]:https://gist.github.com/tague/5a82d96bcb09ce2a79943ad4c87f6e15

|

||||

[9]:https://github.com/NABSA/gbfs/blob/master/systems.csv

|

||||

[10]:https://redis.io/commands/unlink

|

||||

[11]:https://redis.io/commands/hmset

|

||||

[12]:https://raw.githubusercontent.com/antirez/redis/4.0/00-RELEASENOTES

|

||||

[13]:https://redis.io/topics/data-types#Hashes

|

||||

[14]:https://redis.io/commands#geo

|

||||

[15]:https://redis.io/topics/data-types-intro#redis-sorted-sets

|

||||

[16]:https://twitter.com/swatchthedog

|

||||

[17]:http://opensource101.com/raleigh/talks/building-location-aware-apps-open-source-tools/

|

||||

@ -1,80 +0,0 @@

|

||||

translating---geekpi

|

||||

|

||||

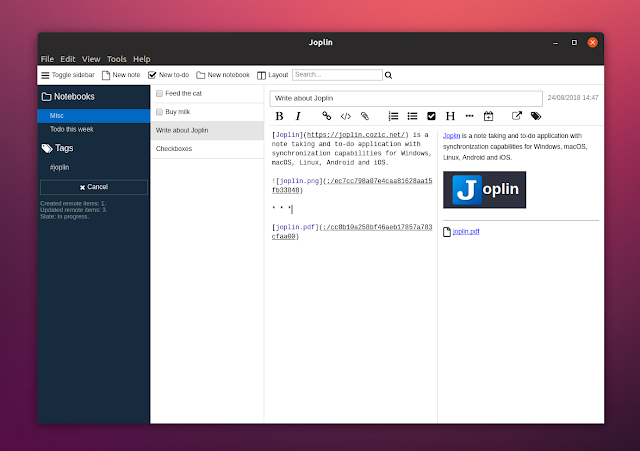

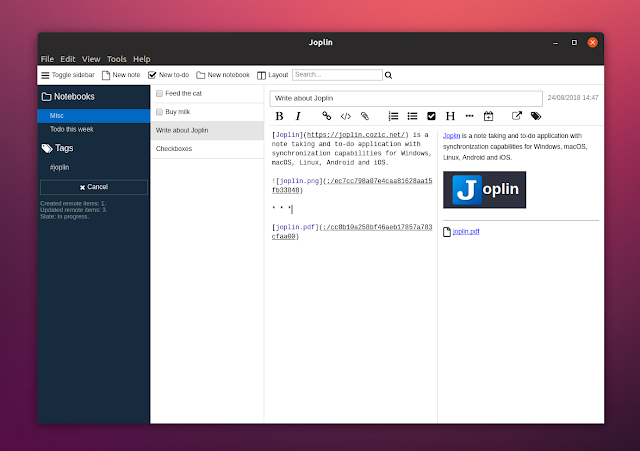

Joplin: Encrypted Open Source Note Taking And To-Do Application

|

||||

======

|

||||

**[Joplin][1] is a free and open source note taking and to-do application available for Linux, Windows, macOS, Android and iOS. Its key features include end-to-end encryption, Markdown support, and synchronization via third-party services like NextCloud, Dropbox, OneDrive or WebDAV.**

|

||||

|

||||

|

||||

|

||||

With Joplin you can write your notes in the **Markdown format** (with support for math notations and checkboxes) and the desktop app comes with 3 views: Markdown code, Markdown preview, or both side by side. **You can add attachments to your notes (with image previews) or edit them in an external Markdown editor** and have them automatically updated in Joplin each time you save the file.

|

||||

|

||||

The application should handle a large number of notes pretty well by allowing you to **organizing notes into notebooks, add tags, and search in notes**. You can also sort notes by updated date, creation date or title. **Each notebook can contain notes, to-do items, or both** , and you can easily add links to other notes (in the desktop app right click on a note and select `Copy Markdown link` , then paste the link in a note).

|

||||

|

||||

**Do-do items in Joplin support alarms** , but this feature didn't work for me on Ubuntu 18.04.

|

||||

|

||||

**Other Joplin features include:**

|

||||

|

||||

* **Optional Web Clipper extension** for Firefox and Chrome (in the Joplin desktop application go to `Tools > Web clipper options` to enable the clipper service and find download links for the Chrome / Firefox extension) which can clip simplified or complete pages, clip a selection or screenshot.

|

||||

|

||||

* **Optional command line client**.

|

||||

|

||||

* **Import Enex files (Evernote export format) and Markdown files**.

|

||||

|

||||

* **Export JEX files (Joplin Export format), PDF and raw files**.

|

||||

|

||||

* **Offline first, so the entire data is always available on the device even without an internet connection**.

|

||||

|

||||

* **Geolocation support**.

|

||||

|

||||

|

||||

|

||||

[![Joplin notes checkboxes link to other note][2]][3]

|

||||

Joplin with hidden sidebar showing checkboxes and a link to another note

|

||||

|

||||

While it doesn't offer as many features as Evernote, Joplin is a robust open source Evernote alternative. Joplin includes all the basic features, and on top of that it's open source software, it includes encryption support, and you also get to choose the service you want to use for synchronization.

|

||||

|

||||

The application was actually designed as an Evernote alternative so it can import complete Evernote notebooks, notes, tags, attachments, and note metadata like the author, creation and updated time, or geolocation.

|

||||

|

||||

Another aspect on which the Joplin development was focused was to avoid being tied to a particular company or service. This is why the application offers multiple synchronization solutions, like NextCloud, Dropbox, oneDrive and WebDav, while also making it easy to support new services. It's also easy to switch from one service to another if you change your mind.

|

||||

|

||||

**I should note that Joplin doesn't use encryption by default and you must enable this from its settings. Go to** `Tools > Encryption options` and enable the Joplin end-to-end encryption from there.

|

||||

|

||||

### Download Joplin

|

||||

|

||||

[Download Joplin][7]

|

||||

|

||||

**Joplin is available for Linux, Windows, macOS, Android and iOS. On Linux, there's an AppImage as well as an Aur package available.**

|

||||

|

||||

To run the Joplin AppImage on Linux, double click it and select `Make executable and run` if your file manager supports this. If not, you'll need to make it executable either using your file manager (should be something like: `right click > Properties > Permissions > Allow executing file as program` , but this may vary depending on the file manager you use), or from the command line:

|

||||

```

|

||||

chmod +x /path/to/Joplin-*-x86_64.AppImage

|

||||

|

||||

```

|

||||

|

||||

Replacing `/path/to/` with the path to where you downloaded Joplin. Now you can double click the Joplin Appimage file to launch it.

|

||||

|

||||

**TIP:** If you integrate Joplin to your menu and `~/.local/share/applications/appimagekit-joplin.desktop`) and adding `StartupWMClass=Joplin` at the end of the file on a new line, without modifying anything else.

|

||||

|

||||

Joplin has a **command line client** that can be [installed using npm][5] (for Debian, Ubuntu or Linux Mint, see [how to install and configure Node.js and npm][6] ).

|

||||

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: https://www.linuxuprising.com/2018/08/joplin-encrypted-open-source-note.html

|

||||

|

||||

作者:[Logix][a]

|

||||

选题:[lujun9972](https://github.com/lujun9972)

|

||||

译者:[译者ID](https://github.com/译者ID)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:https://plus.google.com/118280394805678839070

|

||||

[1]:https://joplin.cozic.net/

|

||||

[2]:https://3.bp.blogspot.com/-y9JKL1F89Vo/W3_0dkZjzQI/AAAAAAAABcI/hQI7GAx6i_sMcel4mF0x4uxBrMO88O59wCLcBGAs/s640/joplin-notes-markdown.png (Joplin notes checkboxes link to other note)

|

||||

[3]:https://3.bp.blogspot.com/-y9JKL1F89Vo/W3_0dkZjzQI/AAAAAAAABcI/hQI7GAx6i_sMcel4mF0x4uxBrMO88O59wCLcBGAs/s1600/joplin-notes-markdown.png

|

||||

[4]:https://github.com/laurent22/joplin/issues/338

|

||||

[5]:https://joplin.cozic.net/terminal/

|

||||

[6]:https://www.linuxuprising.com/2018/04/how-to-install-and-configure-nodejs-and.html

|

||||

|

||||

[7]: https://joplin.cozic.net/#installation

|

||||

@ -1,512 +0,0 @@

|

||||

Translating by qhwdw

|

||||

Lab 6: Network Driver

|

||||

======

|

||||

### Lab 6: Network Driver (default final project)

|

||||

|

||||

**Due on Thursday, December 6, 2018

|

||||

**

|

||||

|

||||

### Introduction

|

||||

|

||||

This lab is the default final project that you can do on your own.

|

||||

|

||||

Now that you have a file system, no self respecting OS should go without a network stack. In this the lab you are going to write a driver for a network interface card. The card will be based on the Intel 82540EM chip, also known as the E1000.

|

||||

|

||||

##### Getting Started

|

||||

|

||||

Use Git to commit your Lab 5 source (if you haven't already), fetch the latest version of the course repository, and then create a local branch called `lab6` based on our lab6 branch, `origin/lab6`:

|

||||

|

||||

```

|

||||

athena% cd ~/6.828/lab

|

||||

athena% add git

|

||||

athena% git commit -am 'my solution to lab5'

|

||||

nothing to commit (working directory clean)

|

||||

athena% git pull

|

||||

Already up-to-date.

|

||||

athena% git checkout -b lab6 origin/lab6

|

||||

Branch lab6 set up to track remote branch refs/remotes/origin/lab6.

|

||||

Switched to a new branch "lab6"

|

||||

athena% git merge lab5

|

||||

Merge made by recursive.

|

||||

fs/fs.c | 42 +++++++++++++++++++

|

||||

1 files changed, 42 insertions(+), 0 deletions(-)

|

||||

athena%

|

||||

```

|

||||

|

||||

The network card driver, however, will not be enough to get your OS hooked up to the Internet. In the new lab6 code, we have provided you with a network stack and a network server. As in previous labs, use git to grab the code for this lab, merge in your own code, and explore the contents of the new `net/` directory, as well as the new files in `kern/`.

|

||||

|

||||

In addition to writing the driver, you will need to create a system call interface to give access to your driver. You will implement missing network server code to transfer packets between the network stack and your driver. You will also tie everything together by finishing a web server. With the new web server you will be able to serve files from your file system.

|

||||

|

||||

Much of the kernel device driver code you will have to write yourself from scratch. This lab provides much less guidance than previous labs: there are no skeleton files, no system call interfaces written in stone, and many design decisions are left up to you. For this reason, we recommend that you read the entire assignment write up before starting any individual exercises. Many students find this lab more difficult than previous labs, so please plan your time accordingly.

|

||||

|

||||

##### Lab Requirements

|

||||

|

||||

As before, you will need to do all of the regular exercises described in the lab and _at least one_ challenge problem. Write up brief answers to the questions posed in the lab and a description of your challenge exercise in `answers-lab6.txt`.

|

||||

|

||||

#### QEMU's virtual network

|

||||

|

||||

We will be using QEMU's user mode network stack since it requires no administrative privileges to run. QEMU's documentation has more about user-net [here][1]. We've updated the makefile to enable QEMU's user-mode network stack and the virtual E1000 network card.

|

||||

|

||||

By default, QEMU provides a virtual router running on IP 10.0.2.2 and will assign JOS the IP address 10.0.2.15. To keep things simple, we hard-code these defaults into the network server in `net/ns.h`.

|

||||

|

||||

While QEMU's virtual network allows JOS to make arbitrary connections out to the Internet, JOS's 10.0.2.15 address has no meaning outside the virtual network running inside QEMU (that is, QEMU acts as a NAT), so we can't connect directly to servers running inside JOS, even from the host running QEMU. To address this, we configure QEMU to run a server on some port on the _host_ machine that simply connects through to some port in JOS and shuttles data back and forth between your real host and the virtual network.

|

||||

|

||||

You will run JOS servers on ports 7 (echo) and 80 (http). To avoid collisions on shared Athena machines, the makefile generates forwarding ports for these based on your user ID. To find out what ports QEMU is forwarding to on your development host, run make which-ports. For convenience, the makefile also provides make nc-7 and make nc-80, which allow you to interact directly with servers running on these ports in your terminal. (These targets only connect to a running QEMU instance; you must start QEMU itself separately.)

|

||||

|

||||

##### Packet Inspection

|

||||

|

||||

The makefile also configures QEMU's network stack to record all incoming and outgoing packets to `qemu.pcap` in your lab directory.

|

||||

|

||||

To get a hex/ASCII dump of captured packets use `tcpdump` like this:

|

||||

|

||||

```

|

||||

tcpdump -XXnr qemu.pcap

|

||||

```

|

||||

|

||||

Alternatively, you can use [Wireshark][2] to graphically inspect the pcap file. Wireshark also knows how to decode and inspect hundreds of network protocols. If you're on Athena, you'll have to use Wireshark's predecessor, ethereal, which is in the sipbnet locker.

|

||||

|

||||

##### Debugging the E1000

|

||||

|

||||

We are very lucky to be using emulated hardware. Since the E1000 is running in software, the emulated E1000 can report to us, in a user readable format, its internal state and any problems it encounters. Normally, such a luxury would not be available to a driver developer writing with bare metal.

|

||||

|

||||

The E1000 can produce a lot of debug output, so you have to enable specific logging channels. Some channels you might find useful are:

|

||||

|

||||

| Flag | Meaning |

|

||||

| --------- | ---------------------------------------------------|

|

||||

| tx | Log packet transmit operations |

|

||||

| txerr | Log transmit ring errors |

|

||||

| rx | Log changes to RCTL |

|

||||

| rxfilter | Log filtering of incoming packets |

|

||||

| rxerr | Log receive ring errors |

|

||||

| unknown | Log reads and writes of unknown registers |

|

||||

| eeprom | Log reads from the EEPROM |

|

||||

| interrupt | Log interrupts and changes to interrupt registers. |

|

||||

|

||||

To enable "tx" and "txerr" logging, for example, use make E1000_DEBUG=tx,txerr ....

|

||||

|

||||

Note: `E1000_DEBUG` flags only work in the 6.828 version of QEMU.

|

||||

|

||||

You can take debugging using software emulated hardware one step further. If you are ever stuck and do not understand why the E1000 is not responding the way you would expect, you can look at QEMU's E1000 implementation in `hw/e1000.c`.

|

||||

|

||||

#### The Network Server

|

||||

|

||||

Writing a network stack from scratch is hard work. Instead, we will be using lwIP, an open source lightweight TCP/IP protocol suite that among many things includes a network stack. You can find more information on lwIP [here][3]. In this assignment, as far as we are concerned, lwIP is a black box that implements a BSD socket interface and has a packet input port and packet output port.

|

||||

|

||||

The network server is actually a combination of four environments:

|

||||

|

||||

* core network server environment (includes socket call dispatcher and lwIP)

|

||||

* input environment

|

||||

* output environment

|

||||

* timer environment

|

||||

|

||||

|

||||

|

||||

The following diagram shows the different environments and their relationships. The diagram shows the entire system including the device driver, which will be covered later. In this lab, you will implement the parts highlighted in green.

|

||||

|

||||

![Network server architecture][4]

|

||||

|

||||

##### The Core Network Server Environment

|

||||

|

||||

The core network server environment is composed of the socket call dispatcher and lwIP itself. The socket call dispatcher works exactly like the file server. User environments use stubs (found in `lib/nsipc.c`) to send IPC messages to the core network environment. If you look at `lib/nsipc.c` you will see that we find the core network server the same way we found the file server: `i386_init` created the NS environment with NS_TYPE_NS, so we scan `envs`, looking for this special environment type. For each user environment IPC, the dispatcher in the network server calls the appropriate BSD socket interface function provided by lwIP on behalf of the user.

|

||||

|

||||

Regular user environments do not use the `nsipc_*` calls directly. Instead, they use the functions in `lib/sockets.c`, which provides a file descriptor-based sockets API. Thus, user environments refer to sockets via file descriptors, just like how they referred to on-disk files. A number of operations (`connect`, `accept`, etc.) are specific to sockets, but `read`, `write`, and `close` go through the normal file descriptor device-dispatch code in `lib/fd.c`. Much like how the file server maintained internal unique ID's for all open files, lwIP also generates unique ID's for all open sockets. In both the file server and the network server, we use information stored in `struct Fd` to map per-environment file descriptors to these unique ID spaces.

|

||||

|

||||

Even though it may seem that the IPC dispatchers of the file server and network server act the same, there is a key difference. BSD socket calls like `accept` and `recv` can block indefinitely. If the dispatcher were to let lwIP execute one of these blocking calls, the dispatcher would also block and there could only be one outstanding network call at a time for the whole system. Since this is unacceptable, the network server uses user-level threading to avoid blocking the entire server environment. For every incoming IPC message, the dispatcher creates a thread and processes the request in the newly created thread. If the thread blocks, then only that thread is put to sleep while other threads continue to run.

|

||||

|

||||

In addition to the core network environment there are three helper environments. Besides accepting messages from user applications, the core network environment's dispatcher also accepts messages from the input and timer environments.

|

||||

|

||||

##### The Output Environment

|

||||

|

||||

When servicing user environment socket calls, lwIP will generate packets for the network card to transmit. LwIP will send each packet to be transmitted to the output helper environment using the `NSREQ_OUTPUT` IPC message with the packet attached in the page argument of the IPC message. The output environment is responsible for accepting these messages and forwarding the packet on to the device driver via the system call interface that you will soon create.

|

||||

|

||||

##### The Input Environment

|

||||

|

||||

Packets received by the network card need to be injected into lwIP. For every packet received by the device driver, the input environment pulls the packet out of kernel space (using kernel system calls that you will implement) and sends the packet to the core server environment using the `NSREQ_INPUT` IPC message.

|

||||

|

||||

The packet input functionality is separated from the core network environment because JOS makes it hard to simultaneously accept IPC messages and poll or wait for a packet from the device driver. We do not have a `select` system call in JOS that would allow environments to monitor multiple input sources to identify which input is ready to be processed.

|

||||

|

||||

If you take a look at `net/input.c` and `net/output.c` you will see that both need to be implemented. This is mainly because the implementation depends on your system call interface. You will write the code for the two helper environments after you implement the driver and system call interface.

|

||||

|

||||

##### The Timer Environment

|

||||

|

||||

The timer environment periodically sends messages of type `NSREQ_TIMER` to the core network server notifying it that a timer has expired. The timer messages from this thread are used by lwIP to implement various network timeouts.

|

||||

|

||||

### Part A: Initialization and transmitting packets

|

||||

|

||||

Your kernel does not have a notion of time, so we need to add it. There is currently a clock interrupt that is generated by the hardware every 10ms. On every clock interrupt we can increment a variable to indicate that time has advanced by 10ms. This is implemented in `kern/time.c`, but is not yet fully integrated into your kernel.

|

||||

|

||||

```

|

||||

Exercise 1. Add a call to `time_tick` for every clock interrupt in `kern/trap.c`. Implement `sys_time_msec` and add it to `syscall` in `kern/syscall.c` so that user space has access to the time.

|

||||

```

|

||||

|

||||

Use make INIT_CFLAGS=-DTEST_NO_NS run-testtime to test your time code. You should see the environment count down from 5 in 1 second intervals. The "-DTEST_NO_NS" disables starting the network server environment because it will panic at this point in the lab.

|

||||

|

||||

#### The Network Interface Card

|

||||

|

||||

Writing a driver requires knowing in depth the hardware and the interface presented to the software. The lab text will provide a high-level overview of how to interface with the E1000, but you'll need to make extensive use of Intel's manual while writing your driver.

|

||||

|

||||

```

|

||||

Exercise 2. Browse Intel's [Software Developer's Manual][5] for the E1000. This manual covers several closely related Ethernet controllers. QEMU emulates the 82540EM.

|

||||

|

||||

You should skim over chapter 2 now to get a feel for the device. To write your driver, you'll need to be familiar with chapters 3 and 14, as well as 4.1 (though not 4.1's subsections). You'll also need to use chapter 13 as reference. The other chapters mostly cover components of the E1000 that your driver won't have to interact with. Don't worry about the details right now; just get a feel for how the document is structured so you can find things later.

|

||||

|

||||

While reading the manual, keep in mind that the E1000 is a sophisticated device with many advanced features. A working E1000 driver only needs a fraction of the features and interfaces that the NIC provides. Think carefully about the easiest way to interface with the card. We strongly recommend that you get a basic driver working before taking advantage of the advanced features.

|

||||

```

|

||||

|

||||

##### PCI Interface

|

||||

|

||||

The E1000 is a PCI device, which means it plugs into the PCI bus on the motherboard. The PCI bus has address, data, and interrupt lines, and allows the CPU to communicate with PCI devices and PCI devices to read and write memory. A PCI device needs to be discovered and initialized before it can be used. Discovery is the process of walking the PCI bus looking for attached devices. Initialization is the process of allocating I/O and memory space as well as negotiating the IRQ line for the device to use.

|

||||

|

||||

We have provided you with PCI code in `kern/pci.c`. To perform PCI initialization during boot, the PCI code walks the PCI bus looking for devices. When it finds a device, it reads its vendor ID and device ID and uses these two values as a key to search the `pci_attach_vendor` array. The array is composed of `struct pci_driver` entries like this:

|

||||

|

||||

```

|

||||

struct pci_driver {

|

||||

uint32_t key1, key2;

|

||||

int (*attachfn) (struct pci_func *pcif);

|

||||

};

|

||||

```

|

||||

|

||||

If the discovered device's vendor ID and device ID match an entry in the array, the PCI code calls that entry's `attachfn` to perform device initialization. (Devices can also be identified by class, which is what the other driver table in `kern/pci.c` is for.)

|

||||

|

||||

The attach function is passed a _PCI function_ to initialize. A PCI card can expose multiple functions, though the E1000 exposes only one. Here is how we represent a PCI function in JOS:

|

||||

|

||||

```

|

||||

struct pci_func {

|

||||

struct pci_bus *bus;

|

||||

|

||||

uint32_t dev;

|

||||

uint32_t func;

|

||||

|

||||

uint32_t dev_id;

|

||||

uint32_t dev_class;

|

||||

|

||||

uint32_t reg_base[6];

|

||||

uint32_t reg_size[6];

|

||||

uint8_t irq_line;

|

||||

};

|

||||

```

|

||||

|

||||

The above structure reflects some of the entries found in Table 4-1 of Section 4.1 of the developer manual. The last three entries of `struct pci_func` are of particular interest to us, as they record the negotiated memory, I/O, and interrupt resources for the device. The `reg_base` and `reg_size` arrays contain information for up to six Base Address Registers or BARs. `reg_base` stores the base memory addresses for memory-mapped I/O regions (or base I/O ports for I/O port resources), `reg_size` contains the size in bytes or number of I/O ports for the corresponding base values from `reg_base`, and `irq_line` contains the IRQ line assigned to the device for interrupts. The specific meanings of the E1000 BARs are given in the second half of table 4-2.

|

||||

|

||||

When the attach function of a device is called, the device has been found but not yet _enabled_. This means that the PCI code has not yet determined the resources allocated to the device, such as address space and an IRQ line, and, thus, the last three elements of the `struct pci_func` structure are not yet filled in. The attach function should call `pci_func_enable`, which will enable the device, negotiate these resources, and fill in the `struct pci_func`.

|

||||

|

||||

```

|

||||

Exercise 3. Implement an attach function to initialize the E1000. Add an entry to the `pci_attach_vendor` array in `kern/pci.c` to trigger your function if a matching PCI device is found (be sure to put it before the `{0, 0, 0}` entry that mark the end of the table). You can find the vendor ID and device ID of the 82540EM that QEMU emulates in section 5.2. You should also see these listed when JOS scans the PCI bus while booting.

|

||||

|

||||

For now, just enable the E1000 device via `pci_func_enable`. We'll add more initialization throughout the lab.

|

||||

|

||||

We have provided the `kern/e1000.c` and `kern/e1000.h` files for you so that you do not need to mess with the build system. They are currently blank; you need to fill them in for this exercise. You may also need to include the `e1000.h` file in other places in the kernel.

|

||||

|

||||

When you boot your kernel, you should see it print that the PCI function of the E1000 card was enabled. Your code should now pass the `pci attach` test of make grade.

|

||||

```

|

||||

|

||||

##### Memory-mapped I/O

|

||||

|

||||

Software communicates with the E1000 via _memory-mapped I/O_ (MMIO). You've seen this twice before in JOS: both the CGA console and the LAPIC are devices that you control and query by writing to and reading from "memory". But these reads and writes don't go to DRAM; they go directly to these devices.

|

||||

|

||||

`pci_func_enable` negotiates an MMIO region with the E1000 and stores its base and size in BAR 0 (that is, `reg_base[0]` and `reg_size[0]`). This is a range of _physical memory addresses_ assigned to the device, which means you'll have to do something to access it via virtual addresses. Since MMIO regions are assigned very high physical addresses (typically above 3GB), you can't use `KADDR` to access it because of JOS's 256MB limit. Thus, you'll have to create a new memory mapping. We'll use the area above MMIOBASE (your `mmio_map_region` from lab 4 will make sure we don't overwrite the mapping used by the LAPIC). Since PCI device initialization happens before JOS creates user environments, you can create the mapping in `kern_pgdir` and it will always be available.

|

||||

|

||||

```

|

||||

Exercise 4. In your attach function, create a virtual memory mapping for the E1000's BAR 0 by calling `mmio_map_region` (which you wrote in lab 4 to support memory-mapping the LAPIC).

|

||||

|

||||

You'll want to record the location of this mapping in a variable so you can later access the registers you just mapped. Take a look at the `lapic` variable in `kern/lapic.c` for an example of one way to do this. If you do use a pointer to the device register mapping, be sure to declare it `volatile`; otherwise, the compiler is allowed to cache values and reorder accesses to this memory.

|

||||

|

||||

To test your mapping, try printing out the device status register (section 13.4.2). This is a 4 byte register that starts at byte 8 of the register space. You should get `0x80080783`, which indicates a full duplex link is up at 1000 MB/s, among other things.

|

||||

```

|