@@ -48,8 +49,6 @@

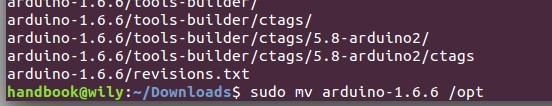

[root@centos-007 ~]# yum install java-1.7.0-openjdk-1.7.0.79-2.5.5.2.el7_1

-----------

-

[root@centos-007 ~]# yum install java-1.7.0-openjdk-devel-1.7.0.85-2.6.1.2.el7_1.x86_64

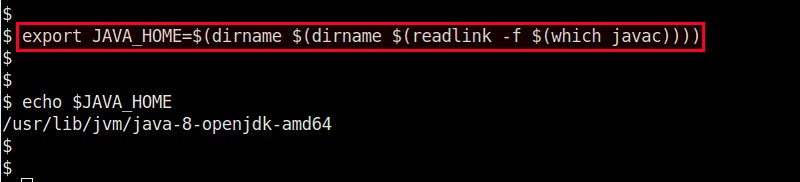

安装完 java 和它的所有依赖后,运行下面的命令设置 JAVA_HOME 环境变量。

@@ -61,8 +60,6 @@

[root@centos-007 ~]# java -version

-----------

-

java version "1.7.0_79"

OpenJDK Runtime Environment (rhel-2.5.5.2.el7_1-x86_64 u79-b14)

OpenJDK 64-Bit Server VM (build 24.79-b02, mixed mode)

@@ -71,7 +68,7 @@

### 安装 MySQL 5.6.x ###

-如果的机器上有其它的 MySQL,建议你先卸载它们并安装这个版本,或者升级它们的模式到指定的版本。因为 Zephyr 前提要求这个指定的主要/最小 MySQL (5.6.x)版本要有 root 用户名。

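+If there are other MySQL installations on your machine, it is recommended that you remove them first and install this version, or upgrade their schemas to the specified version, because Zephyr requires this specific 5.6.x version of MySQL, with a root user name, as a prerequisite.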

可以按照下面的步骤在 CentOS-7.1 上安装 MySQL 5.6 :

@@ -93,10 +90,7 @@

[root@centos-007 ~]# service mysqld start

[root@centos-007 ~]# service mysqld status

-对于全新安装的 MySQL 服务器,MySQL root 用户的密码为空。

-为了安全起见,我们应该重置 MySQL root 用户的密码。

-

-用自动生成的空密码连接到 MySQL 并更改 root 用户密码。

+对于全新安装的 MySQL 服务器,MySQL root 用户的密码为空。为了安全起见,我们应该重置 MySQL root 用户的密码。用自动生成的空密码连接到 MySQL 并更改 root 用户密码。

[root@centos-007 ~]# mysql

mysql> SET PASSWORD FOR 'root'@'localhost' = PASSWORD('your_password');

@@ -224,7 +218,7 @@ via: http://linoxide.com/linux-how-to/setup-zephyr-tool-centos-7-x/

作者:[Kashif Siddique][a]

译者:[ictlyh](http://mutouxiaogui.cn/blog/)

-校对:[校对者ID](https://github.com/校对者ID)

+校对:[wxy](https://github.com/wxy)

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

diff --git a/published/20150831 Linux workstation security checklist.md b/published/20150831 Linux workstation security checklist.md

new file mode 100644

index 0000000000..15daaa5382

--- /dev/null

+++ b/published/20150831 Linux workstation security checklist.md

@@ -0,0 +1,509 @@

+来自 Linux 基金会内部的《Linux 工作站安全检查清单》

+================================================================================

+

+### 目标受众

+

+ 这是一套 Linux 基金会为其系统管理员提供的推荐规范。

+

+这个文档用于帮助那些使用 Linux 工作站来访问和管理项目的 IT 设施的系统管理员团队。

+

+如果你的系统管理员是远程员工,你也许可以使用这套指导方针确保系统管理员的系统可以通过核心安全需求,降低你的IT 平台成为攻击目标的风险。

+

+即使你的系统管理员不是远程员工,很多人也会在工作环境中通过便携笔记本完成工作,或者在家中设置系统以便在业余时间或紧急时刻访问工作平台。不论发生何种情况,你都能调整这个推荐规范来适应你的环境。

+

+

+### 限制

+

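+However, this is not an exhaustive "workstation hardening" document. It is an attempt at a set of baseline recommendations that avoids the most glaring security errors without introducing too much inconvenience. Some readers will find this document too paranoid, while others will think it barely scratches the surface. Security is like driving on the highway: anyone going slower than you is an idiot, and anyone going faster is a maniac. These guidelines are merely a basic set of core safety rules; they are neither exhaustive nor a replacement for experience, vigilance, and common sense.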

+

+我们分享这篇文档是为了[将开源协作的优势带到 IT 策略文献资料中][18]。如果你发现它有用,我们希望你可以将它用到你自己团体中,并分享你的改进,对它的完善做出你的贡献。

+

+### 结构

+

+每一节都分为两个部分:

+

+- 核对适合你项目的需求

+- 形式不定的提示内容,解释了为什么这么做

+

+#### 严重级别

+

+在清单的每一个项目都包括严重级别,我们希望这些能帮助指导你的决定:

+

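+- **ESSENTIAL (关键)** items should definitely be high on the consideration list. If they are not implemented, they will introduce high risks to your workstation security.

+- **NICE (中等)** to have items will improve the overall security, but will affect how you interact with your work environment, and will probably require learning new habits or unlearning old ones.

+- **PARANOID (低等)** is reserved for items we feel will significantly improve your workstation security, but will require a lot of adjustment to the way you interact with your operating system.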

+

+记住,这些只是参考。如果你觉得这些严重级别不能反映你的工程对安全的承诺,你应该调整它们为你所合适的。

+

+## 选择正确的硬件

+

+我们并不会要求管理员使用一个特殊供应商或者一个特殊的型号,所以这一节提供的是选择工作系统时的核心注意事项。

+

+### 检查清单

+

+- [ ] 系统支持安全启动(SecureBoot) _(关键)_

+- [ ] 系统没有火线(Firewire),雷电(thunderbolt)或者扩展卡(ExpressCard)接口 _(中等)_

+- [ ] 系统有 TPM 芯片 _(中等)_

+

+### 注意事项

+

+#### 安全启动(SecureBoot)

+

+尽管它还有争议,但是安全引导能够预防很多针对工作站的攻击(Rootkits、“Evil Maid”,等等),而没有太多额外的麻烦。它并不能阻止真正专门的攻击者,加上在很大程度上,国家安全机构有办法应对它(可能是通过设计),但是有安全引导总比什么都没有强。

+

+作为选择,你也许可以部署 [Anti Evil Maid][1] 提供更多健全的保护,以对抗安全引导所需要阻止的攻击类型,但是它需要更多部署和维护的工作。

+

+#### 系统没有火线(Firewire),雷电(thunderbolt)或者扩展卡(ExpressCard)接口

+

+火线是一个标准,其设计上允许任何连接的设备能够完全地直接访问你的系统内存(参见[维基百科][2])。雷电接口和扩展卡同样有问题,虽然一些后来部署的雷电接口试图限制内存访问的范围。如果你没有这些系统端口,那是最好的,但是它并不严重,它们通常可以通过 UEFI 关闭或内核本身禁用。

+

+#### TPM 芯片

+

+可信平台模块(Trusted Platform Module ,TPM)是主板上的一个与核心处理器单独分开的加密芯片,它可以用来增加平台的安全性(比如存储全盘加密的密钥),不过通常不会用于日常的平台操作。充其量,这个是一个有则更好的东西,除非你有特殊需求,需要使用 TPM 增加你的工作站安全性。

+

+## 预引导环境

+

+这是你开始安装操作系统前的一系列推荐规范。

+

+### 检查清单

+

+- [ ] 使用 UEFI 引导模式(不是传统 BIOS)_(关键)_

+- [ ] 进入 UEFI 配置需要使用密码 _(关键)_

+- [ ] 使用安全引导 _(关键)_

+- [ ] 启动系统需要 UEFI 级别密码 _(中等)_

+

+### 注意事项

+

+#### UEFI 和安全引导

+

+UEFI 尽管有缺点,还是提供了很多传统 BIOS 没有的好功能,比如安全引导。大多数现代的系统都默认使用 UEFI 模式。

+

+确保进入 UEFI 配置模式要使用高强度密码。注意,很多厂商默默地限制了你使用密码长度,所以相比长口令你也许应该选择高熵值的短密码(关于密码短语请参考下面内容)。

+

+基于你选择的 Linux 发行版,你也许需要、也许不需要按照 UEFI 的要求,来导入你的发行版的安全引导密钥,从而允许你启动该发行版。很多发行版已经与微软合作,用大多数厂商所支持的密钥给它们已发布的内核签名,因此避免了你必须处理密钥导入的麻烦。

+

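+As an extra measure, you can require that someone enter a password before they can even get to the boot partition and try something bad there. To prevent shoulder-surfing, this password should be different from your UEFI management password. If you shut down and start up a lot, you may choose not to bother with this, as you will already have to enter a LUKS passphrase (LUKS is covered below) and skipping it saves you a few extra keystrokes.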

+

+## 发行版选择注意事项

+

+很有可能你会坚持一个广泛使用的发行版如 Fedora,Ubuntu,Arch,Debian,或它们的一个类似发行版。无论如何,以下是你选择使用发行版应该考虑的。

+

+### 检查清单

+

+- [ ] 拥有一个强健的 MAC/RBAC 系统(SELinux/AppArmor/Grsecurity) _(关键)_

+- [ ] 发布安全公告 _(关键)_

+- [ ] 提供及时的安全补丁 _(关键)_

+- [ ] 提供软件包的加密验证 _(关键)_

+- [ ] 完全支持 UEFI 和安全引导 _(关键)_

+- [ ] 拥有健壮的原生全磁盘加密支持 _(关键)_

+

+### 注意事项

+

+#### SELinux,AppArmor,和 GrSecurity/PaX

+

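+Mandatory Access Controls (MAC) or Role-Based Access Controls (RBAC) are an extension of the basic user/group security mechanism used in legacy POSIX systems. Most distributions these days either already come bundled with a MAC/RBAC implementation (Fedora, Ubuntu), or provide a mechanism to add it via an optional post-installation step (Gentoo, Arch, Debian). Obviously, it is highly advised that you pick a distribution that comes pre-configured with a MAC/RBAC system, but if you have strong feelings about a distribution that does not enable one by default, plan to configure it after installation.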

+

+应该坚决避免使用不带任何 MAC/RBAC 机制的发行版,像传统的 POSIX 基于用户和组的安全在当今时代应该算是考虑不足。如果你想建立一个 MAC/RBAC 工作站,通常认为 AppArmor 和 PaX 比 SELinux 更容易掌握。此外,在工作站上,很少有或者根本没有对外监听的守护进程,而针对用户运行的应用造成的最高风险,GrSecurity/PaX _可能_ 会比SELinux 提供更多的安全便利。

+

+#### 发行版安全公告

+

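+Most of the widely used distributions have a mechanism to deliver security bulletins to their users, but if you are fond of something esoteric, check whether the developers have a documented mechanism for alerting users about security vulnerabilities and patches. The absence of such a mechanism is a major warning sign that the distribution is not mature enough to be considered for a primary admin workstation.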

+

+#### 及时和可靠的安全更新

+

+多数常用的发行版提供定期安全更新,但应该经常检查以确保及时提供关键包更新。因此应避免使用附属发行版(spin-offs)和“社区重构”,因为它们必须等待上游发行版先发布,它们经常延迟发布安全更新。

+

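+You will be hard-pressed to find a distribution that does not use cryptographic signatures on packages, on update metadata, or on both. That being said, fairly widely used distributions have been known to go for years before introducing this basic security measure (Arch, I'm looking at you), so this is worth checking.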

+

+#### 发行版支持 UEFI 和安全引导

+

+检查发行版是否支持 UEFI 和安全引导。查明它是否需要导入额外的密钥或是否要求启动内核有一个已经被系统厂商信任的密钥签名(例如跟微软达成合作)。一些发行版不支持 UEFI 或安全启动,但是提供了替代品来确保防篡改(tamper-proof)或防破坏(tamper-evident)引导环境([Qubes-OS][3] 使用 Anti Evil Maid,前面提到的)。如果一个发行版不支持安全引导,也没有防止引导级别攻击的机制,还是看看别的吧。

+

+#### 全磁盘加密

+

+全磁盘加密是保护静止数据的要求,大多数发行版都支持。作为一个选择方案,带有自加密硬盘的系统也可以用(通常通过主板 TPM 芯片实现),并提供了类似安全级别而且操作更快,但是花费也更高。

+

+## 发行版安装指南

+

+所有发行版都是不同的,但是也有一些一般原则:

+

+### 检查清单

+

+- [ ] 使用健壮的密码全磁盘加密(LUKS) _(关键)_

+- [ ] 确保交换分区也加密了 _(关键)_

+- [ ] 确保引导程序设置了密码(可以和LUKS一样) _(关键)_

+- [ ] 设置健壮的 root 密码(可以和LUKS一样) _(关键)_

+- [ ] 使用无特权账户登录,作为管理员组的一部分 _(关键)_

+- [ ] 设置健壮的用户登录密码,不同于 root 密码 _(关键)_

+

+### 注意事项

+

+#### 全磁盘加密

+

+除非你正在使用自加密硬盘,配置你的安装程序完整地加密所有存储你的数据与系统文件的磁盘很重要。简单地通过自动挂载的 cryptfs 环(loop)文件加密用户目录还不够(说你呢,旧版 Ubuntu),这并没有给系统二进制文件或交换分区提供保护,它可能包含大量的敏感数据。推荐的加密策略是加密 LVM 设备,以便在启动过程中只需要一个密码。

+

+`/boot`分区将一直保持非加密,因为引导程序需要在调用 LUKS/dm-crypt 前能引导内核自身。一些发行版支持加密的`/boot`分区,比如 [Arch][16],可能别的发行版也支持,但是似乎这样增加了系统更新的复杂度。如果你的发行版并没有原生支持加密`/boot`也不用太在意,内核镜像本身并没有什么隐私数据,它会通过安全引导的加密签名检查来防止被篡改。

+

+#### 选择一个好密码

+

+现代的 Linux 系统没有限制密码口令长度,所以唯一的限制是你的偏执和倔强。如果你要启动你的系统,你将大概至少要输入两个不同的密码:一个解锁 LUKS ,另一个登录,所以长密码将会使你老的更快。最好从丰富或混合的词汇中选择2-3个单词长度,容易输入的密码。

+

+优秀密码例子(是的,你可以使用空格):

+

+- nature abhors roombas

+- 12 in-flight Jebediahs

+- perdon, tengo flatulence

+

+Weak passphrases are combinations of words you are likely to see in published works or anywhere else in real life, for example:

+

+- Mary had a little lamb

+- you're a wizard, Harry

+- to infinity and beyond

+

+If you prefer that to typing passphrases, you can also stick with non-vocabulary passwords that are at least 10-12 characters long.

+

+除非你担心物理安全,你可以写下你的密码,并保存在一个远离你办公桌的安全的地方。
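
As a rough illustration of the "2-3 words from a rich vocabulary" advice above, the sketch below draws a few random words from a word list with `shuf`. The `passphrase` helper and the dictionary path are assumptions made for this example; they are not part of the original checklist, and any sufficiently large word list would do.

```shell
# passphrase WORDLIST [COUNT]
# Print COUNT (default 3) randomly chosen lines from WORDLIST on one line.
# Hypothetical helper, not part of the original checklist.
passphrase() {
    shuf -n "${2:-3}" --random-source=/dev/urandom "$1" | paste -sd ' ' -
}

# Example call; the dictionary path is an assumption and varies by distribution.
if [ -r /usr/share/dict/words ]; then
    passphrase /usr/share/dict/words 3
fi
```

A larger word list makes each word harder to guess, which is why the text recommends a rich or mixed vocabulary over well-known phrases.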

+

+#### Root,用户密码和管理组

+

+我们建议,你的 root 密码和你的 LUKS 加密使用同样的密码(除非你共享你的笔记本给信任的人,让他应该能解锁设备,但是不应该能成为 root 用户)。如果你是笔记本电脑的唯一用户,那么你的 root 密码与你的 LUKS 密码不同是没有安全优势上的意义的。通常,你可以使用同样的密码在你的 UEFI 管理,磁盘加密,和 root 登录中 -- 知道这些任意一个都会让攻击者完全控制您的系统,在单用户工作站上使这些密码不同,没有任何安全益处。

+

+你应该有一个不同的,但同样强健的常规用户帐户密码用来日常工作。这个用户应该是管理组用户(例如`wheel`或者类似,根据发行版不同),允许你执行`sudo`来提升权限。

+

+换句话说,如果在你的工作站只有你一个用户,你应该有两个独特的、强健(robust)而强壮(strong)的密码需要记住:

+

+**管理级别**,用在以下方面:

+

+- UEFI 管理

+- 引导程序(GRUB)

+- 磁盘加密(LUKS)

+- 工作站管理(root 用户)

+

+**用户级别**,用在以下:

+

+- 用户登录和 sudo

+- 密码管理器的主密码

+

+很明显,如果有一个令人信服的理由的话,它们全都可以不同。

+

+## 安装后的加固

+

+安装后的安全加固在很大程度上取决于你选择的发行版,所以在一个像这样的通用文档中提供详细说明是徒劳的。然而,这里有一些你应该采取的步骤:

+

+### 检查清单

+

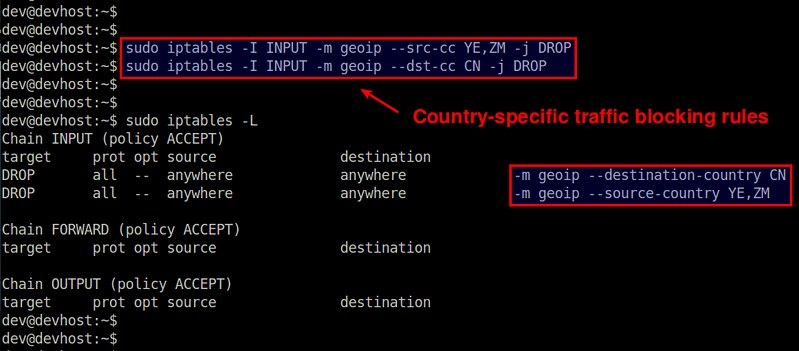

+- [ ] 在全局范围内禁用火线和雷电模块 _(关键)_

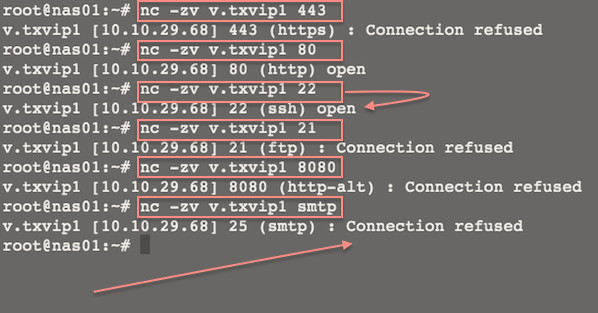

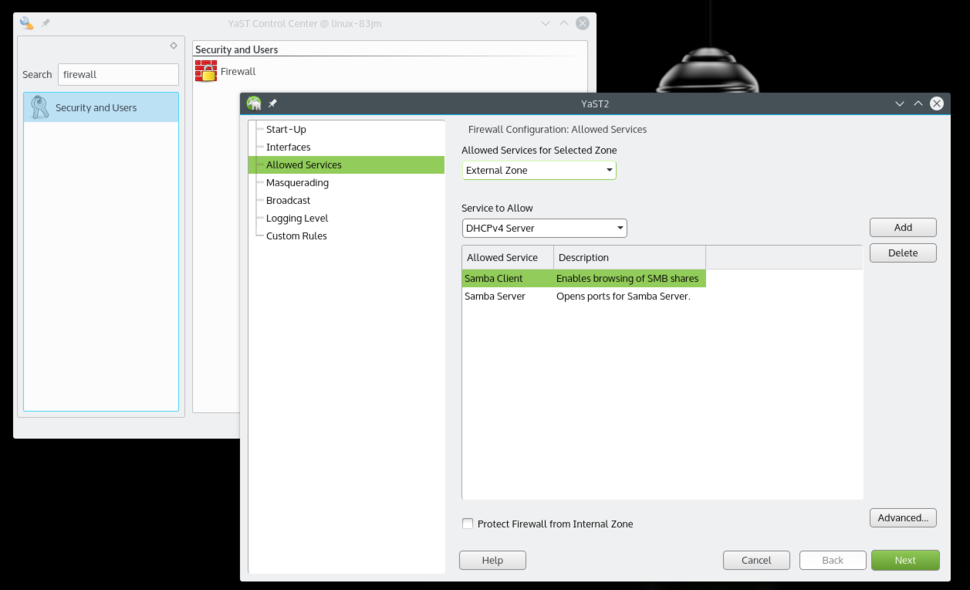

+- [ ] 检查你的防火墙,确保过滤所有传入端口 _(关键)_

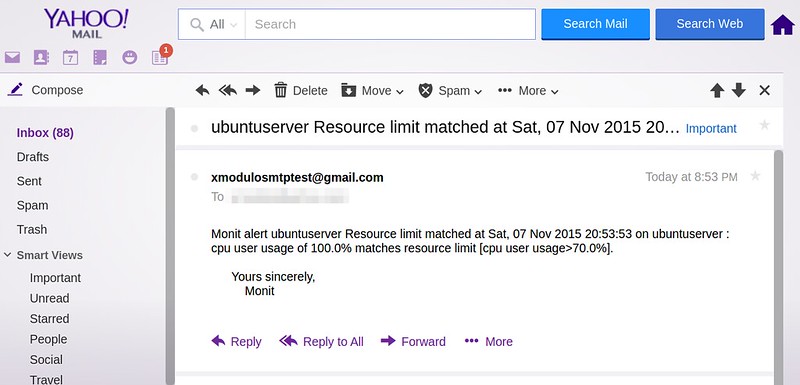

+- [ ] 确保 root 邮件转发到一个你可以收到的账户 _(关键)_

+- [ ] 建立一个系统自动更新任务,或更新提醒 _(中等)_

+- [ ] 检查以确保 sshd 服务默认情况下是禁用的 _(中等)_

+- [ ] 配置屏幕保护程序在一段时间的不活动后自动锁定 _(中等)_

+- [ ] 设置 logwatch _(中等)_

+- [ ] 安装使用 rkhunter _(中等)_

+- [ ] 安装一个入侵检测系统(Intrusion Detection System) _(中等)_

+

+### 注意事项

+

+#### 将模块列入黑名单

+

+To blacklist both the Firewire and Thunderbolt modules, add the following lines to the file `/etc/modprobe.d/blacklist-dma.conf`:

+

+ blacklist firewire-core

+ blacklist thunderbolt

+

+重启后的这些模块将被列入黑名单。这样做是无害的,即使你没有这些端口(但也不做任何事)。
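
The step above can be sketched as a small script. This is only an illustration: the `BLACKLIST_CONF` variable is an assumption introduced here so the sketch can be tried on a scratch file without root, and it is not part of the original checklist.

```shell
# Assumption: BLACKLIST_CONF defaults to a scratch file so the sketch can be
# tried without root. For real use, run as root with
# BLACKLIST_CONF=/etc/modprobe.d/blacklist-dma.conf
BLACKLIST_CONF="${BLACKLIST_CONF:-$(mktemp)}"

# Write one "blacklist <module>" line per module named in the text above.
printf 'blacklist %s\n' firewire-core thunderbolt > "$BLACKLIST_CONF"

# Show the result; after a reboot, `lsmod` should list neither module.
cat "$BLACKLIST_CONF"
```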

+

+#### Root 邮件

+

+默认的 root 邮件只是存储在系统基本上没人读过。确保你设置了你的`/etc/aliases`来转发 root 邮件到你确实能读取的邮箱,否则你也许错过了重要的系统通知和报告:

+

+ # Person who should get root's mail

+ root: bob@example.com

+

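+Run `newaliases` after this edit and test it to make sure that the mail actually gets delivered, as some email providers will reject email coming from nonexistent or non-routable domain names. If that is the case, you will need to adjust your mail forwarding configuration until it actually works.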
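
A minimal sketch of the alias edit, assuming a hypothetical `set_root_alias` helper and a scratch file in place of `/etc/aliases` (both are introduced here for illustration only):

```shell
# set_root_alias FILE ADDRESS
# Replace any existing "root:" entry in an aliases-style FILE with ADDRESS.
# Hypothetical helper for illustration.
set_root_alias() {
    # Keep every line that is not a root alias (grep exits 1 if none remain).
    grep -v '^root:' "$1" > "$1.tmp" || true
    printf 'root: %s\n' "$2" >> "$1.tmp"
    mv "$1.tmp" "$1"
}

# Try it on a scratch file; for real use, run as root against /etc/aliases
# and then run `newaliases`.
ALIASES_FILE="${ALIASES_FILE:-$(mktemp)}"
set_root_alias "$ALIASES_FILE" 'bob@example.com'
cat "$ALIASES_FILE"
```

Replacing the entry rather than appending keeps the file from accumulating several conflicting `root:` lines when the script is run more than once.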

+

+#### 防火墙,sshd,和监听进程

+

+默认的防火墙设置将取决于您的发行版,但是大多数都允许`sshd`端口连入。除非你有一个令人信服的合理理由允许连入 ssh,你应该过滤掉它,并禁用 sshd 守护进程。

+

+ systemctl disable sshd.service

+ systemctl stop sshd.service

+

+如果你需要使用它,你也可以临时启动它。

+

+通常,你的系统不应该有任何侦听端口,除了响应 ping 之外。这将有助于你对抗网络级的零日漏洞利用。
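
One way to check for unexpected listeners is to filter the output of `ss`. The sketch below assumes the iproute2 `ss` tool is installed; the `non_local_listeners` helper is a hypothetical name introduced for this example.

```shell
# non_local_listeners
# Read `ss -H -tuln` style output on stdin and print the protocol and local
# address:port of every socket that is not bound to a loopback address.
# Hypothetical helper; column 5 of `ss -tuln` output is the local address.
non_local_listeners() {
    awk '$5 !~ /^(127\.|\[::1\])/ { print $1, $5 }'
}

# On a live system (assumes the iproute2 `ss` tool is installed):
if command -v ss >/dev/null 2>&1; then
    ss -H -tuln | non_local_listeners
fi
```

On a workstation configured as described above, this should ideally print nothing.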

+

+#### 自动更新或通知

+

+建议打开自动更新,除非你有一个非常好的理由不这么做,如果担心自动更新将使您的系统无法使用(以前发生过,所以这种担心并非杞人忧天)。至少,你应该启用自动通知可用的更新。大多数发行版已经有这个服务自动运行,所以你不需要做任何事。查阅你的发行版文档了解更多。

+

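+You should apply all outstanding errata as soon as possible, even if something is not specifically labeled as a "security update" or has no associated CVE number. All bugs have the potential of being security bugs, and erring on the side of newer, unknown bugs is generally a safer strategy than sticking with old, known ones.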

+

+#### 监控日志

+

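+You should have a keen interest in what happens on your system. For this reason, you should install `logwatch` and configure it to send nightly activity reports of everything that happens on your system. This will not stop a dedicated attacker, but it is a good safety-net feature to have in place.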

+

+注意,许多 systemd 发行版将不再自动安装一个“logwatch”所需的 syslog 服务(因为 systemd 会放到它自己的日志中),所以你需要安装和启用“rsyslog”来确保在使用 logwatch 之前你的 /var/log 不是空的。

+

+#### Rkhunter 和 IDS

+

+安装`rkhunter`和一个类似`aide`或者`tripwire`入侵检测系统(IDS)并不是那么有用,除非你确实理解它们如何工作,并采取必要的步骤来设置正确(例如,保证数据库在外部介质,从可信的环境运行检测,记住执行系统更新和配置更改后要刷新散列数据库,等等)。如果你不愿在你的工作站执行这些步骤,并调整你如何工作的方式,这些工具只能带来麻烦而没有任何实在的安全益处。

+

+我们建议你安装`rkhunter`并每晚运行它。它相当易于学习和使用,虽然它不会阻止一个复杂的攻击者,它也能帮助你捕获你自己的错误。

+

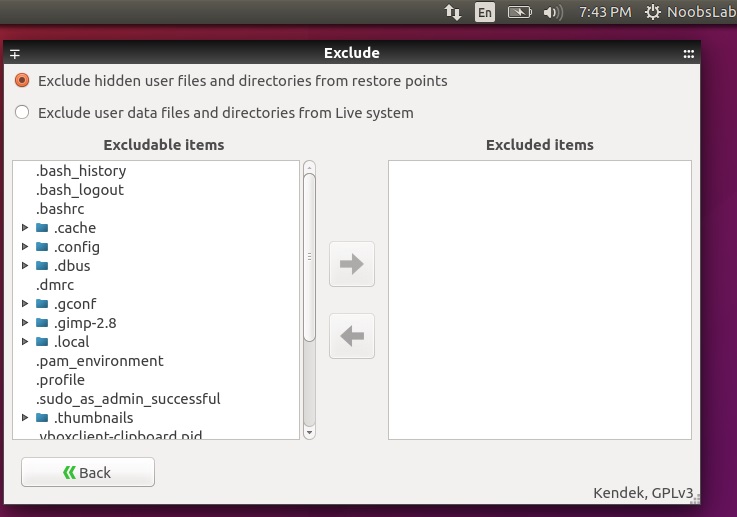

+## 个人工作站备份

+

+工作站备份往往被忽视,或偶尔才做一次,这常常是不安全的方式。

+

+### 检查清单

+

+- [ ] 设置加密备份工作站到外部存储 _(关键)_

+- [ ] 使用零认知(zero-knowledge)备份工具备份到站外或云上 _(中等)_

+

+### 注意事项

+

+#### 全加密的备份存到外部存储

+

+把全部备份放到一个移动磁盘中比较方便,不用担心带宽和上行网速(在这个时代,大多数供应商仍然提供显著的不对称的上传/下载速度)。不用说,这个移动硬盘本身需要加密(再说一次,通过 LUKS),或者你应该使用一个备份工具建立加密备份,例如`duplicity`或者它的 GUI 版本 `deja-dup`。我建议使用后者并使用随机生成的密码,保存到离线的安全地方。如果你带上笔记本去旅行,把这个磁盘留在家,以防你的笔记本丢失或被窃时可以找回备份。

+

+除了你的家目录外,你还应该备份`/etc`目录和出于取证目的的`/var/log`目录。

+

+尤其重要的是,避免拷贝你的家目录到任何非加密存储上,即使是需要快速的在两个系统上移动文件时,一旦完成你肯定会忘了清除它,从而暴露个人隐私或者安全信息到监听者手中 -- 尤其是把这个存储介质跟你的笔记本放到同一个包里。

+

+#### 有选择的零认知站外备份

+

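+Off-site backups are also extremely important, and can be made either to your employer, if they offer space for it, or to a cloud provider. You can set up a separate duplicity/deja-dup profile that only includes the most important files, in order to avoid transferring large amounts of data that you do not really need to back up off-site (internet cache, music, downloads, and so on).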

+

+作为选择,你可以使用零认知(zero-knowledge)备份工具,例如 [SpiderOak][5],它提供一个卓越的 Linux GUI工具还有更多的实用特性,例如在多个系统或平台间同步内容。

+

+## 最佳实践

+

+下面是我们认为你应该采用的最佳实践列表。它当然不是非常详细的,而是试图提供实用的建议,来做到可行的整体安全性和可用性之间的平衡。

+

+### 浏览

+

+毫无疑问, web 浏览器将是你的系统上最大、最容易暴露的面临攻击的软件。它是专门下载和执行不可信、甚至是恶意代码的一个工具。它试图采用沙箱和代码清洁(code sanitization)等多种机制保护你免受这种危险,但是在之前它们都被击败了多次。你应该知道,在任何时候浏览网站都是你做的最不安全的活动。

+

+有几种方法可以减少浏览器的影响,但这些真实有效的方法需要你明显改变操作您的工作站的方式。

+

+#### 1: 使用两个不同的浏览器 _(关键)_

+

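+This is the easiest to do, but it only offers minor security benefits. Not all browser compromises give an attacker full, unfettered access to your system; sometimes they are limited to allowing someone to read the local browser storage, steal active sessions from other tabs, capture input entered into the browser, and so on. Using two different browsers, one for work and high-security sites and another for everything else, will help prevent minor compromises from giving attackers access to the whole cookie jar. The main inconvenience is the amount of memory consumed by two different browser processes.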

+

+我们建议:

+

+##### 火狐用来访问工作和高安全站点

+

+使用火狐登录工作有关的站点,应该额外关心的是确保数据如 cookies,会话,登录信息,击键等等,明显不应该落入攻击者手中。除了少数的几个网站,你不应该用这个浏览器访问其它网站。

+

+你应该安装下面的火狐扩展:

+

+- [ ] NoScript _(关键)_

+ - NoScript 阻止活动内容加载,除非是在用户白名单里的域名。如果用于默认浏览器它会很麻烦(可是提供了真正好的安全效益),所以我们建议只在访问与工作相关的网站的浏览器上开启它。

+

+- [ ] Privacy Badger _(关键)_

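+ - EFF's Privacy Badger will prevent most external trackers and ad platforms from being loaded, which helps keep compromises on these tracking sites from affecting your browser (trackers and ad sites are very commonly targeted by attackers, as they allow rapid infection of thousands of systems worldwide).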

+

+- [ ] HTTPS Everywhere _(关键)_

+ - 这个 EFF 开发的扩展将确保你访问的大多数站点都使用安全连接,甚至你点击的连接使用的是 http://(可以有效的避免大多数的攻击,例如[SSL-strip][7])。

+

+- [ ] Certificate Patrol _(中等)_

+ - 如果你正在访问的站点最近改变了它们的 TLS 证书,这个工具将会警告你 -- 特别是如果不是接近失效期或者现在使用不同的证书颁发机构。它有助于警告你是否有人正尝试中间人攻击你的连接,不过它会产生很多误报。

+

+你应该让火狐成为你打开连接时的默认浏览器,因为 NoScript 将在加载或者执行时阻止大多数活动内容。

+

+##### 其它一切都用 Chrome/Chromium

+

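+Chromium developers are ahead of Firefox in adding a lot of nice security features (at least [on Linux][6]), such as seccomp sandboxes and kernel user namespaces, which act as an added layer of isolation between the sites you visit and the rest of your system. Chromium is the upstream open-source project, and Chrome is Google's proprietary binary build based on it (insert the usual paranoid caution about not using it for anything you do not want Google to know about).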

+

+推荐你在 Chrome 上也安装**Privacy Badger** 和 **HTTPS Everywhere** 扩展,然后给它一个与火狐不同的主题,以让它告诉你这是你的“不可信站点”浏览器。

+

+#### 2: 使用两个不同浏览器,一个在专用的虚拟机里 _(中等)_

+

+这有点像上面建议的做法,除了您将添加一个通过快速访问协议运行在专用虚拟机内部 Chrome 的额外步骤,它允许你共享剪贴板和转发声音事件(如,Spice 或 RDP)。这将在不可信浏览器和你其它的工作环境之间添加一个优秀的隔离层,确保攻击者完全危害你的浏览器将必须另外打破 VM 隔离层,才能达到系统的其余部分。

+

+这是一个鲜为人知的可行方式,但是需要大量的 RAM 和高速的处理器来处理多增加的负载。这要求作为管理员的你需要相应地调整自己的工作实践而付出辛苦。

+

+#### 3: 通过虚拟化完全隔离你的工作和娱乐环境 _(低等)_

+

+了解下 [Qubes-OS 项目][3],它致力于通过划分你的应用到完全隔离的 VM 中来提供高度安全的工作环境。

+

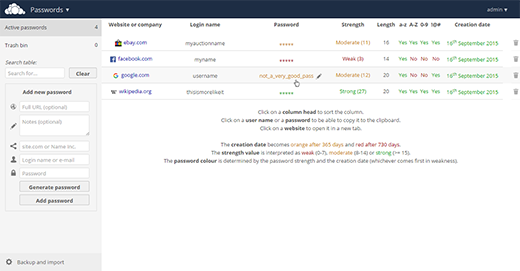

+### 密码管理器

+

+#### 检查清单

+

+- [ ] 使用密码管理器 _(关键)_

+- [ ] 不相关的站点使用不同的密码 _(关键)_

+- [ ] 使用支持团队共享的密码管理器 _(中等)_

+- [ ] 给非网站类账户使用一个单独的密码管理器 _(低等)_

+

+#### 注意事项

+

+使用好的、唯一的密码对你的团队成员来说应该是非常关键的需求。凭证(credential)盗取一直在发生 — 通过被攻破的计算机、盗取数据库备份、远程站点利用、以及任何其它的方式。凭证绝不应该跨站点重用,尤其是关键的应用。

+

+##### 浏览器中的密码管理器

+

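+Every browser has a fairly secure mechanism for saving passwords that can sync with vendor-maintained cloud storage while keeping the data encrypted with a user-provided passphrase. However, this mechanism has important disadvantages: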

+

+1. 不能跨浏览器工作

+2. 不提供任何与团队成员共享凭证的方法

+

+也有一些支持良好、免费或便宜的密码管理器,可以很好的融合到多个浏览器,跨平台工作,提供小组共享(通常是付费服务)。可以很容易地通过搜索引擎找到解决方案。

+

+##### 独立的密码管理器

+

+任何与浏览器结合的密码管理器都有一个主要的缺点,它实际上是应用的一部分,这样最有可能被入侵者攻击。如果这让你不放心(应该这样),你应该选择两个不同的密码管理器 -- 一个集成在浏览器中用来保存网站密码,一个作为独立运行的应用。后者可用于存储高风险凭证如 root 密码、数据库密码、其它 shell 账户凭证等。

+

+这样的工具在团队成员间共享超级用户的凭据方面特别有用(服务器 root 密码、ILO密码、数据库管理密码、引导程序密码等等)。

+

+这几个工具可以帮助你:

+

+- [KeePassX][8],在第2版中改进了团队共享

+- [Pass][9],它使用了文本文件和 PGP,并与 git 结合

+- [Django-Pstore][10],它使用 GPG 在管理员之间共享凭据

+- [Hiera-Eyaml][11],如果你已经在你的平台中使用了 Puppet,在你的 Hiera 加密数据的一部分里面,可以便捷的追踪你的服务器/服务凭证。

+

+### 加固 SSH 与 PGP 的私钥

+

+个人加密密钥,包括 SSH 和 PGP 私钥,都是你工作站中最重要的物品 -- 这是攻击者最想得到的东西,这可以让他们进一步攻击你的平台或在其它管理员面前冒充你。你应该采取额外的步骤,确保你的私钥免遭盗窃。

+

+#### 检查清单

+

+- [ ] 用来保护私钥的强壮密码 _(关键)_

+- [ ] The PGP master key is stored on removable storage _(中等)_

+- [ ] Auth, Sign and Encrypt subkeys are stored on a smartcard device _(中等)_

+- [ ] SSH 配置为以 PGP 认证密钥作为 ssh 私钥 _(中等)_

+

+#### 注意事项

+

+防止私钥被偷的最好方式是使用一个智能卡存储你的加密私钥,绝不要拷贝到工作站上。有几个厂商提供支持 OpenPGP 的设备:

+

+- [Kernel Concepts][12],在这里可以采购支持 OpenPGP 的智能卡和 USB 读取器,你应该需要一个。

+- [Yubikey NEO][13],这里提供 OpenPGP 功能的智能卡还提供很多很酷的特性(U2F、PIV、HOTP等等)。

+

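+It is also important to make sure that the master PGP key is not stored on the workstation, and that only subkeys are used there. The master key is only needed when signing other people's keys or creating new subkeys, operations which do not happen very frequently. You can follow the [Debian subkeys][14] guide to learn how to move your master key to removable storage and how to create subkeys.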

+

+你应该配置你的 gnupg 代理作为 ssh 代理,然后使用基于智能卡 PGP 认证密钥作为你的 ssh 私钥。我们发布了一个[详尽的指导][15]如何使用智能卡读取器或 Yubikey NEO。

+

+如果你不想那么麻烦,最少要确保你的 PGP 私钥和你的 SSH 私钥有个强健的密码,这将让攻击者很难盗取使用它们。

+

+### 休眠或关机,不要挂起

+

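+When a system is suspended, the RAM contents are kept on the memory chips and can be read by an attacker (this is known as the [Cold Boot Attack][17]). If you are going to be away from your system for an extended period of time, such as at the end of the day, it is best to shut it down or hibernate it instead of suspending it or leaving it on.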

+

+### 工作站上的 SELinux

+

+如果你使用捆绑了 SELinux 的发行版(如 Fedora),这有些如何使用它的建议,让你的工作站达到最大限度的安全。

+

+#### 检查清单

+

+- [ ] 确保你的工作站强制(enforcing)使用 SELinux _(关键)_

+- [ ] 不要盲目的执行`audit2allow -M`,应该经常检查 _(关键)_

+- [ ] 绝不要 `setenforce 0` _(中等)_

+- [ ] 切换你的用户到 SELinux 用户`staff_u` _(中等)_

+

+#### 注意事项

+

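+SELinux is a Mandatory Access Controls (MAC) extension to core POSIX permissions functionality. It is mature and robust, and it has come a long way since its initial roll-out. Regardless, many sysadmins to this day repeat the outdated mantra of "just turn it off."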

+

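+That being said, SELinux will have limited security benefits on the workstation, as most applications you run as a user are going to be running unconfined. It still provides enough net benefit to warrant leaving it on, as it will likely help prevent an attacker from escalating privileges to gain root-level access via a vulnerable daemon service.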

+

+我们的建议是开启它并强制使用(enforcing)。

+

+##### 绝不`setenforce 0`

+

+使用`setenforce 0`临时把 SELinux 设置为许可(permissive)模式很有诱惑力,但是你应该避免这样做。当你想查找一个特定应用或者程序的问题时,实际上这样做是把整个系统的 SELinux 给关闭了。

+

+你应该使用`semanage permissive -a [somedomain_t]`替换`setenforce 0`,只把这个程序放入许可模式。首先运行`ausearch`查看哪个程序发生问题:

+

+ ausearch -ts recent -m avc

+

+然后看下`scontext=`(源自 SELinux 的上下文)行,像这样:

+

+ scontext=staff_u:staff_r:gpg_pinentry_t:s0-s0:c0.c1023

+ ^^^^^^^^^^^^^^

+

+这告诉你程序`gpg_pinentry_t`被拒绝了,所以你想排查应用的故障,应该增加它到许可域:

+

+ semanage permissive -a gpg_pinentry_t

+

+这将允许你使用应用然后收集 AVC 的其它数据,你可以结合`audit2allow`来写一个本地策略。一旦完成你就不会看到新的 AVC 的拒绝消息,你就可以通过运行以下命令从许可中删除程序:

+

+ semanage permissive -d gpg_pinentry_t
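
The domain name passed to `semanage permissive` above is the third colon-separated field of the `scontext=` value. As a small sketch, it can be extracted like this; the `scontext_type` helper is a hypothetical name introduced only for this example.

```shell
# scontext_type CONTEXT
# Print the type (third colon-separated field) of an SELinux context string.
# Hypothetical helper for illustration.
scontext_type() {
    printf '%s\n' "$1" | cut -d: -f3
}

# Using the context from the example above, this prints gpg_pinentry_t.
scontext_type 'staff_u:staff_r:gpg_pinentry_t:s0-s0:c0.c1023'
```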

+

+##### Use your workstation as SELinux role staff_r

+

+SELinux 带有角色(role)的原生实现,基于用户帐户相关角色来禁止或授予某些特权。作为一个管理员,你应该使用`staff_r`角色,这可以限制访问很多配置和其它安全敏感文件,除非你先执行`sudo`。

+

+默认情况下,用户以`unconfined_r`创建,你可以自由运行大多数应用,没有任何(或只有一点)SELinux 约束。转换你的用户到`staff_r`角色,运行下面的命令:

+

+ usermod -Z staff_u [username]

+

+你应该退出然后登录新的角色,届时如果你运行`id -Z`,你将会看到:

+

+ staff_u:staff_r:staff_t:s0-s0:c0.c1023

+

+在执行`sudo`时,你应该记住增加一个额外标志告诉 SELinux 转换到“sysadmin”角色。你需要用的命令是:

+

+ sudo -i -r sysadm_r

+

+然后`id -Z`将会显示:

+

+ staff_u:sysadm_r:sysadm_t:s0-s0:c0.c1023

+

+**警告**:在进行这个切换前你应该能很顺畅的使用`ausearch`和`audit2allow`,当你以`staff_r`角色运行时你的应用有可能不再工作了。在写作本文时,已知以下流行的应用在`staff_r`下没有做策略调整就不会工作:

+

+- Chrome/Chromium

+- Skype

+- VirtualBox

+

+切换回`unconfined_r`,运行下面的命令:

+

+ usermod -Z unconfined_u [username]

+

+然后注销再重新回到舒适区。

+

+## 延伸阅读

+

+IT 安全的世界是一个没有底的兔子洞。如果你想深入,或者找到你的具体发行版更多的安全特性,请查看下面这些链接:

+

+- [Fedora 安全指南](https://docs.fedoraproject.org/en-US/Fedora/19/html/Security_Guide/index.html)

+- [CESG Ubuntu 安全指南](https://www.gov.uk/government/publications/end-user-devices-security-guidance-ubuntu-1404-lts)

+- [Debian 安全手册](https://www.debian.org/doc/manuals/securing-debian-howto/index.en.html)

+- [Arch Linux 安全维基](https://wiki.archlinux.org/index.php/Security)

+- [Mac OSX 安全](https://www.apple.com/support/security/guides/)

+

+## 许可

+

+这项工作在[创作共用授权4.0国际许可证][0]许可下。

+

+--------------------------------------------------------------------------------

+

+via: https://github.com/lfit/itpol/blob/bbc17d8c69cb8eee07ec41f8fbf8ba32fdb4301b/linux-workstation-security.md

+

+作者:[mricon][a]

+译者:[wyangsun](https://github.com/wyangsun)

+校对:[wxy](https://github.com/wxy)

+

+本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

+

+[a]:https://github.com/mricon

+[0]: http://creativecommons.org/licenses/by-sa/4.0/

+[1]: https://github.com/QubesOS/qubes-antievilmaid

+[2]: https://en.wikipedia.org/wiki/IEEE_1394#Security_issues

+[3]: https://qubes-os.org/

+[4]: https://xkcd.com/936/

+[5]: https://spideroak.com/

+[6]: https://code.google.com/p/chromium/wiki/LinuxSandboxing

+[7]: http://www.thoughtcrime.org/software/sslstrip/

+[8]: https://keepassx.org/

+[9]: http://www.passwordstore.org/

+[10]: https://pypi.python.org/pypi/django-pstore

+[11]: https://github.com/TomPoulton/hiera-eyaml

+[12]: http://shop.kernelconcepts.de/

+[13]: https://www.yubico.com/products/yubikey-hardware/yubikey-neo/

+[14]: https://wiki.debian.org/Subkeys

+[15]: https://github.com/lfit/ssh-gpg-smartcard-config

+[16]: http://www.pavelkogan.com/2014/05/23/luks-full-disk-encryption/

+[17]: https://en.wikipedia.org/wiki/Cold_boot_attack

+[18]: http://www.linux.com/news/featured-blogs/167-amanda-mcpherson/850607-linux-foundation-sysadmins-open-source-their-it-policies

\ No newline at end of file

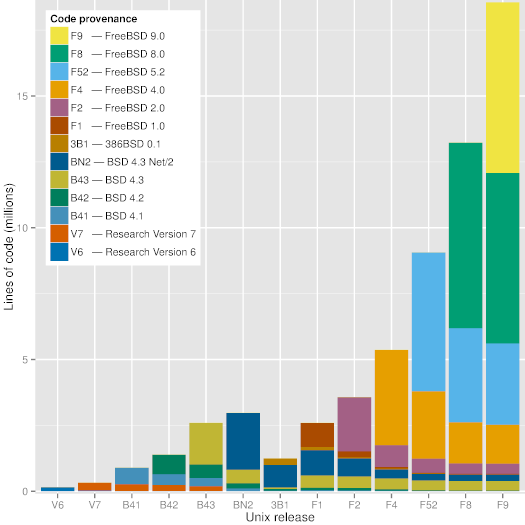

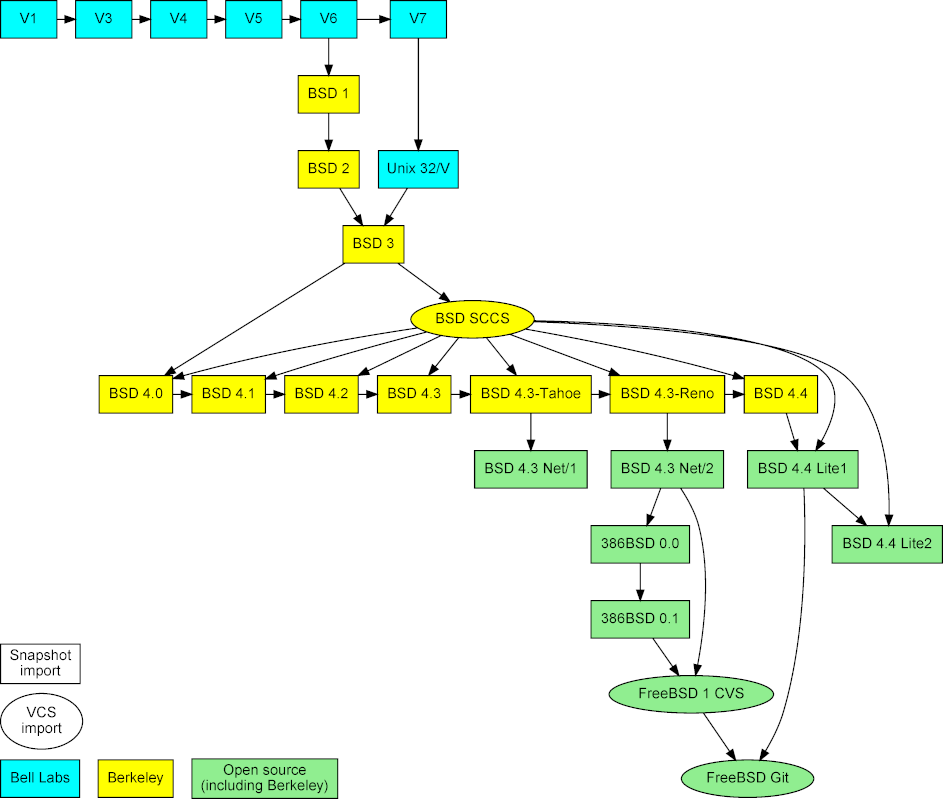

diff --git a/published/20151012 The Brief History Of Aix HP-UX Solaris BSD And LINUX.md b/published/20151012 The Brief History Of Aix HP-UX Solaris BSD And LINUX.md

new file mode 100644

index 0000000000..2f6780cdc2

--- /dev/null

+++ b/published/20151012 The Brief History Of Aix HP-UX Solaris BSD And LINUX.md

@@ -0,0 +1,101 @@

+UNIX 家族小史

+================================================================================

+

+

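+Remember that when one door closes on you, another one opens. [Ken Thompson][1] and [Dennis Ritchie][2] are a good example of this saying. They were two of the finest information technology specialists of the **20th century**, because they created one of the most influential and innovative pieces of software: **UNIX**.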

+

+### UNIX 系统诞生于贝尔实验室 ###

+

+**UNIX** 最开始的名字是 **UNICS** (**UN**iplexed **I**nformation and **C**omputing **S**ervice),它有一个大家庭,并不是从石头缝里蹦出来的。UNIX的祖父是 **CTSS** (**C**ompatible **T**ime **S**haring **S**ystem),它的父亲是 **Multics** (**MULT**iplexed **I**nformation and **C**omputing **S**ervice),这个系统能支持大量用户通过交互式分时(timesharing)的方式使用大型机。

+

+UNIX 诞生于 **1969** 年,由**肯·汤普森**以及后来加入的**丹尼斯·里奇**共同完成。这两位优秀的研究员和科学家在一个**通用电器 GE**和**麻省理工学院**的合作项目里工作,项目目标是开发一个叫 Multics 的交互式分时系统。

+

+Multics 的目标是整合分时技术以及当时其他先进技术,允许用户在远程终端通过电话(拨号)登录到主机,然后可以编辑文档,阅读电子邮件,运行计算器,等等。

+

+在之后的五年里,AT&T 公司为 Multics 项目投入了数百万美元。他们购买了 GE-645 大型机,聚集了贝尔实验室的顶级研究人员,例如肯·汤普森、 Stuart Feldman、丹尼斯·里奇、道格拉斯·麦克罗伊(M. Douglas McIlroy)、 Joseph F. Ossanna 以及 Robert Morris。但是项目目标太过激进,进度严重滞后。最后,AT&T 高层决定放弃这个项目。

+

+贝尔实验室的管理层决定停止这个让许多研究人员无比纠结的操作系统上的所有遗留工作。不过要感谢汤普森,里奇和一些其他研究员,他们把老板的命令丢到一边,并继续在实验室里满怀热心地忘我工作,最终孵化出前无古人后无来者的 UNIX。

+

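+UNIX first came to life on a PDP-7 minicomputer, the machine Thompson used to test his ideas about operating system design and on which Thompson and Ritchie ran their Space Travel game simulation.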

+

+> “我们想要的不仅是一个优秀的编程环境,而是能围绕这个系统形成团体。按我们自己的经验,通过远程访问和分时主机实现的公共计算,本质上不只是用终端输入程序代替打孔机而已,而是鼓励密切沟通。”丹尼斯·里奇说。

+

+UNIX 是第一个靠近理想的系统,在这里程序员可以坐在机器前自由摆弄程序,探索各种可能性并随手测试。在 UNIX 整个生命周期里,它吸引了大量因其他操作系统限制而投身过来的高手做出无私贡献,因此它的功能模型一直保持上升趋势。

+

+UNIX 在 1970 年因为 PDP-11/20 获得了首次资金注入,之后正式更名为 UNIX 并支持在 PDP-11/20 上运行。UNIX 带来的第一次用于实际场景中是在 1971 年,贝尔实验室的专利部门配备来做文字处理。

+

+### UNIX 上的 C 语言革命 ###

+

+丹尼斯·里奇在 1972 年发明了一种叫 “**C**” 的高级编程语言 ,之后他和肯·汤普森决定用 “C” 重写 UNIX 系统,来支持更好的移植性。他们在那一年里编写和调试了差不多 100,000 行代码。在迁移到 “C” 语言后,系统可移植性非常好,只需要修改一小部分机器相关的代码就可以将 UNIX 移植到其他计算机平台上。

+

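+UNIX made its first public appearance in 1973, when Dennis Ritchie and Ken Thompson presented a paper at the Symposium on Operating Systems Principles. AT&T then released UNIX Version 5 and licensed it to educational institutions, and in 1975 licensed UNIX Version 6 to companies for the first time, at a price of **$20,000**. The most widely used release was UNIX Version 7, in 1980, which anyone could license, although the licensing terms were very restrictive. The license included the source code and the machine-dependent kernel, which was written in PDP-11 assembly language. In any case, each version of the UNIX system was defined by its user manual.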

+

+### AIX 系统 ###

+

+在 **1983** 年,**微软**计划开发 **Xenix** 作为 MS-DOS 的多用户版继任者,他们在那一年花了 $8,000 搭建了一台拥有 **512 KB** 内存以及 **10 MB**硬盘并运行 Xenix 的 Altos 586。而到 1984 年为止,全世界 UNIX System V 第二版的安装数量已经超过了 100,000 。在 1986 年发布了包含因特网域名服务的 4.3BSD,而且 **IBM** 宣布 **AIX 系统**的安装数已经超过 250,000。AIX 基于 Unix System V 开发,这套系统拥有 BSD 风格的根文件系统,是两者的结合。

+

+AIX 第一次引入了 **日志文件系统 (JFS)** 以及集成逻辑卷管理器 (Logical Volume Manager ,LVM)。IBM 在 1989 年将 AIX 移植到自己的 RS/6000 平台。2001 年发布的 5L 版是一个突破性的版本,提供了 Linux 友好性以及支持 Power4 服务器的逻辑分区。

+

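+AIX 5.3, released in 2004, introduced virtualization through Advanced Power Virtualization (APV), with support for symmetric multithreading, micro-partitioning, and shared processor pools.

+

+In 2007, IBM released AIX 6.1 together with the Power6 architecture and began to strengthen its virtualization offering. It also rebranded Advanced Power Virtualization as PowerVM.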

+

+这次改进包括被称为 WPARs 的负载分区形式,类似于 Solaris 的 zones/Containers,但是功能更强。

+

+### HP-UX 系统 ###

+

+**惠普 UNIX (Hewlett-Packard’s UNIX,HP-UX)** 源于 System V 第 3 版。这套系统一开始只支持 PA-RISC HP 9000 平台。HP-UX 第 1 版发布于 1984 年。

+

+HP-UX 第 9 版引入了 SAM,一个基于字符的图形用户界面 (GUI),用户可以用来管理整个系统。在 1995 年发布的第 10 版,调整了系统文件分布以及目录结构,变得有点类似 AT&T SVR4。

+

+第 11 版发布于 1997 年。这是 HP 第一个支持 64 位寻址的版本。不过在 2000 年重新发布成 11i,因为 HP 为特定的信息技术用途,引入了操作环境(operating environments)和分级应用(layered applications)的捆绑组(bundled groups)。

+

+在 2001 年发布的 11.20 版宣称支持安腾(Itanium)系统。HP-UX 是第一个使用 ACLs(访问控制列表,Access Control Lists)管理文件权限的 UNIX 系统,也是首先支持内建逻辑卷管理器(Logical Volume Manager)的系统之一。

+

+如今,HP-UX 因为 HP 和 Veritas 的合作关系使用了 Veritas 作为主文件系统。

+

+HP-UX 目前的最新版本是 11iv3, update 4。

+

+### Solaris 系统 ###

+

+Sun 的 UNIX 版本是 **Solaris**,用来接替 1992 年创建的 **SunOS**。SunOS 一开始基于 BSD(伯克利软件发行版,Berkeley Software Distribution)风格的 UNIX,但是 SunOS 5.0 版以及之后的版本都是基于重新包装为 Solaris 的 Unix System V 第 4 版。

+

+SunOS 1.0 版于 1983 年发布,用于支持 Sun-1 和 Sun-2 平台。随后在 1985 年发布了 2.0 版。在 1987 年,Sun 和 AT&T 宣布合作一个项目以 SVR4 为基础将 System V 和 BSD 合并成一个版本。

+

+Solaris 2.4 是 Sun 发布的第一个 Sparc/x86 版本。1994 年 11 月份发布的 SunOS 4.1.4 版是最后一个版本。Solaris 7 是首个 64 位 Ultra Sparc 版本,加入了对文件系统元数据记录的原生支持。

+

+Solaris 9 发布于 2002 年,支持 Linux 特性以及 Solaris 卷管理器(Solaris Volume Manager)。之后,2005 年发布了 Solaris 10,带来许多创新,比如支持 Solaris Containers,新的 ZFS 文件系统,以及逻辑域(Logical Domains)。

+

+目前 Solaris 最新的版本是 第 10 版,最后的更新发布于 2008 年。

+

+### Linux ###

+

+到了 1991 年,用来替代商业操作系统的自由(free)操作系统的需求日渐高涨。因此,**Linus Torvalds** 开始构建一个自由的操作系统,最终成为 **Linux**。Linux 最开始只有一些 “C” 文件,并且使用了阻止商业发行的授权。Linux 是一个类 UNIX 系统但又不尽相同。

+

+2015 年发布了基于 GNU Public License (GPL)授权的 3.18 版。IBM 声称有超过 1800 万行开源代码开源给开发者。

+

+如今 GNU Public License 是应用最广泛的自由软件授权方式。根据开源软件原则,这份授权允许个人和企业自由分发、运行、通过拷贝共享、学习,以及修改软件源码。

+

+### UNIX vs. Linux:技术概要 ###

+

+- Linux 鼓励多样性,Linux 的开发人员来自各种背景,有更多不同经验和意见。

+- Linux 比 UNIX 支持更多的平台和架构。

+- UNIX 商业版本的开发人员针对特定目标平台以及用户设计他们的操作系统。

+- **Linux 比 UNIX 有更好的安全性**,更少受病毒或恶意软件攻击。截止到现在,Linux 上大约有 60-100 种病毒,但是没有任何一种还在传播。另一方面,UNIX 上大约有 85-120 种病毒,但是其中有一些还在传播中。

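+- UNIX commands, tools, and elements rarely change; many interfaces and command-line arguments even carry over unchanged into later UNIX versions.

+- Some Linux development projects are funded on a voluntary basis, such as Debian. Others maintain a community version of a commercial Linux distribution, such as SUSE with openSUSE and Red Hat with Fedora.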

+- 传统 UNIX 是纵向扩展,而另一方面 Linux 是横向扩展。

+

+--------------------------------------------------------------------------------

+

+via: http://www.unixmen.com/brief-history-aix-hp-ux-solaris-bsd-linux/

+

+作者:[M.el Khamlichi][a]

+译者:[zpl1025](https://github.com/zpl1025)

+校对:[Caroline](https://github.com/carolinewuyan)

+

+本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

+

+[a]:http://www.unixmen.com/author/pirat9/

+[1]:http://www.unixmen.com/ken-thompson-unix-systems-father/

+[2]:http://www.unixmen.com/dennis-m-ritchie-father-c-programming-language/

diff --git a/translated/tech/20151020 how to h2 in apache.md b/published/20151020 how to h2 in apache.md

similarity index 55%

rename from translated/tech/20151020 how to h2 in apache.md

rename to published/20151020 how to h2 in apache.md

index 32420d5bf4..add5bb7560 100644

--- a/translated/tech/20151020 how to h2 in apache.md

+++ b/published/20151020 how to h2 in apache.md

@@ -8,45 +8,44 @@ Copyright (C) 2015 greenbytes GmbH

### 源码 ###

-你可以从[这里][1]得到 Apache 发行版。Apache 2.4.17 及其更高版本都支持 HTTP/2。我不会再重复介绍如何构建服务器的指令。在很多地方有很好的指南,例如[这里][2]。

+你可以从[这里][1]得到 Apache 版本。Apache 2.4.17 及其更高版本都支持 HTTP/2。我不会再重复介绍如何构建该服务器的指令。在很多地方有很好的指南,例如[这里][2]。

-(有任何试验的链接?在 Twitter 上告诉我吧 @icing)

+(有任何这个试验性软件包的相关链接?在 Twitter 上告诉我吧 @icing)

-#### 编译支持 HTTP/2 ####

+#### 编译支持 HTTP/2 ####

-在你编译发行版之前,你要进行一些**配置**。这里有成千上万的选项。和 HTTP/2 相关的是:

+在你编译版本之前,你要进行一些**配置**。这里有成千上万的选项。和 HTTP/2 相关的是:

- **--enable-http2**

- 启用在 Apache 服务器内部实现协议的 ‘http2’ 模块。

+ 启用在 Apache 服务器内部实现该协议的 ‘http2’ 模块。

-- **--with-nghttp2=**

+- **--with-nghttp2=\**

指定 http2 模块需要的 libnghttp2 模块的非默认位置。如果 nghttp2 是在默认的位置,配置过程会自动采用。

- **--enable-nghttp2-staticlib-deps**

- 很少用到的选项,你可能用来静态链接 nghttp2 库到服务器。在大部分平台上,只有在找不到共享 nghttp2 库时才有效。

+ 很少用到的选项,你可能想将 nghttp2 库静态链接到服务器里。在大部分平台上,只有在找不到共享 nghttp2 库时才有用。

-如果你想自己编译 nghttp2,你可以到 [nghttp2.org][3] 查看文档。最新的 Fedora 以及其它发行版已经附带了这个库。

+如果你想自己编译 nghttp2,你可以到 [nghttp2.org][3] 查看文档。最新的 Fedora 以及其它版本已经附带了这个库。

#### TLS 支持 ####

-大部分人想在浏览器上使用 HTTP/2, 而浏览器只在 TLS 连接(**https:// 开头的 url)时支持它。你需要一些我下面介绍的配置。但首先你需要的是支持 ALPN 扩展的 TLS 库。

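+Most people will want to use HTTP/2 with browsers, and browsers only support HTTP/2 over TLS connections (**https://** URLs). You will need some of the configuration I describe below. But first of all, you need a TLS library that supports the ALPN extension.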

+ALPN 用来协商(negotiate)服务器和客户端之间的协议。如果你服务器上 TLS 库还没有实现 ALPN,客户端只能通过 HTTP/1.1 通信。那么,可以和 Apache 链接并支持它的是什么库呢?

-ALPN 用来屏蔽服务器和客户端之间的协议。如果你服务器上 TLS 库还没有实现 ALPN,客户端只能通过 HTTP/1.1 通信。那么,和 Apache 连接的到底是什么?又是什么支持它呢?

+- **OpenSSL 1.0.2** 及其以后。

+- ??? (别的我也不知道了)

-- **OpenSSL 1.0.2** 即将到来。

-- ???

-

-如果你的 OpenSSL 库是 Linux 发行版自带的,这里使用的版本号可能和官方 OpenSSL 发行版的不同。如果不确定的话检查一下你的 Linux 发行版吧。

+如果你的 OpenSSL 库是 Linux 版本自带的,这里使用的版本号可能和官方 OpenSSL 版本的不同。如果不确定的话检查一下你的 Linux 版本吧。
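
As a first rough check, you can compare the version reported by `openssl version` against 1.0.2. This is only a sketch: the `version_ge` helper is a hypothetical name, it relies on `sort -V` from GNU coreutils, and, as noted above, distribution packages may backport features under a different version number, so treat the result as a hint rather than proof.

```shell
# version_ge A B: succeed when dotted version A is at least B.
# Hypothetical helper; relies on `sort -V` (version sort) from GNU coreutils.
version_ge() {
    [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

if command -v openssl >/dev/null 2>&1; then
    # `openssl version` prints e.g. "OpenSSL 1.0.2 ..."; field 2 is the version.
    ver=$(openssl version | awk '{ print $2 }')
    if version_ge "$ver" 1.0.2; then
        echo "TLS library version $ver is at least 1.0.2"
    else
        echo "TLS library version $ver is older than 1.0.2, check your distribution"
    fi
fi
```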

### 配置 ###

另一个给服务器的好建议是为 http2 模块设置合适的日志等级。添加下面的配置:

- # 某个地方有这样一行

+ # 放在某个地方的这样一行

LoadModule http2_module modules/mod_http2.so

@@ -62,38 +61,37 @@ ALPN 用来屏蔽服务器和客户端之间的协议。如果你服务器上 TL

那么,假设你已经编译部署好了服务器, TLS 库也是最新的,你启动了你的服务器,打开了浏览器。。。你怎么知道它在工作呢?

-如果除此之外你没有添加其它到服务器配置,很可能它没有工作。

+如果除此之外你没有添加其它的服务器配置,很可能它没有工作。

-你需要告诉服务器在哪里使用协议。默认情况下,你的服务器并没有启动 HTTP/2 协议。因为这是安全路由,你可能要有一套部署了才能继续。

+你需要告诉服务器在哪里使用该协议。默认情况下,你的服务器并没有启动 HTTP/2 协议。因为这样比较安全,也许才能让你已有的部署可以继续工作。

-你用 **Protocols** 命令启用 HTTP/2 协议:

+你可以用新的 **Protocols** 指令启用 HTTP/2 协议:

- # for a https server

+ # 对于 https 服务器

Protocols h2 http/1.1

...

- # for a http server

+ # 对于 http 服务器

Protocols h2c http/1.1

-你可以给一般服务器或者指定的 **vhosts** 添加这个配置。

+你可以给整个服务器或者指定的 **vhosts** 添加这个配置。

#### SSL 参数 ####

-对于 TLS (SSL),HTTP/2 有一些特殊的要求。阅读 [https:// 连接][4]了解更详细的信息。

+对于 TLS (SSL),HTTP/2 有一些特殊的要求。阅读下面的“ https:// 连接”一节了解更详细的信息。

### http:// 连接 (h2c) ###

-尽管现在还没有浏览器支持 HTTP/2 协议, http:// 这样的 url 也能正常工作, 因为有 mod_h[ttp]2 的支持。启用它你只需要做的一件事是在 **httpd.conf** 配置 Protocols :

+尽管现在还没有浏览器支持,但是 HTTP/2 协议也工作在 http:// 这样的 url 上, 而且 mod_h[ttp]2 也支持。启用它你唯一所要做的是在 Protocols 配置中启用它:

- # for a http server

+ # 对于 http 服务器

Protocols h2c http/1.1

-

这里有一些支持 **h2c** 的客户端(和客户端库)。我会在下面介绍:

#### curl ####

-Daniel Stenberg 维护的网络资源命令行客户端 curl 当然支持。如果你的系统上有 curl,有一个简单的方法检查它是否支持 http/2:

+Daniel Stenberg 维护的用于访问网络资源的命令行客户端 curl 当然支持。如果你的系统上有 curl,有一个简单的方法检查它是否支持 http/2:

sh> curl -V

curl 7.43.0 (x86_64-apple-darwin15.0) libcurl/7.43.0 SecureTransport zlib/1.2.5

@@ -126,11 +124,11 @@ Daniel Stenberg 维护的网络资源命令行客户端 curl 当然支持。如

恭喜,如果看到了有 **...101 Switching...** 的行就表示它正在工作!

-有一些情况不会发生到 HTTP/2 的 Upgrade 。如果你的第一个请求没有内容,例如你上传一个文件,就不会触发 Upgrade。[h2c 限制][5]部分有详细的解释。

+有一些情况不会发生 HTTP/2 的升级切换(Upgrade)。如果你的第一个请求有内容数据(body),例如你上传一个文件时,就不会触发升级切换。[h2c 限制][5]部分有详细的解释。
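
The two manual checks above (does `curl -V` list HTTP2, and did the server answer with `101 Switching`) can be scripted. The helpers below are hypothetical names introduced for this sketch, and `SERVER_URL` is a placeholder you would set to your own http:// server.

```shell
# curl_has_http2: read `curl -V` output on stdin; succeed if the Features
# line lists HTTP2. Hypothetical helper for illustration.
curl_has_http2() {
    grep -i '^Features:' | grep -qw 'HTTP2'
}

# saw_h2c_upgrade: read `curl -v --http2 ...` output on stdin; succeed if the
# server answered the Upgrade request with "101 Switching Protocols".
saw_h2c_upgrade() {
    grep -q '^< HTTP/1\.1 101 Switching'
}

# Usage: SERVER_URL is a placeholder for your own http:// server.
if command -v curl >/dev/null 2>&1 && [ -n "${SERVER_URL:-}" ]; then
    curl -V | curl_has_http2 && echo "curl supports HTTP/2"
    curl -sv --http2 -o /dev/null "$SERVER_URL" 2>&1 | saw_h2c_upgrade \
        && echo "server upgraded to h2c"
fi
```

Both helpers read from standard input, so they can also be run against saved output when debugging a remote machine.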

#### nghttp ####

-nghttp2 有能一起编译的客户端和服务器。如果你的系统中有客户端,你可以简单地通过获取资源验证你的安装:

+nghttp2 可以一同编译它自己的客户端和服务器。如果你的系统中有该客户端,你可以简单地通过获取一个资源来验证你的安装:

sh> nghttp -uv http:///

[ 0.001] Connected

@@ -151,7 +149,7 @@ nghttp2 有能一起编译的客户端和服务器。如果你的系统中有客

这和我们上面 **curl** 例子中看到的 Upgrade 输出很相似。

-在命令行参数中隐藏着一种可以使用 **h2c**:的参数:**-u**。这会指示 **nghttp** 进行 HTTP/1 Upgrade 过程。但如果我们不使用呢?

+There is another way to use **h2c**, hidden in the command line arguments: **-u**. This option instructs **nghttp** to perform the HTTP/1 Upgrade process. But what if we leave it out?

sh> nghttp -v http:///

[ 0.002] Connected

@@ -166,36 +164,33 @@ nghttp2 有能一起编译的客户端和服务器。如果你的系统中有客

:scheme: http

...

-连接马上显示出了 HTTP/2!这就是协议中所谓的直接模式,当客户端发送一些特殊的 24 字节到服务器时就会发生:

+连接马上使用了 HTTP/2!这就是协议中所谓的直接(direct)模式,当客户端发送一些特殊的 24 字节到服务器时就会发生:

0x505249202a20485454502f322e300d0a0d0a534d0d0a0d0a

- or in ASCII: PRI * HTTP/2.0\r\n\r\nSM\r\n\r\n

+

+用 ASCII 表示是:

+

+ PRI * HTTP/2.0\r\n\r\nSM\r\n\r\n

支持 **h2c** 的服务器在一个新的连接中看到这些信息就会马上切换到 HTTP/2。HTTP/1.1 服务器则认为是一个可笑的请求,响应并关闭连接。
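
To see that the hex string and the ASCII form above describe the same 24 bytes, the preface can be rebuilt in the shell. This is a small sketch using only `printf`, `od`, `tr` and `wc`; the variable names are introduced here for illustration.

```shell
# Rebuild the HTTP/2 connection preface, print it as hex, and count its bytes.
preface_hex=$(printf 'PRI * HTTP/2.0\r\n\r\nSM\r\n\r\n' | od -An -tx1 | tr -d ' \n')
preface_len=$(printf 'PRI * HTTP/2.0\r\n\r\nSM\r\n\r\n' | wc -c)

echo "$preface_hex"
echo "$preface_len bytes"
```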

-因此 **直接** 模式只适合于那些确定服务器支持 HTTP/2 的客户端。例如,前一个 Upgrade 过程是成功的。

+因此,**直接**模式只适合于那些确定服务器支持 HTTP/2 的客户端。例如,当前一个升级切换过程成功了的时候。

-**直接** 模式的魅力是零开销,它支持所有请求,即使没有 body 部分(查看[h2c 限制][6])。任何支持 h2c 协议的服务器默认启用了直接模式。如果你想停用它,可以添加下面的配置指令到你的服务器:

+**直接**模式的魅力是零开销,它支持所有请求,即使带有请求数据部分(查看[h2c 限制][6])。

-注:下面这行打删除线

-

- H2Direct off

-

-注:下面这行打删除线

-

-对于 2.4.17 发行版,默认明文连接时启用 **H2Direct** 。但是有一些模块和这不兼容。因此,在下一发行版中,默认会设置为**off**,如果你希望你的服务器支持它,你需要设置它为:

+对于 2.4.17 版本,明文连接时默认启用 **H2Direct** 。但是有一些模块和这不兼容。因此,在下一版本中,默认会设置为**off**,如果你希望你的服务器支持它,你需要设置它为:

H2Direct on

### https:// 连接 (h2) ###

-一旦你的 mod_h[ttp]2 支持 h2c 连接,就是时候一同启用 **h2**,因为现在的浏览器支持它和 **https:** 一同使用。

+当你的 mod_h[ttp]2 可以支持 h2c 连接时,那就可以一同启用 **h2** 兄弟了,现在的浏览器仅支持它和 **https:** 一同使用。

-HTTP/2 标准对 https:(TLS)连接增加了一些额外的要求。上面已经提到了 ALNP 扩展。另外的一个要求是不会使用特定[黑名单][7]中的密码。

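The HTTP/2 standard imposes some extra requirements on https: (TLS) connections. The ALPN extension has already been mentioned above. An additional requirement is that no cipher from a specified [blacklist][7] may be used.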

-尽管现在版本的 **mod_h[ttp]2** 不增强这些密码(以后可能会),大部分客户端会这么做。如果你用不切当的密码在浏览器中打开 **h2** 服务器,你会看到模糊警告**INADEQUATE_SECURITY**,浏览器会拒接连接。

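+While the current version of **mod_h[ttp]2** does not enforce these cipher restrictions (but some day will), most clients do. If you point your browser at an **h2** server with inappropriate ciphers, you will see the obscure warning **INADEQUATE_SECURITY** and the browser will refuse to connect.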

-一个可接受的 Apache SSL 配置类似:

+一个可行的 Apache SSL 配置类似:

SSLCipherSuite ECDHE-RSA-AES128-GCM-SHA256:ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES256-GCM-SHA384:ECDHE-ECDSA-AES256-GCM-SHA384:DHE-RSA-AES128-GCM-SHA256:DHE-DSS-AES128-GCM-SHA256:kEDH+AESGCM:ECDHE-RSA-AES128-SHA256:ECDHE-ECDSA-AES128-SHA256:ECDHE-RSA-AES128-SHA:ECDHE-ECDSA-AES128-SHA:ECDHE-RSA-AES256-SHA384:ECDHE-ECDSA-AES256-SHA384:ECDHE-RSA-AES256-SHA:ECDHE-ECDSA-AES256-SHA:DHE-RSA-AES128-SHA256:DHE-RSA-AES128-SHA:DHE-DSS-AES128-SHA256:DHE-RSA-AES256-SHA256:DHE-DSS-AES256-SHA:DHE-RSA-AES256-SHA:!aNULL:!eNULL:!EXPORT:!DES:!RC4:!3DES:!MD5:!PSK

SSLProtocol All -SSLv2 -SSLv3

@@ -203,11 +198,11 @@ HTTP/2 标准对 https:(TLS)连接增加了一些额外的要求。上面已

(是的,这确实很长。)

-这里还有一些应该调整的 SSL 配置参数,但不是必须:**SSLSessionCache**, **SSLUseStapling** 等,其它地方也有介绍这些。例如 Ilya Grigorik 写的一篇博客 [高性能浏览器网络][8]。

+这里还有一些应该调整,但不是必须调整的 SSL 配置参数:**SSLSessionCache**, **SSLUseStapling** 等,其它地方也有介绍这些。例如 Ilya Grigorik 写的一篇超赞的博客: [高性能浏览器网络][8]。

#### curl ####

-再次回到 shell 并使用 curl(查看 [curl h2c 章节][9] 了解要求)你也可以通过 curl 用简单的命令检测你的服务器:

+再次回到 shell 使用 curl(查看上面的“curl h2c”章节了解要求),你也可以通过 curl 用简单的命令检测你的服务器:

sh> curl -v --http2 https:///

...

@@ -220,9 +215,9 @@ HTTP/2 标准对 https:(TLS)连接增加了一些额外的要求。上面已

恭喜你,能正常工作啦!如果还不能,可能原因是:

-- 你的 curl 不支持 HTTP/2。查看[检测][10]。

+- 你的 curl 不支持 HTTP/2。查看上面的“检测 curl”一节。

- 你的 openssl 版本太低不支持 ALPN。

-- 不能验证你的证书,或者不接受你的密码配置。尝试添加命令行选项 -k 停用 curl 中的检查。如果那能工作,还要重新配置你的 SSL 和证书。

+- 不能验证你的证书,或者不接受你的算法配置。尝试添加命令行选项 -k 停用 curl 中的这些检查。如果可以工作,就重新配置你的 SSL 和证书。

#### nghttp ####

@@ -246,11 +241,11 @@ HTTP/2 标准对 https:(TLS)连接增加了一些额外的要求。上面已

The negotiated protocol: http/1.1

[ERROR] HTTP/2 protocol was not selected. (nghttp2 expects h2)

-这表示 ALPN 能正常工作,但并没有用 h2 协议。你需要像上面介绍的那样在服务器上选中那个协议。如果一开始在 vhost 部分选中不能正常工作,试着在通用部分选中它。

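+This means ALPN is working, but the h2 protocol was not selected. Check the Protocols configuration on your server as described above. If setting it in a vhost section does not work at first, try setting it in the general section.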
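When this check is repeated against several servers, it can help to pull the negotiated protocol out of the client output automatically. The following is a minimal sketch that assumes the output contains a line of the form shown above ("The negotiated protocol: ..."). The helper name `negotiated_protocol` is hypothetical.

```shell
#!/bin/sh
# Read client output on stdin and print the negotiated protocol, if any.
# Assumes a line containing "The negotiated protocol: <name>".
negotiated_protocol() {
  sed -n 's/^.*The negotiated protocol: *//p' | head -n 1
}

# Succeed only when the negotiated protocol is exactly h2.
negotiated_h2() {
  [ "$(negotiated_protocol)" = "h2" ]
}
```

Piping the saved output of the client through `negotiated_h2` gives an exit status that a script can act on.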

#### Firefox ####

-Update: [Apache Lounge][11] 的 Steffen Land 告诉我 [Firefox HTTP/2 指示插件][12]。你可以看到有多少地方用到了 h2(提示:Apache Lounge 用 h2 已经有一段时间了。。。)

+Update: Steffen Land of [Apache Lounge][11] pointed me to an [HTTP/2 indicator add-on for Firefox][12]. With it you can see how many places already use h2 (hint: Apache Lounge has been using h2 for a while now...)

你可以在 Firefox 浏览器中打开开发者工具,在那里的网络标签页查看 HTTP/2 连接。当你打开了 HTTP/2 并重新刷新 html 页面时,你会看到类似下面的东西:

@@ -260,9 +255,9 @@ Update: [Apache Lounge][11] 的 Steffen Land 告诉我 [Firefox HTTP/2 指示

#### Google Chrome ####

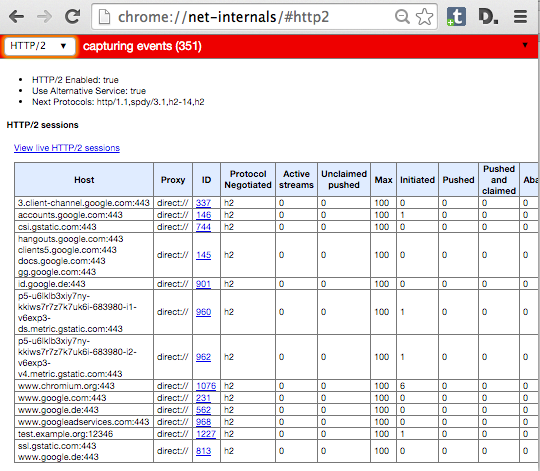

-在 Google Chrome 中,你在开发者工具中看不到 HTTP/2 指示器。相反,Chrome 用特殊的地址 **chrome://net-internals/#http2** 给出了相关信息。

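+In Google Chrome you will not see an HTTP/2 indicator in the developer tools. Instead, Chrome provides the relevant information at the special address **chrome://net-internals/#http2**. (LCTT translator's note: Chrome already has an "HTTP/2 and SPDY indicator" that identifies HTTP/2 connections nicely in the address bar.)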

-如果你在服务器中打开了一个页面并在 Chrome 那个页面查看,你可以看到类似下面这样:

+If you open a page from your server and then look at that net-internals page in Chrome, you will see something like this:

@@ -276,21 +271,21 @@ Windows 10 中 Internet Explorer 的继任者 Edge 也支持 HTTP/2。你也可

#### Safari ####

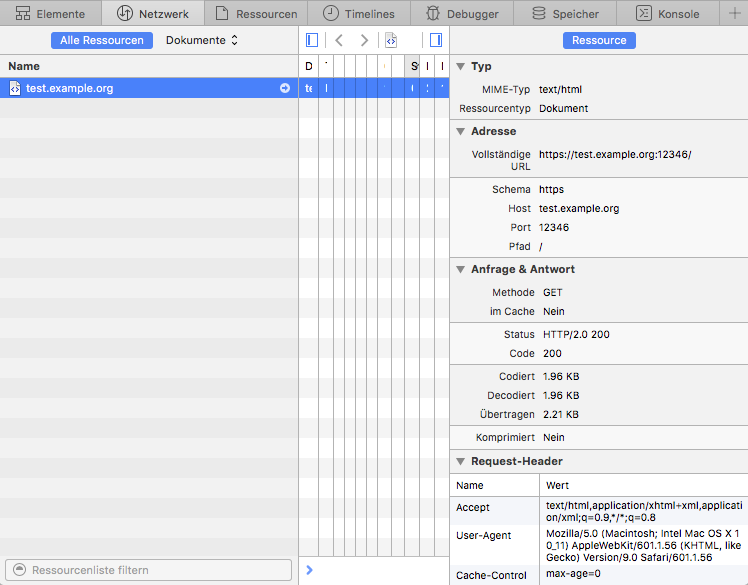

-在 Apple 的 Safari 中,打开开发者工具,那里有个网络标签页。重新加载你的服务器页面并在开发者工具中选择显示了加载的行。如果你启用了在右边显示详细试图,看 **状态** 部分。那里显示了 **HTTP/2.0 200**,类似:

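+In Apple's Safari, open the developer tools, which have a Network tab. Reload the page from your server and select the row in the developer tools that shows the load. If you have enabled the detail view on the right, look at the **Status** section. It shows **HTTP/2.0 200**, like this: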

#### 重新协商 ####

-https: 连接重新协商是指正在运行的连接中特定的 TLS 参数会发生变化。在 Apache httpd 中,你可以通过目录中的配置文件修改 TLS 参数。如果一个要获取特定位置资源的请求到来,配置的 TLS 参数会和当前的 TLS 参数进行对比。如果它们不相同,就会触发重新协商。

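+Renegotiation of an https: connection means that certain TLS parameters change on a running connection. In Apache httpd you can change TLS parameters in a directory configuration. When a request comes in for a resource in such a location, the configured TLS parameters are compared with the current ones. If they differ, renegotiation is triggered.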

-这种最常见的情形是密码变化和客户端验证。你可以要求客户访问特定位置时需要通过验证,或者对于特定资源,你可以使用更安全的, CPU 敏感的密码。

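+The most common cases are cipher changes and client certificates. You can require clients to authenticate when they access a particular location, or use a more secure, more CPU-intensive cipher for particular resources.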

-不管你的想法有多么好,HTTP/2 中都**不可以**发生重新协商。如果有 100 多个请求到同一个地方,什么时候哪个会发生重新协商呢?

+But however good your reasons, renegotiation is **not allowed** in HTTP/2. With 100 or more requests on the same connection, when should the renegotiation take place?

-对于这种配置,现有的 **mod_h[ttp]2** 还不能保证你的安全。如果你有一个站点使用了 TLS 重新协商,别在上面启用 h2!

+The current **mod_h[ttp]2** cannot handle such a configuration yet. If you have a site that uses TLS renegotiation, do not enable h2 on it!

-当然,我们会在后面的发行版中解决这个问题然后你就可以安全地启用了。

+Of course, we will address this in a later release, and then you will be able to enable it safely.

### 限制 ###

@@ -298,45 +293,45 @@ https: 连接重新协商是指正在运行的连接中特定的 TLS 参数会

实现除 HTTP 之外协议的模块可能和 **mod_http2** 不兼容。这在其它协议要求服务器首先发送数据时无疑会发生。

-**NNTP** 就是这种协议的一个例子。如果你在服务器中配置了 **mod_nntp_like_ssl**,甚至都不要加载 mod_http2。等待下一个发行版。

+**NNTP** is an example of such a protocol. If you have **mod\_nntp\_like\_ssl** configured in your server, do not load mod_http2. Wait for the next release.

#### h2c 限制 ####

**h2c** 的实现还有一些限制,你应该注意:

-#### 在虚拟主机中拒绝 h2c ####

+##### Denying h2c in a virtual host #####

你不能对指定的虚拟主机拒绝 **h2c 直连**。连接建立而没有看到请求时会触发**直连**,这使得不可能预先知道 Apache 需要查找哪个虚拟主机。

-#### 升级请求体 ####

+##### Upgrading requests with a body #####

-对于有 body 部分的请求,**h2c** 升级不能正常工作。那些是 PUT 和 POST 请求(用于提交和上传)。如果你写了一个客户端,你可能会用一个简单的 GET 去处理请求或者用选项 * 去触发升级。

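+For requests that carry a body, the **h2c** upgrade does not work. These are PUT and POST requests (used for submissions and uploads). If you write a client, you may want to send a simple GET or OPTIONS * ahead of such requests to trigger the upgrade.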

-原因从技术层面来看显而易见,但如果你想知道:升级过程中,连接处于半疯状态。请求按照 HTTP/1.1 的格式,而响应使用 HTTP/2。如果请求有一个 body 部分,服务器在发送响应之前需要读取整个 body。因为响应可能需要从客户端处得到应答用于流控制。但如果仍在发送 HTTP/1.1 请求,客户端就还不能处理 HTTP/2 连接。

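+The reason is simple enough technically, but in case you want to know: during the upgrade the connection is in a half-mad state. The request is in HTTP/1.1 format, while the response is written in HTTP/2 frames. If the request has a body, the server needs to read the whole body before it sends the response, because the response may require answers from the client for flow control and other things. But while the client is still sending an HTTP/1.1 request, it cannot yet talk HTTP/2.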

-为了使行为可预测,几个服务器实现商决定不要在任何请求体中进行升级,即使 body 很小。

+To keep the behaviour predictable, several server implementers decided not to upgrade on any request with a body, even a small one.

-#### 升级 302s ####

+##### Upgrading on 302s #####

-有重定向发生时当前 h2c 升级也不能工作。看起来 mod_http2 之前的重写有可能发生。这当然不会导致断路,但你测试这样的站点也许会让你迷惑。

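+The h2c upgrade currently does not work when a redirect occurs either. It seems a rewrite can happen before mod_http2 gets involved. This certainly does not break anything, but it may confuse you when you test such a site.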

#### h2 限制 ####

这里有一些你应该意识到的 h2 实现限制:

-#### 连接重用 ####

+##### Connection reuse #####

HTTP/2 协议允许在特定条件下重用 TLS 连接:如果你有带通配符的证书或者多个 AltSubject 名称,浏览器可能会重用现有的连接。例如:

-你有一个 **a.example.org** 的证书,它还有另外一个名称 **b.example.org**。你在浏览器中打开 url **https://a.example.org/**,用另一个标签页加载 **https://b.example.org/**。

+Say you have a certificate for **a.example.org** that also carries the alternative name **b.example.org**. You open the URL **https://a.example.org/** in your browser and load **https://b.example.org/** in another tab.

-在重新打开一个新的连接之前,浏览器看到它有一个到 **a.example.org** 的连接并且证书对于 **b.example.org** 也可用。因此,它在第一个连接上面向第二个标签页发送请求。

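+Before opening a new connection, the browser sees that it already has one to **a.example.org** and that the certificate is also valid for **b.example.org**. So it sends the request for the second tab over the first connection.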

-这种连接重用是刻意设计的,它使得致力于 HTTP/1 切分效率的站点能够不需要太多变化就能利用 HTTP/2。

+This connection reuse is intentional: it lets sites that used HTTP/1 sharding for efficiency take advantage of HTTP/2 without much change.

-Apache **mod_h[ttp]2** 还没有完全实现这点。如果 **a.example.org** 和 **b.example.org** 是不同的虚拟主机, Apache 不会允许这样的连接重用,并会告知浏览器状态码**421 错误请求**。浏览器会意识到它需要重新打开一个到 **b.example.org** 的连接。这仍然能工作,只是会降低一些效率。

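+Apache **mod_h[ttp]2** does not fully implement this yet. If **a.example.org** and **b.example.org** are different virtual hosts, Apache will not allow such connection reuse and tells the browser so with status code **421 Misdirected Request**. The browser then realizes that it needs to open a new connection to **b.example.org**. This still works, only less efficiently.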

-我们期望下一次的发布中能有切当的检查。

+We expect proper checks to be in place in the next release.

Münster, 12.10.2015,

@@ -355,7 +350,7 @@ via: https://icing.github.io/mod_h2/howto.html

作者:[icing][a]

译者:[ictlyh](http://mutouxiaogui.cn/blog/)

-校对:[校对者ID](https://github.com/校对者ID)

+校对:[wxy](https://github.com/wxy)

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

diff --git a/published/20150823 How learning data structures and algorithms make you a better developer.md b/published/201511/20150823 How learning data structures and algorithms make you a better developer.md

similarity index 100%

rename from published/20150823 How learning data structures and algorithms make you a better developer.md

rename to published/201511/20150823 How learning data structures and algorithms make you a better developer.md

diff --git a/published/201511/20150827 The Strangest Most Unique Linux Distros.md b/published/201511/20150827 The Strangest Most Unique Linux Distros.md

new file mode 100644

index 0000000000..a7dff335a4

--- /dev/null

+++ b/published/201511/20150827 The Strangest Most Unique Linux Distros.md

@@ -0,0 +1,88 @@

+那些奇特的 Linux 发行版本

+================================================================================

+从大多数消费者所关注的诸如 Ubuntu,Fedora,Mint 或 elementary OS 到更加复杂、轻量级和企业级的诸如 Slackware,Arch Linux 或 RHEL,这些发行版本我都已经见识过了。除了这些,难道没有其他别的了吗?其实 Linux 的生态系统是非常多样化的,对每个人来说,总有一款适合你。下面就让我们讨论一些稀奇古怪的小众 Linux 发行版本吧,它们代表着开源平台真正的多样性。

+

+### Puppy Linux

+

+

+

+它是一个仅有一个普通 DVD 光盘容量十分之一大小的操作系统,这就是 Puppy Linux。整个操作系统仅有 100MB 大小!并且它还可以从内存中运行,这使得它运行极快,即便是在老式的 PC 机上。 在操作系统启动后,你甚至可以移除启动介质!还有什么比这个更好的吗? 系统所需的资源极小,大多数的硬件都会被自动检测到,并且它预装了能够满足你基本需求的软件。[在这里体验 Puppy Linux 吧][1].

+

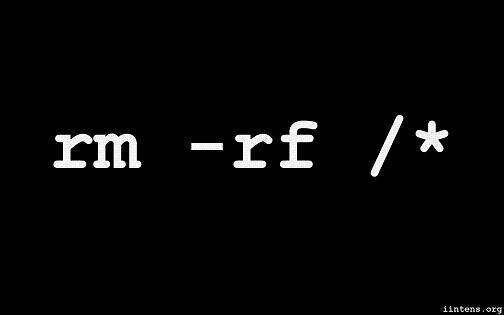

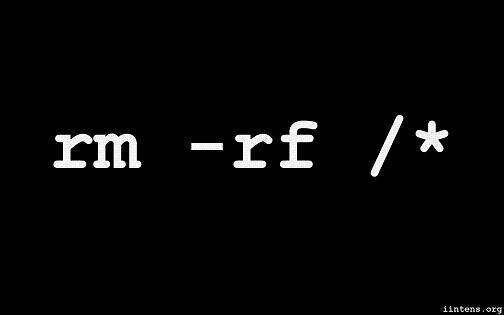

+### Suicide Linux(自杀 Linux)

+

+

+

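+Did the name scare you? I guess it should. "Any time - any time - you type any remotely incorrect command, the interpreter creatively resolves it into `rm -rf /` and wipes your hard drive." It is as simple as that. I really wonder who is confident enough to install [Suicide Linux][2] on a production machine. **Warning: never try this on a production machine!** If you are interested, it is now available as a neat [DEB package][3].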

+

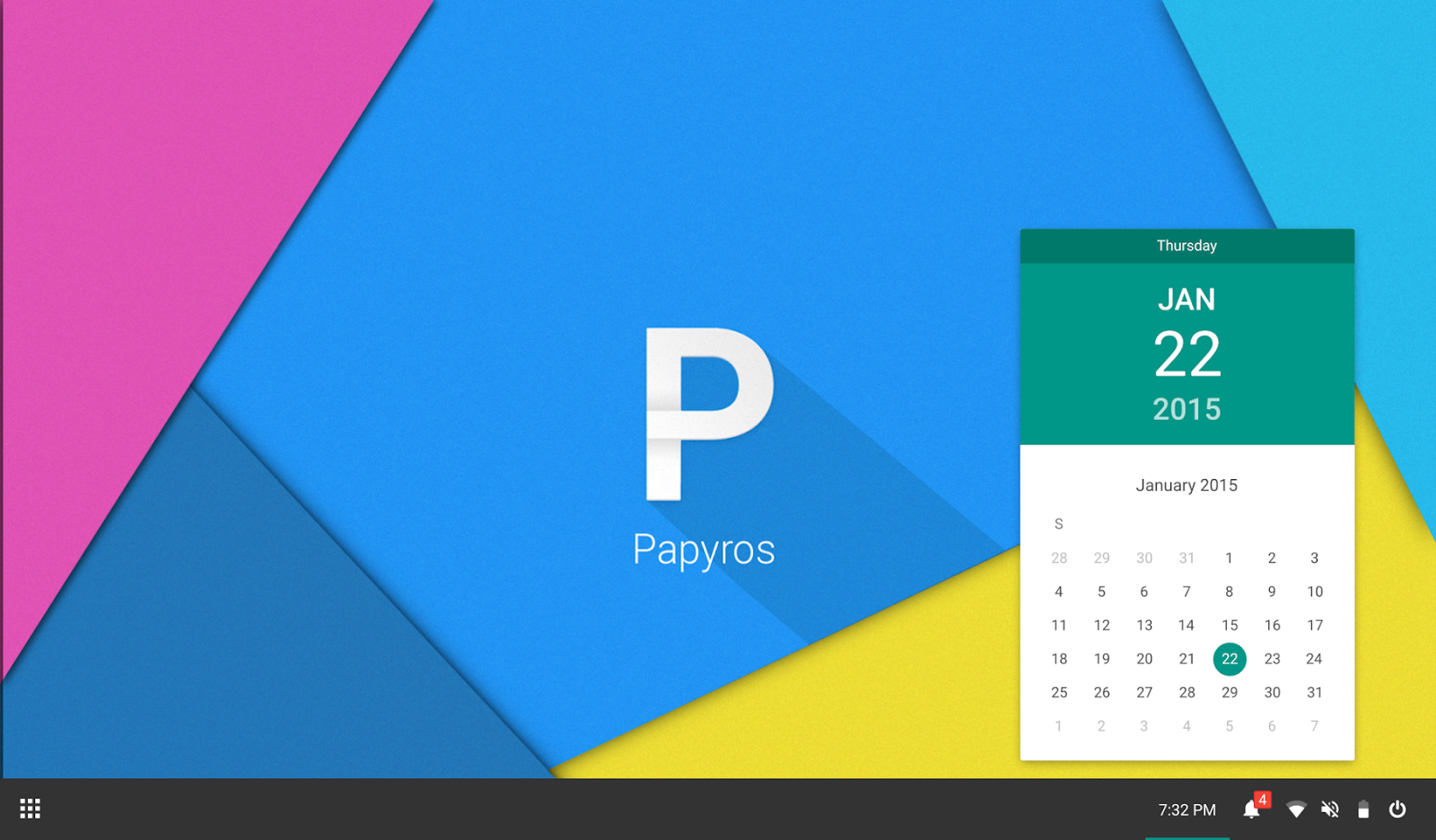

+### PapyrOS

+

+

+

+它的 “奇怪”是好的方面。PapyrOS 正尝试着将 Android 的 material design 设计语言引入到新的 Linux 发行版本上。尽管这个项目还处于早期阶段,看起来它已经很有前景。该项目的网页上说该系统已经完成了 80%,随后人们可以期待它的第一个 Alpha 发行版本。在该项目被宣告提出时,我们做了 [PapyrOS][4] 的小幅报道,从它的外观上看,它甚至可能会引领潮流。假如你感兴趣的话,可在 [Google+][5] 上关注该项目并可通过 [BountySource][6] 来贡献出你的力量。

+

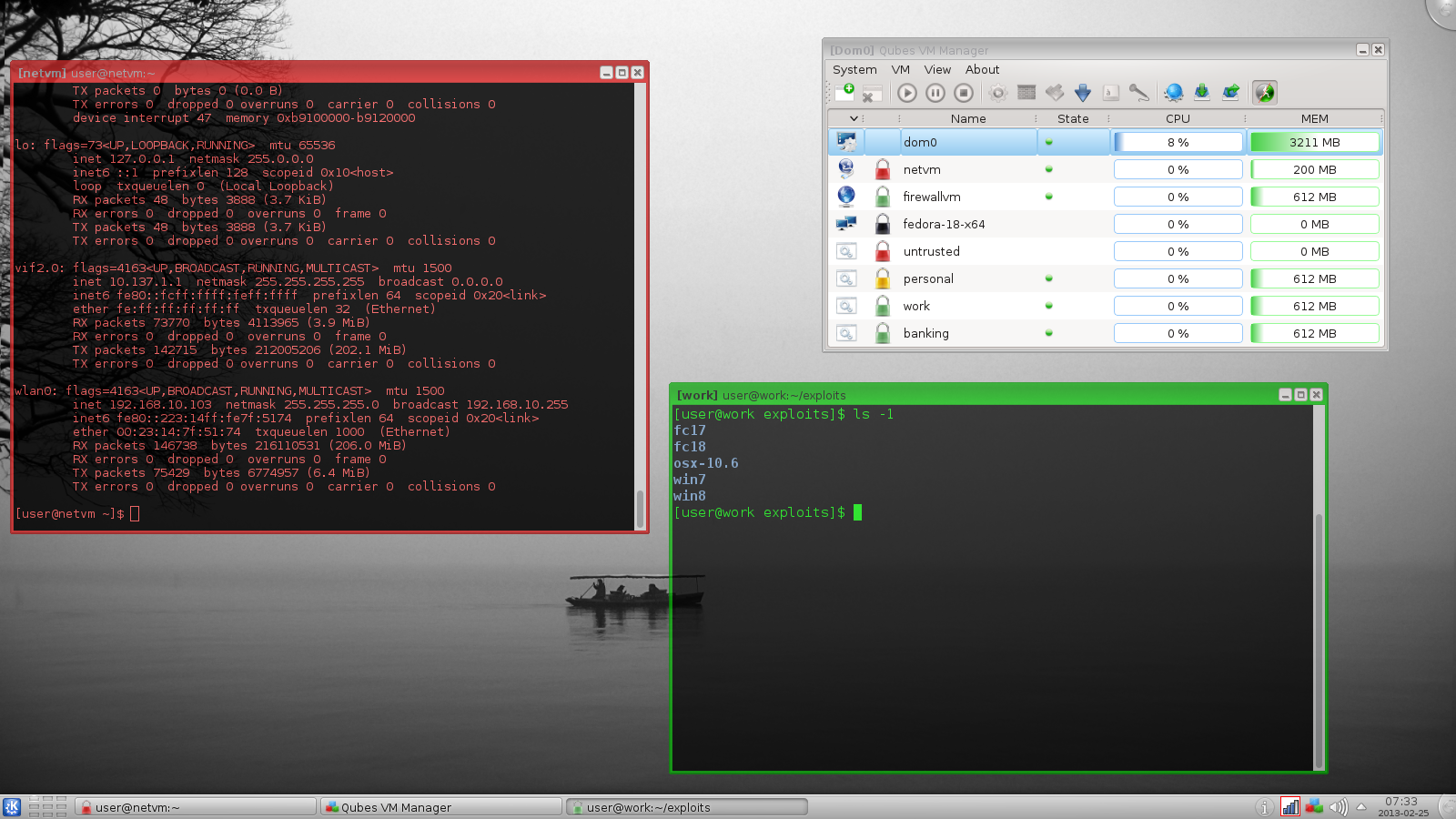

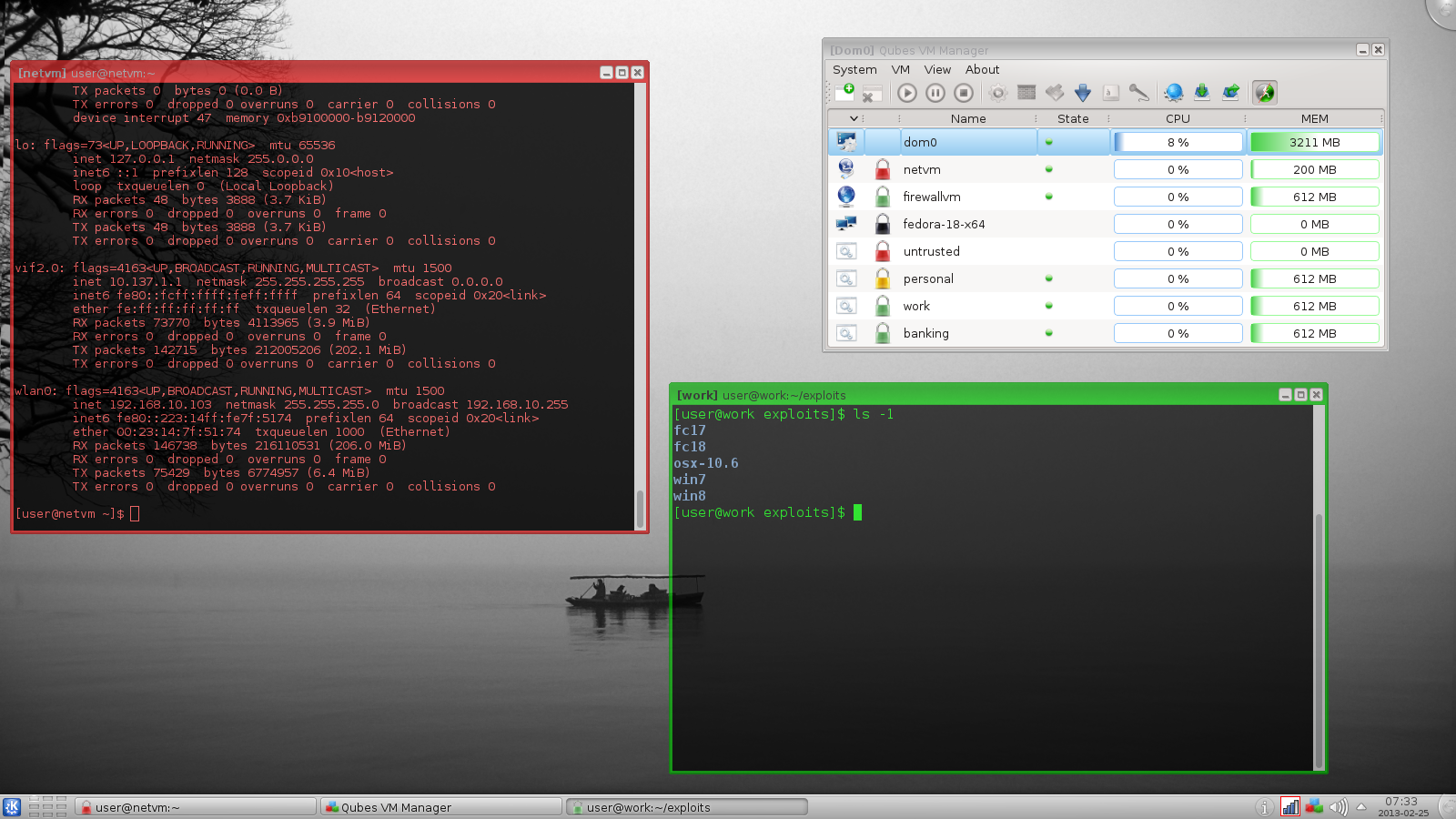

+### Qubes OS

+

+

+

+Qubes 是一个开源的操作系统,其设计通过使用[安全分级(Security by Compartmentalization)][14]的方法,来提供强安全性。其前提假设是不存在完美的没有 bug 的桌面环境。并通过实现一个‘安全隔离(Security by Isolation)’ 的方法,[Qubes Linux][7]试图去解决这些问题。Qubes 基于 Xen、X 视窗系统和 Linux,并可运行大多数的 Linux 应用,支持大多数的 Linux 驱动。Qubes 入选了 Access Innovation Prize 2014 for Endpoint Security Solution 决赛名单。

+

+### Ubuntu Satanic Edition

+

+

+

+Ubuntu SE 是一个基于 Ubuntu 的发行版本。通过一个含有主题、壁纸甚至来源于某些天才新晋艺术家的重金属音乐的综合软件包,“它同时带来了最好的自由软件和免费的金属音乐” 。尽管这个项目看起来不再积极开发了, Ubuntu Satanic Edition 甚至在其名字上都显得奇异。 [Ubuntu SE (Slightly NSFW)][8]。

+

+### Tiny Core Linux

+

+

+

+Puppy Linux 还不够小?试试这个吧。 Tiny Core Linux 是一个 12MB 大小的图形化 Linux 桌面!是的,你没有看错。一个主要的补充说明:它不是一个完整的桌面,也并不完全支持所有的硬件。它只含有能够启动进入一个非常小巧的 X 桌面,支持有线网络连接的核心部件。它甚至还有一个名为 Micro Core Linux 的没有 GUI 的版本,仅有 9MB 大小。[Tiny Core Linux][9]。

+

+### NixOS

+

+

+

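+It is a Linux distribution aimed at power users, with a unique approach to package and configuration management. In other distributions, actions such as upgrades can be dangerous. Upgrading one package may break others, and upgrading the whole system often feels less reliable than reinstalling from scratch. On top of that, you cannot safely test what a configuration change will do, and there is usually no "undo". In NixOS, the entire system is built by the Nix package manager from a description in a purely functional build language. This means that building a new configuration does not overwrite previous ones. Most of the other features follow from this. Nix stores all packages in isolation from each other. See [here][10] for more about NixOS.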

+

+### GoboLinux

+

+

+

+这是另一个非常奇特的 Linux 发行版本。它与其他系统如此不同的原因是它有着独特的重新整理的文件系统。它有着自己独特的子目录树,其中存储着所有的文件和程序。GoboLinux 没有专门的包数据库,因为其文件系统就是它的数据库。在某些方面,这类重整有些类似于 OS X 上所看到的功能。

+

+### Hannah Montana Linux

+

+

+

+它是一个基于 Kubuntu 的 Linux 发行版本,它有着汉娜·蒙塔娜( Hannah Montana) 主题的开机启动界面、KDM(KDE Display Manager)、图标集、ksplash、plasma、颜色主题和壁纸(I'm so sorry)。[这是它的链接][12]。这个项目现在不再活跃了。

+

+### RLSD Linux

+

+它是一个极其精简、小巧、轻量和安全可靠的,基于 Linux 文本的操作系统。开发者称 “它是一个独特的发行版本,提供一系列的控制台应用和自带的安全特性,对黑客或许有吸引力。” [RLSD Linux][13].

+

+我们还错过了某些更加奇特的发行版本吗?请让我们知晓吧。

+

+--------------------------------------------------------------------------------

+

+via: http://www.techdrivein.com/2015/08/the-strangest-most-unique-linux-distros.html

+

+作者:Manuel Jose

+译者:[FSSlc](https://github.com/FSSlc)

+校对:[wxy](https://github.com/wxy)

+

+本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

+

+[1]:http://puppylinux.org/main/Overview%20and%20Getting%20Started.htm

+[2]:http://qntm.org/suicide

+[3]:http://sourceforge.net/projects/suicide-linux/files/

+[4]:http://www.techdrivein.com/2015/02/papyros-material-design-linux-coming-soon.html

+[5]:https://plus.google.com/communities/109966288908859324845/stream/3262a3d3-0797-4344-bbe0-56c3adaacb69

+[6]:https://www.bountysource.com/teams/papyros

+[7]:https://www.qubes-os.org/

+[8]:http://ubuntusatanic.org/

+[9]:http://tinycorelinux.net/

+[10]:https://nixos.org/

+[11]:http://www.gobolinux.org/

+[12]:http://hannahmontana.sourceforge.net/

+[13]:http://rlsd2.dimakrasner.com/

+[14]:https://en.wikipedia.org/wiki/Compartmentalization_(information_security)

\ No newline at end of file

diff --git a/published/201511/20150831 How to switch from NetworkManager to systemd-networkd on Linux.md b/published/201511/20150831 How to switch from NetworkManager to systemd-networkd on Linux.md

new file mode 100644

index 0000000000..658d6c033d

--- /dev/null

+++ b/published/201511/20150831 How to switch from NetworkManager to systemd-networkd on Linux.md

@@ -0,0 +1,165 @@

+How to switch from NetworkManager to systemd-networkd on Linux

+================================================================================

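+In the Linux world, the adoption of [systemd][1] has been the subject of heated debate, and the fight between its supporters and opponents is still going on. As of today, most mainstream Linux distributions have adopted systemd as their default init system.

+

+As its author puts it, systemd is "never finished, never complete, but tracking progress of technology". It is no longer just an init process: it is designed as a broader system and service management platform, an ecosystem made up of a growing set of core system daemons, libraries and utilities.

+

+One part of **systemd** is **systemd-networkd**, which handles network configuration within the systemd ecosystem. With systemd-networkd you can configure basic DHCP or static IP networking for network devices. It can also configure virtual networking features such as bridges, tunnels and VLANs. systemd-networkd does not yet support wireless networking directly, but you can use the wpa_supplicant service to configure the wireless adapter and then hook it up with **systemd-networkd**.

+

+On many Linux distributions, NetworkManager is still the default network configuration manager. Compared with NetworkManager, **systemd-networkd** is still under active development and lacks some features. For example, it cannot keep your computer connected across multiple interfaces at all times the way NetworkManager does, and it does not provide ifup/ifdown hooks for higher-level scripting. However, systemd-networkd integrates well with the other systemd components, such as **resolved** for DNS resolution, **timesyncd** for NTP and udevd for naming. Over time, **systemd-networkd** will only become more important in the systemd environment.

+

+If you are happy with how **systemd-networkd** is progressing, switching from NetworkManager to systemd-networkd is worth considering. If you are strongly opposed to systemd and perfectly content with NetworkManager or the [basic network service][2], that is fine too.

+

+For those who want to try systemd-networkd, read on: this guide shows how to switch from NetworkManager to systemd-networkd on Linux.

+

+### Requirements ###

+

+systemd-networkd is available in systemd 210 and later. Distributions such as Debian 8 "Jessie" (systemd 215), Fedora 21 (systemd 217), Ubuntu 15.04 (systemd 219) or later are therefore compatible with systemd-networkd.

+

+For other distributions, check your systemd version before going any further.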

+

+ $ systemctl --version
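If the version check needs to run inside a script, the comparison against 210 can be automated. The following is a minimal sketch that assumes the first line of the `systemctl --version` output has the form `systemd <number>`. The function name `networkd_supported` is hypothetical.

```shell
#!/bin/sh
# $1: output of `systemctl --version` (only the first line is used),
#     e.g. "systemd 219". Succeeds when the version is 210 or later.
networkd_supported() {
  ver=$(printf '%s\n' "$1" | awk 'NR == 1 { print $2 }')
  [ -n "$ver" ] && [ "$ver" -ge 210 ] 2>/dev/null
}
```

A typical call would be `networkd_supported "$(systemctl --version)" && echo "systemd-networkd should be available"`.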

+

+### Switching from NetworkManager to systemd-networkd ###

+

+Switching from NetworkManager to systemd-networkd is actually very simple (and so is switching back).

+

+First, disable the NetworkManager service and enable systemd-networkd as follows.

+

+ $ sudo systemctl disable NetworkManager

+ $ sudo systemctl enable systemd-networkd

+

+You also need to enable the **systemd-resolved** service, which systemd-networkd uses for DNS resolution. This service also implements a caching DNS server.

+

+ $ sudo systemctl enable systemd-resolved

+ $ sudo systemctl start systemd-resolved

+

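+Once started, **systemd-resolved** creates its own resolv.conf somewhere under the /run/systemd directory. However, keeping DNS resolver information in /etc/resolv.conf is the more common practice, and many applications rely on /etc/resolv.conf. For compatibility, create a symbolic link at /etc/resolv.conf as follows.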

+

+ $ sudo rm /etc/resolv.conf

+ $ sudo ln -s /run/systemd/resolve/resolv.conf /etc/resolv.conf

+

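+### Configuring network connections with systemd-networkd ###

+

+To configure networking with systemd-networkd, you write the configuration in text files with a .network extension. These network configuration files are stored in, and loaded from, /etc/systemd/network. When there are several files, systemd-networkd loads and processes them one by one in lexical order.

+

+Start by creating the /etc/systemd/network directory.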

+

+ $ sudo mkdir /etc/systemd/network

+

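+#### DHCP networking ####

+

+Let's configure DHCP networking first. To do so, create the following configuration file. The file name can be anything, but remember that files are processed in lexical order.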

+

+ $ sudo vi /etc/systemd/network/20-dhcp.network

+

+----------

+

+ [Match]

+ Name=enp3*

+

+ [Network]

+ DHCP=yes

+

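+As you can see above, each network configuration file consists of one or more sections, each starting with a header of the form [XXX]. Each section contains one or more key/value pairs. The `[Match]` section decides which network device(s) this file configures. For example, this file matches every network device whose name starts with enp3 (e.g. enp3s0, enp3s1, enp3s2 and so on). For the matching interfaces, it then applies the DHCP configuration given in the `[Network]` section.

+

+#### Static IP networking ####

+

+If you want to assign a static IP address to a network device, create the following configuration file.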

+

+ $ sudo vi /etc/systemd/network/10-static-enp3s0.network

+

+----------

+

+ [Match]

+ Name=enp3s0

+

+ [Network]

+ Address=192.168.10.50/24

+ Gateway=192.168.10.1

+ DNS=8.8.8.8

+

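+As you may have guessed, the enp3s0 interface is assigned the address 192.168.10.50/24, with 192.168.10.1 as the default gateway and 8.8.8.8 as the DNS server. One subtlety is that the interface name enp3s0 also matches the pattern defined in the earlier DHCP configuration. However, in lexical order the file "10-static-enp3s0.network" is processed before "20-dhcp.network", so for enp3s0 the static configuration takes precedence over the DHCP one.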
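The precedence rule described above can be illustrated with a small script. This is only a sketch of the idea, not how systemd-networkd is implemented: it assumes each file has a single `Name=` glob in its `[Match]` section, and the helper name `first_matching_network` is hypothetical.

```shell
#!/bin/sh
# Print the first .network file (in lexical order) whose Name= pattern
# matches the given interface. $1: interface name, $2: config directory.
first_matching_network() {
  iface=$1
  dir=$2
  # The shell expands the glob in sorted order, so 10-* comes before 20-*.
  for f in "$dir"/*.network; do
    [ -f "$f" ] || continue
    pattern=$(sed -n 's/^[[:space:]]*Name=//p' "$f" | head -n 1)
    [ -n "$pattern" ] || continue
    case "$iface" in
      $pattern) basename "$f"; return 0 ;;   # unquoted: treated as a glob
    esac
  done
  return 1
}
```

With the two files from this guide in place, `first_matching_network enp3s0 /etc/systemd/network` would print the static file, while `enp3s1` would fall through to the DHCP file.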

+

+Once you are done creating the configuration files, restart the systemd-networkd service or reboot.

+

+ $ sudo systemctl restart systemd-networkd

+

+Check the status of the services by running:

+

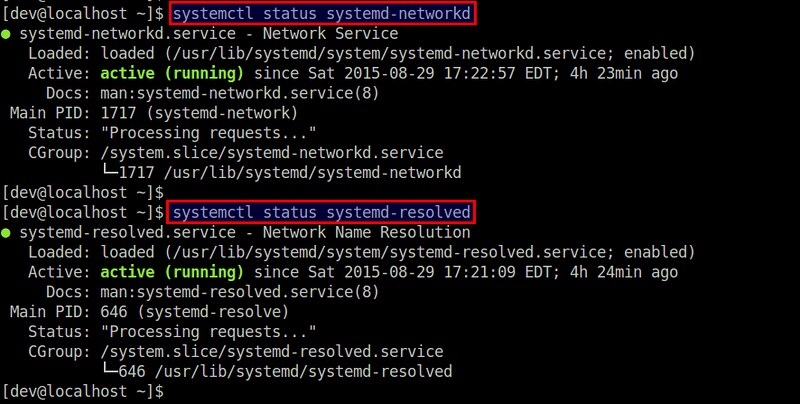

+ $ systemctl status systemd-networkd

+ $ systemctl status systemd-resolved

+

+

+

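+### Configuring virtual network devices with systemd-networkd ###

+

+**systemd-networkd** also lets you configure virtual network devices such as bridges, VLANs, tunnels, VXLAN and bonding. These virtual devices must be configured in files with a .netdev extension.

+

+Here I show how to configure a bridge interface.

+

+#### Linux bridge ####

+

+If you want to create a Linux bridge (br0) and add a physical interface (eth1) to it, you can create the following configuration.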

+

+ $ sudo vi /etc/systemd/network/bridge-br0.netdev

+

+----------

+

+ [NetDev]

+ Name=br0

+ Kind=bridge

+

+Then configure the bridge interface br0 and the slave interface eth1 with .network files as follows.

+

+ $ sudo vi /etc/systemd/network/bridge-br0-slave.network

+

+----------

+

+ [Match]

+ Name=eth1

+

+ [Network]

+ Bridge=br0

+

+----------

+

+ $ sudo vi /etc/systemd/network/bridge-br0.network

+

+----------

+

+ [Match]

+ Name=br0

+

+ [Network]

+ Address=192.168.10.100/24

+ Gateway=192.168.10.1

+ DNS=8.8.8.8

+

+Finally, restart systemd-networkd.

+

+ $ sudo systemctl restart systemd-networkd

+

+You can use the [brctl tool][3] to verify that the bridge br0 has been created.
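The three bridge files from this section can also be generated by a script, which may be convenient when the same layout is needed on several machines. The sketch below writes the same keys as the files above. The function name `write_bridge_config` and its argument order are hypothetical.

```shell
#!/bin/sh
# Write the .netdev and .network files for a bridge with one slave interface.
# Arguments: directory, bridge name, slave interface, address, gateway, DNS.
write_bridge_config() {
  dir=$1; bridge=$2; slave=$3; address=$4; gateway=$5; dns=$6

  # The virtual device itself.
  cat > "$dir/bridge-$bridge.netdev" <<EOF
[NetDev]
Name=$bridge
Kind=bridge
EOF

  # Attach the physical interface to the bridge.
  cat > "$dir/bridge-$bridge-slave.network" <<EOF
[Match]
Name=$slave

[Network]
Bridge=$bridge
EOF

  # IP configuration of the bridge interface.
  cat > "$dir/bridge-$bridge.network" <<EOF
[Match]
Name=$bridge

[Network]
Address=$address
Gateway=$gateway
DNS=$dns
EOF
}
```

Run as root, `write_bridge_config /etc/systemd/network br0 eth1 192.168.10.100/24 192.168.10.1 8.8.8.8` would reproduce the example from this section. systemd-networkd still has to be restarted afterwards.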

+

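+### Summary ###

+

+With systemd setting out to be the system manager for Linux, it is no surprise that something like systemd-networkd has appeared to manage network configuration. At this stage, though, systemd-networkd seems better suited to server environments where the network configuration is relatively stable. For desktops and laptops, which use a variety of transient wired and wireless interfaces, NetworkManager is still the better choice.

+

+For those who want to learn more about systemd-networkd, see the official [man page][4] for the complete list of supported options and directives.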

+

+--------------------------------------------------------------------------------

+

+via: http://xmodulo.com/switch-from-networkmanager-to-systemd-networkd.html

+

+作者:[Dan Nanni][a]

+译者:[ictlyh](http://mutouxiaogui.cn/blog)

+校对:[wxy](https://github.com/wxy)

+

+本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

+

+[a]:http://xmodulo.com/author/nanni

+[1]:http://xmodulo.com/use-systemd-system-administration-debian.html

+[2]:http://xmodulo.com/disable-network-manager-linux.html

+[3]:http://xmodulo.com/how-to-configure-linux-bridge-interface.html

+[4]:http://www.freedesktop.org/software/systemd/man/systemd.network.html

diff --git a/published/201511/20150909 Superclass--15 of the world's best living programmers.md b/published/201511/20150909 Superclass--15 of the world's best living programmers.md

new file mode 100644

index 0000000000..89a42d29d7

--- /dev/null

+++ b/published/201511/20150909 Superclass--15 of the world's best living programmers.md

@@ -0,0 +1,427 @@

+超神们:15 位健在的世界级程序员!

+================================================================================

+

+当开发人员说起世界顶级程序员时,他们的名字往往会被提及。

+

+好像现在程序员有很多,其中不乏有许多优秀的程序员。但是哪些程序员更好呢?

+

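+This is hard to judge objectively, but it is a topic developers love to discuss. ITworld dug into programmer communities, steered clear of the flame wars, and tried to find whatever consensus there might be. As it turns out, a handful of names come up again and again.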

+

+

+

+*图片来源: [tom_bullock CC BY 2.0][1]*

+

+下面就让我们来看看这些世界顶级的程序员吧!

+

+### 玛格丽特·汉密尔顿(Margaret Hamilton) ###

+

+

+

+*图片来源: [NASA][2]*

+

+**成就: 阿波罗飞行控制软件背后的大脑**

+

+生平: 查尔斯·斯塔克·德雷珀实验室(Charles Stark Draper Laboratory)软件工程部的主任,以她为首的团队负责设计和打造 NASA 的阿波罗的舰载飞行控制器软件和空间实验室(Skylab)的任务。基于阿波罗这段的工作经历,她又后续开发了[通用系统语言(Universal Systems Language)][5]和[开发先于事实( Development Before the Fact)][6]的范例。开创了[异步软件、优先调度和超可靠的软件设计][7]理念。被认为发明了“[软件工程( software engineering)][8]”一词。1986年获[奥古斯塔·埃达·洛夫莱斯奖(Augusta Ada Lovelace Award)][9],2003年获 [NASA 杰出太空行动奖(Exceptional Space Act Award)][10]。

+

+评论:

+

+> “汉密尔顿发明了测试,使美国计算机工程规范了很多” —— [ford_beeblebrox][11]

+

+> “我认为在她之前(不敬地说,包括高德纳(Knuth)在内的)计算机编程是(另一种形式上留存的)数学分支。然而这个宇宙飞船的飞行控制系统明确地将编程带入了一个崭新的领域。” —— [Dan Allen][12]

+

+> “... 她引入了‘软件工程’这个术语 — 并作出了最好的示范。” —— [David Hamilton][13]

+

+> “真是个坏家伙” [Drukered][14]

+

+

+### 唐纳德·克努斯(Donald Knuth),即 高德纳 ###

+

+

+

+*图片来源: [vonguard CC BY-SA 2.0][15]*

+

+**成就: 《计算机程序设计艺术(The Art of Computer Programming,TAOCP)》 作者**

+

+生平: 撰写了[编程理论的权威书籍][16]。发明了数字排版系统 Tex。1971年,[ACM(美国计算机协会)葛丽丝·穆雷·霍普奖(Grace Murray Hopper Award)][17] 的首位获奖者。1974年获 ACM [图灵奖(A. M. Turing)][18],1979年获[美国国家科学奖章(National Medal of Science)][19],1995年获IEEE[约翰·冯·诺依曼奖章(John von Neumann Medal)][20]。1998年入选[计算机历史博物馆(Computer History Museum)名人录(Hall of Fellows)][21]。

+

+评论:

+

+> “... 写的计算机编程艺术(The Art of Computer Programming,TAOCP)可能是有史以来计算机编程方面最大的贡献。”—— [佚名][22]

+

+> “唐·克努斯的 TeX 是我所用过的计算机程序中唯一一个几乎没有 bug 的。真是让人印象深刻!”—— [Jaap Weel][23]

+

+> “如果你要问我的话,我只能说太棒了!” —— [Mitch Rees-Jones][24]

+

+### 肯·汤普逊(Ken Thompson) ###

+

+

+

+*图片来源: [Association for Computing Machinery][25]*

+

+**成就: Unix 之父**

+

+生平:与[丹尼斯·里奇(Dennis Ritchie)][26]共同创造了 Unix。创造了 [B 语言][27]、[UTF-8 字符编码方案][28]、[ed 文本编辑器][29],同时也是 Go 语言的共同开发者。(和里奇)共同获得1983年的[图灵奖(A.M. Turing Award )][30],1994年获 [IEEE 计算机先驱奖( IEEE Computer Pioneer Award)][31],1998年获颁[美国国家科技奖章( National Medal of Technology )][32]。在1997年入选[计算机历史博物馆(Computer History Museum)名人录(Hall of Fellows)][33]。

+

+评论:

+

+> “... 可能是有史以来最能成事的程序员了。Unix 内核,Unix 工具,国际象棋程序世界冠军 Belle,Plan 9,Go 语言。” —— [Pete Prokopowicz][34]

+

+> “肯所做出的贡献,据我所知无人能及,是如此的根本、实用、经得住时间的考验,时至今日仍在使用。” —— [Jan Jannink][35]

+

+

+### 理查德·斯托曼(Richard Stallman) ###

+

+

+

+*图片来源: [Jiel Beaumadier CC BY-SA 3.0][135]*

+

+**成就: Emacs 和 GCC 缔造者**

+

+Biography: Founded the [GNU Project][36] and created many of its core tools, such as [Emacs, GCC, GDB][37] and [GNU Make][38]. Also founded the [Free Software Foundation][39]. Received the ACM [Grace Murray Hopper Award][40] in 1990 and the [EFF Pioneer Award][41] in 1998.

+

+评论:

+

+> “... 在 Symbolics 对阵 LMI 的战斗中,独自一人与一众 Lisp 黑客好手对码。” —— [Srinivasan Krishnan][42]

+

+> “通过他在编程上的精湛造诣与强大信念,开辟了一整套编程与计算机的亚文化。” —— [Dan Dunay][43]

+

+> “我可以不赞同这位伟人的很多方面,不必盖棺论定,他不可否认都已经是一位伟大的程序员了。” —— [Marko Poutiainen][44]

+

+> “试想 Linux 如果没有 GNU 工程的前期工作会怎么样。(多亏了)斯托曼的炸弹!” —— [John Burnette][45]

+

+### 安德斯·海尔斯伯格(Anders Hejlsberg) ###

+

+

+

+*图片来源: [D.Begley CC BY 2.0][46]*

+

+**成就: 创造了Turbo Pascal**

+

+生平: [Turbo Pascal 的原作者][47],是最流行的 Pascal 编译器和第一个集成开发环境。而后,[领导了 Turbo Pascal 的继任者 Delphi][48] 的构建。[C# 的主要设计师和架构师][49]。2001年荣获[ Dr. Dobb 的杰出编程奖(Dr. Dobb's Excellence in Programming Award )][50]。

+

+评论:

+

+> “他用汇编语言为当时两个主流的 PC 操作系统(DOS 和 CPM)编写了 [Pascal] 编译器。用它来编译、链接并运行仅需几秒钟而不是几分钟。” —— [Steve Wood][51]

+

+> “我佩服他 - 他创造了我最喜欢的开发工具,陪伴着我度过了三个关键的时期直至我成为一位专业的软件工程师。” —— [Stefan Kiryazov][52]

+

+### Doug Cutting ###

+

+

+

+*图片来源: [vonguard CC BY-SA 2.0][53]*

+

+**成就: 创造了 Lucene**

+

+生平: [开发了 Lucene 搜索引擎以及 Web 爬虫 Nutch][54] 和用于大型数据集的分布式处理套件 [Hadoop][55]。一位强有力的开源支持者(Lucene、Nutch 以及 Hadoop 都是开源的)。前 [Apache 软件基金(Apache Software Foundation)的理事][56]。

+

+评论:

+

+

+> “...他就是那个既写出了优秀搜索框架(lucene/solr),又为世界开启大数据之门(hadoop)的男人。” —— [Rajesh Rao][57]

+

+> “他在 Lucene 和 Hadoop(及其它工程)的创造/工作中为世界创造了巨大的财富和就业...” —— [Amit Nithianandan][58]

+

+### Sanjay Ghemawat ###

+

+

+

+*图片来源: [Association for Computing Machinery][59]*

+

+**成就: 谷歌核心架构师**

+

+生平: [协助设计和实现了一些谷歌大型分布式系统的功能][60],包括 MapReduce、BigTable、Spanner 和谷歌文件系统(Google File System)。[创造了 Unix 的 ical ][61]日历系统。2009年入选[美国国家工程院(National Academy of Engineering)][62]。2012年荣获 [ACM-Infosys 基金计算机科学奖( ACM-Infosys Foundation Award in the Computing Sciences)][63]。

+

+评论:

+

+

+> “Jeff Dean的僚机。” —— [Ahmet Alp Balkan][64]

+

+### Jeff Dean ###

+

+

+

+*图片来源: [Google][65]*

+

+**成就: 谷歌搜索索引背后的大脑**

+

+生平:协助设计和实现了[许多谷歌大型分布式系统的功能][66],包括网页爬虫,索引搜索,AdSense,MapReduce,BigTable 和 Spanner。2009年入选[美国国家工程院( National Academy of Engineering)][67]。2012年荣获ACM 的[SIGOPS 马克·维瑟奖( SIGOPS Mark Weiser Award)][68]及[ACM-Infosys基金计算机科学奖( ACM-Infosys Foundation Award in the Computing Sciences)][69]。

+

+评论:

+

+> “... 带来了在数据挖掘(GFS、MapReduce、BigTable)上的突破。” —— [Natu Lauchande][70]

+

+> "... designed, built and deployed MapReduce and BigTable, and countless other things" - [Erik Goldman][71]

+

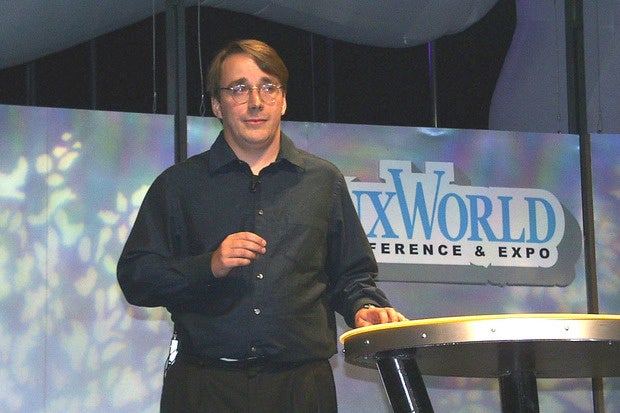

+### 林纳斯·托瓦兹(Linus Torvalds) ###

+

+

+

+*图片来源: [Krd CC BY-SA 4.0][72]*

+

+**成就: Linux缔造者**

+

+生平:创造了 [Linux 内核][73]与[开源的版本控制系统 Git][74]。收获了许多奖项和荣誉,包括有1998年的 [EFF 先驱奖(EFF Pioneer Award)][75],2000年荣获[英国电脑学会(British Computer Society)授予的洛夫莱斯勋章(Lovelace Medal)][76],2012年荣获[千禧技术奖(Millenium Technology Prize)][77]还有2014年[IEEE计算机学会( IEEE Computer Society)授予的计算机先驱奖(Computer Pioneer Award)][78]。同样入选了2008年的[计算机历史博物馆( Computer History Museum)名人录(Hall of Fellows)][79]与2012年的[互联网名人堂(Internet Hall of Fame )][80]。

+

+评论:

+

+> “他只用了几年的时间就写出了 Linux 内核,而 GNU Hurd(GNU 开发的内核)历经25年的开发却丝毫没有准备发布的意思。他的成就就是带来了希望。” —— [Erich Ficker][81]

+

+> “托沃兹可能是程序员的程序员。” —— [Dan Allen][82]

+

+> “他真的很棒。” —— [Alok Tripathy][83]

+

+### 约翰·卡马克(John Carmack) ###

+

+

+

+*图片来源: [QuakeCon CC BY 2.0][84]*

+

+**成就: 毁灭战士的缔造者**

+

+生平: ID 社联合创始人,打造了德军总部3D(Wolfenstein 3D)、毁灭战士(Doom)和雷神之锤(Quake)等所谓的即时 FPS 游戏。引领了[切片适配刷新(adaptive tile refresh)][86], [二叉空间分割(binary space partitioning)][87],表面缓存(surface caching)等开创性的计算机图像技术。2001年入选[互动艺术与科学学会名人堂(Academy of Interactive Arts and Sciences Hall of Fame)][88],2007年和2008年荣获工程技术类[艾美奖(Emmy awards)][89]并于2010年由[游戏开发者甄选奖( Game Developers Choice Awards)][90]授予终生成就奖。

+

+评论:

+

+> "He wrote his first rendering engine before he was 20. The guy is truly a genius. I would be content with a quarter of his talent." - [Alex Dolinsky][91]

+

+> “... 德军总部3D(Wolfenstein 3D)、毁灭战士(Doom)还有雷神之锤(Quake)在那时都是革命性的,影响了一代游戏设计师。” —— [dniblock][92]

+

+> “一个周末他几乎可以写出任何东西....” —— [Greg Naughton][93]

+

+> “他是编程界的莫扎特... ” —— [Chris Morris][94]

+

+### 法布里斯·贝拉(Fabrice Bellard) ###

+

+

+

+*图片来源: [Duff][95]*

+

+**成就: 创造了 QEMU**

+

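+Biography: Created [a series of well-known open source projects][96], including QEMU, a platform for hardware emulation and virtualization; FFmpeg, for handling multimedia data; the Tiny C Compiler; and LZEXE, an executable file compressor. [Winner of the Obfuscated C Code Contest][97] in 2000 and 2001, and recipient of a [Google-O'Reilly Open Source Award][98] in 2011. Former world record holder for [computing the most digits of Pi][99].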

+

+评论:

+

+

+> “我觉得法布里斯·贝拉做的每一件事都是那么显著而又震撼。” —— [raphinou][100]

+

+> “法布里斯·贝拉是世界上最高产的程序员...” —— [Pavan Yara][101]

+

+> “他就像软件工程界的尼古拉·特斯拉(Nikola Tesla)。” —— [Michael Valladolid][102]

+

+> “自80年代以来,他一直高产出一系列的成功作品。” —— [Michael Biggins][103]

+

+### Jon Skeet ###

+

+

+

+*图片来源: [Craig Murphy CC BY 2.0][104]*

+

+**成就: Stack Overflow 的传说级贡献者**

+

+生平: Google 工程师,[深入解析C#(C# in Depth)][105]的作者。保持着[有史以来在 Stack Overflow 上最高的声誉][106],平均每月解答390个问题。

+

+评论:

+

+

+> “他根本不需要调试器,只要他盯一下代码,错误之处自会原形毕露。” —— [Steven A. Lowe][107]

+

+> “如果他的代码没有通过编译,那编译器应该道歉。” —— [Dan Dyer][108]

+

+> “他根本不需要什么编程规范,他的代码就是编程规范。” —— [佚名][109]

+

+### 亚当·安捷罗(Adam D'Angelo) ###

+

+

+

+*图片来源: [Philip Neustrom CC BY 2.0][110]*

+

+**成就: Quora 的创办人之一**

+

+生平: 还是 Facebook 工程师时,[为其搭建了 news feed 功能的基础][111]。直至其离开并联合创始了 Quora,已经成为了 Facebook 的CTO和工程 VP。2001年以高中生的身份在[美国计算机奥林匹克(USA Computing Olympiad)上第八位完成比赛][112]。2004年ACM国际大学生编程大赛(International Collegiate Programming Contest)[获得银牌的团队 - 加利福尼亚技术研究所( California Institute of Technology)][113]的成员。2005年入围 Topcoder 大学生[算法编程挑战赛(Algorithm Coding Competition)][114]。

+

+评论:

+

+> “一位程序设计全才。” —— [佚名][115]

+

+> "我做的每个好东西,他都已有了六个。" —— [马克.扎克伯格(Mark Zuckerberg)][116]

+

+### Petr Mitrichev ###

+

+

+

+*图片来源: [Facebook][117]*

+

+**成就: 有史以来最具竞技能力的程序员之一**

+

+生平: 在国际信息学奥林匹克(International Olympiad in Informatics)中[两次获得金牌][118](2000,2002)。在2006,[赢得 Google Code Jam][119] 同时也是[TopCoder Open 算法大赛冠军][120]。也同样,两次赢得 Facebook黑客杯(Facebook Hacker Cup)([2011][121],[2013][122])。写这篇文章的时候,[TopCoder 榜中排第二][123] (即:Petr)、在 [Codeforces 榜同样排第二][124]。

+

+评论:

+

+> “他是竞技程序员的偶像,即使在印度也是如此...” —— [Kavish Dwivedi][125]

+

+### Gennady Korotkevich ###

+

+

+

+*图片来源: [Ishandutta2007 CC BY-SA 3.0][126]*

+

+**成就: 竞技编程小神童**

+

+生平: 国际信息学奥林匹克(International Olympiad in Informatics)中最小参赛者(11岁),[6次获得金牌][127] (2007-2012)。2013年 ACM 国际大学生编程大赛(International Collegiate Programming Contest)[获胜队伍][128]成员及[2014 Facebook 黑客杯(Facebook Hacker Cup)][129]获胜者。写这篇文章的时候,[Codeforces 榜排名第一][130] (即:Tourist)、[TopCoder榜第一][131]。

+

+评论:

+

+> “一个编程神童!” —— [Prateek Joshi][132]

+

+> “Gennady 真是棒,也是为什么我在白俄罗斯拥有一个强大开发团队的例证。” —— [Chris Howard][133]

+

+> “Tourist 真是天才” —— [Nuka Shrinivas Rao][134]

+

+--------------------------------------------------------------------------------

+

+via: http://www.itworld.com/article/2823547/enterprise-software/158256-superclass-14-of-the-world-s-best-living-programmers.html#slide1

+

+作者:[Phil Johnson][a]

+译者:[martin2011qi](https://github.com/martin2011qi)

+校对:[wxy](https://github.com/wxy)

+

+本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

+

+[a]:http://www.itworld.com/author/Phil-Johnson/

+[1]:https://www.flickr.com/photos/tombullock/15713223772

+[2]:https://commons.wikimedia.org/wiki/File:Margaret_Hamilton_in_action.jpg

+[3]:http://klabs.org/home_page/hamilton.htm

+[4]:https://www.youtube.com/watch?v=DWcITjqZtpU&feature=youtu.be&t=3m12s

+[5]:http://www.htius.com/Articles/r12ham.pdf

+[6]:http://www.htius.com/Articles/Inside_DBTF.htm

+[7]:http://www.nasa.gov/home/hqnews/2003/sep/HQ_03281_Hamilton_Honor.html

+[8]:http://www.nasa.gov/50th/50th_magazine/scientists.html

+[9]:https://books.google.com/books?id=JcmV0wfQEoYC&pg=PA321&lpg=PA321&dq=ada+lovelace+award+1986&source=bl&ots=qGdBKsUa3G&sig=bkTftPAhM1vZ_3VgPcv-38ggSNo&hl=en&sa=X&ved=0CDkQ6AEwBGoVChMI3paoxJHWxwIVA3I-Ch1whwPn#v=onepage&q=ada%20lovelace%20award%201986&f=false

+[10]:http://history.nasa.gov/alsj/a11/a11Hamilton.html

+[11]:https://www.reddit.com/r/pics/comments/2oyd1y/margaret_hamilton_with_her_code_lead_software/cmrswof

+[12]:http://qr.ae/RFEZLk

+[13]:http://qr.ae/RFEZUn

+[14]:https://www.reddit.com/r/pics/comments/2oyd1y/margaret_hamilton_with_her_code_lead_software/cmrv9u9

+[15]:https://www.flickr.com/photos/44451574@N00/5347112697

+[16]:http://cs.stanford.edu/~uno/taocp.html

+[17]:http://awards.acm.org/award_winners/knuth_1013846.cfm

+[18]:http://amturing.acm.org/award_winners/knuth_1013846.cfm

+[19]:http://www.nsf.gov/od/nms/recip_details.jsp?recip_id=198

+[20]:http://www.ieee.org/documents/von_neumann_rl.pdf

+[21]:http://www.computerhistory.org/fellowawards/hall/bios/Donald,Knuth/

+[22]:http://www.quora.com/Who-are-the-best-programmers-in-Silicon-Valley-and-why/answers/3063

+[23]:http://www.quora.com/Respected-Software-Engineers/Who-are-some-of-the-best-programmers-in-the-world/answer/Jaap-Weel

+[24]:http://qr.ae/RFE94x

+[25]:http://amturing.acm.org/photo/thompson_4588371.cfm

+[26]:https://www.youtube.com/watch?v=JoVQTPbD6UY

+[27]:https://www.bell-labs.com/usr/dmr/www/bintro.html

+[28]:http://doc.cat-v.org/bell_labs/utf-8_history

+[29]:http://c2.com/cgi/wiki?EdIsTheStandardTextEditor

+[30]:http://amturing.acm.org/award_winners/thompson_4588371.cfm

+[31]:http://www.computer.org/portal/web/awards/cp-thompson

+[32]:http://www.uspto.gov/about/nmti/recipients/1998.jsp

+[33]:http://www.computerhistory.org/fellowawards/hall/bios/Ken,Thompson/

+[34]:http://www.quora.com/Computer-Programming/Who-is-the-best-programmer-in-the-world-right-now/answer/Pete-Prokopowicz-1

+[35]:http://qr.ae/RFEWBY

+[36]:https://groups.google.com/forum/#!msg/net.unix-wizards/8twfRPM79u0/1xlglzrWrU0J

+[37]:http://www.emacswiki.org/emacs/RichardStallman

+[38]:https://www.gnu.org/gnu/thegnuproject.html

+[39]:http://www.emacswiki.org/emacs/FreeSoftwareFoundation

+[40]:http://awards.acm.org/award_winners/stallman_9380313.cfm

+[41]:https://w2.eff.org/awards/pioneer/1998.php

+[42]:http://www.quora.com/Respected-Software-Engineers/Who-are-some-of-the-best-programmers-in-the-world/answer/Greg-Naughton/comment/4146397

+[43]:http://qr.ae/RFEaib

+[44]:http://www.quora.com/Software-Engineering/Who-are-some-of-the-greatest-currently-active-software-architects-in-the-world/answer/Marko-Poutiainen

+[45]:http://qr.ae/RFEUqp

+[46]:https://www.flickr.com/photos/begley/2979906130

+[47]:http://www.taoyue.com/tutorials/pascal/history.html

+[48]:http://c2.com/cgi/wiki?AndersHejlsberg

+[49]:http://www.microsoft.com/about/technicalrecognition/anders-hejlsberg.aspx

+[50]:http://www.drdobbs.com/windows/dr-dobbs-excellence-in-programming-award/184404602

+[51]:http://qr.ae/RFEZrv

+[52]:http://www.quora.com/Software-Engineering/Who-are-some-of-the-greatest-currently-active-software-architects-in-the-world/answer/Stefan-Kiryazov

+[53]:https://www.flickr.com/photos/vonguard/4076389963/

+[54]:http://www.wizards-of-os.org/archiv/sprecher/a_c/doug_cutting.html

+[55]:http://hadoop.apache.org/

+[56]:https://www.linkedin.com/in/cutting

+[57]:http://www.quora.com/Respected-Software-Engineers/Who-are-some-of-the-best-programmers-in-the-world/answer/Shalin-Shekhar-Mangar/comment/2293071

+[58]:http://www.quora.com/Who-are-the-best-programmers-in-Silicon-Valley-and-why/answer/Amit-Nithianandan

+[59]:http://awards.acm.org/award_winners/ghemawat_1482280.cfm

+[60]:http://research.google.com/pubs/SanjayGhemawat.html

+[61]:http://www.quora.com/Google/Who-is-Sanjay-Ghemawat

+[62]:http://www8.nationalacademies.org/onpinews/newsitem.aspx?RecordID=02062009

+[63]:http://awards.acm.org/award_winners/ghemawat_1482280.cfm

+[64]:http://www.quora.com/Google/Who-is-Sanjay-Ghemawat/answer/Ahmet-Alp-Balkan

+[65]:http://research.google.com/people/jeff/index.html

+[66]:http://research.google.com/people/jeff/index.html

+[67]:http://www8.nationalacademies.org/onpinews/newsitem.aspx?RecordID=02062009

+[68]:http://news.cs.washington.edu/2012/10/10/uw-cse-ph-d-alum-jeff-dean-wins-2012-sigops-mark-weiser-award/

+[69]:http://awards.acm.org/award_winners/dean_2879385.cfm

+[70]:http://www.quora.com/Computer-Programming/Who-is-the-best-programmer-in-the-world-right-now/answer/Natu-Lauchande

+[71]:http://www.quora.com/Respected-Software-Engineers/Who-are-some-of-the-best-programmers-in-the-world/answer/Cosmin-Negruseri/comment/28399

+[72]:https://commons.wikimedia.org/wiki/File:LinuxCon_Europe_Linus_Torvalds_05.jpg

+[73]:http://www.linuxfoundation.org/about/staff#torvalds

+[74]:http://git-scm.com/book/en/Getting-Started-A-Short-History-of-Git

+[75]:https://w2.eff.org/awards/pioneer/1998.php

+[76]:http://www.bcs.org/content/ConWebDoc/14769

+[77]:http://www.zdnet.com/blog/open-source/linus-torvalds-wins-the-tech-equivalent-of-a-nobel-prize-the-millennium-technology-prize/10789

+[78]:http://www.computer.org/portal/web/pressroom/Linus-Torvalds-Named-Recipient-of-the-2014-IEEE-Computer-Society-Computer-Pioneer-Award

+[79]:http://www.computerhistory.org/fellowawards/hall/bios/Linus,Torvalds/

+[80]:http://www.internethalloffame.org/inductees/linus-torvalds

+[81]:http://qr.ae/RFEeeo

+[82]:http://qr.ae/RFEZLk

+[83]:http://www.quora.com/Software-Engineering/Who-are-some-of-the-greatest-currently-active-software-architects-in-the-world/answer/Alok-Tripathy-1

+[84]:https://www.flickr.com/photos/quakecon/9434713998

+[85]:http://doom.wikia.com/wiki/John_Carmack

+[86]:http://thegamershub.net/2012/04/gaming-gods-john-carmack/

+[87]:http://www.shamusyoung.com/twentysidedtale/?p=4759

+[88]:http://www.interactive.org/special_awards/details.asp?idSpecialAwards=6

+[89]:http://www.itworld.com/article/2951105/it-management/a-fly-named-for-bill-gates-and-9-other-unusual-honors-for-tech-s-elite.html#slide8

+[90]:http://www.gamechoiceawards.com/archive/lifetime.html

+[91]:http://qr.ae/RFEEgr

+[92]:http://www.itworld.com/answers/topic/software/question/whos-best-living-programmer#comment-424562

+[93]:http://www.quora.com/Respected-Software-Engineers/Who-are-some-of-the-best-programmers-in-the-world/answer/Greg-Naughton

+[94]:http://money.cnn.com/2003/08/21/commentary/game_over/column_gaming/

+[95]:http://dufoli.wordpress.com/2007/06/23/ammmmaaaazing-night/

+[96]:http://bellard.org/

+[97]:http://www.ioccc.org/winners.html#B

+[98]:http://www.oscon.com/oscon2011/public/schedule/detail/21161

+[99]:http://bellard.org/pi/pi2700e9/

+[100]:https://news.ycombinator.com/item?id=7850797

+[101]:http://www.quora.com/Respected-Software-Engineers/Who-are-some-of-the-best-programmers-in-the-world/answer/Erik-Frey/comment/1718701

+[102]:http://www.quora.com/Respected-Software-Engineers/Who-are-some-of-the-best-programmers-in-the-world/answer/Erik-Frey/comment/2454450

+[103]:http://qr.ae/RFEjhZ

+[104]:https://www.flickr.com/photos/craigmurphy/4325516497

+[105]:http://www.amazon.co.uk/gp/product/1935182471?ie=UTF8&tag=developetutor-21&linkCode=as2&camp=1634&creative=19450&creativeASIN=1935182471

+[106]:http://stackexchange.com/leagues/1/alltime/stackoverflow

+[107]:http://meta.stackexchange.com/a/9156

+[108]:http://meta.stackexchange.com/a/9138

+[109]:http://meta.stackexchange.com/a/9182

+[110]:https://www.flickr.com/photos/philipn/5326344032

+[111]:http://www.crunchbase.com/person/adam-d-angelo

+[112]:http://www.exeter.edu/documents/Exeter_Bulletin/fall_01/oncampus.html

+[113]:http://icpc.baylor.edu/community/results-2004

+[114]:https://www.topcoder.com/tc?module=Static&d1=pressroom&d2=pr_022205

+[115]:http://qr.ae/RFfOfe

+[116]:http://www.businessinsider.com/in-new-alleged-ims-mark-zuckerberg-talks-about-adam-dangelo-2012-9#ixzz369FcQoLB

+[117]:https://www.facebook.com/hackercup/photos/a.329665040399024.91563.133954286636768/553381194694073/?type=1

+[118]:http://stats.ioinformatics.org/people/1849

+[119]:http://googlepress.blogspot.com/2006/10/google-announces-winner-of-global-code_27.html

+[120]:http://community.topcoder.com/tc?module=SimpleStats&c=coder_achievements&d1=statistics&d2=coderAchievements&cr=10574855

+[121]:https://www.facebook.com/notes/facebook-hacker-cup/facebook-hacker-cup-finals/208549245827651

+[122]:https://www.facebook.com/hackercup/photos/a.329665040399024.91563.133954286636768/553381194694073/?type=1

+[123]:http://community.topcoder.com/tc?module=AlgoRank

+[124]:http://codeforces.com/ratings

+[125]:http://www.quora.com/Respected-Software-Engineers/Who-are-some-of-the-best-programmers-in-the-world/answer/Venkateswaran-Vicky/comment/1960855

+[126]:http://commons.wikimedia.org/wiki/File:Gennady_Korot.jpg

+[127]:http://stats.ioinformatics.org/people/804

+[128]:http://icpc.baylor.edu/regionals/finder/world-finals-2013/standings

+[129]:https://www.facebook.com/hackercup/posts/10152022955628845

+[130]:http://codeforces.com/ratings

+[131]:http://community.topcoder.com/tc?module=AlgoRank

+[132]:http://www.quora.com/Computer-Programming/Who-is-the-best-programmer-in-the-world-right-now/answer/Prateek-Joshi

+[133]:http://www.quora.com/Computer-Programming/Who-is-the-best-programmer-in-the-world-right-now/answer/Prateek-Joshi/comment/4720779

+[134]:http://www.quora.com/Computer-Programming/Who-is-the-best-programmer-in-the-world-right-now/answer/Prateek-Joshi/comment/4880549

+[135]:http://commons.wikimedia.org/wiki/File:Jielbeaumadier_richard_stallman_2010.jpg

\ No newline at end of file

diff --git a/published/20150914 Display Awesome Linux Logo With Basic Hardware Info Using screenfetch and linux_logo Tools.md b/published/201511/20150914 Display Awesome Linux Logo With Basic Hardware Info Using screenfetch and linux_logo Tools.md

similarity index 100%

rename from published/20150914 Display Awesome Linux Logo With Basic Hardware Info Using screenfetch and linux_logo Tools.md

rename to published/201511/20150914 Display Awesome Linux Logo With Basic Hardware Info Using screenfetch and linux_logo Tools.md

diff --git a/translated/tech/20150921 Configure PXE Server In Ubuntu 14.04.md b/published/201511/20150921 Configure PXE Server In Ubuntu 14.04.md

similarity index 70%

rename from translated/tech/20150921 Configure PXE Server In Ubuntu 14.04.md

rename to published/201511/20150921 Configure PXE Server In Ubuntu 14.04.md

index eab3fb5224..8689a180ce 100644

--- a/translated/tech/20150921 Configure PXE Server In Ubuntu 14.04.md

+++ b/published/201511/20150921 Configure PXE Server In Ubuntu 14.04.md

@@ -1,9 +1,9 @@

-

- Configure a PXE Server in Ubuntu 14.04

+Configure a PXE Server in Ubuntu 14.04

================================================================================

+

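-A PXE (Preboot Execution Environment) server lets users boot a Linux distribution from the network and install it on hundreds of PCs at the same time without needing a Linux ISO image. If your client computers have no CD/DVD or USB boot drive, or if you want to install many computers at once in a large enterprise, a PXE server can save you time and money.

+A PXE (Preboot Execution Environment) server lets users boot a Linux distribution from the network and, without needing a Linux ISO image, install it on hundreds of PCs at the same time. If your client computers have no CD/DVD or USB boot drive, or if you want to install many computers at once in a large enterprise, a PXE server can save you time and money.

In this article, we will show you how to configure a PXE server on Ubuntu 14.04.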

@@ -11,11 +11,11 @@ PXE(Preboot Execution Environment--预启动执行环境)服务器允许用

Before you begin, set up the PXE server to use a static IP. To use a static IP address on your system, edit the “/etc/network/interfaces” file.

-1. Open the “/etc/network/interfaces” file.

+Open the “/etc/network/interfaces” file.

sudo nano /etc/network/interfaces

- Make the following changes:

+Make the following changes:

# Loopback network interface

auto lo

@@ -43,23 +43,23 @@ DHCP,TFTP 和 NFS 是 PXE 服务器的重要组成部分。首先,需要更

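### Configure the DHCP service: ###

-DHCP stands for Dynamic Host Configuration Protocol, and it is mainly used to dynamically assign network configuration parameters, such as IP addresses for interfaces and services. In a PXE environment, the DHCP server lets clients request and automatically obtain an IP address to access the network.

+DHCP stands for Dynamic Host Configuration Protocol; it is mainly used to dynamically assign network configuration parameters, such as IP addresses for interfaces and services. In a PXE environment, the DHCP server lets clients request and automatically obtain an IP address to access the network.

-1. Edit the “/etc/default/dhcp3-server” file.

+1、Edit the “/etc/default/dhcp3-server” file.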

sudo nano /etc/default/dhcp3-server

- Make the following changes:

+Make the following changes:

INTERFACES="eth0"

Save (Ctrl + o) and exit (Ctrl + x) the file.

-2. Edit the “/etc/dhcp3/dhcpd.conf” file:

+2、Edit the “/etc/dhcp3/dhcpd.conf” file:

sudo nano /etc/dhcp/dhcpd.conf

- Make the following changes:

+Make the following changes:

default-lease-time 600;

max-lease-time 7200;

@@ -74,29 +74,29 @@ DHCP 代表动态主机配置协议(Dynamic Host Configuration Protocol),

Save the file and exit.

-3. Start the DHCP service.

+3、Start the DHCP service.

sudo /etc/init.d/isc-dhcp-server start

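### Configure the TFTP server: ###

-TFTP is a file transfer protocol, similar to FTP. It requires no user authentication and cannot list directories. The TFTP server always listens for PXE clients on the network. When it detects a PXE client on the network requesting the PXE server, it provides a network packet containing the boot menu.

+TFTP is a file transfer protocol similar to FTP, but it requires no user authentication and cannot list directories. The TFTP server always listens for requests from PXE clients on the network. When it detects a PXE client on the network requesting PXE service, it provides a network packet containing the boot menu.

-1. To configure TFTP, edit the “/etc/inetd.conf” file.

+1、To configure TFTP, edit the “/etc/inetd.conf” file.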

sudo nano /etc/inetd.conf

- Make the following changes:

+Make the following changes:

tftp dgram udp wait root /usr/sbin/in.tftpd /usr/sbin/in.tftpd -s /var/lib/tftpboot

- Save the file and exit.

+Save the file and exit.

-2. Edit the “/etc/default/tftpd-hpa” file.

+2、Edit the “/etc/default/tftpd-hpa” file.

sudo nano /etc/default/tftpd-hpa

- Make the following changes:

+Make the following changes:

TFTP_USERNAME="tftp"

TFTP_DIRECTORY="/var/lib/tftpboot"

@@ -105,14 +105,14 @@ TFTP 是一种文件传输协议,类似于 FTP。它不用进行用户认证

RUN_DAEMON="yes"

OPTIONS="-l -s /var/lib/tftpboot"

- Save the file and exit.

+Save the file and exit.

-3. Use `xinetd` to have the boot service start automatically at every system boot, and start the tftpd service.