Mirror of https://github.com/LCTT/TranslateProject.git, synced 2025-01-16 22:42:21 +08:00

Commit 9dd34fd9d1

@ -1,6 +1,6 @@

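10 Practical Linux Interview Questions and Answers About the Squid Proxy Server

10 Practical Interview Questions and Answers About the Squid Proxy Server in Linux

================================================================================

It is not only system and network administrators who hear the term "proxy server" from time to time; the rest of us hear it often too. Proxy servers have become part of corporate culture, and that culture takes time to build up. They are now also implemented in small schools and in the cafeterias of large multinational companies. Squid (which can also serve as a proxy) is one such application: it can be used as a proxy server, and it is also one of the more widely used tools of its kind.

It is not only system and network administrators who hear the term "proxy server" from time to time; the rest of us hear it often too. Proxy servers have become the norm in companies, and we run into them regularly. They now also show up in small schools and in the cafeterias of large multinational companies. Squid (often treated as a byword for proxy service) is one such application: not only can it be used as a proxy server, it is also one of the more widely used tools of its kind.

This article aims to strengthen your basic ability to handle proxy-server questions in an interview.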

@ -10,12 +10,13 @@

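### 1. What is a proxy server? What is it used for in computer networks? ###

> **Answer**: A proxy server is a physical machine or an application that acts as middleware between clients and resource providers or servers. Clients look for files, pages or data from the proxy server and the proxy server handles all the complex transactions between client and server so as to satisfy the requests the client generates.

Proxy servers are a backbone of the WWW (World Wide Web), and most of them are web proxies. A proxy server handles the complex communication between client and server. In addition it provides anonymity on the network which means your identity and browsing traces are safe. A proxy can be configured to decide which websites clients can see and which websites are blocked.

> **Answer**: A proxy server is a physical machine or an application that acts as middleware between clients and resource providers or servers. Clients request files, pages or data from the proxy server, and the proxy server handles all the complex transactions between client and server, thereby satisfying the requests the client generates.

Proxy servers are a backbone of the WWW (World Wide Web), and most of them are web proxies. A proxy server handles the complex communication between client and server. In addition, it provides anonymity on the network (LCTT note: the visitor's IP address, browser information and so on are hidden), which means your identity and browsing traces are protected. A proxy can be configured to decide which websites clients can see and which websites are blocked.

### 2. What is Squid? ###

> **Answer**: Squid is an application released under the GNU GPL that can act as a proxy server and also as a web caching daemon. Squid mainly supports protocols like HTTP and FTP but other protocols such as HTTPS, SSL and TLS are supported as well. Its web caching daemon makes browsing faster by caching web and DNS from frequently visited websites. Squid supports all the major platforms, including Linux, UNIX, Microsoft Windows and Apple's Mac.

> **Answer**: Squid is an application released under the GNU GPL that can act both as a proxy server and as a web caching daemon. Squid mainly supports protocols like HTTP and FTP, but other protocols such as HTTPS, SSL and TLS are supported as well. Its web caching daemon makes browsing faster by caching web and DNS data from frequently visited websites. Squid supports all the major platforms, including Linux, UNIX, Microsoft Windows and Apple's Mac.

### 3. What is Squid's default port? How do you change the port it listens on? ###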

@@ -66,17 +67,17 @@ f. Save the configuration file, exit, and restart the Squid service for the change to take effect.

# service squid restart
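Step f assumes the port has already been edited in squid.conf (Squid listens on port 3128 by default). The sketch below shows one way to script that edit. It is a minimal sketch, assuming GNU sed and a config in which the port is set by a plain `http_port 3128`-style line with no address or options; `set_squid_port` is a hypothetical helper, not part of Squid.

```bash
# set_squid_port CONF PORT
# Hypothetical helper: rewrites the port number on "http_port" lines of a
# squid.conf-style file. Assumes GNU sed (-i, -E) and lines of the simple
# form "http_port 3128" (no address prefix, no options before the port).
set_squid_port() {
  local conf="$1" port="$2"
  # Accept only a plain decimal port number between 1 and 65535.
  case "$port" in
    ''|*[!0-9]*) echo "invalid port: $port" >&2; return 1 ;;
  esac
  if [ "${#port}" -gt 5 ] || [ "$port" -lt 1 ] || [ "$port" -gt 65535 ]; then
    echo "invalid port: $port" >&2
    return 1
  fi
  [ -f "$conf" ] || { echo "no such file: $conf" >&2; return 1; }
  # Keep "http_port" and the whitespace after it; replace the digits.
  sed -i -E "s/^(http_port[[:space:]]+)[0-9]+/\1${port}/" "$conf"
}
```

For example, back up the file, run `set_squid_port /etc/squid/squid.conf 8080`, then `service squid restart`. The config path is an assumption; it differs between distributions and Squid versions.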

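### 5. What are media range limitation and partial download in Squid? ###

### 5. What are Media Range Limitation and partial download in Squid? ###

> **Answer**: Media Range Limitation is a special feature of Squid in which only the data actually required is fetched from the server rather than the whole file. It works well when users watch videos on streaming sites such as YouTube and Metacafe: they can click on the progress bar to jump to a point in the video, so the whole video does not have to be loaded, only the parts that are needed.

Squid's partial download feature works well for Windows updates, where the downloaded files can be paused in small packets. Because of this feature, a Windows machine downloading files can resume the download without worrying about data loss. Squid makes Media Range Limitation and partial download happen only after storing a copy of the complete file. In addition, when the user points to another page, Squid has to be specially configured in some way for the partially downloaded file not to be deleted and to stay in the cache.

Squid's partial download feature works well for cases like Windows Update, where files are downloaded as a series of small pieces and the download can be paused. Because of this, a Windows machine that is downloading files can pick up the download again without worrying about losing data. Squid's Media Range Limitation and partial download features only work after a complete copy of the file has been stored. In addition, when the user moves on to another page, the partially downloaded file is deleted and not kept in the cache unless Squid has been specially configured.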
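Under the hood, asking for part of a file is done with HTTP range requests: the client sends a header such as `Range: bytes=0-99` and the server returns only those bytes. As a minimal local sketch of what such a byte range selects (it works on a file, not over HTTP), assuming inclusive, zero-based offsets as in the `Range` header; `byte_range` is a hypothetical helper:

```bash
# byte_range FILE FIRST LAST
# Prints bytes FIRST..LAST (inclusive, zero-based) of FILE, mirroring what an
# HTTP "Range: bytes=FIRST-LAST" request asks the server for.
byte_range() {
  local file="$1" first="$2" last="$3"
  if [ "$last" -lt "$first" ]; then
    echo "invalid range: $first-$last" >&2
    return 1
  fi
  # tail -c +N starts at byte N (1-based), so add 1 to the zero-based offset;
  # head -c then keeps exactly the number of bytes in the inclusive range.
  tail -c +"$((first + 1))" "$file" | head -c "$((last - first + 1))"
}
```

For example, `byte_range movie.mp4 0 1023` prints the first 1 KiB of a local file, while `curl -r 0-1023 URL` sends the equivalent `Range` request to a web server.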

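### 6. What is reverse proxying in Squid? ###

> **Answer**: Reverse proxy is a feature of Squid that is used to speed up browsing for end users. The origin server, abbreviated 'RS', holds all the resources, while the proxy server is called 'PS'. The client looks for the data provided by RS, and the specified data and its copies are stored from RS onto PS the first time after multiple configurations. That way, data requested from PS each time is equivalent to data obtained from the origin server. This reduces network congestion, lowers CPU usage and reduces the use of network resources, thereby relieving the load on the actual origin server. But RS cannot count total traffic because PS takes over part of the origin server's work. 'X-Forwarded-For HTTP' can record the original IP address of clients connecting to RS through an HTTP proxy or load balancer.

> **Answer**: Reverse proxy is a feature of Squid that is used to speed up browsing for end users. Below, 'RS' denotes the origin server that holds the resources, and the proxy server is called 'PS'. On the first access, PS fetches the data provided by RS and stores a copy of it on PS for a configured period of time. After that, every request served from PS is equivalent to fetching the data from the origin server. This reduces network congestion, lowers CPU usage and reduces the use of network resources, thereby relieving the load on the actual origin server. However, RS cannot count total traffic, because PS handles part of the origin server's work. The 'X-Forwarded-For' HTTP header can be used to record the original IP address of clients that connect to RS through an HTTP proxy or a load balancer.

Strictly speaking, it is possible to use a single Squid server as both a forward proxy and a reverse proxy at the same time.

Technically, it is possible to use a single Squid server as both a forward proxy and a reverse proxy at the same time.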
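On the origin server (RS), the original client address can be read from the `X-Forwarded-For` header. Its value is a comma-separated list to which each proxy appends the address it received the request from, so the leftmost entry is the original client. A minimal sketch, assuming the header value has already been extracted from the request (`xff_client` is a hypothetical helper); clients can forge this header, so only trust entries added by your own proxies:

```bash
# xff_client "HEADER-VALUE"
# Prints the leftmost address of an X-Forwarded-For value such as
# "203.0.113.7, 198.51.100.2", i.e. the client as seen by the first proxy.
xff_client() {
  local value="$1" first
  first="${value%%,*}"                         # drop everything after the first comma
  first="${first#"${first%%[![:space:]]*}"}"   # trim leading whitespace
  first="${first%"${first##*[![:space:]]}"}"   # trim trailing whitespace
  printf '%s\n' "$first"
}
```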

### 7. Since Squid can act as a web caching daemon, can its cache be deleted? How? ###

@@ -91,7 +92,7 @@ b. Create the swap directories.

# squid -z
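`squid -z` rebuilds the cache (swap) directories once the old contents are gone. A minimal sketch of the emptying step, assuming the default cache directory `/var/spool/squid` and that Squid has been stopped first; `clear_squid_cache` is a hypothetical helper. Because it deletes everything under the directory, it refuses an empty path or `/`:

```bash
# clear_squid_cache [DIR]
# Deletes everything inside the cache directory (default /var/spool/squid)
# while keeping the directory itself. Run it only while Squid is stopped.
clear_squid_cache() {
  local dir="${1-/var/spool/squid}"   # "-" (not ":-") so an empty argument stays empty
  if [ -z "$dir" ] || [ "$dir" = "/" ]; then
    echo "refusing to clear '$dir'" >&2
    return 1
  fi
  [ -d "$dir" ] || { echo "not a directory: $dir" >&2; return 1; }
  # -mindepth 1 keeps DIR itself; -delete removes entries depth-first.
  find "$dir" -mindepth 1 -delete
}
```

A typical sequence would be `service squid stop`, `clear_squid_cache`, `squid -z`, then `service squid start`.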

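### 8. You have a client machine nearby while you are at work; if you want to restrict the hours during which children can access it, how would you set up that scenario? ###

### 8. You have a machine at work that can access the proxy server. If you want to restrict the hours during which your child can use it, how would you set up that scenario? ###

Set the allowed access time to the three hours from 4 PM to 7 PM, Monday through Friday.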
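In squid.conf this scenario is usually expressed with a `time` ACL combined with a `src` ACL for the child's machine. In a `time` ACL the day letters are S M T W H F A (Sunday to Saturday), so Monday to Friday is `MTWHF`. A minimal sketch that prints such rules, assuming the child's machine has the hypothetical address 192.168.1.50; `kid_hours_rules` is a hypothetical helper. Squid applies the first `http_access` line that matches, so the rules must sit above any broader allow or deny line:

```bash
# kid_hours_rules [CLIENT_IP]
# Prints squid.conf lines that let CLIENT_IP browse only Monday-Friday,
# 16:00-19:00, and deny it at all other times.
kid_hours_rules() {
  local client="${1:-192.168.1.50}"   # hypothetical address of the child's PC
  cat <<EOF
acl kid_pc src ${client}
acl kid_hours time MTWHF 16:00-19:00
http_access allow kid_pc kid_hours
http_access deny kid_pc
EOF
}
```

Paste the output above the final `http_access deny all` line of squid.conf, then restart Squid.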

@@ -114,9 +115,9 @@ c. Restart the Squid service.

### 10. Where does Squid store its cache? ###

> **Answer**: Squid stores its cache in a special directory located at '/var/spool/squid'.

> **Answer**: Squid stores its cache in a dedicated directory, '/var/spool/squid' by default.
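The actual location comes from the `cache_dir` directive in squid.conf, for example `cache_dir ufs /var/spool/squid 100 16 256` (storage type, directory, size in MB, then the number of first- and second-level subdirectories), so it can differ from the default. A minimal sketch that reads it, assuming the hypothetical config path /etc/squid/squid.conf; `squid_cache_dirs` is a hypothetical helper that reports and returns 1 when no `cache_dir` line is active:

```bash
# squid_cache_dirs [CONF]
# Prints the directory of every active cache_dir line in CONF.
squid_cache_dirs() {
  local conf="${1:-/etc/squid/squid.conf}" dirs
  [ -r "$conf" ] || { echo "cannot read $conf" >&2; return 1; }
  # In "cache_dir TYPE DIR MB L1 L2", field 3 is the directory.
  # Commented-out lines start with "#", so their first field never matches.
  dirs=$(awk '$1 == "cache_dir" { print $3 }' "$conf")
  if [ -z "$dirs" ]; then
    echo "no active cache_dir in $conf" >&2
    return 1
  fi
  printf '%s\n' "$dirs"
}
```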

That's all for now. I'll be back soon with other interesting content; until then, stay tuned to Tecmint. Don't forget to send us your feedback and comments.

That's all for now. I'll be back soon with other interesting content.

--------------------------------------------------------------------------------

@@ -124,7 +125,7 @@ via: http://www.tecmint.com/squid-interview-questions/

Author: [Avishek Kumar][a]

Translator: [ZTinoZ](https://github.com/ZTinoZ)

Proofreader: [proofreader ID](https://github.com/校对者ID)

Proofreader: [wxy](https://github.com/wxy)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](http://linux.cn/)

@ -1,12 +1,12 @@

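Accessing Linux Command Cheat Sheets from the Command Line

================================================================================

The power of the Linux command line lies in its flexibility and versatility: each Linux command comes with its own share of command-line options and parameters. Mix and match them, and you can even chain different commands together with pipes and redirection. In theory, a handful of basic commands can produce hundreds of use cases. Even administrators with years of experience find it hard to use them all. That is when a command-line cheat sheet comes to the rescue.

The power of the Linux command line lies in its flexibility and versatility: each Linux command comes with its own dedicated command-line options and parameters. Mix and match these commands, and you can even chain different commands together with pipes and redirection. In theory, a handful of basic commands can produce hundreds of use cases. Even administrators with years of experience find it hard to use them all. That is when a command-line cheat sheet comes to the rescue.

I know that the online manual pages are still our good friends, but we want to make things more efficient and purposeful with quick reference cards at our disposal. The ultimate cheat sheet might hang proudly in your office, sit discreetly on your hard drive as a PDF, or even serve as your desktop wallpaper.

I know that the man pages (man) are still our good friends, but we want to make things more efficient and purposeful with quick reference cards at our disposal. The ultimate cheat sheet might hang proudly in your office, sit discreetly on your hard drive as a PDF, or even serve as your desktop wallpaper.

As one option, you can also access your favorite command-line cheat sheets through another command: [cheat][2]. It is a command-line tool that lets you read, create or update cheat sheets from the command line. The idea is simple, but cheat has proven to be very useful. This tutorial covers how to use the cheat command on Linux. You won't need a cheat sheet for cheat itself; it really is that simple.

As an alternative, you can also access your favorite command-line cheat sheets through another command: [cheat][2]. It is a command-line tool that lets you read, create or update cheat sheets from the command line. The idea is simple, but cheat has proven to be very useful. This tutorial covers how to use the cheat command on Linux. You won't need a cheat sheet for cheat itself; it really is that simple.

### Installing Cheat on Linux ###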

@ -59,9 +59,9 @@ cheat命令一个很酷的事是,它自带有超过90个的常用Linux命令

|

||||

|

||||

$ cheat -s <keyword>

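In many cases, a cheat sheet suits decent folks but is of little help to certain others. To make the built-in cheat sheets more personal, cheat also lets you create new cheat sheets or update existing ones. When you do so, cheat also keeps a copy of the cheat sheet in your local ~/.cheat directory.

In many cases, a cheat sheet works for some people but is of little help to others. To make the built-in cheat sheets more personal, cheat also lets you create new cheat sheets or update existing ones. When you do so, cheat also keeps a copy of the cheat sheet in your local ~/.cheat directory.

To use cheat's editing feature, first make sure the EDITOR environment variable is set to the full path of your default editor. Then copy the (non-editable) built-in cheat sheets to the ~/.cheat directory. You can find where the built-in cheat sheets are located with the following command. Once you have found them, simply copy them to ~/.cheat.

To use cheat's editing feature, first make sure the EDITOR environment variable is set to the full path of your default editor. Then copy the (non-editable) built-in cheat sheets to the ~/.cheat directory. You can find where the built-in cheat sheets are located with the following command. Once you have found them, simply copy them to ~/.cheat.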

$ cheat -d
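Once `cheat -d` has shown where the built-in sheets live, the copy step can be scripted. A minimal sketch, assuming the sheets are plain files named after the command (for example `tar`) and that `~/.cheat` is the personal directory described above; `copy_cheatsheet` is a hypothetical helper:

```bash
# copy_cheatsheet SRC_DIR NAME [DEST_DIR]
# Copies the built-in sheet NAME from SRC_DIR into DEST_DIR (default
# ~/.cheat), creating DEST_DIR if needed and never overwriting a sheet
# you have already customized.
copy_cheatsheet() {
  local src_dir="$1" name="$2" dest_dir="${3:-$HOME/.cheat}"
  [ -f "$src_dir/$name" ] || { echo "no sheet '$name' in $src_dir" >&2; return 1; }
  mkdir -p "$dest_dir"
  if [ -e "$dest_dir/$name" ]; then
    echo "$dest_dir/$name already exists; leaving it alone" >&2
    return 0
  fi
  cp "$src_dir/$name" "$dest_dir/$name"
}
```

For example, `export EDITOR=/usr/bin/vim` (any full path to your editor), then `copy_cheatsheet /dir/printed/by/cheat-d tar`, where the source directory is a placeholder for whatever `cheat -d` reports on your system.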

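@@ -85,7 +85,7 @@ via: http://xmodulo.com/2014/07/access-linux-command-cheat-sheets-command-line.h

Author: [Dan Nanni][a]

Translator: [GOLinux](https://github.com/GOLinux)

Proofreader: [proofreader ID](https://github.com/校对者ID)

Proofreader: [wxy](https://github.com/wxy)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](http://linux.cn/)

@@ -8,12 +8,13 @@

### Adding Window Buttons ###

For some unknown reason, the GNOME developers decided to snub the standard window buttons (close, minimize, maximize) in favor of windows with only a single close button. I miss the maximize button (although you can simply drag a window to the top of the screen to maximize it), however you can also minimize or maximize by right-clicking the title bar and choosing minimize or maximize. This change only adds steps, so the missing minimize button is truly baffling. Fortunately, there is a simple fix for this problem; here is how:

For some unknown reason, the GNOME developers decided to snub the standard window buttons (close, minimize, maximize) in favor of windows with only a single close button. I miss the maximize button (although you can simply drag a window to the top of the screen to maximize it), and you can also minimize or maximize by right-clicking the title bar and choosing minimize or maximize. This change only adds steps, so the missing minimize button is truly baffling. Fortunately, there is a simple fix for this problem; here is how: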

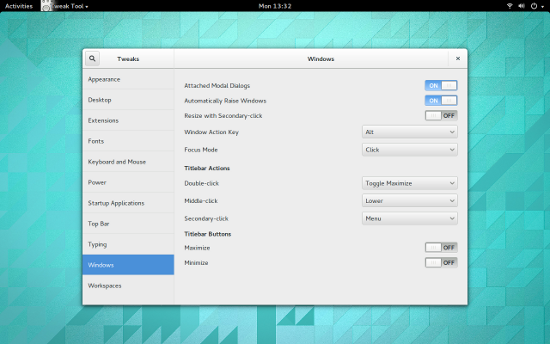

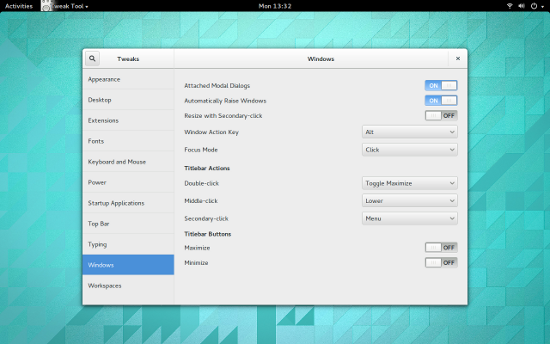

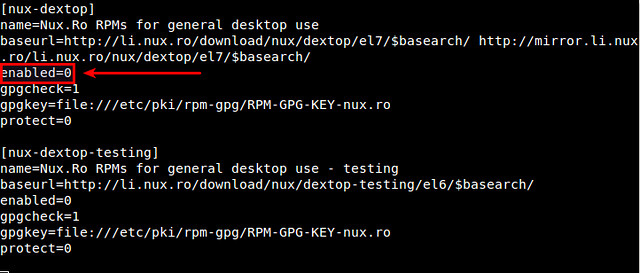

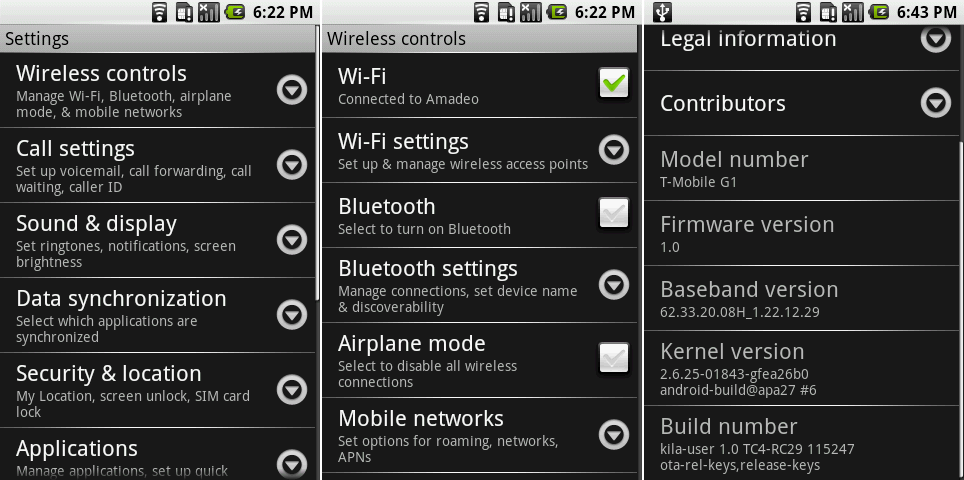

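By default, you should have the GNOME tweak tool installed. With it, you can turn on the maximize or minimize buttons (Figure 1).

By default, you should have the GNOME Tweak Tool installed. With it, you can turn on the maximize or minimize buttons (Figure 1).

Figure 1: Adding the minimize button back to GNOME 3 windows

<center>

*Figure 1: Adding the minimize button back to GNOME 3 windows*</center>

Once it is added, you will see the minimize button to the left of the close button, ready to serve you. Your windows are now easier to manage.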
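The same change can also be made from a terminal with `gsettings`. This is a minimal sketch, assuming your GNOME version reads the `button-layout` key of the `org.gnome.desktop.wm.preferences` schema (some GNOME 3 releases used an override key instead, so check which key Tweak Tool changes on your system). The value lists the left-side buttons, a colon, then the right-side buttons; `button_layout` is a hypothetical helper:

```bash
# button_layout BUTTON...
# Builds a right-aligned layout string such as ":minimize,maximize,close".
button_layout() {
  local IFS=,          # "$*" joins its arguments with the first IFS character
  printf ':%s\n' "$*"
}
```

Usage: `gsettings set org.gnome.desktop.wm.preferences button-layout "$(button_layout minimize maximize close)"`.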

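@@ -27,36 +28,39 @@ Figure 1: Adding the minimize button back to GNOME 3 windows

### Adding Extensions ###

One of the best features of GNOME 3 is shell extensions, which bring all sorts of useful features to GNOME. There is no need to install shell extensions from your package manager. You can visit the [GNOME Shell Extensions][2] site, search for the extension you want to add, click its listing, click the On button, and the extension is installed; or you can add them from the GNOME Tweak Tool (you will find more extensions available on the website).

One of the best features of GNOME 3 is shell extensions, which bring all kinds of useful features to GNOME. There is no need to install shell extensions from your package manager. You can visit the [GNOME Shell Extensions][2] site, search for the extension you want to add, click its listing, click the On button, and the extension is installed; or you can add them from the GNOME Tweak Tool (you will find more extensions available on the website).

Note: You may need to allow extension installation in your browser. If so, you will see a warning the first time you visit the GNOME Shell Extensions site. When prompted, just click Allow.

One of the more impressive (and handy extensions) is [Dash to Dock][3].

One of the more impressive (and handy) extensions is [Dash to Dock][3].

This extension moves the Dash out of the application overview and turns it into a fairly standard dock (Figure 2).

Figure 2: Dash to Dock adds a dock to GNOME 3.

<center>

*Figure 2: Dash to Dock adds a dock to GNOME 3*</center>

When you add applications to the Dash, they are also added to Dash to Dock. You can also reach the application overview by clicking the six-dot icon at the bottom of the dock.

There are plenty of other extensions focused on making GNOME 3 a more efficient desktop; among the better ones are the following:

There are plenty of other extensions dedicated to making GNOME 3 a more efficient desktop; among the good ones are the following:

- [Recent Items][4]: adds a drop-down menu of recently used items to the panel.

- [Search Firefox Bookmarks Provider][5]: search (and launch) bookmarks from the overview.

- [Firefox Bookmarks Search][5]: search (and launch) bookmarks from the overview.

- [Jump Lists][6]: adds a jump-list pop-up menu to Dash icons (it lets you quickly open new documents associated with an application, and more).

- [Todo list][7]: adds a drop-down list to the panel that lets you add items to it.

- [Web Search Dialog][8]: lets you quickly search the web by pressing Ctrl+Space and typing a text string (results are shown in a new browser tab).

- [Web Search Box][8]: lets you quickly search the web by pressing Ctrl+Space and typing a text string (results are shown in a new browser tab).

### Adding a Full Dock ###

If Dash to Dock is still too limited (you want a notification area, and more), then I recommend one of my favorite docks, [Cairo Dock][9] (Figure 3).

If Dash to Dock is still too limited for you (you want a "notification area", and more), then I recommend one of my favorite docks, [Cairo Dock][9] (Figure 3).

Figure 3: Cairo Dock at the ready

<center>

With Cairo Dock added to GNOME 3, your experience will improve dramatically. Install this excellent dock from your distribution's package manager.

*Figure 3: Cairo Dock at the ready*</center>

There's no need to see GNOME 3 as an inefficient, user-unfriendly desktop. With a little tweaking, GNOME 3 can be as powerful and user-friendly as any other desktop available.

Once you add Cairo Dock to GNOME 3, your experience will improve dramatically. Install this excellent dock from your distribution's package manager.

Don't think of GNOME 3 as an inefficient, user-unfriendly desktop. With a little tweaking, GNOME 3 can be as powerful and user-friendly as any other desktop available.

--------------------------------------------------------------------------------

@@ -64,7 +68,7 @@ via: http://www.linux.com/learn/tutorials/781916-easy-steps-to-make-gnome-3-more

Author: [Jack Wallen][a]

Translator: [GOLinux](https://github.com/GOLinux)

Proofreader: [proofreader ID](https://github.com/校对者ID)

Proofreader: [wxy](https://github.com/wxy)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](http://linux.cn/)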

@ -1,4 +1,4 @@

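When Will Linux Be Perfect

When Will Linux Be Perfect?

================================================================================

A few days ago my colleague and partner in crime, Ken Starks, published [an article][1] on FOSS Force about his favorite thing to grumble about: things that don't work properly on Linux. This time his complaint was about font problems he ran into using KDE on Mint. This is nothing new for Ken. In the past he has written articles about bugs in various Linux distributions that never get seriously fixed. His view is that these "little problems," left unfixed release after release, bear most of the blame for the Linux desktop's failure to win over the masses.

@@ -14,21 +14,21 @@

### It Wasn't Always Like This ###

Back in 2002, when I first installed and used GNU/Linux, I was stuck with dial-up like most Americans, because broadband hadn't yet reached the small place where I lived. At my local Best Buy I paid about $70 for a shrink-wrapped copy of Mandrake 9.0 Powerpack; at the time the store sold both Mandrake and Red Hat, and it is still in the desktop business today.

Back in 2002, when I first installed and used GNU/Linux, I was stuck with dial-up like most Americans, because broadband hadn't yet reached the small place where I lived. At my local Best Buy I paid about $70 for a shrink-wrapped copy of Mandrake 9.0 Powerpack; at the time the store sold both Mandrake and Red Hat, and it is still in the desktop PC business today.

In those dinosaur days, Mandrake was considered the best of the easy-to-use Linux distributions. It was simple to install (some said simpler than Windows), and its built-in partitioning tool made dividing up a disk as easy as slicing apple pie. In fact, though, Linux veterans often openly mocked Mandrake, implying that an easy-to-use Linux wasn't real Linux.

But I loved it; it felt like arriving in a whole new world. No more worrying about Windows' blue screen of death and its near-daily crashes. Unfortunately, many of the peripherals that "just worked" under Windows went away along with it.

The first thing I had to do after installing Mandrake was take my little white box to Michelle at [Dragonware Computers][2] and have its cheap winmodem replaced with a hardware modem. Granted, a hardware modem meant a more responsive computer, but the computer shop was 40 miles away, which wasn't very convenient, and the cost put some pressure on me.

The first thing I had to do after installing Mandrake was take my little white box to Michelle at [Dragonware Computers][2] and have its cheap winmodem replaced with a hardware modem. Granted, a hardware modem meant a more responsive computer, but the computer shop was 40 miles away, which wasn't very convenient, and the cost was a bit of a strain for me.

But I didn't mind. I was no fan of Microsoft, and using a "different" operating system made me feel like a computer genius.

Printers were a hassle too, but on Mandrake that problem was manageable, unlike most other distributions, which required command-line work to sort it out. Mandrake offered a gorgeous graphical interface for setting up printers, if you were lucky enough to own a printer that worked under Linux. Many, not most, didn't.

Printers were a hassle too, but on Mandrake that problem was manageable, unlike most other distributions, which required command-line work to sort it out. Mandrake offered a gorgeous graphical interface for setting up printers, if you were lucky enough to own a printer that worked under Linux. Many printers, if not most, didn't.

My Lexmark, still under warranty and loaded with more frivolous extras under Windows than other printers, wasn't supported on Linux by its manufacturer, but I found a reverse-engineered open-source driver that more or less worked. It printed web pages fine from the Mozilla browser, but anything printed from Star Office came out in a tiny font crammed into the upper-right corner of the page. The printer also made a loud mechanical racket that reminded me of the noise a car's transmission makes just before it gives out.

The workaround for the Star Office problem was to save everything as a text file and print it from a text editor. And that noise that sounded like the printer was in self-destruct mode? My solution was to print as little as possible.

The workaround for the Star Office problem was to save everything as a text file and print it from a text editor. And that noise that sounded like the printer was in self-destruct mode? My solution was to print as little as possible.

### More Problems, Too Many for Me to Remember ###

@@ -36,12 +36,13 @@ The workaround for the Star Office problem was to save all the text to a text file, then

Oh, and I also had a parallel-port scanner, bought two weeks before I moved to Linux, that was basically a brick afterward because there was no Linux driver for it.

My point is that none of this mattered much back then. Most of us were used to editing configuration files and the like, even on "IBM-compatible" computers running Microsoft products. Like most users of that era, I had started out on command-line DOS machines, where printers had to be set up separately for each program and writing a simple autoexec.bat was a required skill.

My point is that none of this mattered much back then. Most of us were used to editing configuration files and the like, even on "IBM-compatible" computers running Microsoft products. Like most users of that era, I had started out on command-line DOS machines, where printers had to be set up separately for each program and writing a simple autoexec.bat was an essential skill.

Linux is like a 1966 "Goat"

<center></center>

Being able to tinker with an operating system's internal configuration was a simple part of owning a computer. Most of us who used computers were either geeks or wannabe geeks. We were proud of being able to adjust our computers to run the way we wanted. We were the high-tech version of that era's good ol' boys, who spent Saturday afternoons under a shade tree modifying the exhaust pipes, air intakes, carburetors and so on of their muscle cars.

<center>Linux is like a 1966 "Goat"</center>

Back then, being able to tinker with an operating system's internal configuration was a simple part of owning a computer. Most of us who used computers were either geeks or wannabe geeks. We were proud of being able to adjust our computers to run the way we wanted. We were the high-tech version of that era's good ol' boys, who spent Saturday afternoons under a shade tree modifying the exhaust pipes, air intakes, carburetors and so on of their muscle cars.

### But That's Not How People Use Computers Now ###

@@ -59,7 +60,7 @@ via: http://fossforce.com/2014/08/when-linux-was-perfect-enough/

Author: Christine Hall

Translator: [zpl1025](https://github.com/zpl1025)

Proofreader: [proofreader ID](https://github.com/校对者ID)

Proofreader: [wxy](https://github.com/wxy)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](http://linux.cn/)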

@ -1,20 +1,17 @@

Imaging and Cloning Hard Drives with Clonezilla

================================================================================

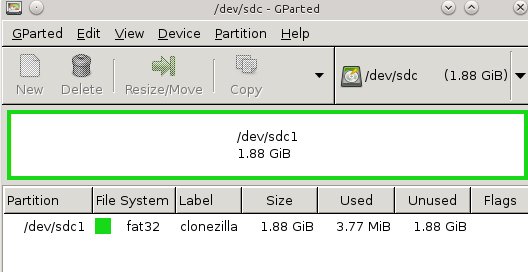

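Figure 1: Creating a partition for Clonezilla on a USB stick

Clonezilla is a partition and disk cloning program for Linux, Free-Net-OpenBSD, Mac OS X, Windows and Minix. It supports all the major filesystems, including EXT, NTFS, FAT, XFS, JFS and Btrfs, LVM2, and VMware's enterprise clustered filesystems VMFS3 and VMFS5. Clonezilla supports 32- and 64-bit systems, both legacy BIOS and UEFI BIOS, and both MBR and GPT partition tables. It is a good tool for making a complete backup of a Windows system and all the applications installed on it, and I like to use it to back up Linux test systems so that I can restore them quickly after breaking them with crazy experiments.

Clonezilla is a partition and disk cloning program for Linux, Free-, Net- and OpenBSD, Mac OS X, Windows and Minix. It supports all the major filesystems, including EXT, NTFS, FAT, XFS, JFS and Btrfs, LVM2, and VMware's enterprise clustered filesystems VMFS3 and VMFS5. Clonezilla supports 32- and 64-bit systems, both legacy BIOS and UEFI BIOS, and both MBR and GPT partition tables. It is a good tool for making a complete backup of a Windows system and all the applications installed on it, and I like to use it to back up Linux test systems so that I can restore them quickly after breaking them with crazy experiments.

Clonezilla can also back up unsupported filesystems with the dd command, which copies blocks rather than files and therefore doesn't care about the filesystem. Put simply, Clonezilla can copy anything. (A quick note on blocks: a disk sector is the smallest addressable storage unit on a disk, while a block is a logical data structure made up of one or more sectors.)

Clonezilla can also back up unsupported filesystems with the dd command, which copies blocks rather than files and therefore doesn't need to understand the filesystem. So, put simply, Clonezilla can copy anything. (A quick note on blocks: a disk sector is the smallest addressable storage unit on a disk, while a block is a logical data structure made up of one or more sectors.)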
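To see what block-level copying means in practice, here is a minimal sketch that uses GNU `dd` on ordinary files, so it is safe to try. On real hardware the source and destination would be device nodes such as /dev/sdX (a placeholder), and writing to the wrong one destroys its data. `block_copy` is a hypothetical helper, and the 4 MiB default block size is just a common choice, not something Clonezilla prescribes:

```bash
# block_copy SRC DEST [BLOCK_SIZE]
# Copies SRC to DEST block by block with GNU dd, without looking at any
# filesystem on it, then checks that the two are byte-for-byte identical.
block_copy() {
  local src="$1" dest="$2" bs="${3:-4M}"
  dd if="$src" of="$dest" bs="$bs" conv=fsync status=none || return 1
  cmp -s "$src" "$dest"
}
```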

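Clonezilla comes in two editions: Clonezilla Live and Clonezilla Server Edition (SE). Clonezilla Live is first-rate for cloning a single computer to a local storage device or a network share. Clonezilla SE suits larger deployments, for quickly cloning many PCs across a whole network at once. Clonezilla SE is an amazing piece of software that we will discuss in the future. Today we will create a Clonezilla Live USB stick, clone a system, and then restore it.

Clonezilla comes in two editions: Clonezilla Live and Clonezilla Server Edition (SE). Clonezilla Live is first-rate for cloning a single computer to a local storage device or a network share. Clonezilla SE suits larger deployments, for quickly cloning many PCs across a whole network at once. Clonezilla SE is an amazing piece of software that we will discuss in the future. Today we will create a Clonezilla Live USB stick, clone a system, and then restore it.

### Clonezilla and Tuxboot ###

When you visit the download page you will see the [stable and alternative stable releases][1]. There are also testing releases, which I recommend if you are interested in helping to improve Clonezilla. The stable release is based on Debian and contains no non-free software. The alternative stable release is based on Ubuntu, includes some non-free firmware, and supports UEFI Secure Boot.

After you [download Clonezilla][2], install [Tuxboot][3] to copy Clonezilla to a USB stick. Tuxboot is a modified version of Unetbootin that supports Clonezilla; you can't use Unetbootin, because it doesn't work. Installing Tuxboot is a bit of a headache, but Ubuntu users can install it conveniently from a Personal Package Archive (PPA):

After you [download Clonezilla][2], install [Tuxboot][3] to copy Clonezilla to a USB stick. Tuxboot is a modified version of Unetbootin that supports Clonezilla; you can't use Unetbootin, because it doesn't work with Clonezilla. Installing Tuxboot is a bit of a headache, but Ubuntu users can install it conveniently from a Personal Package Archive (PPA):

$ sudo apt-add-repository ppa:thomas.tsai/ubuntu-tuxboot

$ sudo apt-get update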

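@@ -22,18 +19,24 @@ Clonezilla comes in two editions: Clonezilla Live and Clonezilla Server Edition (SE

If you're not running Ubuntu and your distribution doesn't include a packaged version of Tuxboot, [download the source tarball][4] and follow the instructions in the README.txt file to compile and install it.

Once Tuxboot is installed, you can use it to create your nifty bootable Clonezilla USB stick. First, create a FAT 32 partition of at least 200MB; Figure 1 (above) shows partitioning with GParted. I like to use labels, like "Clonezilla", so I know what it is. The example shows a 2GB stick formatted as a single partition.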

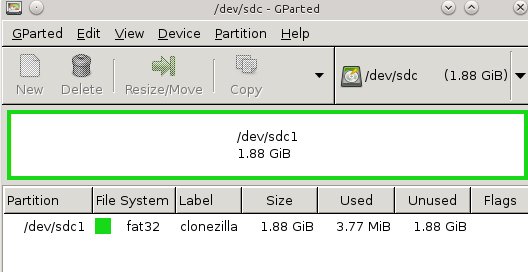

Then fire up Tuxboot (figure 2). Check "Pre-downloaded" and click the button with the ellipsis to select your Clonezilla file. It should find your USB stick automatically, and you should check the partition number to make sure it found the right one. In my example that is /dev/sdd1. Click OK, and when it's finished click Exit. It asks you if you want to reboot now, but don't worry because it won't. Now you have a nice portable Clonezilla USB stick you can use almost anywhere.

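Then start Tuxboot (Figure 2). Check "Pre-downloaded" and click the button with the ellipsis to select your Clonezilla file. It finds your USB stick automatically, and you should check the partition number to make sure it found the right one; in my example it is /dev/sdd1. Click OK, and when it finishes, click Exit. It asks whether you want to reboot; don't worry, because it won't. Now you have a nifty portable Clonezilla USB stick that you can use almost anywhere.

<center></center>

Figure 2: Starting Tuxboot

<center>*Figure 1: Creating a partition for Clonezilla on a USB stick*</center>

Once Tuxboot is installed, you can use it to create your nifty bootable Clonezilla USB stick. First, create a FAT 32 partition of at least 200MB; Figure 1 (the image above) shows partitioning with GParted. I like to use a label such as "Clonezilla" so I know what it is. The example shows a 2GB stick formatted as a single partition.

Then start Tuxboot (Figure 2). Check "Pre-downloaded" and click the button with the ellipsis to select your Clonezilla file. It finds your USB stick automatically, and you should check the partition number to make sure it found the right one; in my example it is /dev/sdd1. Click OK, and when it finishes, click Exit. It asks whether you want to reboot; don't worry, you don't need to reboot now. Now you have a nifty portable Clonezilla USB stick that you can use almost anywhere.

<center></center>

<center>*Figure 2: Starting Tuxboot*</center>

### Creating a Disk Image ###

Boot the Clonezilla USB stick on the computer you want to back up; the first thing you'll see is the usual boot menu. Boot the default entry. You'll be asked which language and keyboard to use, and when you reach the Start Clonezilla menu, choose Start Clonezilla. In the next menu choose device-image, then go on to the next screen.

This screen is a bit confusing, with options such as local_dev, ssh_server, samba_server and nfs_server. This is where you choose where to copy the backup image; the target partition or drive must be as large as, or larger than, the volume you are copying. If you choose local_dev, you need a local partition large enough to store your image. Attaching a USB hard drive is a nice, fast and easy option. If you choose any of the server options, you need a wired connection to the server, and you must supply its IP address and log in. I'll use a local partition, which means choosing local_dev.

This screen is a bit confusing, with options such as local_dev, ssh_server, samba_server and nfs_server. This is where you choose where to copy the backup image; the target partition or drive must be as large as, or larger than, the volume you are copying. If you choose local_dev, you need a local partition large enough to store your image. An attached USB hard drive is a nice, fast and easy option. If you choose any of the server options, you need to be able to reach the server, supply its IP address and log in. I'll use a local partition, which means choosing local_dev.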
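Before choosing local_dev, it is worth checking that the target really has room. A minimal sketch, assuming the target partition is already mounted (the mount point is a placeholder) and that you know the source size in bytes, for instance from `blockdev --getsize64 /dev/sdX` run as root; `has_room` is a hypothetical helper that applies the conservative "at least as large" rule above:

```bash
# has_room MOUNT_POINT NEEDED_BYTES
# Succeeds if the filesystem holding MOUNT_POINT has at least NEEDED_BYTES free.
has_room() {
  local mnt="$1" needed="$2" avail_kb
  # df -P prints one POSIX-format line per filesystem; with -k, column 4 is
  # the available space in 1024-byte units.
  avail_kb=$(df -Pk "$mnt" | awk 'NR == 2 { print $4 }')
  [ -n "$avail_kb" ] || return 1
  [ "$((avail_kb * 1024))" -ge "$needed" ]
}
```

For example, `has_room /media/backup "$(blockdev --getsize64 /dev/sdX)" && echo "enough space"`.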

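When you select local_dev, Clonezilla scans all locally attached storage devices, including hard disks and USB storage devices. It then lists all the partitions. Select the partition where you want to store the image, and it asks which directory to use and lists the directories. Select the directory you want, then go to the next screen, which shows all the mounts and the used/available space. Press Enter to go to the next screen, where you choose Beginner or Expert mode. I choose Beginner mode.

@@ -41,12 +44,13 @@ Then fire up Tuxboot (figure 2). Check "Pre-downloaded" and click the button wit

On the next screen, it asks you to name the new image. After accepting the default name or entering your own, go to the next screen. Clonezilla scans all your partitions and creates a checklist from which you can select the ones you want to copy. After you make your selection, the next screen asks whether to check and repair the filesystem. I don't have that kind of patience, so I skipped it.

On the next screen, it asks whether you want Clonezilla to check your newly created image to make sure it is restorable. Choose yes, to be safe. Next, it shows you a command-line hint in case you want to use the command line instead of the GUI, and you must press Enter again. You have to confirm once more, typing y to confirm making the copy.

On the next screen, it asks whether you want Clonezilla to check your newly created image to make sure it is restorable. Choose "Yes", to be safe. Next, it shows you a command-line hint in case you want to use the command line instead of the GUI, and you must press Enter again. You have to confirm once more, typing y to confirm making the copy.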

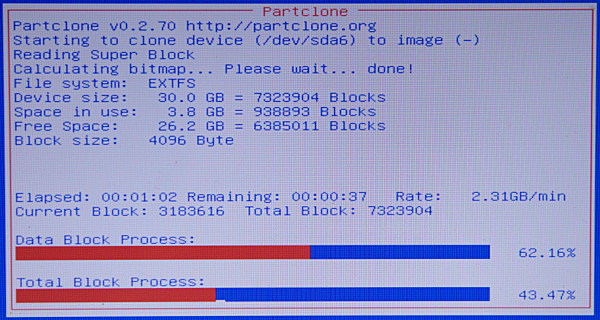

While Clonezilla creates your new image, you can enjoy this friendly red, white and blue progress screen (Figure 3).

Figure 3: Waiting for the new image to be created

<center></center>

<center>*Figure 3: Waiting for the new image to be created*</center>

When it's all done, press Enter and choose reboot, and remember to remove your Clonezilla USB stick. Boot the computer normally, then go and look at your newly created Clonezilla image. You should see something like this:

@@ -81,7 +85,7 @@ via: http://www.linux.com/learn/tutorials/783416-how-to-image-and-clone-hard-dri

Author: [Carla Schroder][a]

Translator: [GOLinux](https://github.com/GOLinux)

Proofreader: [proofreader ID](https://github.com/校对者ID)

Proofreader: [wxy](https://github.com/wxy)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](http://linux.cn/)

@ -1,14 +1,14 @@

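Where and How to Write Code: Choosing the Best Free Code Editor

Where to Write, How to Write: Choosing the Best Free Online Code Editor

================================================================================

A closer look at Cloud9, Koding and Nitrous.IO.

> A closer look at Cloud9, Koding and Nitrous.IO.

**Ready to start your first programming project? Great! Just configure** the terminal or command line, learn how to use and install all the programming languages, plugin libraries and API libraries you need. When everything is finally ready, install [Visual Studio][1] and you can get started; only then can you preview your work.

Ready to start your first programming project? Great! Just configure the terminal or command line, learn how to use it, then install all the programming languages, plugin libraries and API libraries you need. When everything is finally ready, install [Visual Studio][1] and you can get started; only then can you preview your work.

At least, that's the way everyone used to do it.

No wonder beginning programmers are warming up to online integrated development environments (IDEs). An IDE is a code editor that comes with the programming languages and all the needed dependencies already in place, sparing you the trouble of installing them one by one on your computer.

No wonder beginning programmers are warming up to online integrated development environments (IDEs). An IDE is a code editor that comes with the programming languages and all the needed dependencies already in place, sparing you the trouble of installing them one by one on your computer.

I wanted to figure out what makes up a typical IDE, so I tried the free tiers of three of the most popular IDEs of the moment: [Cloud9][2], [Koding][3] and [Nitrous.IO][4]. Along the way, I learned about the various situations in which programmers should or shouldn't use an IDE.

@@ -16,7 +16,7 @@

If a text editor is like Microsoft Word, think of an IDE as being like Google Drive. You get similar functionality, but it can be accessed from any computer and shared at any time. As the Internet plays an ever larger role in project workflows, IDEs make life easier too.

In a recent ReadWrite tutorial I used Nitrous.IO for a Python app from the article [Create Your Own Ridiculously Simple Chat App Like Yo][5]. When using an IDE, you just choose the programming language you want, and then you can test and preview your app through a virtual machine (VM) that the IDE has specially designed to run programs in that language.

In a recent ReadWrite tutorial I used Nitrous.IO for a Python app from the article "[Create Your Own Ridiculously Simple Chat App Like Yo][5]". When using an IDE, you just choose the programming language you want, and then you can test and preview your app through a virtual machine (VM) designed specifically to run programs in that language.

If you read that tutorial, you know my app used only two API libraries: the messaging service Twilio and the Python microframework Flask. It would have been easy to build it on my own computer with a text editor and a terminal, but I chose an IDE for one more convenience: if everyone uses the same development environment, it is easier to follow the tutorial step by step.

@@ -28,7 +28,7 @@

But you can't use an IDE to store your whole project permanently. Keeping your posts in Google Drive files doesn't give you a blog. Like Google Drive, an IDE lets you create links to share content, but neither is a substitute for a real hosting server.

Also, IDEs aren't designed for broad sharing. Although IDEs keep improving on the preview features of most text editors, they are only good for showing an app preview to your friends or colleagues, not to, say, the front page of Hacker News. In that case, an IDE consuming too much bandwidth might crash on you.

Also, IDEs aren't designed for broad sharing. Although IDEs keep improving on the preview features of most text editors, they are only good for showing a preview of your app to friends or colleagues, not to something like the front page of Hacker News. In that case, an IDE consuming too much bandwidth might crash on you.

Put it this way: an IDE is just where you build and test your apps; a hosting server is where they live. So once your app is done, you'll want to deploy it on a cloud server that can host it long term, ideally for free, such as [Heroku][6].

@@ -44,7 +44,7 @@

Right after I finished signing up for Cloud9, the first thing it prompted me to do was add my GitHub and BitBucket accounts. Immediately, all my GitHub projects, personal and collaborative, could be cloned directly and worked on with Cloud9's development tools. The other IDEs don't reach this level of GitHub integration.

Of the three IDEs I tested, Cloud9 seems the most focused on being an environment where collaborators can work together seamlessly. Here, collaboration isn't just a chat window in the corner. In fact, according to CEO Ruben Daniels, collaborators using Cloud9 can watch each other code in real time, just like collaborators on Google Drive.

Of the three IDEs I tested, Cloud9 seems the most focused on being an environment where collaborators can work together seamlessly. Here, collaboration isn't just a chat window in the corner. In fact, according to its CEO Ruben Daniels, collaborators using Cloud9 can watch each other code in real time, just like collaborators on Google Drive.

"The collaboration features of most IDE services only work on a single file," Daniels said, "whereas our product supports different files across an entire project. Collaboration is fully integrated into our IDE."

@@ -58,15 +58,15 @@ An IDE can provide the tools you need to build and test in all open-source programming languages

### Nitrous.IO: An IDE Wherever You Want ###

The biggest advantage of an IDE over your own desktop environment is that it is self-contained. You don't need to install anything else to use it. On the other hand, the biggest advantage of your own desktop environment is that you can work locally, even without an Internet connection.

The biggest advantage of an IDE over your own desktop environment is that it is self-sufficient. You don't need to install anything else to use it. On the other hand, the biggest advantage of your own desktop environment is that you can work locally, even without an Internet connection.

Nitrous.IO combines both advantages. You can use the IDE online on the website, or you can download it to your own computer, co-founder AJ Solimine says. The upside is that you can combine Nitrous's integration with the familiarity of your favorite text editor.

Nitrous.IO combines both advantages. "You can use the IDE online on the website, or you can download it to your own computer," says its co-founder AJ Solimine. The upside is that you can combine Nitrous's integration with the familiarity of your favorite text editor.

He said: "You can use any contemporary browser to access Nitrous.IO's online IDE, but we also offer handy Windows and Mac desktop apps that let you write code with your favorite editor."

He said: "You can use any modern browser to access Nitrous.IO's online IDE, but we also offer handy Windows and Mac desktop apps that let you write code with your favorite editor."

### The Bottom Line ###

The biggest surprise from a week of [using][7] three different IDEs? They are remarkably similar. [When it comes to basic code editing][8], they are all equally good.

What was the biggest surprise from a week of [using][7] three different IDEs? They are remarkably similar. [When it comes to basic code editing][8], they are all equally good.

Cloud9, Koding [and Nitrous.IO all support][9] every major open-source programming language, from Ruby to Python to PHP to HTML5. You can choose any kind of VM.

@@ -76,7 +76,7 @@ Both Cloud9 and Nitrous.IO offer one-click GitHub integration. Koding takes [a few more st

On the downside, they all share the same flaws, which is reasonable given that they are free. You can only run one VM at a time to test programs written in a particular language. And when you haven't used a VM for a while, the IDE puts it into sleep mode to save bandwidth, so the next time you use it you have to wait for it to reload (Cloud9 is the most sluggish in this respect). None of them offers decent permanent hosting for finished projects either.

So, to those who ask me whether there is a perfect free IDE, the answer is probably not. But it depends on what you prioritize; there may be a perfect IDE for a particular project of yours.

So, to those who ask me whether there is a perfect free IDE, the answer is probably not. But it depends on what you prioritize; there may be a perfect IDE for a particular project of yours.

Image courtesy of [Shutterstock][11]

@@ -86,7 +86,7 @@ via: http://readwrite.com/2014/08/14/cloud9-koding-nitrousio-integrated-developm

Author: [Lauren Orsini][a]

Translator: [zpl1025](https://github.com/zpl1025)

Proofreader: [proofreader ID](https://github.com/校对者ID)

Proofreader: [wxy](https://github.com/wxy)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](http://linux.cn/)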

@ -0,0 +1,155 @@

15 Practice Examples of the 'cd' Command in Linux

===========================

In Linux, the **'cd' (change directory)** command is one of the most important and most frequently used commands for newcomers and system administrators alike. For administrators managing headless servers, '**cd**' is the only way to get into a directory to check logs, run programs/applications/scripts, and carry out every other task. For newcomers, it is one of the very first commands they have to learn hands-on.

*15 cd command examples in Linux*

So, study carefully: here we bring you **15** basic '**cd**' commands, full of tricks and shortcuts. Putting these tricks to use will greatly reduce the effort and time you spend at the terminal.

### Course Details ###

- Command name: cd

- Stands for: change directory

- Availability: all Linux distributions

- Execution: command line

- Permissions: access to your own directory or any other specified directory

- Level: basic/beginner

1. Change from the current directory to /usr/local

avi@tecmint:~$ cd /usr/local

avi@tecmint:/usr/local$

2. Change from the current directory to /usr/local/lib using an absolute path

avi@tecmint:/usr/local$ cd /usr/local/lib

avi@tecmint:/usr/local/lib$

3. Change from the current directory to /usr/local/lib using a relative path

avi@tecmint:/usr/local$ cd lib

avi@tecmint:/usr/local/lib$

4. **(a)** Switch back to the previous working directory

avi@tecmint:/usr/local/lib$ cd -

/usr/local

avi@tecmint:/usr/local$

**(b)** Change from the current directory to its parent directory

avi@tecmint:/usr/local/lib$ cd ..

avi@tecmint:/usr/local$

5. Show the last working directory we moved away from (using the '-' option)

avi@tecmint:/usr/local$ cd -

/home/avi
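Both item 4(a) and item 5 rely on the shell variable `OLDPWD`: `cd -` changes to `$OLDPWD` and prints the directory it switched to. The small demonstration below runs in a subshell so your own working directory is left alone; `back_to_previous` is a hypothetical name, and /usr and /tmp are just example directories:

```bash
# back_to_previous DIR1 DIR2
# Visits DIR1, then DIR2, shows OLDPWD, and uses "cd -" to return to DIR1.
# The function body is a subshell, so the caller's directory is unchanged.
back_to_previous() (
  cd "$1" || exit 1           # first directory
  cd "$2" || exit 1           # second directory; OLDPWD is now DIR1
  printf 'OLDPWD=%s\n' "$OLDPWD"
  cd -                        # switches back to DIR1 and prints its path
)
```

Running `back_to_previous /usr /tmp` prints `OLDPWD=/usr` followed by `/usr`.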

6. Go up two levels from the current directory

avi@tecmint:/usr/local$ cd ../../

avi@tecmint:/$

7. Return to the user's home directory from any directory

avi@tecmint:/usr/local$ cd ~

avi@tecmint:~$

or

avi@tecmint:/usr/local$ cd

avi@tecmint:~$

8. Change the working directory to the current working directory (LCTT note: what's the point of this?!)

avi@tecmint:~/Downloads$ cd .

avi@tecmint:~/Downloads$

or

avi@tecmint:~/Downloads$ cd ./

avi@tecmint:~/Downloads$

9. Your current directory is "/usr/local/lib/python3.4/dist-packages" and you want to switch to "/home/avi/Desktop/". Requirement: a single command that climbs all the way up to '/' and then descends along the full path

avi@tecmint:/usr/local/lib/python3.4/dist-packages$ cd ../../../../../home/avi/Desktop/

avi@tecmint:~/Desktop$
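The number of `../` components in item 9 equals the depth of the starting directory: /usr/local/lib/python3.4/dist-packages sits five levels below /, so five `../` reach the root. A minimal sketch that computes this prefix for any absolute path, assuming the path is normalized (no `.`, `..` or repeated slashes); `up_to_root` is a hypothetical helper:

```bash
# up_to_root ABSOLUTE_DIR
# Prints the "../" prefix that climbs from ABSOLUTE_DIR up to /.
up_to_root() {
  local path="${1%/}" prefix=""
  case "$1" in
    /*) ;;
    *) echo "not an absolute path: $1" >&2; return 1 ;;
  esac
  # Strip one trailing component per iteration, adding one "../" each time.
  while [ -n "$path" ]; do
    path="${path%/*}"
    prefix="$prefix../"
  done
  printf '%s\n' "$prefix"
}
```

For example, `cd "$(up_to_root "$PWD")home/avi/Desktop/"` rebuilds the command from item 9 for whatever directory you are in.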

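10. Change from the current working directory to /var/www/html without typing the full command, using TAB

avi@tecmint:/var/www$ cd /v<TAB>/w<TAB>/h<TAB>

avi@tecmint:/var/www/html$

11. Change from the current directory to /etc/v___; oops, you've forgotten the directory's name, and you don't want to use TAB either

avi@tecmint:~$ cd /etc/v*

avi@tecmint:/etc/vbox$

**Note:** If only one directory starts with '**v**', this moves into '**vbox**'. If several entries start with '**v**' and the command line gives no further criteria, the pattern expands to all of them in sorted (alphabetical) order; older versions of bash then move into the first one, while newer versions may reject the command with a "too many arguments" error.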
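Because the outcome of `cd /etc/v*` depends on how many names match, a safer habit is to expand the pattern first and only change directory when exactly one directory matches. A minimal sketch (`cd_unique` is a hypothetical helper; pass the pattern quoted so the shell does not expand it early, and note that patterns containing spaces are not supported):

```bash
# cd_unique PATTERN
# Changes into the single directory matching PATTERN; otherwise reports the
# problem and fails without changing directory.
cd_unique() {
  # Expand the unquoted pattern into this function's positional parameters;
  # the trailing "/" makes the glob match directories only.
  set -- $1/
  if [ "$#" -eq 1 ] && [ -d "$1" ]; then
    cd "$1"
  elif [ "$#" -eq 1 ]; then
    echo "no directory matches: $1" >&2   # an unmatched glob stays literal
    return 1
  else
    printf 'ambiguous: %s\n' "$@" >&2
    return 1
  fi
}
```

For example, `cd_unique '/etc/v*'` enters the directory only if exactly one directory under /etc starts with "v".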

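12. You want to switch to the home directory of user '**av**' (not sure whether it's avi or avt), without using **TAB**

avi@tecmint:/etc$ cd /home/av?

avi@tecmint:~$

13. pushd and popd in Linux

pushd and popd are built-in commands of bash (and of several other shells) that save the current working directory on a stack in memory and later take a directory back off that stack and make it the current directory; both commands change directory as they go.

avi@tecmint:~$ pushd /var/www/html

/var/www/html ~

avi@tecmint:/var/www/html$

The command above saves the current directory in memory and then switches to the requested directory. Once popd is executed, it takes the saved directory location back out of memory and makes it the current directory.

avi@tecmint:/var/www/html$ popd

~

avi@tecmint:~$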
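`pushd` can be called several times in a row; each call pushes onto the same directory stack, `dirs` lists the stack with the current directory first, and each `popd` returns to the entry below the top. A short bash demonstration in a subshell, so your own stack and working directory are untouched (`demo_dir_stack` is a hypothetical name; /usr and /tmp are example directories present on most Linux systems):

```bash
# demo_dir_stack: pushes two directories, lists the stack, then pops back
# one step at a time. Requires bash (pushd, popd and dirs are bash builtins).
demo_dir_stack() (
  cd / || exit 1
  pushd /usr >/dev/null || exit 1   # stack: /usr /
  pushd /tmp >/dev/null || exit 1   # stack: /tmp /usr /
  dirs -l -p                        # one entry per line, top of stack first
  popd >/dev/null || exit 1         # back to /usr
  pwd
  popd >/dev/null || exit 1         # back to /
  pwd
)
```

Running `demo_dir_stack` prints `/tmp`, `/usr` and `/` (the stack), then `/usr` and `/` as each `popd` takes effect.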

14. Change into a directory whose name contains a space

avi@tecmint:~$ cd test\ tecmint/

avi@tecmint:~/test tecmint$

or

avi@tecmint:~$ cd 'test tecmint'

avi@tecmint:~/test tecmint$

or

avi@tecmint:~$ cd "test tecmint"/

avi@tecmint:~/test tecmint$

15. Change from the current directory to the Downloads directory and list its contents (in a single command line)

avi@tecmint:/usr$ cd ~/Downloads && ls

...

.

service_locator_in.xls

sources.list

teamviewer_linux_x64.deb

tor-browser-linux64-3.6.3_en-US.tar.xz

.

...

We have tried to explain how Linux works and how to use it in as few words as possible, in our usual friendly way.

That's all. I'll be back soon with another interesting topic.

---

via: http://www.tecmint.com/cd-command-in-linux/

Author: [Avishek Kumar][a]

Translator: [su-kaiyao](https://github.com/su-kaiyao)

Proofreader: [wxy](https://github.com/wxy)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](http://linux.cn/)

[a]:http://www.tecmint.com/author/avishek/

@ -1,4 +1,4 @@

Linux FAQ -- How to enable the Nux Dextop repository on CentOS or RHEL

Linux Q&A: How to enable the Nux Dextop repository on CentOS or RHEL

================================================================================

> **Question**: I want to install an RPM package from the Nux Dextop repository. How can I set up the Nux Dextop repository on CentOS or RHEL?

@@ -6,7 +6,7 @@ Linux FAQ -- How to enable the Nux Dextop repository on CentOS or RHEL

To enable Nux Dextop on CentOS or RHEL, follow the steps below.

First, understand that Nux Dextop is designed to coexist with the EPEL repository. Therefore, you need to [enable EPEL][2] before using the Nux Dextop repository.

First, note that Nux Dextop is designed to coexist with the EPEL repository. Therefore, you need to [enable EPEL][2] before using the Nux Dextop repository.

After enabling EPEL, install the Nux Dextop repository with the following command.

@@ -26,13 +26,13 @@ Linux FAQ -- How to enable the Nux Dextop repository on CentOS or RHEL

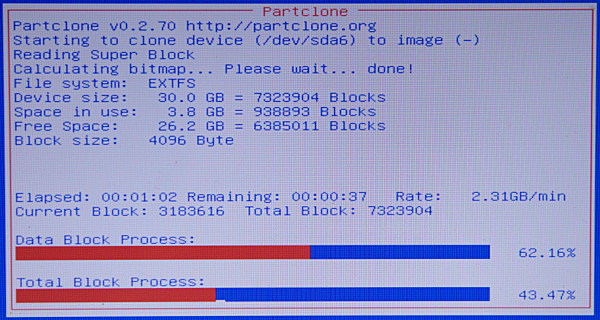

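### For Repoforge/RPMforge Users ###

According to the author, Nux Dextop is known so far to conflict with other third-party repositories such as Repoforge and ATrpms. Therefore, if you have enabled any third-party repositories other than EPEL, it is strongly recommended that you set the Nux Dextop repository to "default off". That is, open /etc/yum.repos.d/nux-dextop.repo in a text editor and change "enabled=1" to "enabled=0" under nux-dextop.

According to the author, Nux Dextop is currently known to conflict with other third-party repositories such as Repoforge and ATrpms. Therefore, if you have enabled any third-party repositories other than EPEL, it is strongly recommended that you set the Nux Dextop repository to "default off". That is, open /etc/yum.repos.d/nux-dextop.repo in a text editor and change "enabled=1" to "enabled=0" under nux-dextop.

$ sudo vi /etc/yum.repos.d/nux-dextop.repo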
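If you prefer not to edit the file by hand, the same change can be made with `sed`, limited to the `[nux-dextop]` section so that other sections in the file keep their setting. A minimal sketch, assuming GNU sed and that the section header is literally `[nux-dextop]`; `disable_repo_section` is a hypothetical helper (`yum-config-manager --disable nux-dextop` from yum-utils is an alternative):

```bash
# disable_repo_section FILE SECTION
# Sets enabled=0 only inside [SECTION] of a yum .repo file (GNU sed -i).
disable_repo_section() {
  local file="$1" section="$2"
  # The address range runs from the [SECTION] header to the next "[" header
  # (or end of file); only "enabled=1" lines inside that range are changed.
  sed -i "/^\[${section}\]/,/^\[/ s/^enabled[[:space:]]*=[[:space:]]*1/enabled=0/" "$file"
}
```

For example: `sudo cp /etc/yum.repos.d/nux-dextop.repo /root/` as a backup, then run `disable_repo_section /etc/yum.repos.d/nux-dextop.repo nux-dextop` as root.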


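Whenever you install packages from the Nux Dextop repository, explicitly enable the repository with the following command.

Whenever you install packages from the Nux Dextop repository, explicitly enable the repository with the following command.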

$ sudo yum --enablerepo=nux-dextop install <package-name>

@@ -41,7 +41,7 @@ $ sudo vi /etc/yum.repos.d/nux-dextop.repo

via: http://ask.xmodulo.com/enable-nux-dextop-repository-centos-rhel.html

Translator: [geekpi](https://github.com/geekpi)

Proofreader: [proofreader ID](https://github.com/校对者ID)

Proofreader: [wxy](https://github.com/wxy)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](http://linux.cn/)

@ -1,4 +1,4 @@

Linux Q&A -- How to fix "failed to run aclocal: No such file or directory"

Linux Q&A: How to fix "failed to run aclocal: No such file or directory"

================================================================================

> **Question**: I am trying to build a program on Linux whose development version is built with an "autogen.sh" script. When I run it to create the configure script, the following error occurs:

>

@@ -24,7 +24,7 @@ Linux Q&A -- How to fix "failed to run aclocal: No such file or

via: http://ask.xmodulo.com/fix-failed-to-run-aclocal.html

Translator: [GOLinux](https://github.com/GOLinux)

Proofreader: [proofreader ID](https://github.com/校对者ID)

Proofreader: [wxy](https://github.com/wxy)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](http://linux.cn/)

@ -1,5 +1,7 @@

Happy Birthday, Email

He Invented Email?

================================================================================

[Editor's note: The claim made in this article about the inventor of email is highly disputed, and readers should bear that in mind. In my view, he is better described as the inventor of one particular email application system, some of whose functions and features are still in use today. -- wxy]

**An Indian-American used his brilliant mind to invent e-mail, and since then not a single day has gone by in which we could do without it.**

@ -18,6 +20,6 @@ via: http://www.efytimes.com/e1/fullnews.asp?edid=147170

Author: Sanchari Banerjee

Translator: [zpl1025](https://github.com/zpl1025)

Proofreader: [Proofreader ID](https://github.com/校对者ID)

Proofreader: [wxy](https://github.com/wxy)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and proudly presented by [Linux中国](http://linux.cn/)

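@ -6,13 +6,13 @@

<blockquote><em>Anyone can easily join Anonymous simply by declaring themselves a member. According to one anthropologist, members "pay varying degrees of attention to major events depending on their impact, especially events that generate lulz."</em></blockquote>

<small>Set: Jeff Nishinaka / Photograph: Scott Dunbar</small>

<small>Paper sculpture: Jeff Nishinaka / Photograph: Scott Dunbar</small>

<h2>1</h2>

<p>In the mid-nineteen-seventies, when Christopher Doyon was a child growing up in rural Maine, he spent his days chatting with strangers on CB radio. His nickname was "Big Red," because he had red hair. Doyon hung a transmitter on his bedroom wall and persuaded his father to install two antennas on the roof of the house. CB radio was mainly used by truckers to keep in touch, but Doyon and others used it for the kind of virtual socializing that would soon appear on the Internet: made-up handles, in-jokes, and a strong desire for change.</p>

<p>In the mid-nineteen-seventies, when Christopher Doyon was a child growing up in rural Maine, he spent his days chatting with strangers on CB radio. His handle was "Big Red," because he had red hair. Doyon hung a transmitter on his bedroom wall and persuaded his father to install two antennas on the roof of the house. CB radio was mainly used by truckers to keep in touch, but Doyon and others used it for the kind of virtual socializing that would soon appear on the Internet: made-up handles, in-jokes, and a strong desire for change.</p>

<p>Doyon's mother died when he was very young, and he and his sister were raised by their father; both say he abused them. Doyon found comfort and a sense of belonging in the CB radio community. He and his friends took turns monitoring the local emergency channel. One friend's father bought a bubble light and mounted it on the roof of his car; whenever the boy heard a call for help from a stranded motorist, he would drive everyone to the roadside where the caller was waiting. There was little they could do besides call 911, but it was enough to make them feel like heroes.</p>

<p>Doyon's mother died when he was very young, and he and his sister were raised by their father; both say he abused them. Doyon found comfort and a sense of purpose in the CB radio community. He and his friends took turns monitoring the local emergency channel. One friend's father bought a bubble light and mounted it on the roof of his car; whenever the boy heard a call for help from a stranded motorist, he would drive everyone to the roadside where the caller was waiting. There was little they could do besides call 911, but it was enough to make them feel like heroes.</p>

<p>Small and wiry, Doyon had a thick New England accent and loved "Star Trek" and Asimov's novels. When he saw an ad in Popular Mechanics for a "build your own personal computer" kit, he begged his grandfather to buy him one, and he then spent months assembling the computer and connecting it to the Internet. Compared with the obscure CB airwaves, online chat rooms were in a different league. "I'd just click a button, pick some guy's name, and then I could chat with him," Doyon recalled recently. "It was amazing."</p>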

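@ -22,11 +22,11 @@

<p>Doyon became deeply absorbed in computers, although he was never a professional programmer. In several conversations over the past year, he told me that he sees himself as an activist in the radical tradition of Abbie Hoffman and Eldridge Cleaver; technology is merely his tool of protest. In the nineteen-eighties, students at Harvard and M.I.T. held rallies demanding that their schools divest from South Africa. To help the protesters communicate over secure channels, the PLF built radio kits: mobile FM transmitters, telescoping antennas, and microphones, all built into backpacks. Willard Johnson, an activist and political scientist at M.I.T., said that the hackers' presence at the rallies did not amount to a revolution. "Most of our work was still done with bullhorns," he explained.</p>

<p>In 1992, at a Grateful Dead concert in Indiana, Doyon secretly sold three hundred pills to a drug user. He was sentenced to twelve years in the Indiana State Prison, later reduced to five. While inside, he developed a deep interest in religion and philosophy and took courses through Ball State University.</p>

<p>In 1992, at a Grateful Dead concert in Indiana, Doyon secretly sold three hundred pills to a drug user. He was sentenced to twelve years in the Indiana State Prison, later reduced to five. While inside, he developed a deep interest in religion and philosophy and took courses through Ball State University.</p>

<p>In 1994, the year Doyon went to prison, Netscape Navigator, the first commercial Web browser, was released. When he got out and returned to Cambridge, the PLF was still active, and its tools had taken a substantial leap forward. Recalling the change since before his imprisonment, Doyon said, "It was huge, like the difference between smoke signals and the telegraph." Hackers had broken into an Indian military website and changed its homepage to read "Save Kashmir." In Serbia, hackers took down an Albanian website. Stefan Wray, an early online activist, defended hacking carried out during an "anti-Columbus Day" rally in New York. "We see it as an electronic form of public protest," he said.</p>

<p>In 1994, the year Doyon went to prison, the first commercial Web browser, Netscape Navigator, was released. When he got out and returned to Cambridge, the PLF was still active, and its tools had taken a substantial leap forward. Comparing things with how they had been before he went to prison, Doyon said, "It was huge, like the difference between smoke signals and the telegraph." Hackers had broken into an Indian military website and changed its homepage to read "Save Kashmir." In Serbia, hackers took down an Albanian website. Stefan Wray, an early online activist, defended hacking carried out during an "anti-Columbus Day" rally in New York. "We see it as an electronic form of public protest," he said.</p>

<p>In 1999, the Recording Industry Association of America sued Napster, a file-sharing program, for copyright infringement. Napster eventually shut down in 2001. Doyon and other hackers used distributed denial-of-service (DDoS) attacks, which flood a website with traffic until it slows to a crawl or crashes, against the RIAA's website, keeping it offline for as long as a week. Doyon defended his actions and praised his fellow "hacktivists." "We quickly realized that the war to defend Napster stood for the war to defend Internet freedom," he later wrote.</p>

<p>In 1999, the Recording Industry Association of America sued Napster, a file-sharing service, for copyright infringement. Napster eventually shut down in 2001. Doyon and other hackers used distributed denial-of-service (DDoS) attacks, which flood a website with traffic until it slows to a crawl or crashes, against the RIAA's website, keeping it offline for as long as a week. Doyon defended his actions and praised his fellow "hacktivists." "We quickly realized that the war to defend Napster stood for the war to defend Internet freedom," he later wrote.</p>

<p>One day in 2008, Doyon met "Commander Adama" at the PLF's basement apartment in Cambridge. As Doyon watched, Adama clicked a link on the Epilepsy Foundation's site; instead of the expected forum, a series of flashing colored lights appeared. Some people with epilepsy are highly sensitive to flashing lights. This was pure malice: someone was trying to trigger seizures in innocent people. There had already been at least one victim.</p>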

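@ -42,69 +42,69 @@

<center><small>"I need to talk about my feelings."</small></center>

<p>Poole hoped that anonymity would preserve the community's edgy character. "We're not interested in intelligent discussion of foreign affairs," he wrote on the site. One of the most valued pursuits on 4chan was "lulz," a word derived from the abbreviation LOL. Lulz were usually obtained by sharing childish jokes or images, most of them either pornographic or vulgar. The most shocking material was posted on the site's "/b/" board, whose users called themselves "/b/tards." Doyon knew of 4chan, but he considered those users "a bunch of ignorant brats." Around 2004, some users on /b/ began to treat "Anonymous" as an entity in its own right.</p>

<p>Poole hoped that anonymity would preserve the community's edgy character. "We're not interested in intelligent discussion of foreign affairs," he wrote on the site. One of the most valued pursuits on 4chan was "lulz," a word derived from the abbreviation LOL. Lulz were usually obtained by sharing juvenile jokes or images, most of them either pornographic or vulgar. The most shocking material was posted on the site's "/b/" board, whose users called themselves "/b/tards." Doyon knew of 4chan, but he considered its users "a bunch of ignorant brats." Around 2004, some users on /b/ began to treat "Anonymous" as an entity in its own right.</p>

<p>This was a new kind of hacker group. "It's not an organization in the traditional sense," Mikko Hypponen, a leading computer-security researcher, told me; it is better seen as an unconventional subculture. Barrett Brown, a Texas journalist widely known as a senior figure in Anonymous, described it as "a string of great friendships, one after another." There are no dues and no initiation rites. Anyone who wants to join Anonymous and become an Anon can do so by taking a brief, symbolic oath.</p>

<p>Although 4chan focused on trivial topics, many Anons saw themselves as "righteous crusaders." When they spotted wrongdoing online, they launched targeted vigilante operations. More than once, they posed as underage girls to extract personal information from pedophiles and then turned it over to the police. Other Anons were indifferent to politics and spread chaos in any way they could for the lulz. Some posted images of what looked like pipe bombs on /b/; others threatened to blow up football stadiums and were arrested by the FBI. In 2007, a local Los Angeles news affiliate called Anonymous "the Internet hate machine."</p>

<p>Although 4chan focused on trivial topics, many Anons saw themselves as "righteous crusaders." When they spotted wrongdoing online, they launched targeted vigilante operations. More than once, they posed as underage girls to lure pedophiles into traps and then turned their personal information over to the police. Other Anons were indifferent to politics and spread chaos in any way they could for the lulz. Some posted images of what looked like pipe bombs on /b/; others threatened to blow up football stadiums and were arrested by the FBI. In 2007, a local Los Angeles news affiliate called Anonymous "the Internet hate machine."</p>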

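<p>In January 2008, Gawker Media posted a video of Tom Cruise extolling the virtues of Scientology. The video was copyrighted, and the Church of Scientology wrote to Gawker demanding that it be taken down. Anonymous saw this as an attempt by the church to control information online. "It's time for /b/ to do something big," someone wrote on 4chan. "I'm talking about 'hacking' or 'taking down' the official Scientology website." An Anon posted a "press release" on YouTube featuring footage of storm clouds and a computer-generated voice. "We shall proceed to expel you from the Internet and systematically dismantle the Church of Scientology in its present form," the voice said. "You have nowhere to hide." In less than a week, the video had been viewed more than two million times.</p>

<p>Anonymous had outgrown 4chan. Hackers communicated and coordinated strategy in dedicated Internet Relay Chat (IRC) channels. Using DDoS attacks, they knocked Scientology's main website offline intermittently for several days. Anons staged a "Google bomb" so that the top search result for "dangerous cult" was Scientology's main site. Other Anons sent hundreds of pizzas to Scientology's European headquarters and drained the fax-machine ink at the church's Los Angeles headquarters with all-black faxes. The Church of Scientology, reportedly an organization with more than a billion dollars in assets, could certainly survive running out of ink. But the church's leadership did not see it that way; they had also received serious threats, so they asked the FBI to arrest members of Anonymous.</p>

<p>Anonymous had outgrown 4chan. Hackers communicated and coordinated strategy in dedicated Internet Relay Chat (IRC) channels. Using DDoS attacks, they knocked Scientology's main website offline intermittently for several days. Anons staged a "Google bomb" so that the top search result for "dangerous cult" was Scientology's main site. Other Anons sent hundreds of pizzas to Scientology's European headquarters and drained the fax-machine ink at the church's Los Angeles headquarters with all-black faxes. The Church of Scientology, reportedly an organization with more than a billion dollars in assets, could certainly survive running out of ink. But the church's leadership did not see it that way; they had also received death threats, so they asked the FBI to investigate members of Anonymous.</p>

<p>On March 15, 2008, thousands of Anons marched against the Church of Scientology in more than a hundred cities, from London to Sydney. In keeping with the theme of anonymity, the organizers asked all protesters to wear the same mask. After considering Batman, they settled on the Guy Fawkes mask from the 2005 dystopian film "V for Vendetta." "It was available cheaply and in bulk in every major city," Gregg Housh, a well-known Anon and one of the march's organizers, told me. The cartoonish mask shows a rosy-cheeked man with a mustache and a broad grin.</p>

<p>The Anons did not "dismantle" the Church of Scientology, and the Tom Cruise video remained online. But the Anons had proved their tenacity. The group adopted a rather grandiose slogan, rendered here as "We are one. We never forgive. We never forget. Trust us." (We are Legion. We do not forgive. We do not forget. Expect us.)</p>

<p>The Anons did not "dismantle" the Church of Scientology, and the Tom Cruise video remained online. But the Anons had proved their tenacity. The group adopted a rather grandiose slogan: "We are Legion. We do not forgive. We do not forget. Expect us."</p>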

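<h2>3</h2>

<p>In 2010, Doyon moved to Santa Cruz, California, and joined a local group called the Peace Camp. Using wood stolen from a lumberyard, he built a crude shack in the hills, "borrowed" Wi-Fi from nearby homes, generated power with solar panels, and earned cash by selling marijuana he grew.</p>

<p>Meanwhile, Peace Camp activists began sleeping in public places every night to protest a homeless-management ordinance the city of Santa Cruz had passed, which they believed seriously violated homeless people's right to survive. Doyon attended Peace Camp meetings and organized protests online. With a scraggly red goatee and a beige fedora, he was as tireless as a soldier. The activists nicknamed him "Crime-Punishing Chris."</p>

<p>Meanwhile, Peace Camp activists began sleeping in public places every night to protest a homeless-management ordinance the city of Santa Cruz had passed, which they believed seriously violated homeless people's right to survive. Doyon attended Peace Camp meetings and organized protests online. He had a scraggly red goatee and wore a beige fedora and military-style clothes. The activists nicknamed him "Crime-Punishing Chris."</p>

<p>Kelley Landaker, a Peace Camp member, discussed hacking with Doyon several times. Doyon sometimes boasted about how skilled he was, but Landaker, an experienced programmer, was unimpressed. "He talked a good game, but he wasn't someone who followed through," Landaker told me. In that setting, though, a passionate leader was needed more than a heads-down technician. "He was very enthusiastic and outspoken," another member, Robert Norse, told me. "He generated lots of stories that grabbed the media's attention. I've been doing this for twenty years, and in that respect he was better than anyone I've ever seen."</p>

<p>Kelley Landaker, a Peace Camp member, discussed hacking with Doyon several times. Doyon sometimes boasted about how skilled he was, but Landaker, an experienced programmer, was unimpressed. "He talked a good game, but he wasn't a doer," Landaker told me. In that setting, though, a passionate leader was needed more than a heads-down technician. "He was very enthusiastic and outspoken," another member, Robert Norse, told me. "He generated lots of stories that grabbed the media's attention. I've been doing this for twenty years, and in that respect he was better than anyone I've ever seen."</p>

<p>Commander Adama, Doyon's superior in the PLF, still lived in Cambridge and kept in touch with Doyon by e-mail. He ordered Doyon to infiltrate Anonymous, to learn how it worked and to look for chances to recruit new members for the PLF. Because of the unpleasant memory of the Epilepsy Foundation attack, Doyon refused. Adama explained that malicious hackers were only a tiny minority in Anonymous and that, on the contrary, the group often did things that shook the world. Doyon was skeptical. "How could 4chan shake the world?" he asked. But out of loyalty to the PLF, he agreed to Adama's request.</p>

<p>Commander Adama, Doyon's superior in the PLF, still lived in Cambridge and kept in touch with Doyon by e-mail. He ordered Doyon to monitor Anonymous, to learn how it worked and to look for chances to recruit new members for the PLF. Because of the unpleasant memory of the Epilepsy Foundation attack, Doyon refused. Adama explained that malicious hackers were only a tiny minority in Anonymous and that, on the contrary, the group often did things that shook the world. Doyon was skeptical. "How could 4chan do anything big enough to shake the world?" he asked. But out of loyalty to the PLF, he agreed to Adama's request.</p>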

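<p>Doyon often took an Acer laptop to a Santa Cruz café called the Coffee Roasting Company. The main Anonymous IRC channel required no password. Doyon logged in under the PLF name and joined the chat. After a while, he discovered many dedicated operation channels within the group; they were smaller and overlapped with one another. To take part in an operation, you had to know the name of its channel, and a channel could be changed at any moment if strangers intruded. The system was not especially secure, but it worked. "These dedicated operation channels kept the secrets of each operation tightly concentrated," Gabriella Coleman, an anthropologist at McGill University, told me.</p>

<p>Doyon often took an Acer laptop to a Santa Cruz café called the Coffee Roasting Company. The main Anonymous IRC channel required no password. Doyon logged in under the PLF name and joined the chat. After a while, he discovered many dedicated operation channels within the group; they were smaller, and in them more specialized conversations among Anons overlapped. To take part in an operation, you had to know the name of its channel, and a channel could be changed at any moment if strangers intruded. The system was not especially secure, but it worked. "These dedicated operation channels kept the secrets of each operation tightly concentrated," Gabriella Coleman, an anthropologist at McGill University, told me.</p>

<p>Some Anons proposed an operation called "Operation Payback." As the journalist Parmy Olson wrote in her 2012 book "We Are Anonymous," the operation began as a prelude to yet another campaign in support of file-sharing sites such as the Pirate Bay, Napster's successor, but its targets later expanded into politics. In late 2010, at the request of the U.S. State Department, several companies, including MasterCard, Visa, and PayPal, stopped processing donations to WikiLeaks, a private organization that had published hundreds of thousands of diplomatic cables. In an online video, Anonymous threatened retaliation, vowing to punish the companies that obstructed WikiLeaks. Drawn to this spirit of protest against corporations, Doyon decided to join the operation.</p>

<p>Some Anons proposed an operation called "Operation Payback." As the journalist Parmy Olson wrote in her 2012 book "We Are Anonymous," the operation was created to support file-sharing sites such as the Pirate Bay, Napster's successor, but its targets later expanded into politics. In late 2010, at the request of the U.S. State Department, several companies, including MasterCard, Visa, and PayPal, stopped processing donations to WikiLeaks, a volunteer organization that had published hundreds of thousands of diplomatic cables. In an online video, Anonymous threatened retaliation, vowing to punish the companies that obstructed WikiLeaks. Drawn to this spirit of protest against corporations, Doyon decided to join the operation.</p>

<center><img src="http://www.newyorker.com/wp-content/uploads/2014/09/140908_a18473-600.jpg" /></center>

<center><small>Pandora's box</small></center>

<p>In early December, during Operation Payback, Anonymous instructed new members, or recruits, on "how to [bleep] join the group," in a tutorial that said, "First configure your [bleep] network, this is [bleep] important." They were also told to download the Low Orbit Ion Cannon, an easy-to-use open-source program. Doyon downloaded the software and waited in the chat room for further instructions. When the order to start went out, thousands of Anons would attack at once. Doyon received an instruction containing the target address, www.visa.com; in the upper-right corner of the software was a button that read "IMMA CHARGIN MAH LAZER." (Operation Payback also carried out a number of sophisticated intrusions.) Within days, Operation Payback had taken down the homepages of MasterCard, Visa, and PayPal. In a court filing, PayPal said the attacks had cost the company $5.5 million.</p>

<p>In early December, during Operation Payback, Anonymous directed new members, or recruits, to a guide titled "How to Join the [bleep] Hive," in which participants were told to "configure their [bleep] network first, this is [bleep] important." They were also told to download the Low Orbit Ion Cannon, an easy-to-use open-source program. Doyon downloaded the software and waited in the chat room for further instructions. When the order to start went out, thousands of Anons would attack at once. Doyon entered the target address, www.visa.com; in the upper-right corner of the software was a button that read "IMMA CHARGIN MAH LAZER." (Operation Payback also carried out a number of sophisticated intrusions.) Within days, Operation Payback had taken down the homepages of MasterCard, Visa, and PayPal. In a court filing, PayPal said the attacks had cost the company $5.5 million.</p>

<p>But for Doyon, this was activism made tangible. In the anti-apartheid campaign in Cambridge, he could not see results right away; now, with a click of a finger, he could help take down a major corporation's website. The next day, the Huffington Post ran the bold headline "MasterCard Down." A gleeful Anon tweeted: "There are some things WikiLeaks can't do. But those things can be done by Operation Payback."</p>

<p>But for Doyon, this was activism made tangible. In the anti-apartheid campaign in Cambridge, he could not see immediate results; now, with a click of a finger, he could help take down a major corporation's website. The next day, the Huffington Post ran the bold headline "MasterCard Down." A gleeful Anon tweeted: "There are some things WikiLeaks can't do. But those things can be done by Operation Payback."</p>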

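<h2>4</h2>

<p>In the fall of 2010, the Peace Camp protest ended; the city made only minor concessions, and the homeless ordinance stayed in effect. Doyon hoped to turn things around with Anonymous's tactics. He recalled thinking at the time, "Maybe I can get Anonymous to go after these flimsy-looking city government websites, and these people will [bleep] go along with my idea. In the end we'll get the city to repeal the ordinance for good."</p>

<p>In the fall of 2010, the Peace Camp protest ended; the city made only slight concessions, and the homeless ordinance stayed in effect. Doyon hoped to turn things around with Anonymous's tactics. He recalled thinking at the time, "Maybe I can get Anonymous to go after these flimsy-looking city government websites, and they'll [bleep] go down. In the end we'll get the city to repeal the ordinance for good."</p>

<p>Joshua Covelli, a twenty-five-year-old Anon whose handle was "Absolem," admired Doyon's boldness. "Right now our group is a total [bleep] mess," Covelli told me. After "Commander X" joined, "the group seemed to start taking shape." Covelli worked as a receptionist at a college in Fairborn, Ohio, and knew nothing about Santa Cruz politics. But when Doyon mentioned his plan to help the Peace Camp protest, Covelli immediately replied with an e-mail of support: "I've been waiting for an operation like this for a long time."</p>

<p>Joshua Covelli, a twenty-five-year-old Anon whose handle was "Absolem," admired Doyon's boldness. "Before, our group was just a chaotic mess," Covelli told me. After "Commander X" joined, "the group seemed to start taking shape." Covelli worked as a receptionist at a college in Fairborn, Ohio, and knew nothing about Santa Cruz politics. But when Doyon mentioned his plan to help the Peace Camp protest, Covelli immediately replied with an e-mail of support: "I've been waiting to join an operation like this for a long time."</p>

<p>Using the PLF name, Doyon invited Covelli to a private conversation on IRC:</p>

<blockquote>Absolem: Sorry, this may be a rude question... is the PLF also a member of the group?</blockquote>

<blockquote>Absolem: Sorry, this may be a rude question... is the PLF part of the group, or separate?</blockquote>

<blockquote>Absolem: I ask because I've read your chat logs in the channel, and you seem like a well-trained hacker, not really like someone from the group.</blockquote>

<blockquote>Absolem: I ask because, from watching you all chat, you seem very organized.</blockquote>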

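<blockquote>PLF: No, no, not rude at all. Nice to meet you. The PLF is a hacker group from Boston that has been around for 22 years. I started hacking in 1981, but back then I didn't use computers; I used PBXs (Private Branch Exchanges, i.e. telephone switchboards).</blockquote>

<blockquote>PLF: All of our members are over 40. We have veterans and academics among us. And one of our members, "Commander Adama," is on the run from a whole pack of cops and spies.</blockquote>

<blockquote>Absolem: Sounds great! I'm very interested in this operation and wonder whether I could help out; our group is just too chaotic. I'm pretty good with computers, but I'm a complete beginner at hacking. I have some small tools but don't know how to use them.</blockquote>

<blockquote>Absolem: Sounds great! I'm very interested in this operation, but Anonymous seems so chaotic and disorganized that I'm not sure I could help. I'm pretty good with computers, but I'm a complete beginner at hacking. I have some small tools but don't know how to use them.</blockquote>

<p>After a solemn induction, Doyon formally welcomed Covelli into the PLF:</p>

<blockquote>PLF: Encrypt any [bleep] sensitive files that could be used against you.</blockquote>

<blockquote>PLF: Encrypt any sensitive files that could incriminate you.</blockquote>

<blockquote>PLF: Also, if you want to reach any PLF member, just message me. From now on, call me... Commander X.</blockquote>

<p>In 2012, the Associated Press called Anonymous "a group of well-trained hackers"; Quinn Norton wrote in Wired that "Anonymous can hack into any impenetrable website" and, at the end of the article, praised them as "a band of brilliant folk hackers." In fact, some Anons are genuinely talented programmers, but most members have no technical skills at all. The anthropologist Coleman told me that only about a fifth of Anons are real hackers; the rest are "geeks and protesters."</p>

<p>In 2012, the Associated Press called Anonymous "a band of expert hackers"; Quinn Norton wrote in Wired that "Anonymous can hack into any impenetrable website" and, at the end of the article, praised them as "a band of brilliant folk hackers." In fact, some Anons are genuinely talented programmers, but most members have no technical skills at all. The anthropologist Coleman told me that only about a fifth of Anons are real hackers; the rest are "geeks and protesters."</p>

<p>On December 16, 2010, Doyon, as Commander X, e-mailed several reporters. "Tomorrow at 12:00 local time, the People's Liberation Front and Anonymous will launch a major attack on the Santa Cruz government website," he wrote. "After 12:30 we will restore the site to normal operation."</p>

<p>On December 16, 2010, Doyon, as Commander X, e-mailed several reporters. "Tomorrow at 12:00 local time, the People's Liberation Front and Anonymous will remove the Santa Cruz government website from the Internet," he wrote. "After 12:30 we will restore the site to normal operation."</p>

<p>Staff at the Santa Cruz data center received the warning and hurried to prepare for the attack. They ran security scans on their servers and asked their local Internet provider, AT&amp;T, for help; AT&amp;T advised them to contact the FBI.</p>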

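@ -132,7 +132,7 @@

<center><small>"Zach is smart... and... a genius... but... you two... aren't in the same class."</small></center>

<p>Doyon quoted a movie line. "Run for your life," he said. "I'll go underground, stay as free as I can, and fight these bastards with everything I've got." Frey gave him two twenty-dollar bills and wished him luck.</p>

<p>Doyon quoted a movie line. "Run for your life," he said. "I'll go underground, stay as free as I can, and fight these jerks with everything I've got." Frey gave him two twenty-dollar bills and wished him luck.</p>

<h2>5</h2>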

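@ -142,35 +142,35 @@

<p>"Tunisia," Brown replied.</p>

<p>"I know, that's a country in the Middle East," Doyon went on. "And then?"</p>

<p>"I know, that's a country in the Middle East," Doyon went on. "What exactly is the mission?"</p>

<p>"We're going to bring down the dictator there," Brown replied.</p>

<p>"Huh?! There's a dictator there?" Doyon said, a little surprised.</p>

<p>A few days later, Operation Tunisia was launched. As a participant, Doyon sent large amounts of spam to mailboxes on the Tunisian government's domains to clog its servers. "I'd write e-mails about the operation ahead of time and then send them out again and again," Doyon said. "Sometimes, when I really didn't have time, I'd just write a single line insulting the recipient's mother and send that." Within a single day, Anons had taken down several websites, including those of the Tunisian Stock Exchange, the Ministry of Industry, the President's office, and the Prime Minister's office. They replaced the homepage of the President's office with a picture of a pirate ship and the text "Payback is a bitch, isn't it?"</p>

<p>A few days later, Operation Tunisia was launched. As a participant, Doyon sent large amounts of spam to mailboxes on the Tunisian government's domains to clog its servers. "I'd write e-mails about the operation ahead of time and then send them out again and again," Doyon said. "Sometimes, when I really didn't have time, I'd just write a single 'insult your mother' line and send that." Within a single day, Anons had taken down several websites, including those of the Tunisian Stock Exchange, the Ministry of Industry, the President's office, and the Prime Minister's office. They replaced the homepage of the President's office with a picture of a pirate ship and the text "What goes around comes around, doesn't it?"</p>

<p>Doyon sometimes talks about his online "combat" experiences as if he had just climbed out of a foxhole. "Man, I've gotten darker since I started doing this," he complained to me. "Look at my face, it's all from the smoke when I smoke, and it might be stuck to my face for good. I took a close look in the mirror, and I'm not exaggerating when I say I was basically a brown bear." Many nights, Doyon camped out in Golden Gate Park. "I did that for four days, looked at 'me' in the mirror, and thought I looked okay, but actually I figured 'I' should probably go eat something and take a shower."</p>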

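<p>Anonymous then announced a series of operations on YouTube: Operation Libya, Operation Bahrain, Operation Morocco. In support of the protesters in Tahrir Square, Doyon took part in Operation Egypt. The Facebook page promoting the operation linked to a "care package" for local demonstrators. The care package, distributed through the file-sharing site Megaupload, contained encryption software and advice on protecting oneself against tear gas. And shortly afterward, when the Egyptian government shut down all of Egypt's Internet access and subnetworks, it continued to give local protesters ways to get online.</p>

<p>Anonymous then announced a series of operations on YouTube: Operation Libya, Operation Bahrain, Operation Morocco. In support of the protesters in Tahrir Square, Doyon took part in Operation Egypt. The Facebook page promoting the operation linked to a "care package" for local demonstrators. The care package, distributed through the file-sharing site Megaupload, contained encryption software and advice on protecting oneself against tear gas. Shortly after the Egyptian government shut down all of Egypt's Internet access and subnetworks, Anonymous went on providing local protesters with ways to get online.</p>

<p>In the summer of 2011, Doyon succeeded Adama as supreme commander of the PLF. Doyon recruited six new members and set out to make the PLF a core force within Anonymous. Covelli became one of his technical advisers. Another hacker, Crypt0nymous, was in charge of posting videos on YouTube; the others did research and built electronic devices. Unlike loosely organized Anonymous, the PLF had a very strict chain of command. "Commander X is very hands-on," Covelli said. "That's his style, or maybe you can't even call it a style." A hacker who founded the AnonInsiders blog told me over encrypted chat that he thought Doyon always went his own way, which is rare in Anonymous. "When we plan an operation, he doesn't care whether anyone else agrees," the hacker added. "He'll draw up the plan by himself, pick the targets, log in to IRC, tell everyone where to 'meet,' and then launch the DDoS attack."</p>

<p>In the summer of 2011, Doyon succeeded Adama as supreme commander of the PLF. Doyon recruited six new members and set out to make the PLF a core force within Anonymous. Covelli became one of his technical advisers. Another hacker, Crypt0nymous, was in charge of posting videos on YouTube; the others did research and built electronic devices. Unlike loosely organized Anonymous, the PLF had a very strict chain of command. "Commander X is very hands-on," Covelli said. "That's his style: either don't do it, or do it right." A hacker who founded the AnonInsiders blog told me over encrypted chat that he thought Doyon always went his own way, which is rare in Anonymous. "When we plan an operation, he doesn't care whether anyone else agrees," the hacker added. "He'll draw up the plan by himself, pick the targets, log in to IRC, tell everyone where to 'meet,' and then launch the DDoS attack."</p>

<p>Some Anons regarded the PLF as dispensable and considered Doyon's antics one big joke. "He's known for bragging," Mustafa Al-Bassam, another Anon, whose handle is Tflow, told me. Still, even those who strongly disliked Doyon's arrogance had to admit his importance in the development of Anonymous. "The hard line he pushed sometimes worked and sometimes didn't work at all," Gregg Housh said, adding that he and other prominent Anons had run into the same problem.</p>

<p>Some Anons regarded the PLF as a "vanity project" and considered Doyon's antics a joke. "He's known for bragging," Mustafa Al-Bassam, another Anon, whose handle is Tflow, told me. Still, even those who strongly disliked Doyon's arrogance had to admit his importance in the development of Anonymous. "The hard line he pushed sometimes worked and sometimes got in the way," Gregg Housh said, adding that he and other prominent Anons had run into the same problem.</p>

<p>Anonymous publicly insists that it is a non-hierarchical, egalitarian group. In "We Are a Group," a documentary by Brian Knappenberger, one member compares the group to "a flock of birds" whose members take turns leading, carrying the whole flock forward. Gabriella Coleman told me that the metaphor is not quite accurate: an informal leadership class emerged within Anonymous long ago. "Leaders are very important," she said. "There are four or five people who could be seen as the ones leading the flock." She counted Doyon among them. Even so, Anons still tend to resist this kind of systematic structure. In "Hacker, Hoaxer, Whistleblower, Spy," her forthcoming book about Anonymous, Coleman writes that among Anons, "individuals, and mavericks in particular, still defer on major matters and put the collective first, especially in matters that generate lulz."</p>

<p>Anonymous publicly insists that it is a non-hierarchical, egalitarian group. In "We Are Legion," a documentary by Brian Knappenberger, one member compares the group to "a flock of birds" whose members take turns leading, carrying the whole flock forward. Gabriella Coleman told me that the metaphor is not quite accurate: an informal leadership class emerged within Anonymous long ago. "Leaders are very important," she said. "There are four or five people who could be seen as the ones leading the flock." She counted Doyon among them. Even so, Anons still tend to resist this kind of institutional structure. In "Hacker, Hoaxer, Whistleblower, Spy," her forthcoming book about Anonymous, Coleman writes that among Anons, "individuals, and mavericks in particular, still defer on major matters and put the collective first, especially in matters that generate lulz."</p>

<p>Anons mockingly call such maverick members "ego-crazed lunatics" and "fame-seeking lunatics." (Many Anons, however, no longer casually use such offensive labels.) "But surprisingly few members break the rules," Coleman said, contrary to the common perception. "The ones who do, like Commander X, get shunned within the group." Last year, someone wrote on an online forum, "I stopped paying attention to him when he started comparing himself to Batman."</p>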

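<p>Peter Fein, an online activist known by the handle n0pants, was one of many Anons who objected to Doyon's grandstanding. Fein looked at the PLF's website, whose front page displayed an insignia and the group's manifesto: "Fighting for the liberation of the souls of all humanity." Fein was dismayed to find that Doyon had registered the site under his real name, which left Anons like Fein, and others looking to make trouble, with no opening. "If someone wants to DDoS my site, that's fine," Fein recalled telling Doyon in a private chat, "but if you do, I'll kick your ass."</p>

<p>On February 5, 2011, the Financial Times reported that Aaron Barr, the chief executive of a cybersecurity firm called HBGary Federal, had obtained a list of Anonymous's core members. Barr's findings indicated that one of its three top leaders was "Commander X," a hacker hiding out in California who was capable of "planning some large-scale cyberattacks." Barr contacted the FBI and handed over his findings.</p>

<p>On February 5, 2011, the Financial Times reported that Aaron Barr, the chief executive of a cybersecurity firm called HBGary Federal, had obtained a list of Anonymous's core members. Barr's findings indicated that one of its three top leaders was "Commander X," a hacker hiding out in California and capable of "planning some large-scale cyberattacks." Barr contacted the FBI and handed over his findings.</p>

<p>Like Fein, Barr found that the PLF website was registered to a Christopher Doyon, with an address on Haight Street. Based on his research on Facebook and in IRC chat rooms, Barr concluded that "Commander X" was really Benjamin Spock de Vries, an online activist who lived near Haight Street. Barr contacted de Vries through Facebook. "Please tell the rank and file of the group that I'm not coming after you," Barr wrote. "I just want the 'leadership' to know my intentions."</p>

<p>Like Fein, Barr found that the PLF website was registered to a Christopher Doyon, with an address on Haight Street. Based on his research on Facebook and in IRC chat rooms, Barr concluded that "Commander X" was really Benjamin Spock de Vries, an online activist who lived near Haight Street. Barr contacted de Vries through Facebook. "Please tell the others in your group that I'm not coming after you," Barr wrote. "I just want the 'leadership' to know my intentions."</p>

<p>"'Leadership'? LOL, you're killing me," de Vries replied.</p>

<p>The day after the Financial Times story ran, Anonymous struck back. HBGary Federal's website was defaced. Barr's personal Twitter account was hijacked, thousands of his e-mails were leaked online, and Anons published his home address and other personal information, a set of punishments known as "doxing." Less than a month later, Barr resigned from HBGary Federal.</p>

<p>The day after the Financial Times story ran, Anonymous struck back. HBGary Federal's website was defaced. Barr's personal Twitter account was hijacked, thousands of his e-mails were leaked online, and Anons published his home address and other personal information. This was the price of acting rashly. Less than a month later, Barr resigned from HBGary Federal.</p>

<h2>6</h2>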

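@ -180,17 +180,17 @@

<center><small>"This is something I learned at TED summer camp."</small></center>

<p>He kept a close eye on Anonymous's internal news. That spring, six elite Anons named in Barr's report formed a group called Lulz Security, or LulzSec. As the name suggests, its members felt that Anonymous had become too serious; their goal was to bring back the pursuit of lulz. While Anonymous was still supporting the protesters of the Arab Spring, LulzSec hacked the website of the Public Broadcasting Service (PBS) and posted a fake story claiming that the late rapper Tupac Shakur was alive and living in New Zealand.</p>

<p>He kept a close eye on Anonymous's internal news. That spring, six elite Anons named in Barr's report formed a group called Lulz Security, or LulzSec. As the name suggests, its members felt that Anonymous had become too serious; their goal was to bring back the pursuit of lulz. While Anonymous was still supporting the protesters of the Arab Spring, LulzSec hacked the website of the Public Broadcasting Service (PBS) and posted a fake story claiming that the late rapper Tupac Shakur was alive and living in New Zealand.</p>

<p>Anons share text through the site Pastebin.com. There, LulzSec posted a statement reading, "Unfortunately, we've noticed that NATO and our good president Barack, friend of Osama bin Llama, the 24th-century Obama, have recently stepped up their attention to us hackers. They treat hacking as an act of war." The loftier the target, the bigger the lulz. On June 15, LulzSec claimed responsibility for an attack on the CIA's website, tweeting "Tango down (that is, target down): cia.gov. This is a provocation."</p>

<p>Anons share text through the site Pastebin.com. There, LulzSec posted a statement reading, "Unfortunately, we've noticed that NATO and our good friend Barack Osama, the 24th-century Obama, have upped the stakes regarding hackers. They treat hacking as an act of war." The loftier the target, the bigger the lulz. On June 15, LulzSec claimed responsibility for an attack on the CIA's website, tweeting "Tango down (that is, target down): cia.gov. This is a provocation."</p>

<p>On June 20, 2011, Ryan Cleary, a nineteen-year-old member of LulzSec, was arrested for a DDoS attack on the CIA's website. In July, FBI agents arrested fourteen other hackers for the DDoS attack on PayPal seven months earlier. Each of the fourteen faced fifteen years in prison and a $5 million fine. They were charged under the computer fraud and abuse statute with conspiracy and intentional damage to protected computers. (The law gives prosecutors discretion, and it came under wide criticism last year after the Internet activist Aaron Swartz killed himself while facing up to 35 years in prison.)</p>

<p>On June 20, 2011, Ryan Cleary, a nineteen-year-old member of LulzSec, was arrested for a DDoS attack on the CIA's website. In July, FBI agents arrested fourteen other hackers for the DDoS attack on PayPal seven months earlier. Each of the fourteen faced fifteen years in prison and a $500,000 fine. They were charged under the Computer Fraud and Abuse Act with conspiracy and intentional damage to protected computers. (The law gives prosecutors broad discretion, and it came under wide criticism last year after the Internet activist Aaron Swartz killed himself while facing up to 35 years in prison.)</p>

<p>Jake (Topiary) Davis, a LulzSec member who could not afford his legal fees, wrote a letter asking the group's members for help. Doyon went into IRC to spread the word that Davis needed help:</p>

<blockquote>CommanderX: So please, everyone, read the letter and help Topiary...</blockquote>

<blockquote>Toad: You're as well-informed as a [bleep].</blockquote>

<blockquote>Toad: You'll really do anything to grab attention!</blockquote>

<blockquote>Toad: So you've heard from Topiary?</blockquote>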

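@ -198,15 +198,15 @@

<blockquote>Katanon: Sigh...</blockquote>

<p>Doyon grew bolder. After authorities in Florida arrested activists who supported the homeless, he DDoSed the website of the Orlando Chamber of Commerce. He launched the attack from his own laptop over public Wi-Fi and made little effort to hide his tracks online. "It was brave, but also stupid," a senior PLF member who calls himself Kalli told me. "He didn't seem to care whether he got caught. He was a total kamikaze hacker."</p>

<p>Doyon grew bolder. After authorities in Florida arrested activists who supported the homeless, he attacked the website of the Orlando Chamber of Commerce. He launched the attack from his own laptop over public Wi-Fi and made little effort to hide his tracks online. "It was brave, but also stupid," a senior PLF member who calls himself Kalli told me. "He didn't seem to care whether he got caught. He was a total kamikaze hacker."</p>

<p>Two months later, Doyon took part in a DDoS attack on the Bay Area Rapid Transit system to protest the killing of a homeless man named Charles Hill by a BART police officer. Doyon then appeared on the "CBS Evening News" to defend the operation, with his voice disguised, of course, and his face replaced by a banana. He compared DDoS attacks to acts of civil protest. "It's really no different from occupying a seat at a Woolworth's lunch counter, really," he said. The CBS anchor Bob Schieffer quipped, "As far as I can see, it's not exactly a civil-rights movement."</p>

<p>Two months later, Doyon took part in a DDoS attack on the Bay Area Rapid Transit system to protest the killing of a homeless man named Charles Hill by a BART police officer. Doyon then appeared on the "CBS Evening News" to defend the operation, with his voice disguised, of course, and his face covered by a bandanna. He compared DDoS attacks to acts of civil protest. "It's really no different from occupying a seat at a Woolworth's lunch counter, really," he said. The CBS anchor Bob Schieffer quipped, "As far as I can see, it's not exactly a civil-rights movement."</p>

<p>On September 22, 2011, Doyon was arrested at a café in Mountain View, California, and charged with "using the Internet to intentionally damage a protected computer." He was held for a week and then released after signing an agreement. Two days later, against his lawyer's advice, he announced a press conference at the Santa Cruz County courthouse. He wore his hair in a ponytail and had on sunglasses, a black pirate hat, and a multicolored bandanna around his neck.</p>

<p>On September 22, 2011, Doyon was arrested at a café in Mountain View, California, and charged with "using the Internet to intentionally damage a protected computer." He was held for a week and then released after signing an agreement. Two days later, against his lawyer's advice, he announced a gathering at the Santa Cruz County courthouse. He wore his hair in a ponytail and had on sunglasses, a black pirate hat, and a multicolored bandanna around his neck.</p>

<p>Doyon revealed his identity in an extremely theatrical way. "I am Commander X," he told the crowd of reporters, raising his fist. "As a member of Anonymous, as a core member, I'm very proud." He told one reporter in an interview, "To be a top hacker, all you need is a computer and a pair of sunglasses. Any computer will do."</p>

<p>Doyon unmasked himself in an extremely theatrical way. "I am Commander X," he told the crowd of reporters, raising his fist. "As a member of Anonymous, as a core member, I'm very proud." He told one reporter in an interview, "To be a top hacker, all you need is a computer and a pair of sunglasses. Any computer will do."</p>

<p>Kalli worried that Doyon would inadvertently leak the group's secrets or information about other Anons. "This is the weakest link of all; if something goes wrong here, the group is finished," he told me. Josh Covelli, the Anon who had helped Doyon so much in the Peace Camp operation, told me that when he saw the video of Doyon's press conference online, his "jaw hit the floor." "His behavior was getting more and more unpredictable," Covelli said.</p>

<p>Kalli worried that Doyon would inadvertently leak the group's secrets or information about other Anons. "This is the weakest link of all; if something goes wrong here, the group is finished," he told me. Josh Covelli, the Anon who had helped Doyon so much in the Peace Camp operation, told me that when he saw the video of Doyon's press conference online, his "jaw dropped to the floor." "His behavior was getting more and more unpredictable," Covelli said.</p>

<p>Three months later, Doyon's lawyer, Jay Leiderman, appeared for a hearing in federal court in San Jose. Leiderman had not heard from Doyon in weeks. "I need to know the specific reason the defendant is absent," the judge said. Leiderman could not answer. Doyon also missed another hearing two weeks later. The prosecution said, "Clearly, it appears the defendant has fled."</p>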

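@ -214,7 +214,7 @@

<p>Operation Xport was the first operation of its kind by Anonymous. Its goal was to help Doyon, by now a fugitive facing two sets of charges, escape the country. It was coordinated by Kalli and another Anon veteran who had met Doyon at an acid party in Cambridge in the eighties. This veteran, a retired software executive, was highly respected within the group.</p>

<p>Doyon's final stop was to be the software executive's home, in a remote rural part of Canada. In December 2011, he hitchhiked to San Francisco and made his way to the group's base in the city. He found his designated contact, who took him to a pizza place in Oakland. At 2 A.M., over the pizza place's wireless network, Doyon received an encrypted chat message.</p>

<p>Doyon's destination was the software executive's home, in a remote rural part of Canada. In December 2011, he hitchhiked to San Francisco and made his way to the group's base in the city. He found his designated contact, who took him to a pizza place in Oakland. At 2 A.M., over the pizza place's wireless network, Doyon received an encrypted chat message.</p>

<p>"Are you near a window right now?" the message asked.</p>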

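@ -222,13 +222,13 @@

<p>"Look across the street. See a green mailbox? In fifteen minutes, go stand next to that mailbox, take off your backpack, and put your mask on top of it."</p>

<p>For several weeks, Doyon moved between safe houses in the Bay Area, following the instructions that came over encrypted chat. Finally, he took a long-distance bus to Seattle, where a friend of the software executive met him. This friend, a wealthy retiree, used Google Earth to help Doyon plan his route to Canada. They went together to an outdoor-supply store, where the friend bought Doyon $1,500 worth of gear, including hiking boots and a new backpack. Then he drove Doyon north for two hours, to a remote area only a few hundred miles from the border. There Doyon met Amber Lyon.</p>

<p>For several weeks, Doyon moved between safe houses in the Bay Area, following the instructions that came over encrypted chat. Finally, he took a long-distance bus to Seattle, where a friend of the software executive met him. This friend, a wealthy retiree, spent several hours using Google Earth to help Doyon plan his route to Canada. They went together to an outdoor-supply store, where the friend bought Doyon $1,500 worth of gear, including hiking boots and a new backpack. Then he drove Doyon north for two hours, to a remote area only a few hundred miles from the border. There Doyon met Amber Lyon.</p>

<p>A few months earlier, Lyon, a broadcast journalist, had interviewed Doyon for a CNN program about Anonymous. Doyon liked her reporting, and they had stayed in touch. Lyon asked to come along on Doyon's escape to shoot footage for a possible documentary. The software executive thought this was too risky, but Doyon agreed. "I think he wanted to be famous," Lyon told me. For four days, she filmed Doyon hiking north and sleeping in the woods. "None of it seemed very carefully planned," Lyon recalled. "He really had nowhere to live, so he wanted to flee the country."</p>

<center><img src="http://www.newyorker.com/wp-content/uploads/2014/09/140908_a18506-600.jpg" /></center>

<center><small>"This is our warehouse for all kinds of feelings. If you find a feeling, bring it here and lock it up."</small></center>

<center><small>"This is where we store all kinds of emotions. If you have an emotion, bring it here and lock it up."</small></center>

<p>On February 11, 2012, a message appeared on Pastebin. "The PLF is pleased to announce that Commander X, also known as Christopher Mark Doyon, has left the jurisdiction of the United States and reached a relatively safe location in Canada," it read. "The PLF calls on the U.S. government to come to its senses and stop its pointless harassment and surveillance, not only of members of Anonymous but of all activist groups alike."</p>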

@ -236,13 +236,13 @@

Doyon and the software executive stayed in the cabin in Canada for a few days. In a chat with Barrett Brown, Doyon could barely contain his joy.

<blockquote>BarrettBrown: You should be safe enough now, and the rest?...</blockquote>

<blockquote>BarrettBrown: You've got enough safe places to hide and so on now?</blockquote>

<blockquote>CommanderX: Yes, I'm very safe now; in Canada I'm short of neither money nor places to hide.</blockquote>

<blockquote>CommanderX: Amber Lyon wants a photo of you.</blockquote>

<blockquote>CommanderX: To hell with that [bleep] weirdo, Barrett, I'm sure you'll like what I told her to say about you.</blockquote>

<blockquote>CommanderX: [bleep] you, you weirdo, Barrett, I'm sure you'll like my reply. I've always loved you and always will.</blockquote>

<blockquote>CommanderX: :-)</blockquote>

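@ -258,13 +258,13 @@ Doyon and the software executive stayed in the cabin in Canada for a few days. In a chat with Barr

<blockquote>BarrettBrown: Sure, I guess we'll have to do the same before long</blockquote>

<p>Ten days after Doyon fled, the Wall Street Journal reported that Keith Alexander, then director of the National Security Agency and head of U.S. Cyber Command, had voiced serious concern about Anonymous in secret meetings at the White House and elsewhere. Alexander warned that within two years the group could become a serious threat to the national power grid. General Martin Dempsey, chairman of the Joint Chiefs of Staff, told reporters that these people were enemies of the state. "They have the ability to spread these techniques of causing damage with malware to other fringe groups," he said, and added, "We have to guard against that."</p>

<p>Ten days after Doyon fled, the Wall Street Journal reported that Keith Alexander, then director of the National Security Agency and head of U.S. Cyber Command, had voiced serious concern about Anonymous in secret meetings held at the White House and elsewhere. Alexander warned that within two years the group could become a serious threat to the national power grid. General Martin Dempsey, chairman of the Joint Chiefs of Staff, told reporters that these people were enemies of the state. "They have the ability to spread these techniques of causing damage with malware to other fringe groups," he said, and added, "We have to guard against that."</p>

<p>On March 8, members of Congress held a meeting on cybersecurity in a sensitive compartmented information facility near the Capitol. Several of the country's top security officials attended, including Alexander, Dempsey, FBI Director Robert Mueller, and Secretary of Homeland Security Janet Napolitano. At the meeting, a computer simulation showed participants what a cyberattack on power facilities along the East Coast might look like. Anonymous is not thought to be capable of an attack on that scale, but security officials worried that it might join forces with more dangerous groups. "As we deal with growing cyber risks, the government is still working through the specific details of how to respond," Napolitano told me. Speaking of potential cybersecurity threats, she added, "We generally treat Anonymous's operations as an A-level threat."</p>

<p>Anonymous may be the most powerful anarchist hacker group in the world today. Even so, it has never shown any sign of, or desire for, damaging public infrastructure. Some cybersecurity experts say the warnings about Anonymous are alarmist. "There's a big gap between issuing a declaration of war in Orlando and actually launching something like the Stuxnet worm," James Andrew Lewis, of the Center for Strategic and International Studies, told me, referring to the cyberattack the United States and Israel are reported to have launched against Iran's nuclear facilities beginning around 2007. Yochai Benkler, a professor at Harvard Law School, told me, "What we're seeing is spending justified in the name of defense that would otherwise be hard to justify."</p>

<p>Keith Alexander, who recently retired from government, declined to comment, saying he could not speak for the NSA, the FBI, the CIA, or the Department of Homeland Security. Although Anons have never really targeted government computer networks, they have a strong urge to retaliate against anyone who angers them. Andy Purdy, the former head of the National Cyber Security Division at the Department of Homeland Security, told me that people "fear retaliation," and that neither institutions nor individuals are willing to side publicly with the government against Anonymous. "Everyone is very vulnerable," he said.</p>

<p>Keith Alexander, who recently retired from government, declined to comment, saying he could not speak for the NSA, the FBI, the CIA, or the Department of Homeland Security. Although Anons have never really targeted government computer networks, they have a strong urge to retaliate against anyone who angers them. Andy Purdy, the former head of the National Cyber Security Division at the Department of Homeland Security, told me that people "fear retaliation," and that neither institutions nor individuals are willing to side publicly with the government against Anonymous. "Everyone is an easy target," he said.</p>

<h2>9</h2>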

@ -272,7 +272,7 @@ Doyon 和软件主管在加拿大的小木屋里呆了几天。在一次同 Barr

|

||||

|

||||

<p>Doyon 感到很烦躁,但他还是继续扮演着一名黑客——以此吸引关注。他在多伦多上映的纪录片上以戴着面具的匿名者形象出现。在接受《National Post》的采访时,他向记者大肆吹嘘未经证实的消息,“我们已经入侵了美国政府的所有机密数据库。现在的问题是我们该何时泄露这些机密数据,而不是我们是否会泄露。”</p>

|

||||

|

||||

<p>2013 年 1 月,在另一名匿名者介入俄亥俄州<a href="https://gist.githubusercontent.com/SteveArcher/cdffc917a507f875b956/raw/c7b49cc11ae1e790d30c87f7b8de95482c18ec74/%E6%96%AF%E6%89%98%E6%9C%AC%E7%BB%B4%E5%B0%94%E8%BD%AE%E5%A5%B8%E6%A1%88%E5%86%8D%E8%B5%B7%E9%A3%8E%E6%B3%A2%20%E9%BB%91%E5%AE%A2%E7%BB%84%E7%BB%87%E4%BB%8B%E5%85%A5">斯托本维尔未成年少女轮奸案</a>,发起抗议行动之后,Doyon 重新启用了他两年前创办的网站 LocalLeaks,作为那起轮奸事件的信息汇总处理中心。如同许多其他“匿名者”组织的所作所为一样,LocalLeaks 网站非常具有影响力,但却也不承担任何责任。LocalLeaks 网站是第一家公布 12 分钟斯托本维尔高中毕业生猥亵视频的网站,这激起了众多当事人的愤怒。LocalLeaks 网站上同时披露了几份未被法庭收录的关于案件的材料,并且由此不小心透漏出了案件受害人的名字。Doyon向我承认他公开这些未经证实的信息的策略是存在争议的,但他同时回忆起自己当时的想法,“我们可以选择去除这些斯托本维尔案件的材料...也可以选择公开所有我们搜集的信息,基本上,给公众以提醒,不过,前提是你们得相信我们。”</p>

|

||||

<p>2013 年 1 月,在另一名匿名者介入俄亥俄州<a href="https://gist.githubusercontent.com/SteveArcher/cdffc917a507f875b956/raw/c7b49cc11ae1e790d30c87f7b8de95482c18ec74/%E6%96%AF%E6%89%98%E6%9C%AC%E7%BB%B4%E5%B0%94%E8%BD%AE%E5%A5%B8%E6%A1%88%E5%86%8D%E8%B5%B7%E9%A3%8E%E6%B3%A2%20%E9%BB%91%E5%AE%A2%E7%BB%84%E7%BB%87%E4%BB%8B%E5%85%A5">斯托本维尔未成年少女强奸案</a>,发起抗议行动之后,Doyon 重新启用了他两年前创办的网站 LocalLeaks,作为那起强奸事件的信息汇总处理中心。如同许多其他“匿名者”组织的所作所为一样,LocalLeaks 网站非常具有影响力,但却也不承担任何责任。LocalLeaks 网站是第一家公布 12 分钟斯托本维尔高中毕业生猥亵视频的网站,这激起了众多当事人的愤怒。LocalLeaks 网站上同时披露了几份未被法庭收录的关于案件的材料,并且由此不小心透漏出了案件受害人的名字。Doyon向我承认他公开这些未经证实的信息的策略是存在争议的,但他同时回忆起自己当时的想法,“我们可以选择销毁这些斯托本维尔案件的材料...也可以选择公开所有我们搜集的信息,基本上,给公众以提醒,不过,前提是你们得相信我们。”</p>

|

||||

|

||||

<p>2013 年 3 月,一个名为 Rustle League 的组织入侵了 Doyon 的 Twitter 账户,该组织此前经常挑衅“匿名者”组织。Rustle League 的领导者之一 Shm00p 告诉我,“我们的本意并不是伤害那些家伙,只不过,哦,那些家伙说的话你就当是在放屁好了——我会这么做只是因为我感到很好笑。” Rustle League 组织使用 Doyon 的账户发布了含有如 www.jewsdid911.org 链接这样的,种族主义和反犹太主义的信息。</p>

@ -290,37 +290,37 @@ Doyon and the software executive spent a few days in a cabin in Canada. On one occasion with Barr

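<p>We arranged to meet in person. Doyon insisted that I send him the details of the meeting in advance over encrypted chat. I flew for several hours, rented a car, drove to a remote town in Canada, and disabled my phone.</p>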

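<p>Eventually, I met Doyon in a small apartment in a quiet residential area. He wore a green military jacket and a T-shirt bearing the Anonymous logo: a figure in black whose face is replaced by a question mark. The apartment had almost no furniture and reeked of smoke. He talked about American politics ("I've barely voted in any elections; they're nothing but rigged games"), militant Islam ("I believe people in the Nigerian government simply colluded to create an al-Qaeda affiliate called 'Boko Haram'"), and his own views on Anonymous ("Those self-described weirdos are truly rotten, I mean, evil people").</p>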

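<p>Eventually, I met Doyon in a small apartment in a quiet residential area. He wore a green military jacket and a T-shirt bearing the Anonymous logo: a figure in black whose face is replaced by a question mark. The apartment had almost no furniture and reeked of smoke. He talked about American politics ("I've barely voted in any elections; they're nothing but rigged games"), militant Islam ("I believe people in the Nigerian government simply colluded to create an al-Qaeda affiliate called 'Boko Haram'"), and his own views on Anonymous ("Those self-described weirdos are truly rotten, actually evil people").</p>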

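<p>Doyon had shaved off his beard, but he looked even more haggard. He said that was because he had been sick, and he rarely went out. On a tiny desk sat two laptops, a stack of books on Buddhism, and an ashtray overflowing with ashes. A Guy Fawkes mask hung on another bare, yellowing wall. He told me, "The so-called 'Commander X' is nothing but a little old man in a great deal of pain."</p>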

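<p>This past Christmas, the founder of AnonInsiders, a new Anonymous website, visited Doyon and brought him pie and cigarettes. Doyon asked his visiting friend whether he would take up his mantle as supreme commander of the PLF, hoping to hand over the "keys to the kingdom": all of his passwords, along with several confidential documents about Anonymous. The friend politely declined. "I have a life of my own," he told me by way of explanation.</p>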

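<p>This past Christmas, the founder of AnonInsiders, a new Anonymous website, visited Doyon and brought him pie and cigarettes. Doyon asked his visiting friend whether he would succeed him as supreme commander of the PLF, hoping to hand over the "keys to the kingdom": all of his passwords, along with several confidential documents about Anonymous. The friend politely declined. "I have a life of my own," he told me by way of explanation.</p>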

<h2>11</h2>

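<p>At 5:09 p.m. local time on August 9, 2014, Kareem (Tef Poe) Jackson, a rapper and activist from Dellwood, a suburb of St. Louis, Missouri, tweeted about a series of alarming developments in a neighboring town. "It's basically safe to say Ferguson is under martial law; nobody can get in or out," he wrote. "Friends in this country and on the internet, please help us!!!" Five hours earlier in Ferguson, Michael Brown, an unarmed 18-year-old African American, had been shot dead by a white police officer. The officer said he fired because Brown had reached for his gun. But Dorian Johnson, a friend who was with Brown at the time, said the only thing Brown did wrong was refuse to get out of the middle of the street.</p>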

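<p>At 5:09 p.m. local time on August 9, 2014, Kareem (Tef Poe) Jackson, a rapper and activist from Dellwood, a suburb of St. Louis, Missouri, tweeted about a series of alarming developments in a neighboring town. "It's basically safe to say Ferguson is under martial law; nobody can get in or out," he wrote. "Friends at home and abroad, please help us!!!" Five hours earlier in Ferguson, Michael Brown, an unarmed 18-year-old African American, had been shot dead by a white police officer. The officer said he fired because Brown had reached for his gun. But Dorian Johnson, a friend who was with Brown at the time, said the only thing Brown did wrong was refuse to get out of the middle of the street.</p>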
