mirror of

https://github.com/LCTT/TranslateProject.git

synced 2025-01-25 23:11:02 +08:00

commit

9bb3165232

101

published/20160429 Why and how I became a software engineer.md

Normal file

101

published/20160429 Why and how I became a software engineer.md

Normal file

@ -0,0 +1,101 @@

|

||||

我成为软件工程师的原因和经历

|

||||

==========================================

|

||||

|

||||

|

||||

|

||||

1989 年乌干达首都,坎帕拉。

|

||||

|

||||

我明智的父母决定与其将我留在家里添麻烦,不如把我送到叔叔的办公室学学电脑。几天后,我和另外六、七个小孩,还有一台放置在课桌上的崭新电脑,一起置身于 21 层楼的一间狭小房屋中。很明显我们还不够格去碰那家伙。在长达三周无趣的 DOS 命令学习后,美好时光来到,终于轮到我来输 **copy doc.txt d:** 啦。

|

||||

|

||||

那将文件写入五英寸软盘的奇怪的声音,听起来却像音乐般美妙。那段时间,这块软盘简直成为了我的至宝。我把所有可以拷贝的东西都放在上面了。然而,1989 年的乌干达,人们的生活十分“正统”,相比较而言,捣鼓电脑、拷贝文件还有格式化磁盘就称不上“正统”。于是我不得不专注于自己接受的教育,远离计算机科学,走入建筑工程学。

|

||||

|

||||

之后几年里,我和同龄人一样,干过很多份工作也学到了许多技能。我教过幼儿园的小朋友,也教过大人如何使用软件,在服装店工作过,还在教堂中担任过引座员。在我获取堪萨斯大学的学位时,我正在技术管理员的手下做技术助理,听上去比较神气,其实也就是搞搞学生数据库而已。

|

||||

|

||||

当我 2007 年毕业时,计算机技术已经变得不可或缺。建筑工程学的方方面面都与计算机科学深深的交织在一起,所以我们不经意间学了些简单的编程知识。我对于这方面一直很着迷,但我不得不成为一位“正统”的工程师,由此我发展了一项秘密的私人爱好:写科幻小说。

|

||||

|

||||

在我的故事中,我以我笔下的女主角的形式存在。她们都是编程能力出众的科学家,总是卷入冒险,并用自己的技术发明战胜那些渣渣们,有时甚至要在现场发明新方法。我提到的这些“新技术”,有的是基于真实世界中的发明,也有些是从科幻小说中读到的。这就意味着我需要了解这些技术的原理,而且我的研究使我关注了许多有趣的 reddit 版块和电子杂志。

|

||||

|

||||

### 开源:巨大的宝库

|

||||

|

||||

那几周在 DOS 命令上花费的经历对我影响巨大,我在一些非专业的项目上耗费心血,并占据了宝贵的学习时间。Geocities 刚向所有 Yahoo! 用户开放时,我就创建了一个网站,用于发布一些用小型数码相机拍摄的个人图片。我建立多个免费网站,帮助家人和朋友解决一些他们所遇到的电脑问题,还为教堂搭建了一个图书馆数据库。

|

||||

|

||||

这意味着,我需要一直研究并尝试获取更多的信息,使它们变得更棒。互联网上帝保佑我,让开源进入我的视野。突然之间,30 天试用期和 license 限制对我而言就变成了过去式。我可以完全不受这些限制,继续使用 GIMP、Inkscape 和 OpenOffice。

|

||||

|

||||

### 是正经做些事情的时候了

|

||||

|

||||

我很幸运,有商业伙伴喜欢我的经历。她也是个想象力丰富的人,期待更高效、更便捷的互联世界。我们根据我们以往成功道路中经历的弱点制定了解决方案,但执行却成了一个问题。我们都缺乏给产品带来活力的能力,每当我们试图将想法带到投资人面前时,这表现的尤为突出。

|

||||

|

||||

我们需要学习编程。于是 2015 年夏末,我们来到 Holberton 学校。那是一所座落于旧金山,由社区推进,基于项目教学的学校。

|

||||

|

||||

一天早晨我的商业伙伴来找我,以她独有的方式(每当她有疯狂想法想要拉我入伙时),进行一场对话。

|

||||

|

||||

**Zee**: Gloria,我想和你说点事,在你说“不”前能先听我说完吗?

|

||||

|

||||

**Me**: 不行。

|

||||

|

||||

**Zee**: 为做全栈工程师,咱们申请上一所学校吧。

|

||||

|

||||

**Me**: 什么?

|

||||

|

||||

**Zee**: 就是这,看!就是这所学校,我们要申请这所学校来学习编程。

|

||||

|

||||

**Me**: 我不明白。我们不是正在网上学 Python 和…

|

||||

|

||||

**Zee**: 这不一样。相信我。

|

||||

|

||||

**Me**: 那…

|

||||

|

||||

**Zee**: 这就是不信任我了。

|

||||

|

||||

**Me**: 好吧 … 给我看看。

|

||||

|

||||

### 抛开偏见

|

||||

|

||||

我读到的和我们在网上看的的似乎很相似。这简直太棒了,以至于让人觉得不太真实,但我们还是决定尝试一下,全力以赴,看看结果如何。

|

||||

|

||||

要成为学生,我们需要经历四步选择,不过选择的依据仅仅是天赋和动机,而不是学历和编程经历。筛选便是课程的开始,通过它我们开始学习与合作。

|

||||

|

||||

根据我和我伙伴的经验, Holberton 学校的申请流程比其他的申请流程有趣太多了,就像场游戏。如果你完成了一项挑战,就能通往下一关,在那里有别的有趣的挑战正等着你。我们创建了 Twitter 账号,在 Medium 上写博客,为创建网站而学习 HTML 和 CSS, 打造了一个充满活力的在线社区,虽然在此之前我们并不知晓有谁会来。

|

||||

|

||||

在线社区最吸引人的就是大家有多种多样的使用电脑的经验,而背景和性别不是社区创始人(我们私下里称他们为“The Trinity”)做出选择的因素。大家只是喜欢聚在一块儿交流。我们都行进在通过学习编程来提升自己计算机技术的旅途上。

|

||||

|

||||

相较于其他的的申请流程,我们不需要泄露很多的身份信息。就像我的伙伴,她的名字里看不出她的性别和种族。直到最后一个步骤,在视频聊天的时候, The Trinity 才知道她是一位有色人种女性。迄今为止,促使她达到这个级别的只是她的热情和才华。肤色和性别并没有妨碍或者帮助到她。还有比这更酷的吗?

|

||||

|

||||

获得录取通知书的晚上,我们知道生活将向我们的梦想转变。2016 年 1 月 22 日,我们来到巴特瑞大街 98 号,去见我们的同学们 [Hippokampoiers][2],这是我们的初次见面。很明显,在见面之前,“The Trinity”已经做了很多工作,聚集了一批形形色色的人,他们充满激情与热情,致力于成长为全栈工程师。

|

||||

|

||||

这所学校有种与众不同的体验。每天都是向某一方面编程的一次竭力的冲锋。交给我们的工程,并不会有很多指导,我们需要使用一切可以使用的资源找出解决方案。[Holberton 学校][1] 认为信息来源相较于以前已经大大丰富了。MOOC(大型开放式课程)、教程、可用的开源软件和项目,以及线上社区等等,为我们完成项目提供了足够的知识。加之宝贵的导师团队来指导我们制定解决方案,这所学校变得并不仅仅是一所学校;我们已经成为了求学者的团体。任何对软件工程感兴趣并对这种学习方法感兴趣的人,我都强烈推荐这所学校。在这里的经历会让人有些悲喜交加,但是绝对值得。

|

||||

|

||||

### 开源问题

|

||||

|

||||

我最早使用的开源系统是 [Fedora][3],一个 [Red Hat][4] 赞助的项目。与 一名IRC 成员交流时,她推荐了这款免费的操作系统。 虽然在此之前,我还未独自安装过操作系统,但是这激起了我对开源的兴趣和日常使用计算机时对开源软件的依赖性。我们提倡为开源贡献代码,创造并使用开源的项目。我们的项目就在 Github 上,任何人都可以使用或是向它贡献出自己的力量。我们也会使用或以自己的方式为一些既存的开源项目做出贡献。在学校里,我们使用的大部分工具是开源的,例如 Fedora、[Vagrant][5]、[VirtualBox][6]、[GCC][7] 和 [Discourse][8],仅举几例。

|

||||

|

||||

在向软件工程师行进的路上,我始终憧憬着有朝一日能为开源社区做出一份贡献,能与他人分享我所掌握的知识。

|

||||

|

||||

### 多样性问题

|

||||

|

||||

站在教室里,和 29 位求学者交流心得,真是令人陶醉。学员中 40% 是女性, 44% 是有色人种。当你是一位有色人种且为女性,并身处于这个以缺乏多样性而著名的领域时,这些数字就变得非常重要了。这是高科技圣地麦加上的绿洲,我到达了。

|

||||

|

||||

想要成为一个全栈工程师是十分困难的,你甚至很难了解这意味着什么。这是一条充满挑战但又有丰富回报的旅途。科技推动着未来飞速发展,而你也是美好未来很重要的一部分。虽然媒体在持续的关注解决科技公司的多样化的问题,但是如果能认清自己,清楚自己的背景,知道自己为什么想成为一名全栈工程师,你便能在某一方面迅速成长。

|

||||

|

||||

不过可能最重要的是,告诉大家,女性在计算机的发展史上扮演过多么重要的角色,以帮助更多的女性回归到科技界,而且在给予就业机会时,不会因性别等因素而感到犹豫。女性的才能将会共同影响科技的未来,以及整个世界的未来。

|

||||

|

||||

|

||||

------------------------------------------------------------------------------

|

||||

|

||||

via: https://opensource.com/life/16/4/my-open-source-story-gloria-bwandungi

|

||||

|

||||

作者:[Gloria Bwandungi][a]

|

||||

译者:[martin2011qi](https://github.com/martin2011qi)

|

||||

校对:[jasminepeng](https://github.com/jasminepeng)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:https://opensource.com/users/nappybrain

|

||||

[1]: https://www.holbertonschool.com/

|

||||

[2]: https://twitter.com/hippokampoiers

|

||||

[3]: https://en.wikipedia.org/wiki/Fedora_(operating_system)

|

||||

[4]: https://www.redhat.com/

|

||||

[5]: https://www.vagrantup.com/

|

||||

[6]: https://www.virtualbox.org/

|

||||

[7]: https://gcc.gnu.org/

|

||||

[8]: https://www.discourse.org/

|

||||

@ -1,51 +1,52 @@

|

||||

# aria2 (Command Line Downloader) command examples

|

||||

aria2 (命令行下载器)实例

|

||||

============

|

||||

|

||||

[aria2][4] is a Free, open source, lightweight multi-protocol & multi-source command-line download utility. It supports HTTP/HTTPS, FTP, SFTP, BitTorrent and Metalink. aria2 can be manipulated via built-in JSON-RPC and XML-RPC interfaces. aria2 automatically validates chunks of data while downloading a file. It can download a file from multiple sources/protocols and tries to utilize your maximum download bandwidth. By default all the Linux Distribution included aria2, so we can install easily from official repository. some of the GUI download manager using aria2 as a plugin to improve the download speed like [uget][3].

|

||||

[aria2][4] 是一个自由、开源、轻量级多协议和多源的命令行下载工具。它支持 HTTP/HTTPS、FTP、SFTP、 BitTorrent 和 Metalink 协议。aria2 可以通过内建的 JSON-RPC 和 XML-RPC 接口来操纵。aria2 下载文件的时候,自动验证数据块。它可以通过多个来源或者多个协议下载一个文件,并且会尝试利用你的最大下载带宽。默认情况下,所有的 Linux 发行版都包括 aria2,所以我们可以从官方库中很容易的安装。一些 GUI 下载管理器例如 [uget][3] 使用 aria2 作为插件来提高下载速度。

|

||||

|

||||

#### Aria2 Features

|

||||

### Aria2 特性

|

||||

|

||||

* HTTP/HTTPS GET support

|

||||

* HTTP Proxy support

|

||||

* HTTP BASIC authentication support

|

||||

* HTTP Proxy authentication support

|

||||

* FTP support(active, passive mode)

|

||||

* FTP through HTTP proxy(GET command or tunneling)

|

||||

* Segmented download

|

||||

* Cookie support

|

||||

* It can run as a daemon process.

|

||||

* BitTorrent protocol support with fast extension.

|

||||

* Selective download in multi-file torrent

|

||||

* Metalink version 3.0 support(HTTP/FTP/BitTorrent).

|

||||

* Limiting download/upload speed

|

||||

* 支持 HTTP/HTTPS GET

|

||||

* 支持 HTTP 代理

|

||||

* 支持 HTTP BASIC 认证

|

||||

* 支持 HTTP 代理认证

|

||||

* 支持 FTP (主动、被动模式)

|

||||

* 通过 HTTP 代理的 FTP(GET 命令行或者隧道)

|

||||

* 分段下载

|

||||

* 支持 Cookie

|

||||

* 可以作为守护进程运行。

|

||||

* 支持使用 fast 扩展的 BitTorrent 协议

|

||||

* 支持在多文件 torrent 中选择文件

|

||||

* 支持 Metalink 3.0 版本(HTTP/FTP/BitTorrent)

|

||||

* 限制下载、上传速度

|

||||

|

||||

#### 1) Install aria2 on Linux

|

||||

### 1) Linux 下安装 aria2

|

||||

|

||||

We can easily install aria2 command line downloader to all the Linux Distribution such as Debian, Ubuntu, Mint, RHEL, CentOS, Fedora, suse, openSUSE, Arch Linux, Manjaro, Mageia, etc.. Just fire the below command to install. For CentOS, RHEL systems we need to enable [uget][2] or [RPMForge][1] repository.

|

||||

我们可以很容易的在所有的 Linux 发行版上安装 aria2 命令行下载器,例如 Debian、 Ubuntu、 Mint、 RHEL、 CentOS、 Fedora、 suse、 openSUSE、 Arch Linux、 Manjaro、 Mageia 等等……只需要输入下面的命令安装即可。对于 CentOS、 RHEL 系统,我们需要开启 [uget][2] 或者 [RPMForge][1] 库的支持。

|

||||

|

||||

```

|

||||

[For Debian, Ubuntu & Mint]

|

||||

[对于 Debian、 Ubuntu 和 Mint]

|

||||

$ sudo apt-get install aria2

|

||||

|

||||

[For CentOS, RHEL, Fedora 21 and older Systems]

|

||||

[对于 CentOS、 RHEL、 Fedora 21 和更早些的操作系统]

|

||||

# yum install aria2

|

||||

|

||||

[Fedora 22 and later systems]

|

||||

[Fedora 22 和 之后的系统]

|

||||

# dnf install aria2

|

||||

|

||||

[For suse & openSUSE]

|

||||

[对于 suse 和 openSUSE]

|

||||

# zypper install wget

|

||||

|

||||

[Mageia]

|

||||

# urpmi aria2

|

||||

|

||||

[For Debian, Ubuntu & Mint]

|

||||

[对于 Debian、 Ubuntu 和 Mint]

|

||||

$ sudo pacman -S aria2

|

||||

|

||||

```

|

||||

|

||||

#### 2) Download Single File

|

||||

### 2) 下载单个文件

|

||||

|

||||

The below command will download the file from given URL and stores in current directory, while downloading the file we can see the (date, time, download speed & download progress) of file.

|

||||

下面的命令将会从指定的 URL 中下载一个文件,并且保存在当前目录,在下载文件的过程中,我们可以看到文件的(日期、时间、下载速度和下载进度)。

|

||||

|

||||

```

|

||||

# aria2c https://download.owncloud.org/community/owncloud-9.0.0.tar.bz2

|

||||

@ -62,9 +63,9 @@ Status Legend:

|

||||

|

||||

```

|

||||

|

||||

#### 3) Save the file with different name

|

||||

### 3) 使用不同的名字保存文件

|

||||

|

||||

We can save the file with different name & format while initiate downloading, using -o (lowercase) option. Here we are going to save the filename with owncloud.zip.

|

||||

在初始化下载的时候,我们可以使用 `-o`(小写)选项在保存文件的时候使用不同的名字。这儿我们将要使用 owncloud.zip 文件名来保存文件。

|

||||

|

||||

```

|

||||

# aria2c -o owncloud.zip https://download.owncloud.org/community/owncloud-9.0.0.tar.bz2

|

||||

@ -81,9 +82,9 @@ Status Legend:

|

||||

|

||||

```

|

||||

|

||||

#### 4) Limit download speed

|

||||

### 4) 下载速度限制

|

||||

|

||||

By default aria2 utilize full bandwidth for downloading file and we can’t use anything on server before download completion (Which will affect other service accessing bandwidth). So better use –max-download-limit option to avoid further issue while downloading big size file.

|

||||

默认情况下,aria2 会利用全部带宽来下载文件,在文件下载完成之前,我们在服务器就什么也做不了(这将会影响其他服务访问带宽)。所以在下载大文件时最好使用 `–max-download-limit` 选项来避免进一步的问题。

|

||||

|

||||

```

|

||||

# aria2c --max-download-limit=500k https://download.owncloud.org/community/owncloud-9.0.0.tar.bz2

|

||||

@ -100,9 +101,9 @@ Status Legend:

|

||||

|

||||

```

|

||||

|

||||

#### 5) Download Multiple Files

|

||||

### 5) 下载多个文件

|

||||

|

||||

The below command will download more then on file from the location and stores in current directory, while downloading the file we can see the (date, time, download speed & download progress) of file.

|

||||

下面的命令将会从指定位置下载超过一个的文件并保存到当前目录,在下载文件的过程中,我们可以看到文件的(日期、时间、下载速度和下载进度)。

|

||||

|

||||

```

|

||||

# aria2c -Z https://download.owncloud.org/community/owncloud-9.0.0.tar.bz2 ftp://ftp.gnu.org/gnu/wget/wget-1.17.tar.gz

|

||||

@ -122,11 +123,10 @@ Status Legend:

|

||||

|

||||

```

|

||||

|

||||

#### 6) Resume Incomplete download

|

||||

### 6) 续传未完成的下载

|

||||

|

||||

Make sure, whenever going to download big size of file (eg: ISO Images), i advise you to use -c option which will help us to resume the existing incomplete download from the state and complete as usual when we are facing any network connectivity issue or system problems. Otherwise when you are download again, it will initiate the fresh download and store to different file name (append .1 to the filename automatically). Note: If any interrupt happen, aria2 save file with .aria2 extension.

|

||||

当你遇到一些网络连接问题或者系统问题的时候,并将要下载一个大文件(例如: ISO 镜像文件),我建议你使用 `-c` 选项,它可以帮助我们从该状态续传未完成的下载,并且像往常一样完成。不然的话,当你再次下载,它将会初始化新的下载,并保存成一个不同的文件名(自动的在文件名后面添加 .1 )。注意:如果出现了任何中断,aria2 使用 .aria2 后缀保存(未完成的)文件。

|

||||

|

||||

<iframe marginwidth="0" marginheight="0" scrolling="no" frameborder="0" height="90" width="728" id="_mN_gpt_827143833" style="border-width: 0px; border-style: initial; font-style: inherit; font-variant: inherit; font-weight: inherit; font-stretch: inherit; font-size: inherit; line-height: inherit; font-family: inherit; vertical-align: baseline;"></iframe>

|

||||

```

|

||||

# aria2c -c https://download.owncloud.org/community/owncloud-9.0.0.tar.bz2

|

||||

[#db0b08 8.2MiB/21MiB(38%) CN:1 DL:3.1MiB ETA:4s]^C

|

||||

@ -142,7 +142,7 @@ db0b08|INPR| 3.3MiB/s|/opt/owncloud-9.0.0.tar.bz2

|

||||

Status Legend:

|

||||

(INPR):download in-progress.

|

||||

|

||||

aria2 will resume download if the transfer is restarted.

|

||||

如果重新启动传输,aria2 将会恢复下载。

|

||||

|

||||

# aria2c -c https://download.owncloud.org/community/owncloud-9.0.0.tar.bz2

|

||||

[#873d08 21MiB/21MiB(98%) CN:1 DL:2.7MiB]

|

||||

@ -158,9 +158,9 @@ Status Legend:

|

||||

|

||||

```

|

||||

|

||||

#### 7) Get the input from file

|

||||

### 7) 从文件获取输入

|

||||

|

||||

Alternatively wget can get the list of input URL’s from file and start downloading. We need to create a file and store each URL in separate line. Add -i option with aria2 command to perform this action.

|

||||

就像 wget 可以从一个文件获取输入的 URL 列表来下载一样。我们需要创建一个文件,将每一个 URL 存储在单独的行中。ara2 命令行可以添加 `-i` 选项来执行此操作。

|

||||

|

||||

```

|

||||

# aria2c -i test-aria2.txt

|

||||

@ -180,9 +180,9 @@ Status Legend:

|

||||

|

||||

```

|

||||

|

||||

#### 8) Download using 2 connections per host

|

||||

### 8) 每个主机使用两个连接来下载

|

||||

|

||||

The maximum number of connections to one server for each download. By default this will establish one connection to each host. We can establish more then one connection to each host to speedup download by adding -x2 (2 means, two connection) option with aria2 command

|

||||

默认情况,每次下载连接到一台服务器的最大数目,对于一条主机只能建立一条。我们可以通过 aria2 命令行添加 `-x2`(2 表示两个连接)来创建到每台主机的多个连接,以加快下载速度。

|

||||

|

||||

```

|

||||

# aria2c -x2 https://download.owncloud.org/community/owncloud-9.0.0.tar.bz2

|

||||

@ -199,9 +199,9 @@ Status Legend:

|

||||

|

||||

```

|

||||

|

||||

#### 9) Download Torrent Files

|

||||

### 9) 下载 BitTorrent 种子文件

|

||||

|

||||

We can directly download a Torrent files using aria2 command.

|

||||

我们可以使用 aria2 命令行直接下载一个 BitTorrent 种子文件:

|

||||

|

||||

```

|

||||

# aria2c https://torcache.net/torrent/C86F4E743253E0EBF3090CCFFCC9B56FA38451A3.torrent?title=[kat.cr]irudhi.suttru.2015.official.teaser.full.hd.1080p.pathi.team.sr

|

||||

@ -221,27 +221,27 @@ Status Legend:

|

||||

|

||||

```

|

||||

|

||||

#### 10) Download BitTorrent Magnet URI

|

||||

### 10) 下载 BitTorrent 磁力链接

|

||||

|

||||

Also we can directly download a Torrent files through BitTorrent Magnet URI using aria2 command.

|

||||

使用 aria2 我们也可以通过 BitTorrent 磁力链接直接下载一个种子文件:

|

||||

|

||||

```

|

||||

# aria2c 'magnet:?xt=urn:btih:248D0A1CD08284299DE78D5C1ED359BB46717D8C'

|

||||

|

||||

```

|

||||

|

||||

#### 11) Download BitTorrent Metalink

|

||||

### 11) 下载 BitTorrent Metalink 种子

|

||||

|

||||

Also we can directly download a Metalink file using aria2 command.

|

||||

我们也可以通过 aria2 命令行直接下载一个 Metalink 文件。

|

||||

|

||||

```

|

||||

# aria2c https://curl.haxx.se/metalink.cgi?curl=tar.bz2

|

||||

|

||||

```

|

||||

|

||||

#### 12) Download a file from password protected site

|

||||

### 12) 从密码保护的网站下载一个文件

|

||||

|

||||

Alternatively we can download a file from password protected site. The below command will download the file from password protected site.

|

||||

或者,我们也可以从一个密码保护网站下载一个文件。下面的命令行将会从一个密码保护网站中下载文件。

|

||||

|

||||

```

|

||||

# aria2c --http-user=xxx --http-password=xxx https://download.owncloud.org/community/owncloud-9.0.0.tar.bz2

|

||||

@ -250,9 +250,9 @@ Alternatively we can download a file from password protected site. The below com

|

||||

|

||||

```

|

||||

|

||||

#### 13) Read more about aria2

|

||||

### 13) 阅读更多关于 aria2

|

||||

|

||||

If you want to know more option which is available for wget, you can grep the details on your terminal itself by firing below commands..

|

||||

如果你希望了解了解更多选项 —— 它们同时适用于 wget,可以输入下面的命令行在你自己的终端获取详细信息:

|

||||

|

||||

```

|

||||

# man aria2c

|

||||

@ -261,17 +261,15 @@ or

|

||||

|

||||

```

|

||||

|

||||

Enjoy…)

|

||||

谢谢欣赏 …)

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.2daygeek.com/aria2-command-line-download-utility-tool/

|

||||

|

||||

作者:[MAGESH MARUTHAMUTHU][a]

|

||||

|

||||

译者:[译者ID](https://github.com/译者ID)

|

||||

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

译者:[yangmingming](https://github.com/yangmingming)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

@ -1,41 +1,43 @@

|

||||

谁需要 GUI?——L[inux 终端生存之道

|

||||

谁需要 GUI?—— Linux 终端生存之道

|

||||

=================================================

|

||||

|

||||

完全在 Linux 终端中生存并不容易,但这绝对是可行的。

|

||||

|

||||

|

||||

|

||||

### 处理常见功能的最佳 Linux shell 应用

|

||||

|

||||

你是否曾想像过完完全全在 Linux 终端里生存?即没有图形桌面。没有现代的 GUI 软件,仅有的就是文本——在 Linux shell 中,除了文本还是文本。这对于大部分人来说可能并不容易,但这是绝对可以逐渐做到的。[我最近在曾是在 30 天内只在 Linux shell 中生存][1]。下边提到的就是我最喜欢用的 shell 应用,它们可以用来处理多数的常用电脑功能(网页浏览、文字处理等)。这些显然有些不足,因为存文本实在是有些艰难。

|

||||

你是否曾想像过完完全全在 Linux 终端里生存?没有图形桌面,没有现代的 GUI 软件,只有文本 —— 在 Linux shell 中,除了文本还是文本。这可能并不容易,但这是绝对可行的。[我最近尝试完全在 Linux shell 中生存30天][1]。下边提到的就是我最喜欢用的 shell 应用,可以用来处理大部分的常用电脑功能(网页浏览、文字处理等)。这些显然有些不足,因为纯文本操作实在是有些艰难。

|

||||

|

||||

|

||||

|

||||

### 在 Linux 终端里发邮件

|

||||

|

||||

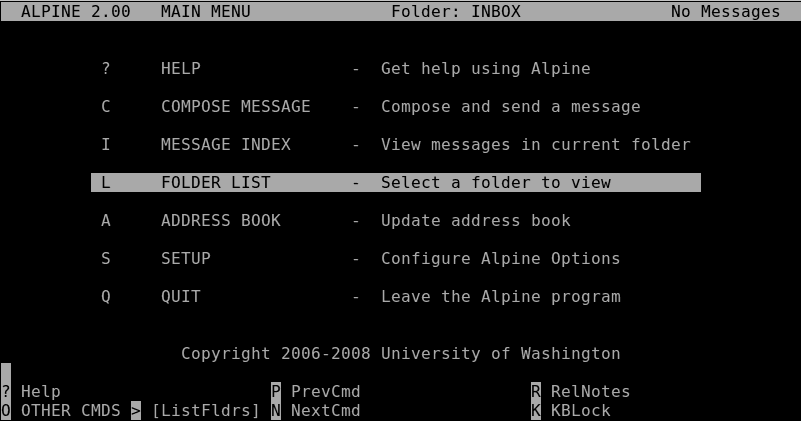

为了能在 终端里边发送邮件,我们对可选择性有极度的渴望。很多人会推荐 mutt 和 notmuch,这两个软件都功能强大并且表现非凡,但是我却更喜欢 alpine。为何?不仅是因为它的高效性,而且如果你习惯了像 Thunderbird 之类的 GUI 邮件客户端,你会发现 alpine 的界面与它们非常相似。

|

||||

要在终端里发邮件,选择有很多。很多人会推荐 mutt 和 notmuch,这两个软件都功能强大并且表现非凡,但是我却更喜欢 alpine。为何?不仅是因为它的高效性,还因为如果你习惯了像 Thunderbird 之类的 GUI 邮件客户端,你会发现 alpine 的界面与它们非常相似。

|

||||

|

||||

|

||||

|

||||

### 在 Linux 终端里浏览网页

|

||||

|

||||

我有句号要给你说:[w3m][5]。好吧,我承认这并不是一句话。但 w3m 的确是我在 Linux 终端想的作为 web 浏览器的选择。它能够柔和的将网页呈现出了,并且它也足够强大,让你在像 Google+ 之类的网站上发布消息(尽管方法并不有趣)。实际上 Lynx 可能才是那个基于文本的 Web 浏览器,但 w3m 还是我最想用的。

|

||||

我有一个词要告诉你:[w3m][5]。好吧,我承认这并不是一个真实的词。但 w3m 的确是我在 Linux 终端的 web 浏览器选择。它能够很好的呈现网页,并且它也足够强大,可以用来在像 Google+ 之类的网站上发布消息(尽管方法并不有趣)。 Lynx 可能是基于文本的 Web 浏览器的事实标准,但 w3m 还是我的最爱。

|

||||

|

||||

|

||||

|

||||

### 在 Linux 终端里编辑文本

|

||||

|

||||

对于编辑简单的文本文件,有那么一个应用是我最爱用的。不!不!不是 emacs,同样,也绝对不是 vim。对于编辑文本文件或者简要记下笔记,我喜欢使用 nano。对!就是 nano。它非常简单,易于学习并且使用方便。当然还有更多的软件富含其他特性,但 nano 的使用则是最令人愉快的。

|

||||

对于编辑简单的文本文件,有一个应用是我最的最爱。不!不!不是 emacs,同样,也绝对不是 vim。对于编辑文本文件或者简要记下笔记,我喜欢使用 nano。对!就是 nano。它非常简单,易于学习并且使用方便。当然还有更多的软件具有更多功能,但 nano 的使用是最令人愉快的。

|

||||

|

||||

|

||||

|

||||

### 在 Linux 终端里处理文字

|

||||

|

||||

在一个只有文本的 shell 之中,对于“文本编辑器”和“文字处理程序”实在是没有什么大的区别。但是像我这样需要大量写作的,如果有一些内置应用来长期协同则是非常必要的。而我最爱的就是 wordgrinder。它由足够的工具让我愉快工作——一个菜单驱动的界面(使用快捷键控制)并且支持开放文档、HTML或其他等多种文件格式。

|

||||

在一个只有文本的 shell 之中,“文本编辑器” 和 “文字处理程序” 实在没有什么大的区别。但是像我这样需要大量写作的,有一个专门用于长期写作的软件是非常必要的。而我最爱的就是 wordgrinder。它由足够的工具让我愉快工作——一个菜单驱动的界面(使用快捷键控制)并且支持 OpenDocument、HTML 或其他等多种文件格式。

|

||||

|

||||

|

||||

|

||||

### 在 Linux 终端里听音乐

|

||||

|

||||

当谈到在 shell 中播放音乐(比如 mp3,ogg 等),有一个软件绝对是卫冕之王:[cmus][7]。它支持所有你想得到的文件格式。它的使用超级简单,运行速度超级快,并且只使用系统少量的资源。如此清洁,如此流畅。这才是一个好的应用播发器的样子。

|

||||

当谈到在 shell 中播放音乐(比如 mp3,ogg 等),有一个软件绝对是卫冕之王:[cmus][7]。它支持所有你想得到的文件格式。它的使用超级简单,运行速度超级快,并且只使用系统少量的资源。如此清洁,如此流畅。这才是一个好的音乐播放器的样子。

|

||||

|

||||

|

||||

|

||||

@ -47,7 +49,7 @@

|

||||

|

||||

### 在 Linux 终端里发布推文

|

||||

|

||||

这不是开玩笑!由于 [rainbowstream][10] 的存在,我们已经在终端里发布推文了。尽管我经常遇到一些错误信息,但体验一番之后,它确实可以很好的工作。虽然不能像在网页版 Twitter,也不能像其移动版那样,但这是一个终端版的,来试一试吧。尽管它的功能还未完善,但是用起来还是很酷,不是吗?

|

||||

这不是开玩笑!由于 [rainbowstream][10] 的存在,我们已经可以在终端里发布推文了。尽管我时不时遇到一些 bug,但整体上,它工作得很好。虽然没有网页版 Twitter 或官方移动客户端那么好用,但这是一个终端版的 Twitter,来试一试吧。尽管它的功能还未完善,但是用起来还是很酷,不是吗?

|

||||

|

||||

|

||||

|

||||

@ -59,25 +61,25 @@

|

||||

|

||||

### 在 Linux 终端里管理进程

|

||||

|

||||

可以是 [htop][12]。与 top 相似,但更好用、更美观。有时候,我打开 htop 之后就让它一直运行。这样做是因为,它就是一个音乐视察器——当然,这里显示的是 RAM 和 CPU 使用情况。

|

||||

可以使用 [htop][12]。与 top 相似,但更好用、更美观。有时候,我打开 htop 之后就让它一直运行。没有原因,就是喜欢!从某方面说,它就像将音乐可视化——当然,这里显示的是 RAM 和 CPU 的使用情况。

|

||||

|

||||

|

||||

|

||||

### 在 Linux 终端了管理文件

|

||||

### 在 Linux 终端里管理文件

|

||||

|

||||

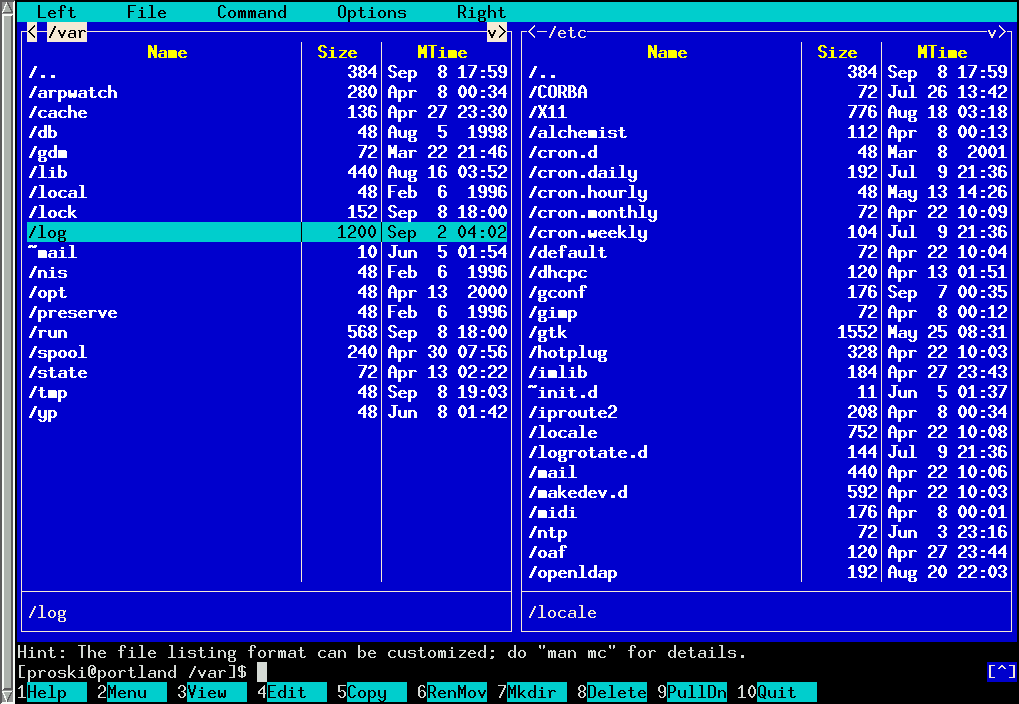

在一个纯文本终端里并不意味着你不能享受生活的美好之物。比方说一个出色的文件浏览和管理器。基于这样的场景,[Midnight Commander][13] 则是极其好用的一个。

|

||||

在一个纯文本终端里并不意味着你不能享受生活之美好。比方说一个出色的文件浏览和管理器。这方面,[Midnight Commander][13] 是很好用的。

|

||||

|

||||

|

||||

|

||||

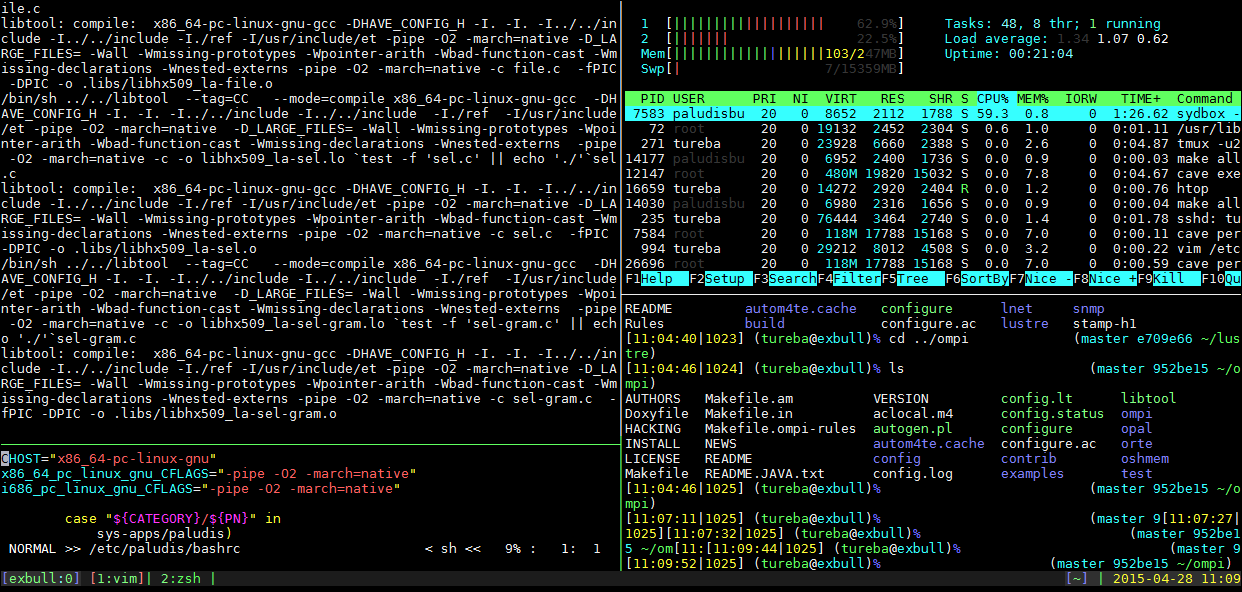

### 在 Linux 终端里管理终端窗口

|

||||

|

||||

如果你需要在终端里工作很长时间,你就需要一个多窗口终端了。基本上,它就是这样一个软件——可以让你将终端回话分割成一个自定义网格,让你同时使用和查看多个终端应用。对于 shell,它相当于一个瓦片时窗口管理器。我最喜欢用的就是 [tmux][14]。但 [GNU Screen][15] 也是很好用的。你可能需要花一些时间来学习怎么使用,但你会用之后,你就会迷上这样的用法。

|

||||

如果要在终端里工作很长时间,就需要一个多窗口终端了。它是这样一个软件 —— 可以将用户终端会话分割成一个自定义网格,从而可以同时使用和查看多个终端应用。对于 shell,它相当于一个平铺式窗口管理器。我最喜欢用的是 [tmux][14]。不过 [GNU Screen][15] 也很好用。学习怎么使用它们可能要花点时间,但一旦会用,就会很方便。

|

||||

|

||||

|

||||

|

||||

### 在 Linux 终端里进行讲稿演示

|

||||

|

||||

不管是 LibreOffice、Google slides、gasp 或者 PowerPoint。我都会在讲稿演示软件花费很多时间。如果这类软件有一个终端版的就美妙了。它应该叫做“[文本演示程序][16]”。很显然,没有图片,只是一个使用简单标记语言将幻灯片放在一起的简单程序。它不可能让你在其中插入猫的图片,但它却可以让你在终端里进行完整的演示。

|

||||

这类软件有 LibreOffice、Google slides、gasp 或者 PowerPoint。我在讲稿演示软件花费很多时间,很高兴有一个终端版的软件。它称做“[文本演示程序(tpp)][16]”。很显然,没有图片,只是一个使用简单标记语言将放在一起的幻灯片展示出来的简单程序。它不可能让你在其中插入猫的图片,但可以让你在终端里进行完整的演示。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

@ -85,7 +87,7 @@ via: http://www.networkworld.com/article/3091139/linux/who-needs-a-gui-how-to-li

|

||||

|

||||

作者:[Bryan Lunduke][a]

|

||||

译者:[GHLandy](https://github.com/GHLandy)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

校对:[jasminepeng](https://github.com/jasminepeng)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

@ -0,0 +1,58 @@

|

||||

为满足当今和未来 IT 需求,培训员工还是雇佣新人?

|

||||

================================================================

|

||||

|

||||

|

||||

|

||||

在数字化时代,由于 IT 工具不断更新,技术公司紧随其后,对 IT 技能的需求也不断变化。对于企业来说,寻找和雇佣那些拥有令人垂涎能力的创新人才,是非常不容易的。同时,培训内部员工来使他们接受新的技能和挑战,需要一定的时间,而时间要求常常是紧迫的。

|

||||

|

||||

[Sandy Hill][1] 对 IT 涉及到的多项技术都很熟悉。她作为 [Pegasystems][2] 项目的 IT 总监,负责多个 IT 团队,从应用的部署到数据中心的运营都要涉及。更重要的是,Pegasystems 开发帮助销售、市场、服务以及运营团队流水化操作,以及客户联络的应用。这意味着她需要掌握使用 IT 内部资源的最佳方法,面对公司客户遇到的 IT 挑战。

|

||||

|

||||

|

||||

|

||||

**TEP(企业家项目):这些年你是如何调整培训重心的?**

|

||||

|

||||

**Hill**:在过去的几年中,我们经历了爆炸式的发展,现在我们要实现更多的全球化进程。因此,培训目标是确保每个人都在同一起跑线上。

|

||||

|

||||

我们主要的关注点在培养员工使用新产品和工具上,这些新产品和工具能够推动创新,提高工作效率。例如,我们使用了之前没有的资产管理系统。因此我们需要为全部员工做培训,而不是雇佣那些已经知道该产品的人。当我们正在发展的时候,我们也试图保持紧张的预算和稳定的职员总数。所以,我们更愿意在内部培训而不是雇佣新人。

|

||||

|

||||

**TEP:说说培训方法吧,怎样帮助你的员工发展他们的技能?**

|

||||

|

||||

**Hill**:我要求每一位员工制定一个技术性的和非技术性的训练目标。这作为他们绩效评估的一部分。他们的技术性目标需要与他们的工作职能相符,非技术岗目标则随意,比如着重发展一项软技能,或是学一些专业领域之外的东西。我每年对职员进行一次评估,看看差距和不足之处,以使团队保持全面发展。

|

||||

|

||||

**TEP:你的训练计划能够在多大程度上减轻招聘工作量, 保持职员的稳定性?**

|

||||

|

||||

**Hill**:使我们的职员保持学习新技术的兴趣,可以让他们不断提高技能。让职员知道我们重视他们并且让他们在擅长的领域成长和发展,以此激励他们。

|

||||

|

||||

**TEP:你们发现哪些培训是最有效的?**

|

||||

|

||||

**HILL**:我们使用几种不同的培训方法,认为效果很好。对新的或特殊的项目,我们会由供应商提供培训课程,作为项目的一部分。要是这个方法不能实现,我们将进行脱产培训。我们也会购买一些在线的培训课程。我也鼓励职员每年参加至少一次会议,以了解行业的动向。

|

||||

|

||||

**TEP:哪些技能需求,更适合雇佣新人而不是培训现有员工?**

|

||||

|

||||

**Hill**:这和项目有关。最近有一个项目,需要使用 OpenStack,而我们根本没有这方面的专家。所以我们与一家从事这一领域的咨询公司合作。我们利用他们的专业人员运行该项目,并现场培训我们的内部团队成员。让内部员工学习他们需要的技能,同时还要完成他们的日常工作,是一项艰巨的任务。

|

||||

|

||||

顾问帮助我们确定我们需要的员工人数。这样我们就可以对员工进行评估,看是否存在缺口。如果存在人员上的缺口,我们还需要额外的培训或是员工招聘。我们也确实雇佣了一些合同工。另一个选择是对一些全职员工进行为期六至八周的培训,但我们的项目模式不容许这么做。

|

||||

|

||||

**TEP:最近雇佣的员工,他们的那些技能特别能够吸引到你?**

|

||||

|

||||

**Hill**:在最近的招聘中,我更看重软技能。除了扎实的技术能力外,他们需要能够在团队中进行有效的沟通和工作,要有说服他人,谈判和解决冲突的能力。

|

||||

|

||||

IT 人常常独来独往,不擅社交。然而如今IT 与整个组织结合越来越紧密,为其他业务部门提供有用的更新和状态报告的能力至关重要,可展示 IT 部门存在的重要性。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: https://enterprisersproject.com/article/2016/6/training-vs-hiring-meet-it-needs-today-and-tomorrow

|

||||

|

||||

作者:[Paul Desmond][a]

|

||||

译者:[Cathon](https://github.com/Cathon)

|

||||

校对:[jasminepeng](https://github.com/jasminepeng)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]: https://enterprisersproject.com/user/paul-desmond

|

||||

[1]: https://enterprisersproject.com/user/sandy-hill

|

||||

[2]: https://www.pega.com/pega-can?&utm_source=google&utm_medium=cpc&utm_campaign=900.US.Evaluate&utm_term=pegasystems&gloc=9009726&utm_content=smAXuLA4U|pcrid|102822102849|pkw|pegasystems|pmt|e|pdv|c|

|

||||

|

||||

|

||||

|

||||

|

||||

@ -0,0 +1,143 @@

|

||||

2016 年 Linux 下五个最佳视频编辑软件

|

||||

=====================================================

|

||||

|

||||

|

||||

|

||||

概要: 在这篇文章中,Tiwo 讨论了 Linux 下最佳视频编辑器的优缺点和在基于 Ubuntu 的发行版中的安装方法。

|

||||

|

||||

在过去,我们已经在类似的文章中讨论了 [Linux 下最佳图像管理应用软件][1],[Linux 上四个最佳的现代开源代码编辑器][2]。今天,我们来看看 Linux 下的最佳视频编辑软件。

|

||||

|

||||

当谈及免费的视频编辑软件,Windows Movie Maker 和 iMovie 是大多数人经常推荐的。

|

||||

|

||||

不幸的是,它们在 GNU/Linux 下都是不可用的。但是你不必担心这个,因为我们已经为你收集了一系列最佳的视频编辑器。

|

||||

|

||||

### Linux下最佳的视频编辑应用程序

|

||||

|

||||

接下来,让我们来看看 Linux 下排名前五的最佳视频编辑软件:

|

||||

|

||||

#### 1. Kdenlive

|

||||

|

||||

|

||||

|

||||

[Kdenlive][3] 是一款来自于 KDE 的自由而开源的视频编辑软件,它提供双视频监视器、多轨时间轴、剪辑列表、可自定义的布局支持、基本效果和基本转换的功能。

|

||||

|

||||

它支持各种文件格式和各种摄像机和相机,包括低分辨率摄像机(Raw 和 AVI DV 编辑):mpeg2、mpeg4 和 h264 AVCHD(小型摄像机和摄像机);高分辨率摄像机文件,包括 HDV 和 AVCHD 摄像机;专业摄像机,包括 XDCAM-HD^TM 流、IMX^TM (D10)流、DVCAM(D10)、DVCAM、DVCPRO^TM 、DVCPRO50^TM 流和 DNxHD^TM 流等等。

|

||||

|

||||

你可以在命令行下运行下面的命令安装 :

|

||||

|

||||

```

|

||||

sudo apt-get install kdenlive

|

||||

```

|

||||

|

||||

或者,打开 Ubuntu 软件中心,然后搜索 Kdenlive。

|

||||

|

||||

#### 2. OpenShot

|

||||

|

||||

|

||||

|

||||

[OpenShot][5] 是我们这个 Linux 视频编辑软件列表中的第二选择。 OpenShot 可以帮助您创建支持过渡、效果、调整音频电平的电影,当然,它也支持大多数格式和编解码器。

|

||||

|

||||

您还可以将电影导出到 DVD,上传到 YouTube、Vimeo、Xbox 360 和许多其他常见的格式。 OpenShot 比 Kdenlive 更简单。 所以如果你需要一个简单界面的视频编辑器,OpenShot 会是一个不错的选择。

|

||||

|

||||

最新的版本是 2.0.7。您可以从终端窗口运行以下命令安装 OpenShot 视频编辑器:

|

||||

|

||||

```

|

||||

sudo apt-get install openshot

|

||||

```

|

||||

|

||||

它需要下载 25 MB,安装后需要 70 MB 硬盘空间。

|

||||

|

||||

#### 3. Flowblade Movie Editor

|

||||

|

||||

|

||||

|

||||

[Flowblade Movie Editor][6] 是一个用于 Linux 的多轨非线性视频编辑器。它是自由而开源的。 它配备了一个时尚而现代的用户界面。

|

||||

|

||||

它是用 Python 编写的,旨在提供一个快速、精确的功能。 Flowblade 致力于在 Linux 和其他自由平台上提供最好的体验。 所以现在没有 Windows 和 OS X 版本。

|

||||

|

||||

要在 Ubuntu 和其他基于 Ubuntu 的系统上安装 Flowblade,请使用以下命令:

|

||||

|

||||

```

|

||||

sudo apt-get install flowblade

|

||||

```

|

||||

|

||||

#### 4. Lightworks

|

||||

|

||||

|

||||

|

||||

如果你要寻找一个有更多功能的视频编辑软件,这会是答案。 [Lightworks][7] 是一个跨平台的专业的视频编辑器,在 Linux、Mac OS X 和 Windows 系统下都可用。

|

||||

|

||||

它是一个获奖的专业的[非线性编辑][8](NLE)软件,支持高达 4K 的分辨率以及标清和高清格式的视频。

|

||||

|

||||

该应用程序有两个版本:Lightworks 免费版和 Lightworks 专业版。不过免费版本不支持 Vimeo(H.264 / MPEG-4)和 YouTube(H.264 / MPEG-4) - 高达 2160p(4K UHD)、蓝光和 H.264 / MP4 导出选项,以及可配置的位速率设置,但是专业版本支持。

|

||||

|

||||

- Lightworks 免费版

|

||||

- Lightworks 专业版

|

||||

|

||||

专业版本有更多的功能,例如更高的分辨率支持,4K 和蓝光支持等。

|

||||

|

||||

##### 怎么安装Lightworks?

|

||||

|

||||

不同于其他的视频编辑器,安装 Lightwork 不像运行单个命令那么直接。别担心,这不会很复杂。

|

||||

|

||||

- 第1步 – 你可以从 [Lightworks 下载页面][9]下载安装包。这个安装包大约 79.5MB。*请注意:这里没有32 位 Linux 的支持。*

|

||||

- 第2步 – 一旦下载,你可以使用 [Gdebi 软件包安装器][10]来安装。Gdebi 会自动下载依赖关系 :

|

||||

|

||||

- 第3步 – 现在你可以从 Ubuntu 仪表板或您的 Linux 发行版菜单中打开它。

|

||||

- 第4步 – 当你第一次使用它时,需要一个账号。点击 “Not Registerd?” 按钮来注册。别担心,它是免费的。

|

||||

- 第5步 – 在你的账号通过验证后,就可以登录了。

|

||||

|

||||

现在,Lightworks 可以使用了。

|

||||

|

||||

需要 Lightworks 的视频教程? 在 [Lightworks 视频教程页][11]得到它们。

|

||||

|

||||

#### 5. Blender

|

||||

|

||||

|

||||

|

||||

|

||||

Blender 是一个专业的,工业级的开源、跨平台的视频编辑器。在 3D 作品的制作中,是非常受欢迎的。 Blender 已被用于几部好莱坞电影的制作,包括蜘蛛侠系列。

|

||||

|

||||

虽然最初是设计用于制作 3D 模型,但它也可以用于各种格式的视频编辑和输入能力。 该视频编辑器包括:

|

||||

|

||||

- 实时预览、亮度波形、色度矢量示波器和直方图显示

|

||||

- 音频混合、同步、擦除和波形可视化

|

||||

- 多达 32 个插槽用于添加视频、图像、音频、场景、面具和效果

|

||||

- 速度控制、调整图层、过渡、关键帧、过滤器等

|

||||

|

||||

最新的版本可以从 [Blender 下载页][12]下载.

|

||||

|

||||

### 哪一个是最好的视频编辑软件?

|

||||

|

||||

如果你需要一个简单的视频编辑器,OpenShot、Kdenlive 和 Flowblade 是一个不错的选择。这些软件是适合初学者的,并且带有标准规范的系统。

|

||||

|

||||

如果你有一个高性能的计算机,并且需要高级功能,你可以使用 Lightworks。如果你正在寻找更高级的功能, Blender 可以帮助你。

|

||||

|

||||

这就是我写的 5 个最佳的视频编辑软件,它们可以在 Ubuntu、Linux Mint、Elementary 和其他 Linux 发行版下使用。 请与我们分享您最喜欢的视频编辑器。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: https://itsfoss.com/best-video-editing-software-linux/

|

||||

|

||||

作者:[Tiwo Satriatama][a]

|

||||

译者:[DockerChen](https://github.com/DockerChen)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]: https://itsfoss.com/author/tiwo/

|

||||

[1]: https://linux.cn/article-7462-1.html

|

||||

[2]: https://linux.cn/article-7468-1.html

|

||||

[3]: https://kdenlive.org/

|

||||

[4]: https://itsfoss.com/tag/open-source/

|

||||

[5]: http://www.openshot.org/

|

||||

[6]: http://jliljebl.github.io/flowblade/

|

||||

[7]: https://www.lwks.com/

|

||||

[8]: https://en.wikipedia.org/wiki/Non-linear_editing_system

|

||||

[9]: https://www.lwks.com/index.php?option=com_lwks&view=download&Itemid=206

|

||||

[10]: https://itsfoss.com/gdebi-default-ubuntu-software-center/

|

||||

[11]: https://www.lwks.com/videotutorials

|

||||

[12]: https://www.blender.org/download/

|

||||

|

||||

|

||||

|

||||

@ -0,0 +1,188 @@

|

||||

写一个 JavaScript 框架:比 setTimeout 更棒的定时执行

|

||||

===================

|

||||

|

||||

这是 [JavaScript 框架系列][2]的第二章。在这一章里,我打算讲一下在浏览器里的异步代码不同执行方式。你将了解定时器和事件循环之间的不同差异,比如 setTimeout 和 Promises。

|

||||

|

||||

这个系列是关于一个开源的客户端框架,叫做 NX。在这个系列里,我主要解释一下写该框架不得不克服的主要困难。如果你对 NX 感兴趣可以参观我们的 [主页][1]。

|

||||

|

||||

这个系列包含以下几个章节:

|

||||

|

||||

1. [项目结构][2]

|

||||

2. 定时执行 (当前章节)

|

||||

3. [沙箱代码评估][3]

|

||||

4. [数据绑定介绍](https://blog.risingstack.com/writing-a-javascript-framework-data-binding-dirty-checking/)

|

||||

5. [数据绑定与 ES6 代理](https://blog.risingstack.com/writing-a-javascript-framework-data-binding-es6-proxy/)

|

||||

6. 自定义元素

|

||||

7. 客户端路由

|

||||

|

||||

### 异步代码执行

|

||||

|

||||

你可能比较熟悉 `Promise`、`process.nextTick()`、`setTimeout()`,或许还有 `requestAnimationFrame()` 这些异步执行代码的方式。它们内部都使用了事件循环,但是它们在精确计时方面有一些不同。

|

||||

|

||||

在这一章里,我将解释它们之间的不同,然后给大家演示怎样在一个类似 NX 这样的先进框架里面实现一个定时系统。不用我们重新做一个,我们将使用原生的事件循环来达到我们的目的。

|

||||

|

||||

### 事件循环

|

||||

|

||||

事件循环甚至没有在 [ES6 规范](http://www.ecma-international.org/ecma-262/6.0/)里提到。JavaScript 自身只有任务(Job)和任务队列(job queue)。更加复杂的事件循环是在 NodeJS 和 HTML5 规范里分别定义的,因为这篇是针对前端的,我会在详细说明后者。

|

||||

|

||||

事件循环可以被看做某个条件的循环。它不停的寻找新的任务来运行。这个循环中的一次迭代叫做一个滴答(tick)。在一次滴答期间执行的代码称为一次任务(task)。

|

||||

|

||||

```

|

||||

while (eventLoop.waitForTask()) {

|

||||

eventLoop.processNextTask()

|

||||

}

|

||||

```

|

||||

任务是同步代码,它可以在循环中调度其它任务。一个简单的调用新任务的方式是 `setTimeout(taskFn)`。不管怎样, 任务可能有很多来源,比如用户事件、网络或者 DOM 操作。

|

||||

|

||||

|

||||

|

||||

### 任务队列

|

||||

|

||||

更复杂一些的是,事件循环可以有多个任务队列。这里有两个约束条件,相同任务源的事件必须在相同的队列,以及任务必须按插入的顺序进行处理。除此之外,浏览器可以做任何它想做的事情。例如,它可以决定接下来处理哪个任务队列。

|

||||

|

||||

```

|

||||

while (eventLoop.waitForTask()) {

|

||||

const taskQueue = eventLoop.selectTaskQueue()

|

||||

if (taskQueue.hasNextTask()) {

|

||||

taskQueue.processNextTask()

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

用这个模型,我们不能精确的控制定时。如果用 `setTimeout()`浏览器可能决定先运行完其它几个队列才运行我们的队列。

|

||||

|

||||

|

||||

|

||||

### 微任务队列

|

||||

|

||||

幸运的是,事件循环还提供了一个叫做微任务(microtask)队列的单一队列。当前任务结束的时候,微任务队列会清空每个滴答里的任务。

|

||||

|

||||

```

|

||||

while (eventLoop.waitForTask()) {

|

||||

const taskQueue = eventLoop.selectTaskQueue()

|

||||

if (taskQueue.hasNextTask()) {

|

||||

taskQueue.processNextTask()

|

||||

}

|

||||

|

||||

const microtaskQueue = eventLoop.microTaskQueue

|

||||

while (microtaskQueue.hasNextMicrotask()) {

|

||||

microtaskQueue.processNextMicrotask()

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

最简单的调用微任务的方法是 `Promise.resolve().then(microtaskFn)`。微任务按照插入顺序进行处理,并且由于仅存在一个微任务队列,浏览器不会把时间弄乱了。

|

||||

|

||||

此外,微任务可以调度新的微任务,它将插入到同一个队列,并在同一个滴答内处理。

|

||||

|

||||

|

||||

|

||||

### 绘制(Rendering)

|

||||

|

||||

最后是绘制(Rendering)调度,不同于事件处理和分解,绘制并不是在单独的后台任务完成的。它是一个可以运行在每个循环滴答结束时的算法。

|

||||

|

||||

在这里浏览器又有了许多自由:它可能在每个任务以后绘制,但是它也可能在好几百个任务都执行了以后也不绘制。

|

||||

|

||||

幸运的是,我们有 `requestAnimationFrame()`,它在下一个绘制之前执行传递的函数。我们最终的事件模型像这样:

|

||||

|

||||

```

|

||||

while (eventLoop.waitForTask()) {

|

||||

const taskQueue = eventLoop.selectTaskQueue()

|

||||

if (taskQueue.hasNextTask()) {

|

||||

taskQueue.processNextTask()

|

||||

}

|

||||

|

||||

const microtaskQueue = eventLoop.microTaskQueue

|

||||

while (microtaskQueue.hasNextMicrotask()) {

|

||||

microtaskQueue.processNextMicrotask()

|

||||

}

|

||||

|

||||

if (shouldRender()) {

|

||||

applyScrollResizeAndCSS()

|

||||

runAnimationFrames()

|

||||

render()

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

现在用我们所知道知识来创建定时系统!

|

||||

|

||||

### 利用事件循环

|

||||

|

||||

和大多数现代框架一样,[NX][1] 也是基于 DOM 操作和数据绑定的。批量操作和异步执行以取得更好的性能表现。基于以上理由我们用 `Promises`、 `MutationObservers` 和 `requestAnimationFrame()`。

|

||||

|

||||

我们所期望的定时器是这样的:

|

||||

|

||||

1. 代码来自于开发者

|

||||

2. 数据绑定和 DOM 操作由 NX 来执行

|

||||

3. 开发者定义事件钩子

|

||||

4. 浏览器进行绘制

|

||||

|

||||

#### 步骤 1

|

||||

|

||||

NX 寄存器对象基于 [ES6 代理](https://ponyfoo.com/articles/es6-proxies-in-depth) 以及 DOM 变动基于[MutationObserver](https://davidwalsh.name/mutationobserver-api) (变动观测器)同步运行(下一节详细介绍)。 它作为一个微任务延迟直到步骤 2 执行以后才做出反应。这个延迟已经在 `Promise.resolve().then(reaction)` 进行了对象转换,并且它将通过变动观测器自动运行。

|

||||

|

||||

#### 步骤 2

|

||||

|

||||

来自开发者的代码(任务)运行完成。微任务由 NX 开始执行所注册。 因为它们是微任务,所以按序执行。注意,我们仍然在同一个滴答循环中。

|

||||

|

||||

#### 步骤 3

|

||||

|

||||

开发者通过 `requestAnimationFrame(hook)` 通知 NX 运行钩子。这可能在滴答循环后发生。重要的是,钩子运行在下一次绘制之前和所有数据操作之后,并且 DOM 和 CSS 改变都已经完成。

|

||||

|

||||

#### 步骤 4

|

||||

|

||||

浏览器绘制下一个视图。这也有可能发生在滴答循环之后,但是绝对不会发生在一个滴答的步骤 3 之前。

|

||||

|

||||

### 牢记在心里的事情

|

||||

|

||||

我们在原生的事件循环之上实现了一个简单而有效的定时系统。理论上讲它运行的很好,但是还是很脆弱,一个轻微的错误可能会导致很严重的 BUG。

|

||||

|

||||

在一个复杂的系统当中,最重要的就是建立一定的规则并在以后保持它们。在 NX 中有以下规则:

|

||||

|

||||

1. 永远不用 `setTimeout(fn, 0)` 来进行内部操作

|

||||

2. 用相同的方法来注册微任务

|

||||

3. 微任务仅供内部操作

|

||||

4. 不要干预开发者钩子运行时间

|

||||

|

||||

#### 规则 1 和 2

|

||||

|

||||

数据反射和 DOM 操作将按照操作顺序执行。这样只要不混合就可以很好的延迟它们的执行。混合执行会出现莫名其妙的问题。

|

||||

|

||||

`setTimeout(fn, 0)` 的行为完全不可预测。使用不同的方法注册微任务也会发生混乱。例如,下面的例子中 microtask2 不会正确地在 microtask1 之前运行。

|

||||

|

||||

```

|

||||

Promise.resolve().then().then(microtask1)

|

||||

Promise.resolve().then(microtask2)

|

||||

```

|

||||

|

||||

|

||||

|

||||

#### 规则 3 和 4

|

||||

|

||||

分离开发者的代码执行和内部操作的时间窗口是非常重要的。混合这两种行为会导致不可预测的事情发生,并且它会需要开发者了解框架内部。我想很多前台开发者已经有过类似经历。

|

||||

|

||||

### 结论

|

||||

|

||||

如果你对 NX 框架感兴趣,可以参观我们的[主页][1]。还可以在 GIT 上找到我们的[源代码][5]。

|

||||

|

||||

在下一节我们再见,我们将讨论 [沙盒化代码执行][4]!

|

||||

|

||||

你也可以给我们留言。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: https://blog.risingstack.com/writing-a-javascript-framework-execution-timing-beyond-settimeout/

|

||||

|

||||

作者:[Bertalan Miklos][a]

|

||||

译者:[kokialoves](https://github.com/kokialoves)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]: https://blog.risingstack.com/author/bertalan/

|

||||

[1]: http://nx-framework.com/

|

||||

[2]: https://blog.risingstack.com/writing-a-javascript-framework-project-structuring/

|

||||

[3]: https://blog.risingstack.com/writing-a-javascript-framework-sandboxed-code-evaluation/

|

||||

[4]: https://blog.risingstack.com/writing-a-javascript-framework-sandboxed-code-evaluation/

|

||||

[5]: https://github.com/RisingStack/nx-framework

|

||||

@ -1,9 +1,11 @@

|

||||

小模块的开销

|

||||

JavaScript 小模块的开销

|

||||

====

|

||||

|

||||

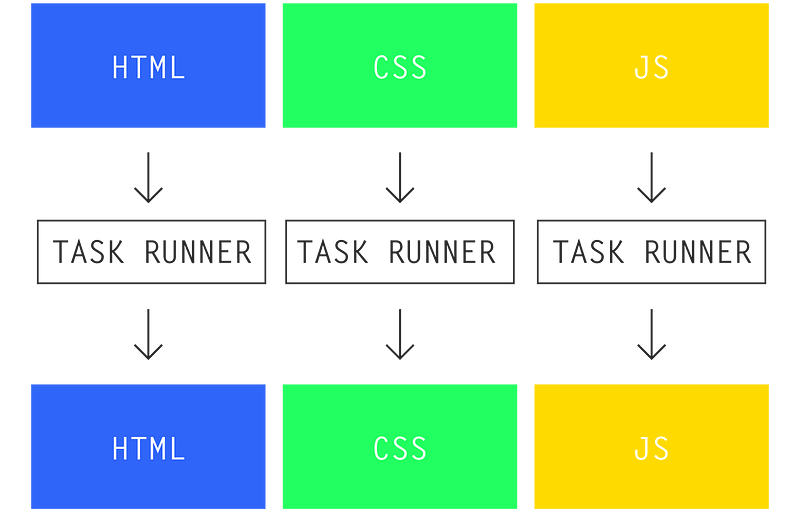

大约一年之前,我在将一个大型 JavaScript 代码库重构为小模块时发现了 Browserify 和 Webpack 中一个令人沮丧的事实:

|

||||

更新(2016/10/30):我写完这篇文章之后,我在[这个基准测试中发了一个错误](https://github.com/nolanlawson/cost-of-small-modules/pull/8),会导致 Rollup 比它预期的看起来要好一些。不过,整体结果并没有明显的不同(Rollup 仍然击败了 Browserify 和 Webpack,虽然它并没有像 Closure 十分好),所以我只是更新了图表。该基准测试包括了 [RequireJS 和 RequireJS Almond 打包器](https://github.com/nolanlawson/cost-of-small-modules/pull/5),所以文章中现在也包括了它们。要看原始帖子,可以查看[历史版本](https://web.archive.org/web/20160822181421/https://nolanlawson.com/2016/08/15/the-cost-of-small-modules/)。

|

||||

|

||||

> “代码越模块化,代码体积就越大。”- Nolan Lawson

|

||||

大约一年之前,我在将一个大型 JavaScript 代码库重构为更小的模块时发现了 Browserify 和 Webpack 中一个令人沮丧的事实:

|

||||

|

||||

> “代码越模块化,代码体积就越大。:< ”- Nolan Lawson

|

||||

|

||||

过了一段时间,Sam Saccone 发布了一些关于 [Tumblr][1] 和 [Imgur][2] 页面加载性能的出色的研究。其中指出:

|

||||

|

||||

@ -15,9 +17,9 @@

|

||||

|

||||

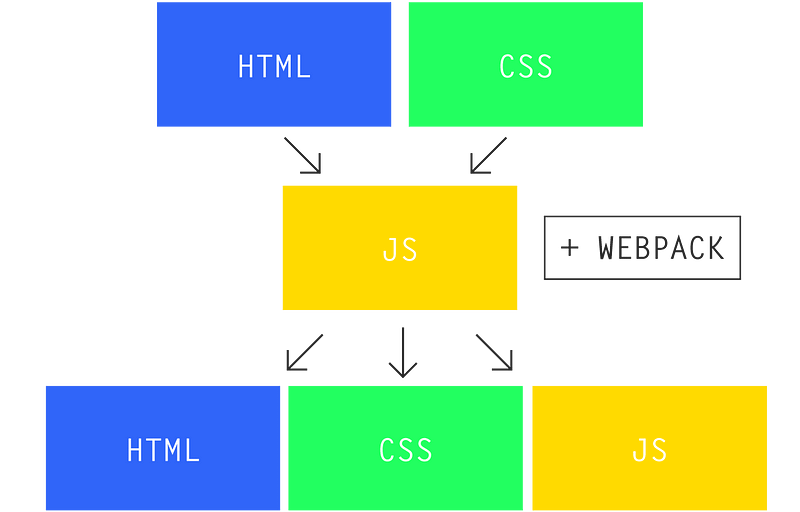

一个页面中包含的 JavaScript 脚本越多,页面加载也将越慢。庞大的 JavaScript 包会导致浏览器花费更多的时间去下载、解析和执行,这些都将加长载入时间。

|

||||

|

||||

即使当你使用如 Webpack [code splitting][3]、Browserify [factor bundles][4] 等工具将代码分解为多个包,时间的花费也仅仅是被延迟到页面生命周期的晚些时候。JavaScript 迟早都将有一笔开销。

|

||||

即使当你使用如 Webpack [code splitting][3]、Browserify [factor bundles][4] 等工具将代码分解为多个包,该开销也仅仅是被延迟到页面生命周期的晚些时候。JavaScript 迟早都将有一笔开销。

|

||||

|

||||

此外,由于 JavaScript 是一门动态语言,同时流行的 [CommonJS][5] 模块也是动态的,所以这就使得在最终分发给用户的代码中剔除无用的代码变得异常困难。譬如你可能只使用到 jQuery 中的 $.ajax,但是通过载入 jQuery 包,你将以整个包为代价。

|

||||

此外,由于 JavaScript 是一门动态语言,同时流行的 [CommonJS][5] 模块也是动态的,所以这就使得在最终分发给用户的代码中剔除无用的代码变得异常困难。譬如你可能只使用到 jQuery 中的 $.ajax,但是通过载入 jQuery 包,你将付出整个包的代价。

|

||||

|

||||

JavaScript 社区对这个问题提出的解决办法是提倡 [小模块][6] 的使用。小模块不仅有许多 [美好且实用的好处][7] 如易于维护,易于理解,易于集成等,而且还可以通过鼓励包含小巧的功能而不是庞大的库来解决之前提到的 jQuery 的问题。

|

||||

|

||||

@ -66,7 +68,7 @@ $ browserify node_modules/qs | browserify-count-modules

|

||||

|

||||

顺带一提,我写过的最大的开源站点 [Pokedex.org][21] 包含了 4 个包,共 311 个模块。

|

||||

|

||||

让我们先暂时忽略这些 JavaScript 包的实际大小,我认为去探索一下一定数量的模块本身开销会事一件有意思的事。虽然 Sam Saccone 的文章 [“2016 年 ES2015 转译的开销”][22] 已经广为流传,但是我认为他的结论还未到达足够深度,所以让我们挖掘的稍微再深一点吧。

|

||||

让我们先暂时忽略这些 JavaScript 包的实际大小,我认为去探索一下一定数量的模块本身开销会是一件有意思的事。虽然 Sam Saccone 的文章 [“2016 年 ES2015 转译的开销”][22] 已经广为流传,但是我认为他的结论还未到达足够深度,所以让我们挖掘的稍微再深一点吧。

|

||||

|

||||

### 测试环节!

|

||||

|

||||

@ -86,13 +88,13 @@ console.log(total)

|

||||

module.exports = 1

|

||||

```

|

||||

|

||||

我测试了五种打包方法:Browserify, 带 [bundle-collapser][24] 插件的 Browserify, Webpack, Rollup 和 Closure Compiler。对于 Rollup 和 Closure Compiler 我使用了 ES6 模块,而对于 Browserify 和 Webpack 则用的 CommonJS,目的是为了不涉及其各自缺点而导致测试的不公平(由于它们可能需要做一些转译工作,如 Babel 一样,而这些工作将会增加其自身的运行时间)。

|

||||

我测试了五种打包方法:Browserify、带 [bundle-collapser][24] 插件的 Browserify、Webpack、Rollup 和 Closure Compiler。对于 Rollup 和 Closure Compiler 我使用了 ES6 模块,而对于 Browserify 和 Webpack 则用的是 CommonJS,目的是为了不涉及其各自缺点而导致测试的不公平(由于它们可能需要做一些转译工作,如 Babel 一样,而这些工作将会增加其自身的运行时间)。

|

||||

|

||||

为了更好地模拟一个生产环境,我将带 -mangle 和 -compress 参数的 Uglify 用于所有的包,并且使用 gzip 压缩后通过 GitHub Pages 用 HTTPS 协议进行传输。对于每个包,我一共下载并执行 15 次,然后取其平均值,并使用 performance.now() 函数来记录载入时间(未使用缓存)与执行时间。

|

||||

为了更好地模拟一个生产环境,我对所有的包采用带 `-mangle` 和 `-compress` 参数的 `Uglify` ,并且使用 gzip 压缩后通过 GitHub Pages 用 HTTPS 协议进行传输。对于每个包,我一共下载并执行 15 次,然后取其平均值,并使用 `performance.now()` 函数来记录载入时间(未使用缓存)与执行时间。

|

||||

|

||||

### 包大小

|

||||

|

||||

在我们查看测试结果之前,我们有必要先来看一眼我们要测试的包文件。一下是每个包最小处理后但并未使用 gzip 压缩时的体积大小(单位:Byte):

|

||||

在我们查看测试结果之前,我们有必要先来看一眼我们要测试的包文件。以下是每个包最小处理后但并未使用 gzip 压缩时的体积大小(单位:Byte):

|

||||

|

||||

| | 100 个模块 | 1000 个模块 | 5000 个模块 |

|

||||

| --- | --- | --- | --- |

|

||||

@ -110,7 +112,7 @@ module.exports = 1

|

||||

| rollup | 300 | 2145 | 11510 |

|

||||

| closure | 302 | 2140 | 11789 |

|

||||

|

||||

Browserify 和 Webpack 的工作方式是隔离各个模块到各自的函数空间,然后声明一个全局载入器,并在每次 require() 函数调用时定位到正确的模块处。下面是我们的 Browserify 包的样子:

|

||||

Browserify 和 Webpack 的工作方式是隔离各个模块到各自的函数空间,然后声明一个全局载入器,并在每次 `require()` 函数调用时定位到正确的模块处。下面是我们的 Browserify 包的样子:

|

||||

|

||||

```

|

||||

(function e(t,n,r){function s(o,u){if(!n[o]){if(!t[o]){var a=typeof require=="function"&&require;if(!u&&a)return a(o,!0);if(i)return i(o,!0);var f=new Error("Cannot find module '"+o+"'");throw f.code="MODULE_NOT_FOUND",f}var l=n[o]={exports:{}};t[o][0].call(l.exports,function(e){var n=t[o][1][e];return s(n?n:e)},l,l.exports,e,t,n,r)}return n[o].exports}var i=typeof require=="function"&&require;for(var o=0;o

|

||||

@ -144,7 +146,7 @@ Browserify 和 Webpack 的工作方式是隔离各个模块到各自的函数空

|

||||

|

||||

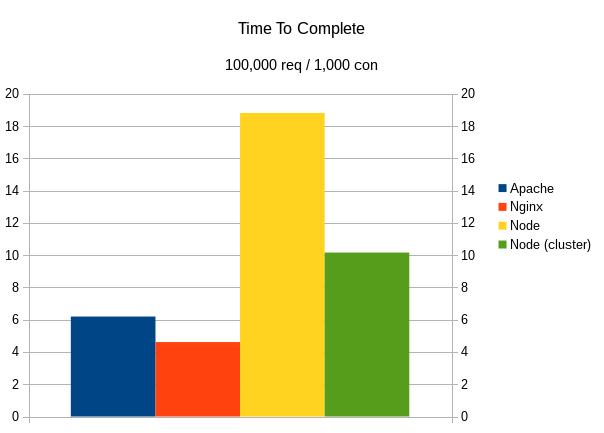

在 100 个模块时,各包的差异是微不足道的,但是一旦模块数量达到 1000 个甚至 5000 个时,差异将会变得非常巨大。iPod Touch 在不同包上的差异并不明显,而对于具有一定年代的 Nexus 5 来说,Browserify 和 Webpack 明显耗时更多。

|

||||

|

||||

与此同时,我发现有意思的是 Rollup 和 Closure 的运行开销对于 iPod 而言几乎可以忽略,并且与模块的数量关系也不大。而对于 Nexus 5 来说,运行的开销并非完全可以忽略,但它们仍比 Browserify 或 Webpack 低很多。后者若未在几百毫秒内完成加载则将会占用主线程的好几帧的时间,这就意味着用户界面将冻结并且等待直到模块载入完成。

|

||||

与此同时,我发现有意思的是 Rollup 和 Closure 的运行开销对于 iPod 而言几乎可以忽略,并且与模块的数量关系也不大。而对于 Nexus 5 来说,运行的开销并非完全可以忽略,但 Rollup/Closure 仍比 Browserify/Webpack 低很多。后者若未在几百毫秒内完成加载则将会占用主线程的好几帧的时间,这就意味着用户界面将冻结并且等待直到模块载入完成。

|

||||

|

||||

值得注意的是前面这些测试都是在千兆网速下进行的,所以在网络情况来看,这只是一个最理想的状况。借助 Chrome 开发者工具,我们可以认为地将 Nexus 5 的网速限制到 3G 水平,然后来看一眼这对测试产生的影响([查看表格][30]):

|

||||

|

||||

@ -152,13 +154,13 @@ Browserify 和 Webpack 的工作方式是隔离各个模块到各自的函数空

|

||||

|

||||

一旦我们将网速考虑进来,Browserify/Webpack 和 Rollup/Closure 的差异将变得更为显著。在 1000 个模块规模(接近于 Reddit 1050 个模块的规模)时,Browserify 花费的时间比 Rollup 长大约 400 毫秒。然而 400 毫秒已经不是一个小数目了,正如 Google 和 Bing 指出的,亚秒级的延迟都会 [对用户的参与产生明显的影响][32] 。

|

||||

|

||||

还有一件事需要指出,那就是这个测试并非测量 100 个、1000 个或者 5000 个模块的每个模块的精确运行时间。因为这还与你对 require() 函数的使用有关。在这些包中,我采用的是对每个模块调用一次 require() 函数。但如果你每个模块调用了多次 require() 函数(这在代码库中非常常见)或者你多次动态调用 require() 函数(例如在子函数中调用 require() 函数),那么你将发现明显的性能退化。

|

||||

还有一件事需要指出,那就是这个测试并非测量 100 个、1000 个或者 5000 个模块的每个模块的精确运行时间。因为这还与你对 `require()` 函数的使用有关。在这些包中,我采用的是对每个模块调用一次 `require()` 函数。但如果你每个模块调用了多次 `require()` 函数(这在代码库中非常常见)或者你多次动态调用 `require()` 函数(例如在子函数中调用 `require()` 函数),那么你将发现明显的性能退化。

|

||||

|

||||

Reddit 的移动站点就是一个很好的例子。虽然该站点有 1050 个模块,但是我测量了它们使用 Browserify 的实际执行时间后发现比“1000 个模块”的测试结果差好多。当使用那台运行 Chrome 的 Nexus 5 时,我测出 Reddit 的 Browserify require() 函数耗时 2.14 秒。而那个“1000 个模块”脚本中的等效函数只需要 197 毫秒(在搭载 i7 处理器的 Surface Book 上的桌面版 Chrome,我测出的结果分别为 559 毫秒与 37 毫秒,虽然给出桌面平台的结果有些令人惊讶)。

|

||||

|

||||

这结果提示我们有必要对每个模块使用多个 require() 函数的情况再进行一次测试。不过,我并不认为这对 Browserify 和 Webpack 会是一个公平的测试,因为 Rollup 和 Closure 都会将重复的 ES6 库导入处理为一个的顶级变量声明,同时也阻止了顶层空间以外的其他区域的导入。所以根本上来说,Rollup 和 Closure 中一个导入和多个导入的开销是相同的,而对于 Browserify 和 Webpack,运行开销随 require() 函数的数量线性增长。

|

||||

这结果提示我们有必要对每个模块使用多个 `require()` 函数的情况再进行一次测试。不过,我并不认为这对 Browserify 和 Webpack 会是一个公平的测试,因为 Rollup 和 Closure 都会将重复的 ES6 库导入处理为一个的顶级变量声明,同时也阻止了顶层空间以外的其他区域的导入。所以根本上来说,Rollup 和 Closure 中一个导入和多个导入的开销是相同的,而对于 Browserify 和 Webpack,运行开销随 `require()` 函数的数量线性增长。

|

||||

|

||||

为了我们这个分析的目的,我认为最好假设模块的数量是性能的短板。而事实上,“5000 个模块”也是一个比“5000 个 require() 函数调用”更好的度量标准。

|

||||

为了我们这个分析的目的,我认为最好假设模块的数量是性能的短板。而事实上,“5000 个模块”也是一个比“5000 个 `require()` 函数调用”更好的度量标准。

|

||||

|

||||

### 结论

|

||||

|

||||

@ -168,11 +170,11 @@ Reddit 的移动站点就是一个很好的例子。虽然该站点有 1050 个

|

||||

|

||||

给出这些结果之后,我对 Closure Compiler 和 Rollup 在 JavaScript 社区并没有得到过多关注而感到惊讶。我猜测或许是因为(前者)需要依赖 Java,而(后者)仍然相当不成熟并且未能做到开箱即用(详见 [Calvin’s Metcalf 的评论][37] 中作的不错的总结)。

|

||||

|

||||

即使没有足够数量的 JavaScript 开发者加入到 Rollup 或 Closure 的队伍中,我认为 npm 包作者们也已准备好了去帮助解决这些问题。如果你使用 npm 安装 lodash,你将会发其现主要的导入是一个巨大的 JavaScript 模块,而不是你期望的 Lodash 的超模块(hyper-modular)特性(require('lodash/uniq'),require('lodash.uniq') 等等)。对于 PouchDB,我们做了一个类似的声明以 [使用 Rollup 作为预发布步骤][38],这将产生对于用户而言尽可能小的包。

|

||||

即使没有足够数量的 JavaScript 开发者加入到 Rollup 或 Closure 的队伍中,我认为 npm 包作者们也已准备好了去帮助解决这些问题。如果你使用 npm 安装 lodash,你将会发其现主要的导入是一个巨大的 JavaScript 模块,而不是你期望的 Lodash 的超模块(hyper-modular)特性(`require('lodash/uniq')`,`require('lodash.uniq')` 等等)。对于 PouchDB,我们做了一个类似的声明以 [使用 Rollup 作为预发布步骤][38],这将产生对于用户而言尽可能小的包。

|

||||

|

||||

同时,我创建了 [rollupify][39] 来尝试将这过程变得更为简单一些,只需拖动到已存在的 Browserify 工程中即可。其基本思想是在你自己的项目中使用导入(import)和导出(export)(可以使用 [cjs-to-es6][40] 来帮助迁移),然后使用 require() 函数来载入第三方包。这样一来,你依旧可以在你自己的代码库中享受所有模块化的优点,同时能导出一个适当大小的大模块来发布给你的用户。不幸的是,你依旧得为第三方库付出一些代价,但是我发现这是对于当前 npm 生态系统的一个很好的折中方案。

|

||||

|

||||

所以结论如下:一个大的 JavaScript 包比一百个小 JavaScript 模块要快。尽管这是事实,我依旧希望我们社区能最终发现我们所处的困境————提倡小模块的原则对开发者有利,但是对用户不利。同时希望能优化我们的工具,使得我们可以对两方面都有利。

|

||||

所以结论如下:**一个大的 JavaScript 包比一百个小 JavaScript 模块要快**。尽管这是事实,我依旧希望我们社区能最终发现我们所处的困境————提倡小模块的原则对开发者有利,但是对用户不利。同时希望能优化我们的工具,使得我们可以对两方面都有利。

|

||||

|

||||

### 福利时间!三款桌面浏览器

|

||||

|

||||

@ -205,15 +207,15 @@ Firefox 48 ([查看表格][45])

|

||||

|

||||

[![Nexus 5 (3G) RequireJS 结果][53]](https://nolanwlawson.files.wordpress.com/2016/08/2016-08-20-14_45_29-small_modules3-xlsx-excel.png)

|

||||

|

||||

|

||||

更新 3: 我写了一个 [optimize-js](http://github.com/nolanlawson/optimize-js) ,它会减少一些函数内的函数的解析成本。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: https://nolanlawson.com/2016/08/15/the-cost-of-small-modules/?utm_source=javascriptweekly&utm_medium=email

|

||||

via: https://nolanlawson.com/2016/08/15/the-cost-of-small-modules/

|

||||

|

||||

作者:[Nolan][a]

|

||||

译者:[Yinr](https://github.com/Yinr)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

@ -0,0 +1,57 @@

|

||||

宽松开源许可证的崛起意味着什么

|

||||

====

|

||||

|

||||

为什么像 GNU GPL 这样的限制性许可证越来越不受青睐。

|

||||

|

||||

“如果你用了任何开源软件, 那么你软件的其他部分也必须开源。” 这是微软前 CEO 巴尔默 2001 年说的, 尽管他说的不对, 还是引发了人们对自由软件的 FUD (恐惧, 不确定和怀疑(fear, uncertainty and doubt))。 大概这才是他的意图。

|

||||

|

||||

对开源软件的这些 FUD 主要与开源许可有关。 现在有许多不同的许可证, 当中有些限制比其他的更严格(也有人称“更具保护性”)。 诸如 GNU 通用公共许可证 (GPL) 这样的限制性许可证使用了 copyleft 的概念。 copyleft 赋予人们自由发布软件副本和修改版的权力, 只要衍生工作保留同样的权力。 bash 和 GIMP 等开源项目就是使用了 GPL(v3)。 还有一个 AGPL( Affero GPL) 许可证, 它为网络上的软件(如 web service)提供了 copyleft 许可。

|

||||

|

||||

这意味着, 如果你使用了这种许可的代码, 然后加入了你自己的专有代码, 那么在一些情况下, 整个代码, 包括你的代码也就遵从这种限制性开源许可证。 Ballmer 说的大概就是这类的许可证。

|

||||

|

||||

但宽松许可证不同。 比如, 只要保留版权声明和许可声明且不要求开发者承担责任, MIT 许可证允许任何人任意使用开源代码, 包括修改和出售。 另一个比较流行的宽松开源许可证是 Apache 许可证 2.0,它还包含了贡献者向用户提供专利授权相关的条款。 使用 MIT 许可证的有 JQuery、.NET Core 和 Rails , 使用 Apache 许可证 2.0 的软件包括安卓, Apache 和 Swift。

|

||||

|

||||

两种许可证类型最终都是为了让软件更有用。 限制性许可证促进了参与和分享的开源理念, 使每一个人都能从软件中得到最大化的利益。 而宽松许可证通过允许人们任意使用软件来确保人们能从软件中得到最多的利益, 即使这意味着他们可以使用代码, 修改它, 据为己有,甚至以专有软件出售,而不做任何回报。

|

||||

|

||||

开源许可证管理公司黑鸭子软件的数据显示, 去年使用最多的开源许可证是限制性许可证 GPL 2.0,份额大约 25%。 宽松许可证 MIT 和 Apache 2.0 次之, 份额分别为 18% 和 16%, 再后面是 GPL 3.0, 份额大约 10%。 这样来看, 限制性许可证占 35%, 宽松许可证占 34%, 几乎是平手。

|

||||

|

||||

但这份当下的数据没有揭示发展趋势。黑鸭子软件的数据显示, 从 2009 年到 2015 年的六年间, MIT 许可证的份额上升了 15.7%, Apache 的份额上升了 12.4%。 在这段时期, GPL v2 和 v3 的份额惊人地下降了 21.4%。 换言之, 在这段时期里, 大量软件从限制性许可证转到宽松许可证。

|

||||

|

||||

这个趋势还在继续。 黑鸭子软件的[最新数据][1]显示, MIT 现在的份额为 26%, GPL v2 为 21%, Apache 2 为 16%, GPL v3 为 9%。 即 30% 的限制性许可证和 42% 的宽松许可证--与前一年的 35% 的限制许可证和 34% 的宽松许可证相比, 发生了重大的转变。 对 GitHub 上使用许可证的[调查研究][2]证实了这种转变。 它显示 MIT 以压倒性的 45% 占有率成为最流行的许可证, 与之相比, GPL v2 只有 13%, Apache 11%。

|

||||

|

||||

|

||||

|

||||

### 引领趋势

|

||||

|

||||

从限制性许可证到宽松许可证,这么大的转变背后是什么呢? 是公司害怕如果使用了限制性许可证的软件,他们就会像巴尔默说的那样,失去自己私有软件的控制权了吗? 事实上, 可能就是如此。 比如, Google 就[禁用了 Affero GPL 软件][3]。

|

||||

|

||||

[Instructional Media + Magic][4] 的主席 Jim Farmer, 是一个教育开源技术的开发者。 他认为很多公司为避免法律问题而不使用限制性许可证。 “问题就在于复杂性。 许可证的复杂性越高, 被他人因为某行为而告上法庭的可能性越高。 高复杂性更可能带来诉讼”, 他说。

|

||||

|

||||

他补充说, 这种对限制性许可证的恐惧正被律师们驱动着, 许多律师建议自己的客户使用 MIT 或 Apache 2.0 许可证的软件, 并明确反对使用 Affero 许可证的软件。

|

||||

|

||||

他说, 这会对软件开发者产生影响, 因为如果公司都避开限制性许可证软件的使用,开发者想要自己的软件被使用, 就更会把新的软件使用宽松许可证。

|

||||

|

||||

但 SalesAgility(开源 SuiteCRM 背后的公司)的 CEO Greg Soper 认为这种到宽松许可证的转变也是由一些开发者驱动的。 “看看像 Rocket.Chat 这样的应用。 开发者本可以选择 GPL 2.0 或 Affero 许可证, 但他们选择了宽松许可证,” 他说。 “这样可以给这个应用最大的机会, 因为专有软件厂商可以使用它, 不会伤害到他们的产品, 且不需要把他们的产品也使用开源许可证。 这样如果开发者想要让第三方应用使用他的应用的话, 他有理由选择宽松许可证。”

|

||||

|

||||

Soper 指出, 限制性许可证致力于帮助开源项目获得成功,方式是阻止开发者拿了别人的代码、做了修改,但不把结果回报给社区。 “Affero 许可证对我们的产品健康发展很重要, 因为如果有人利用了我们的代码开发,做得比我们好, 却又不把代码回报回来, 就会扼杀掉我们的产品,” 他说。 “ 对 Rocket.Chat 则不同, 因为如果它使用 Affero, 那么它会污染公司的知识产权, 所以公司不会使用它。 不同的许可证有不同的使用案例。”

|

||||

|

||||

曾在 Gnome、OpenOffice 工作过,现在是 LibreOffice 的开源开发者的 Michael Meeks 同意 Jim Farmer 的观点,认为许多公司确实出于对法律的担心,而选择使用宽松许可证的软件。 “copyleft 许可证有风险, 但同样也有巨大的益处。 遗憾的是人们都听从律师的, 而律师只是讲风险, 却从不告诉你有些事是安全的。”

|

||||

|

||||

巴尔默发表他的错误言论已经过去 15 年了, 但它产生的 FUD 还是有影响--即使从限制性许可证到宽松许可证的转变并不是他的目的。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.cio.com/article/3120235/open-source-tools/what-the-rise-of-permissive-open-source-licenses-means.html

|

||||

|

||||

作者:[Paul Rubens][a]

|

||||

译者:[willcoderwang](https://github.com/willcoderwang)

|

||||

校对:[jasminepeng](https://github.com/jasminepeng)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]: http://www.cio.com/author/Paul-Rubens/

|

||||

[1]: https://www.blackducksoftware.com/top-open-source-licenses

|

||||

[2]: https://github.com/blog/1964-open-source-license-usage-on-github-com

|

||||

[3]: http://www.theregister.co.uk/2011/03/31/google_on_open_source_licenses/

|

||||

[4]: http://immagic.com/

|

||||

|

||||

@ -0,0 +1,117 @@

|

||||

在 Linux 上检测硬盘上的坏道和坏块

|

||||

===

|

||||

|

||||

让我们从坏道和坏块的定义开始说起,它们是一块磁盘或闪存上不再能够被读写的部分,一般是由于磁盘表面特定的[物理损坏][7]或闪存晶体管失效导致的。

|

||||

|

||||

随着坏道的继续积累,它们会对你的磁盘或闪存容量产生令人不快或破坏性的影响,甚至可能会导致硬件失效。

|

||||

|

||||

同时还需要注意的是坏块的存在警示你应该开始考虑买块新磁盘了,或者简单地将坏块标记为不可用。

|

||||

|

||||

因此,在这篇文章中,我们通过几个必要的步骤,使用特定的[磁盘扫描工具][6]让你能够判断 Linux 磁盘或闪存是否存在坏道。

|

||||

|

||||

以下就是步骤:

|

||||

|

||||

### 在 Linux 上使用坏块工具检查坏道

|

||||

|

||||

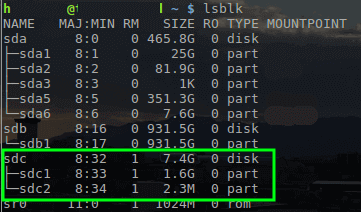

坏块工具可以让用户扫描设备检查坏道或坏块。设备可以是一个磁盘或外置磁盘,由一个如 `/dev/sdc` 这样的文件代表。

|

||||

|

||||

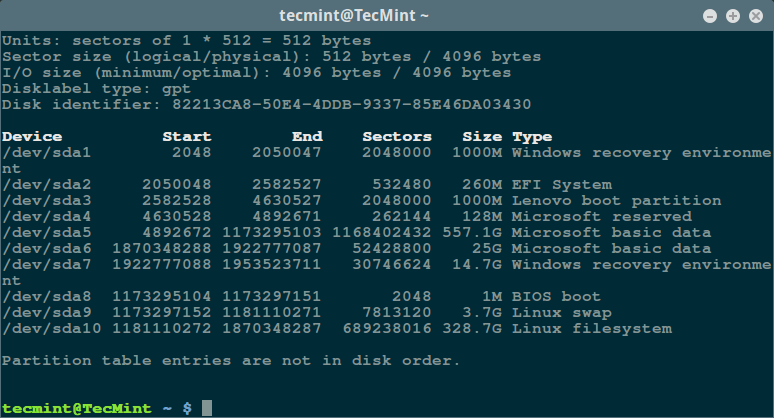

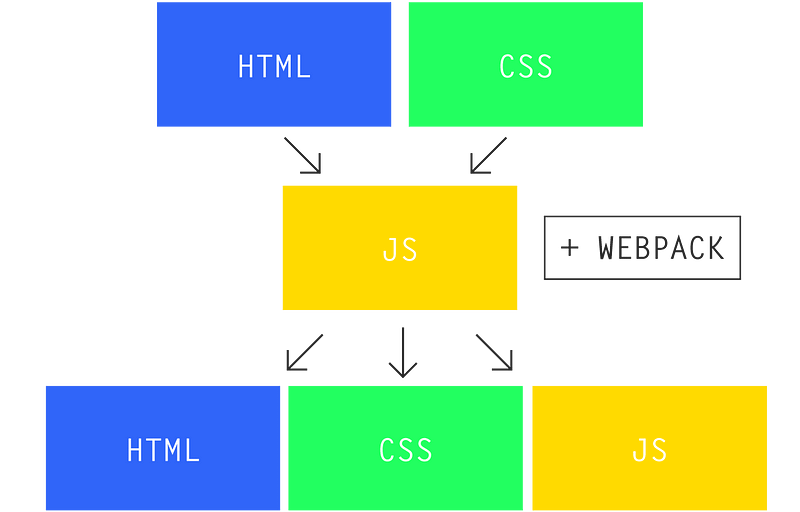

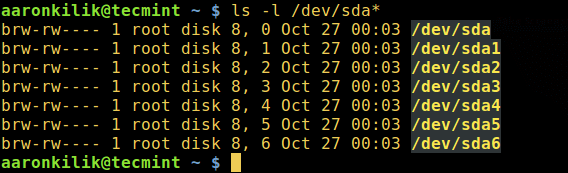

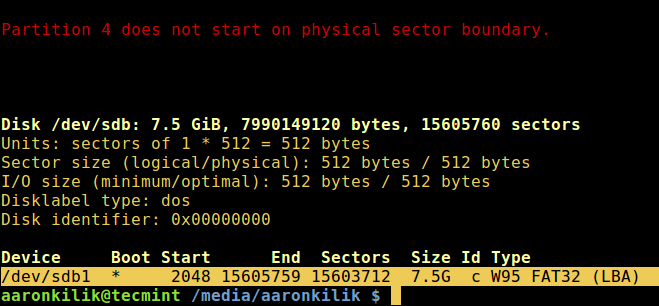

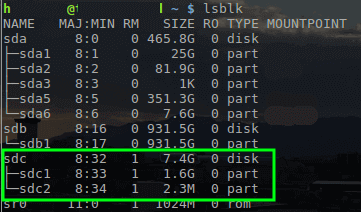

首先,通过超级用户权限执行 [fdisk 命令][5]来显示你的所有磁盘或闪存的信息以及它们的分区信息:

|

||||

|

||||

```

|

||||

$ sudo fdisk -l

|

||||

|

||||

```

|

||||

|

||||

[][4]

|

||||

|

||||

*列出 Linux 文件系统分区*

|

||||

|

||||

然后用如下命令检查你的 Linux 硬盘上的坏道/坏块:

|

||||

|

||||

```

|

||||

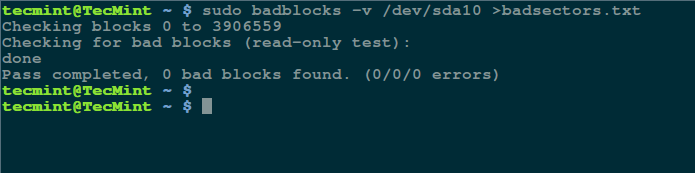

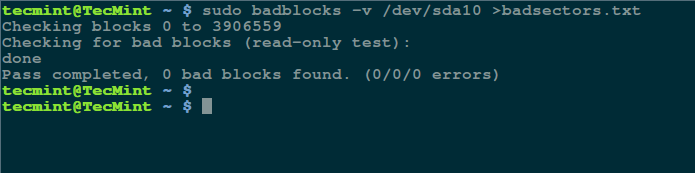

$ sudo badblocks -v /dev/sda10 > badsectors.txt

|

||||

|

||||

```

|

||||

|

||||

[][3]

|

||||

|

||||

*在 Linux 上扫描硬盘坏道*

|

||||

|

||||

上面的命令中,badblocks 扫描设备 `/dev/sda10`(记得指定你的实际设备),`-v` 选项让它显示操作的详情。另外,这里使用了输出重定向将操作结果重定向到了文件 `badsectors.txt`。

|

||||

|

||||

如果你在你的磁盘上发现任何坏道,卸载磁盘并像下面这样让系统不要将数据写入回报的扇区中。

|

||||

|

||||

你需要执行 `e2fsck`(针对 ext2/ext3/ext4 文件系统)或 `fsck` 命令,命令中还需要用到 `badsectors.txt` 文件和设备文件。

|

||||

|

||||

`-l` 选项告诉命令将在指定的文件 `badsectors.txt` 中列出的扇区号码加入坏块列表。

|

||||

|

||||

```

|

||||

------------ 针对 for ext2/ext3/ext4 文件系统 ------------

|

||||

$ sudo e2fsck -l badsectors.txt /dev/sda10

|

||||

|

||||

或

|

||||

|

||||

------------ 针对其它文件系统 ------------

|

||||

$ sudo fsck -l badsectors.txt /dev/sda10

|

||||

|

||||

```

|

||||

|

||||

### 在 Linux 上使用 Smartmontools 工具扫描坏道

|

||||

|

||||

这个方法对带有 S.M.A.R.T(Self-Monitoring, Analysis and Reporting Technology,自我监控分析报告技术)系统的现代磁盘(ATA/SATA 和 SCSI/SAS 硬盘以及固态硬盘)更加的可靠和高效。S.M.A.R.T 系统能够帮助检测,报告,以及可能记录它们的健康状况,这样你就可以找出任何可能出现的硬件失效。

|

||||

|

||||

你可以使用以下命令安装 `smartmontools`:

|

||||

|

||||

```

|

||||

------------ 在基于 Debian/Ubuntu 的系统上 ------------

|

||||

$ sudo apt-get install smartmontools

|

||||

|

||||

------------ 在基于 RHEL/CentOS 的系统上 ------------

|

||||

$ sudo yum install smartmontools

|

||||

|

||||

```

|

||||

|

||||

安装完成之后,使用 `smartctl` 控制磁盘集成的 S.M.A.R.T 系统。你可以这样查看它的手册或帮助:

|

||||

|

||||

```

|

||||

$ man smartctl

|

||||

$ smartctl -h

|

||||

|

||||

```

|

||||

|

||||

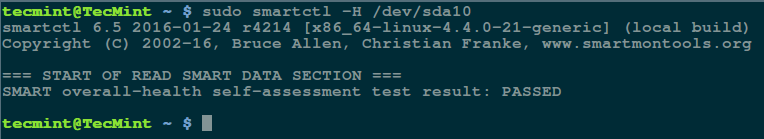

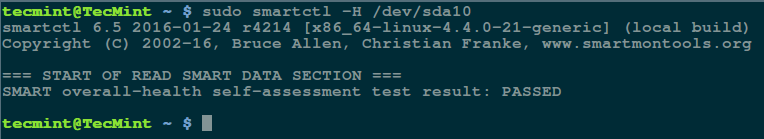

然后执行 `smartctrl` 命令并在命令中指定你的设备作为参数,以下命令包含了参数 `-H` 或 `--health` 以显示 SMART 整体健康自我评估测试结果。

|

||||

|

||||

```

|

||||

$ sudo smartctl -H /dev/sda10

|

||||

|

||||

```

|

||||

|

||||

[][2]

|

||||

|

||||

*检查 Linux 硬盘健康*

|

||||

|

||||

上面的结果指出你的硬盘很健康,近期内不大可能发生硬件失效。

|

||||

|

||||

要获取磁盘信息总览,使用 `-a` 或 `--all` 选项来显示关于磁盘所有的 SMART 信息,`-x` 或 `--xall` 来显示所有关于磁盘的 SMART 信息以及非 SMART 信息。

|

||||

|

||||

在这个教程中,我们覆盖了有关[磁盘健康诊断][1]的重要话题,你可以下面的反馈区来分享你的想法或提问,并且记得多回来看看。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.tecmint.com/check-linux-hard-disk-bad-sectors-bad-blocks/

|

||||

|

||||

|

||||

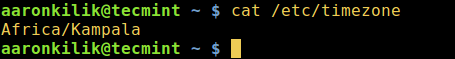

作者:[Aaron Kili][a]

|

||||

译者:[alim0x](https://github.com/alim0x)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]: http://www.tecmint.com/author/aaronkili/

|

||||

[1]:http://www.tecmint.com/defragment-linux-system-partitions-and-directories/

|

||||

[2]:http://www.tecmint.com/wp-content/uploads/2016/10/Check-Linux-Hard-Disk-Health.png

|

||||

[3]:http://www.tecmint.com/wp-content/uploads/2016/10/Scan-Hard-Disk-Bad-Sectors-in-Linux.png

|

||||

[4]:http://www.tecmint.com/wp-content/uploads/2016/10/List-Linux-Filesystem-Partitions.png

|

||||

[5]:http://www.tecmint.com/fdisk-commands-to-manage-linux-disk-partitions/

|

||||

[6]:http://www.tecmint.com/ncdu-a-ncurses-based-disk-usage-analyzer-and-tracker/

|

||||

[7]:http://www.tecmint.com/defragment-linux-system-partitions-and-directories/

|

||||

@ -0,0 +1,108 @@

|

||||

如何按最后修改时间对 ls 命令的输出进行排序

|

||||

============================================================

|

||||

|

||||

Linux 用户常常做的一个事情是,是在命令行[列出目录内容][1]。

|

||||

|

||||

我们已经知道,[ls][2] 和 [dir][3] 是两个可用在列出目录内容的 Linux 命令,前者是更受欢迎的,在大多数情况下,是用户的首选。

|

||||

|

||||

我们列出目录内容时,可以按照不同的标准进行排序,例如文件名、修改时间、添加时间、版本或者文件大小。可以通过指定一个特别的参数来使用这些文件的属性进行排序。

|

||||

|

||||

在这个简洁的 [ls 命令指导][4]中,我们将看看如何通过上次修改时间(日期和时分秒)[排序 ls 命令的输出结果][5] 。

|

||||

|

||||

让我们由执行一些基本的 [ls 命令][6]开始。

|

||||

|

||||

### Linux 基本 ls 命令

|

||||

|

||||

1、 不带任何参数运行 ls 命令将列出当前工作目录的内容。

|

||||

|

||||

```

|

||||

$ ls

|

||||

```

|

||||

|

||||

|

||||

|

||||

*列出工作目录的内容*

|

||||

|

||||

2、要列出任何目录的内容,例如 /etc 目录使用如下命令:

|

||||

|

||||

```

|

||||

$ ls /etc

|

||||

```

|

||||

|

||||

|

||||

*列出工作目录 /etc 的内容*

|

||||

|

||||

3、一个目录总是包含一些隐藏的文件(至少有两个),因此,要展示目录中的所有文件,使用`-a`或`-all`标志:

|

||||

|

||||

```

|

||||

$ ls -a

|

||||

```

|

||||

|

||||

|

||||

|

||||

*列出工作目录的隐藏文件*

|

||||

|

||||

4、你还可以打印输出的每一个文件的详细信息,例如文件权限、链接数、所有者名称和组所有者、文件大小、最后修改的时间和文件/目录名称。

|

||||

|

||||

这是由` -l `选项来设置,这意味着一个如下面的屏幕截图般的长长的列表格式。

|

||||

|

||||

```

|

||||

$ ls -l

|

||||

```

|

||||

|

||||

|

||||

*长列表目录内容*

|

||||

|

||||

### 基于日期和基于时刻排序文件

|

||||

|

||||

5、要在目录中列出文件并[对最后修改日期和时间进行排序][11],在下面的命令中使用`-t`选项:

|

||||

|

||||

```

|

||||

$ ls -lt

|

||||

```

|

||||

|

||||

|

||||

|

||||

*按日期和时间排序ls输出内容*

|

||||

|

||||

6、如果你想要一个基于日期和时间的逆向排序文件,你可以使用`-r`选项来工作,像这样:

|

||||

|

||||

```

|

||||

$ ls -ltr

|

||||

```

|

||||

|

||||

|

||||

|

||||

*按日期和时间排序的逆向输出*

|

||||

|

||||

我们将在这里结束,但是,[ls 命令][14]还有更多的使用信息和选项,因此,应该特别注意它或看看其它指南,比如《[每一个用户应该知道 ls 的命令技巧][15]》或《[使用排序命令][16]》。

|

||||

|

||||

最后但并非最不重要的,你可以通过以下反馈部分联系我们。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.tecmint.com/sort-ls-output-by-last-modified-date-and-time

|

||||

|

||||

作者:[Aaron Kili][a]

|

||||

译者:[zky001](https://github.com/zky001)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:http://www.tecmint.com/author/aaronkili/

|

||||

[1]:http://www.tecmint.com/file-and-directory-management-in-linux/

|

||||

[2]:http://www.tecmint.com/15-basic-ls-command-examples-in-linux/

|

||||

[3]:http://www.tecmint.com/linux-dir-command-usage-with-examples/

|

||||

[4]:http://www.tecmint.com/tag/linux-ls-command/

|

||||

[5]:http://www.tecmint.com/sort-command-linux/

|

||||

[6]:http://www.tecmint.com/15-basic-ls-command-examples-in-linux/

|

||||

[7]:http://www.tecmint.com/wp-content/uploads/2016/10/List-Content-of-Working-Directory.png

|

||||

[8]:http://www.tecmint.com/wp-content/uploads/2016/10/List-Contents-of-Directory.png

|

||||

[9]:http://www.tecmint.com/wp-content/uploads/2016/10/List-Hidden-Files-in-Directory.png

|

||||

[10]:http://www.tecmint.com/wp-content/uploads/2016/10/ls-Long-List-Format.png

|

||||

[11]:http://www.tecmint.com/find-and-sort-files-modification-date-and-time-in-linux/

|

||||

[12]:http://www.tecmint.com/wp-content/uploads/2016/10/Sort-ls-Output-by-Date-and-Time.png

|

||||

[13]:http://www.tecmint.com/wp-content/uploads/2016/10/Sort-ls-Output-Reverse-by-Date-and-Time.png

|

||||

[14]:http://www.tecmint.com/tag/linux-ls-command/

|

||||

[15]:http://www.tecmint.com/linux-ls-command-tricks/

|

||||

[16]:http://www.tecmint.com/linux-sort-command-examples/

|

||||

@ -0,0 +1,98 @@

|

||||

完整指南:在 Linux 上使用 Calibre 创建电子书

|

||||

====

|

||||

|

||||

[][8]

|

||||

|

||||

摘要:这份初学者指南是告诉你如何在 Linux 上用 Calibre 工具快速创建一本电子书。

|

||||

|

||||

自从 Amazon(亚马逊)在多年前开始销售电子书,电子书已经有了质的飞跃发展并且变得越来越流行。好消息是电子书非常容易使用自由开源的工具来被创建。

|

||||

|

||||

在这个教程中,我会告诉你如何在 Linux 上创建一本电子书。

|

||||

|

||||

### 在 Linux 上创建一本电子书

|

||||

|

||||

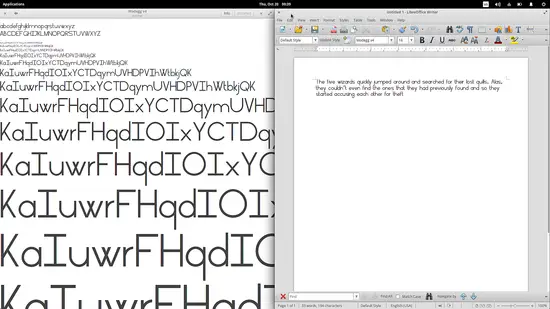

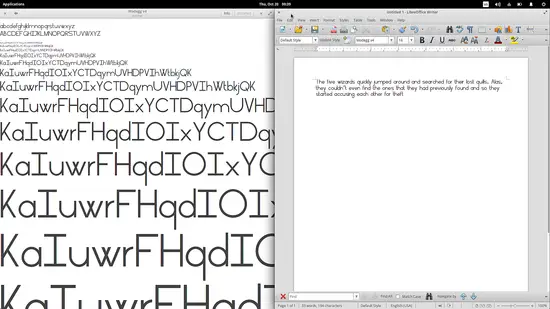

要创建一本电子书,你可能需要两个软件:一个文本处理器(当然,我使用的是 [LibreOffice][7])和 Calibre 。[Calibre][6] 是一个非常优秀的电子书阅读器,也是一个电子书库的程序。你可以使用它来[在 Linux 上打开 ePub 文件][5]或者管理你收集的电子书。(LCTT 译注:LibreOffice 是 Linux 上用来处理文本的软件,类似于 Windows 的 Office 软件)

|

||||

|

||||

除了这些软件之外,你还需要准备一个电子书封面(1410×2250)和你的原稿。

|

||||

|

||||

### 第一步

|

||||

|

||||

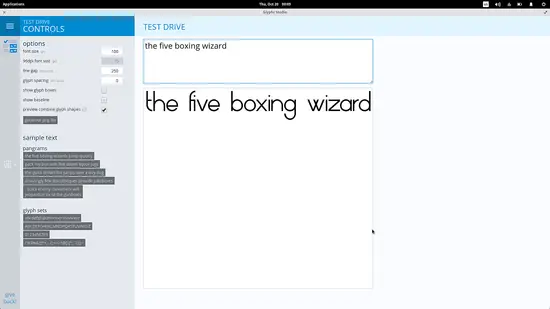

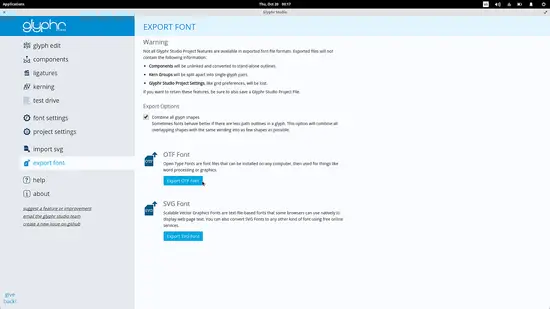

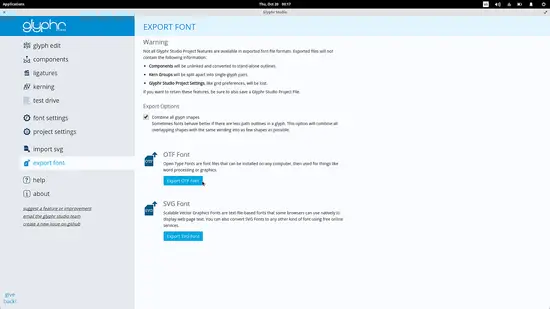

首先,你需要用你的文本处理器程序打开你的原稿。 Calibre 可以自动的为你创建一个书籍目录。要使用到这个功能,你需要在你的原稿中设置每一章的标题样式为 Heading 1,在 LibreOffice 中要做到这个只需要高亮标题并且在段落样式下拉框中选择“Heading 1”即可。

|

||||

|

||||

|

||||

|

||||

如果你想要有子章节,并且希望他们也被加入到目录中,只需要设置这些子章节的标题为 Heading 2。

|

||||

|

||||

做完这些之后,保存你的文档为 HTML 格式文件。

|

||||

|

||||

### 第二步

|

||||

|

||||

在 Calibre 程序里面,点击“添加书籍(Add books)”按钮。在对话框出现后,你可以打开你刚刚存储的 HTML 格式文件,将它加入到 Calibre 中。

|

||||

|

||||

|

||||

|

||||

### 第三步

|

||||

|

||||

一旦这个 HTML 文件加入到 Calibre 库中,选择这个新文件并且点击“编辑元数据(Edit Metadata)”按钮。在这里,你可以添加下面的这些信息:标题(Title)、 作者(Author)、封面图片(cover image)、 描述(description)和其它的一些信息。当你填完之后,点击“Ok”。

|

||||

|

||||

|

||||

|

||||

### 第四步

|

||||

|

||||

现在点击“转换书籍(Covert books)”按钮。

|

||||

|

||||

在新的窗口中,这里会有一些可选项,但是你不会需要使用它们。

|

||||

|

||||

|

||||

|

||||

在新窗口的右上部选择框中,选择 epub 文件格式。Calibre 也有创建 mobi 文件格式的其它选项,但是我发现创建那些文件之后经常出现我意料之外的事情。

|

||||

|

||||

|

||||

|

||||

### 第五步

|

||||

|

||||

在左边新的对话框中,点击“外观(Look & Feel)”。然后勾选中“移除段落间空白(Remove spacing between paragraphs)”

|

||||

|

||||

|

||||

|

||||

接下来,我们会创建一个内容目录。如果不打算在你的书中使用目录,你可以跳过这个步骤。选中“内容目录(Table of Contents)”标签。接下来,点击“一级目录(Level 1 TOC (XPath expression))”右边的魔术棒图标。

|

||||

|

||||

|

||||

|

||||

在这个新的窗口中,在“匹配 HTML 标签(Match HTML tags with tag name)”下的下拉菜单中选择“h1”。点击“OK” 来关闭这个窗口。如果你有子章节,在“二级目录(Level 2 TOC (XPath expression))”下选择“h2”。

|

||||

|

||||

|

||||

|

||||

在我们开始生成电子书前,选择输出 EPUB 文件。在这个新的页面,选择“插入目录(Insert inline Table of Contents)”选项。

|

||||

|

||||

|

||||

|

||||

现在你需要做的是点击“OK”来开始生成电子书。除非你的是一个大文件,否则生成电子书的过程一般都完成的很快。

|

||||

|

||||

到此为止,你就已经创建一本电子书了。

|

||||

|

||||

对一些特别的用户比如他们知道如何写 CSS 样式文件(LCTT 译注:CSS 文件可以用来美化 HTML 页面),Calibre 给了这类用户一个选项来为文章增加 CSS 样式。只需要回到“外观(Look & Feel)”部分,选择“风格(styling)”标签选项。但如果你想创建一个 mobi 格式的文件,因为一些原因,它是不能接受 CSS 样式文件的。

|

||||

|

||||

|

||||

|

||||

好了,是不是感到非常容易?我希望这个教程可以帮助你在 Linux 上创建电子书。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: https://itsfoss.com/create-ebook-calibre-linux/

|

||||

|

||||

作者:[John Paul][a]

|

||||