mirror of

https://github.com/LCTT/TranslateProject.git

synced 2025-03-21 02:10:11 +08:00

commit

8f83f9ff9c

231

published/20150717 How to monitor NGINX- Part 1.md

Normal file

231

published/20150717 How to monitor NGINX- Part 1.md

Normal file

@ -0,0 +1,231 @@

|

||||

如何监控 NGINX(第一篇)

|

||||

================================================================================

|

||||

|

||||

|

||||

### NGINX 是什么? ###

|

||||

|

||||

[NGINX][1] (发音为 “engine X”) 是一种流行的 HTTP 和反向代理服务器。作为一个 HTTP 服务器,NGINX 可以使用较少的内存非常高效可靠地提供静态内容。作为[反向代理][2],它可以用作多个后端服务器或类似缓存和负载平衡这样的其它应用的单一访问控制点。NGINX 是一个自由开源的产品,并有一个具备更全的功能的叫做 NGINX Plus 的商业版。

|

||||

|

||||

NGINX 也可以用作邮件代理和通用的 TCP 代理,但本文并不直接讨论 NGINX 的那些用例的监控。

|

||||

|

||||

### NGINX 主要指标 ###

|

||||

|

||||

通过监控 NGINX 可以 捕获到两类问题:NGINX 本身的资源问题,和出现在你的基础网络设施的其它问题。大多数 NGINX 用户会用到以下指标的监控,包括**每秒请求数**,它提供了一个由所有最终用户活动组成的上层视图;**服务器错误率** ,这表明你的服务器已经多长没有处理看似有效的请求;还有**请求处理时间**,这说明你的服务器处理客户端请求的总共时长(并且可以看出性能降低或当前环境的其他问题)。

|

||||

|

||||

更一般地,至少有三个主要的指标类别来监视:

|

||||

|

||||

- 基本活动指标

|

||||

- 错误指标

|

||||

- 性能指标

|

||||

|

||||

下面我们将分析在每个类别中最重要的 NGINX 指标,以及用一个相当普遍但是值得特别提到的案例来说明:使用 NGINX Plus 作反向代理。我们还将介绍如何使用图形工具或可选择的监控工具来监控所有的指标。

|

||||

|

||||

本文引用指标术语[来自我们的“监控 101 系列”][3],,它提供了一个指标收集和警告框架。

|

||||

|

||||

#### 基本活跃指标 ####

|

||||

|

||||

无论你在怎样的情况下使用 NGINX,毫无疑问你要监视服务器接收多少客户端请求和如何处理这些请求。

|

||||

|

||||

NGINX Plus 上像开源 NGINX 一样可以报告基本活跃指标,但它也提供了略有不同的辅助模块。我们首先讨论开源的 NGINX,再来说明 NGINX Plus 提供的其他指标的功能。

|

||||

|

||||

**NGINX**

|

||||

|

||||

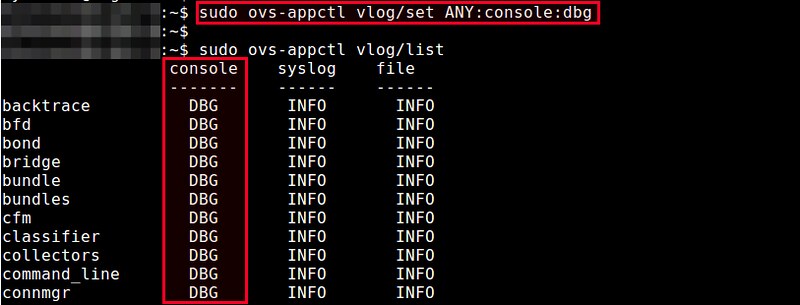

下图显示了一个客户端连接的过程,以及开源版本的 NGINX 如何在连接过程中收集指标。

|

||||

|

||||

|

||||

|

||||

Accepts(接受)、Handled(已处理)、Requests(请求)是一直在增加的计数器。Active(活跃)、Waiting(等待)、Reading(读)、Writing(写)随着请求量而增减。

|

||||

|

||||

| 名称 | 描述| [指标类型](https://www.datadoghq.com/blog/monitoring-101-collecting-data/)|

|

||||

|-----------|-----------------|-------------------------------------------------------------------------------------------------------------------------|

|

||||

| Accepts | NGINX 所接受的客户端连接数 | 资源: 功能 |

|

||||

| Handled | 成功的客户端连接数 | 资源: 功能 |

|

||||

| Active | 当前活跃的客户端连接数| 资源: 功能 |

|

||||

| Dropped(已丢弃,计算得出)| 丢弃的连接数(接受 - 已处理)| 工作:错误*|

|

||||

| Requests | 客户端请求数 | 工作:吞吐量 |

|

||||

|

||||

|

||||

_*严格的来说,丢弃的连接是 [一个资源饱和指标](https://www.datadoghq.com/blog/monitoring-101-collecting-data/#resource-metrics),但是因为饱和会导致 NGINX 停止服务(而不是延后该请求),所以,“已丢弃”视作 [一个工作指标](https://www.datadoghq.com/blog/monitoring-101-collecting-data/#work-metrics) 比较合适。_

|

||||

|

||||

NGINX worker 进程接受 OS 的连接请求时 **Accepts** 计数器增加,而**Handled** 是当实际的请求得到连接时(通过建立一个新的连接或重新使用一个空闲的)。这两个计数器的值通常都是相同的,如果它们有差别则表明连接被**Dropped**,往往这是由于资源限制,比如已经达到 NGINX 的[worker_connections][4]的限制。

|

||||

|

||||

一旦 NGINX 成功处理一个连接时,连接会移动到**Active**状态,在这里对客户端请求进行处理:

|

||||

|

||||

Active状态

|

||||

|

||||

- **Waiting**: 活跃的连接也可以处于 Waiting 子状态,如果有在此刻没有活跃请求的话。新连接可以绕过这个状态并直接变为到 Reading 状态,最常见的是在使用“accept filter(接受过滤器)” 和 “deferred accept(延迟接受)”时,在这种情况下,NGINX 不会接收 worker 进程的通知,直到它具有足够的数据才开始响应。如果连接设置为 keep-alive ,那么它在发送响应后将处于等待状态。

|

||||

|

||||

- **Reading**: 当接收到请求时,连接离开 Waiting 状态,并且该请求本身使 Reading 状态计数增加。在这种状态下 NGINX 会读取客户端请求首部。请求首部是比较小的,因此这通常是一个快速的操作。

|

||||

|

||||

- **Writing**: 请求被读取之后,其使 Writing 状态计数增加,并保持在该状态,直到响应返回给客户端。这意味着,该请求在 Writing 状态时, 一方面 NGINX 等待来自上游系统的结果(系统放在 NGINX “后面”),另外一方面,NGINX 也在同时响应。请求往往会在 Writing 状态花费大量的时间。

|

||||

|

||||

通常,一个连接在同一时间只接受一个请求。在这种情况下,Active 连接的数目 == Waiting 的连接 + Reading 请求 + Writing 。然而,较新的 SPDY 和 HTTP/2 协议允许多个并发请求/响应复用一个连接,所以 Active 可小于 Waiting 的连接、 Reading 请求、Writing 请求的总和。 (在撰写本文时,NGINX 不支持 HTTP/2,但预计到2015年期间将会支持。)

|

||||

|

||||

**NGINX Plus**

|

||||

|

||||

正如上面提到的,所有开源 NGINX 的指标在 NGINX Plus 中是可用的,但另外也提供其他的指标。本节仅说明了 NGINX Plus 可用的指标。

|

||||

|

||||

|

||||

|

||||

|

||||

Accepted (已接受)、Dropped,总数是不断增加的计数器。Active、 Idle(空闲)和处于 Current(当前)处理阶段的各种状态下的连接或请求的当前数量随着请求量而增减。

|

||||

|

||||

| 名称 | 描述| [指标类型](https://www.datadoghq.com/blog/monitoring-101-collecting-data/)|

|

||||

|-----------|-----------------|-------------------------------------------------------------------------------------------------------------------------|

|

||||

| Accepted | NGINX 所接受的客户端连接数 | 资源: 功能 |

|

||||

| Dropped |丢弃的连接数(接受 - 已处理)| 工作:错误*|

|

||||

| Active | 当前活跃的客户端连接数| 资源: 功能 |

|

||||

| Idle | 没有当前请求的客户端连接| 资源: 功能 |

|

||||

| Total(全部) | 客户端请求数 | 工作:吞吐量 |

|

||||

|

||||

_*严格的来说,丢弃的连接是 [一个资源饱和指标](https://www.datadoghq.com/blog/monitoring-101-collecting-data/#resource-metrics),但是因为饱和会导致 NGINX 停止服务(而不是延后该请求),所以,“已丢弃”视作 [一个工作指标](https://www.datadoghq.com/blog/monitoring-101-collecting-data/#work-metrics) 比较合适。_

|

||||

|

||||

当 NGINX Plus worker 进程接受 OS 的连接请求时 **Accepted** 计数器递增。如果 worker 进程为请求建立连接失败(通过建立一个新的连接或重新使用一个空闲),则该连接被丢弃, **Dropped** 计数增加。通常连接被丢弃是因为资源限制,如 NGINX Plus 的[worker_connections][4]的限制已经达到。

|

||||

|

||||

**Active** 和 **Idle** 和[如上所述][5]的开源 NGINX 的“active” 和 “waiting”状态是相同的,但是有一点关键的不同:在开源 NGINX 上,“waiting”状态包括在“active”中,而在 NGINX Plus 上“idle”的连接被排除在“active” 计数外。**Current** 和开源 NGINX 是一样的也是由“reading + writing” 状态组成。

|

||||

|

||||

**Total** 为客户端请求的累积计数。请注意,单个客户端连接可涉及多个请求,所以这个数字可能会比连接的累计次数明显大。事实上,(total / accepted)是每个连接的平均请求数量。

|

||||

|

||||

**开源 和 Plus 之间指标的不同**

|

||||

|

||||

|NGINX (开源) |NGINX Plus|

|

||||

|-----------------------|----------------|

|

||||

| accepts | accepted |

|

||||

| dropped 通过计算得来| dropped 直接得到 |

|

||||

| reading + writing| current|

|

||||

| waiting| idle|

|

||||

| active (包括 “waiting”状态) | active (排除 “idle” 状态)|

|

||||

| requests| total|

|

||||

|

||||

**提醒指标: 丢弃连接**

|

||||

|

||||

被丢弃的连接数目等于 Accepts 和 Handled 之差(NGINX 中),或是可直接得到标准指标(NGINX Plus 中)。在正常情况下,丢弃连接数应该是零。如果在每个单位时间内丢弃连接的速度开始上升,那么应该看看是否资源饱和了。

|

||||

|

||||

|

||||

|

||||

**提醒指标: 每秒请求数**

|

||||

|

||||

按固定时间间隔采样你的请求数据(开源 NGINX 的**requests**或者 NGINX Plus 中**total**) 会提供给你单位时间内(通常是分钟或秒)所接受的请求数量。监测这个指标可以查看进入的 Web 流量尖峰,无论是合法的还是恶意的,或者突然的下降,这通常都代表着出现了问题。每秒请求数若发生急剧变化可以提醒你的环境出现问题了,即使它不能告诉你确切问题的位置所在。请注意,所有的请求都同样计数,无论 URL 是什么。

|

||||

|

||||

|

||||

|

||||

**收集活跃指标**

|

||||

|

||||

开源的 NGINX 提供了一个简单状态页面来显示基本的服务器指标。该状态信息以标准格式显示,实际上任何图形或监控工具可以被配置去解析这些相关数据,以用于分析、可视化、或提醒。NGINX Plus 提供一个 JSON 接口来供给更多的数据。阅读相关文章“[NGINX 指标收集][6]”来启用指标收集的功能。

|

||||

|

||||

#### 错误指标 ####

|

||||

|

||||

| 名称 | 描述| [指标类型](https://www.datadoghq.com/blog/monitoring-101-collecting-data/)| 可用于 |

|

||||

|-----------|-----------------|--------------------------------------------------------------------------------------------------------|----------------|

|

||||

| 4xx 代码 | 客户端错误计数 | 工作:错误 | NGINX 日志, NGINX Plus|

|

||||

| 5xx 代码| 服务器端错误计数 | 工作:错误 | NGINX 日志, NGINX Plus|

|

||||

|

||||

NGINX 错误指标告诉你服务器是否经常返回错误而不是正常工作。客户端错误返回4XX状态码,服务器端错误返回5XX状态码。

|

||||

|

||||

**提醒指标: 服务器错误率**

|

||||

|

||||

服务器错误率等于在单位时间(通常为一到五分钟)内5xx错误状态代码的总数除以[状态码][7](1XX,2XX,3XX,4XX,5XX)的总数。如果你的错误率随着时间的推移开始攀升,调查可能的原因。如果突然增加,可能需要采取紧急行动,因为客户端可能收到错误信息。

|

||||

|

||||

|

||||

|

||||

关于客户端错误的注意事项:虽然监控4XX是很有用的,但从该指标中你仅可以捕捉有限的信息,因为它只是衡量客户的行为而不捕捉任何特殊的 URL。换句话说,4xx出现的变化可能是一个信号,例如网络扫描器正在寻找你的网站漏洞时。

|

||||

|

||||

**收集错误度量**

|

||||

|

||||

虽然开源 NGINX 不能马上得到用于监测的错误率,但至少有两种方法可以得到:

|

||||

|

||||

- 使用商业支持的 NGINX Plus 提供的扩展状态模块

|

||||

- 配置 NGINX 的日志模块将响应码写入访问日志

|

||||

|

||||

关于这两种方法,请阅读相关文章“[NGINX 指标收集][6]”。

|

||||

|

||||

#### 性能指标 ####

|

||||

|

||||

| 名称 | 描述| [指标类型](https://www.datadoghq.com/blog/monitoring-101-collecting-data/)| 可用于 |

|

||||

|-----------|-----------------|--------------------------------------------------------------------------------------------------------|----------------|

|

||||

| request time (请求处理时间)| 处理每个请求的时间,单位为秒 | 工作:性能 | NGINX 日志|

|

||||

|

||||

**提醒指标: 请求处理时间**

|

||||

|

||||

请求处理时间指标记录了 NGINX 处理每个请求的时间,从读到客户端的第一个请求字节到完成请求。较长的响应时间说明问题在上游。

|

||||

|

||||

**收集处理时间指标**

|

||||

|

||||

NGINX 和 NGINX Plus 用户可以通过添加 $request_time 变量到访问日志格式中来捕捉处理时间数据。关于配置日志监控的更多细节在[NGINX指标收集][6]。

|

||||

|

||||

#### 反向代理指标 ####

|

||||

|

||||

| 名称 | 描述| [指标类型](https://www.datadoghq.com/blog/monitoring-101-collecting-data/)| 可用于 |

|

||||

|-----------|-----------------|--------------------------------------------------------------------------------------------------------|----------------|

|

||||

| 上游服务器的活跃链接 | 当前活跃的客户端连接 | 资源:功能 | NGINX Plus |

|

||||

| 上游服务器的 5xx 错误代码| 服务器错误 | 工作:错误 | NGINX Plus |

|

||||

| 每个上游组的可用服务器 | 服务器传递健康检查 | 资源:可用性| NGINX Plus

|

||||

|

||||

[反向代理][9]是 NGINX 最常见的使用方法之一。商业支持的 NGINX Plus 显示了大量有关后端(或“上游 upstream”)的服务器指标,这些与反向代理设置相关的。本节重点介绍了几个 NGINX Plus 用户可用的关键上游指标。

|

||||

|

||||

NGINX Plus 首先将它的上游指标按组分开,然后是针对单个服务器的。因此,例如,你的反向代理将请求分配到五个上游的 Web 服务器上,你可以一眼看出是否有单个服务器压力过大,也可以看出上游组中服务器的健康状况,以确保良好的响应时间。

|

||||

|

||||

**活跃指标**

|

||||

|

||||

**每上游服务器的活跃连接**的数量可以帮助你确认反向代理是否正确的分配工作到你的整个服务器组上。如果你正在使用 NGINX 作为负载均衡器,任何一台服务器处理的连接数的明显偏差都可能表明服务器正在努力消化请求,或者是你配置使用的负载均衡的方法(例如[round-robin 或 IP hashing][10])不是最适合你流量模式的。

|

||||

|

||||

**错误指标**

|

||||

|

||||

错误指标,上面所说的高于5XX(服务器错误)状态码,是监控指标中有价值的一个,尤其是响应码部分。 NGINX Plus 允许你轻松地提取**每个上游服务器的 5xx 错误代码**的数量,以及响应的总数量,以此来确定某个特定服务器的错误率。

|

||||

|

||||

**可用性指标**

|

||||

|

||||

对于 web 服务器的运行状况,还有另一种角度,NGINX 可以通过**每个组中当前可用服务器的总量**很方便监控你的上游组的健康。在一个大的反向代理上,你可能不会非常关心其中一个服务器的当前状态,就像你只要有可用的服务器组能够处理当前的负载就行了。但监视上游组内的所有工作的服务器总量可为判断 Web 服务器的健康状况提供一个更高层面的视角。

|

||||

|

||||

**收集上游指标**

|

||||

|

||||

NGINX Plus 上游指标显示在内部 NGINX Plus 的监控仪表盘上,并且也可通过一个JSON 接口来服务于各种外部监控平台。在我们的相关文章“[NGINX指标收集][6]”中有个例子。

|

||||

|

||||

### 结论 ###

|

||||

|

||||

在这篇文章中,我们已经谈到了一些有用的指标,你可以使用表格来监控 NGINX 服务器。如果你是刚开始使用 NGINX,监控下面提供的大部分或全部指标,可以让你很好的了解你的网络基础设施的健康和活跃程度:

|

||||

|

||||

- [已丢弃的连接][12]

|

||||

- [每秒请求数][13]

|

||||

- [服务器错误率][14]

|

||||

- [请求处理数据][15]

|

||||

|

||||

最终,你会学到更多,更专业的衡量指标,尤其是关于你自己基础设施和使用情况的。当然,监控哪一项指标将取决于你可用的工具。参见相关的文章来[逐步指导你的指标收集][6],不管你使用 NGINX 还是 NGINX Plus。

|

||||

|

||||

在 Datadog 中,我们已经集成了 NGINX 和 NGINX Plus,这样你就可以以最少的设置来收集和监控所有 Web 服务器的指标。 [在本文中][17]了解如何用 NGINX Datadog来监控,并开始[免费试用 Datadog][18]吧。

|

||||

|

||||

### 诚谢 ###

|

||||

|

||||

在文章发表之前非常感谢 NGINX 团队审阅这篇,并提供重要的反馈和说明。

|

||||

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: https://www.datadoghq.com/blog/how-to-monitor-nginx/

|

||||

|

||||

作者:K Young

|

||||

译者:[strugglingyouth](https://github.com/strugglingyouth)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[1]:http://nginx.org/en/

|

||||

[2]:http://nginx.com/resources/glossary/reverse-proxy-server/

|

||||

[3]:https://www.datadoghq.com/blog/monitoring-101-collecting-data/

|

||||

[4]:http://nginx.org/en/docs/ngx_core_module.html#worker_connections

|

||||

[5]:https://www.datadoghq.com/blog/how-to-monitor-nginx/#active-state

|

||||

[6]:https://www.datadoghq.com/blog/how-to-collect-nginx-metrics/

|

||||

[7]:http://www.w3.org/Protocols/rfc2616/rfc2616-sec10.html

|

||||

[8]:https://www.datadoghq.com/blog/how-to-collect-nginx-metrics/

|

||||

[9]:https://en.wikipedia.org/wiki/Reverse_proxy

|

||||

[10]:http://nginx.com/blog/load-balancing-with-nginx-plus/

|

||||

[11]:https://www.datadoghq.com/blog/how-to-collect-nginx-metrics/

|

||||

[12]:https://www.datadoghq.com/blog/how-to-monitor-nginx/#dropped-connections

|

||||

[13]:https://www.datadoghq.com/blog/how-to-monitor-nginx/#requests-per-second

|

||||

[14]:https://www.datadoghq.com/blog/how-to-monitor-nginx/#server-error-rate

|

||||

[15]:https://www.datadoghq.com/blog/how-to-monitor-nginx/#request-processing-time

|

||||

[16]:https://www.datadoghq.com/blog/how-to-collect-nginx-metrics/

|

||||

[17]:https://www.datadoghq.com/blog/how-to-monitor-nginx-with-datadog/

|

||||

[18]:https://www.datadoghq.com/blog/how-to-monitor-nginx/#sign-up

|

||||

[19]:https://github.com/DataDog/the-monitor/blob/master/nginx/how_to_monitor_nginx.md

|

||||

[20]:https://github.com/DataDog/the-monitor/issues

|

||||

@ -1,32 +1,32 @@

|

||||

无忧之道:Docker中容器的备份、恢复和迁移

|

||||

================================================================================

|

||||

今天,我们将学习如何快速地对docker容器进行快捷备份、恢复和迁移。[Docker][1]是一个开源平台,用于自动化部署应用,以通过快捷的途径在称之为容器的轻量级软件层下打包、发布和运行这些应用。它使得应用平台独立,因为它扮演了Linux上一个额外的操作系统级虚拟化的自动化抽象层。它通过其组件cgroups和命名空间利用Linux内核的资源分离特性,达到避免虚拟机开销的目的。它使得用于部署和扩展web应用、数据库和后端服务的大规模构建块无需依赖于特定的堆栈或供应者。

|

||||

今天,我们将学习如何快速地对docker容器进行快捷备份、恢复和迁移。[Docker][1]是一个开源平台,用于自动化部署应用,以通过快捷的途径在称之为容器的轻量级软件层下打包、发布和运行这些应用。它使得应用平台独立,因为它扮演了Linux上一个额外的操作系统级虚拟化的自动化抽象层。它通过其组件cgroups和命名空间利用Linux内核的资源分离特性,达到避免虚拟机开销的目的。它使得用于部署和扩展web应用、数据库和后端服务的大规模构建组件无需依赖于特定的堆栈或供应者。

|

||||

|

||||

所谓的容器,就是那些创建自Docker镜像的软件层,它包含了独立的Linux文件系统和开箱即用的应用程序。如果我们有一个在盒子中运行着的Docker容器,并且想要备份这些容器以便今后使用,或者想要迁移这些容器,那么,本教程将帮助你掌握在Linux操作系统中备份、恢复和迁移Docker容器。

|

||||

所谓的容器,就是那些创建自Docker镜像的软件层,它包含了独立的Linux文件系统和开箱即用的应用程序。如果我们有一个在机器中运行着的Docker容器,并且想要备份这些容器以便今后使用,或者想要迁移这些容器,那么,本教程将帮助你掌握在Linux操作系统中备份、恢复和迁移Docker容器的方法。

|

||||

|

||||

我们怎样才能在Linux中备份、恢复和迁移Docker容器呢?这里为您提供了一些便捷的步骤。

|

||||

|

||||

### 1. 备份容器 ###

|

||||

|

||||

首先,为了备份Docker中的容器,我们会想看看我们想要备份的容器列表。要达成该目的,我们需要在我们运行这Docker引擎,并已创建了容器的Linux机器中运行 docker ps 命令。

|

||||

首先,为了备份Docker中的容器,我们会想看看我们想要备份的容器列表。要达成该目的,我们需要在我们运行着Docker引擎,并已创建了容器的Linux机器中运行 docker ps 命令。

|

||||

|

||||

# docker ps

|

||||

|

||||

|

||||

|

||||

在此之后,我们要选择我们想要备份的容器,然后我们会去创建该容器的快照。我们可以使用 docker commit 命令来创建快照。

|

||||

在此之后,我们要选择我们想要备份的容器,然后去创建该容器的快照。我们可以使用 docker commit 命令来创建快照。

|

||||

|

||||

# docker commit -p 30b8f18f20b4 container-backup

|

||||

|

||||

|

||||

|

||||

该命令会生成一个作为Docker镜像的容器快照,我们可以通过运行 docker images 命令来查看Docker镜像,如下。

|

||||

该命令会生成一个作为Docker镜像的容器快照,我们可以通过运行 `docker images` 命令来查看Docker镜像,如下。

|

||||

|

||||

# docker images

|

||||

|

||||

|

||||

|

||||

正如我们所看见的,上面做的快照已经作为Docker镜像保存了。现在,为了备份该快照,我们有两个选择,一个是我们可以登陆进Docker注册中心,并推送该镜像;另一个是我们可以将Docker镜像打包成tarball备份,以供今后使用。

|

||||

正如我们所看见的,上面做的快照已经作为Docker镜像保存了。现在,为了备份该快照,我们有两个选择,一个是我们可以登录进Docker注册中心,并推送该镜像;另一个是我们可以将Docker镜像打包成tar包备份,以供今后使用。

|

||||

|

||||

如果我们想要在[Docker注册中心][2]上传或备份镜像,我们只需要运行 docker login 命令来登录进Docker注册中心,然后推送所需的镜像即可。

|

||||

|

||||

@ -39,23 +39,23 @@

|

||||

|

||||

|

||||

|

||||

如果我们不想备份到docker注册中心,而是想要将此镜像保存在本地机器中,以供日后使用,那么我们可以将其作为tarball备份。要完成该操作,我们需要运行以下 docker save 命令。

|

||||

如果我们不想备份到docker注册中心,而是想要将此镜像保存在本地机器中,以供日后使用,那么我们可以将其作为tar包备份。要完成该操作,我们需要运行以下 `docker save` 命令。

|

||||

|

||||

# docker save -o ~/container-backup.tar container-backup

|

||||

|

||||

|

||||

|

||||

要验证tarball时候已经生成,我们只需要在保存tarball的目录中运行 ls 命令。

|

||||

要验证tar包是否已经生成,我们只需要在保存tar包的目录中运行 ls 命令即可。

|

||||

|

||||

### 2. 恢复容器 ###

|

||||

|

||||

接下来,在我们成功备份了我们的Docker容器后,我们现在来恢复这些被快照成Docker镜像的容器。如果我们已经在注册中心推送了这些Docker镜像,那么我们仅仅需要把那个Docker镜像拖回并直接运行即可。

|

||||

接下来,在我们成功备份了我们的Docker容器后,我们现在来恢复这些制作了Docker镜像快照的容器。如果我们已经在注册中心推送了这些Docker镜像,那么我们仅仅需要把那个Docker镜像拖回并直接运行即可。

|

||||

|

||||

# docker pull arunpyasi/container-backup:test

|

||||

|

||||

|

||||

|

||||

但是,如果我们将这些Docker镜像作为tarball文件备份到了本地,那么我们只要使用 docker load 命令,后面加上tarball的备份路径,就可以加载该Docker镜像了。

|

||||

但是,如果我们将这些Docker镜像作为tar包文件备份到了本地,那么我们只要使用 docker load 命令,后面加上tar包的备份路径,就可以加载该Docker镜像了。

|

||||

|

||||

# docker load -i ~/container-backup.tar

|

||||

|

||||

@ -63,7 +63,7 @@

|

||||

|

||||

# docker images

|

||||

|

||||

在镜像被加载后,我们将从加载的镜像去运行Docker容器。

|

||||

在镜像被加载后,我们将用加载的镜像去运行Docker容器。

|

||||

|

||||

# docker run -d -p 80:80 container-backup

|

||||

|

||||

@ -71,11 +71,11 @@

|

||||

|

||||

### 3. 迁移Docker容器 ###

|

||||

|

||||

迁移容器同时涉及到了上面两个操作,备份和恢复。我们可以将任何一个Docker容器从一台机器迁移到另一台机器。在迁移过程中,首先我们将容器的备份作为快照Docker镜像。然后,该Docker镜像或者是被推送到了Docker注册中心,或者被作为tarball文件保存到了本地。如果我们将镜像推送到了Docker注册中心,我们简单地从任何我们想要的机器上使用 docker run 命令来恢复并运行该容器。但是,如果我们将镜像打包成tarball备份到了本地,我们只需要拷贝或移动该镜像到我们想要的机器上,加载该镜像并运行需要的容器即可。

|

||||

迁移容器同时涉及到了上面两个操作,备份和恢复。我们可以将任何一个Docker容器从一台机器迁移到另一台机器。在迁移过程中,首先我们将把容器备份为Docker镜像快照。然后,该Docker镜像或者是被推送到了Docker注册中心,或者被作为tar包文件保存到了本地。如果我们将镜像推送到了Docker注册中心,我们简单地从任何我们想要的机器上使用 docker run 命令来恢复并运行该容器。但是,如果我们将镜像打包成tar包备份到了本地,我们只需要拷贝或移动该镜像到我们想要的机器上,加载该镜像并运行需要的容器即可。

|

||||

|

||||

### 尾声 ###

|

||||

|

||||

最后,我们已经学习了如何快速地备份、恢复和迁移Docker容器,本教程适用于各个成功运行Docker的操作系统平台。真的,Docker是一个相当简单易用,然而功能却十分强大的工具。它的命令相当易记,这些命令都非常短,带有许多简单而强大的标记和参数。上面的方法让我们备份容器时很是安逸,使得我们可以在日后很轻松地恢复它们。这会帮助我们恢复我们的容器和镜像,即便主机系统崩溃,甚至意外地被清除。如果你还有很多问题、建议、反馈,请在下面的评论框中写出来吧,可以帮助我们改进或更新我们的内容。谢谢大家!享受吧 :-)

|

||||

最后,我们已经学习了如何快速地备份、恢复和迁移Docker容器,本教程适用于各个可以成功运行Docker的操作系统平台。真的,Docker是一个相当简单易用,然而功能却十分强大的工具。它的命令相当易记,这些命令都非常短,带有许多简单而强大的标记和参数。上面的方法让我们备份容器时很是安逸,使得我们可以在日后很轻松地恢复它们。这会帮助我们恢复我们的容器和镜像,即便主机系统崩溃,甚至意外地被清除。如果你还有很多问题、建议、反馈,请在下面的评论框中写出来吧,可以帮助我们改进或更新我们的内容。谢谢大家!享受吧 :-)

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

@ -83,7 +83,7 @@ via: http://linoxide.com/linux-how-to/backup-restore-migrate-containers-docker/

|

||||

|

||||

作者:[Arun Pyasi][a]

|

||||

译者:[GOLinux](https://github.com/GOLinux)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

@ -0,0 +1,75 @@

|

||||

选择成为软件开发工程师的5个原因

|

||||

================================================================================

|

||||

|

||||

|

||||

|

||||

这个星期我将给本地一所高中做一次有关于程序猿是怎样工作的演讲。我是志愿(由 [Transfer][1] 组织的)来到这所学校谈论我的工作的。这个学校本周将有一个技术主题日,并且他们很想听听科技行业是怎样工作的。因为我是从事软件开发的,这也是我将和学生们讲的内容。演讲的其中一部分是我为什么觉得软件开发是一个很酷的职业。主要原因如下:

|

||||

|

||||

### 5个原因 ###

|

||||

|

||||

**1、创造性**

|

||||

|

||||

如果你问别人创造性的工作有哪些,别人通常会说像作家,音乐家或者画家那样的(工作)。但是极少有人知道软件开发也是一项非常具有创造性的工作。它是最符合创造性定义的了,因为你创造了一个以前没有的新功能。这种解决方案可以在整体和细节上以很多形式来展现。我们经常会遇到一些需要做权衡的场景(比如说运行速度与内存消耗的权衡)。当然前提是这种解决方案必须是正确的。这些所有的行为都是需要强大的创造性的。

|

||||

|

||||

**2、协作性**

|

||||

|

||||

另外一个表象是程序猿们独自坐在他们的电脑前,然后撸一天的代码。但是软件开发事实上通常总是一个团队努力的结果。你会经常和你的同事讨论编程问题以及解决方案,并且和产品经理、测试人员、客户讨论需求以及其他问题。

|

||||

经常有人说,结对编程(2个开发人员一起在一个电脑上编程)是一种流行的最佳实践。

|

||||

|

||||

**3、高需性**

|

||||

|

||||

世界上越来越多的人在用软件,正如 [Marc Andreessen](https://en.wikipedia.org/wiki/Marc_Andreessen) 所说 " [软件正在吞噬世界][2] "。虽然程序猿现在的数量非常巨大(在斯德哥尔摩,程序猿现在是 [最普遍的职业][3] ),但是,需求量一直处于供不应求的局面。据软件公司说,他们最大的挑战之一就是 [找到优秀的程序猿][4] 。我也经常接到那些想让我跳槽的招聘人员打来的电话。我知道至少除软件行业之外的其他行业的雇主不会那么拼(的去招聘)。

|

||||

|

||||

**4、高酬性**

|

||||

|

||||

软件开发可以带来不菲的收入。卖一份你已经开发好的软件的额外副本是没有 [边际成本][5] 的。这个事实与对程序猿的高需求意味着收入相当可观。当然还有许多更捞金的职业,但是相比一般人群,我认为软件开发者确实“日进斗金”(知足吧!骚年~~)。

|

||||

|

||||

**5、前瞻性**

|

||||

|

||||

有许多工作岗位消失,往往是由于它们可以被计算机和软件代替。但是所有这些新的程序依然需要开发和维护,因此,程序猿的前景还是相当好的。

|

||||

|

||||

### 但是...###

|

||||

|

||||

**外包又是怎么一回事呢?**

|

||||

|

||||

难道所有外包到其他国家的软件开发的薪水都很低吗?这是一个理想丰满,现实骨感的例子(有点像 [瀑布开发模型][6] )。软件开发基本上跟设计的工作一样,是一个探索发现的工作。它受益于强有力的合作。更进一步说,特别当你的主打产品是软件的时候,你所掌握的开发知识是绝对的优势。知识在整个公司中分享的越容易,那么公司的发展也将越来越好。

|

||||

|

||||

换一种方式去看待这个问题。软件外包已经存在了相当一段时间了。但是对本土程序猿的需求量依旧非常高。因为许多软件公司看到了雇佣本土程序猿的带来的收益要远远超过了相对较高的成本(其实还是赚了)。

|

||||

|

||||

### 如何成为人生大赢家 ###

|

||||

|

||||

虽然我有许多我认为软件开发是一件非常有趣的事情的理由 (详情见: [为什么我热爱编程][7] )。但是这些理由,并不适用于所有人。幸运的是,尝试编程是一件非常容易的事情。在互联网上有数不尽的学习编程的资源。例如,[Coursera][8] 和 [Udacity][9] 都拥有很好的入门课程。如果你从来没有撸过码,可以尝试其中一个免费的课程,找找感觉。

|

||||

|

||||

寻找一个既热爱又能谋生的事情至少有2个好处。首先,由于你天天去做,工作将比你简单的只为谋生要有趣的多。其次,如果你真的非常喜欢,你将更好的擅长它。我非常喜欢下面一副关于伟大工作组成的韦恩图(作者 [@eskimon)][10]) 。因为编码的薪水确实相当不错,我认为如果你真的喜欢它,你将有一个很好的机会,成为人生的大赢家!

|

||||

|

||||

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://henrikwarne.com/2014/12/08/5-reasons-why-software-developer-is-a-great-career-choice/

|

||||

|

||||

作者:[Henrik Warne][a]

|

||||

译者:[mousycoder](https://github.com/mousycoder)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

|

||||

[a]:http://henrikwarne.com/

|

||||

[1]:http://www.transfer.nu/omoss/transferinenglish.jspx?pageId=23

|

||||

[2]:http://www.wsj.com/articles/SB10001424053111903480904576512250915629460

|

||||

[3]:http://www.di.se/artiklar/2014/6/12/jobbet-som-tar-over-landet/

|

||||

[4]:http://computersweden.idg.se/2.2683/1.600324/examinationstakten-racker-inte-for-branschens-behov

|

||||

[5]:https://en.wikipedia.org/wiki/Marginal_cost

|

||||

[6]:https://en.wikipedia.org/wiki/Waterfall_model

|

||||

[7]:http://henrikwarne.com/2012/06/02/why-i-love-coding/

|

||||

[8]:https://www.coursera.org/

|

||||

[9]:https://www.udacity.com/

|

||||

[10]:https://eskimon.wordpress.com/about/

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

99

sources/talk/20150806 5 heroes of the Linux world.md

Normal file

99

sources/talk/20150806 5 heroes of the Linux world.md

Normal file

@ -0,0 +1,99 @@

|

||||

5 heroes of the Linux world

|

||||

================================================================================

|

||||

Who are these people, seen and unseen, whose work affects all of us every day?

|

||||

|

||||

|

||||

Image courtesy [Christopher Michel/Flickr][1]

|

||||

|

||||

### High-flying penguins ###

|

||||

|

||||

Linux and open source is driven by passionate people who write best-of-breed software and then release the code to the public so anyone can use it, without any strings attached. (Well, there is one string attached and that’s licence.)

|

||||

|

||||

Who are these people? These heroes of the Linux world, whose work affects all of us every day. Allow me to introduce you.

|

||||

|

||||

|

||||

Image courtesy Swapnil Bhartiya

|

||||

|

||||

### Klaus Knopper ###

|

||||

|

||||

Klaus Knopper, an Austrian developer who lives in Germany, is the founder of Knoppix and Adriana Linux, which he developed for his blind wife.

|

||||

|

||||

Knoppix holds a very special place in heart of those Linux users who started using Linux before Ubuntu came along. What makes Knoppix so special is that it popularized the concept of Live CD. Unlike Windows or Mac OS X, you could run the entire operating system from the CD without installing anything on the system. It allowed new users to test Linux on their systems without formatting the hard drive. The live feature of Linux alone contributed heavily to its popularity.

|

||||

|

||||

|

||||

Image courtesy [Fórum Internacional Software Live/Flickr][2]

|

||||

|

||||

### Lennart Pottering ###

|

||||

|

||||

Lennart Pottering is yet another genius from Germany. He has written so many core components of a Linux (as well as BSD) system that it’s hard to keep track. Most of his work is towards the successors of aging or broken components of the Linux systems.

|

||||

|

||||

Pottering wrote the modern init system systemd, which shook the Linux world and created a [rift in the Debian community][3].

|

||||

|

||||

While Linus Torvalds has no problems with systemd, and praises it, he is not a huge fan of the way systemd developers (including the co-author Kay Sievers,) respond to bug reports and criticism. At one point Linus said on the LKML (Linux Kernel Mailing List) that he would [never work with Sievers][4].

|

||||

|

||||

Lennart is also the author of Pulseaudio, sound server on Linux and Avahi, zero-configuration networking (zeroconf) implementation.

|

||||

|

||||

|

||||

Image courtesy [Meego Com/Flickr][5]

|

||||

|

||||

### Jim Zemlin ###

|

||||

|

||||

Jim Zemlin isn't a developer, but as founder of The Linux Foundation he is certainly one of the most important figures of the Linux world.

|

||||

|

||||

In 2007, The Linux Foundation was formed as a result of merger between two open source bodies: the Free Standards Group and the Open Source Development Labs. Zemlin was the executive director of the Free Standards Group. Post-merger Zemlin became the executive director of The Linux Foundation and has held that position since.

|

||||

|

||||

Under his leadership, The Linux Foundation has become the central figure in the modern IT world and plays a very critical role for the Linux ecosystem. In order to ensure that key developers like Torvalds and Kroah-Hartman can focus on Linux, the foundation sponsors them as fellows.

|

||||

|

||||

Zemlin also made the foundation a bridge between companies so they can collaborate on Linux while at the same time competing in the market. The foundation also organizes many conferences around the world and [offers many courses for Linux developers][6].

|

||||

|

||||

People may think of Zemlin as Linus Torvalds' boss, but he refers to himself as "Linus Torvalds' janitor."

|

||||

|

||||

|

||||

Image courtesy [Coscup/Flickr][7]

|

||||

|

||||

### Greg Kroah-Hartman ###

|

||||

|

||||

Greg Kroah-Hartman is known as second-in-command of the Linux kernel. The ‘gentle giant’ is the maintainer of the stable branch of the kernel and of staging subsystem, USB, driver core, debugfs, kref, kobject, and the [sysfs][8] kernel subsystems along with many other components of a Linux system.

|

||||

|

||||

He is also credited for device drivers for Linux. One of his jobs is to travel around the globe, meet hardware makers and persuade them to make their drivers available for Linux. The next time you plug some random USB device to your system and it works out of the box, thank Kroah-Hartman. (Don't thank the distro. Some distros try to take credit for the work Kroah-Hartman or the Linux kernel did.)

|

||||

|

||||

Kroah-Hartman previously worked for Novell and then joined the Linux Foundation as a fellow, alongside Linus Torvalds.

|

||||

|

||||

Kroah-Hartman is the total opposite of Linus and never rants (at least publicly). One time there was some ripple was when he stated that [Canonical doesn’t contribute much to the Linux kernel][9].

|

||||

|

||||

On a personal level, Kroah-Hartman is extremely helpful to new developers and users and is easily accessible.

|

||||

|

||||

|

||||

Image courtesy Swapnil Bhartiya

|

||||

|

||||

### Linus Torvalds ###

|

||||

|

||||

No collection of Linux heroes would be complete without Linus Torvalds. He is the author of the Linux kernel, the most used open source technology on the planet and beyond. His software powers everything from space stations to supercomputers, military drones to mobile devices and tiny smartwatches. Linus remains the authority on the Linux kernel and makes the final decision on which patches to merge to the kernel.

|

||||

|

||||

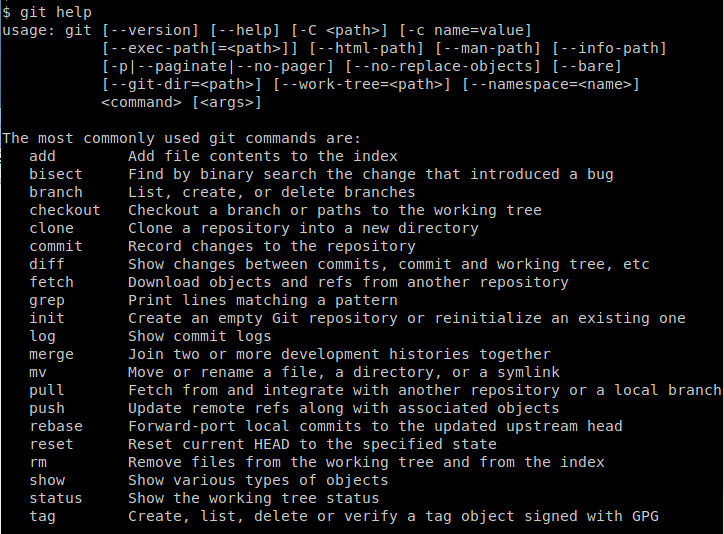

Linux isn't Torvalds' only contribution open source. When he got fed-up with the existing software revision control systems, which his kernel heavily relied on, he wrote his own, called Git. Git enjoys the same reputation as Linux; it is the most used version control system in the world.

|

||||

|

||||

Torvalds is also a passionate scuba diver and when he found no decent dive logs for Linux, he wrote his own and called it SubSurface.

|

||||

|

||||

Torvalds is [well known for his rants][10] and once admitted that his ego is as big as a small planet. But he is also known for admitting his mistakes if he realizes he was wrong.

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.itworld.com/article/2955001/linux/5-heros-of-the-linux-world.html

|

||||

|

||||

作者:[Swapnil Bhartiya][a]

|

||||

译者:[译者ID](https://github.com/译者ID)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:http://www.itworld.com/author/Swapnil-Bhartiya/

|

||||

[1]:https://flic.kr/p/siJ25M

|

||||

[2]:https://flic.kr/p/uTzj54

|

||||

[3]:http://www.itwire.com/business-it-news/open-source/66153-systemd-fallout-two-debian-technical-panel-members-resign

|

||||

[4]:http://www.linuxveda.com/2014/04/04/linus-torvalds-systemd-kay-sievers/

|

||||

[5]:https://flic.kr/p/9Lnhpu

|

||||

[6]:http://www.itworld.com/article/2951968/linux/linux-foundation-offers-cheaper-courses-and-certifications-for-india.html

|

||||

[7]:https://flic.kr/p/hBv8Pp

|

||||

[8]:https://en.wikipedia.org/wiki/Sysfs

|

||||

[9]:https://www.youtube.com/watch?v=CyHAeGBFS8k

|

||||

[10]:http://www.itworld.com/article/2873200/operating-systems/11-technologies-that-tick-off-linus-torvalds.html

|

||||

@ -0,0 +1,81 @@

|

||||

Torvalds 2.0: Patricia Torvalds on computing, college, feminism, and increasing diversity in tech

|

||||

================================================================================

|

||||

|

||||

Image by : Photo by Becky Svartström. Modified by Opensource.com. [CC BY-SA 4.0][1]

|

||||

|

||||

Patricia Torvalds isn't the Torvalds name that pops up in Linux and open source circles. Yet.

|

||||

|

||||

|

||||

|

||||

At 18, Patricia is a feminist with a growing list of tech achievements, open source industry experience, and her sights set on diving into her freshman year of college at Duke University's Pratt School of Engineering. She works for [Puppet Labs][2] in Portland, Oregon, as an intern, but soon she'll head to Durham, North Carolina, to start the fall semester of college.

|

||||

|

||||

In this exclusive interview, Patricia explains what got her interested in computer science and engineering (spoiler alert: it wasn't her father), what her high school did "right" with teaching tech, the important role feminism plays in her life, and her thoughts on the lack of diversity in technology.

|

||||

|

||||

|

||||

|

||||

### What made you interested in studying computer science and engineering? ###

|

||||

|

||||

My interest in tech really grew throughout high school. I wanted to go into biology for a while, until around my sophomore year. I had a web design internship at the Portland VA after my sophomore year. And I took an engineering class called Exploratory Ventures, which sent an ROV into the Pacific ocean late in my sophomore year, but the turning point was probably when I was named a regional winner and national runner up for the [NCWIT Aspirations in Computing][3] award halfway through my junior year.

|

||||

|

||||

The award made me feel validated in my interest, of course, but I think the most important part of it was getting to join a Facebook group for all the award winners. The girls who have won the award are absolutely incredible and so supportive of each other. I was definitely interested in computer science before I won the award, because of my work in XV and at the VA, but having these girls to talk to solidified my interest and has kept it really strong. Teaching XV—more on that later—my junior and senior year, also, made engineering and computer science really fun for me.

|

||||

|

||||

### What do you plan to study? And do you already know what you want to do after college? ###

|

||||

|

||||

I hope to major in either Mechanical or Electrical and Computer Engineering as well as Computer Science, and minor in Women's Studies. After college, I hope to work for a company that supports or creates technology for social good, or start my own company.

|

||||

|

||||

### My daughter had one high school programming class—Visual Basic. She was the only girl in her class, and she ended up getting harassed and having a miserable experience. What was your experience like? ###

|

||||

|

||||

My high school began offering computer science classes my senior year, and I took Visual Basic as well! The class wasn't bad, but I was definitely one of three or four girls in the class of 20 or so students. Other computing classes seemed to have similar gender breakdowns. However, my high school was extremely small and the teacher was supportive of inclusivity in tech, so there was no harassment that I noticed. Hopefully the classes become more diverse in future years.

|

||||

|

||||

### What did your schools do right technology-wise? And how could they have been better? ###

|

||||

|

||||

My high school gave us consistent access to computers, and teachers occasionally assigned technology-based assignments in unrelated classes—we had to create a website for a social studies class a few times—which I think is great because it exposes everyone to tech. The robotics club was also pretty active and well-funded, but fairly small; I was not a member. One very strong component of the school's technology/engineering program is actually a student-taught engineering class called Exploratory Ventures, which is a hands-on class that tackles a new engineering or computer science problem every year. I taught it for two years with a classmate of mine, and have had students come up to me and tell me they're interested in pursuing engineering or computer science as a result of the class.

|

||||

|

||||

However, my high school was not particularly focused on deliberately including young women in these programs, and it isn't very racially diverse. The computing-based classes and clubs were, by a vast majority, filled with white male students. This could definitely be improved on.

|

||||

|

||||

### Growing up, how did you use technology at home? ###

|

||||

|

||||

Honestly, when I was younger I used my computer time (my dad created a tracker, which logged us off after an hour of Internet use) to play Neopets or similar games. I guess I could have tried to mess with the tracker or played on the computer without Internet use, but I just didn't. I sometimes did little science projects with my dad, and I remember once printing "Hello world" in the terminal with him a thousand times, but mostly I just played online games with my sisters and didn't get my start in computing until high school.

|

||||

|

||||

### You were active in the Feminism Club at your high school. What did you learn from that experience? What feminist issues are most important to you now? ###

|

||||

|

||||

My friend and I co-founded Feminism Club at our high school late in our sophomore year. We did receive lots of resistance to the club at first, and while that never entirely went away, by the time we graduated feminist ideals were absolutely a part of the school's culture. The feminist work we did at my high school was generally on a more immediate scale and focused on issues like the dress code.

|

||||

|

||||

Personally, I'm very focused on intersectional feminism, which is feminism as it applies to other aspects of oppression like racism and classism. The Facebook page [Guerrilla Feminism][4] is a great example of an intersectional feminism and has done so much to educate me. I currently run the Portland branch.

|

||||

|

||||

Feminism is also important to me in terms of diversity in tech, although as an upper-class white woman with strong connections in the tech world, the problems here affect me much less than they do other people. The same goes for my involvement in intersectional feminism. Publications like [Model View Culture][5] are very inspiring to me, and I admire Shanley Kane so much for what she does.

|

||||

|

||||

### What advice would you give parents who want to teach their children how to program? ###

|

||||

|

||||

Honestly, nobody ever pushed me into computer science or engineering. Like I said, for a long time I wanted to be a geneticist. I got a summer internship doing web design for the VA the summer after my sophomore year and totally changed my mind. So I don't know if I can fully answer that question.

|

||||

|

||||

I do think genuine interest is important, though. If my dad had sat me down in front of the computer and told me to configure a webserver when I was 12, I don't think I'd be interested in computer science. Instead, my parents gave me a lot of free reign to do what I wanted, which was mostly coding terrible little HTML sites for my Neopets. Neither of my younger sisters are interested in engineering or computer science, and my parents don't care. I'm really lucky my parents have given me and my sisters the encouragement and resources to explore our interests.

|

||||

|

||||

Still, I grew up saying my future career would be "like my dad's"—even when I didn't know what he did. He has a pretty cool job. Also, one time when I was in middle school, I told him that and he got a little choked up and said I wouldn't think that in high school. So I guess that motivated me a bit.

|

||||

|

||||

### What suggestions do you have for leaders in open source communities to help them attract and maintain a more diverse mix of contributors? ###

|

||||

|

||||

I'm actually not active in particular open source communities. I feel much more comfortable discussing computing with other women; I'm a member of the [NCWIT Aspirations in Computing][6] network and it's been one of the most important aspects of my continued interest in technology, as well as the Facebook group [Ladies Storm Hackathons][7].

|

||||

|

||||

I think this applies well to attracting and maintaining a talented and diverse mix of contributors: Safe spaces are important. I have seen the misogynistic and racist comments made in some open source communities, and subsequent dismissals when people point out the issues. I think that in maintaining a professional community there have to be strong standards on what constitutes harassment or inappropriate conduct. Of course, people can—and will—have a variety of opinions on what they should be able to express in open source communities, or any community. However, if community leaders actually want to attract and maintain diverse talent, they need to create a safe space and hold community members to high standards.

|

||||

|

||||

I also think that some community leaders just don't value diversity. It's really easy to argue that tech is a meritocracy, and the reason there are so few marginalized people in tech is just that they aren't interested, and that the problem comes from earlier on in the pipeline. They argue that if someone is good enough at their job, their gender or race or sexual orientation doesn't matter. That's the easy argument. But I was raised not to make excuses for mistakes. And I think the lack of diversity is a mistake, and that we should be taking responsibility for it and actively trying to make it better.

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://opensource.com/life/15/8/patricia-torvalds-interview

|

||||

|

||||

作者:[Rikki Endsley][a]

|

||||

译者:[译者ID](https://github.com/译者ID)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:http://opensource.com/users/rikki-endsley

|

||||

[1]:https://creativecommons.org/licenses/by-sa/4.0/

|

||||

[2]:https://puppetlabs.com/

|

||||

[3]:https://www.aspirations.org/

|

||||

[4]:https://www.facebook.com/guerrillafeminism

|

||||

[5]:https://modelviewculture.com/

|

||||

[6]:https://www.aspirations.org/

|

||||

[7]:https://www.facebook.com/groups/LadiesStormHackathons/

|

||||

@ -1,52 +0,0 @@

|

||||

Translating by XLCYun.

|

||||

A Week With GNOME As My Linux Desktop: What They Get Right & Wrong - Page 4 - GNOME Settings

|

||||

================================================================================

|

||||

### Settings ###

|

||||

|

||||

There are a few specific KDE Control modules that I am going to pick at, mostly because they are so laughable horrible compared to their gnome counter-part that its honestly pathetic.

|

||||

|

||||

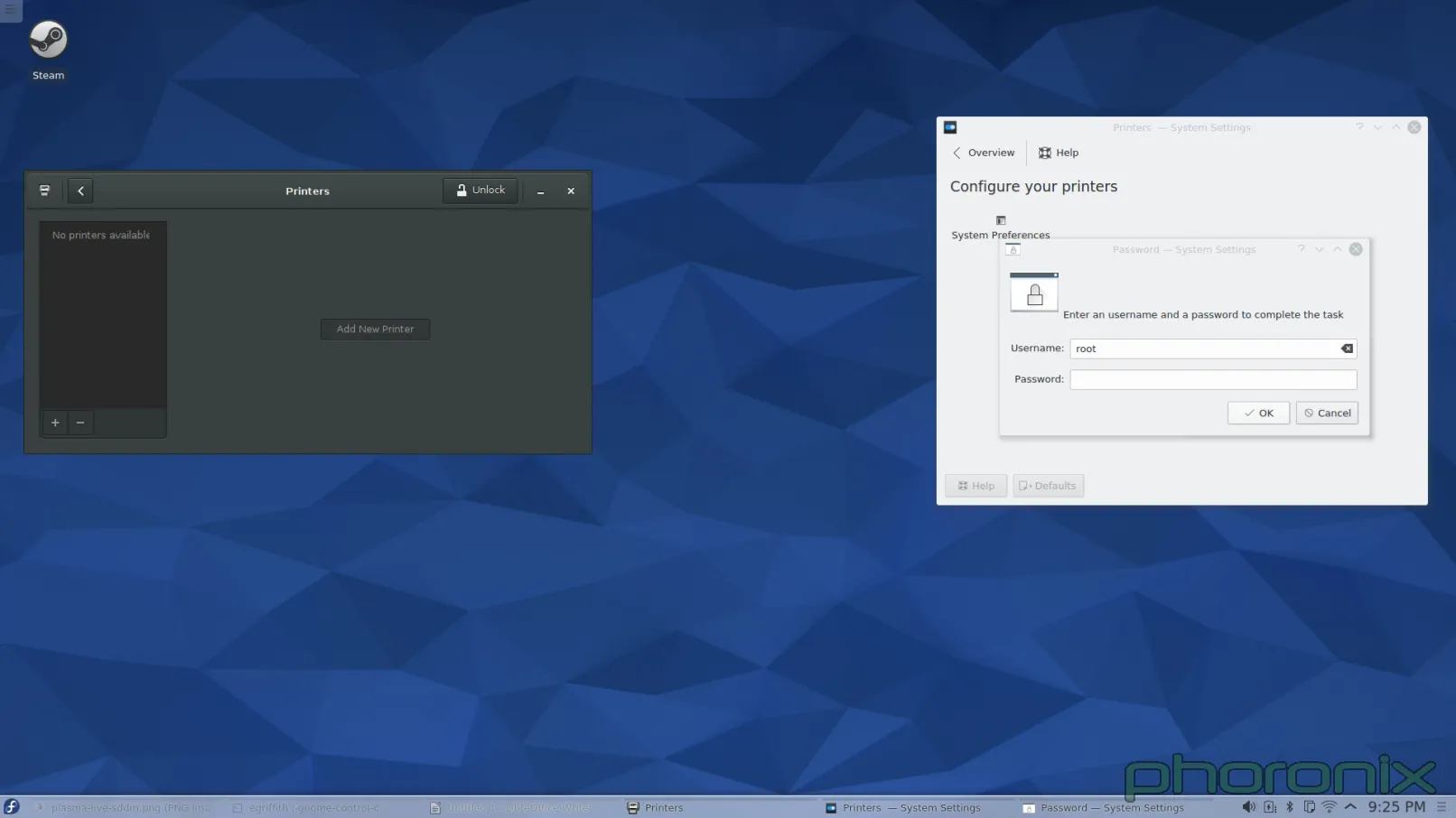

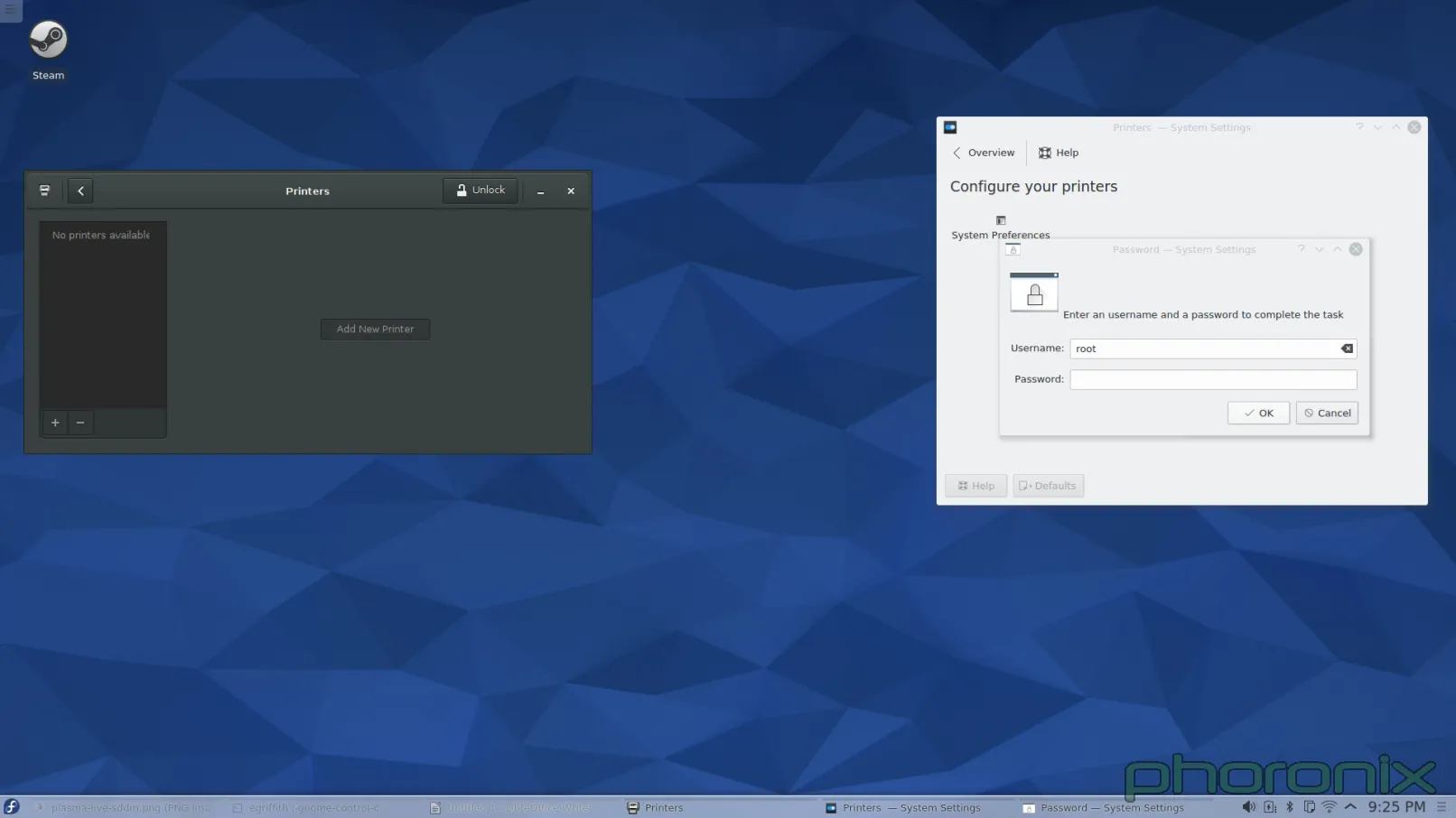

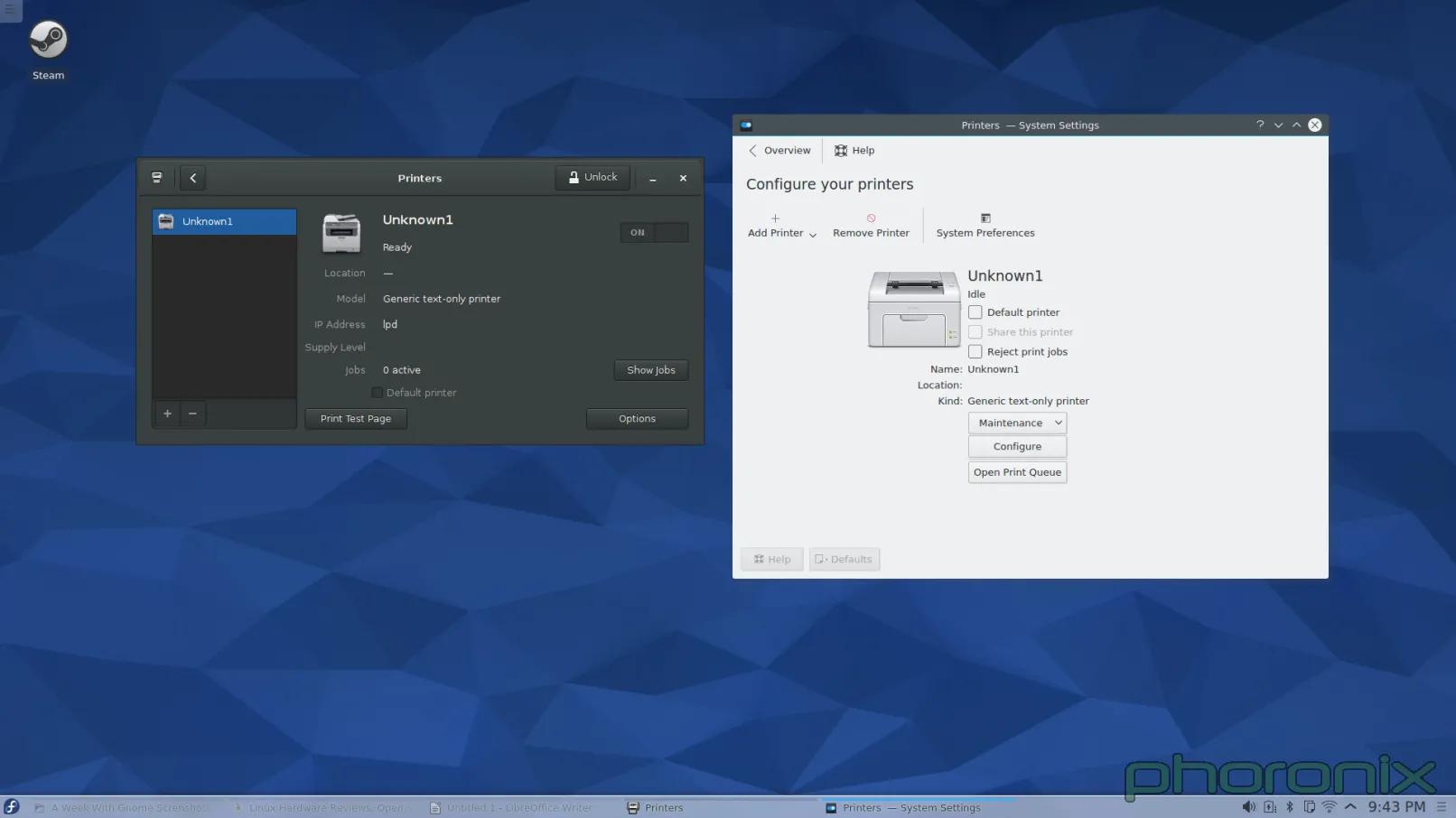

First one up? Printers.

|

||||

|

||||

|

||||

|

||||

Gnome is on the left, KDE is on the right. You know what the difference is between the printer applet on the left, and the one on the right? When I opened up Gnome Control Center and hit "Printers" the applet popped up and nothing happened. When I opened up KDE System Settings and hit "Printers" I got a password prompt. Before I was even allowed to LOOK at the printers I had to give up ROOT'S password.

|

||||

|

||||

Let me just re-iterate that. In this, the days of PolicyKit and Logind, I am still being asked for Root's password for what should be a sudo operation. I didn't even SETUP root's password when I installed the system. I had to drop down to Konsole and run 'sudo passwd root' so that I could GIVE root a password so that I could go back into System Setting's printer applet and then give up root's password to even LOOK at what printers were available. Once I did that I got prompted for root's password AGAIN when I hit "Add Printer" then I got prompted for root's password AGAIN after I went through and selected a printer and driver. Three times I got asked for ROOT'S password just to add a printer to the system.

|

||||

|

||||

When I added a printer under Gnome I didn't get prompted for my SUDO password until I hit "Unlock" in the printer applet. I got asked once, then I never got asked again. KDE, I am begging you... Adopt Gnome's "Unlock" methodology. Do not prompt for a password until you really need one. Furthermore, whatever library is out there that allows for KDE applications to bypass PolicyKit / Logind (if its available) and prompt directly for root... Bin that code. If this was a multi-user system I either have to give up root's password, or be there every second of every day in order to put it in any time a user might have to update, change, or add a new printer. Both options are completely unacceptable.

|

||||

|

||||

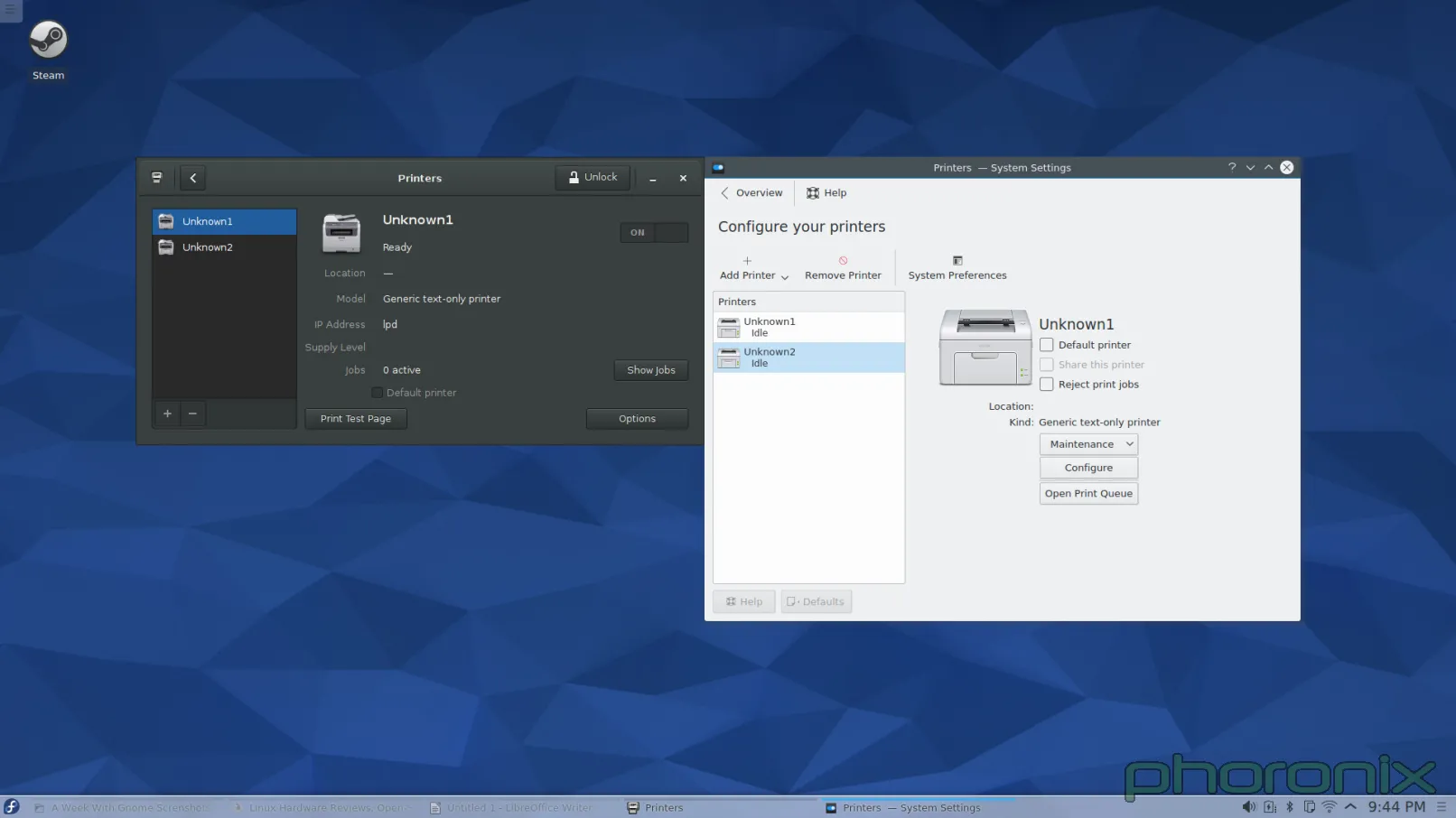

One more thing...

|

||||

|

||||

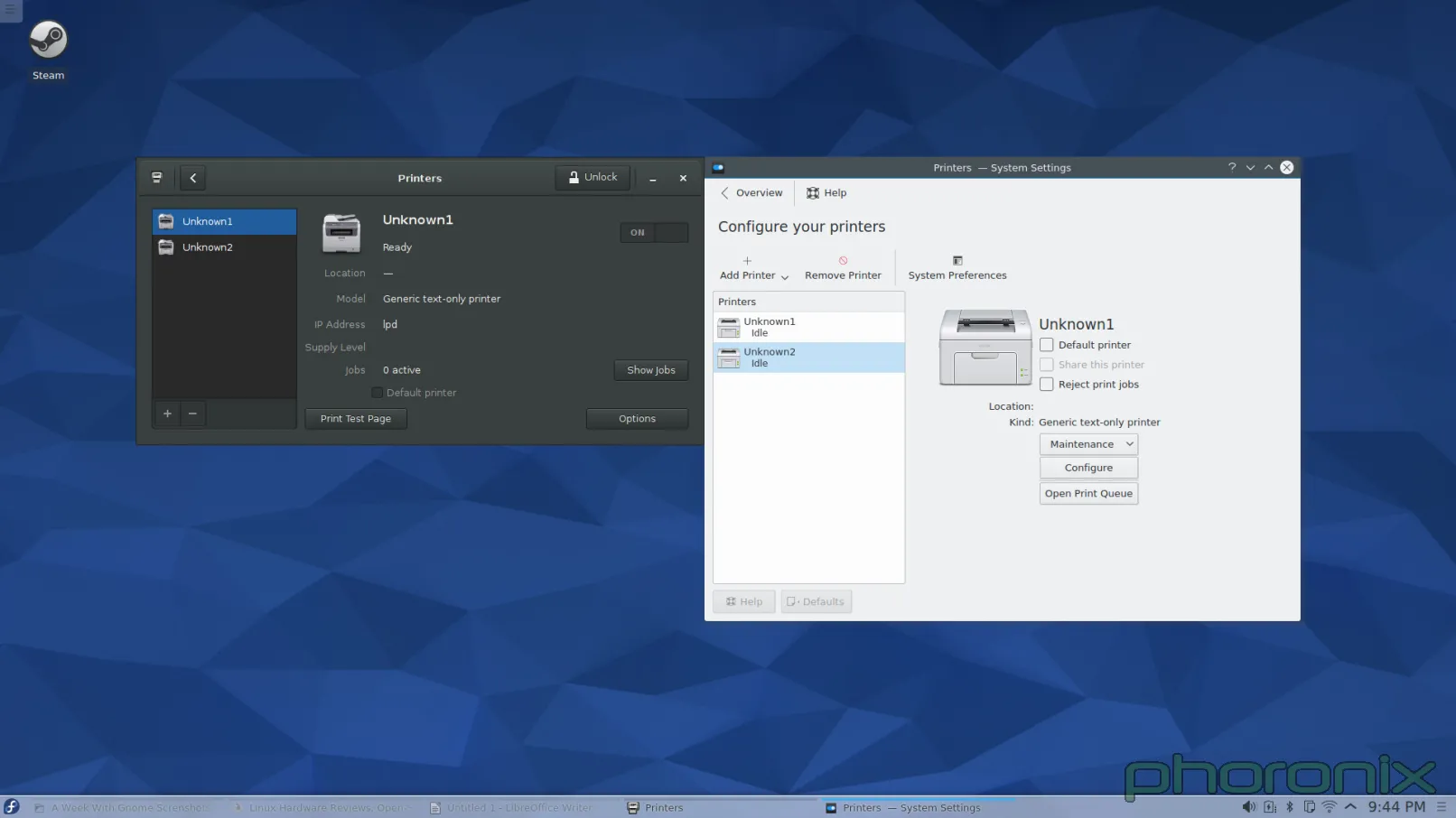

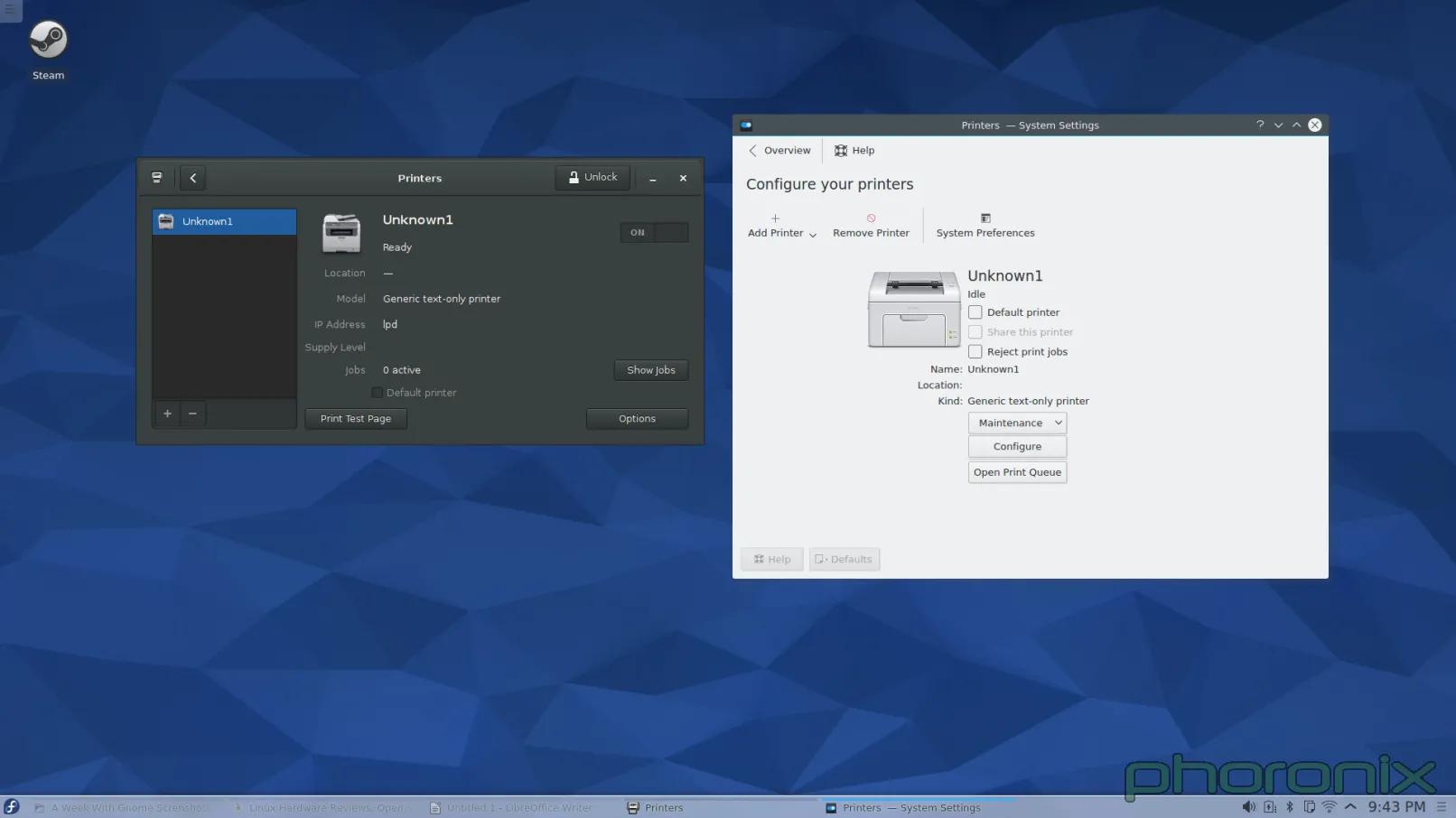

|

||||

|

||||

|

||||

|

||||

Question to the forums: What looks cleaner to you? I had this realization when I was writing this article: Gnome's applet makes it very clear where any additional printers are going to go, they set aside a column on the left to list them. Before I added a second printer to KDE, and it suddenly grew a left side column, I had this nightmare-image in my head of the applet just shoving another icon into the screen and them being listed out like preview images in a folder of pictures. I was pleasantly surprised to see that I was wrong but the fact that the applet just 'grew' another column that didn't exist before and drastically altered its presentation is not really 'good' either. It's a design that's confusing, shocking, and non-intuitive.

|

||||

|

||||

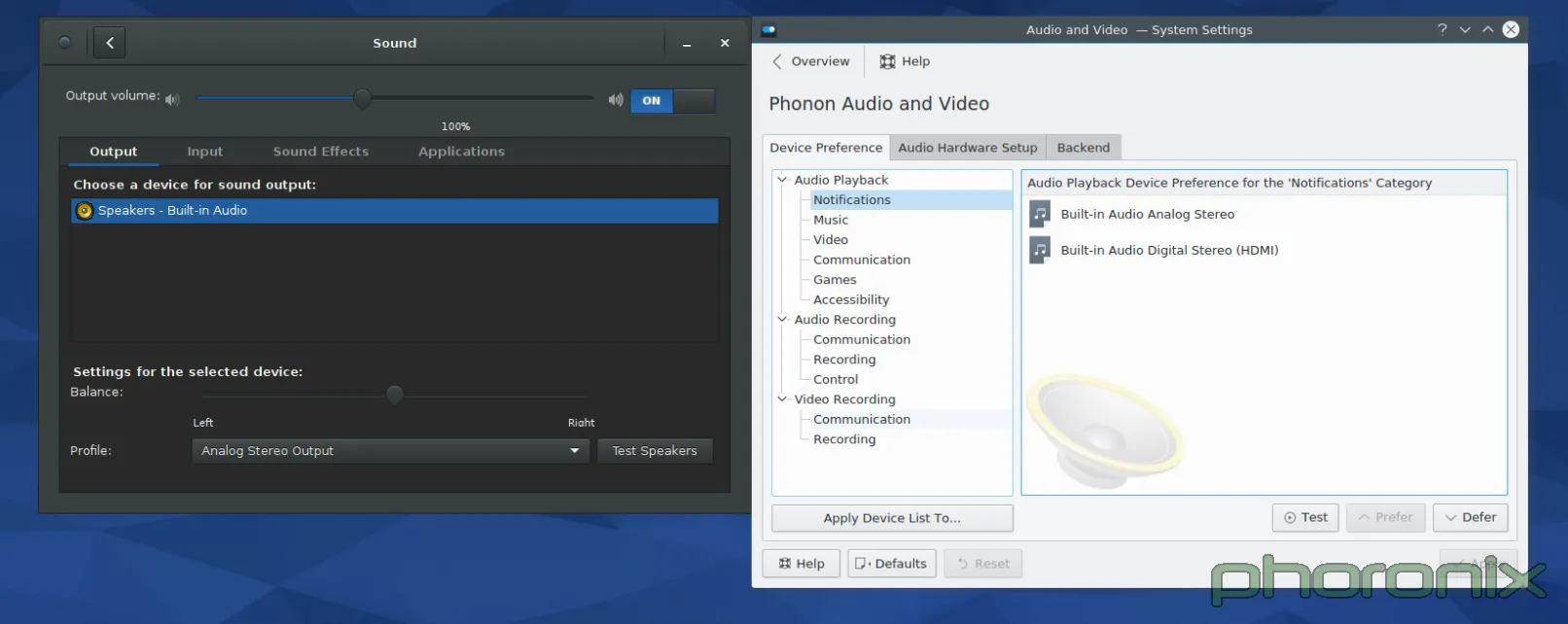

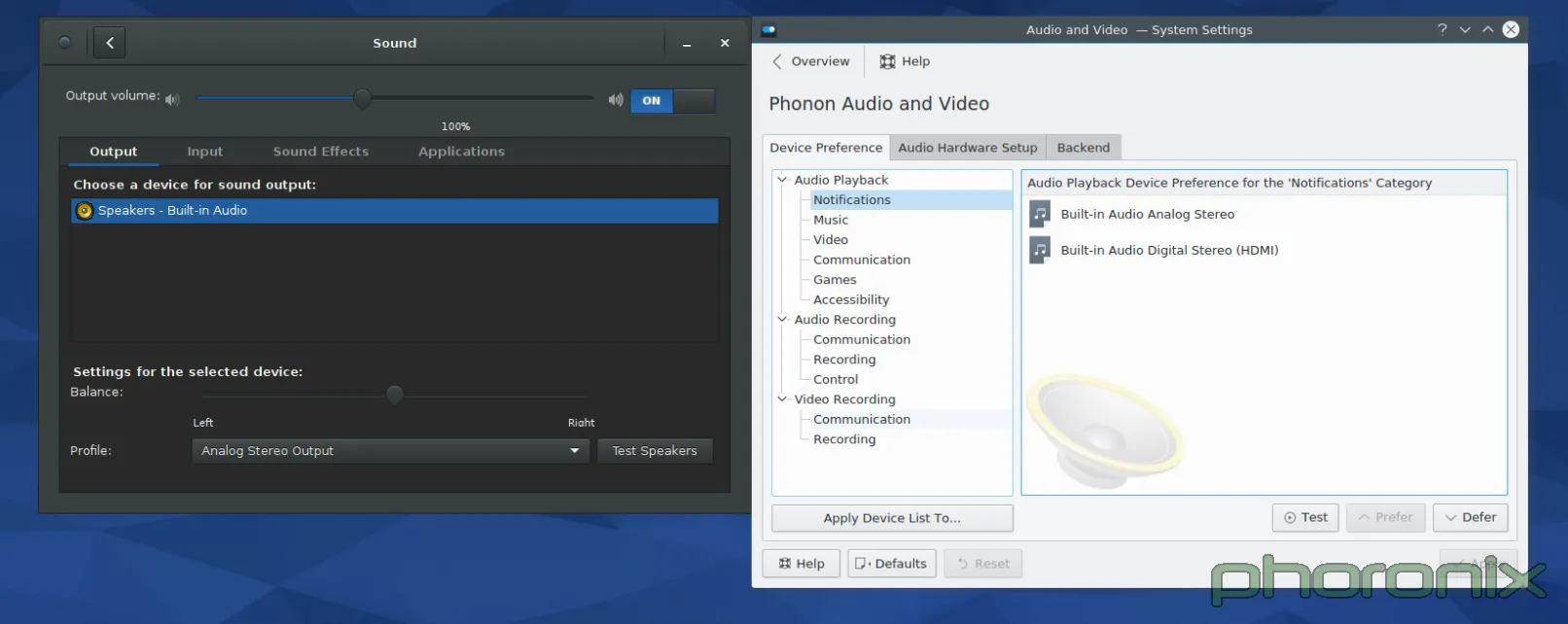

Enough about printers though... Next KDE System Setting that is up for my public stoning? Multimedia, Aka Phonon.

|

||||

|

||||

|

||||

|

||||

As always, Gnome's on the left, KDE is on the right. Let's just run through the Gnome setting first... The eyes go left to right, top to bottom, right? So let's do the same. First up: volume control slider. The blue hint against the empty bar with 100% clearly marked removes all confusion about which way is "volume up." Immediately after the slider is an easy On/Off toggle that functions a mute on/off. Points to Gnome for remembering what the volume was set to BEFORE I muted sound, and returning to that same level AFTER I press volume-up to un-mute. Kmixer, you amnesiac piece of crap, I wish I could say as much about you.

|

||||

|

||||

Moving on! Tabbed options for Output, Input and Applications? With per application volume controls within easy reach? Gnome I love you more and more with every passing second. Balance options, sound profiles, and a clearly marked "Test Speakers" option.

|

||||

|

||||

I'm not sure how this could have been implemented in a cleaner, more concise way. Yes, it's just a Gnome-ized Pavucontrol but I think that's the point. Pavucontrol got it mostly right to begin with, the Sound applet in Gnome Control Center just refines it slightly to make it even closer to perfect.

|

||||

|

||||

Phonon, you're up. And let me start by saying: What the fsck am I looking at? -I- get that I am looking at the priority list for the audio devices on the system, but the way it is presented is a bit of a nightmare. Also where are the things the user probably cares about? A priority list is a great thing to have, it SHOULD be available, but it's something the user messes with once or twice and then never touches again. It's not important, or common, enough to warrant being front and center. Where's the volume slider? Where's per application controls? The things that users will be using more frequently? Well.. those are under Kmix, a separate program, with its own settings and configuration... not under the System Settings... which kind of makes System Settings a bit of a misnomer. And in that same vein, Let's hop over to network settings.

|

||||

|

||||

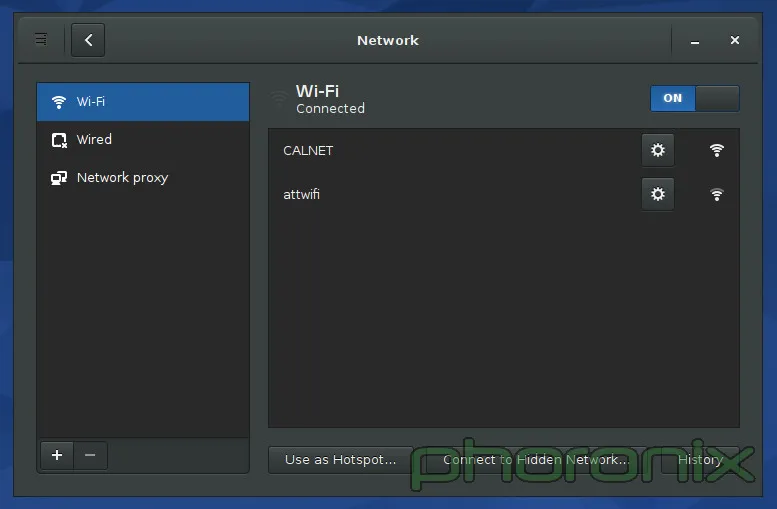

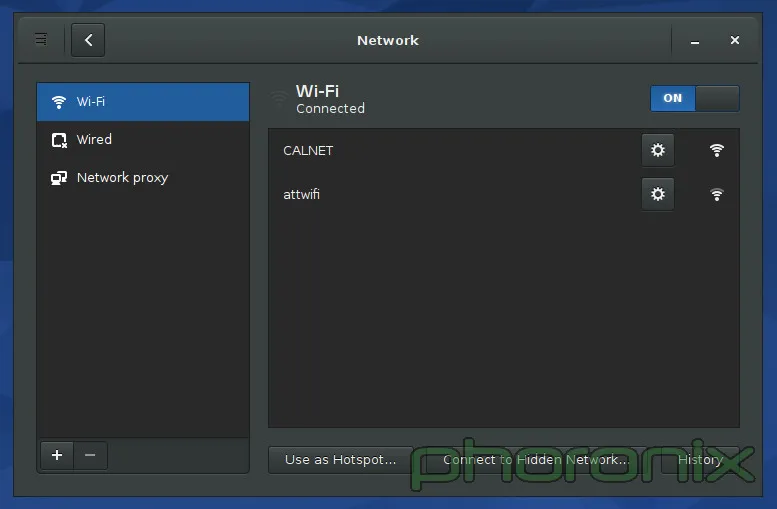

|

||||

|

||||

Presented above is the Gnome Network Settings. KDE's isn't included because of the reason I'm about to hit on. If you go to KDE's System Settings and hit any of the three options under the "Network" Section you get tons of options: Bluetooth settings, default username and password for Samba shares (Seriously, "Connectivity" only has 2 options: Username and password for SMB shares. How the fsck does THAT deserve the all-inclusive title "Connectivity"?), controls for Browser Identification (which only work for Konqueror...a dead project), proxy settings, etc... Where's my wifi settings? They aren't there. Where are they? Well, they are in the network applet's private settings... not under Network Settings...

|

||||

|

||||

KDE, you're killing me. You have "System Settings" USE IT!

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.phoronix.com/scan.php?page=article&item=gnome-week-editorial&num=4

|

||||

|

||||

作者:Eric Griffith

|

||||

译者:[译者ID](https://github.com/译者ID)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

@ -1,40 +0,0 @@

|

||||

Translating by XLCYun.

|

||||

A Week With GNOME As My Linux Desktop: What They Get Right & Wrong - Page 5 - Conclusion

|

||||

================================================================================

|

||||

### User Experience and Closing Thoughts ###

|

||||

|

||||

When Gnome 2.x and KDE 4.x were going head to head.. I jumped between the two quite happily. Some things I loved, some things I hated, but over all they were both a pleasure to use. Then Gnome 3.x came around and all of the drama with Gnome Shell. I swore off Gnome and avoided it every chance I could. It wasn't user friendly, it was non-intuitive, it broke an establish paradigm in preparation for tablet's taking over the world... A future that, judging from the dropping sales of tablets, will never come.

|

||||

|

||||

Eight releases of Gnome 3 later and the unimaginable happened. Gnome got user friendly. Gnome got intuitive. Is it perfect? Of course not. I still hate the paradigm it tries to push, I hate how it tries to force a work flow onto me, but both of those things can be gotten used to with time and patience. Once you have managed to look past Gnome Shell's alien appearance and you start interacting with it and the other parts of Gnome (Control Center especially) you see what Gnome has definitely gotten right: the little things. The attention to detail.

|

||||

|

||||

People can adapt to new paradigms, people can adapt to new work flows-- the iPhone and iPad proved that-- but what will always bother them are the paper cuts.

|

||||

|

||||

Which brings up an important distinction between KDE and Gnome. Gnome feels like a product. It feels like a singular experience. When you use it, it feels like it is complete and that everything you need is at your fingertips. It feel's like THE Linux desktop in the same way that Windows or OS X have THE desktop experience: what you need is there and it was all written by the same guys working on the same team towards the same goal. Hell, even an application prompting for sudo access feels like an intentional part of the desktop under Gnome, much the way that it is under Windows. In KDE it's just some random-looking window popup that any application could have created. It doesn't feel like a part of the system stopping and going "Hey! Something has requested administrative rights! Do you want to let it go through?" in an official capacity.

|

||||

|

||||

KDE doesn't feel like cohesive experience. KDE doesn't feel like it has a direction its moving in, it doesn't feel like a full experience. KDE feels like its a bunch of pieces that are moving in a bunch of different directions, that just happen to have a shared toolkit beneath them. If that's what the developers are happy with, then fine, good for them, but if the developers still have the hope of offering the best experience possible then the little stuff needs to matter. The user experience and being intuitive needs to be at the forefront of every single application, there needs to be a vision of what KDE wants to offer -and- how it should look.

|

||||

|

||||

Is there anything stopping me from using Gnome Disks under KDE? Rhythmbox? Evolution? Nope. Nope. Nope. But that misses the point. Gnome and KDE both market themselves as "Desktop Environments." They are supposed to be full -environments-, that means they all the pieces come and fit together, that you use that environment's tools because they are saying "We support everything you need to have a full desktop." Honestly? Only Gnome seems to fit the bill of being complete. KDE feel's half-finished when it comes to "coming together" part, let alone offering everything you need for a "full experience". There's no counterpart to Gnome Disks-- kpartitionmanager prompts for root. No "First Time User" run through, it just now got a user manager in Kubuntu. Hell, Gnome even provides a Maps, Notes, Calendar and Clock application. Do all of these applications matter 100%? No, of course not. But the fact that Gnome has them helps to push the idea that Gnome is a full and complete experience.

|

||||

|

||||

My complaints about KDE are not impossible to fix, not by a long shot. But it requires people to care. It requires developers to take pride in their work beyond just function-- form counts for a whole hell of a lot. Don't take away the user's ability to configure things-- the lack of configuration is one of my biggest gripes with GNOME 3.x, but don't use "Well you can configure it however you want," as an excuse for not providing sane defaults. The defaults are what users are going to see, they are what the users are going to judge from the first moment they open your application. Make it a good impression.

|

||||

|

||||

I know the KDE developers know design matters, that is WHY the Visual Design Group exists, but it feels like they aren't using the VDG to their fullest. And therein lies KDE's hamartia. It's not that KDE can't be complete, it's not that it can't come together and fix the downfalls, it just that they haven't. They aimed for the bulls eye... but they missed.

|

||||

|

||||

And before anyone says it... Don't say "Patches are welcome." Because while I can happily submit patches for the individual annoyances more will just keep coming as developers keep on their marry way of doing things in non-intuitive ways. This isn't about Muon not being center-aligned. This isn't about Amarok having an ugly UI. This isn't about the volume and brightness pop-up notifiers taking up a large chunk of my screen real-estate every time I hit my hotkeys (seriously, someone shrink those things).

|

||||

|

||||

This is about a mentality of apathy, this is about developers apparently not thinking things through when they make the UI for their applications. Everything the KDE Community does works fine. Amarok plays music. Dragon Player plays videos. Kwin / Qt & kdelibs is seemingly more power efficient than Mutter / gtk (according to my battery life times. Non-scientific testing). Those things are all well and good, and important.. but the presentation matters to. Arguably, the presentation matters the most because that is what user's see and interact with.

|

||||

|

||||

To KDE application developers... Get the VDG involved. Make every single 'core' application get its design vetted and approved by the VDG, have a UI/UX expert from the VDG go through the usage patterns and usage flow of your application to make sure its intuitive. Hell, even just posting a mock up to the VDG forums and asking for feedback would probably get you some nice pointers and feedback for whatever application you're working on. You have this great resource there, now actually use them.

|

||||

|

||||

I am not trying to sound ungrateful. I love KDE, I love the work and effort that volunteers put into giving Linux users a viable desktop, and an alternative to Gnome. And it is because I care that I write this article. Because I want to see KDE excel, I want to see it go further and farther than it has before. But doing that requires work on everyone's part, and it requires that people don't hold back criticism. It requires that people are honest about their interaction with the system and where it falls apart. If we can't give direct criticism, if we can't say "This sucks!" then it will never get better.

|

||||

|

||||

Will I still use Gnome after this week? Probably not, no. Gnome still trying to force a work flow on me that I don't want to follow or abide by, I feel less productive when I'm using it because it doesn't follow my paradigm. For my friends though, when they ask me "What desktop environment should I use?" I'm probably going to recommend Gnome, especially if they are less technical users who want things to "just work." And that is probably the most damning assessment I could make in regards to the current state of KDE.

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.phoronix.com/scan.php?page=article&item=gnome-week-editorial&num=5

|

||||

|

||||

作者:Eric Griffith

|

||||

译者:[译者ID](https://github.com/译者ID)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

@ -1,409 +0,0 @@

|

||||

translation by strugglingyouth

|

||||

How to monitor NGINX - Part 1

|

||||

================================================================================

|

||||

|

||||

|

||||

### What is NGINX? ###

|

||||

|

||||

[NGINX][1] (pronounced “engine X”) is a popular HTTP server and reverse proxy server. As an HTTP server, NGINX serves static content very efficiently and reliably, using relatively little memory. As a [reverse proxy][2], it can be used as a single, controlled point of access for multiple back-end servers or for additional applications such as caching and load balancing. NGINX is available as a free, open-source product or in a more full-featured, commercially distributed version called NGINX Plus.

|

||||

|

||||

NGINX can also be used as a mail proxy and a generic TCP proxy, but this article does not directly address NGINX monitoring for these use cases.

|

||||

|

||||

### Key NGINX metrics ###

|

||||

|

||||

By monitoring NGINX you can catch two categories of issues: resource issues within NGINX itself, and also problems developing elsewhere in your web infrastructure. Some of the metrics most NGINX users will benefit from monitoring include **requests per second**, which provides a high-level view of combined end-user activity; **server error rate**, which indicates how often your servers are failing to process seemingly valid requests; and **request processing time**, which describes how long your servers are taking to process client requests (and which can point to slowdowns or other problems in your environment).

|

||||

|

||||

More generally, there are at least three key categories of metrics to watch:

|

||||

|

||||

- Basic activity metrics

|

||||

- Error metrics

|

||||

- Performance metrics

|

||||

|

||||

Below we’ll break down a few of the most important NGINX metrics in each category, as well as metrics for a fairly common use case that deserves special mention: using NGINX Plus for reverse proxying. We will also describe how you can monitor all of these metrics with your graphing or monitoring tools of choice.

|

||||

|

||||

This article references metric terminology [introduced in our Monitoring 101 series][3], which provides a framework for metric collection and alerting.

|

||||

|

||||

#### Basic activity metrics ####

|

||||

|

||||

Whatever your NGINX use case, you will no doubt want to monitor how many client requests your servers are receiving and how those requests are being processed.

|

||||

|

||||

NGINX Plus can report basic activity metrics exactly like open-source NGINX, but it also provides a secondary module that reports metrics slightly differently. We discuss open-source NGINX first, then the additional reporting capabilities provided by NGINX Plus.

|

||||

|

||||

**NGINX**

|

||||

|

||||

The diagram below shows the lifecycle of a client connection and how the open-source version of NGINX collects metrics during a connection.

|

||||

|

||||

|

||||

|

||||

Accepts, handled, and requests are ever-increasing counters. Active, waiting, reading, and writing grow and shrink with request volume.

|

||||

|

||||

注:表格

|

||||

<table>

|

||||

<colgroup>

|

||||

<col style="text-align: left;">

|

||||

<col style="text-align: left;">

|

||||

<col style="text-align: left;"> </colgroup>

|

||||

<thead>

|

||||

<tr>

|

||||

<th style="text-align: left;"><strong>Name</strong></th>

|

||||

<th style="text-align: left;"><strong>Description</strong></th>

|

||||

<th style="text-align: left;"><strong><a target="_blank" href="https://www.datadoghq.com/blog/monitoring-101-collecting-data/">Metric type</a></strong></th>

|

||||

</tr>

|

||||

</thead>

|

||||

<tbody>

|

||||

<tr>

|

||||

<td style="text-align: left;">accepts</td>

|

||||

<td style="text-align: left;">Count of client connections attempted by NGINX</td>

|

||||

<td style="text-align: left;">Resource: Utilization</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td style="text-align: left;">handled</td>

|

||||

<td style="text-align: left;">Count of successful client connections</td>

|

||||

<td style="text-align: left;">Resource: Utilization</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td style="text-align: left;">active</td>

|

||||

<td style="text-align: left;">Currently active client connections</td>

|

||||

<td style="text-align: left;">Resource: Utilization</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td style="text-align: left;">dropped (calculated)</td>

|

||||

<td style="text-align: left;">Count of dropped connections (accepts – handled)</td>

|

||||

<td style="text-align: left;">Work: Errors*</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td style="text-align: left;">requests</td>

|

||||

<td style="text-align: left;">Count of client requests</td>

|

||||

<td style="text-align: left;">Work: Throughput</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td colspan="3" style="text-align: left;">*<em>Strictly speaking, dropped connections is <a target="_blank" href="https://www.datadoghq.com/blog/monitoring-101-collecting-data/#resource-metrics">a metric of resource saturation</a>, but since saturation causes NGINX to stop servicing some work (rather than queuing it up for later), “dropped” is best thought of as <a target="_blank" href="https://www.datadoghq.com/blog/monitoring-101-collecting-data/#work-metrics">a work metric</a>.</em></td>

|

||||

</tr>

|

||||

</tbody>

|

||||

</table>

|

||||

|

||||

The **accepts** counter is incremented when an NGINX worker picks up a request for a connection from the OS, whereas **handled** is incremented when the worker actually gets a connection for the request (by establishing a new connection or reusing an open one). These two counts are usually the same—any divergence indicates that connections are being **dropped**, often because a resource limit, such as NGINX’s [worker_connections][4] limit, has been reached.

|

||||

|

||||

Once NGINX successfully handles a connection, the connection moves to an **active** state, where it remains as client requests are processed:

|

||||

|

||||

Active state

|

||||

|

||||

- **Waiting**: An active connection may also be in a Waiting substate if there is no active request at the moment. New connections can bypass this state and move directly to Reading, most commonly when using “accept filter” or “deferred accept”, in which case NGINX does not receive notice of work until it has enough data to begin working on the response. Connections will also be in the Waiting state after sending a response if the connection is set to keep-alive.

|

||||

- **Reading**: When a request is received, the connection moves out of the waiting state, and the request itself is counted as Reading. In this state NGINX is reading a client request header. Request headers are lightweight, so this is usually a fast operation.

|

||||

- **Writing**: After the request is read, it is counted as Writing, and remains in that state until a response is returned to the client. That means that the request is Writing while NGINX is waiting for results from upstream systems (systems “behind” NGINX), and while NGINX is operating on the response. Requests will often spend the majority of their time in the Writing state.

|

||||

|

||||

Often a connection will only support one request at a time. In this case, the number of Active connections == Waiting connections + Reading requests + Writing requests. However, the newer SPDY and HTTP/2 protocols allow multiple concurrent requests/responses to be multiplexed over a connection, so Active may be less than the sum of Waiting, Reading, and Writing. (As of this writing, NGINX does not support HTTP/2, but expects to add support during 2015.)

|

||||

|

||||

**NGINX Plus**

|

||||

|

||||

As mentioned above, all of open-source NGINX’s metrics are available within NGINX Plus, but Plus can also report additional metrics. The section covers the metrics that are only available from NGINX Plus.

|

||||

|

||||

|

||||

|

||||

Accepted, dropped, and total are ever-increasing counters. Active, idle, and current track the current number of connections or requests in each of those states, so they grow and shrink with request volume.

|

||||

|

||||

注:表格

|

||||

<table>

|

||||

<colgroup>

|

||||

<col style="text-align: left;">

|

||||

<col style="text-align: left;">

|

||||

<col style="text-align: left;"> </colgroup>

|

||||

<thead>

|

||||

<tr>

|

||||

<th style="text-align: left;"><strong>Name</strong></th>

|

||||

<th style="text-align: left;"><strong>Description</strong></th>

|

||||

<th style="text-align: left;"><strong><a target="_blank" href="https://www.datadoghq.com/blog/monitoring-101-collecting-data/">Metric type</a></strong></th>

|

||||

</tr>

|

||||

</thead>

|

||||

<tbody>

|

||||

<tr>

|

||||

<td style="text-align: left;">accepted</td>

|

||||

<td style="text-align: left;">Count of client connections attempted by NGINX</td>

|

||||

<td style="text-align: left;">Resource: Utilization</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td style="text-align: left;">dropped</td>

|

||||

<td style="text-align: left;">Count of dropped connections</td>

|

||||

<td style="text-align: left;">Work: Errors*</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td style="text-align: left;">active</td>

|

||||

<td style="text-align: left;">Currently active client connections</td>

|

||||

<td style="text-align: left;">Resource: Utilization</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td style="text-align: left;">idle</td>

|

||||

<td style="text-align: left;">Client connections with zero current requests</td>

|

||||

<td style="text-align: left;">Resource: Utilization</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td style="text-align: left;">total</td>

|

||||

<td style="text-align: left;">Count of client requests</td>

|

||||

<td style="text-align: left;">Work: Throughput</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td colspan="3" style="text-align: left;">*<em>Strictly speaking, dropped connections is a metric of resource saturation, but since saturation causes NGINX to stop servicing some work (rather than queuing it up for later), “dropped” is best thought of as a work metric.</em></td>

|

||||

</tr>

|

||||

</tbody>

|

||||

</table>

|

||||

|

||||

The **accepted** counter is incremented when an NGINX Plus worker picks up a request for a connection from the OS. If the worker fails to get a connection for the request (by establishing a new connection or reusing an open one), then the connection is dropped and **dropped** is incremented. Ordinarily connections are dropped because a resource limit, such as NGINX Plus’s [worker_connections][4] limit, has been reached.

|

||||

|

||||

**Active** and **idle** are the same as “active” and “waiting” states in open-source NGINX as described [above][5], with one key exception: in open-source NGINX, “waiting” falls under the “active” umbrella, whereas in NGINX Plus “idle” connections are excluded from the “active” count. **Current** is the same as the combined “reading + writing” states in open-source NGINX.

|

||||

|

||||

**Total** is a cumulative count of client requests. Note that a single client connection can involve multiple requests, so this number may be significantly larger than the cumulative number of connections. In fact, (total / accepted) yields the average number of requests per connection.

|

||||

|

||||

**Metric differences between Open-Source and Plus**

|

||||

|

||||

注:表格

|

||||

<table>

|

||||

<colgroup>

|

||||

<col style="text-align: left;">

|

||||

<col style="text-align: left;"> </colgroup>

|

||||

<thead>

|

||||

<tr>

|

||||

<th style="text-align: left;">NGINX (open-source)</th>

|

||||

<th style="text-align: left;">NGINX Plus</th>

|

||||

</tr>

|

||||

</thead>

|

||||

<tbody>

|

||||

<tr>

|

||||

<td style="text-align: left;">accepts</td>

|

||||

<td style="text-align: left;">accepted</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td style="text-align: left;">dropped must be calculated</td>

|

||||

<td style="text-align: left;">dropped is reported directly</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td style="text-align: left;">reading + writing</td>

|

||||

<td style="text-align: left;">current</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td style="text-align: left;">waiting</td>

|

||||

<td style="text-align: left;">idle</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td style="text-align: left;">active (includes “waiting” states)</td>

|

||||

<td style="text-align: left;">active (excludes “idle” states)</td>

|

||||

</tr>

|

||||

<tr>

|

||||