[@TimSweeneyEpic][8] will probably like this 😊

+>

+> ![pic.twitter.com/7mt9fXt7TH][9]

+>

+> — Lutris Gaming (@LutrisGaming) [April 17, 2019][10]

+

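+As an avid gamer and Linux user, I jumped on this news right away and installed Lutris to run the Epic Games Store.

+

+**Note:** I used [Ubuntu 19.04][11] to test how games run on Linux.

+

+### Using the Epic Games Store on Linux with Lutris

+

+To install the Epic Games Store on your Linux system, you need Lutris together with its prerequisites, Wine and Python 3. So first [install Wine on Ubuntu][12] (or on whichever Linux distribution you use), and then [download Lutris from its official website][13].

+

+#### Installing the Epic Games Store

+

+Once Lutris is installed, simply launch it.

+

+When I tried this, I ran into a problem (one that did not show up when I launched Lutris from the GUI). Launching it by typing `lutris` in a terminal produced the error shown below:

+

+![][15]

+

+Thanks to Abhishek, I learned that this is a common issue (you can look it up on [GitHub][16]).

+

+To work around it, I had to run the following command in the terminal: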

+

+```

+export LC_ALL=C

+```

+

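+If you hit the same problem, running this command lets Lutris start normally.

+

+**Note**: You have to run this command every time you launch Lutris. It is therefore best to add it to your `.bashrc` file or to your list of environment variables.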
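
A minimal sketch of making the workaround permanent, assuming Bash is your shell and reads `~/.bashrc` at startup. The helper name `add_line_once` is made up for illustration; it appends a line to a file only if that exact line is not already there, so running it twice does no harm.

```shell
# Hypothetical helper: append a line to a file unless it is already present.
add_line_once() {
    line=$1
    file=$2
    touch "$file"                              # make sure the file exists
    # -q: quiet, -x: match the whole line, -F: fixed string (no regex)
    grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Usage (assumption: Bash reads ~/.bashrc for interactive shells):
#   add_line_once 'export LC_ALL=C' "$HOME/.bashrc"
```

Exporting `LC_ALL=C` in `.bashrc` changes the locale for every program started from that shell. If that is more than you want, prefixing the single command (`LC_ALL=C lutris`) limits the setting to Lutris alone.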

+

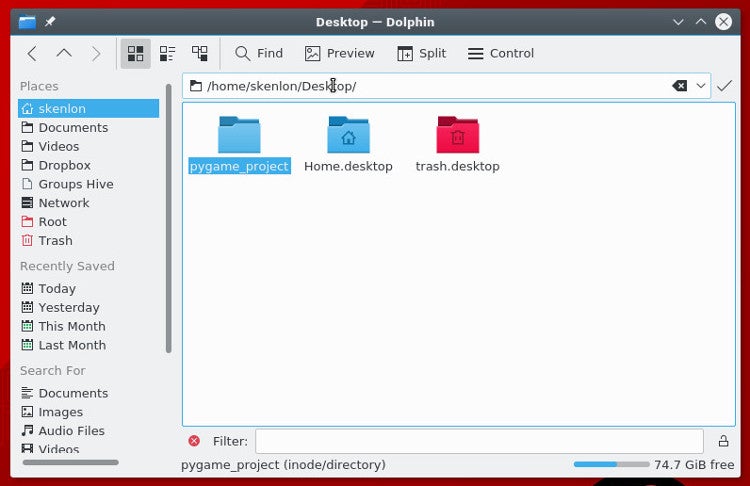

+Once that is done, launch Lutris and search for “Epic Games Store”; you should see something like this:

+

+![Epic Games Store in Lutris][17]

+

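+I had already installed it when I took this screenshot; on your system you will see an “Install” option instead, and Lutris will automatically ask whether to install the packages it needs. Just keep going and the installation will complete. That is all there is to it, no black magic required.

+

+#### Playing a game from the Epic Games Store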

+

+![Epic Games Store][18]

+

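+Now that the Epic Games Store is installed on Linux through Lutris, launch it and log in to your account to get started.

+

+But does it really work?

+

+*Yes, the Epic Games Store runs.* **But none of the games are playable.** (LCTT translator's note: don't be upset, see the further explanation at the end of the article!)

+

+Well, I have not tried everything, but I grabbed a free game (Transistor, a turn-based ARPG) to check whether it works.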

+

+![Transistor – Epic Games Store][19]

+

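+Unfortunately, the game did not start. The launcher showed “Running”, but nothing happened.

+

+So far I am not aware of any fix. If I find one, I will do my best to keep you updated.

+

+### Wrapping up

+

+It is good news that tools like Lutris are improving the gaming scene on Linux. Still, there is a lot of work left to do.

+

+Getting games to run on Linux without any hiccups remains a challenge. You may hit a problem like the one I ran into, or something similar. But things are moving in the right direction, even if some issues remain.

+

+What do you think? Have you tried launching the Epic Games Store on Linux with Lutris? Let us know your thoughts in the comments below.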

+

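+### Addendum

+

+Transistor actually has a native Linux port. So far, the same is true of every game I have gotten from Epic. I will try to swallow my frustration, but because Epic only lets you play the games you bought through its store/launcher, it is impossible to play this native Linux game on a Linux machine using Lutris. This is just plain stupid. Steam has a native Linux launcher; it is not ideal, but it works. GOG lets you download your purchases from the website and play them on any platform you like. Its launcher is entirely optional.

+

+I am really annoyed by this, because the games in my Epic library would all run perfectly well on my laptop, and they are fun to play when I sit at my desktop. But that desktop is the only Windows machine I own...

+

+I knew there would be compatibility issues when I chose Linux, I have a Windows machine dedicated to gaming, and the games I got from Epic were all free, so I am merely voicing my displeasure here. Still, given that its two best-known competitors both manage it, Epic should offer some way to play native Linux ports on my Linux machine.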

+

+--------------------------------------------------------------------------------

+

+via: https://itsfoss.com/epic-games-lutris-linux/

+

+Author: [Ankush Das][a]

+Topic selection: [lujun9972][b]

+Translator: [Modrisco](https://github.com/Modrisco)

+Proofreader: [wxy](https://github.com/wxy)

+

+This article was originally compiled by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux China](https://linux.cn/)

+

+[a]: https://itsfoss.com/author/ankush/

+[b]: https://github.com/lujun9972

+[1]: https://linux.cn/article-7316-1.html

+[2]: https://linux.cn/article-10061-1.html

+[3]: https://linux.cn/article-10054-1.html

+[4]: https://lutris.net/

+[5]: https://itsfoss.com/wp-content/uploads/2019/04/epic-games-store-lutris-linux-800x450.png

+[6]: https://www.epicgames.com/store/en-US/

+[7]: https://twitter.com/EpicGames?ref_src=twsrc%5Etfw

+[8]: https://twitter.com/TimSweeneyEpic?ref_src=twsrc%5Etfw

+[9]: https://pbs.twimg.com/media/D4XkXafX4AARDkW?format=jpg&name=medium

+[10]: https://twitter.com/LutrisGaming/status/1118552969816018948?ref_src=twsrc%5Etfw

+[11]: https://itsfoss.com/ubuntu-19-04-release-features/

+[12]: https://itsfoss.com/install-latest-wine/

+[13]: https://lutris.net/downloads/

+[14]: https://itsfoss.com/ubuntu-mate-entroware/

+[15]: https://itsfoss.com/wp-content/uploads/2019/04/lutris-error.jpg

+[16]: https://github.com/lutris/lutris/issues/660

+[17]: https://itsfoss.com/wp-content/uploads/2019/04/lutris-epic-games-store-800x520.jpg

+[18]: https://itsfoss.com/wp-content/uploads/2019/04/epic-games-store-800x450.jpg

+[19]: https://itsfoss.com/wp-content/uploads/2019/04/transistor-game-epic-games-store-800x410.jpg

+[20]: https://itsfoss.com/skpe-alpha-linux/

diff --git a/published/20190423 How to identify same-content files on Linux.md b/published/20190423 How to identify same-content files on Linux.md

new file mode 100644

index 0000000000..6353fe86b3

--- /dev/null

+++ b/published/20190423 How to identify same-content files on Linux.md

@@ -0,0 +1,256 @@

+[#]: collector: (lujun9972)

+[#]: translator: (tomjlw)

+[#]: reviewer: (wxy)

+[#]: publisher: (wxy)

+[#]: url: (https://linux.cn/article-10955-1.html)

+[#]: subject: (How to identify same-content files on Linux)

+[#]: via: (https://www.networkworld.com/article/3390204/how-to-identify-same-content-files-on-linux.html#tk.rss_all)

+[#]: author: (Sandra Henry-Stocker https://www.networkworld.com/author/Sandra-Henry_Stocker/)

+

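+How to identify same-content files on Linux

+======

+> Copies of files sometimes represent a big waste of disk space and can cause confusion when you want to make updates. Here are six commands to help you identify these files.

+

+![Vinoth Chandar \(CC BY 2.0\)][1]

+

+In a recent post, we looked at [how to identify and locate files that are hard links][2] (i.e., that point to the same disk content and share inodes). In this post, we'll check out commands for finding files that have the same *content* but are not otherwise connected.

+

+Hard links are helpful because they allow files to exist in multiple places in the file system while taking up no additional disk space. Copies of files, on the other hand, sometimes represent a big waste of disk space and risk causing confusion when you want to make updates. In this post, we're going to look at several ways to identify these files.

+

+### Comparing files with the diff command

+

+Probably the easiest way to compare two files is to use the `diff` command. The output shows the differences between the two files. The `<` and `>` signs indicate whether the extra lines are in the first (`<`) or the second (`>`) file given as arguments. In this example, the extra lines are in `backup.html`.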

+

+```

+$ diff index.html backup.html

+2438a2439,2441

+>

+> That's all there is to report.

+>

+```

+

+If `diff` produces no output, the two files are identical.

+

+```

+$ diff home.html index.html

+$

+```
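
Because an empty `diff` means the files are identical, scripts usually test the exit status rather than the output. The sketch below uses `cmp -s`, which compares byte by byte, prints nothing, and returns 0 only when the files match. The function name `same_content` is an illustrative choice, not something from the article.

```shell
# Return success (0) if both files have identical content.
same_content() {
    cmp -s -- "$1" "$2"
}

# Usage:
#   if same_content home.html index.html; then
#       echo "home.html and index.html are identical"
#   fi
```

`diff -q` can be used the same way: it exits with 0 when the files match and 1 when they differ.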

+

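+The only drawback of `diff` is that it compares just two files at a time, and you have to name the files to compare. Some of the commands in this post can find multiple duplicate files for you.

+

+### Using checksums

+

+The `cksum` (checksum) command computes a checksum for a file. A checksum is a mathematical reduction of the file's content to a lengthy number (such as 2819078353); `cksum` prints it followed by the file's size in bytes (228029 here). While checksums are not guaranteed to be unique, the chance of files with different content producing the same checksum is minuscule.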

+

+```

+$ cksum *.html

+2819078353 228029 backup.html

+4073570409 227985 home.html

+4073570409 227985 index.html

+```

+

+In the example above, you can see that the second and third files yield the same checksum and can be assumed to be identical.
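
Where the small chance of a `cksum` collision matters, a stronger hash can be used in the same way. The sketch below assumes GNU coreutils (`sha256sum`, and `uniq` with its `-w` and `--all-repeated` options); the function name `sha_dupes` is hypothetical. It prints only the files whose SHA-256 digest occurs more than once, with a blank line between groups.

```shell
# List groups of files in a directory that share a SHA-256 digest.
# Assumes GNU coreutils; a SHA-256 digest is 64 hex characters wide.
sha_dupes() {
    dir=${1:-.}
    find "$dir" -maxdepth 1 -type f -exec sha256sum {} + |
        LC_ALL=C sort |                        # bring equal digests together
        uniq -w64 --all-repeated=separate      # compare the digest column only
}

# Usage:
#   sha_dupes .
```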

+

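+### Using the find command

+

+While the `find` command has no option for finding duplicate files, it can be used to search for files by name or type and run the `cksum` command on each one. For example: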

+

+```

+$ find . -name "*.html" -exec cksum {} \;

+4073570409 227985 ./home.html

+2819078353 228029 ./backup.html

+4073570409 227985 ./index.html

+```
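
The `find` output above still has to be scanned by eye for matching checksums. As a sketch of automating that step with standard tools only, the function below (the name `cksum_dupes` is made up here) sorts the `cksum` lines and lets `awk` print every line whose checksum and size pair appears more than once. It assumes file names without embedded newlines.

```shell
# Print the cksum lines of all files under a directory whose
# (checksum, size) pair occurs more than once.
cksum_dupes() {
    dir=${1:-.}
    find "$dir" -type f -exec cksum {} + |
        LC_ALL=C sort |
        awk '
            { key = $1 " " $2 }                 # checksum + byte count
            key == prev {                       # same pair as the previous line
                if (!shown) print prevline      # print the first member once
                print
                shown = 1
                next
            }
            { prev = key; prevline = $0; shown = 0 }
        '
}

# Usage:
#   cksum_dupes ~/public_html
```

Matching checksums are a strong hint rather than proof, so a byte-by-byte comparison (for example with `cmp`) is a sensible last check before deleting anything.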

+

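+### Using the fslint command

+

+The `fslint` command can be used specifically to find duplicate files. Note that we give it a starting location. It can take some time to finish if it has to run through a large number of files. Notice how it lists the duplicate files and also looks for other problems, such as empty directories and bad IDs.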

+

+```

+$ fslint .

+-----------------------------------file name lint

+-------------------------------Invalid utf8 names

+-----------------------------------file case lint

+----------------------------------DUPlicate files <==

+home.html

+index.html

+-----------------------------------Dangling links

+--------------------redundant characters in links

+------------------------------------suspect links

+--------------------------------Empty Directories

+./.gnupg

+----------------------------------Temporary Files

+----------------------duplicate/conflicting Names

+------------------------------------------Bad ids

+-------------------------Non Stripped executables

+```

+

+You may have to install `fslint` on your system. You may also have to add it to your command search path:

+

+```

+$ export PATH=$PATH:/usr/share/fslint/fslint

+```

+

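+### Using the rdfind command

+

+The `rdfind` command also looks for duplicate (same-content) files. The name stands for “redundant data find”, and the command can determine, based on file dates, which files are the originals. That is helpful if you choose to delete duplicates, since it removes the newer files.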

+

+```

+$ rdfind ~

+Now scanning "/home/shark", found 12 files.

+Now have 12 files in total.

+Removed 1 files due to nonunique device and inode.

+Total size is 699498 bytes or 683 KiB

+Removed 9 files due to unique sizes from list.2 files left.

+Now eliminating candidates based on first bytes:removed 0 files from list.2 files left.

+Now eliminating candidates based on last bytes:removed 0 files from list.2 files left.

+Now eliminating candidates based on sha1 checksum:removed 0 files from list.2 files left.

+It seems like you have 2 files that are not unique

+Totally, 223 KiB can be reduced.

+Now making results file results.txt

+```

+

+You can also run this command in `dryrun` mode (in other words, only reporting the changes that would otherwise be made).

+

+```

+$ rdfind -dryrun true ~

+(DRYRUN MODE) Now scanning "/home/shark", found 12 files.

+(DRYRUN MODE) Now have 12 files in total.

+(DRYRUN MODE) Removed 1 files due to nonunique device and inode.

+(DRYRUN MODE) Total size is 699352 bytes or 683 KiB

+Removed 9 files due to unique sizes from list.2 files left.

+(DRYRUN MODE) Now eliminating candidates based on first bytes:removed 0 files from list.2 files left.

+(DRYRUN MODE) Now eliminating candidates based on last bytes:removed 0 files from list.2 files left.

+(DRYRUN MODE) Now eliminating candidates based on sha1 checksum:removed 0 files from list.2 files left.

+(DRYRUN MODE) It seems like you have 2 files that are not unique

+(DRYRUN MODE) Totally, 223 KiB can be reduced.

+(DRYRUN MODE) Now making results file results.txt

+```

+

+The `rdfind` command also offers options such as ignoring empty files (`-ignoreempty`) and following symbolic links (`-followsymlinks`). See the man page for explanations.

+

+```

+-ignoreempty ignore empty files

+-minsize ignore files smaller than specified size

+-followsymlinks follow symbolic links

+-removeidentinode remove files referring to identical inode

+-checksum identify checksum type to be used

+-deterministic determines how to sort files

+-makesymlinks turn duplicate files into symbolic links

+-makehardlinks replace duplicate files with hard links

+-makeresultsfile create a results file in the current directory

+-outputname provide name for results file

+-deleteduplicates delete/unlink duplicate files

+-sleep set sleep time between reading files (milliseconds)

+-n, -dryrun display what would have been done, but don't do it

+```

+

+Note that `rdfind` provides the `-deleteduplicates true` setting for deleting copies. Hopefully the command's small quirk of syntax won't irritate you. ;-)

+

+```

+$ rdfind -deleteduplicates true .

+...

+Deleted 1 files. <==

+```

+

+You will probably have to install `rdfind` on your system. It is a good idea to experiment with it to get comfortable with how it works.
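
To make concrete what replacing a duplicate with a hard link amounts to (the idea behind the `-makehardlinks` option listed above), here is a hand-rolled sketch using only standard tools. It is not how `rdfind` is implemented. `link_if_same` is a hypothetical helper, and it assumes that both files live on the same file system (hard links cannot cross file systems) and that your shell's `test` supports `-ef`, as bash and dash do.

```shell
# Replace "$2" with a hard link to "$1", but only if their content is identical.
link_if_same() {
    keep=$1
    dup=$2
    [ "$keep" -ef "$dup" ] && return 0      # already the same inode, nothing to do
    cmp -s -- "$keep" "$dup" || return 1    # content differs: leave both alone
    ln -f -- "$keep" "$dup"                 # swap the copy for a hard link
}

# Usage:
#   link_if_same home.html index.html
```

After a successful call both names refer to one inode, so the data is stored once and an edit made through either name shows up under both.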

+

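+### Using the fdupes command

+

+The `fdupes` command also makes it easy to identify duplicate files. It provides a large number of useful options as well, such as `-r` for recursion. In this example, it groups duplicate files together like this: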

+

+```

+$ fdupes ~

+/home/shs/UPGRADE

+/home/shs/mytwin

+

+/home/shs/lp.txt

+/home/shs/lp.man

+

+/home/shs/penguin.png

+/home/shs/penguin0.png

+/home/shs/hideme.png

+```

+

+Here is an example using recursion. Note that many of the duplicate files are important (users' `.bashrc` and `.profile` files) and should not be deleted.

+

+```

+# fdupes -r /home

+/home/shark/home.html

+/home/shark/index.html

+

+/home/dory/.bashrc

+/home/eel/.bashrc

+

+/home/nemo/.profile

+/home/dory/.profile

+/home/shark/.profile

+

+/home/nemo/tryme

+/home/shs/tryme

+

+/home/shs/arrow.png

+/home/shs/PNGs/arrow.png

+

+/home/shs/11/files_11.zip

+/home/shs/ERIC/file_11.zip

+

+/home/shs/penguin0.jpg

+/home/shs/PNGs/penguin.jpg

+/home/shs/PNGs/penguin0.jpg

+

+/home/shs/Sandra_rotated.png

+/home/shs/PNGs/Sandra_rotated.png

+```

+

+Many of the `fdupes` command's options are listed below. Use the `fdupes -h` command or read the man page for more details.

+

+```

+-r --recurse recurse

+-R --recurse: recurse through specified directories

+-s --symlinks follow symlinked directories

+-H --hardlinks treat hard links as duplicates

+-n --noempty ignore empty files

+-f --omitfirst omit the first file in each set of matches

+-A --nohidden ignore hidden files

+-1 --sameline list matches on a single line

+-S --size show size of duplicate files

+-m --summarize summarize duplicate files information

+-q --quiet hide progress indicator

+-d --delete prompt user for files to preserve

+-N --noprompt when used with --delete, preserve the first file in set

+-I --immediate delete duplicates as they are encountered

+-p --permissions don't consider files with different owner/group or

+ permission bits as duplicates

+-o --order=WORD order files according to specification

+-i --reverse reverse order while sorting

+-v --version display fdupes version

+-h --help displays help

+```

+

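+The `fdupes` command is another one that you're likely to have to install and work with for a while before you are familiar with its many options.

+

+### Wrap-up

+

+Linux systems provide a good selection of tools for locating and (potentially) removing duplicate files, along with options for where you want to run your search and what you want to do with the duplicate files when you find them.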

+

+--------------------------------------------------------------------------------

+

+via: https://www.networkworld.com/article/3390204/how-to-identify-same-content-files-on-linux.html#tk.rss_all

+

+Author: [Sandra Henry-Stocker][a]

+Topic selection: [lujun9972][b]

+Translator: [tomjlw](https://github.com/tomjlw)

+Proofreader: [wxy](https://github.com/wxy)

+

+This article was originally compiled by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux China](https://linux.cn/)

+

+[a]: https://www.networkworld.com/author/Sandra-Henry_Stocker/

+[b]: https://github.com/lujun9972

+[1]: https://images.idgesg.net/images/article/2019/04/chairs-100794266-large.jpg

+[2]: https://www.networkworld.com/article/3387961/how-to-identify-duplicate-files-on-linux.html

+[3]: https://www.youtube.com/playlist?list=PL7D2RMSmRO9J8OTpjFECi8DJiTQdd4hua

+[4]: https://www.networkworld.com/article/3242170/linux/invaluable-tips-and-tricks-for-troubleshooting-linux.html

+[5]: https://www.facebook.com/NetworkWorld/

+[6]: https://www.linkedin.com/company/network-world

+

diff --git a/published/20190427 Monitoring CPU and GPU Temperatures on Linux.md b/published/20190427 Monitoring CPU and GPU Temperatures on Linux.md

new file mode 100644

index 0000000000..1ba2cb1a9b

--- /dev/null

+++ b/published/20190427 Monitoring CPU and GPU Temperatures on Linux.md

@@ -0,0 +1,146 @@

+[#]: collector: (lujun9972)

+[#]: translator: (cycoe)

+[#]: reviewer: (wxy)

+[#]: publisher: (wxy)

+[#]: url: (https://linux.cn/article-10929-1.html)

+[#]: subject: (Monitoring CPU and GPU Temperatures on Linux)

+[#]: via: (https://itsfoss.com/monitor-cpu-gpu-temp-linux/)

+[#]: author: (It's FOSS Community https://itsfoss.com/author/itsfoss/)

+

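+Monitoring CPU and GPU Temperatures on Linux

+======

+

+> This article discusses two simple ways of monitoring CPU and GPU temperatures from the Linux command line.

+

+Thanks to [Steam][1] (including [Steam Play][2], aka Proton) and other developments, GNU/Linux is becoming the gaming platform of choice for more and more computer users. A good number of users also turn to GNU/Linux for resource-hungry computing tasks such as [video editing][3] or graphic design (Kdenlive and [Blender][4] are good examples of programs for these).

+

+Whether or not you are one of those users, you have surely wondered how hot your computer's CPU and GPU can get (even more so if you overclock). If so, keep reading. We will look at two very simple commands for monitoring CPU and GPU temperatures.

+

+My setup includes a [Slimbook Kymera][5] and two displays (a TV set and a PC monitor), which lets me use one for playing games and the other for keeping an eye on the temperatures. Also, since I use [Zorin OS][6], I will focus on Ubuntu and Ubuntu derivatives.

+

+To monitor the behaviour of both the CPU and the GPU, we will use the handy `watch` command to get readings that refresh dynamically every few seconds.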

+

+![][7]

+

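+### Monitoring CPU temperature in Linux

+

+For CPU temperatures, we will combine `watch` with the `sensors` command. An interesting article about [a GUI version of this tool][8] has already been published on It's FOSS. Here, however, we will use the terminal version: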

+

+```

+watch -n 2 sensors

+```

+

+`watch` guarantees that the readings are refreshed every 2 seconds (this interval can, of course, be changed to whatever best fits your needs):

+

+```

+Every 2,0s: sensors

+

+iwlwifi-virtual-0

+Adapter: Virtual device

+temp1: +39.0°C

+

+acpitz-virtual-0

+Adapter: Virtual device

+temp1: +27.8°C (crit = +119.0°C)

+temp2: +29.8°C (crit = +119.0°C)

+

+coretemp-isa-0000

+Adapter: ISA adapter

+Package id 0: +37.0°C (high = +82.0°C, crit = +100.0°C)

+Core 0: +35.0°C (high = +82.0°C, crit = +100.0°C)

+Core 1: +35.0°C (high = +82.0°C, crit = +100.0°C)

+Core 2: +33.0°C (high = +82.0°C, crit = +100.0°C)

+Core 3: +36.0°C (high = +82.0°C, crit = +100.0°C)

+Core 4: +37.0°C (high = +82.0°C, crit = +100.0°C)

+Core 5: +35.0°C (high = +82.0°C, crit = +100.0°C)

+```

+

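+Among other things, we get the following information:

+

+ * There are 6 cores in use (Core 0 through Core 5), and the current highest temperature is 37.0℃.

+ * Temperatures above 82.0℃ are considered high.

+ * Temperatures above 100.0℃ are considered critical.

+

+From the values above, we can conclude that my computer's workload is very light at the moment.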
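
As a small illustration of reading those numbers programmatically, the sketch below pulls the hottest per-core reading out of `sensors` output with `awk`. It assumes the `Core N: +35.0°C ...` line format shown in the sample output, which varies between chips and drivers, and `max_core_temp` is a name chosen here for illustration.

```shell
# Read `sensors` output on stdin and print the highest "Core N" temperature.
max_core_temp() {
    # LC_ALL=C forces "." as the decimal point regardless of the user's locale
    LC_ALL=C awk '
        /^Core [0-9]+:/ {
            t = $3                      # e.g. "+35.0°C"
            gsub(/[^0-9.]/, "", t)      # keep digits and the decimal point
            if (t + 0 > max) max = t + 0
        }
        END { printf "%.1f\n", max }
    '
}

# Usage:
#   sensors | max_core_temp
#   watch -n 2 'sensors | grep "^Core"'   # or just watch the per-core lines
```

The function prints `0.0` when no `Core` lines are present, so treat that value as "no reading" rather than as a temperature.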

+

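+### Monitoring GPU temperature in Linux

+

+Let us turn to the graphics card now. I have never used an AMD graphics card, so I will focus on Nvidia ones. The first thing to do is download the appropriate current driver through [additional drivers in Ubuntu][10].

+

+On Ubuntu (and likewise on Zorin or Linux Mint), go to “Software & Updates > Additional Drivers” and select the most recent driver available. Alternatively, you can add or enable the official graphics drivers PPA (either from the command line or via “Software & Updates > Other Software”). After installing the driver, you will have at your disposal the “Nvidia X Server” GUI application along with the command line utility `nvidia-smi` (Nvidia System Management Interface). So we will use `watch` together with `nvidia-smi`: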

+

+```

+watch -n 2 nvidia-smi

+```

+

+As with the CPU, we get updated readings every two seconds:

+

+```

+Every 2,0s: nvidia-smi

+

+Fri Apr 19 20:45:30 2019

++-----------------------------------------------------------------------------+

+| Nvidia-SMI 418.56 Driver Version: 418.56 CUDA Version: 10.1 |

+|-------------------------------+----------------------+----------------------+

+| GPU Name Persistence-M| Bus-Id Disp.A | Volatile Uncorr. ECC |

+| Fan Temp Perf Pwr:Usage/Cap| Memory-Usage | GPU-Util Compute M. |

+|===============================+======================+======================|

+| 0 GeForce GTX 106... Off | 00000000:01:00.0 On | N/A |

+| 0% 54C P8 10W / 120W | 433MiB / 6077MiB | 4% Default |

++-------------------------------+----------------------+----------------------+

+

++-----------------------------------------------------------------------------+

+| Processes: GPU Memory |

+| GPU PID Type Process name Usage |

+|=============================================================================|

+| 0 1557 G /usr/lib/xorg/Xorg 190MiB |

+| 0 1820 G /usr/bin/gnome-shell 174MiB |

+| 0 7820 G ...equest-channel-token=303407235874180773 65MiB |

++-----------------------------------------------------------------------------+

+```

+

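+From this table we learn the following about the graphics card:

+

+ * It is using driver version 418.56 (`nvidia-smi` ships with Nvidia's proprietary driver, not an open source one).

+ * The card's current temperature is 54℃, and the fan is running at 0% of its capacity.

+ * Power consumption is very low: only 10W.

+ * Out of 6GB of VRAM (video random access memory), only 433MB is in use.

+ * The VRAM is being used by 3 processes, whose IDs are 1557, 1820 and 7820.

+

+Most of these facts and values clearly show that we are not playing any resource-hungry game or handling a heavy workload. Once we start playing a game, processing video, or doing similar tasks, the values will begin to rise.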
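
If only a few of those values are of interest, `nvidia-smi` can print them as CSV instead of the full table. The sketch below wraps the temperature query in a function. The name `gpu_temp` is an assumption made for this example, and the function simply fails when `nvidia-smi` is not installed.

```shell
# Print the GPU temperature in degrees Celsius, one line per GPU.
# Returns non-zero if nvidia-smi is not installed.
gpu_temp() {
    command -v nvidia-smi >/dev/null 2>&1 || return 1
    nvidia-smi --query-gpu=temperature.gpu --format=csv,noheader,nounits
}

# Usage:
#   gpu_temp
#   watch -n 2 nvidia-smi --query-gpu=temperature.gpu,fan.speed,power.draw --format=csv
```

The CSV form is easier to log or feed into other scripts than the boxed table, at the cost of losing the per-process memory list.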

+

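+#### Conclusion

+

+Even though there are GUI tools, I find these two commands very handy for checking on the hardware in real time.

+

+How will you use them? You can learn more about these tools by reading their man pages.

+

+Do you have other favourite tools? Share them with us in the comments ;).

+

+Have fun!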

+

+--------------------------------------------------------------------------------

+

+via: https://itsfoss.com/monitor-cpu-gpu-temp-linux/

+

+Author: [Alejandro Egea-Abellán][a]

+Topic selection: [lujun9972][b]

+Translator: [cycoe](https://github.com/cycoe)

+Proofreader: [wxy](https://github.com/wxy)

+

+This article was originally compiled by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux China](https://linux.cn/)

+

+[a]: https://itsfoss.com/author/itsfoss/

+[b]: https://github.com/lujun9972

+[1]: https://itsfoss.com/install-steam-ubuntu-linux/

+[2]: https://itsfoss.com/steam-play-proton/

+[3]: https://itsfoss.com/best-video-editing-software-linux/

+[4]: https://www.blender.org/

+[5]: https://slimbook.es/

+[6]: https://zorinos.com/

+[7]: https://itsfoss.com/wp-content/uploads/2019/04/monitor-cpu-gpu-temperature-linux-800x450.png

+[8]: https://itsfoss.com/check-laptop-cpu-temperature-ubuntu/

+[9]: https://itsfoss.com/best-command-line-games-linux/

+[10]: https://itsfoss.com/install-additional-drivers-ubuntu/

+[11]: https://itsfoss.com/review-googler-linux/

+[12]: https://itsfoss.com/wp-content/uploads/2019/04/EGEA-ABELLAN-Alejandro.jpg

diff --git a/published/20190428 Installing Budgie Desktop on Ubuntu -Quick Guide.md b/published/20190428 Installing Budgie Desktop on Ubuntu -Quick Guide.md

new file mode 100644

index 0000000000..457caaa69a

--- /dev/null

+++ b/published/20190428 Installing Budgie Desktop on Ubuntu -Quick Guide.md

@@ -0,0 +1,112 @@

+[#]: collector: (lujun9972)

+[#]: translator: (geekpi)

+[#]: reviewer: (wxy)

+[#]: publisher: (wxy)

+[#]: url: (https://linux.cn/article-10919-1.html)

+[#]: subject: (Installing Budgie Desktop on Ubuntu [Quick Guide])

+[#]: via: (https://itsfoss.com/install-budgie-ubuntu/)

+[#]: author: (Atharva Lele https://itsfoss.com/author/atharva/)

+

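+Installing Budgie Desktop on Ubuntu

+======

+

+> Learn how to install the Budgie desktop on Ubuntu in this step-by-step tutorial.

+

+Of all the [various Ubuntu flavors][1], [Ubuntu Budgie][2] is the most underrated one. It looks elegant and is not heavy on resources.

+

+Read this [Ubuntu Budgie review][3] or watch the video below to see what Ubuntu Budgie 18.04 looks like.

+

+- [Ubuntu 18.04 Budgie Desktop Tour [It's Elegant]](https://youtu.be/KXgreWOK33k)

+

+If you like the [Budgie desktop][5] but are using some other flavor of Ubuntu, such as the default Ubuntu with the GNOME desktop, I have good news for you. You can install Budgie on your current Ubuntu system and switch desktop environments.

+

+In this post, I am going to show you exactly how to do that. But first, a short introduction to Budgie for those who are not familiar with it.

+

+The Budgie desktop environment is mainly [developed by the Solus Linux team][6]. It is designed with a focus on elegance and modern usage. Budgie is available for all major Linux distributions, so users can try out this new desktop environment on them. Budgie is quite mature by now and provides a great desktop experience.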

+

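+> Warning

+>

+> Installing multiple desktops on the same system may cause conflicts, and you may run into issues such as icons missing from the panel or multiple icons for the same program.

+>

+> You may not see any issue at all. It is up to you whether to try a different desktop.

+

+### Installing Budgie on Ubuntu

+

+This method has not been tested on Linux Mint, so I recommend that you do not follow this guide on Mint.

+

+For those on Ubuntu, Budgie is now part of the Ubuntu repositories by default. Hence, we do not need to add any PPA to get Budgie.

+

+To install Budgie, simply run the commands below in a terminal. We first make sure that the system is fully updated.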

+

+```

+sudo apt update && sudo apt upgrade

+sudo apt install ubuntu-budgie-desktop

+```

+

+Once the download finishes, you will be prompted to choose a display manager. Select “lightdm” to get the full Budgie experience.

+

+![Select lightdm][7]
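
If you are unsure afterwards which display manager ended up as the default, the sketch below reads it back. It assumes the Debian/Ubuntu convention of recording the choice in `/etc/X11/default-display-manager`; other distributions may store it elsewhere, and `current_dm` is a hypothetical helper name.

```shell
# Print the name of the default display manager, e.g. "lightdm" or "gdm3".
# The default path is where Debian/Ubuntu record the choice (an assumption
# for other distributions); pass another file as $1 to override it.
current_dm() {
    file=${1:-/etc/X11/default-display-manager}
    [ -r "$file" ] || return 1
    basename "$(cat "$file")"
}

# Usage:
#   current_dm                      # check which display manager is the default
#   sudo dpkg-reconfigure lightdm   # show the chooser again to change it
```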

+

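+After the installation is complete, reboot your computer. You will then be greeted by the Budgie login screen. Enter your password to get to the home screen.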

+

+![Budgie Desktop Home][8]

+

+### Switching to other desktop environments

+

+![Budgie login screen][9]

+

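+You can click the Budgie icon next to your name to get login options. From there, you can choose between the installed desktop environments (DEs). In my case, I see Budgie and the default Ubuntu (GNOME) desktop.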

+

+![Select your DE][10]

+

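+So whenever you feel like logging in to GNOME, you can do so from this menu.

+

+### How to remove Budgie

+

+If you don't like Budgie or just want to go back to your regular old Ubuntu, you can switch back to your regular desktop as described in the previous section.

+

+However, if you really want to remove Budgie and its components, you can use the following commands to get back to the previous state.

+

+**Switch to some other desktop environment before using these commands:**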

+

+```

+sudo apt remove ubuntu-budgie-desktop 'ubuntu-budgie*' lightdm

+sudo apt autoremove

+sudo apt install --reinstall gdm3

+```

+

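+After running all the commands successfully, reboot your computer.

+

+Now you will be back to GNOME or whichever other desktop environment you had.

+

+### What do you think of Budgie?

+

+Budgie is one of the [best desktop environments for Linux][12]. I hope this short guide helped you install the awesome Budgie desktop on your Ubuntu system.

+

+If you did install Budgie, what do you like about it the most? Let us know in the comments below. And as usual, any questions or suggestions are always welcome.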

+

+--------------------------------------------------------------------------------

+

+via: https://itsfoss.com/install-budgie-ubuntu/

+

+Author: [Atharva Lele][a]

+Topic selection: [lujun9972][b]

+Translator: [geekpi](https://github.com/geekpi)

+Proofreader: [wxy](https://github.com/wxy)

+

+This article was originally compiled by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux China](https://linux.cn/)

+

+[a]: https://itsfoss.com/author/atharva/

+[b]: https://github.com/lujun9972

+[1]: https://itsfoss.com/which-ubuntu-install/

+[2]: https://ubuntubudgie.org/

+[3]: https://itsfoss.com/ubuntu-budgie-18-review/

+[4]: https://www.youtube.com/c/itsfoss?sub_confirmation=1

+[5]: https://github.com/solus-project/budgie-desktop

+[6]: https://getsol.us/home/

+[7]: https://i0.wp.com/itsfoss.com/wp-content/uploads/2019/04/budgie_install_select_dm.png?fit=800%2C559&ssl=1

+[8]: https://i1.wp.com/itsfoss.com/wp-content/uploads/2019/04/budgie_homescreen.jpg?fit=800%2C500&ssl=1

+[9]: https://i2.wp.com/itsfoss.com/wp-content/uploads/2019/04/budgie_install_lockscreen.png?fit=800%2C403&ssl=1

+[10]: https://i0.wp.com/itsfoss.com/wp-content/uploads/2019/04/budgie_install_lockscreen_select_de.png?fit=800%2C403&ssl=1

+[11]: https://itsfoss.com/snapd-error-ubuntu/

+[12]: https://itsfoss.com/best-linux-desktop-environments/

diff --git a/translated/tech/20161106 Myths about -dev-urandom.md b/published/201905/20161106 Myths about -dev-urandom.md

similarity index 73%

rename from translated/tech/20161106 Myths about -dev-urandom.md

rename to published/201905/20161106 Myths about -dev-urandom.md

index 118c6426f2..76f07ebc3c 100644

--- a/translated/tech/20161106 Myths about -dev-urandom.md

+++ b/published/201905/20161106 Myths about -dev-urandom.md

@@ -7,37 +7,37 @@

**`/dev/urandom` 不安全。加密用途必须使用 `/dev/random`**

-事实:`/dev/urandom` 才是类 Unix 操作系统下推荐的加密种子。

+*事实*:`/dev/urandom` 才是类 Unix 操作系统下推荐的加密种子。

**`/dev/urandom` 是伪随机数生成器(PRND),而 `/dev/random` 是“真”随机数生成器。**

-事实:它们两者本质上用的是同一种 CSPRNG (一种密码学伪随机数生成器)。它们之间细微的差别和“真”不“真”随机完全无关

+*事实*:它们两者本质上用的是同一种 CSPRNG (一种密码学伪随机数生成器)。它们之间细微的差别和“真”“不真”随机完全无关。(参见:“Linux 随机数生成器的构架”一节)

**`/dev/random` 在任何情况下都是密码学应用更好地选择。即便 `/dev/urandom` 也同样安全,我们还是不应该用它。**

-事实:`/dev/random` 有个很恶心人的问题:它是阻塞的。(LCTT 译注:意味着请求都得逐个执行,等待前一个请求完成)

+*事实*:`/dev/random` 有个很恶心人的问题:它是阻塞的。(参见:“阻塞有什么问题?”一节)(LCTT 译注:意味着请求都得逐个执行,等待前一个请求完成)

-**但阻塞不是好事吗!`/dev/random` 只会给出电脑收集的信息熵足以支持的随机量。`/dev/urandom` 在用完了所有熵的情况下还会不断吐不安全的随机数给你。**

+**但阻塞不是好事吗!`/dev/random` 只会给出电脑收集的信息熵足以支持的随机量。`/dev/urandom` 在用完了所有熵的情况下还会不断吐出不安全的随机数给你。**

-事实:这是误解。就算我们不去考虑应用层面后续对随机种子的用法,“用完信息熵池”这个概念本身就不存在。仅仅 256 位的熵就足以生成计算上安全的随机数很长、很长的一段时间了。

+*事实*:这是误解。就算我们不去考虑应用层面后续对随机种子的用法,“用完信息熵池”这个概念本身就不存在。仅仅 256 位的熵就足以生成计算上安全的随机数很长、很长的一段时间了。(参见:“那熵池快空了的情况呢?”一节)

问题的关键还在后头:`/dev/random` 怎么知道有系统会*多少*可用的信息熵?接着看!

**但密码学家老是讨论重新选种子(re-seeding)。这难道不和上一条冲突吗?**

-事实:你说的也没错!某种程度上吧。确实,随机数生成器一直在使用系统信息熵的状态重新选种。但这么做(一部分)是因为别的原因。

+*事实*:你说的也没错!某种程度上吧。确实,随机数生成器一直在使用系统信息熵的状态重新选种。但这么做(一部分)是因为别的原因。(参见:“重新选种”一节)

这样说吧,我没有说引入新的信息熵是坏的。更多的熵肯定更好。我只是说在熵池低的时候阻塞是没必要的。

**好,就算你说的都对,但是 `/dev/(u)random` 的 man 页面和你说的也不一样啊!到底有没有专家同意你说的这堆啊?**

-事实:其实 man 页面和我说的不冲突。它看似好像在说 `/dev/urandom` 对密码学用途来说不安全,但如果你真的理解这堆密码学术语你就知道它说的并不是这个意思。

+*事实*:其实 man 页面和我说的不冲突。它看似好像在说 `/dev/urandom` 对密码学用途来说不安全,但如果你真的理解这堆密码学术语你就知道它说的并不是这个意思。(参见:“random 和 urandom 的 man 页面”一节)

man 页面确实说在一些情况下推荐使用 `/dev/random` (我觉得也没问题,但绝对不是说必要的),但它也推荐在大多数“一般”的密码学应用下使用 `/dev/urandom` 。

虽然诉诸权威一般来说不是好事,但在密码学这么严肃的事情上,和专家统一意见是很有必要的。

-所以说呢,还确实有一些*专家*和我的一件事一致的:`/dev/urandom` 就应该是类 UNIX 操作系统下密码学应用的首选。显然的,是他们的观点说服了我而不是反过来的。

+所以说呢,还确实有一些*专家*和我的意见一致:`/dev/urandom` 就应该是类 UNIX 操作系统下密码学应用的首选。显然的,是他们的观点说服了我而不是反过来的。(参见:“正道”一节)

------

@@ -45,9 +45,9 @@ man 页面确实说在一些情况下推荐使用 `/dev/random` (我觉得也

我尝试不讲太高深的东西,但是有两点内容必须先提一下才能让我们接着论证观点。

-首当其冲的,*什么是随机性*,或者更准确地:我们在探讨什么样的随机性?

+首当其冲的,*什么是随机性*,或者更准确地:我们在探讨什么样的随机性?(参见:“真随机”一节)

-另外一点很重要的是,我*没有尝试以说教的态度*对你们写这段话。我写这篇文章是为了日后可以在讨论起的时候指给别人看。比 140 字长(LCTT 译注:推特长度)。这样我就不用一遍遍重复我的观点了。能把论点磨炼成一篇文章本身就很有助于将来的讨论。

+另外一点很重要的是,我*没有尝试以说教的态度*对你们写这段话。我写这篇文章是为了日后可以在讨论起的时候指给别人看。比 140 字长(LCTT 译注:推特长度)。这样我就不用一遍遍重复我的观点了。能把论点磨炼成一篇文章本身就很有助于将来的讨论。(参见:“你是在说我笨?!”一节)

并且我非常乐意听到不一样的观点。但我只是认为单单地说 `/dev/urandom` 坏是不够的。你得能指出到底有什么问题,并且剖析它们。

@@ -55,43 +55,43 @@ man 页面确实说在一些情况下推荐使用 `/dev/random` (我觉得也

绝对没有!

-事实上我自己也相信了 “`/dev/urandom` 是不安全的” 好些年。这几乎不是我们的错,因为那么德高望重的人在 Usenet、论坛、推特上跟我们重复这个观点。甚至*连 man 手册*都似是而非地说着。我们当年怎么可能鄙视诸如“信息熵太低了”这种看上去就很让人信服的观点呢?

+事实上我自己也相信了 “`/dev/urandom` 是不安全的” 好些年。这几乎不是我们的错,因为那么德高望重的人在 Usenet、论坛、推特上跟我们重复这个观点。甚至*连 man 手册*都似是而非地说着。我们当年怎么可能鄙视诸如“信息熵太低了”这种看上去就很让人信服的观点呢?(参见:“random 和 urandom 的 man 页面”一节)

整个流言之所以如此广为流传不是因为人们太蠢,而是因为但凡有点关于信息熵和密码学概念的人都会觉得这个说法很有道理。直觉似乎都在告诉我们这流言讲的很有道理。很不幸直觉在密码学里通常不管用,这次也一样。

### 真随机

-什么叫一个随机变量是“真随机的”?

+随机数是“真正随机”是什么意思?

-我不想搞的太复杂以至于变成哲学范畴的东西。这种讨论很容易走偏因为随机模型大家见仁见智,讨论很快变得毫无意义。

+我不想搞的太复杂以至于变成哲学范畴的东西。这种讨论很容易走偏因为对于随机模型大家见仁见智,讨论很快变得毫无意义。

在我看来“真随机”的“试金石”是量子效应。一个光子穿过或不穿过一个半透镜。或者观察一个放射性粒子衰变。这类东西是现实世界最接近真随机的东西。当然,有些人也不相信这类过程是真随机的,或者这个世界根本不存在任何随机性。这个就百家争鸣了,我也不好多说什么了。

-密码学家一般都会通过不去讨论什么是“真随机”来避免这种争论。它们更关心的是不可预测性。只要没有*任何*方法能猜出下一个随机数就可以了。所以当你以密码学应用为前提讨论一个随机数好不好的时候,在我看来这才是最重要的。

+密码学家一般都会通过不去讨论什么是“真随机”来避免这种哲学辩论。他们更关心的是不可预测性。只要没有*任何*方法能猜出下一个随机数就可以了。所以当你以密码学应用为前提讨论一个随机数好不好的时候,在我看来这才是最重要的。

无论如何,我不怎么关心“哲学上安全”的随机数,这也包括别人嘴里的“真”随机数。

-## 两种安全,一种有用

+### 两种安全,一种有用

但就让我们退一步说,你有了一个“真”随机变量。你下一步做什么呢?

-你把它们打印出来然后挂在墙上来展示量子宇宙的美与和谐?牛逼!我很理解你。

+你把它们打印出来然后挂在墙上来展示量子宇宙的美与和谐?牛逼!我支持你。

但是等等,你说你要*用*它们?做密码学用途?额,那这就废了,因为这事情就有点复杂了。

-事情是这样的,你的真随机,量子力学加护的随机数即将被用进不理想的现实世界程序里。

+事情是这样的,你的真随机、量子力学加护的随机数即将被用进不理想的现实世界算法里去。

-因为我们使用的大多数算法并不是理论信息学上安全的。它们“只能”提供 **计算意义上的安全**。我能想到为数不多的例外就只有 Shamir 密钥分享 和 One-time pad 算法。并且就算前者是名副其实的(如果你实际打算用的话),后者则毫无可行性可言。

+因为我们使用的几乎所有的算法都并不是信息论安全性的。它们“只能”提供**计算意义上的安全**。我能想到为数不多的例外就只有 Shamir 密钥分享和一次性密码本(OTP)算法。并且就算前者是名副其实的(如果你实际打算用的话),后者则毫无可行性可言。

-但所有那些大名鼎鼎的密码学算法,AES、RSA、Diffie-Hellman、椭圆曲线,还有所有那些加密软件包,OpenSSL、GnuTLS、Keyczar、你的操作系统的加密 API,都仅仅是计算意义上的安全的。

+但所有那些大名鼎鼎的密码学算法,AES、RSA、Diffie-Hellman、椭圆曲线,还有所有那些加密软件包,OpenSSL、GnuTLS、Keyczar、你的操作系统的加密 API,都仅仅是计算意义上安全的。

-那区别是什么呢?理论信息学上的安全肯定是安全的,绝对是,其它那些的算法都可能在理论上被拥有无限计算力的穷举破解。我们依然愉快地使用它们因为全世界的计算机加起来都不可能在宇宙年龄的时间里破解,至少现在是这样。而这就是我们文章里说的“不安全”。

+那区别是什么呢?信息论安全的算法肯定是安全的,绝对是,其它那些的算法都可能在理论上被拥有无限计算力的穷举破解。我们依然愉快地使用它们是因为全世界的计算机加起来都不可能在宇宙年龄的时间里破解,至少现在是这样。而这就是我们文章里说的“不安全”。

-除非哪个聪明的家伙破解了算法本身——在只需要极少量计算力的情况下。这也是每个密码学家梦寐以求的圣杯:破解 AES 本身、破解 RSA 本身等等。

+除非哪个聪明的家伙破解了算法本身 —— 在只需要更少量计算力、在今天可实现的计算力的情况下。这也是每个密码学家梦寐以求的圣杯:破解 AES 本身、破解 RSA 本身等等。

所以现在我们来到了更底层的东西:随机数生成器,你坚持要“真随机”而不是“伪随机”。但是没过一会儿你的真随机数就被喂进了你极为鄙视的伪随机算法里了!

-真相是,如果我们最先进的 hash 算法被破解了,或者最先进的块加密被破解了,你得到这些那些“哲学上不安全的”甚至无所谓了,因为反正你也没有安全的应用方法了。

+真相是,如果我们最先进的哈希算法被破解了,或者最先进的分组加密算法被破解了,你得到的这些“哲学上不安全”的随机数甚至无所谓了,因为反正你也没有安全的应用方法了。

所以把计算性上安全的随机数喂给你的仅仅是计算性上安全的算法就可以了,换而言之,用 `/dev/urandom`。

@@ -103,7 +103,7 @@ man 页面确实说在一些情况下推荐使用 `/dev/random` (我觉得也

![image: mythical structure of the kernel's random number generator][1]

-“真随机数”,尽管可能有点瑕疵,进入操作系统然后它的熵立刻被加入内部熵计数器。然后经过“矫偏”和“漂白”之后它进入内核的熵池,然后 `/dev/random` 和 `/dev/urandom` 从里面生成随机数。

+“真正的随机性”,尽管可能有点瑕疵,进入操作系统然后它的熵立刻被加入内部熵计数器。然后经过“矫偏”和“漂白”之后它进入内核的熵池,然后 `/dev/random` 和 `/dev/urandom` 从里面生成随机数。

“真”随机数生成器,`/dev/random`,直接从池里选出随机数,如果熵计数器表示能满足需要的数字大小,那就吐出数字并且减少熵计数。如果不够的话,它会阻塞程序直至有足够的熵进入系统。

@@ -123,25 +123,25 @@ man 页面确实说在一些情况下推荐使用 `/dev/random` (我觉得也

![image: actual structure of the kernel's random number generator before Linux 4.8][2]

-> 这是个很粗糙的简化。实际上不仅有一个,而是三个熵池。一个主池,另一个给 `/dev/random`,还有一个给 `/dev/urandom`,后两者依靠从主池里获取熵。这三个池都有各自的熵计数器,但二级池(后两个)的计数器基本都在 0 附近,而“新鲜”的熵总在需要的时候从主池流过来。同时还有好多混合和回流进系统在同时进行。整个过程对于这篇文档来说都过于复杂了我们跳过。

-

-你看到最大的区别了吗?CSPRNG 并不是和随机数生成器一起跑的,以 `/dev/urandom` 需要输出但熵不够的时候进行填充。CSPRNG 是整个随机数生成过程的内部组件之一。从来就没有什么 `/dev/random` 直接从池里输出纯纯的随机性。每个随机源的输入都在 CSPRNG 里充分混合和散列过了,这一切都发生在实际变成一个随机数,被 `/dev/urandom` 或者 `/dev/random` 吐出去之前。

+你看到最大的区别了吗?CSPRNG 并不是和随机数生成器一起跑的,它在 `/dev/urandom` 需要输出但熵不够的时候进行填充。CSPRNG 是整个随机数生成过程的内部组件之一。从来就没有什么 `/dev/random` 直接从池里输出纯纯的随机性。每个随机源的输入都在 CSPRNG 里充分混合和散列过了,这一切都发生在实际变成一个随机数,被 `/dev/urandom` 或者 `/dev/random` 吐出去之前。

另外一个重要的区别是这里没有熵计数器的任何事情,只有预估。一个源给你的熵的量并不是什么很明确能直接得到的数字。你得预估它。注意,如果你太乐观地预估了它,那 `/dev/random` 最重要的特性——只给出熵允许的随机量——就荡然无存了。很不幸的,预估熵的量是很困难的。

-Linux 内核只使用事件的到达时间来预估熵的量。它通过多项式插值,某种模型,来预估实际的到达时间有多“出乎意料”。这种多项式插值的方法到底是不是好的预估熵量的方法本身就是个问题。同时硬件情况会不会以某种特定的方式影响到达时间也是个问题。而所有硬件的取样率也是个问题,因为这基本上就直接决定了随机数到达时间的颗粒度。

+> 这是个很粗糙的简化。实际上不仅有一个,而是三个熵池。一个主池,另一个给 `/dev/random`,还有一个给 `/dev/urandom`,后两者依靠从主池里获取熵。这三个池都有各自的熵计数器,但二级池(后两个)的计数器基本都在 0 附近,而“新鲜”的熵总在需要的时候从主池流过来。同时还有好多混合和回流进系统在同时进行。整个过程对于这篇文档来说都过于复杂了,我们跳过。

+

+Linux 内核只使用事件的到达时间来预估熵的量。根据模型,它通过多项式插值来预估实际的到达时间有多“出乎意料”。这种多项式插值的方法到底是不是好的预估熵量的方法本身就是个问题。同时硬件情况会不会以某种特定的方式影响到达时间也是个问题。而所有硬件的取样率也是个问题,因为这基本上就直接决定了随机数到达时间的颗粒度。

说到最后,至少现在看来,内核的熵预估还是不错的。这也意味着它比较保守。有些人会具体地讨论它有多好,这都超出我的脑容量了。就算这样,如果你坚持不想在没有足够多的熵的情况下吐出随机数,那你看到这里可能还会有一丝紧张。我睡的就很香了,因为我不关心熵预估什么的。

-最后强调一下终点:`/dev/random` 和 `/dev/urandom` 都是被同一个 CSPRNG 喂的输入。只有它们在用完各自熵池(根据某种预估标准)的时候,它们的行为会不同:`/dev/random` 阻塞,`/dev/urandom` 不阻塞。

+最后要明确一下:`/dev/random` 和 `/dev/urandom` 都是被同一个 CSPRNG 饲喂的。只有它们在用完各自熵池(根据某种预估标准)的时候,它们的行为会不同:`/dev/random` 阻塞,`/dev/urandom` 不阻塞。

##### Linux 4.8 以后

-在 Linux 4.8 里,`/dev/random` 和 `/dev/urandom` 的等价性被放弃了。现在 `/dev/urandom` 的输出不来自于熵池,而是直接从 CSPRNG 来。

-

![image: actual structure of the kernel's random number generator from Linux 4.8 onward][3]

-*我们很快会理解*为什么这不是一个安全问题。

+在 Linux 4.8 里,`/dev/random` 和 `/dev/urandom` 的等价性被放弃了。现在 `/dev/urandom` 的输出不来自于熵池,而是直接从 CSPRNG 来。

+

+*我们很快会理解*为什么这不是一个安全问题。(参见:“CSPRNG 没问题”一节)

### 阻塞有什么问题?

@@ -149,7 +149,7 @@ Linux 内核只使用事件的到达时间来预估熵的量。它通过多项

这些都是问题。阻塞本质上会降低可用性。换而言之你的系统不干你让它干的事情。不用我说,这是不好的。要是它不干活你干嘛搭建它呢?

-> 我在工厂自动化里做过和安全相关的系统。猜猜看安全系统失效的主要原因是什么?被错误操作。就这么简单。很多安全措施的流程让工人恼火了。比如时间太长,或者太不方便。你要知道人很会找捷径来“解决”问题。

+> 我在工厂自动化里做过和安全相关的系统。猜猜看安全系统失效的主要原因是什么?操作问题。就这么简单。很多安全措施的流程让工人恼火了。比如时间太长,或者太不方便。你要知道人很会找捷径来“解决”问题。

但其实有个更深刻的问题:人们不喜欢被打断。它们会找一些绕过的方法,把一些诡异的东西接在一起仅仅因为这样能用。一般人根本不知道什么密码学什么乱七八糟的,至少正常的人是这样吧。

@@ -157,23 +157,23 @@ Linux 内核只使用事件的到达时间来预估熵的量。它通过多项

到头来如果东西太难用的话,你的用户就会被迫开始做一些降低系统安全性的事情——你甚至不知道它们会做些什么。

-我们很容易会忽视可用性之类的重要性。毕竟安全第一对吧?所以比起牺牲安全,不可用,难用,不方便都是次要的?

+我们很容易会忽视可用性之类的重要性。毕竟安全第一对吧?所以比起牺牲安全,不可用、难用、不方便都是次要的?

这种二元对立的想法是错的。阻塞不一定就安全了。正如我们看到的,`/dev/urandom` 直接从 CSPRNG 里给你一样好的随机数。用它不好吗!

### CSPRNG 没问题

-现在情况听上去很沧桑。如果连高质量的 `/dev/random` 都是从一个 CSPRNG 里来的,我们怎么敢在高安全性的需求上使用它呢?

+现在情况听上去很惨淡。如果连高质量的 `/dev/random` 都是从一个 CSPRNG 里来的,我们怎么敢在高安全性的需求上使用它呢?

-实际上,“看上去随机”是现存大多数密码学基础组件的基本要求。如果你观察一个密码学哈希的输出,它一定得和随机的字符串不可区分,密码学家才会认可这个算法。如果你生成一个块加密,它的输出(在你不知道密钥的情况下)也必须和随机数据不可区分才行。

+实际上,“看上去随机”是现存大多数密码学基础组件的基本要求。如果你观察一个密码学哈希的输出,它一定得和随机的字符串不可区分,密码学家才会认可这个算法。如果你生成一个分组加密,它的输出(在你不知道密钥的情况下)也必须和随机数据不可区分才行。

-如果任何人能比暴力穷举要更有效地破解一个加密,比如它利用了某些 CSPRNG 伪随机的弱点,那这就又是老一套了:一切都废了,也别谈后面的了。块加密、哈希,一切都是基于某个数学算法,比如 CSPRNG。所以别害怕,到头来都一样。

+如果任何人能比暴力穷举要更有效地破解一个加密,比如它利用了某些 CSPRNG 伪随机的弱点,那这就又是老一套了:一切都废了,也别谈后面的了。分组加密、哈希,一切都是基于某个数学算法,比如 CSPRNG。所以别害怕,到头来都一样。

### 那熵池快空了的情况呢?

毫无影响。

-加密算法的根基建立在攻击者不能预测输出上,只要最一开始有足够的随机性(熵)就行了。一般的下限是 256 位,不需要更多了。

+加密算法的根基建立在攻击者不能预测输出上,只要最一开始有足够的随机性(熵)就行了。“足够”的下限可以是 256 位,不需要更多了。

介于我们一直在很随意的使用“熵”这个概念,我用“位”来量化随机性希望读者不要太在意细节。像我们之前讨论的那样,内核的随机数生成器甚至没法精确地知道进入系统的熵的量。只有一个预估。而且这个预估的准确性到底怎么样也没人知道。

@@ -211,7 +211,7 @@ Linux 内核只使用事件的到达时间来预估熵的量。它通过多项

我们在回到 man 页面说:“使用 `/dev/random`”。我们已经知道了,虽然 `/dev/urandom` 不阻塞,但是它的随机数和 `/dev/random` 都是从同一个 CSPRNG 里来的。

-如果你真的需要信息论理论上安全的随机数(你不需要的,相信我),那才有可能成为唯一一个你需要等足够熵进入 CSPRNG 的理由。而且你也不能用 `/dev/random`。

+如果你真的需要信息论安全性的随机数(你不需要的,相信我),那才有可能成为唯一一个你需要等足够熵进入 CSPRNG 的理由。而且你也不能用 `/dev/random`。

man 页面有毒,就这样。但至少它还稍稍挽回了一下自己:

@@ -227,7 +227,7 @@ man 页面有毒,就这样。但至少它还稍稍挽回了一下自己:

### 正道

-本篇文章里的观点显然在互联网上是“小众”的。但如果问问一个真正的密码学家,你很难找到一个认同阻塞 `/dev/random` 的人。

+本篇文章里的观点显然在互联网上是“小众”的。但如果问一个真正的密码学家,你很难找到一个认同阻塞 `/dev/random` 的人。

比如我们看看 [Daniel Bernstein][5](即著名的 djb)的看法:

@@ -238,8 +238,6 @@ man 页面有毒,就这样。但至少它还稍稍挽回了一下自己:

>

> 对密码学家来说这甚至都不好笑了

-

-

或者 [Thomas Pornin][6] 的看法,他也是我在 stackexchange 上见过最乐于助人的一位:

> 简单来说,是的。展开说,答案还是一样。`/dev/urandom` 生成的数据可以说和真随机完全无法区分,至少在现有科技水平下。使用比 `/dev/urandom` “更好的“随机性毫无意义,除非你在使用极为罕见的“信息论安全”的加密算法。这肯定不是你的情况,不然你早就说了。

@@ -260,13 +258,13 @@ Linux 的 `/dev/urandom` 会很乐意给你吐点不怎么随机的随机数,

FreeBSD 的行为更正确点:`/dev/random` 和 `/dev/urandom` 是一样的,在系统启动的时候 `/dev/random` 会阻塞到有足够的熵为止,然后它们都再也不阻塞了。

-> 与此同时 Linux 实行了一个新的系统调用,最早由 OpenBSD 引入叫 `getentrypy(2)`,在 Linux 下这个叫 `getrandom(2)`。这个系统调用有着上述正确的行为:阻塞到有足够的熵为止,然后再也不阻塞了。当然,这是个系统调用,而不是一个字节设备(LCTT 译注:指不在 `/dev/` 下),所以它在 shell 或者别的脚本语言里没那么容易获取。这个系统调用 自 Linux 3.17 起存在。

+> 与此同时 Linux 实现了一个新的系统调用,它最早由 OpenBSD 引入,叫 `getentropy(2)`,在 Linux 下这个叫 `getrandom(2)`。这个系统调用有着上述正确的行为:阻塞到有足够的熵为止,然后再也不阻塞了。当然,这是个系统调用,而不是一个字符设备(LCTT 译注:不在 `/dev/` 下),所以它在 shell 或者别的脚本语言里没那么容易使用。这个系统调用自 Linux 3.17 起存在。
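在 Python 里,这个系统调用可以通过 `os.getrandom()` 使用(仅限 Linux,Python 3.6 及以上)。下面是一个假设性的小封装,函数名 `random_bytes` 是为演示而起的;在没有 `os.getrandom()` 的平台上它退回到 `os.urandom()`。

```python
import os

def random_bytes(n: int) -> bytes:
    """返回 n 个随机字节;在 Linux 上优先使用 getrandom(2)。"""
    if hasattr(os, "getrandom"):
        # 仅在 Linux 上可用;在内核的随机数生成器完成初始化之前会阻塞。
        # 注意:文档说明它返回的字节数可能少于请求的数量,这里只请求很少的字节。
        return os.getrandom(n)
    # 其它平台退回到 os.urandom()
    return os.urandom(n)

seed = random_bytes(32)
print(seed.hex())
```

这只是一个示意,不是内核文档或原文给出的用法;如果要请求大量字节,应当检查返回的长度。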

-在 Linux 上其实这个问题不太大,因为 Linux 发行版会在启动的过程中储蓄一点随机数(这发生在已经有一些熵之后,因为启动程序不会在按下电源的一瞬间就开始运行)到一个种子文件中,以便系统下次启动的时候读取。所以每次启动的时候系统都会从上一次会话里带一点随机性过来。

+在 Linux 上其实这个问题不太大,因为 Linux 发行版会在启动的过程中保存一点随机数(这发生在已经有一些熵之后,因为启动程序不会在按下电源的一瞬间就开始运行)到一个种子文件中,以便系统下次启动的时候读取。所以每次启动的时候系统都会从上一次会话里带一点随机性过来。

显然这比不上在关机脚本里写入随机种子,因为那样就有更多的熵可以利用。但这样做显而易见的好处是,它不用关心系统是不是正确关机了,比如系统可能崩溃了。

-而且这种做法在你真正第一次启动系统的时候也没法帮你随机,不过好在系统安装器一般会写一个种子文件,所以基本上问题不大。

+而且这种做法在你真正第一次启动系统的时候也没法帮你随机,不过好在 Linux 系统安装程序一般会保存一个种子文件,所以基本上问题不大。

虚拟机是另外一层问题。因为用户喜欢克隆它们,或者恢复到某个之前的状态。这种情况下那个种子文件就帮不到你了。

diff --git a/translated/tech/20171214 Build a game framework with Python using the module Pygame.md b/published/201905/20171214 Build a game framework with Python using the module Pygame.md

similarity index 52%

rename from translated/tech/20171214 Build a game framework with Python using the module Pygame.md

rename to published/201905/20171214 Build a game framework with Python using the module Pygame.md

index 7ce7402959..931a248a8c 100644

--- a/translated/tech/20171214 Build a game framework with Python using the module Pygame.md

+++ b/published/201905/20171214 Build a game framework with Python using the module Pygame.md

@@ -1,37 +1,39 @@

[#]: collector: (lujun9972)

[#]: translator: (robsean)

-[#]: reviewer: ( )

-[#]: publisher: ( )

-[#]: url: ( )

+[#]: reviewer: (wxy)

+[#]: publisher: (wxy)

+[#]: url: (https://linux.cn/article-10850-1.html)

[#]: subject: (Build a game framework with Python using the module Pygame)

[#]: via: (https://opensource.com/article/17/12/game-framework-python)

[#]: author: (Seth Kenlon https://opensource.com/users/seth)

使用 Python 和 Pygame 模块构建一个游戏框架

======

-这系列的第一篇通过创建一个简单的骰子游戏来探究 Python。现在是来从零制作你自己的游戏的时间。

+

+ > 这系列的第一篇通过创建一个简单的骰子游戏来探究 Python。现在是来从零制作你自己的游戏的时间。

+

-在我的 [这系列的第一篇文章][1] 中, 我已经讲解如何使用 Python 创建一个简单的,基于文本的骰子游戏。这次,我将展示如何使用 Python 和 Pygame 模块来创建一个图形化游戏。它将占用一些文章来得到一个确实完成一些东西的游戏,但是在这系列的结尾,你将有一个更好的理解,如何查找和学习新的 Python 模块和如何从其基础上构建一个应用程序。

+在我的[这系列的第一篇文章][1] 中, 我已经讲解如何使用 Python 创建一个简单的、基于文本的骰子游戏。这次,我将展示如何使用 Python 模块 Pygame 来创建一个图形化游戏。它将需要几篇文章才能来得到一个确实做成一些东西的游戏,但是到这系列的结尾,你将更好地理解如何查找和学习新的 Python 模块和如何从其基础上构建一个应用程序。

在开始前,你必须安装 [Pygame][2]。

### 安装新的 Python 模块

-这里有一些方法来安装 Python 模块,但是最通用的两个是:

+有几种方法来安装 Python 模块,但是最通用的两个是:

* 从你的发行版的软件存储库

- * 使用 Python 的软件包管理器,pip

+ * 使用 Python 的软件包管理器 `pip`

-两个方法都工作很好,并且每一个都有它自己的一套优势。如果你是在 Linux 或 BSD 上开发,促使你的发行版的软件存储库确保自动及时更新。

+两个方法都工作得很好,并且每一个都有它自己的一套优势。如果你是在 Linux 或 BSD 上开发,可以利用你的发行版的软件存储库来自动和及时地更新。

-然而,使用 Python 的内置软件包管理器给予你控制更新模块时间的能力。而且,它不是明确指定操作系统的,意味着,即使当你不是在你常用的开发机器上时,你也可以使用它。pip 的其它的优势是允许模块局部安装,如果你没有一台正在使用的计算机的权限,它是有用的。

+然而,使用 Python 的内置软件包管理器可以给予你控制更新模块时间的能力。而且,它不是特定于操作系统的,这意味着,即使当你不是在你常用的开发机器上时,你也可以使用它。`pip` 的其它的优势是允许本地安装模块,如果你没有正在使用的计算机的管理权限,这是有用的。

### 使用 pip

-如果 Python 和 Python3 都安装在你的系统上,你想使用的命令很可能是 `pip3`,它区分来自Python 2.x 的 `pip` 的命令。如果你不确定,先尝试 `pip3`。

+如果 Python 和 Python3 都安装在你的系统上,你想使用的命令很可能是 `pip3`,它用来区分 Python 2.x 的 `pip` 的命令。如果你不确定,先尝试 `pip3`。

-`pip` 命令有些像大多数 Linux 软件包管理器的工作。你可以使用 `search` 搜索 Pythin 模块,然后使用 `install` 安装它们。如果你没有你正在使用的计算机的权限来安装软件,你可以使用 `--user` 选项来仅仅安装模块到你的 home 目录。

+`pip` 命令有些像大多数 Linux 软件包管理器一样工作。你可以使用 `search` 搜索 Python 模块,然后使用 `install` 安装它们。如果你没有你正在使用的计算机的管理权限来安装软件,你可以使用 `--user` 选项来仅仅安装模块到你的家目录。

```

$ pip3 search pygame

@@ -44,11 +46,11 @@ pygame_cffi (0.2.1) - A cffi-based SDL wrapper that copies the

$ pip3 install Pygame --user

```
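如果想确认 `--user` 安装把模块放在了哪里,以及 Python 能否找到 Pygame,可以用标准库做一个简单检查。下面是一个示意,变量名 `pygame_installed` 是为演示而起的;在没有安装 Pygame 的机器上它同样可以运行。

```python
import importlib.util
import site

# `--user` 安装的模块所在的目录(每个用户一份,不需要管理权限)
print(site.getusersitepackages())

# 不真正导入模块,只检查 Python 能否找到它
spec = importlib.util.find_spec("pygame")
pygame_installed = spec is not None
print("pygame found" if pygame_installed else "pygame not found")
```

如果显示 `pygame not found`,多半是安装时使用的 `pip` 与运行脚本的 Python 不是同一个版本,这时可以先试试 `pip3`。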

-Pygame 是一个 Python 模块,这意味着它仅仅是一套可以被使用在你的 Python 程序中库。换句话说,它不是一个你启动的程序,像 [IDLE][3] 或 [Ninja-IDE][4] 一样。

+Pygame 是一个 Python 模块,这意味着它仅仅是一套可以使用在你的 Python 程序中的库。换句话说,它不是一个像 [IDLE][3] 或 [Ninja-IDE][4] 一样可以让你启动的程序。

### Pygame 新手入门

-一个电子游戏需要一个故事背景;一个发生的地点。在 Python 中,有两种不同的方法来创建你的故事背景:

+一个电子游戏需要一个背景设定:故事发生的地点。在 Python 中,有两种不同的方法来创建你的故事背景:

* 设置一种背景颜色

* 设置一张背景图片

@@ -57,15 +59,15 @@ Pygame 是一个 Python 模块,这意味着它仅仅是一套可以被使用

### 设置你的 Pygame 脚本

-为了开始一个新的 Pygame 脚本,在计算机上创建一个文件夹。游戏的全部文件被放在这个目录中。在工程文件夹内部保持所需要的所有的文件来运行游戏是极其重要的。

+要开始一个新的 Pygame 工程,先在计算机上创建一个文件夹。游戏的全部文件被放在这个目录中。在你的工程文件夹内部保持所需要的所有的文件来运行游戏是极其重要的。

-一个 Python 脚本以文件类型,你的姓名,和你想使用的协议开始。使用一个开放源码协议,以便你的朋友可以改善你的游戏并与你一起分享他们的更改:

+一个 Python 脚本以文件类型、你的姓名,和你想使用的许可证开始。使用一个开放源码许可证,以便你的朋友可以改善你的游戏并与你一起分享他们的更改:

```

#!/usr/bin/env python3

-# Seth Kenlon 编写

+# by Seth Kenlon

## GPLv3

# This program is free software: you can redistribute it and/or

@@ -75,14 +77,14 @@ Pygame 是一个 Python 模块,这意味着它仅仅是一套可以被使用

#

# This program is distributed in the hope that it will be useful, but

# WITHOUT ANY WARRANTY; without even the implied warranty of

-# MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the GNU

+# MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the GNU

# General Public License for more details.

#

# You should have received a copy of the GNU General Public License

-# along with this program. If not, see .

+# along with this program. If not, see .

```

-然后,你告诉 Python 你想使用的模块。一些模块是常见的 Python 库,当然,你想包括一个你刚刚安装的,Pygame 。

+然后,你告诉 Python 你想使用的模块。一些模块是常见的 Python 库,当然,你想包括一个你刚刚安装的 Pygame 模块。

```

import pygame # 加载 pygame 关键字

@@ -90,7 +92,7 @@ import sys # 让 python 使用你的文件系统

import os # 帮助 python 识别你的操作系统

```

-由于你将用这个脚本文件工作很多,在文件中制作成段落是有帮助的,以便你知道在哪里放原料。使用语句块注释来做这些,这些注释仅在看你的源文件代码时是可见的。在你的代码中创建三个语句块。

+由于你将用这个脚本文件做很多工作,在文件中分成段落是有帮助的,以便你知道在哪里放代码。你可以使用块注释来做这些,这些注释仅在看你的源文件代码时是可见的。在你的代码中创建三个块。

```

'''

@@ -114,7 +116,7 @@ Main Loop

接下来,为你的游戏设置窗口大小。注意,不是每一个人都有大计算机屏幕,所以,最好使用一个适合大多数人的计算机的屏幕大小。

-这里有一个方法来切换全屏模式,很多现代电子游戏做的方法,但是,由于你刚刚开始,保存它简单和仅设置一个大小。

+这里有一个方法来切换全屏模式,很多现代电子游戏都会这样做,但是,由于你刚刚开始,简单起见仅设置一个大小即可。

```

'''

@@ -124,7 +126,7 @@ worldx = 960

worldy = 720

```

-在一个脚本中使用 Pygame 引擎前,你需要一些基本的设置。你必需设置帧频,启动它的内部时钟,然后开始 (`init`) Pygame 。

+在脚本中使用 Pygame 引擎前,你需要一些基本的设置。你必须设置帧频,启动它的内部时钟,然后开始 (`init`)Pygame 。

```

fps = 40 # 帧频

@@ -137,17 +139,15 @@ pygame.init()

### 设置背景

-在你继续前,打开一个图形应用程序,并为你的游戏世界创建一个背景。在你的工程目录中的 `images` 文件夹内部保存它为 `stage.png` 。

+在你继续前,打开一个图形应用程序,为你的游戏世界创建一个背景。在你的工程目录中的 `images` 文件夹内部保存它为 `stage.png` 。

这里有一些你可以使用的自由图形应用程序。

- * [Krita][5] 是一个专业级绘图原料模拟器,它可以被用于创建漂亮的图片。如果你对电子游戏创建艺术作品非常感兴趣,你甚至可以购买一系列的[游戏艺术作品教程][6].

- * [Pinta][7] 是一个基本的,易于学习的绘图应用程序。

- * [Inkscape][8] 是一个矢量图形应用程序。使用它来绘制形状,线,样条曲线,和 Bézier 曲线。

+* [Krita][5] 是一个专业级绘图素材模拟器,它可以被用于创建漂亮的图片。如果你对创建电子游戏艺术作品非常感兴趣,你甚至可以购买一系列的[游戏艺术作品教程][6]。

+* [Pinta][7] 是一个基本的,易于学习的绘图应用程序。

+* [Inkscape][8] 是一个矢量图形应用程序。使用它来绘制形状、线、样条曲线和贝塞尔曲线。

-

-

-你的图像不必很复杂,你可以以后回去更改它。一旦你有它,在你文件的 setup 部分添加这些代码:

+你的图像不必很复杂,你可以以后回去更改它。一旦有了它,在你文件的 Setup 部分添加这些代码:

```

world = pygame.display.set_mode([worldx,worldy])

@@ -155,13 +155,13 @@ backdrop = pygame.image.load(os.path.join('images','stage.png')).convert()

backdropbox = world.get_rect()

```

-如果你仅仅用一种颜色来填充你的游戏的背景,你需要做的全部是:

+如果你仅仅用一种颜色来填充你的游戏的背景,你需要做的就是:

```

world = pygame.display.set_mode([worldx,worldy])

```

-你也必需定义一个来使用的颜色。在你的 setup 部分,使用红,绿,蓝 (RGB) 的值来创建一些颜色的定义。

+你也必须定义颜色以使用。在你的 Setup 部分,使用红、绿、蓝 (RGB) 的值来创建一些颜色的定义。

```

'''

@@ -173,13 +173,13 @@ BLACK = (23,23,23 )

WHITE = (254,254,254)

```

-在这点上,你能理论上启动你的游戏。问题是,它可能仅持续一毫秒。

+至此,你理论上可以启动你的游戏了。问题是,它可能仅持续了一毫秒。

-为证明这一点,保存你的文件为 `your-name_game.py` (用你真实的名称替换 `your-name` )。然后启动你的游戏。

+为证明这一点,保存你的文件为 `your-name_game.py`(用你真实的名称替换 `your-name`)。然后启动你的游戏。

-如果你正在使用 IDLE ,通过选择来自 Run 菜单的 `Run Module` 来运行你的游戏。

+如果你正在使用 IDLE,通过选择来自 “Run” 菜单的 “Run Module” 来运行你的游戏。

-如果你正在使用 Ninja ,在左侧按钮条中单击 `Run file` 按钮。

+如果你正在使用 Ninja,在左侧按钮条中单击 “Run file” 按钮。

@@ -189,27 +189,27 @@ WHITE = (254,254,254)

$ python3 ./your-name_game.py

```

-如果你正在使用 Windows ,使用这命令:

+如果你正在使用 Windows,使用这命令:

```

py.exe your-name_game.py

```

-你启动它,不过不要期望很多,因为你的游戏现在仅仅持续几毫秒。你可以在下一部分中修复它。

+启动它,不过不要期望很多,因为你的游戏现在仅仅持续几毫秒。你可以在下一部分中修复它。

### 循环

-除非另有说明,一个 Python 脚本运行一次并仅一次。近来计算机的运行速度是非常快的,所以你的 Python 脚本运行时间少于1秒钟。

+除非另有说明,一个 Python 脚本运行一次并仅一次。近来计算机的运行速度是非常快的,所以你的 Python 脚本运行时间会少于 1 秒钟。

为了让你的游戏保持打开和活跃状态足够长的时间,让人能看到它(更不要说玩它),请使用一个 `while` 循环。为使你的游戏保持打开,你可以把一个变量设置为某个值,然后告诉 `while` 循环只要变量保持未更改就一直循环。

-这经常被称为一个"主循环",你可以使用术语 `main` 作为你的变量。在你的 setup 部分的任意位置添加这些代码:

+这经常被称为一个“主循环”,你可以使用术语 `main` 作为你的变量。在你的 Setup 部分的任意位置添加代码:

```

main = True

```

-在主循环期间,使用 Pygame 关键字来检查是否在键盘上的按键已经被按下或释放。添加这些代码到你的主循环部分:

+在主循环期间,使用 Pygame 关键字来检查键盘上的按键是否已经被按下或释放。添加这些代码到你的主循环部分:

```

'''

@@ -228,7 +228,7 @@ while main == True:

main = False

```
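主循环的控制逻辑可以脱离 Pygame 单独看清楚。下面是一个假设性的草图:用一个普通列表 `fake_events` 来代替 `pygame.event.get()` 每一帧返回的事件,这些数据和名字都是为演示而编的,并不是 Pygame 的 API。

```python
# 模拟每一帧收到的事件(假设的数据,代替 pygame.event.get())
fake_events = [[], ["left"], [], ["q"], ["right"]]

main = True   # 只要这个标志保持为 True,循环就继续
frames = 0    # 已经处理的帧数

while main:
    events = fake_events[frames] if frames < len(fake_events) else []
    for event in events:
        if event == "q":   # 按下 q 键:本帧结束后停止循环
            main = False
    frames += 1

print(frames)
```

在真正的游戏里,每一帧的末尾还会刷新屏幕并调用 `clock.tick(fps)`;这里省略了它们,只保留“用一个变量让循环持续”的思路。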

-也在你的循环中,刷新你世界的背景。

+也是在你的循环中,刷新你世界的背景。

如果你使用一个图片作为背景:

@@ -242,33 +242,33 @@ world.blit(backdrop, backdropbox)

world.fill(BLUE)

```

-最后,告诉 Pygame 来刷新在屏幕上的所有内容并推进游戏的内部时钟。

+最后,告诉 Pygame 来重新刷新屏幕上的所有内容,并推进游戏的内部时钟。

```

pygame.display.flip()

clock.tick(fps)

```

-保存你的文件,再次运行它来查看曾经创建的最无趣的游戏。

+保存你的文件,再次运行它来查看你曾经创建的最无趣的游戏。

退出游戏,在你的键盘上按 `q` 键。

-在这系列的 [下一篇文章][9] 中,我将向你演示,如何加强你当前空的游戏世界,所以,继续学习并创建一些将要使用的图形!

+在这系列的 [下一篇文章][9] 中,我将向你演示,如何加强你当前空空如也的游戏世界,所以,继续学习并创建一些将要使用的图形!

--------------------------------------------------------------------------------

-通过: https://opensource.com/article/17/12/game-framework-python

+via: https://opensource.com/article/17/12/game-framework-python

作者:[Seth Kenlon][a]

选题:[lujun9972][b]

译者:[robsean](https://github.com/robsean)

-校对:[校对者ID](https://github.com/校对者ID)

+校对:[wxy](https://github.com/wxy)

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

[a]: https://opensource.com/users/seth

[b]: https://github.com/lujun9972

-[1]: https://opensource.com/article/17/10/python-101

+[1]: https://linux.cn/article-9071-1.html

[2]: http://www.pygame.org/wiki/about

[3]: https://en.wikipedia.org/wiki/IDLE

[4]: http://ninja-ide.org/

diff --git a/published/201905/20171215 How to add a player to your Python game.md b/published/201905/20171215 How to add a player to your Python game.md

new file mode 100644

index 0000000000..b313a8219e

--- /dev/null

+++ b/published/201905/20171215 How to add a player to your Python game.md

@@ -0,0 +1,163 @@

+[#]: collector: (lujun9972)

+[#]: translator: (cycoe)

+[#]: reviewer: (wxy)

+[#]: publisher: (wxy)

+[#]: url: (https://linux.cn/article-10858-1.html)

+[#]: subject: (How to add a player to your Python game)

+[#]: via: (https://opensource.com/article/17/12/game-python-add-a-player)

+[#]: author: (Seth Kenlon https://opensource.com/users/seth)

+

+如何在你的 Python 游戏中添加一个玩家

+======

+> 这是用 Python 从头开始构建游戏的系列文章的第三部分。

+

+

+

+在 [这个系列的第一篇文章][1] 中,我解释了如何使用 Python 创建一个简单的基于文本的骰子游戏。在第二部分中,我向你们展示了如何从头开始构建游戏,即从 [创建游戏的环境][2] 开始。但是每个游戏都需要一名玩家,并且每个玩家都需要一个可操控的角色,这也就是我们接下来要在这个系列的第三部分中需要做的。

+

+在 Pygame 中,玩家操控的图标或者化身被称作妖精(sprite)。如果你现在还没有任何可用于玩家妖精的图像,你可以使用 [Krita][3] 或 [Inkscape][4] 来自己创建一些图像。如果你对自己的艺术细胞缺乏自信,你也可以在 [OpenClipArt.org][5] 或 [OpenGameArt.org][6] 搜索一些现成的图像。如果你还未按照上一篇文章所说的单独创建一个 `images` 文件夹,那么你需要在你的 Python 项目目录中创建它。将你想要在游戏中使用的图片都放在 `images` 文件夹中。

+

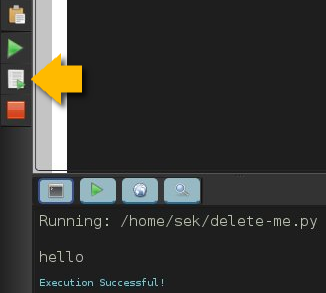

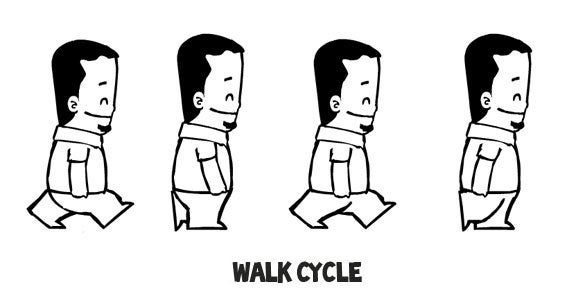

+为了使你的游戏真正的刺激,你应该为你的英雄使用一张动态的妖精图片。这意味着你需要绘制更多的素材,并且它们要大不相同。最常见的动画就是走路循环,通过一系列的图像让你的妖精看起来像是在走路。走路循环最快捷粗糙的版本需要四张图像。

+

+

+

+注意:这篇文章中的代码示例同时兼容静止的和动态的玩家妖精。

+

+将你的玩家妖精命名为 `hero.png`。如果你正在创建一个动态的妖精,则需要在名字后面加上一个数字,从 `hero1.png` 开始。

+

+### 创建一个 Python 类

+

+在 Python 中,当你在创建一个你想要显示在屏幕上的对象时,你需要创建一个类。

+

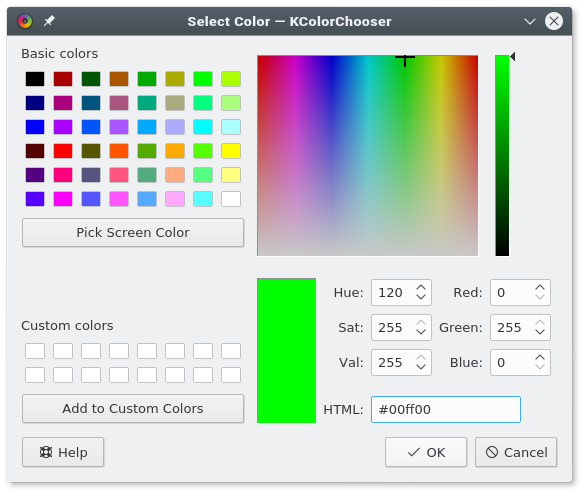

+在你的 Python 脚本靠近顶端的位置,加入如下代码来创建一个玩家。在以下的代码示例中,前三行已经在你正在处理的 Python 脚本中:

+

+```

+import pygame

+import sys

+import os # 以下是新代码

+

+class Player(pygame.sprite.Sprite):

+ '''

+ 生成一个玩家

+ '''

+ def __init__(self):

+ pygame.sprite.Sprite.__init__(self)

+ self.images = []

+ img = pygame.image.load(os.path.join('images','hero.png')).convert()

+ self.images.append(img)

+ self.image = self.images[0]

+ self.rect = self.image.get_rect()

+```

+

+如果你的可操控角色拥有一个走路循环,在 `images` 文件夹中将对应图片保存为 `hero1.png` 到 `hero4.png` 的独立文件。

+

+使用一个循环来告诉 Python 遍历每个文件。

+

+```

+'''

+对象

+'''

+

+class Player(pygame.sprite.Sprite):

+ '''

+ 生成一个玩家

+ '''

+ def __init__(self):

+ pygame.sprite.Sprite.__init__(self)

+ self.images = []

+ for i in range(1,5):

+ img = pygame.image.load(os.path.join('images','hero' + str(i) + '.png')).convert()

+ self.images.append(img)

+ self.image = self.images[0]

+ self.rect = self.image.get_rect()

+```
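在让 Pygame 加载图片之前,可以先检查走路循环的四个文件是否都在。下面是一个只用标准库的示意,`frame_paths` 和 `missing` 是为演示而起的名字;它使用与上面循环相同的 `range(1,5)`。

```python
import os

# range(1, 5) 产生 1、2、3、4,对应 hero1.png 到 hero4.png
frame_paths = [os.path.join('images', 'hero' + str(i) + '.png') for i in range(1, 5)]

# 在调用 pygame.image.load() 之前,先找出缺失的文件
missing = [path for path in frame_paths if not os.path.isfile(path)]

print(frame_paths)
print(missing)
```

如果 `missing` 不为空,那么加载图片时很可能会报错,先补齐这些文件再运行游戏会省事一些。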

+

+### 将玩家带入游戏世界

+

+现在已经创建好了一个 Player 类,你需要使用它在你的游戏世界中生成一个玩家妖精。如果你不调用 Player 类,那它永远不会起作用,(游戏世界中)也就不会有玩家。你可以通过立马运行你的游戏来验证一下。游戏会像上一篇文章末尾看到的那样运行,并得到明确的结果:一个空荡荡的游戏世界。

+

+为了将一个玩家妖精带到你的游戏世界,你必须通过调用 Player 类来生成一个妖精,并将它加入到 Pygame 的妖精组中。在如下的代码示例中,前三行是已经存在的代码,你需要在其后添加代码:

+

+```

+world = pygame.display.set_mode([worldx,worldy])

+backdrop = pygame.image.load(os.path.join('images','stage.png')).convert()

+backdropbox = world.get_rect()

+

+# 以下是新代码

+

+player = Player() # 生成玩家

+player.rect.x = 0 # 移动 x 坐标

+player.rect.y = 0 # 移动 y 坐标

+player_list = pygame.sprite.Group()

+player_list.add(player)

+```

+

+尝试启动你的游戏来看看发生了什么。高能预警:它不会像你预期的那样工作,当你启动你的项目,玩家妖精没有出现。事实上它生成了,只不过只出现了一毫秒。你要如何修复一个只出现了一毫秒的东西呢?你可能回想起上一篇文章中,你需要在主循环中添加一些东西。为了使玩家的存在时间超过一毫秒,你需要告诉 Python 在每次循环中都绘制一次。

+

+将你的循环底部的语句更改如下:

+

+```

+ world.blit(backdrop, backdropbox)

+ player_list.draw(world) # 绘制玩家

+ pygame.display.flip()

+ clock.tick(fps)

+```

+

+现在启动你的游戏,你的玩家出现了!

+

+### 设置 alpha 通道

+

+根据你如何创建你的玩家妖精,在它周围可能会有一个色块。你所看到的是 alpha 通道应该占据的空间。它本来是不可见的“颜色”,但 Python 现在还不知道要使它不可见。那么你所看到的,是围绕在妖精周围的边界区(或现代游戏术语中的“命中区”)内的空间。

+

+

+

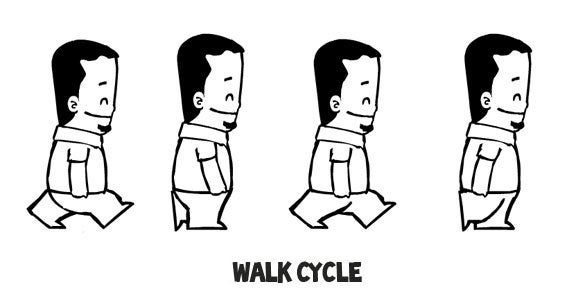

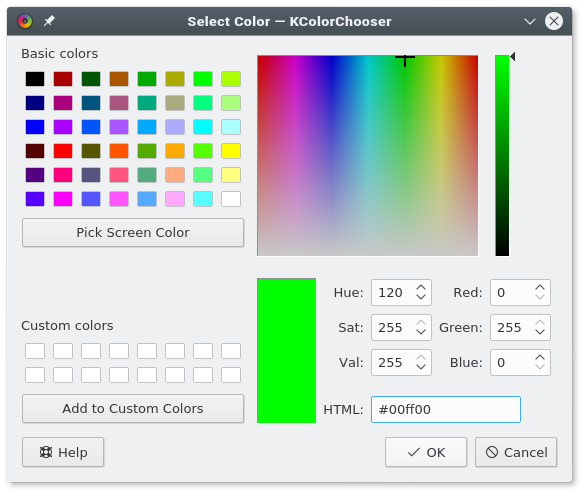

+你可以通过设置一个 alpha 通道和 RGB 值来告诉 Python 使哪种颜色不可见。如果你不知道你使用 alpha 通道的图像的 RGB 值,你可以使用 Krita 或 Inkscape 打开它,并使用一种独特的颜色,比如 `#00ff00`(差不多是“绿屏绿”)来填充图像周围的空白区域。记下颜色对应的十六进制值(此处为 `#00ff00`,绿屏绿)并将其作为 alpha 通道用于你的 Python 脚本。

+

+使用 alpha 通道需要在你的妖精生成相关代码中添加如下两行。类似第一行的代码已经存在于你的脚本中,你只需要添加另外两行:

+

+```

+ img = pygame.image.load(os.path.join('images','hero' + str(i) + '.png')).convert()

+ img.convert_alpha() # 优化 alpha

+ img.set_colorkey(ALPHA) # 设置 alpha

+```

+

+除非你告诉它,否则 Python 不知道将哪种颜色作为 alpha 通道。在你代码的设置相关区域,添加一些颜色定义。将如下的变量定义添加于你的设置相关区域的任意位置:

+

+```

+ALPHA = (0, 255, 0)

+```

+

+在以上示例代码中,`0,255,0` 被我们使用,它在 RGB 中所代表的值与 `#00ff00` 在十六进制中所代表的值相同。你可以通过一个优秀的图像应用程序,如 [GIMP][7]、Krita 或 Inkscape,来获取所有这些颜色值。或者,你可以使用一个优秀的系统级颜色选择器,如 [KColorChooser][8],来检测颜色。
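十六进制值和 RGB 元组之间的换算也可以交给 Python 来做。下面是一个示意,函数名 `hex_to_rgb` 是为演示而起的,并不是 Pygame 提供的函数。

```python
def hex_to_rgb(value: str) -> tuple:
    """把 '#rrggbb' 形式的十六进制颜色转换成 (r, g, b) 元组。"""
    value = value.lstrip('#')
    # 每两个十六进制字符对应一个 0 到 255 之间的颜色分量
    return tuple(int(value[i:i + 2], 16) for i in (0, 2, 4))

ALPHA = hex_to_rgb('#00ff00')
print(ALPHA)  # (0, 255, 0)
```

这样,在图像应用程序里记下的十六进制值就可以直接换算成 `ALPHA` 变量需要的形式。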

+

+

+

+如果你的图像应用程序将你的妖精背景渲染成了其他的值,你可以按需调整 `ALPHA` 变量的值。不论你将 alpha 设为多少,最后它都将“不可见”。RGB 颜色值是非常严格的,因此如果你需要将 alpha 设为 000,但你又想将 000 用于你图像中的黑线,你只需要将图像中线的颜色设为 111。这样一来,(图像中的黑线)就足够接近黑色,但除了电脑以外没有人能看出区别。
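“严格”的意思是颜色必须完全相等才会被当作透明。下面用一个假设的 2x2 像素网格来示意这种比较方式;它不调用 Pygame,只是演示精确匹配的逻辑,其中的数据和名字都是为演示而编的。

```python
ALPHA = (0, 0, 0)        # 被当作“不可见”的颜色
NEAR_BLACK = (1, 1, 1)   # 肉眼看是黑色,但与 ALPHA 不相等

# 一个假设的 2x2 “图像”,每个像素是一个 RGB 元组
pixels = [
    [ALPHA, NEAR_BLACK],
    [NEAR_BLACK, ALPHA],
]

# 只有与 ALPHA 完全相等的像素才被视为透明,其余像素保持可见
visible = [[pixel != ALPHA for pixel in row] for row in pixels]
print(visible)
```

这就是为什么把图像中的黑线改成 `(1,1,1)` 之后,它们不会随着背景一起消失。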

+

+运行你的游戏查看结果。

+

+

+

+在 [这个系列的第四篇文章][9] 中,我会向你们展示如何使你的妖精动起来。多么的激动人心啊!

+

+--------------------------------------------------------------------------------

+

+via: https://opensource.com/article/17/12/game-python-add-a-player

+

+作者:[Seth Kenlon][a]

+选题:[lujun9972][b]

+译者:[cycoe](https://github.com/cycoe)

+校对:[wxy](https://github.com/wxy)

+

+本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

+

+[a]: https://opensource.com/users/seth

+[b]: https://github.com/lujun9972

+[1]: https://linux.cn/article-9071-1.html

+[2]: https://linux.cn/article-10850-1.html

+[3]: http://krita.org

+[4]: http://inkscape.org

+[5]: http://openclipart.org

+[6]: https://opengameart.org/

+[7]: http://gimp.org

+[8]: https://github.com/KDE/kcolorchooser

+[9]: https://opensource.com/article/17/12/program-game-python-part-4-moving-your-sprite

diff --git a/published/201905/20180130 An introduction to the DomTerm terminal emulator for Linux.md b/published/201905/20180130 An introduction to the DomTerm terminal emulator for Linux.md

new file mode 100644

index 0000000000..13652f26ef

--- /dev/null

+++ b/published/201905/20180130 An introduction to the DomTerm terminal emulator for Linux.md

@@ -0,0 +1,130 @@

+DomTerm:一款为 Linux 打造的终端模拟器

+======

+> 了解一下 DomTerm,这是一款终端模拟器和复用器,带有 HTML 图形和其它不多见的功能。

+

+

+

+[DomTerm][1] 是一款现代化的终端模拟器,它使用浏览器引擎作为 “GUI 工具包”。这就支持了一些相关的特性,例如可嵌入图像和链接、HTML 富文本以及可折叠(显示/隐藏)命令。除此以外,它看起来感觉就像一个功能完整、独立的终端模拟器,有着出色 xterm 兼容性(包括鼠标处理和 24 位色)和恰当的 “装饰” (菜单)。另外它内置支持了会话管理和副窗口(如同 `tmux` 和 `GNU Screen` 中一样)、基本输入编辑(如在 `readline` 中)以及分页(如在 `less` 中)。

+

+

+

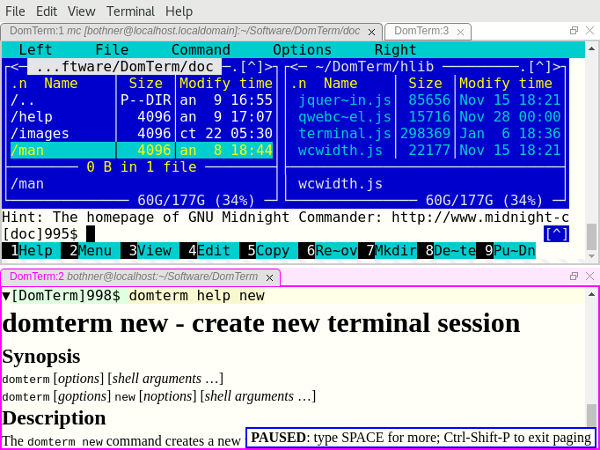

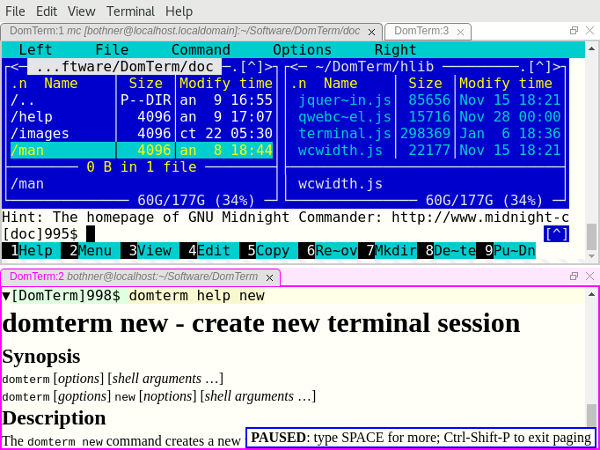

+*图 1:DomTerm 终端模拟器。*

+

+在以下部分我们将看一看这些特性。我们将假设你已经安装好了 `domterm`(如果你需要获取并构建 DomTerm 请跳到本文最后)。开始之前先让我们概览一下这项技术。

+

+### 前端 vs. 后端

+

+DomTerm 大部分是用 JavaScript 写的,它运行在一个浏览器引擎中。它可以是像例如 Chrome 或者 Firefox 一样的桌面浏览器(见图 3),也可以是一个内嵌的浏览器。使用一个通用的网页浏览器没有问题,但是用户体验却不够好(因为菜单是为通用的网页浏览而不是为了终端模拟器所打造),并且其安全模型也会妨碍使用。因此使用内嵌的浏览器更好一些。

+

+目前以下这些是支持的:

+

+ * qdomterm,使用了 Qt 工具包 和 QtWebEngine

+ * 一个内嵌的 [Electron][2](见图 1)

+ * atom-domterm 以 [Atom 文本编辑器][3](同样基于 Electron)包的形式运行 DomTerm,并和 Atom 面板系统集成在一起(见图 2)

+ * 一个为 JavaFX 的 WebEngine 包装器,这对 Java 编程十分有用(见图 4)

+ * 之前前端使用 [Firefox-XUL][4] 作为首选,但是 Mozilla 已经终止了 XUL

+

+![在 Atom 编辑器中的 DomTerm 终端面板][6]

+

+*图 2:在 Atom 编辑器中的 DomTerm 终端面板。*

+

+目前,Electron 前端可能是最佳选择,紧随其后的是 Qt 前端。如果你使用 Atom,atom-domterm 也工作得相当不错。

+

+后端服务器是用 C 写的。它管理着伪终端(PTY)和会话。它同样也是一个为前端提供 Javascript 和其它文件的 HTTP 服务器。`domterm` 命令启动终端任务和执行其它请求。如果没有服务器在运行,domterm 就会自己来服务。后端与服务器之间的通讯通常是用 WebSockets(在服务器端是[libwebsockets][8])完成的。然而,JavaFX 的嵌入既不用 Websockets 也不用 DomTerm 服务器。相反 Java 应用直接通过 Java-Javascript 桥接进行通讯。

+

+### 一个稳健的可兼容 xterm 的终端模拟器

+

+DomTerm 看上去感觉像一个现代的终端模拟器。它处理鼠标事件、24 位色、Unicode、倍宽字符(CJK)以及输入方式。DomTerm 在 [vttest 测试套件][9] 上工作地十分出色。

+

+其不同寻常的特性包括:

+

+**展示/隐藏按钮(“折叠”):** 小三角(如上图 2)是隐藏/展示相应输出的按钮。仅需在[提示符][11]中添加特定的[转义字符][10]就可以创建按钮。

+

+**对于 readline 和类似输入编辑器的鼠标点击支持:** 如果你点击输入区域(黄色),DomTerm 会向应用发送正确的方向键按键序列。(可以通过提示符中的转义字符启用这一特性,你也可以通过 `Alt+点击` 强制使用。)

+

+**用 CSS 样式化终端:** 这通常是在 `~/.domterm/settings.ini` 里完成的,保存时会自动重载。例如在图 2 中,设置了终端专用的背景色。

+

+### 一个更好的 REPL 控制台

+

+一个经典的终端模拟器基于长方形的字符单元格工作的。这在 REPL(命令行)上没问题,但是并不理想。这里有些通常在终端模拟器中不常见的 REPL 很有用的 DomTerm 特性:

+

+**一个能“打印”图片、图形、数学公式或者一组可点击的链接的命令:** 应用可以发送包含几乎任何 HTML 的转义字符。(HTML 会被剔除部分,以移除 JavaScript 和其它危险特性。)

+

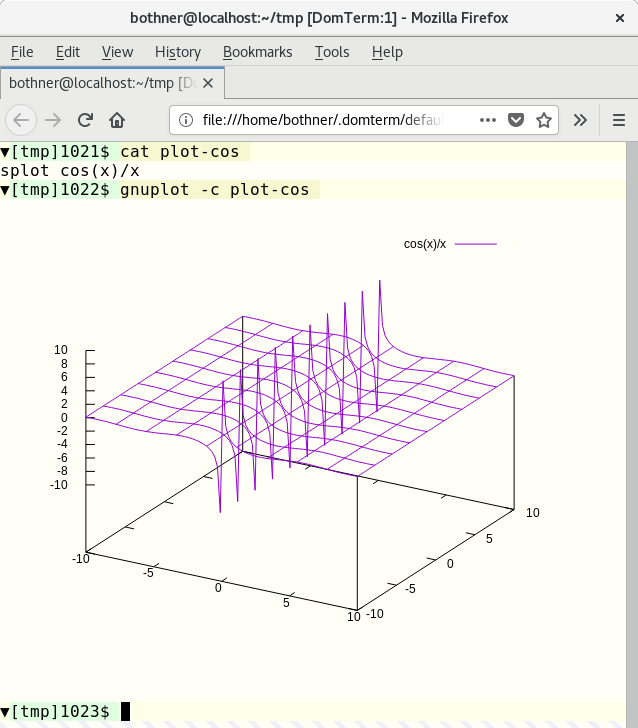

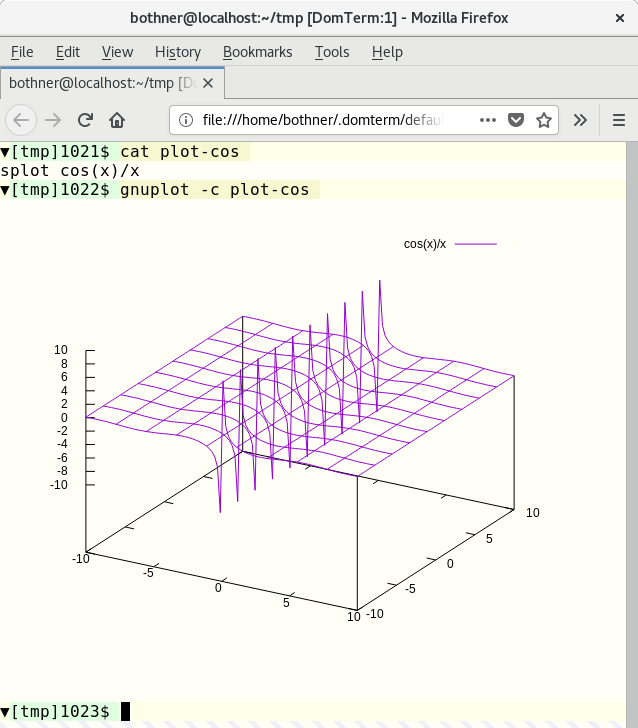

+图 3 显示了来自 [gnuplot][12] 会话的一个片段。Gnuplot(2.1 或者更高版本)支持 DomTerm 作为终端类型。图形输出被转换成 [SVG 图片][13],然后被打印到终端。我的博客帖子[在 DomTerm 上的 Gnuplot 展示][14]在这方面提供了更多信息。

+

+

+

+*图 3:Gnuplot 截图。*

+

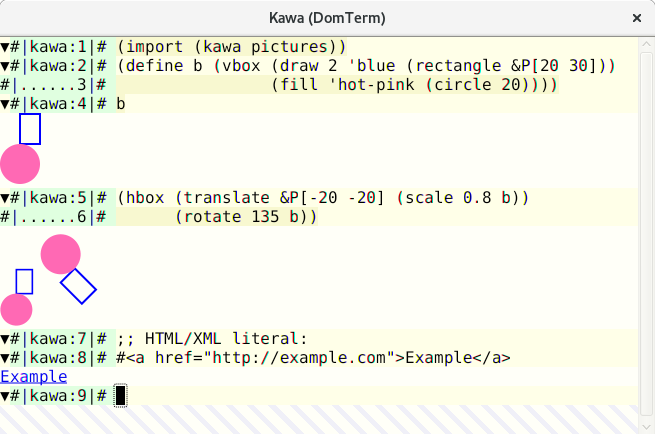

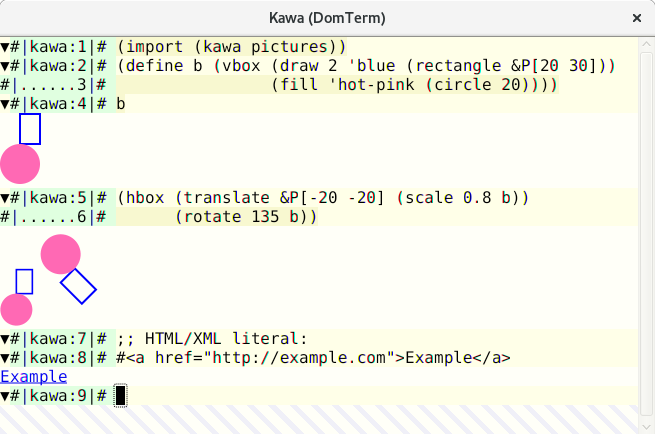

+[Kawa][15] 语言有一个创建并转换[几何图像值][16]的库。如果你将这样的图片值打印到 DomTerm 终端,图片就会被转换成 SVG 形式并嵌入进输出中。

+

+

+

+*图 4:Kawa 中可计算的几何形状。*

+

+**富文本输出:** 有着 HTML 样式的帮助信息更加便于阅读,看上去也更漂亮。图 1 下方的面板展示了 `domterm help` 的输出。(如果没在 DomTerm 下运行的话输出的是普通文本。)注意自带的分页器中的 `PAUSED` 消息。

+

+**包括可点击链接的错误消息:** DomTerm 可以识别语法 `filename:line:column` 并将其转化成一个能在可定制文本编辑器中打开文件并定位到行的链接。(这适用于相对路径的文件名,如果你用 `PROMPT_COMMAND` 或类似的跟踪目录。)

+

+编译器可以侦测到它在 DomTerm 下运行,并直接用转义字符发出文件链接。这比依赖 DomTerm 的样式匹配要稳健得多,因为它可以处理空格和其他字符并且无需依赖目录追踪。在图 4 中,你可以看到来自 [Kawa Compiler][15] 的错误消息。悬停在文件位置上会使其出现下划线,`file:` URL 出现在 `atom-domterm` 消息栏(窗口底部)中。(当不用 atom-domterm 时,这样的消息会在一个浮层的框中显示,如图 1 中所看到的 `PAUSED` 消息所示。)

+

+点击链接时的动作是可以配置的。默认对于带有 `#position` 后缀的 `file:` 链接的动作是在文本编辑器中打开那个文件。

+

+**结构化内部表示:**以下内容均以内部节点结构表示:命令、提示符、输入行、正常和错误输出、标签,如果“另存为 HTML”,则保留结构。HTML 文件与 XML 兼容,因此你可以使用 XML 工具搜索或转换输出。命令 `domterm view-saved` 会以一种启用命令折叠(显示/隐藏按钮处于活动状态)和重新调整窗口大小的方式打开保存的 HTML 文件。

+

+**内建的 Lisp 样式优美打印:** 你可以在输出中包括优美打印指令(比如,grouping),这样断行会根据窗口大小调整而重新计算。查看我的文章 [DomTerm 中的动态优美打印][17]以更深入探讨。

+

+**基本的内建行编辑**,带着历史记录(像 GNU readline 一样): 这使用浏览器自带的编辑器,因此它有着优秀的鼠标和选择处理机制。你可以在正常字符模式(大多数输入的字符被直接送向进程)和行模式(通常的字符是直接插入的,而控制字符导致编辑操作,回车键会向进程发送被编辑行)之间转换。默认的是自动模式,根据 PTY 是在原始模式还是规范模式中,DomTerm 在字符模式与行模式间转换。

+

+**自带的分页器**(类似简化版的 `less`):键盘快捷键控制滚动。在“页模式”中,输出在每个新的屏幕(或者单独的行,如果你想一行行地向前移)后暂停;页模式对于用户输入简单智能,因此(如果你想的话)你无需阻碍交互式程序就可以运行它。

+

+### 多路复用和会话

+

+**标签和平铺:** 你不仅可以创建多个终端标签,也可以平铺它们。你可以使用鼠标或键盘快捷键来创建或者切换面板和标签。它们可以用鼠标重新排列并调整大小。这是通过 [GoldenLayout][18] JavaScript 库实现的。图 1 展示了一个有着两个面板的窗口。上面的有两个标签,一个运行 [Midnight Commander][20];底下的面板以 HTML 形式展示了 `domterm help` 输出。而在 Atom 中,我们使用其自带的可拖拽的面板和标签。你可以在图 2 中看到这个。

+

+**分离或重接会话:** 与 `tmux` 和 GNU `screen` 类似,DomTerm 支持会话安排。你甚至可以给同样的会话接上多个窗口或面板。这支持多用户会话分享和远程链接。(为了安全,同一个服务器的所有会话都需要能够读取 Unix 域接口和一个包含随机密钥的本地文件。当我们有了良好、安全的远程链接,这个限制将会有所放松。)

+

+**domterm 命令** 类似于 `tmux` 和 GNU `screen`,它有多个选项可以用于控制或者打开单个或多个会话的服务器。主要的差别在于,如果它没在 DomTerm 下运行,`domterm` 命令会创建一个新的顶层窗口,而不是在现有的终端中运行。

+

+与 `tmux` 和 `git` 类似,`domterm` 命令有许多子命令。一些子命令创建窗口或者会话。另一些(例如“打印”一张图片)仅在现有的 DomTerm 会话下起作用。

+

+命令 `domterm browse` 打开一个窗口或者面板以浏览一个指定的 URL,例如浏览文档的时候。

+

+### 获取并安装 DomTerm

+

+DomTerm 可以从其 [Github 仓库][21]获取。目前没有提前构建好的包,但是有[详细指导][22]。所有的前提条件在 Fedora 27 上都有,这使得其特别容易被搭建。

+

+--------------------------------------------------------------------------------

+

+via: https://opensource.com/article/18/1/introduction-domterm-terminal-emulator

+

+作者:[Per Bothner][a]

+译者:[tomjlw](https://github.com/tomjlw)

+校对:[wxy](https://github.com/wxy)

+

+本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

+

+[a]:https://opensource.com/users/perbothner

+[1]:http://domterm.org/

+[2]:https://electronjs.org/

+[3]:https://atom.io/

+[4]:https://en.wikipedia.org/wiki/XUL

+[5]:/file/385346

+[6]:https://opensource.com/sites/default/files/images/dt-atom1.png (DomTerm terminal panes in Atom editor)

+[7]:https://opensource.com/sites/default/files/images/dt-atom1.png

+[8]:https://libwebsockets.org/

+[9]:http://invisible-island.net/vttest/

+[10]:http://domterm.org/Wire-byte-protocol.html

+[11]:http://domterm.org/Shell-prompts.html

+[12]:http://www.gnuplot.info/

+[13]:https://developer.mozilla.org/en-US/docs/Web/SVG

+[14]:http://per.bothner.com/blog/2016/gnuplot-in-domterm/

+[15]:https://www.gnu.org/software/kawa/

+[16]:https://www.gnu.org/software/kawa/Composable-pictures.html

+[17]:http://per.bothner.com/blog/2017/dynamic-prettyprinting/

+[18]:https://golden-layout.com/

+[19]:https://opensource.com/sites/default/files/u128651/domterm1.png

+[20]:https://midnight-commander.org/

+[21]:https://github.com/PerBothner/DomTerm

+[22]:http://domterm.org/Downloading-and-building.html

+

diff --git a/published/201905/20180312 ddgr - A Command Line Tool To Search DuckDuckGo From The Terminal.md b/published/201905/20180312 ddgr - A Command Line Tool To Search DuckDuckGo From The Terminal.md

new file mode 100644

index 0000000000..3d8083d440

--- /dev/null

+++ b/published/201905/20180312 ddgr - A Command Line Tool To Search DuckDuckGo From The Terminal.md

@@ -0,0 +1,229 @@

+ddgr:一个从终端搜索 DuckDuckGo 的命令行工具

+======

+

+在 Linux 中,Bash 技巧非常棒,它使 Linux 中的一切成为可能。

+

+对于开发人员或系统管理员来说,它真的很管用,因为他们大部分时间都在使用终端。你知道他们为什么喜欢这种技巧吗?

+

+因为这些技巧可以提高他们的工作效率,也能使他们工作更快。

+

+### 什么是 ddgr

+

+[ddgr][1] 是一个命令行实用程序,用于从终端搜索 DuckDuckGo。如果设置了 `BROWSER` 环境变量,ddgr 可以在几个基于文本的浏览器中开箱即用。

+

+确保你的系统安装了任何一个基于文本的浏览器。你可能知道 [googler][2],它允许用户从 Linux 命令行进行 Google 搜索。

+

+它在命令行用户中非常受欢迎,他们期望对隐私敏感的 DuckDuckGo 也有类似的实用程序,这就是 `ddgr` 出现的原因。

+

+与 Web 界面不同,你可以指定每页要查看的搜索结果数。

+

+**建议阅读:**

+

+- [Googler – 从 Linux 命令行搜索 Google][2]

+- [Buku – Linux 中一个强大的命令行书签管理器][3]

+- [SoCLI – 从终端搜索和浏览 StackOverflow 的简单方法][4]

+- [RTV(Reddit 终端查看器)- 一个简单的 Reddit 终端查看器][5]

+

+### 什么是 DuckDuckGo

+

+DDG 即 DuckDuckGo。DuckDuckGo(DDG)是一个真正保护用户搜索和隐私的互联网搜索引擎。它没有过滤用户的个性化搜索结果,对于给定的搜索词,它会向所有用户显示相同的搜索结果。

+

+大多数用户更喜欢谷歌搜索引擎,但是如果你真的担心隐私,那么你可以放心地使用 DuckDuckGo。

+

+### ddgr 特性

+

+ * 快速且干净(没有广告、多余的 URL 或杂物参数),自定义颜色

+ * 旨在以最小的空间提供最高的可读性

+ * 指定每页显示的搜索结果数

+ * 可以在 omniprompt 中导航结果,在浏览器中打开 URL

+ * 用于 Bash、Zsh 和 Fish 的搜索和选项补完脚本

+ * 支持 DuckDuckGo Bang(带有自动补完)

+ * 直接在浏览器中打开第一个结果(如同 “I’m Feeling Ducky”)

+ * 不间断搜索:无需退出即可在 omniprompt 中触发新搜索

+ * 关键字支持(例如:filetype:mime、site:somesite.com)

+ * 按时间、指定区域搜索,禁用安全搜索

+ * 支持 HTTPS 代理,支持 Do Not Track,可选择禁用用户代理字符串

+ * 支持自定义 URL 处理程序脚本或命令行实用程序

+ * 全面的文档,man 页面有方便的使用示例

+ * 最小的依赖关系

+

+### 需要条件

+

+`ddgr` 需要 Python 3.4 或更高版本。因此,请确保你的系统已安装 Python 3.4 或更高版本。

+

+```

+$ python3 --version

+Python 3.6.3

+```

+

+### 如何在 Linux 中安装 ddgr

+

+我们可以根据发行版使用以下命令轻松安装 `ddgr`。

+

+对于 Fedora ,使用 [DNF 命令][6]来安装 `ddgr`。

+

+```

+# dnf install ddgr

+```

+

+或者我们可以使用 [SNAP 命令][7]来安装 `ddgr`。

+

+```

+# snap install ddgr

+```

+

+对于 LinuxMint/Ubuntu,使用 [APT-GET 命令][8] 或 [APT 命令][9]来安装 `ddgr`。

+

+```

+$ sudo add-apt-repository ppa:twodopeshaggy/jarun

+$ sudo apt-get update

+$ sudo apt-get install ddgr

+```

+

+对于基于 Arch Linux 的系统,使用 [Yaourt 命令][10]或 [Packer 命令][11]从 AUR 仓库安装 `ddgr`。

+

+```

+$ yaourt -S ddgr

+或

+$ packer -S ddgr

+```

+

+对于 Debian,使用 [DPKG 命令][12] 安装 `ddgr`。

+

+```

+# wget https://github.com/jarun/ddgr/releases/download/v1.2/ddgr_1.2-1_debian9.amd64.deb

+# dpkg -i ddgr_1.2-1_debian9.amd64.deb

+```

+

+对于 CentOS 7,使用 [YUM 命令][13]来安装 `ddgr`。

+

+```

+# yum install https://github.com/jarun/ddgr/releases/download/v1.2/ddgr-1.2-1.el7.3.centos.x86_64.rpm

+```

+

+对于 opensuse,使用 [zypper 命令][14]来安装 `ddgr`。

+

+```

+# zypper install https://github.com/jarun/ddgr/releases/download/v1.2/ddgr-1.2-1.opensuse42.3.x86_64.rpm

+```

+

+### 如何启动 ddgr

+

+在终端上输入 `ddgr` 命令,不带任何选项来进行 DuckDuckGo 搜索。你将获得类似于下面的输出。

+

+```

+$ ddgr

+```

+

+![][16]

+

+### 如何使用 ddgr 进行搜索

+

+我们可以通过两种方式启动搜索。从 omniprompt 或者直接从终端开始。你可以搜索任何你想要的短语。

+

+直接从终端:

+

+```

+$ ddgr 2daygeek

+```

+

+![][17]

+

+从 omniprompt:

+

+![][18]

+

+### Omniprompt 快捷方式

+

+输入 `?` 以获得 omniprompt,它将显示关键字列表和进一步使用 `ddgr` 的快捷方式。

+

+![][19]

+

+### 如何移动下一页、上一页和第一页

+

+它允许用户移动下一页、上一页或第一页。

+

+ * `n`: 移动到下一组搜索结果

+ * `p`: 移动到上一组搜索结果

+ * `f`: 跳转到第一页

+

+![][20]

+

+### 如何启动新搜索

+

+`d` 选项允许用户从 omniprompt 发起新的搜索。例如,我搜索了 “2daygeek website”,现在我将搜索 “Magesh Maruthamuthu” 这个新短语。

+

+从 omniprompt:

+

+```

+ddgr (? for help) d magesh maruthmuthu

+```

+

+![][21]

+

+### 在搜索结果中显示完整的 URL

+

+默认情况下,它仅显示文章标题,在搜索中添加 `-x` 选项以在搜索结果中显示完整的文章网址。

+

+```

+$ ddgr -n 5 -x 2daygeek

+```

+

+![][22]

+

+### 限制搜索结果

+

+默认情况下,搜索结果每页显示 10 个结果。如果你想为方便起见限制每页的结果数,可以给 `ddgr` 加上 `--num` 或 `-n` 参数。

+

+```

+$ ddgr -n 5 2daygeek

+```

+

+![][23]

+

+### 网站特定搜索

+

+要搜索特定网站的特定页面,使用以下格式。这将从网站获取给定关键字的结果。例如,我们在 2daygeek 网站下搜索 “Package Manager”,查看结果。

+

+```

+$ ddgr -n 5 --site 2daygeek "package manager"

+```

+

+![][24]

+

+--------------------------------------------------------------------------------

+

+via: https://www.2daygeek.com/ddgr-duckduckgo-search-from-the-command-line-in-linux/

+

+作者:[Magesh Maruthamuthu][a]

+译者:[MjSeven](https://github.com/MjSeven)

+校对:[wxy](https://github.com/wxy)

+选题:[lujun9972](https://github.com/lujun9972)

+

+本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

+

+[a]:https://www.2daygeek.com/author/magesh/

+[1]:https://github.com/jarun/ddgr

+[2]:https://www.2daygeek.com/googler-google-search-from-the-command-line-on-linux/

+[3]:https://www.2daygeek.com/buku-command-line-bookmark-manager-linux/

+[4]:https://www.2daygeek.com/socli-search-and-browse-stack-overflow-from-linux-terminal/

+[5]:https://www.2daygeek.com/rtv-reddit-terminal-viewer-a-simple-terminal-viewer-for-reddit/

+[6]:https://www.2daygeek.com/dnf-command-examples-manage-packages-fedora-system/

+[7]:https://www.2daygeek.com/snap-command-examples/

+[8]:https://www.2daygeek.com/apt-get-apt-cache-command-examples-manage-packages-debian-ubuntu-systems/

+[9]:https://www.2daygeek.com/apt-command-examples-manage-packages-debian-ubuntu-systems/

+[10]:https://www.2daygeek.com/install-yaourt-aur-helper-on-arch-linux/

+[11]:https://www.2daygeek.com/install-packer-aur-helper-on-arch-linux/

+[12]:https://www.2daygeek.com/dpkg-command-to-manage-packages-on-debian-ubuntu-linux-mint-systems/

+[13]:https://www.2daygeek.com/yum-command-examples-manage-packages-rhel-centos-systems/

+[14]:https://www.2daygeek.com/zypper-command-examples-manage-packages-opensuse-system/

+[15]:data:image/gif;base64,R0lGODlhAQABAIAAAAAAAP///yH5BAEAAAAALAAAAAABAAEAAAIBRAA7

+[16]:https://www.2daygeek.com/wp-content/uploads/2018/03/ddgr-duckduckgo-command-line-search-for-linux1.png

+[17]:https://www.2daygeek.com/wp-content/uploads/2018/03/ddgr-duckduckgo-command-line-search-for-linux-3.png

+[18]:https://www.2daygeek.com/wp-content/uploads/2018/03/ddgr-duckduckgo-command-line-search-for-linux-2.png

+[19]:https://www.2daygeek.com/wp-content/uploads/2018/03/ddgr-duckduckgo-command-line-search-for-linux-4.png

+[20]:https://www.2daygeek.com/wp-content/uploads/2018/03/ddgr-duckduckgo-command-line-search-for-linux-5a.png

+[21]:https://www.2daygeek.com/wp-content/uploads/2018/03/ddgr-duckduckgo-command-line-search-for-linux-6a.png

+[22]:https://www.2daygeek.com/wp-content/uploads/2018/03/ddgr-duckduckgo-command-line-search-for-linux-7a.png

+[23]:https://www.2daygeek.com/wp-content/uploads/2018/03/ddgr-duckduckgo-command-line-search-for-linux-8.png

+[24]:https://www.2daygeek.com/wp-content/uploads/2018/03/ddgr-duckduckgo-command-line-search-for-linux-9a.png

diff --git a/published/201905/20180429 The Easiest PDO Tutorial (Basics).md b/published/201905/20180429 The Easiest PDO Tutorial (Basics).md

new file mode 100644

index 0000000000..cadc526b0f

--- /dev/null

+++ b/published/201905/20180429 The Easiest PDO Tutorial (Basics).md

@@ -0,0 +1,155 @@

+PHP PDO 简单教程

+======

+

+

+

+大约 80% 的 Web 应用程序由 PHP 提供支持。类似地,SQL 也是如此。PHP 5.5 版本之前,我们有用于访问 MySQL 数据库的 mysql_ 命令,但由于安全性不足,它们最终被弃用。

+

+弃用这件事是发生在 2013 年的 PHP 5.5 上,我写这篇文章的时间是 2018 年,PHP 版本为 7.2。mysql_ 的弃用带来了访问数据库的两种主要方法:mysqli 和 PDO 库。

+

+虽然 mysqli 库是官方指定的,但由于 mysqli 只能支持 mysql 数据库,而 PDO 可以支持 12 种不同类型的数据库驱动程序,因此 PDO 获得了更多的赞誉。此外,PDO 还有其它一些特性,使其成为大多数开发人员的更好选择。你可以在下表中看到一些特性比较:

+

+|   | PDO | MySQLi |
+|---|---|---|
+| 数据库支持 | 12 种驱动 | 只有 MySQL |
+| 编程范式 | OOP | 过程 + OOP |
+| 预处理语句(客户端侧) | Yes | No |
+| 命名参数 | Yes | No |

+

+现在我想对于大多数开发人员来说,PDO 是首选的原因已经很清楚了。所以让我们深入研究它,并希望本文能尽量涵盖关于 PDO 你需要了解的内容。

+

+### 连接

+

+第一步是连接到数据库,由于 PDO 是完全面向对象的,所以我们将使用 PDO 类的实例。

+

+我们要做的第一件事是定义主机、数据库名称、用户名、密码和数据库字符集。

+

+```

+$host = 'localhost';

+$db = 'theitstuff';

+$user = 'root';

+$pass = 'root';

+$charset = 'utf8mb4';

+$dsn = "mysql:host=$host;dbname=$db;charset=$charset";

+$conn = new PDO($dsn, $user, $pass);

+```

+

+之后,正如你在上面的代码中看到的,我们创建了 DSN 变量,DSN 变量只是一个保存数据库信息的变量。对于一些在外部服务器上运行 MySQL 的人,你还可以通过提供一个 `port=$port_number` 来调整端口号。

+

+最后,你可以创建一个 PDO 类的实例,我使用了 `$conn` 变量,并提供了 `$dsn`、`$user`、`$pass` 参数。如果你遵循这些步骤,你现在应该有一个名为 `$conn` 的对象,它是 PDO 连接类的一个实例。现在是时候进入数据库并运行一些查询。

+

+### 一个简单的 SQL 查询

+

+现在让我们运行一个简单的 SQL 查询。

+

+```

+$tis = $conn->query('SELECT name, age FROM students');

+while ($row = $tis->fetch())

+{

+ echo $row['name']."\t";

+ echo $row['age'];

+ echo "\n";

+}

+```

+

+这是使用 PDO 运行查询的最简单形式。我们首先创建了一个名为 `tis`(TheITStuff 的缩写 )的变量,然后你可以看到我们使用了创建的 `$conn` 对象中的查询函数。

+

+然后我们运行一个 `while` 循环并创建了一个 `$row` 变量来从 `$tis` 对象中获取内容,最后通过调用列名来显示每一行。

+

+很简单,不是吗?现在让我们来看看预处理语句。

+

+### 预处理语句

+

+预处理语句是人们开始使用 PDO 的主要原因之一,因为它提供了可以阻止 SQL 注入的语句。

+

+有两种基本方法可供使用,你可以使用位置参数或命名参数。

+

+#### 位置参数

+

+让我们看一个使用位置参数的查询示例。

+

+```

+$tis = $conn->prepare("INSERT INTO STUDENTS(name, age) values(?, ?)");

+$tis->bindValue(1,'mike');

+$tis->bindValue(2,22);

+$tis->execute();

+```

+

+在上面的例子中,我们放置了两个问号,然后使用 `bindValue()` 函数将值映射到查询中。这些值绑定到语句问号中的位置。

+

+我还可以使用变量而不是直接提供值。使用 `bindParam()` 函数的相同例子如下:

+

+```

+$name='Rishabh'; $age=20;

+$tis = $conn->prepare("INSERT INTO STUDENTS(name, age) values(?, ?)");

+$tis->bindParam(1,$name);

+$tis->bindParam(2,$age);

+$tis->execute();

+```

+

+#### 命名参数

+

+命名参数也是预处理语句,它将值/变量映射到查询中的命名位置。由于没有位置绑定,因此在多次使用相同变量的查询中非常有效。

+

+```

+$name='Rishabh'; $age=20;

+$tis = $conn->prepare("INSERT INTO STUDENTS(name, age) values(:name, :age)");

+$tis->bindParam(':name', $name);

+$tis->bindParam(':age', $age);

+$tis->execute();

+```

+

+你可以注意到,唯一的变化是我使用 `:name` 和 `:age` 作为占位符,然后将变量映射到它们。冒号在参数之前使用,让 PDO 知道该位置是一个变量,这非常重要。

+

+你也可以类似地使用 `bindValue()` 来使用命名参数直接映射值。

+

+### 获取数据

+

+PDO 在获取数据时非常丰富,它实际上提供了许多格式来从数据库中获取数据。

+

+你可以使用 `PDO::FETCH_ASSOC` 来获取关联数组,`PDO::FETCH_NUM` 来获取数字数组,使用 `PDO::FETCH_OBJ` 来获取对象数组。

+

+```

+$tis = $conn->prepare("SELECT * FROM STUDENTS");

+$tis->execute();

+$result = $tis->fetchAll(PDO::FETCH_ASSOC);

+```

+

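+You can see that I used `fetchAll()` because I wanted all matching records. If you only need one row, you can simply use `fetch()`.

+

+Now that we have fetched the data, it is time to loop over it, which is very easy.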

+

+```

+foreach ($result as $lnu){

+ echo $lnu['name'];

+ echo $lnu['age']."\n";

+}

+```

+

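+You can see that, because I requested associative arrays, I access each field by name.

+

+You are not required to specify the fetch mode on every call: you can set it as a default when you define the `$conn` variable itself.

+

+All you need to do is create an `$options` array holding your default settings and pass that array when creating `$conn`.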

+

+```

+$options = [

+ PDO::ATTR_DEFAULT_FETCH_MODE => PDO::FETCH_ASSOC,

+];

+$conn = new PDO($dsn, $user, $pass, $options);

+```

+

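+This was a very short and quick introduction to PDO; we will put together an advanced tutorial soon. If you had trouble understanding any part of this tutorial, let me know in the comments and I will answer you there.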

+

+--------------------------------------------------------------------------------

+

+via: http://www.theitstuff.com/easiest-pdo-tutorial-basics

+

+Author: [Rishabh Kandari][a]

+Topic selection: [lujun9972](https://github.com/lujun9972)

+Translator: [MjSeven](https://github.com/MjSeven)

+Proofreader: [wxy](https://github.com/wxy)

+

+This article was originally compiled by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](https://linux.cn/)

+

+[a]:http://www.theitstuff.com/author/reevkandari

diff --git a/translated/tech/20180518 What-s a hero without a villain- How to add one to your Python game.md b/published/201905/20180518 What-s a hero without a villain- How to add one to your Python game.md

similarity index 83%

rename from translated/tech/20180518 What-s a hero without a villain- How to add one to your Python game.md

rename to published/201905/20180518 What-s a hero without a villain- How to add one to your Python game.md

index a4a2138136..c4bb5c84f0 100644

--- a/translated/tech/20180518 What-s a hero without a villain- How to add one to your Python game.md

+++ b/published/201905/20180518 What-s a hero without a villain- How to add one to your Python game.md

@@ -1,21 +1,22 @@

-What's a hero without a villain? How to add one to your Python game

+How to add an enemy to your Python game

======

+

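+> In part five of this series, learn how to add a bad guy for your good guy to fight.

+

In the previous articles in this series (see [part 1][1], [part 2][2], [part 3][3] and [part 4][4]), you learned how to use Pygame and Python to spawn a playable character in an as-yet empty video game world. But what's a hero without a villain?

It would be a pretty boring game if you had no enemies. So in this article, you'll add an enemy to your game and build a framework for creating levels.

-It might seem strange to jump ahead to enemies when there is still plenty left to do before the player sprite is fully functional. But you have learned a lot already, and creating a villain is very similar to creating a player sprite. So relax, use the knowledge you already have, and see what it takes to stir up some trouble.

+It might seem strange to implement an enemy before the player sprite is fully functional. But you have learned a lot already, and creating a villain is very similar to creating a player sprite. So relax, use the knowledge you already have, and see what it takes to stir up some trouble.

For this exercise, you can download some pre-built assets from [Open Game Art][5]. Here are some of the assets I use:

-

-+ Inca tileset (Translator's note: the tile textures used in the game)

++ Inca tileset (LCTT translator's note: the tile textures used in the game)

+ Some invaders

+ Sprites, characters, objects, and effects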

-

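### Creating the enemy sprite

Yes, whether you realize it or not, you basically already know how to implement enemies. The process is very similar to creating a player sprite: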

@@ -24,40 +25,27 @@

2. Create an `update` method so the enemy can detect collisions

3. Create a `move` method so the enemy can roam around

-

-

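-Start with the class. Conceptually, it's mostly the same as your Player class. You set an image or a series of images, and you set the sprite's starting position.

+Start with the class. Conceptually, it's mostly the same as your `Player` class. You set an image or a series of images, and you set the sprite's starting position.

Before continuing, make sure you have a graphic for your enemy, even if it's just a temporary one. Place the graphic in your game project's `images` directory (the same directory where you placed your player image).

A game looks a lot better if everything alive is animated. Animating an enemy sprite is done the same way as animating a player sprite, but for now, keep it simple and use a non-animated sprite.

At the top of the `objects` section of your code, create a class called `Enemy` with this code: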

+

```

class Enemy(pygame.sprite.Sprite):
-
    '''
-
    Spawn an enemy
-
    '''
-
    def __init__(self,x,y,img):
-
        pygame.sprite.Sprite.__init__(self)
-
        self.image = pygame.image.load(os.path.join('images',img))
-
        self.image.convert_alpha()
-
        self.image.set_colorkey(ALPHA)
-
        self.rect = self.image.get_rect()
-
        self.rect.x = x
-
        self.rect.y = y

-

```

If you want to animate your enemy, do it the [same way][4] you animated your player.

@@ -67,25 +55,21 @@ class Enemy(pygame.sprite.Sprite):

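You can spawn more than one enemy by telling the class which image to use for the sprite and where in the world it should appear. This means you can use the same enemy class to generate any number of enemy sprites anywhere in the game world. All you have to do is call the class and tell it which image to use, along with the X and Y coordinates of the desired spawn point.

Again, this is similar in principle to spawning a player sprite. In the `setup` section of your script, add this code: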

+

```

enemy = Enemy(20,200,'yeti.png') # spawn the enemy

-

enemy_list = pygame.sprite.Group() # create the enemy group

-

enemy_list.add(enemy) # add the enemy to the group

-

```

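-In the sample code, the X coordinate is 20 and the Y coordinate is 200. You might need to adjust these numbers depending on how big your enemy sprite is, but try to get it to spawn somewhere your player sprite can reach it. `Yeti.png` is the image used for the enemy.

+In the sample code, the X coordinate is 20 and the Y coordinate is 200. You might need to adjust these numbers depending on how big your enemy sprite is, but try to get it to spawn within a range where your player sprite can touch it. `yeti.png` is the image used for the enemy.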
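
As a rough sketch of the idea above, the same class can be instantiated once per spawn point. The version below deliberately avoids Pygame so it runs anywhere: `Enemy` here is a simplified stand-in for the tutorial's sprite class, a plain list stands in for `pygame.sprite.Group`, and `spawn_enemies` and the coordinates are hypothetical names and values chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Enemy:
    """Simplified stand-in for the tutorial's Pygame-based Enemy sprite."""
    x: int
    y: int
    img: str


def spawn_enemies(spawn_points, img='yeti.png'):
    """Create one Enemy per (x, y) pair; a list stands in for a sprite group."""
    return [Enemy(x, y, img) for (x, y) in spawn_points]


# Hypothetical spawn points; adjust them to the size of your own world.
enemy_list = spawn_enemies([(20, 200), (300, 200), (500, 80)])
for enemy in enemy_list:
    print(enemy.img, enemy.x, enemy.y)
```

In the real game, each entry would be an `Enemy` sprite added to the enemy group with `enemy_list.add(...)`, as in the setup code above.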

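Next, draw all enemies in the enemy group to the screen. Right now you have only one enemy, but you can add more later if you want. Once you add an enemy to the enemy group, it is drawn to the screen during the main loop. The middle line is the new line you need to add: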

+

```

player_list.draw(world)

-

enemy_list.draw(world) # refresh the enemies

-

pygame.display.flip()

-

```

Launch your game, and your enemy appears in the game world at the X and Y coordinates you chose.

@@ -96,42 +80,31 @@ enemy_list.add(enemy) # 将敌人加入敌人组

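Think about what a "level" is. How do you know you are at a certain level in a game?

-You can think of a level as a collection of items. In a platformer, such as the one you are building here, a level consists of a specific arrangement of platforms, placement of enemies, swag, and so on. You can build a class that builds a level around your player. Eventually, when you have created over one level, you can use this class to generate the next level when your player reaches a specific goal.

+You can think of a level as a collection of items. In a platformer, such as the one you are building here, a level consists of a specific arrangement of platforms, placement of enemies, loot, and so on. You can build a class that builds a level around your player. Eventually, when you have created more than one level, you can use this class to generate the next level when your player reaches a specific goal.

Move the code you wrote to create an enemy and its group into a new function that is called each time a new level is generated. It needs some modification so that each time you create a new level, you can create several enemies.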

+

```

class Level():
-
    def bad(lvl,eloc):
-
        enemy_list = pygame.sprite.Group() # create the enemy group first so every level returns one
-
        if lvl == 1:
-
            enemy = Enemy(eloc[0],eloc[1],'yeti.png') # spawn the enemy
-
            enemy_list.add(enemy) # add the enemy to the group
-
        if lvl == 2:
-
            print("Level " + str(lvl) )
-
-
        return enemy_list

-

```

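The `return` statement ensures that when you call the `Level.bad` method, you get back an `enemy_list` containing every enemy you defined.

Since you are now creating enemies as part of each level, your `setup` section needs to change, too. Instead of creating an enemy, you must define where the enemy will spawn and what level it belongs to.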

+

```

eloc = []

-

eloc = [200,20]

-

enemy_list = Level.bad( 1, eloc )

-

```

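Run the game again to confirm that your level is generating correctly. As usual, you should see your player, along with the enemy you added in this chapter.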
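
One detail worth checking in a level function like this is that the enemy group exists for every level number, so the `return` always has something to hand back. The following is a minimal, Pygame-free sketch of that structure: `Enemy` is a simplified stand-in for the tutorial's sprite class, and a plain list replaces `pygame.sprite.Group`; both substitutions are assumptions made so the example runs without a display.

```python
class Enemy:
    """Simplified stand-in for the tutorial's Pygame-based Enemy sprite."""

    def __init__(self, x, y, img):
        self.x = x
        self.y = y
        self.img = img


class Level:
    @staticmethod
    def bad(lvl, eloc):
        """Return the enemies for level `lvl`, spawned at eloc = [x, y]."""
        enemy_list = []  # stand-in for pygame.sprite.Group(); created for every level
        if lvl == 1:
            enemy_list.append(Enemy(eloc[0], eloc[1], 'yeti.png'))
        elif lvl == 2:
            print("Level " + str(lvl))  # placeholder, as in the tutorial
        return enemy_list


eloc = [200, 20]
enemy_list = Level.bad(1, eloc)
print(len(enemy_list))           # number of enemies defined for level 1
print(len(Level.bad(2, eloc)))   # level 2 has no enemies defined yet
```

Creating the container before the `if` branches means a level with no enemies simply returns an empty group instead of failing.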

@@ -140,31 +113,27 @@ enemy_list = Level.bad( 1, eloc )

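An enemy isn't much of an enemy if it has no effect on the player. It's common for enemies to cause damage when a player collides with them.

-Since you probably want to track the player's health, the collision check happens in the Player class rather than in the Enemy class. You can track the enemy's health, too, if you want. The logic and code are pretty much the same, but for now, just track the player's health.

+Since you probably want to track the player's health, the collision check happens in the `Player` class rather than in the `Enemy` class. You can track the enemy's health, too, if you want. The logic and code are pretty much the same, but for now, just track the player's health.

To track player health, you must first establish a variable for it. The first line in this code sample is for context, so add the second line to your Player class: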

+

```

self.frame = 0

-

self.health = 10

-

```

-In the `update` method of your Player class, add this code block:

+In the `update` method of your `Player` class, add this code block:

+

```

hit_list = pygame.sprite.spritecollide(self, enemy_list, False)
-
for enemy in hit_list:
-
    self.health -= 1
-
    print(self.health)

-

```

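This code sets up a collision check using the Pygame function `sprite.spritecollide` and stores the result in `hit_list`. Whenever the hitbox of its parent sprite (the player sprite, where the check is made) touches the hitbox of any sprite in `enemy_list`, that sprite is included in `hit_list`. The `for` loop then runs once per colliding enemy and deducts a point from the player's health each time.

-Once this code is in your Player class's `update` method, and `update` is called in your main loop, Pygame checks for a collision once every clock tick.

+Once this code is in your `Player` class's `update` method, and `update` is called in your main loop, Pygame checks for a collision once on every tick of the clock.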
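
To make the per-tick behavior concrete, here is a small sketch of the same logic without Pygame. `Box` is a hypothetical stand-in for a sprite's rectangle, `overlaps` plays the role that `sprite.spritecollide` plays in the real game, and the loop imitates three passes of a main loop; none of these names come from the tutorial's code.

```python
from dataclasses import dataclass


@dataclass
class Box:
    """Axis-aligned rectangle; a stand-in for a sprite's pygame.Rect."""
    x: int
    y: int
    w: int
    h: int

    def overlaps(self, other):
        # Two rectangles overlap only when they overlap on both axes.
        return (self.x < other.x + other.w and other.x < self.x + self.w and
                self.y < other.y + other.h and other.y < self.y + self.h)


class Player:
    def __init__(self, box):
        self.box = box
        self.health = 10

    def update(self, enemy_boxes):
        """Lose one health point for every enemy box touched on this tick."""
        hit_list = [e for e in enemy_boxes if self.box.overlaps(e)]
        for _enemy in hit_list:
            self.health -= 1
        return hit_list


player = Player(Box(0, 0, 32, 32))
enemies = [Box(16, 16, 32, 32), Box(200, 200, 32, 32)]

for tick in range(3):  # three iterations of a pretend main loop
    player.update(enemies)
print(player.health)
```

Because the check runs on every tick, health keeps dropping for as long as the player stays in contact with an enemy, which is why a game usually moves the player away or adds a brief period of invulnerability after a hit.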

### Moving the enemy

@@ -176,60 +145,41 @@ enemy_list = Level.bad( 1, eloc )