Merge branch 'master' of https://github.com/LCTT/TranslateProject

commit

6daf5f87a3

@ -1,18 +1,18 @@

|

||||

如何通过反向 SSH 隧道访问 NAT 后面的 Linux 服务器

|

||||

================================================================================

|

||||

你在家里运行着一台 Linux 服务器,访问它需要先经过 NAT 路由器或者限制性防火墙。现在你想不在家的时候用 SSH 登录到这台服务器。你如何才能做到呢?SSH 端口转发当然是一种选择。但是,如果你需要处理多个嵌套的 NAT 环境,端口转发可能会变得非常棘手。另外,在多种 ISP 特定条件下可能会受到干扰,例如阻塞转发端口的限制性 ISP 防火墙、或者在用户间共享 IPv4 地址的运营商级 NAT。

|

||||

你在家里运行着一台 Linux 服务器,它放在一个 NAT 路由器或者限制性防火墙后面。现在你想在外出时用 SSH 登录到这台服务器。你如何才能做到呢?SSH 端口转发当然是一种选择。但是,如果你需要处理多级嵌套的 NAT 环境,端口转发可能会变得非常棘手。另外,在多种 ISP 特定条件下可能会受到干扰,例如阻塞转发端口的限制性 ISP 防火墙、或者在用户间共享 IPv4 地址的运营商级 NAT。

|

||||

|

||||

### 什么是反向 SSH 隧道? ###

|

||||

|

||||

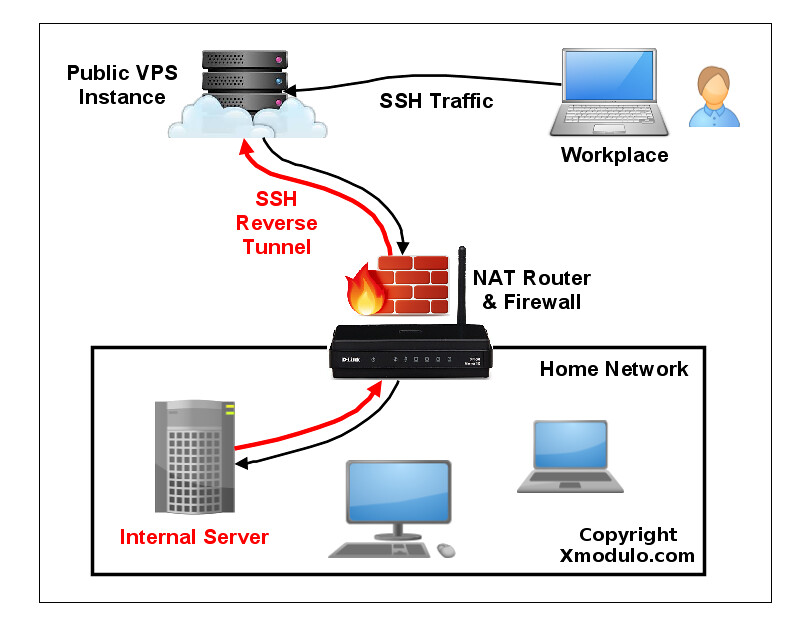

SSH 端口转发的一种替代方案是 **反向 SSH 隧道**。反向 SSH 隧道的概念非常简单。对于此,在限制性家庭网络之外你需要另一台主机(所谓的“中继主机”),你能从当前所在地通过 SSH 登录。你可以用有公共 IP 地址的 [VPS 实例][1] 配置一个中继主机。然后要做的就是从你家庭网络服务器中建立一个到公共中继主机的永久 SSH 隧道。有了这个隧道,你就可以从中继主机中连接“回”家庭服务器(这就是为什么称之为 “反向” 隧道)。不管你在哪里、你家庭网络中的 NAT 或 防火墙限制多么严重,只要你可以访问中继主机,你就可以连接到家庭服务器。

|

||||

SSH 端口转发的一种替代方案是 **反向 SSH 隧道**。反向 SSH 隧道的概念非常简单。使用这种方案,在你的受限的家庭网络之外你需要另一台主机(所谓的“中继主机”),你能从当前所在地通过 SSH 登录到它。你可以用有公网 IP 地址的 [VPS 实例][1] 配置一个中继主机。然后要做的就是从你的家庭网络服务器中建立一个到公网中继主机的永久 SSH 隧道。有了这个隧道,你就可以从中继主机中连接“回”家庭服务器(这就是为什么称之为 “反向” 隧道)。不管你在哪里、你的家庭网络中的 NAT 或 防火墙限制多么严格,只要你可以访问中继主机,你就可以连接到家庭服务器。

|

||||

|

||||

|

||||

|

||||

### 在 Linux 上设置反向 SSH 隧道 ###

|

||||

|

||||

让我们来看看怎样创建和使用反向 SSH 隧道。我们有如下假设。我们会设置一个从家庭服务器到中继服务器的反向 SSH 隧道,然后我们可以通过中继服务器从客户端计算机 SSH 登录到家庭服务器。**中继服务器** 的公共 IP 地址是 1.1.1.1。

|

||||

让我们来看看怎样创建和使用反向 SSH 隧道。我们做如下假设:我们会设置一个从家庭服务器(homeserver)到中继服务器(relayserver)的反向 SSH 隧道,然后我们可以通过中继服务器从客户端计算机(clientcomputer) SSH 登录到家庭服务器。本例中的**中继服务器** 的公网 IP 地址是 1.1.1.1。

|

||||

|

||||

在家庭主机上,按照以下方式打开一个到中继服务器的 SSH 连接。

|

||||

在家庭服务器上,按照以下方式打开一个到中继服务器的 SSH 连接。

|

||||

|

||||

homeserver~$ ssh -fN -R 10022:localhost:22 relayserver_user@1.1.1.1

|

||||

|

||||

@ -20,11 +20,11 @@ SSH 端口转发的一种替代方案是 **反向 SSH 隧道**。反向 SSH 隧

|

||||

|

||||

“-R 10022:localhost:22” 选项定义了一个反向隧道。它转发中继服务器 10022 端口的流量到家庭服务器的 22 号端口。

|

||||

|

||||

用 “-fN” 选项,当你用一个 SSH 服务器成功通过验证时 SSH 会进入后台运行。当你不想在远程 SSH 服务器执行任何命令、就像我们的例子中只想转发端口的时候非常有用。

|

||||

用 “-fN” 选项,当你成功通过 SSH 服务器验证时 SSH 会进入后台运行。当你不想在远程 SSH 服务器执行任何命令,就像我们的例子中只想转发端口的时候非常有用。

|

||||

|

||||

运行上面的命令之后,你就会回到家庭主机的命令行提示框中。
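
To make the anatomy of the `-R` argument explicit, here is a minimal shell sketch that assembles it from its three parts and rejects ports outside the valid range. The function name `reverse_spec` is hypothetical and not part of OpenSSH; it only prints the string you would hand to `ssh -R`.

```shell
# Hypothetical helper: build the "relay_port:target_host:target_port" string for ssh -R.
reverse_spec() {
    relay_port=$1    # port opened on the relay server (10022 in the example above)
    target_host=$2   # destination as seen from the home server (localhost)
    target_port=$3   # port of the home server's sshd (22)
    for p in "$relay_port" "$target_port"; do
        case $p in
            ''|*[!0-9]*) echo "invalid port: $p" >&2; return 1 ;;
        esac
        if [ "$p" -lt 1 ] || [ "$p" -gt 65535 ]; then
            echo "port out of range: $p" >&2
            return 1
        fi
    done
    printf '%s:%s:%s\n' "$relay_port" "$target_host" "$target_port"
}
```

With this helper, `ssh -fN -R "$(reverse_spec 10022 localhost 22)" relayserver_user@1.1.1.1` should be equivalent to the command shown earlier.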

|

||||

|

||||

登录到中继服务器,确认 127.0.0.1:10022 绑定到了 sshd。如果是的话就表示已经正确设置了反向隧道。

|

||||

登录到中继服务器,确认其 127.0.0.1:10022 绑定到了 sshd。如果是的话就表示已经正确设置了反向隧道。

|

||||

|

||||

relayserver~$ sudo netstat -nap | grep 10022

|

||||

|

||||

@ -36,13 +36,13 @@ SSH 端口转发的一种替代方案是 **反向 SSH 隧道**。反向 SSH 隧

|

||||

|

||||

relayserver~$ ssh -p 10022 homeserver_user@localhost

|

||||

|

||||

需要注意的一点是你在本地输入的 SSH 登录/密码应该是家庭服务器的,而不是中继服务器的,因为你是通过隧道的本地端点登录到家庭服务器。因此不要输入中继服务器的登录/密码。成功登陆后,你就在家庭服务器上了。

|

||||

需要注意的一点是你在上面为localhost输入的 SSH 登录/密码应该是家庭服务器的,而不是中继服务器的,因为你是通过隧道的本地端点登录到家庭服务器,因此不要错误输入中继服务器的登录/密码。成功登录后,你就在家庭服务器上了。

|

||||

|

||||

### 通过反向 SSH 隧道直接连接到网络地址变换后的服务器 ###

|

||||

|

||||

上面的方法允许你访问 NAT 后面的 **家庭服务器**,但你需要登录两次:首先登录到 **中继服务器**,然后再登录到**家庭服务器**。这是因为中继服务器上 SSH 隧道的端点绑定到了回环地址(127.0.0.1)。

|

||||

|

||||

事实上,有一种方法可以只需要登录到中继服务器就能直接访问网络地址变换之后的家庭服务器。要做到这点,你需要让中继服务器上的 sshd 不仅转发回环地址上的端口,还要转发外部主机的端口。这通过指定中继服务器上运行的 sshd 的 **网关端口** 实现。

|

||||

事实上,有一种方法可以只需要登录到中继服务器就能直接访问NAT之后的家庭服务器。要做到这点,你需要让中继服务器上的 sshd 不仅转发回环地址上的端口,还要转发外部主机的端口。这通过指定中继服务器上运行的 sshd 的 **GatewayPorts** 实现。

|

||||

|

||||

Open /etc/ssh/sshd_config on the **relay server** and add the following line.

|

||||

|

||||

@ -74,23 +74,23 @@ SSH 端口转发的一种替代方案是 **反向 SSH 隧道**。反向 SSH 隧

|

||||

|

||||

tcp 0 0 1.1.1.1:10022 0.0.0.0:* LISTEN 1538/sshd: dev

|

||||

|

||||

不像之前的情况,现在隧道的端点是 1.1.1.1:10022(中继服务器的公共 IP 地址),而不是 127.0.0.1:10022。这就意味着从外部主机可以访问隧道的端点。

|

||||

不像之前的情况,现在隧道的端点是 1.1.1.1:10022(中继服务器的公网 IP 地址),而不是 127.0.0.1:10022。这就意味着从外部主机可以访问隧道的另一端。
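
The difference between the two tunnel endpoints can also be checked with a script. The sketch below reads `netstat -nap`-style lines on standard input and reports whether a port is bound to the loopback address only or to an address that other hosts can reach. `tunnel_scope` is a hypothetical helper, and the field positions assume the column layout of the sample netstat output above.

```shell
# Hypothetical helper: classify how a listening port is bound.
# Reads netstat -nap style lines on stdin; $1 is the port to look for.
tunnel_scope() {
    port=$1
    awk -v port="$port" '
        $6 == "LISTEN" {
            n = split($4, parts, ":")            # $4 is the local address, e.g. 127.0.0.1:10022
            if (parts[n] != port) next           # last component is the port
            addr = substr($4, 1, length($4) - length(parts[n]) - 1)
            if (addr == "127.0.0.1" || addr == "::1")
                print "loopback-only"
            else
                print "externally-reachable (" addr ")"
            found = 1
        }
        END { if (!found) print "not-listening" }
    '
}
```

On the relay server this could be run as `sudo netstat -nap | tunnel_scope 10022`.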

|

||||

|

||||

现在在任何其它计算机(客户端计算机),输入以下命令访问网络地址变换之后的家庭服务器。

|

||||

|

||||

clientcomputer~$ ssh -p 10022 homeserver_user@1.1.1.1

|

||||

|

||||

在上面的命令中,1.1.1.1 是中继服务器的公共 IP 地址,家庭服务器用户必须是和家庭服务器相关联的用户账户。这是因为你真正登录到的主机是家庭服务器,而不是中继服务器。后者只是中继你的 SSH 流量到家庭服务器。

|

||||

在上面的命令中,1.1.1.1 是中继服务器的公共 IP 地址,homeserver_user必须是家庭服务器上的用户账户。这是因为你真正登录到的主机是家庭服务器,而不是中继服务器。后者只是中继你的 SSH 流量到家庭服务器。

|

||||

|

||||

### 在 Linux 上设置一个永久反向 SSH 隧道 ###

|

||||

|

||||

现在你已经明白了怎样创建一个反向 SSH 隧道,然后把隧道设置为 “永久”,这样隧道启动后就会一直运行(不管临时的网络拥塞、SSH 超时、中继主机重启,等等)。毕竟,如果隧道不是一直有效,你不可能可靠的登录到你的家庭服务器。

|

||||

现在你已经明白了怎样创建一个反向 SSH 隧道,然后把隧道设置为 “永久”,这样隧道启动后就会一直运行(不管临时的网络拥塞、SSH 超时、中继主机重启,等等)。毕竟,如果隧道不是一直有效,你就不能可靠的登录到你的家庭服务器。

|

||||

|

||||

对于永久隧道,我打算使用一个叫 autossh 的工具。正如名字暗示的,这个程序允许你不管任何理由自动重启 SSH 会话。因此对于保存一个反向 SSH 隧道有效非常有用。

|

||||

对于永久隧道,我打算使用一个叫 autossh 的工具。正如名字暗示的,这个程序可以让你的 SSH 会话无论因为什么原因中断都会自动重连。因此对于保持一个反向 SSH 隧道非常有用。

|

||||

|

||||

第一步,我们要设置从家庭服务器到中继服务器的[无密码 SSH 登录][2]。这样的话,autossh 可以不需要用户干预就能重启一个损坏的反向 SSH 隧道。

|

||||

|

||||

下一步,在初始化隧道的家庭服务器上[安装 autossh][3]。

|

||||

下一步,在建立隧道的家庭服务器上[安装 autossh][3]。

|

||||

|

||||

在家庭服务器上,用下面的参数运行 autossh 来创建一个连接到中继服务器的永久 SSH 隧道。

|

||||

|

||||

@ -113,7 +113,7 @@ SSH 端口转发的一种替代方案是 **反向 SSH 隧道**。反向 SSH 隧

|

||||

|

||||

### 总结 ###

|

||||

|

||||

在这篇博文中,我介绍了你如何能从外部中通过反向 SSH 隧道访问限制性防火墙或 NAT 网关之后的 Linux 服务器。尽管我介绍了家庭网络中的一个使用事例,在企业网络中使用时你尤其要小心。这样的一个隧道可能被视为违反公司政策,因为它绕过了企业的防火墙并把企业网络暴露给外部攻击。这很可能被误用或者滥用。因此在使用之前一定要记住它的作用。

|

||||

在这篇博文中,我介绍了你如何能从外部通过反向 SSH 隧道访问限制性防火墙或 NAT 网关之后的 Linux 服务器。这里我介绍了家庭网络中的一个使用事例,但在企业网络中使用时你尤其要小心。这样的一个隧道可能被视为违反公司政策,因为它绕过了企业的防火墙并把企业网络暴露给外部攻击。这很可能被误用或者滥用。因此在使用之前一定要记住它的作用。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

@ -121,11 +121,11 @@ via: http://xmodulo.com/access-linux-server-behind-nat-reverse-ssh-tunnel.html

|

||||

|

||||

作者:[Dan Nanni][a]

|

||||

译者:[ictlyh](https://github.com/ictlyh)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](http://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:http://xmodulo.com/author/nanni

|

||||

[1]:http://xmodulo.com/go/digitalocean

|

||||

[2]:http://xmodulo.com/how-to-enable-ssh-login-without.html

|

||||

[3]:http://ask.xmodulo.com/install-autossh-linux.html

|

||||

[2]:https://linux.cn/article-5444-1.html

|

||||

[3]:https://linux.cn/article-5459-1.html

|

||||

@ -1,53 +1,54 @@

|

||||

Autojump – 一个高级的‘cd’命令用以快速浏览 Linux 文件系统

|

||||

Autojump:一个可以在 Linux 文件系统快速导航的高级 cd 命令

|

||||

================================================================================

|

||||

对于那些主要通过控制台或终端使用 Linux 命令行来工作的 Linux 用户来说,他们真切地感受到了 Linux 的强大。 然而在 Linux 的分层文件系统中进行浏览有时或许是一件头疼的事,尤其是对于那些新手来说。

|

||||

|

||||

对于那些主要通过控制台或终端使用 Linux 命令行来工作的 Linux 用户来说,他们真切地感受到了 Linux 的强大。 然而在 Linux 的分层文件系统中进行导航有时或许是一件头疼的事,尤其是对于那些新手来说。

|

||||

|

||||

现在,有一个用 Python 写的名为 `autojump` 的 Linux 命令行实用程序,它是 Linux ‘[cd][1]’命令的高级版本。

|

||||

|

||||

|

||||

|

||||

Autojump – 浏览 Linux 文件系统的最快方式

|

||||

*Autojump – Linux 文件系统导航的最快方式*

|

||||

|

||||

这个应用原本由 Joël Schaerer 编写,现在由 +William Ting 维护。

|

||||

|

||||

Autojump 应用从用户那里学习并帮助用户在 Linux 命令行中进行更轻松的目录浏览。与传统的 `cd` 命令相比,autojump 能够更加快速地浏览至目的目录。

|

||||

Autojump 应用可以从用户那里学习并帮助用户在 Linux 命令行中进行更轻松的目录导航。与传统的 `cd` 命令相比,autojump 能够更加快速地导航至目的目录。

|

||||

|

||||

#### autojump 的特色 ####

|

||||

|

||||

- 免费且开源的应用,在 GPL V3 协议下发布。

|

||||

- 自主学习的应用,从用户的浏览习惯中学习。

|

||||

- 更快速地浏览。不必包含子目录的名称。

|

||||

- 对于大多数的标准 Linux 发行版本,能够在软件仓库中下载得到,它们包括 Debian (testing/unstable), Ubuntu, Mint, Arch, Gentoo, Slackware, CentOS, RedHat and Fedora。

|

||||

- 自由开源的应用,在 GPL V3 协议下发布。

|

||||

- 自主学习的应用,从用户的导航习惯中学习。

|

||||

- 更快速地导航。不必包含子目录的名称。

|

||||

- 对于大多数的标准 Linux 发行版本,能够在软件仓库中下载得到,它们包括 Debian (testing/unstable), Ubuntu, Mint, Arch, Gentoo, Slackware, CentOS, RedHat 和 Fedora。

|

||||

- 也能在其他平台中使用,例如 OS X(使用 Homebrew) 和 Windows (通过 Clink 来实现)

|

||||

- 使用 autojump 你可以跳至任何特定的目录或一个子目录。你还可以打开文件管理器来到达某个目录,并查看你在某个目录中所待时间的统计数据。

|

||||

- 使用 autojump 你可以跳至任何特定的目录或一个子目录。你还可以用文件管理器打开某个目录,并查看你在某个目录中所待时间的统计数据。

|

||||

|

||||

#### 前提 ####

|

||||

|

||||

- 版本号不低于 2.6 的 Python

|

||||

|

||||

### 第 1 步: 做一次全局系统升级 ###

|

||||

### 第 1 步: 做一次完整的系统升级 ###

|

||||

|

||||

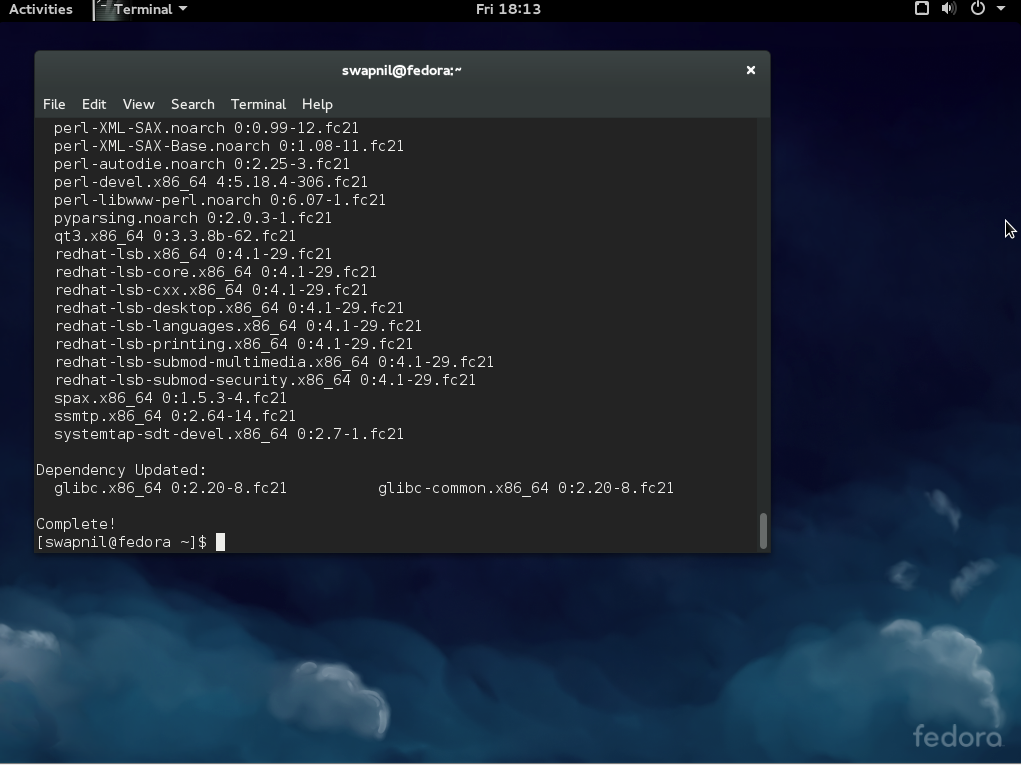

1. 以 **root** 用户的身份,做一次系统更新或升级,以此保证你安装有最新版本的 Python。

|

||||

1、 以 **root** 用户的身份,做一次系统更新或升级,以此保证你安装有最新版本的 Python。

|

||||

|

||||

# apt-get update && apt-get upgrade && apt-get dist-upgrade [APT based systems]

|

||||

# yum update && yum upgrade [YUM based systems]

|

||||

# dnf update && dnf upgrade [DNF based systems]

|

||||

# apt-get update && apt-get upgrade && apt-get dist-upgrade [基于 APT 的系统]

|

||||

# yum update && yum upgrade [基于 YUM 的系统]

|

||||

# dnf update && dnf upgrade [基于 DNF 的系统]

|

||||

|

||||

**注** : 这里特别提醒,在基于 YUM 或 DNF 的系统中,更新和升级执行相同的行动,大多数时间里它们是通用的,这点与基于 APT 的系统不同。

|

||||

|

||||

### 第 2 步: 下载和安装 Autojump ###

|

||||

|

||||

2. 正如前面所言,在大多数的 Linux 发行版本的软件仓库中, autojump 都可获取到。通过包管理器你就可以安装它。但若你想从源代码开始来安装它,你需要克隆源代码并执行 python 脚本,如下面所示:

|

||||

2、 正如前面所言,在大多数的 Linux 发行版本的软件仓库中, autojump 都可获取到。通过包管理器你就可以安装它。但若你想从源代码开始来安装它,你需要克隆源代码并执行 python 脚本,如下面所示:

|

||||

|

||||

#### 从源代码安装 ####

|

||||

|

||||

若没有安装 git,请安装它。我们需要使用它来克隆 git 仓库。

|

||||

|

||||

# apt-get install git [APT based systems]

|

||||

# yum install git [YUM based systems]

|

||||

# dnf install git [DNF based systems]

|

||||

# apt-get install git [基于 APT 的系统]

|

||||

# yum install git [基于 YUM 的系统]

|

||||

# dnf install git [基于 DNF 的系统]

|

||||

|

||||

一旦安装完 git,以常规用户身份登录,然后像下面那样来克隆 autojump:

|

||||

一旦安装完 git,以普通用户身份登录,然后像下面那样来克隆 autojump:

|

||||

|

||||

$ git clone https://github.com/joelthelion/autojump.git

|

||||

|

||||

@ -55,29 +56,29 @@ Autojump 应用从用户那里学习并帮助用户在 Linux 命令行中进行

|

||||

|

||||

$ cd autojump

|

||||

|

||||

下载,赋予脚本文件可执行权限,并以 root 用户身份来运行安装脚本。

|

||||

下载,赋予安装脚本文件可执行权限,并以 root 用户身份来运行安装脚本。

|

||||

|

||||

# chmod 755 install.py

|

||||

# ./install.py

|

||||

|

||||

#### 从软件仓库中安装 ####

|

||||

|

||||

3. 假如你不想麻烦,你可以以 **root** 用户身份从软件仓库中直接安装它:

|

||||

3、 假如你不想麻烦,你可以以 **root** 用户身份从软件仓库中直接安装它:

|

||||

|

||||

在 Debian, Ubuntu, Mint 及类似系统中安装 autojump :

|

||||

|

||||

# apt-get install autojump (注: 这里原文为 autojumo, 应该为 autojump)

|

||||

# apt-get install autojump

|

||||

|

||||

为了在 Fedora, CentOS, RedHat 及类似系统中安装 autojump, 你需要启用 [EPEL 软件仓库][2]。

|

||||

|

||||

# yum install epel-release

|

||||

# yum install autojump

|

||||

OR

|

||||

或

|

||||

# dnf install autojump

|

||||

|

||||

### 第 3 步: 安装后的配置 ###

|

||||

|

||||

4. 在 Debian 及其衍生系统 (Ubuntu, Mint,…) 中, 激活 autojump 应用是非常重要的。

|

||||

4、 在 Debian 及其衍生系统 (Ubuntu, Mint,…) 中, 激活 autojump 应用是非常重要的。

|

||||

|

||||

为了暂时激活 autojump 应用,即直到你关闭当前会话或打开一个新的会话之前让 autojump 均有效,你需要以常规用户身份运行下面的命令:

|

||||

|

||||

@ -89,7 +90,7 @@ Autojump 应用从用户那里学习并帮助用户在 Linux 命令行中进行

|

||||

|

||||

### 第 4 步: Autojump 的预测试和使用 ###

|

||||

|

||||

5. 如先前所言, autojump 将只跳到先前 `cd` 命令到过的目录。所以在我们开始测试之前,我们要使用 `cd` 切换到一些目录中去,并创建一些目录。下面是我所执行的命令。

|

||||

5、 如先前所言, autojump 将只跳到先前 `cd` 命令到过的目录。所以在我们开始测试之前,我们要使用 `cd` 切换到一些目录中去,并创建一些目录。下面是我所执行的命令。

|

||||

|

||||

$ cd

|

||||

$ cd

|

||||

@ -120,45 +121,45 @@ Autojump 应用从用户那里学习并帮助用户在 Linux 命令行中进行

|

||||

|

||||

现在,我们已经切换到过上面所列的目录,并为了测试创建了一些目录,一切准备就绪,让我们开始吧。

|

||||

|

||||

**需要记住的一点** : `j` 是 autojump 的一个包装,你可以使用 j 来代替 autojump, 相反亦可。

|

||||

**需要记住的一点** : `j` 是 autojump 的一个封装,你可以使用 j 来代替 autojump, 相反亦可。

|

||||

|

||||

6. 使用 -v 选项查看安装的 autojump 的版本。

|

||||

6、 使用 -v 选项查看安装的 autojump 的版本。

|

||||

|

||||

$ j -v

|

||||

or

|

||||

或

|

||||

$ autojump -v

|

||||

|

||||

|

||||

|

||||

查看 Autojump 的版本

|

||||

*查看 Autojump 的版本*

|

||||

|

||||

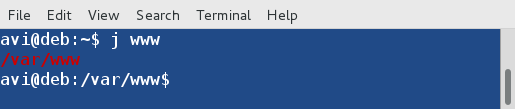

7. 跳到先前到过的目录 ‘/var/www‘。

|

||||

7、 跳到先前到过的目录 ‘/var/www‘。

|

||||

|

||||

$ j www

|

||||

|

||||

|

||||

|

||||

跳到目录

|

||||

*跳到目录*

|

||||

|

||||

8. 跳到先前到过的子目录‘/home/avi/autojump-test/b‘ 而不键入子目录的全名。

|

||||

8、 跳到先前到过的子目录‘/home/avi/autojump-test/b‘ 而不键入子目录的全名。

|

||||

|

||||

$ jc b

|

||||

|

||||

|

||||

|

||||

跳到子目录

|

||||

*跳到子目录*

|

||||

|

||||

9. 使用下面的命令,你就可以从命令行打开一个文件管理器,例如 GNOME Nautilus ,而不是跳到一个目录。

|

||||

9、 使用下面的命令,你就可以从命令行打开一个文件管理器,例如 GNOME Nautilus ,而不是跳到一个目录。

|

||||

|

||||

$ jo www

|

||||

|

||||

|

||||

|

||||

|

||||

跳到目录

|

||||

*打开目录*

|

||||

|

||||

|

||||

|

||||

在文件管理器中打开目录

|

||||

*在文件管理器中打开目录*

|

||||

|

||||

你也可以在一个文件管理器中打开一个子目录。

|

||||

|

||||

@ -166,19 +167,19 @@ Autojump 应用从用户那里学习并帮助用户在 Linux 命令行中进行

|

||||

|

||||

|

||||

|

||||

打开子目录

|

||||

*打开子目录*

|

||||

|

||||

|

||||

|

||||

在文件管理器中打开子目录

|

||||

*在文件管理器中打开子目录*

|

||||

|

||||

10. 查看每个文件夹的关键权重和在所有目录权重中的总关键权重的相关统计数据。文件夹的关键权重代表在这个文件夹中所花的总时间。 目录权重是列表中目录的数目。(注: 在这一句中,我觉得原文中的 if 应该为 is)

|

||||

10、 查看每个文件夹的权重和全部文件夹计算得出的总权重的统计数据。文件夹的权重代表在这个文件夹中所花的总时间。 文件夹权重是该列表中目录的数字。(LCTT 译注: 在这一句中,我觉得原文中的 if 应该为 is)

|

||||

|

||||

$ j --stat

|

||||

|

||||

|

||||

|

||||

|

||||

查看目录统计数据

|

||||

*查看文件夹统计数据*

|

||||

|

||||

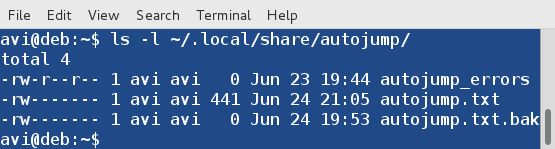

**提醒** : autojump 存储其运行日志和错误日志的地方是文件夹 `~/.local/share/autojump/`。千万不要重写这些文件,否则你将失去你所有的统计状态结果。
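
Because overwriting these files means losing the accumulated statistics, one cautious habit is to copy the directory before experimenting. This is a minimal sketch, assuming the default data location named above; the function name `backup_autojump_data` is made up for illustration and is not an autojump command.

```shell
# Hypothetical helper: copy the autojump data directory to a timestamped backup.
# $1 is optional and defaults to the location mentioned in the article.
backup_autojump_data() {
    data_dir=${1:-"$HOME/.local/share/autojump"}
    if [ ! -d "$data_dir" ]; then
        echo "no autojump data at $data_dir" >&2
        return 1
    fi
    backup_dir="${data_dir}.bak.$(date +%Y%m%d%H%M%S)"
    # cp -R creates backup_dir as a copy because it does not exist yet
    cp -R "$data_dir" "$backup_dir" && printf '%s\n' "$backup_dir"
}
```

Calling `backup_autojump_data` with no argument prints the path of the new copy, which can be moved back into place if the originals get damaged.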

|

||||

|

||||

@ -186,15 +187,15 @@ Autojump 应用从用户那里学习并帮助用户在 Linux 命令行中进行

|

||||

|

||||

|

||||

|

||||

Autojump 的日志

|

||||

*Autojump 的日志*

|

||||

|

||||

11. 假如需要,你只需运行下面的命令就可以查看帮助 :

|

||||

11、 假如需要,你只需运行下面的命令就可以查看帮助 :

|

||||

|

||||

$ j --help

|

||||

|

||||

|

||||

|

||||

Autojump 的帮助和选项

|

||||

*Autojump 的帮助和选项*

|

||||

|

||||

### 功能需求和已知的冲突 ###

|

||||

|

||||

@ -204,18 +205,19 @@ Autojump 的帮助和选项

|

||||

|

||||

### 结论: ###

|

||||

|

||||

假如你是一个命令行用户, autojump 是你必备的实用程序。它可以简化许多事情。它是一个在命令行中浏览 Linux 目录的绝佳的程序。请自行尝试它,并在下面的评论框中让我知晓你宝贵的反馈。保持联系,保持分享。喜爱并分享,帮助我们更好地传播。

|

||||

假如你是一个命令行用户, autojump 是你必备的实用程序。它可以简化许多事情。它是一个在命令行中导航 Linux 目录的绝佳的程序。请自行尝试它,并在下面的评论框中让我知晓你宝贵的反馈。保持联系,保持分享。喜爱并分享,帮助我们更好地传播。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.tecmint.com/autojump-a-quickest-way-to-navigate-linux-filesystem/

|

||||

|

||||

作者:[Avishek Kumar][a]

|

||||

译者:[FSSlc](https://github.com/FSSlc)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:http://www.tecmint.com/author/avishek/

|

||||

[1]:http://www.tecmint.com/cd-command-in-linux/

|

||||

[2]:http://www.tecmint.com/how-to-enable-epel-repository-for-rhel-centos-6-5/

|

||||

[2]:https://linux.cn/article-2324-1.html

|

||||

[3]:http://www.tecmint.com/manage-linux-filenames-with-special-characters/

|

||||

@ -1,6 +1,6 @@

|

||||

如何在Ubuntu 14.04/15.04上配置Chef(服务端/客户端)

|

||||

如何在 Ubuntu 上安装配置管理系统 Chef (大厨)

|

||||

================================================================================

|

||||

Chef是对于信息技术专业人员的一款配置管理和自动化工具,它可以配置和管理你的设备无论它在本地还是在云上。它可以用于加速应用部署并协调多个系统管理员和开发人员的工作,涉及到成百甚至上千的服务器和程序来支持大量的客户群。chef最有用的是让设备变成代码。一旦你掌握了Chef,你可以获得一流的网络IT支持来自动化管理你的云端设备或者终端用户。

|

||||

Chef是面对IT专业人员的一款配置管理和自动化工具,它可以配置和管理你的基础设施,无论它在本地还是在云上。它可以用于加速应用部署并协调多个系统管理员和开发人员的工作,这涉及到可支持大量的客户群的成百上千的服务器和程序。chef最有用的是让基础设施变成代码。一旦你掌握了Chef,你可以获得一流的网络IT支持来自动化管理你的云端基础设施或者终端用户。

|

||||

|

||||

下面是我们将要在本篇中要设置和配置Chef的主要组件。

|

||||

|

||||

@ -10,34 +10,13 @@ Chef是对于信息技术专业人员的一款配置管理和自动化工具,

|

||||

|

||||

我们将在下面的基础环境下设置Chef配置管理系统。

|

||||

|

||||

注:表格

|

||||

<table width="701" style="height: 284px;">

|

||||

<tbody>

|

||||

<tr>

|

||||

<td width="660" colspan="2"><strong>管理和配置工具:Chef</strong></td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td width="220"><strong>基础操作系统</strong></td>

|

||||

<td width="492">Ubuntu 14.04.1 LTS (x86_64)</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td width="220"><strong>Chef Server</strong></td>

|

||||

<td width="492">Version 12.1.0</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td width="220"><strong>Chef Manage</strong></td>

|

||||

<td width="492">Version 1.17.0</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td width="220"><strong>Chef Development Kit</strong></td>

|

||||

<td width="492">Version 0.6.2</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td width="220"><strong>内存和CPU</strong></td>

|

||||

<td width="492">4 GB , 2.0+2.0 GHZ</td>

|

||||

</tr>

|

||||

</tbody>

|

||||

</table>

|

||||

|管理和配置工具:Chef||

|

||||

|-------------------------------|---|

|

||||

|基础操作系统|Ubuntu 14.04.1 LTS (x86_64)|

|

||||

|Chef Server|Version 12.1.0|

|

||||

|Chef Manage|Version 1.17.0|

|

||||

|Chef Development Kit|Version 0.6.2|

|

||||

|内存和CPU|4 GB , 2.0+2.0 GHz|

|

||||

|

||||

### Chef服务端的安装和配置 ###

|

||||

|

||||

@ -45,15 +24,15 @@ Chef服务端是核心组件,它存储配置以及其他和工作站交互的

|

||||

|

||||

我使用下面的命令来下载和安装它。

|

||||

|

||||

**1) 下载Chef服务端**

|

||||

####1) 下载Chef服务端

|

||||

|

||||

root@ubuntu-14-chef:/tmp# wget https://web-dl.packagecloud.io/chef/stable/packages/ubuntu/trusty/chef-server-core_12.1.0-1_amd64.deb

|

||||

|

||||

**2) 安装Chef服务端**

|

||||

####2) 安装Chef服务端

|

||||

|

||||

root@ubuntu-14-chef:/tmp# dpkg -i chef-server-core_12.1.0-1_amd64.deb

|

||||

|

||||

**3) 重新配置Chef服务端**

|

||||

####3) 重新配置Chef服务端

|

||||

|

||||

现在运行下面的命令来启动所有的chef服务端服务,这步也许会花费一些时间,因为它有许多不同一起工作的服务组成来创建一个正常运作的系统。

|

||||

|

||||

@ -64,35 +43,35 @@ chef服务端启动命令'chef-server-ctl reconfigure'需要运行两次,这

|

||||

Chef Client finished, 342/350 resources updated in 113.71139964 seconds

|

||||

opscode Reconfigured!

|

||||

|

||||

**4) 重启系统 **

|

||||

####4) 重启系统

|

||||

|

||||

安装完成后重启系统使系统能最好的工作,不然我们或许会在创建用户的时候看到下面的SSL连接错误。

|

||||

|

||||

ERROR: Errno::ECONNRESET: Connection reset by peer - SSL_connect

|

||||

|

||||

**5) 创建心的管理员**

|

||||

####5) 创建新的管理员

|

||||

|

||||

运行下面的命令来创建一个新的用它自己的配置的管理员账户。创建过程中,用户的RSA私钥会自动生成并需要被保存到一个安全的地方。--file选项会保存RSA私钥到指定的路径下。

|

||||

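Run the following command to create a new administrator account together with its settings. The user's RSA private key is generated automatically during creation and must be stored in a safe place. The --filename option saves the RSA private key to the specified path.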

|

||||

|

||||

root@ubuntu-14-chef:/tmp# chef-server-ctl user-create kashi kashi kashi kashif.fareedi@gmail.com kashi123 --filename /root/kashi.pem
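
Since the private key written by `--filename` has to be kept safe, a reasonable follow-up is to make the file readable by its owner only. This step is an assumption added here, not part of the original walkthrough, and `protect_private_key` is a hypothetical helper name.

```shell
# Hypothetical helper: restrict a private key file to its owner and verify the result.
protect_private_key() {
    key=$1
    if [ ! -f "$key" ]; then
        echo "no such key file: $key" >&2
        return 1
    fi
    chmod 600 "$key" || return 1
    # The first ten characters of ls -l are the file type and permission bits.
    perms=$(ls -l "$key" | cut -c1-10)
    [ "$perms" = "-rw-------" ]
}
```

For the account created above, this would be called as `protect_private_key /root/kashi.pem`.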

|

||||

|

||||

### Chef服务端的管理设置 ###

|

||||

|

||||

Chef Manage是一个针对企业Chef用户的管理控制台,它启用了可视化的web用户界面并可以管理节点、数据包、规则、环境、配置和基于角色的访问控制(RBAC)

|

||||

Chef Manage是一个针对企业Chef用户的管理控制台,它提供了可视化的web用户界面,可以管理节点、数据包、规则、环境、Cookbook 和基于角色的访问控制(RBAC)

|

||||

|

||||

**1) 下载Chef Manage**

|

||||

####1) 下载Chef Manage

|

||||

|

||||

从官网复制链接病下载chef manage的安装包。

|

||||

从官网复制链接并下载chef manage的安装包。

|

||||

|

||||

root@ubuntu-14-chef:~# wget https://web-dl.packagecloud.io/chef/stable/packages/ubuntu/trusty/opscode-manage_1.17.0-1_amd64.deb

|

||||

|

||||

**2) 安装Chef Manage**

|

||||

####2) 安装Chef Manage

|

||||

|

||||

使用下面的命令在root的家目录下安装它。

|

||||

|

||||

root@ubuntu-14-chef:~# chef-server-ctl install opscode-manage --path /root

|

||||

|

||||

**3) 重启Chef Manage和服务端**

|

||||

####3) 重启Chef Manage和服务端

|

||||

|

||||

安装完成后我们需要运行下面的命令来重启chef manage和服务端。

|

||||

|

||||

@ -101,28 +80,27 @@ Chef Manage是一个针对企业Chef用户的管理控制台,它启用了可

|

||||

|

||||

### Chef Manage网页控制台 ###

|

||||

|

||||

我们可以使用localhost访问网页控制台以及fqdn,并用已经创建的管理员登录

|

||||

我们可以使用localhost或它的全称域名来访问网页控制台,并用已经创建的管理员登录

|

||||

|

||||

|

||||

|

||||

**1) Chef Manage创建新的组织 **

|

||||

####1) Chef Manage创建新的组织

|

||||

|

||||

你或许被要求创建新的组织或者接受其他阻止的邀请。如下所示,使用缩写和全名来创建一个新的组织。

|

||||

你或许被要求创建新的组织,或者也可以接受其他组织的邀请。如下所示,使用缩写和全名来创建一个新的组织。

|

||||

|

||||

|

||||

|

||||

**2) 用命令行创建心的组织 **

|

||||

####2) 用命令行创建新的组织

|

||||

|

||||

We can also create new Organization from the command line by executing the following command.

|

||||

我们同样也可以运行下面的命令来创建新的组织。

|

||||

|

||||

root@ubuntu-14-chef:~# chef-server-ctl org-create linux "Linoxide Linux Org." --association_user kashi --filename linux.pem

|

||||

|

||||

### 设置工作站 ###

|

||||

|

||||

我们已经完成安装chef服务端,现在我们可以开始创建任何recipes、cookbooks、属性和其他任何的我们想要对Chef的修改。

|

||||

我们已经完成安装chef服务端,现在我们可以开始创建任何recipes([基础配置元素](https://docs.chef.io/recipes.html))、cookbooks([基础配置集](https://docs.chef.io/cookbooks.html))、attributes([节点属性](https://docs.chef.io/attributes.html))和其他任何的我们想要对Chef做的修改。

|

||||

|

||||

**1) 在Chef服务端上创建新的用户和组织 **

|

||||

####1) 在Chef服务端上创建新的用户和组织

|

||||

|

||||

为了设置工作站,我们用命令行创建一个新的用户和组织。

|

||||

|

||||

@ -130,25 +108,23 @@ We can also create new Organization from the command line by executing the follo

|

||||

|

||||

root@ubuntu-14-chef:~# chef-server-ctl org-create blogs "Linoxide Blogs Inc." --association_user bloger --filename blogs.pem

|

||||

|

||||

**2) 下载工作站入门套件 **

|

||||

####2) 下载工作站入门套件

|

||||

|

||||

Now Download and Save starter-kit from the chef manage web console on a workstation and use it to work with Chef server.

|

||||

在工作站的网页控制台中下面并保存入门套件用于与服务端协同工作

|

||||

在工作站的网页控制台中下载保存入门套件,它用于与服务端协同工作

|

||||

|

||||

|

||||

|

||||

**3) 点击"Proceed"下载套件 **

|

||||

####3) 下载套件后,点击"Proceed"

|

||||

|

||||

|

||||

|

||||

### 对于工作站的Chef开发套件设置 ###

|

||||

### 用于工作站的Chef开发套件设置 ###

|

||||

|

||||

Chef开发套件是一款包含所有开发chef所需工具的软件包。它捆绑了由Chef开发的带Chef客户端的工具。

|

||||

Chef开发套件是一款包含开发chef所需的所有工具的软件包。它捆绑了由Chef开发的带Chef客户端的工具。

|

||||

|

||||

**1) 下载 Chef DK**

|

||||

####1) 下载 Chef DK

|

||||

|

||||

We can Download chef development kit from its official web link and choose the required operating system to get its chef development tool kit.

|

||||

我们可以从它的官网链接中下载开发包,并选择操作系统来得到chef开发包。

|

||||

我们可以从它的官网链接中下载开发包,并选择操作系统来下载chef开发包。

|

||||

|

||||

|

||||

|

||||

@ -156,13 +132,13 @@ We can Download chef development kit from its official web link and choose the r

|

||||

|

||||

root@ubuntu-15-WKS:~# wget https://opscode-omnibus-packages.s3.amazonaws.com/ubuntu/12.04/x86_64/chefdk_0.6.2-1_amd64.deb

|

||||

|

||||

**1) Chef开发套件安装**

|

||||

####2) Chef开发套件安装

|

||||

|

||||

使用dpkg命令安装开发套件

|

||||

|

||||

root@ubuntu-15-WKS:~# dpkg -i chefdk_0.6.2-1_amd64.deb

|

||||

|

||||

**3) Chef DK 验证**

|

||||

####3) Chef DK 验证

|

||||

|

||||

使用下面的命令验证客户端是否已经正确安装。

|

||||

|

||||

@ -195,7 +171,7 @@ We can Download chef development kit from its official web link and choose the r

|

||||

Verification of component 'chefspec' succeeded.

|

||||

Verification of component 'package installation' succeeded.

|

||||

|

||||

**连接Chef服务端**

|

||||

####4) 连接Chef服务端

|

||||

|

||||

我们将创建 ~/.chef并从chef服务端复制两个用户和组织的pem文件到chef的文件到这个目录下。

|

||||

|

||||

@ -209,7 +185,7 @@ We can Download chef development kit from its official web link and choose the r

|

||||

kashi.pem 100% 1678 1.6KB/s 00:00

|

||||

linux.pem 100% 1678 1.6KB/s 00:00

|

||||

|

||||

** 编辑配置来管理chef环境 **

|

||||

####5) 编辑配置来管理chef环境

|

||||

|

||||

现在使用下面的内容创建"~/.chef/knife.rb"。

|

||||

|

||||

@ -231,13 +207,13 @@ We can Download chef development kit from its official web link and choose the r

|

||||

|

||||

root@ubuntu-15-WKS:/# mkdir cookbooks

|

||||

|

||||

**测试Knife配置**

|

||||

####6) 测试Knife配置

|

||||

|

||||

运行“knife user list”和“knife client list”来验证knife是否在工作。

|

||||

|

||||

root@ubuntu-15-WKS:/.chef# knife user list

|

||||

|

||||

第一次运行的时候可能会得到下面的错误,这是因为工作站上还没有chef服务端的SSL证书。

|

||||

第一次运行的时候可能会看到下面的错误,这是因为工作站上还没有chef服务端的SSL证书。

|

||||

|

||||

ERROR: SSL Validation failure connecting to host: 172.25.10.173 - SSL_connect returned=1 errno=0 state=SSLv3 read server certificate B: certificate verify failed

|

||||

ERROR: Could not establish a secure connection to the server.

|

||||

@ -245,24 +221,24 @@ We can Download chef development kit from its official web link and choose the r

|

||||

If your Chef Server uses a self-signed certificate, you can use

|

||||

`knife ssl fetch` to make knife trust the server's certificates.

|

||||

|

||||

要从上面的命令中恢复,运行下面的命令来获取ssl整数并重新运行knife user和client list,这时候应该就可以了。

|

||||

要从上面的命令中恢复,运行下面的命令来获取ssl证书,并重新运行knife user和client list,这时候应该就可以了。

|

||||

|

||||

root@ubuntu-15-WKS:/.chef# knife ssl fetch

|

||||

WARNING: Certificates from 172.25.10.173 will be fetched and placed in your trusted_cert

|

||||

directory (/.chef/trusted_certs).

|

||||

|

||||

knife没有办法验证这些是有效的证书。你应该在下载时候验证这些证书的真实性。

|

||||

knife没有办法验证这些是有效的证书。你应该在下载时候验证这些证书的真实性。

|

||||

|

||||

在/.chef/trusted_certs/ubuntu-14-chef_test_com.crt下面添加ubuntu-14-chef.test.com的证书。

|

||||

在/.chef/trusted_certs/ubuntu-14-chef_test_com.crt下面添加ubuntu-14-chef.test.com的证书。

|

||||

|

||||

在上面的命令取得ssl证书后,接着运行下面的命令。

|

||||

|

||||

root@ubuntu-15-WKS:/.chef# knife client list

|

||||

kashi-linux

|

||||

|

||||

### 与chef服务端交互的新的节点 ###

|

||||

### 配置与chef服务端交互的新节点 ###

|

||||

|

||||

节点是执行所有设备自动化的chef客户端。因此是时侯添加新的服务端到我们的chef环境下,在配置完chef-server和knife工作站后配置新的节点与chef-server交互。

|

||||

节点是执行所有基础设施自动化的chef客户端。因此,在配置完chef-server和knife工作站后,通过配置新的与chef-server交互的节点,来添加新的服务端到我们的chef环境下。

|

||||

|

||||

我们使用下面的命令来添加新的节点与chef服务端工作。

|

||||

|

||||

@ -291,16 +267,16 @@ We can Download chef development kit from its official web link and choose the r

|

||||

172.25.10.170 to file /tmp/install.sh.26024/metadata.txt

|

||||

172.25.10.170 trying wget...

|

||||

|

||||

之后我们可以在knife节点列表下看到新创建的节点,也会新节点列表下创建新的客户端。

|

||||

之后我们可以在knife节点列表下看到新创建的节点,它也会在新节点创建新的客户端。

|

||||

|

||||

root@ubuntu-15-WKS:~# knife node list

|

||||

mydns

|

||||

|

||||

相似地我们只要提供ssh证书通过上面的knife命令来创建多个节点到chef设备上。

|

||||

Similarly, we can create multiple nodes in the Chef infrastructure by supplying SSH credentials to the same knife command shown above.

|

||||

|

||||

### 总结 ###

|

||||

|

||||

本篇我们学习了chef管理工具并通过安装和配置设置浏览了它的组件。我希望你在学习安装和配置Chef服务端以及它的工作站和客户端节点中获得乐趣。

|

||||

本篇我们学习了chef管理工具并通过安装和配置设置基本了解了它的组件。我希望你在学习安装和配置Chef服务端以及它的工作站和客户端节点中获得乐趣。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

@ -308,7 +284,7 @@ via: http://linoxide.com/ubuntu-how-to/install-configure-chef-ubuntu-14-04-15-04

|

||||

|

||||

作者:[Kashif Siddique][a]

|

||||

译者:[geekpi](https://github.com/geekpi)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

178

published/20150717 How to collect NGINX metrics - Part 2.md

Normal file

@ -0,0 +1,178 @@

How to Collect NGINX Metrics (Part 2)
================================================================================

### How to get the NGINX metrics you need ###

How you capture metrics depends on which version of NGINX you are running and on which metrics you want to see. (See [How to Monitor NGINX (Part 1)][1] for an in-depth look at NGINX metrics.) Both free, open-source NGINX and the commercial NGINX Plus have status modules that report metrics, and NGINX can also be configured to write certain metrics to its logs:

**Metric availability**

| Metric | [NGINX (open-source)](https://www.datadoghq.com/blog/how-to-collect-nginx-metrics/#open-source) | [NGINX Plus](https://www.datadoghq.com/blog/how-to-collect-nginx-metrics/#plus) | [NGINX logs](https://www.datadoghq.com/blog/how-to-collect-nginx-metrics/#logs) |
|-----|------|-------|-----|
| accepts / accepted | x | x | |
| handled | x | x | |
| dropped | x | x | |
| active | x | x | |
| requests / total | x | x | |
| 4xx codes | | x | x |
| 5xx codes | | x | x |
| request time | | | x |

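#### Metrics collection: NGINX (open-source) ####

Open-source NGINX exposes several basic metrics about server activity on a simple status page, provided that the HTTP [stub status module][2] is enabled. To check whether the module is already enabled, run:

    nginx -V 2>&1 | grep -o with-http_stub_status_module

The status module is enabled if the terminal prints **with-http_stub_status_module**.

If the command prints nothing, you need to enable the status module. You can do so with the `--with-http_stub_status_module` configuration parameter when [building NGINX from source][3]:

    ./configure \
    … \
    --with-http_stub_status_module
    make
    sudo make install

After verifying that the module is enabled, or enabling it yourself, you also need to modify your NGINX configuration to set up a locally accessible URL (for example, /nginx_status) for the status page:

    server {
        location /nginx_status {
            stub_status on;

            access_log off;
            allow 127.0.0.1;
            deny all;
        }
    }

Note: the server blocks of an NGINX configuration usually live not in the master configuration file (for example, /etc/nginx/nginx.conf) but in supplemental configuration files that the master config loads. To find the relevant files, first locate the master config by running:

    nginx -t

Open the master configuration file that is listed and look for lines beginning with include near the end of the http block, such as:

    include /etc/nginx/conf.d/*.conf;

In one of the included config files you should find the main **server** block, which you can modify as shown above to configure NGINX metrics reporting. After changing any configuration, reload the configs by executing:

    nginx -s reload

Now you can view the status page to see your metrics:

    Active connections: 24
    server accepts handled requests
    1156958 1156958 4491319
    Reading: 0 Writing: 18 Waiting: 6

Note that if you want to access the status page from a remote machine, you need to add that machine's IP address to the whitelist in your status configuration; the configuration above whitelists only 127.0.0.1.

The NGINX status page is an easy way to get a quick snapshot of your metrics, but for continuous monitoring you need to record that data automatically at regular intervals. Parsers for the NGINX status page already exist for monitoring tools such as [Nagios][4] and [Datadog][5], as well as for the statistics collection daemon [collectD][6].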
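
For a sense of what such a parser does, here is a minimal shell sketch that turns the status page text into `key=value` pairs and derives the dropped-connection count as accepts minus handled. `parse_stub_status` is a hypothetical name, and the parsing assumes the four-line layout shown in the sample output above.

```shell
# Hypothetical helper: parse stub_status text from stdin into key=value pairs.
parse_stub_status() {
    awk '
        /^Active connections:/ { active = $3 }
        # The counters line holds exactly three integers: accepts, handled, requests.
        /^ *[0-9]+ +[0-9]+ +[0-9]+ *$/ { accepts = $1; handled = $2; requests = $3 }
        /^Reading:/ { reading = $2; writing = $4; waiting = $NF }
        END {
            printf "active=%s accepts=%s handled=%s requests=%s dropped=%d reading=%s writing=%s waiting=%s\n",
                active, accepts, handled, requests, accepts - handled, reading, writing, waiting
        }
    '
}
```

Assuming the status page is served at /nginx_status on the local machine, as in the configuration above, a periodic job could run `curl -s http://127.0.0.1/nginx_status | parse_stub_status` and store the output.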

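#### Metrics collection: NGINX Plus ####

The commercial NGINX Plus provides [many more metrics][7] through its ngx_http_status_module than open-source NGINX does. The additional metrics include bytes streamed as well as information about upstream systems and caches. NGINX Plus also reports counts of all HTTP status code types (1xx, 2xx, 3xx, 4xx, 5xx). A sample NGINX Plus status board is available [here][8].

Note: the "Active" connections on the NGINX Plus status dashboard are defined slightly differently from the "Active" connections that open-source NGINX reports through the stub status module. In NGINX Plus metrics, "Active" connections do not include connections in the Waiting state (that is, "Idle" connections).

NGINX Plus also reports [metrics in JSON format][9] for integration with other monitoring systems. With NGINX Plus you can see the metrics and health status [for a given upstream group of servers][10], or drill down to a count of just the response codes [from a single server][11] in that upstream:

    {"1xx":0,"2xx":3483032,"3xx":0,"4xx":23,"5xx":0,"total":3483055}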
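
A response-code object like this is enough to compute an error rate. The sketch below is one way to do it with standard tools only; it assumes a flat, single-level JSON object of integer counters exactly like the sample, and would not cope with nested JSON (a real JSON parser is the safer choice there). `response_error_rate` is a hypothetical name.

```shell
# Hypothetical helper: read a flat {"4xx":N,"5xx":N,"total":N,...} object on stdin
# and print the combined 4xx+5xx count and its share of all responses.
response_error_rate() {
    tr -d '{}" \n' | awk -F, '
        {
            for (i = 1; i <= NF; i++) {
                split($i, kv, ":")       # kv[1] is the key, kv[2] the counter
                count[kv[1]] = kv[2]
            }
        }
        END {
            if (count["total"] > 0) {
                errors = count["4xx"] + count["5xx"]
                printf "errors=%d total=%s rate=%.6f%%\n",
                    errors, count["total"], 100 * errors / count["total"]
            } else {
                print "n/a"
            }
        }
    '
}
```

Fed the sample object, the function reports 23 errors out of 3483055 responses.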

To enable the NGINX Plus metrics dashboard, add a status server block inside the http block of your NGINX configuration. (See the section above on collecting metrics from open-source NGINX for instructions on locating the relevant config files.) For example, to set up a status dashboard at http://your.ip.address:8080/status.html and a JSON interface at http://your.ip.address:8080/status, you would add the following server block:

    server {
        listen 8080;
        root /usr/share/nginx/html;

        location /status {
            status;
        }

        location = /status.html {
        }
    }

The status pages should be live once you reload your NGINX configuration:

    nginx -s reload

The official NGINX Plus docs have [more details][13] on how to configure the expanded status module.

#### Metrics collection: NGINX logs ####

NGINX's [log module][14] writes configurable access logs to a destination of your choosing. You can customize the format of your logs and the data they contain by [adding or removing variables][15]. The simplest way to capture detailed logs is to add the following line to the server block of your config file (see the section on collecting metrics from open-source NGINX for instructions on locating your config files):

    access_log logs/host.access.log combined;

After changing the NGINX configuration, reload the configs by executing:

    nginx -s reload

The "combined" log format, included by default, captures [a number of key data points][17], such as the actual HTTP request and the corresponding response code. In the example logs below, NGINX logged a 200 (success) status code for a request for /index.html and a 404 (not found) error for the nonexistent /fail.

    127.0.0.1 - - [19/Feb/2015:12:10:46 -0500] "GET /index.html HTTP/1.1" 200 612 "-" "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_10_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/40.0.2214.111 Safari/537.36"

    127.0.0.1 - - [19/Feb/2015:12:11:05 -0500] "GET /fail HTTP/1.1" 404 570 "-" "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_10_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/40.0.2214.111 Safari/537.36"
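
Log lines in this format can be reduced to per-class status counts, which is how the 4xx and 5xx metrics are obtained from logs. The sketch below assumes the request line has exactly three space-separated parts (method, path, protocol), so that the status code is the ninth whitespace-separated field; `count_status_classes` is a hypothetical name.

```shell
# Hypothetical helper: count responses per status class in "combined" format logs.
# Reads the files given as arguments, or stdin when none are given.
count_status_classes() {
    awk '
        # Field 9 is the status code when the request line is "METHOD PATH PROTOCOL".
        $9 ~ /^[1-5][0-9][0-9]$/ { n[substr($9, 1, 1) "xx"]++ }
        END {
            printf "1xx=%d 2xx=%d 3xx=%d 4xx=%d 5xx=%d\n",
                n["1xx"], n["2xx"], n["3xx"], n["4xx"], n["5xx"]
        }
    ' "$@"
}
```

With the log destination configured earlier, this could be run as `count_status_classes logs/host.access.log`, where the path is resolved the same way NGINX resolves it.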

You can also log request processing time by adding a new log format to the http block of your NGINX configuration file:

    log_format nginx '$remote_addr - $remote_user [$time_local] '
                     '"$request" $status $body_bytes_sent $request_time '
                     '"$http_referer" "$http_user_agent"';

Then adjust the access_log line in the **server** block of your configuration file:

    access_log logs/host.access.log nginx;

After reloading the configuration (by running `nginx -s reload`), your access logs will include response times, as shown below. The unit is seconds, with millisecond resolution. In this example, the server received a request for /big.pdf and returned a 206 (Partial Content) status code after sending 33973115 bytes. Processing the request took 0.202 seconds (202 milliseconds):

    127.0.0.1 - - [19/Feb/2015:15:50:36 -0500] "GET /big.pdf HTTP/1.1" 206 33973115 0.202 "-" "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_10_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/40.0.2214.111 Safari/537.36"
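With `$request_time` in the log, a quick average can be computed from the command line. This is a minimal sketch that assumes the custom `nginx` format defined above, where the request time is the eleventh whitespace-separated field. The function name `avg_request_time` is a placeholder, and the second sample line is invented for illustration only.

```shell
# Average request time (in seconds) from a log written in the custom
# "nginx" format above. Assumption: $request_time is the 11th field.
avg_request_time() {
    LC_ALL=C awk '{ sum += $11; n++ } END { if (n > 0) printf "%.3f\n", sum / n }' "$@"
}

# Sample input: the /big.pdf line from above plus one hypothetical line.
timed_log="$(mktemp)"
cat > "$timed_log" <<'EOF'
127.0.0.1 - - [19/Feb/2015:15:50:36 -0500] "GET /big.pdf HTTP/1.1" 206 33973115 0.202 "-" "Mozilla/5.0"
127.0.0.1 - - [19/Feb/2015:15:50:40 -0500] "GET /index.html HTTP/1.1" 200 612 0.002 "-" "Mozilla/5.0"
EOF

avg_request_time "$timed_log"
```

An average hides outliers, so for real monitoring a percentile computed by a log-analysis tool is usually more informative.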

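You can use a variety of tools and services to parse and analyze NGINX logs. For example, [rsyslog][18] can monitor your logs and pass them to a number of log-analytics services; you can use a free, open-source tool such as [logstash][19] to collect and analyze logs; or you can use a unified logging layer such as [Fluentd][20] to collect and parse your NGINX logs.

### Conclusion ###

Which NGINX metrics you monitor will depend on the tools available to you and on whether the insight a given metric provides meets your needs. For example, is measuring error rates important enough to justify buying NGINX Plus, or is a system that captures and analyzes logs sufficient?

At Datadog, we have built integrations with both NGINX and NGINX Plus so that you can collect and monitor metrics from all your web servers with minimal setup. Learn how to monitor NGINX with Datadog [in this post][21], and get started with a [free trial of Datadog][22].

--------------------------------------------------------------------------------

via: https://www.datadoghq.com/blog/how-to-collect-nginx-metrics/

Author: K Young
Translator: [strugglingyouth](https://github.com/strugglingyouth)
Proofreader: [wxy](https://github.com/wxy)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux China](https://linux.cn/)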

[1]:https://www.datadoghq.com/blog/how-to-monitor-nginx/
[2]:http://nginx.org/en/docs/http/ngx_http_stub_status_module.html
[3]:http://wiki.nginx.org/InstallOptions
[4]:https://exchange.nagios.org/directory/Plugins/Web-Servers/nginx
[5]:http://docs.datadoghq.com/integrations/nginx/
[6]:https://collectd.org/wiki/index.php/Plugin:nginx
[7]:http://nginx.org/en/docs/http/ngx_http_status_module.html#data
[8]:http://demo.nginx.com/status.html
[9]:http://demo.nginx.com/status
[10]:http://demo.nginx.com/status/upstreams/demoupstreams
[11]:http://demo.nginx.com/status/upstreams/demoupstreams/0/responses
[12]:https://www.datadoghq.com/blog/how-to-collect-nginx-metrics/#open-source
[13]:http://nginx.org/en/docs/http/ngx_http_status_module.html#example
[14]:http://nginx.org/en/docs/http/ngx_http_log_module.html
[15]:http://nginx.org/en/docs/http/ngx_http_log_module.html#log_format
[16]:https://www.datadoghq.com/blog/how-to-collect-nginx-metrics/#open-source
[17]:http://nginx.org/en/docs/http/ngx_http_log_module.html#log_format
[18]:http://www.rsyslog.com/
[19]:https://www.elastic.co/products/logstash
[20]:http://www.fluentd.org/
[21]:https://www.datadoghq.com/blog/how-to-monitor-nginx-with-datadog/
[22]:https://www.datadoghq.com/blog/how-to-collect-nginx-metrics/#sign-up
[23]:https://github.com/DataDog/the-monitor/blob/master/nginx/how_to_collect_nginx_metrics.md
[24]:https://github.com/DataDog/the-monitor/issues

@ -1,16 +1,16 @@

|

||||

在Linux中利用"Explain Shell"脚本更容易地理解Shell命令

|

||||

轻松使用“Explain Shell”脚本来理解 Shell 命令

|

||||

================================================================================

|

||||

在某些时刻, 当我们在Linux平台上工作时我们所有人都需要shell命令的帮助信息。 尽管内置的帮助像man pages、whatis命令是有帮助的, 但man pages的输出非常冗长, 除非是个有linux经验的人,不然从大量的man pages中获取帮助信息是非常困难的,而whatis命令的输出很少超过一行, 这对初学者来说是不够的。

|

||||

我们在Linux上工作时,每个人都会遇到需要查找shell命令的帮助信息的时候。 尽管内置的帮助像man pages、whatis命令有所助益, 但man pages的输出非常冗长, 除非是个有linux经验的人,不然从大量的man pages中获取帮助信息是非常困难的,而whatis命令的输出很少超过一行, 这对初学者来说是不够的。

|

||||

|

||||

|

||||

|

||||

在Linux Shell中解释Shell命令

|

||||

*在Linux Shell中解释Shell命令*

|

||||

|

||||

有一些第三方应用程序, 像我们在[Commandline Cheat Sheet for Linux Users][1]提及过的'cheat'命令。Cheat是个杰出的应用程序,即使计算机没有联网也能提供shell命令的帮助, 但是它仅限于预先定义好的命令。

|

||||

有一些第三方应用程序, 像我们在[Linux 用户的命令行速查表][1]提及过的'cheat'命令。cheat是个优秀的应用程序,即使计算机没有联网也能提供shell命令的帮助, 但是它仅限于预先定义好的命令。

|

||||

|

||||

Jackson写了一小段代码,它能非常有效地在bash shell里面解释shell命令,可能最美之处就是你不需要安装第三方包了。他把包含这段代码的的文件命名为”explain.sh“。

|

||||

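Jackson wrote a small piece of code that explains shell commands very effectively from within the bash shell, and perhaps the best part is that you do not need to install any third-party package. He named the file containing this code “explain.sh”.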

|

||||

|

||||

#### Explain工具的特性 ####

|

||||

#### explain.sh工具的特性 ####

|

||||

|

||||

- 易嵌入代码。

|

||||

- 不需要安装第三方工具。

|

||||

@ -18,22 +18,22 @@ Jackson写了一小段代码,它能非常有效地在bash shell里面解释she

|

||||

- 需要网络连接才能工作。

|

||||

- 纯命令行工具。

|

||||

- 可以解释bash shell里面的大部分shell命令。

|

||||

- 无需root账户参与。

|

||||

- 无需使用root账户。

|

||||

|

||||

**先决条件**

|

||||

|

||||

唯一的条件就是'curl'包了。 在如今大多数Linux发行版里面已经预安装了culr包, 如果没有你可以按照下面的命令来安装。

|

||||

唯一的条件就是'curl'包了。 在如今大多数Linux发行版里面已经预安装了curl包, 如果没有你可以按照下面的命令来安装。

|

||||

|

||||

# apt-get install curl [On Debian systems]

|

||||

# yum install curl [On CentOS systems]

|

||||

|

||||

### 在Linux上安装explain.sh工具 ###

|

||||

|

||||

我们要将下面这段代码插入'~/.bashrc'文件(LCTT注: 若没有该文件可以自己新建一个)中。我们必须为每个用户以及对应的'.bashrc'文件插入这段代码,笔者建议你不要加在root用户下。

|

||||

我们要将下面这段代码插入'~/.bashrc'文件(LCTT译注: 若没有该文件可以自己新建一个)中。我们要为每个用户以及对应的'.bashrc'文件插入这段代码,但是建议你不要加在root用户下。

|

||||

|

||||

我们注意到.bashrc文件的第一行代码以(#)开始, 这个是可选的并且只是为了区分余下的代码。

|

||||

|

||||

# explain.sh 标记代码的开始, 我们将代码插入.bashrc文件的底部。

|

||||

\# explain.sh 标记代码的开始, 我们将代码插入.bashrc文件的底部。

|

||||

|

||||

# explain.sh begins

|

||||

explain () {

|

||||

@ -53,7 +53,7 @@ Jackson写了一小段代码,它能非常有效地在bash shell里面解释she

|

||||

|

||||

### explain.sh工具的使用 ###

|

||||

|

||||

在插入代码并保存之后,你必须退出当前的会话然后重新登录来使改变生效(LCTT注:你也可以直接使用命令“source~/.bashrc”来让改变生效)。每件事情都是交由‘curl’命令处理, 它负责将需要解释的命令以及命令选项传送给mankier服务,然后将必要的信息打印到Linux命令行。不必说的就是使用这个工具你总是需要连接网络。

|

||||

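After inserting the code and saving the file, you must log out of the current session and log back in for the change to take effect (LCTT translator's note: you can also run `source ~/.bashrc` to apply the change right away). Everything is handled by the ‘curl’ command, which sends the command and options to be explained to the mankier service and prints the relevant information to the Linux command line. Needless to say, you always need a network connection to use this tool.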
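To see why sourcing the file is enough, here is a minimal sketch that uses a temporary file in place of `~/.bashrc`. The function `hello_rc` is a hypothetical stand-in for the real `explain ()` function, whose body is not shown in this diff.

```shell
# A temporary file stands in for ~/.bashrc in this sketch.
rc_file="$(mktemp)"

cat > "$rc_file" <<'EOF'
# Hypothetical stand-in for the explain () function from the article.
hello_rc () { echo "loaded from rc file"; }
EOF

# "." is the portable spelling of bash's "source": it runs the file in the
# current shell, so the function is defined without logging out and back in.
. "$rc_file"

hello_rc
```

The same mechanism applies to `source ~/.bashrc`: functions defined in the file become available in the current session immediately.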

|

||||

|

||||

让我们用explain.sh脚本测试几个笔者不懂的命令例子。

|

||||

|

||||

@ -63,7 +63,7 @@ Jackson写了一小段代码,它能非常有效地在bash shell里面解释she

|

||||

|

||||

|

||||

|

||||

获得du命令的帮助

|

||||

*获得du命令的帮助*

|

||||

|

||||

**2.如果你忘了'tar -zxvf'的作用,你可以简单地如此做:**

|

||||

|

||||

@ -71,7 +71,7 @@ Jackson写了一小段代码,它能非常有效地在bash shell里面解释she

|

||||

|

||||

|

||||

|

||||

Tar命令帮助

|

||||

*Tar命令帮助*

|

||||

|

||||

**3.我的一个朋友经常对'whatis'以及'whereis'命令的使用感到困惑,所以我建议他:**

|

||||

|

||||

@ -86,7 +86,7 @@ Tar命令帮助

|

||||

|

||||

|

||||

|

||||

Whatis/Whereis命令的帮助

|

||||

*Whatis/Whereis命令的帮助*

|

||||

|

||||

你只需要使用“Ctrl+c”就能退出交互模式。

|

||||

|

||||

@ -96,11 +96,11 @@ Whatis/Whereis命令的帮助

|

||||

|

||||

|

||||

|

||||

获取多条命令的帮助

|

||||

*获取多条命令的帮助*

|

||||

|

||||

同样地,你可以请求你的shell来解释任何shell命令。 前提是你需要一个可用的网络。输出的信息是基于解释的需要从服务器中生成的,因此输出的结果是不可定制的。

|

||||

同样地,你可以请求你的shell来解释任何shell命令。 前提是你需要一个可用的网络。输出的信息是基于需要解释的命令,从服务器中生成的,因此输出的结果是不可定制的。

|

||||

|

||||

对于我来说这个工具真的很有用并且它已经荣幸地添加在我的.bashrc文件中。你对这个项目有什么想法?它对你有用么?它的解释令你满意吗?请让我知道吧!

|

||||

对于我来说这个工具真的很有用,并且它已经荣幸地添加在我的.bashrc文件中。你对这个项目有什么想法?它对你有用么?它的解释令你满意吗?请让我知道吧!

|

||||

|

||||

请在下面评论为我们提供宝贵意见,喜欢并分享我们以及帮助我们得到传播。

|

||||

|

||||

@ -110,7 +110,7 @@ via: http://www.tecmint.com/explain-shell-commands-in-the-linux-shell/

|

||||

|

||||

作者:[Avishek Kumar][a]

|

||||

译者:[dingdongnigetou](https://github.com/dingdongnigetou)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

@ -1,11 +1,12 @@

|

||||

新手应知应会的Linux命令

|

||||

================================================================================

|

||||

|

||||

在Fedora上通过命令行使用dnf来管理系统更新

|

||||

|

||||

基于Linux的系统的优点之一,就是你可以通过终端中使用命令该ing来管理整个系统。使用命令行的优势在于,你可以使用相同的知识和技能来管理随便哪个Linux发行版。

|

||||

*在Fedora上通过命令行使用dnf来管理系统更新*

|

||||

|

||||

对于各个发行版以及桌面环境(DE)而言,要一致地使用图形化用户界面(GUI)却几乎是不可能的,因为它们都提供了各自的用户界面。要明确的是,有那么些情况,你需要在不同的发行版上使用不同的命令来部署某些特定的任务,但是,或多或少它们的概念和意图却仍然是一致的。

|

||||

基于Linux的系统最美妙的一点,就是你可以在终端中使用命令行来管理整个系统。使用命令行的优势在于,你可以使用相同的知识和技能来管理随便哪个Linux发行版。

|

||||

|

||||

对于各个发行版以及桌面环境(DE)而言,要一致地使用图形化用户界面(GUI)却几乎是不可能的,因为它们都提供了各自的用户界面。要明确的是,有些情况下在不同的发行版上需要使用不同的命令来执行某些特定的任务,但是,基本来说它们的思路和目的是一致的。

|

||||

|

||||

在本文中,我们打算讨论Linux用户应当掌握的一些基本命令。我将给大家演示怎样使用命令行来更新系统、管理软件、操作文件以及切换到root,这些操作将在三个主要发行版上进行:Ubuntu(也包括其定制版和衍生版,还有Debian),openSUSE,以及Fedora。

|

||||

|

||||

@ -15,7 +16,7 @@

|

||||

|

||||

Linux是基于安全设计的,但事实上是,任何软件都有缺陷,会导致安全漏洞。所以,保持你的系统更新到最新是十分重要的。这么想吧:运行过时的操作系统,就像是你坐在全副武装的坦克里头,而门却没有锁。武器会保护你吗?任何人都可以进入开放的大门,对你造成伤害。同样,在你的系统中也有没有打补丁的漏洞,这些漏洞会危害到你的系统。开源社区,不像专利世界,在漏洞补丁方面反应是相当快的,所以,如果你保持系统最新,你也获得了安全保证。

|

||||

|

||||

留意新闻站点,了解安全漏洞。如果发现了一个漏洞,请阅读之,然后在补丁出来的第一时间更新。不管怎样,在生产机器上,你每星期必须至少运行一次更新命令。如果你运行这一台复杂的服务器,那么就要额外当心了。仔细阅读变更日志,以确保更新不会搞坏你的自定义服务。

|

||||

留意新闻站点,了解安全漏洞。如果发现了一个漏洞,了解它,然后在补丁出来的第一时间更新。不管怎样,在生产环境上,你每星期必须至少运行一次更新命令。如果你运行着一台复杂的服务器,那么就要额外当心了。仔细阅读变更日志,以确保更新不会搞坏你的自定义服务。

|

||||

|

||||

**Ubuntu**:牢记一点:你在升级系统或安装不管什么软件之前,都必须要刷新仓库(也就是repos)。在Ubuntu上,你可以使用下面的命令来更新系统,第一个命令用于刷新仓库:

|

||||

|

||||

@ -29,7 +30,7 @@ Linux是基于安全设计的,但事实上是,任何软件都有缺陷,会

|

||||

|

||||

sudo apt-get dist-upgrade

|

||||

|

||||

**openSUSE**:如果你是在openSUSE上,你可以使用以下命令来更新系统(照例,第一个命令的意思是更新仓库)

|

||||

**openSUSE**:如果你是在openSUSE上,你可以使用以下命令来更新系统(照例,第一个命令的意思是更新仓库):

|

||||

|

||||

sudo zypper refresh

|

||||

sudo zypper up

|

||||

@ -42,7 +43,7 @@ Linux是基于安全设计的,但事实上是,任何软件都有缺陷,会

|

||||

### 软件安装与移除 ###

|

||||

|

||||

你只可以安装那些你系统上启用的仓库中可用的包,各个发行版默认都附带有并启用了一些官方或者第三方仓库。

|

||||

**Ubuntu**: To install any package on Ubuntu, first update the repo and then use this syntax:

|

||||

|

||||

**Ubuntu**:要在Ubuntu上安装包,首先更新仓库,然后使用下面的语句:

|

||||

|

||||

sudo apt-get install [package_name]

|

||||

@ -75,9 +76,9 @@ Linux是基于安全设计的,但事实上是,任何软件都有缺陷,会

|

||||

|

||||

### 如何管理第三方软件? ###

|

||||

|

||||

在一个庞大的开发者社区中,这些开发者们为用户提供了许多的软件。不同的发行版有不同的机制来使用这些第三方软件,将它们提供给用户。同时也取决于开发者怎样将这些软件提供给用户,有些开发者会提供二进制包,而另外一些开发者则将软件发布到仓库中。

|

||||

在一个庞大的开发者社区中,这些开发者们为用户提供了许多的软件。不同的发行版有不同的机制来将这些第三方软件提供给用户。当然,同时也取决于开发者怎样将这些软件提供给用户,有些开发者会提供二进制包,而另外一些开发者则将软件发布到仓库中。

|

||||

|

||||

Ubuntu严重依赖于PPA(个人包归档),但是,不幸的是,它却没有提供一个内建工具来帮助用于搜索这些PPA仓库。在安装软件前,你将需要通过Google搜索PPA,然后手工添加该仓库。下面就是添加PPA到系统的方法:

|

||||

Ubuntu很多地方都用到PPA(个人包归档),但是,不幸的是,它却没有提供一个内建工具来帮助用于搜索这些PPA仓库。在安装软件前,你将需要通过Google搜索PPA,然后手工添加该仓库。下面就是添加PPA到系统的方法:

|

||||

|

||||

sudo add-apt-repository ppa:<repository-name>

|

||||

|

||||

@ -85,7 +86,7 @@ Ubuntu严重依赖于PPA(个人包归档),但是,不幸的是,它却

|

||||

|

||||

sudo add-apt-repository ppa:libreoffice/ppa

|

||||

|

||||

它会要你按下回车键来导入秘钥。完成后,使用'update'命令来刷新仓库,然后安装该包。

|

||||

它会要你按下回车键来导入密钥。完成后,使用'update'命令来刷新仓库,然后安装该包。

|

||||

|

||||

openSUSE拥有一个针对第三方应用的优雅的解决方案。你可以访问software.opensuse.org,一键点击搜索并安装相应包,它会自动将对应的仓库添加到你的系统中。如果你想要手工添加仓库,可以使用该命令:

|

||||

|

||||

@ -97,13 +98,13 @@ openSUSE拥有一个针对第三方应用的优雅的解决方案。你可以访

|

||||

sudo zypper refresh

|

||||

sudo zypper install libreoffice

|

||||

|

||||

Fedora用户只需要添加RPMFusion(free和non-free仓库一起),该仓库包含了大量的应用。如果你需要添加仓库,命令如下:

|

||||

Fedora用户只需要添加RPMFusion(包括自由软件和非自由软件仓库),该仓库包含了大量的应用。如果你需要添加该仓库,命令如下:

|

||||

|

||||

dnf config-manager --add-repo http://www.example.com/example.repo

|

||||

dnf config-manager --add-repo http://www.example.com/example.repo

|

||||

|

||||

### 一些基本命令 ###

|

||||

|

||||

我已经写了一些关于使用CLI来管理你系统上的文件的[文章][1],下面介绍一些基本米ing令,这些命令在所有发行版上都经常会用到。

|

||||

我已经写了一些关于使用CLI来管理你系统上的文件的[文章][1],下面介绍一些基本命令,这些命令在所有发行版上都经常会用到。

|

||||

|

||||

拷贝文件或目录到一个新的位置:

|

||||

|

||||

@ -113,13 +114,13 @@ dnf config-manager --add-repo http://www.example.com/example.repo

|

||||

|

||||

cp path_of_files/* path_of_the_directory_where_you_want_to_copy/

|

||||

|

||||

将一个文件从某个位置移动到另一个位置(尾斜杠是说在该目录中):

|

||||

将一个文件从某个位置移动到另一个位置(尾斜杠是说放在该目录中):

|

||||

|

||||

mv path_of_file_1 path_of_the_directory_where_you_want_to_move/

|

||||

mv path_of_file_1 path_of_the_directory_where_you_want_to_move/

|

||||

|

||||

将所有文件从一个位置移动到另一个位置:

|

||||

|

||||

mv path_of_directory_where_files_are/* path_of_the_directory_where_you_want_to_move/

|

||||

mv path_of_directory_where_files_are/* path_of_the_directory_where_you_want_to_move/

|

||||

|

||||

删除一个文件:

|

||||

|

||||

@ -135,11 +136,11 @@ dnf config-manager --add-repo http://www.example.com/example.repo

|

||||

|

||||

### 创建新目录 ###

|

||||

|

||||

要创建一个新目录,首先输入你要创建的目录的位置。比如说,你想要在你的Documents目录中创建一个名为'foundation'的文件夹。让我们使用 cd (即change directory,改变目录)命令来改变目录:

|

||||

要创建一个新目录,首先进入到你要创建该目录的位置。比如说,你想要在你的Documents目录中创建一个名为'foundation'的文件夹。让我们使用 cd (即change directory,改变目录)命令来改变目录:

|

||||

|

||||

cd /home/swapnil/Documents

|

||||

|

||||

(替换'swapnil'为你系统中的用户)

|

||||

(替换'swapnil'为你系统中的用户名)

|

||||

|

||||

然后,使用 mkdir 命令来创建该目录:

|

||||

|

||||

@ -149,13 +150,13 @@ dnf config-manager --add-repo http://www.example.com/example.repo

|

||||

|

||||

mkdir /home/swapnil/Documents/foundation

|

||||

|

||||

如果你想要创建父-子目录,那是指目录中的目录,那么可以使用 -p 选项。它会在指定路径中创建所有目录:

|

||||

如果你想要连父目录一起创建,那么可以使用 -p 选项。它会在指定路径中创建所有目录:

|

||||

|

||||

mkdir -p /home/swapnil/Documents/linux/foundation
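The difference between plain `mkdir` and `mkdir -p` is easiest to see in a scratch directory. The sketch below uses a temporary directory created with `mktemp -d` rather than a real home directory; the variable name `workdir` is an assumption of this example.

```shell
# Work in a throwaway directory so nothing in the home directory is touched.
workdir="$(mktemp -d)"

# Without -p, mkdir fails because the parent directory "linux" does not exist yet.
mkdir "$workdir/linux/foundation" 2>/dev/null || echo "plain mkdir failed: parent directory is missing"

# With -p, every missing directory along the path is created.
mkdir -p "$workdir/linux/foundation"

ls -d "$workdir/linux/foundation"
```

Running `mkdir -p` again on an existing path is harmless, which makes it convenient in scripts.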

|

||||

|

||||

### 成为root ###

|

||||

|

||||

你或许需要成为root,或者具有sudo权力的用户,来实施一些管理任务,如管理软件包或者对根目录或其下的文件进行一些修改。其中一个例子就是编辑'fstab'文件,该文件记录了挂载的硬件驱动器。它在'etc'目录中,而该目录又在根目录中,你只能作为超级用户来修改该文件。在大多数的发行版中,你可以通过'切换用户'来成为root。比如说,在openSUSE上,我想要成为root,因为我要在根目录中工作,你可以使用下面的命令之一:

|

||||

你或许需要成为root,或者具有sudo权力的用户,来实施一些管理任务,如管理软件包或者对根目录或其下的文件进行一些修改。其中一个例子就是编辑'fstab'文件,该文件记录了挂载的硬盘驱动器。它在'etc'目录中,而该目录又在根目录中,你只能作为超级用户来修改该文件。在大多数的发行版中,你可以通过'su'来成为root。比如说,在openSUSE上,我想要成为root,因为我要在根目录中工作,你可以使用下面的命令之一:

|

||||

|

||||

sudo su -

|

||||

|

||||

@ -165,7 +166,7 @@ dnf config-manager --add-repo http://www.example.com/example.repo

|

||||

|

||||

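This command asks for a password, after which you have root privileges. Remember: never run your system as the root user unless you know what you are doing. Another important point is that after you modify directories or files as root, their ownership changes from the original user or service to root. You must restore the ownership of those files, otherwise that service or user can no longer access or write to them. To change the owner, use this command: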

|

||||

|

||||

sudo chown -R user:user /path_of_file_or_directory

|

||||

sudo chown -R user:group file_or_directory_name

|

||||

|

||||

当你将其它发行版上的分区挂载到系统中时,你可能经常需要该操作。当你试着访问这些分区上的文件时,你可能会碰到权限拒绝错误,你只需要改变这些分区的拥有关系就可以访问它们了。需要额外当心的是,不要改变根目录的权限或者拥有关系。

|

||||

|

||||

@ -177,7 +178,7 @@ via: http://www.linux.com/learn/tutorials/842251-must-know-linux-commands-for-ne

|

||||

|

||||

作者:[Swapnil Bhartiya][a]

|

||||

译者:[GOLinux](https://github.com/GOLinux)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

@ -1,15 +1,15 @@

|

||||

Linux问答 -- 如何在Linux上安装Git

|

||||

Linux有问必答:如何在Linux上安装Git

|

||||

================================================================================

|

||||

|

||||

> **问题:** 我尝试从一个Git公共仓库克隆项目,但出现了这样的错误提示:“git: command not found”。 请问我该如何安装Git? [注明一下是哪个Linux发行版]?

|

||||

> **问题:** 我尝试从一个Git公共仓库克隆项目,但出现了这样的错误提示:“git: command not found”。 请问我该如何在某某发行版上安装Git?

|

||||

|

||||

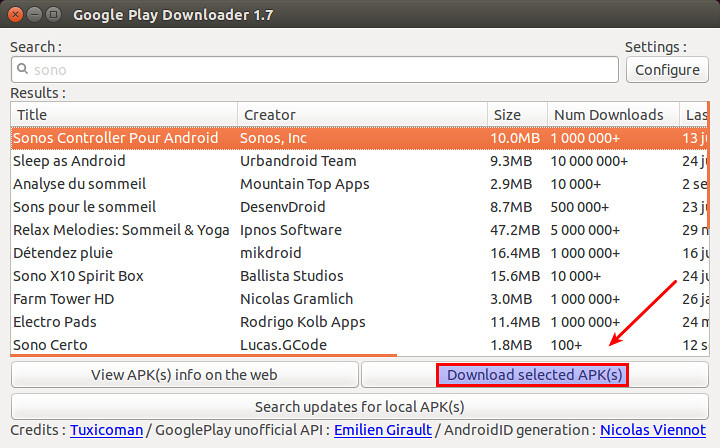

Git是一个流行的并且开源的版本控制系统(VCS),最初是为Linux环境开发的。跟CVS或者SVN这些版本控制系统不同的是,Git的版本控制被认为是“分布式的”,某种意义上,git的本地工作目录可以作为一个功能完善的仓库来使用,它具备完整的历史记录和版本追踪能力。在这种工作模型之下,各个协作者将内容提交到他们的本地仓库中(与之相对的会直接提交到核心仓库),如果有必要,再有选择性地推送到核心仓库。这就为Git这个版本管理系统带来了大型协作系统所必须的可扩展能力和冗余能力。

|

||||

Git是一个流行的开源版本控制系统(VCS),最初是为Linux环境开发的。跟CVS或者SVN这些版本控制系统不同的是,Git的版本控制被认为是“分布式的”,某种意义上,git的本地工作目录可以作为一个功能完善的仓库来使用,它具备完整的历史记录和版本追踪能力。在这种工作模型之下,各个协作者将内容提交到他们的本地仓库中(与之相对的会总是提交到核心仓库),如果有必要,再有选择性地推送到核心仓库。这就为Git这个版本管理系统带来了大型协作系统所必须的可扩展能力和冗余能力。

|

||||

|

||||

|

||||

|

||||

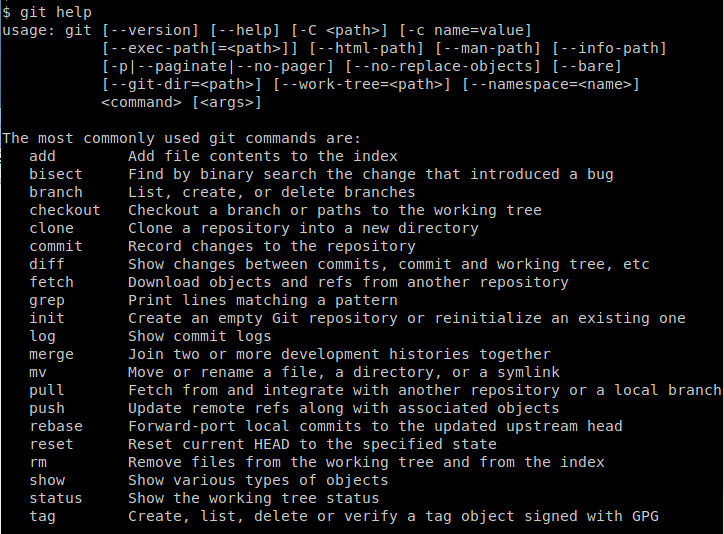

### 使用包管理器安装Git ###

|

||||

|

||||

Git已经被所有的主力Linux发行版所支持。所以安装它最简单的方法就是使用各个Linux发行版的包管理器。

|

||||

Git已经被所有的主流Linux发行版所支持。所以安装它最简单的方法就是使用各个Linux发行版的包管理器。
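Before reaching for the package manager, it can be worth checking whether git is already on the PATH. This is a small sketch; the helper name `have_cmd` is an assumption of this example, while `command -v` is a standard shell built-in.

```shell
# Return success if the named command can be found by the shell.
have_cmd() {
    command -v "$1" >/dev/null 2>&1
}

if have_cmd git; then
    git --version
else
    echo "git is not installed; install it with your distribution's package manager"
fi
```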

|

||||

|

||||

**Debian, Ubuntu, 或 Linux Mint**

|

||||

|

||||

@ -18,6 +18,8 @@ Git已经被所有的主力Linux发行版所支持。所以安装它最简单的

|

||||

**Fedora, CentOS 或 RHEL**

|

||||

|

||||

$ sudo yum install git

|

||||

或

|

||||

$ sudo dnf install git

|

||||

|

||||

**Arch Linux**

|

||||

|

||||

@ -33,7 +35,7 @@ Git已经被所有的主力Linux发行版所支持。所以安装它最简单的

|

||||

|

||||

### 从源码安装Git ###

|

||||

|

||||

如果由于某些原因,你希望从源码安装Git,安装如下介绍操作。

|

||||

如果由于某些原因,你希望从源码安装Git,按照如下介绍操作。

|

||||

|

||||

**安装依赖包**

|

||||

|

||||

@ -65,7 +67,7 @@ via: http://ask.xmodulo.com/install-git-linux.html

|

||||

|

||||

作者:[Dan Nanni][a]

|

||||

译者:[mr-ping](https://github.com/mr-ping)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创翻译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

@ -0,0 +1,98 @@

|

||||

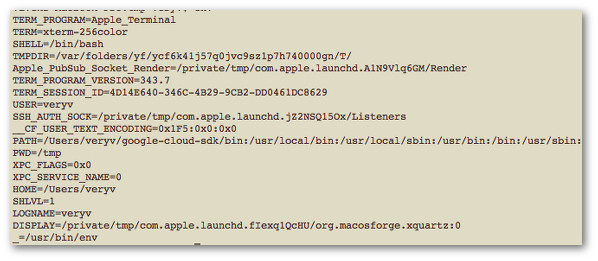

How to temporarily clear Bash environment variables before running a command on Linux
================================================================================
I am a bash shell user. I would like to temporarily clear the bash shell environment variables, but I do not want to delete or unset an exported environment variable. How can I run a program in a temporary environment in the bash or ksh shell?

On Linux and Unix-like systems you can use the env command to set and print the environment. The env command modifies the environment according to the variables given on the command line and then runs the program.

### How do I display the current environment? ###

Open the terminal application and type one of the following commands:

    printenv

or

    env

Sample output:

*Fig.01: Unix/Linux: List all environment variables*

### Counting the environment variables ###

Type the following command:

    env | wc -l
    printenv | wc -l # or

Sample output:

    20

### Running a program in a clean environment in bash/ksh/zsh ###

The syntax is as follows:

    env -i your-program-name-here arg1 arg2 ...

For example, to run the wget program without http_proxy or any other environment variable, temporarily clear all bash/ksh/zsh environment variables and run wget:

    env -i /usr/local/bin/wget www.cyberciti.biz
    env -i wget www.cyberciti.biz # or

This is very useful when you want to run a command while ignoring any environment variables that are already set. I use this command many times a day to ignore http_proxy and other environment variables I have set.
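The effect is easy to verify without wget or a proxy. The sketch below exports a made-up variable, `DEMO_PROXY` (an assumption of this example, standing in for `http_proxy`), and compares what a normal child process sees with what a child started through `env -i` sees.

```shell
# DEMO_PROXY is a hypothetical variable used only for this demonstration.
export DEMO_PROXY="http://proxy.example:3128/"

# A normal child process inherits the exported variable.
sh -c 'echo "inherited:    ${DEMO_PROXY:-<unset>}"'

# A child started through env -i begins with an empty environment.
env -i /bin/sh -c 'echo "under env -i: ${DEMO_PROXY:-<unset>}"'

# The parent shell itself is not changed by env -i.
echo "parent still has: $DEMO_PROXY"
```

Because `env -i` only affects the process it launches, nothing needs to be unset or restored afterwards.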

#### Example: with http_proxy ####

    $ wget www.cyberciti.biz
    --2015-08-03 23:20:23-- http://www.cyberciti.biz/
    Connecting to 10.12.249.194:3128... connected.
    Proxy request sent, awaiting response... 200 OK
    Length: unspecified [text/html]
    Saving to: 'index.html'
    index.html [ <=> ] 36.17K 87.0KB/s in 0.4s
    2015-08-03 23:20:24 (87.0 KB/s) - 'index.html' saved [37041]

#### Example: ignoring http_proxy ####

    $ env -i /usr/local/bin/wget www.cyberciti.biz
    --2015-08-03 23:25:17-- http://www.cyberciti.biz/
    Resolving www.cyberciti.biz... 74.86.144.194
    Connecting to www.cyberciti.biz|74.86.144.194|:80... connected.
    HTTP request sent, awaiting response... 200 OK
    Length: unspecified [text/html]
    Saving to: 'index.html.1'
    index.html.1 [ <=> ] 36.17K 115KB/s in 0.3s
    2015-08-03 23:25:18 (115 KB/s) - 'index.html.1' saved [37041]

The -i option makes the env command ignore the environment it inherits completely. It does not, however, prevent your command (such as wget or curl) from setting new variables. Also note the side effect of running a bash/ksh shell:

    env -i env | wc -l ## empty ##
    ## now run bash ##
    env -i bash
    ## bash sets new environment variables ##
    env | wc -l
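The same side effect can be observed non-interactively. This is a sketch that assumes `bash` is installed and can be found on the default search path; the exact variables bash creates (typically `PWD`, `SHLVL`, and `_`) may differ between versions.

```shell
# env started directly under env -i sees an empty environment: zero lines.
env -i env | wc -l

# A bash started the same way defines a few variables for itself before
# running anything, so the count is no longer zero.
env -i bash -c 'env | wc -l'
```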

#### Example: setting an environment variable ####

The syntax is:

    env var=value /path/to/command arg1 arg2 ...
    ## or ##
    var=value /path/to/command arg1 arg2 ...

For example, to set http_proxy:

    env http_proxy="http://USER:PASSWORD@server1.cyberciti.biz:3128/" /usr/local/bin/wget www.cyberciti.biz
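Both forms set the variable for that one command only. The sketch below shows this with a made-up variable, `DEMO_GREETING` (an assumption of this example), so that it runs without wget or a proxy.

```shell
# Form 1: env sets the variable for the command it launches.
env DEMO_GREETING=hello sh -c 'echo "via env:    $DEMO_GREETING"'

# Form 2: a shell assignment prefix does the same for a single command.
DEMO_GREETING=hello sh -c 'echo "via prefix: $DEMO_GREETING"'

# Neither form leaves the variable behind in the calling shell.
echo "in parent:  ${DEMO_GREETING:-<unset>}"
```

Combining this with `-i`, as in `env -i var=value command`, starts from an empty environment and then adds only the listed variables.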

--------------------------------------------------------------------------------

via: http://www.cyberciti.biz/faq/linux-unix-temporarily-clearing-environment-variables-command/

Author: Vivek Gite
Translator: [ictlyh](https://github.com/ictlyh)
Proofreader: [wxy](https://github.com/wxy)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux China](https://linux.cn/)

61

published/20150810 For Linux, Supercomputers R Us.md

Normal file

@ -0,0 +1,61 @@

|

||||

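With Linux, you can build your own supercomputer
================================================================================
> Almost all supercomputers run Linux, including ones built from Raspberry Pi boards and PlayStation 3 game consoles.

*Image credit: By Michel Ngilen, [ CC BY 2.0 ], via Wikimedia Commons*

Supercomputers are serious tools that do serious computing. They tend to be used for serious purposes such as atomic bomb simulations, climate modeling, and advanced physics. Naturally, they also cost serious money. In the latest [Top500][1] supercomputer ranking, Tianhe-2, developed by China's National University of Defense Technology, holds first place, and it cost about 390 million US dollars to build!

But there is also a supercomputer that Joshua Kiepert, a doctoral student in the Department of Electrical and Computer Engineering at Boise State University, [built out of Raspberry Pi boards][2] for less than 2,000 US dollars.

No, I am not making this up. It is a real supercomputer, made of [Raspberry Pi Model B][3] boards whose ARM11 processors are overclocked to 1GHz and paired with a VideoCore IV GPU. Each board has 512MB of RAM, a pair of USB ports, and one 10/100 BaseT Ethernet port.

So what do Tianhe-2 and the Boise State supercomputer have in common? They both run Linux. So do [486 of the 500][4] fastest supercomputers in the world. This pattern began more than 20 years ago. The trend now is toward supercomputers built from inexpensive units, and Kiepert's machine is not the only supercomputer built on a small budget.

Gaurav Khanna, an associate professor of physics at the University of Massachusetts Dartmouth, built a supercomputer out of [fewer than 200 PlayStation 3 video game consoles][5].

The PlayStation consoles are driven by a 3.2 GHz PowerPC-based Power processor. Each comes with 512MB of RAM. You can still buy one for 200 US dollars, although Sony will phase them out by the end of the year. Khanna built his first supercomputer with only 16 PlayStation 3 consoles, so you too could have your own supercomputer for less than 4,000 US dollars.

These machines may be built from toys, but they are not toys. Khanna has done serious astrophysics research with his. A group of white-hat hackers used a similar [PlayStation 3 supercomputer in 2008 to crack the MD5 hashing algorithm used by SSL][6].

Two years later, the US Air Force Research Laboratory built the [Condor Cluster, which used 1,760 Sony PlayStation 3 processors][7] and 168 general-purpose graphics processing units. This inexpensive supercomputer runs at about 500 TFLOPS, that is, 500 trillion floating-point operations per second.

Other inexpensive building blocks suitable for a home supercomputer include specialized parallel-processing boards, such as the credit-card-sized [99-dollar Parallella board][8], and high-end graphics cards, such as [Nvidia's Titan Z][9] and [AMD's FirePro W9100][10]. These high-end cards retail for about 3,000 US dollars and appeal to gamers who want a dream machine for competing in the [Intel Extreme Masters: League of Legends World Championship][11], where first place, should they manage to win it, pays more than 100,000 US dollars. On the other hand, a card that delivers more than 2.5 TFLOPS on its own gives scientists and researchers an affordable way to own a supercomputer.

As for the connection between supercomputers and Linux, it all began in 1994 at the Goddard Space Flight Center with the first [Beowulf supercomputer][13].

By our standards, Beowulf was nothing special. But in its day, as the first homemade supercomputer, with its 16 Intel 486DX processors and 10Mbps Ethernet bus, it was a great innovation. [Beowulf, designed by NASA contractors Don Becker and Thomas Sterling][14], was the first “maker” supercomputer. Its “compute components”, 486DX PCs, cost only a few thousand dollars. Although its speed was only in the single-digit GFLOPS range (gigaflops, billions of floating-point operations per second), [Beowulf][15] showed that you could build a supercomputer from commercial off-the-shelf (COTS) hardware and Linux.

I wish I had been part of its creation, but I left Goddard in 1994 to begin a career as a full-time technology journalist. Damn.

But even as a journalist who only uses a laptop, I can appreciate how COTS hardware and open-source software changed supercomputing forever. I hope that you, reading this now, can too. Because whether it is a Raspberry Pi cluster or a behemoth with more than 3 million Intel Ivy Bridge and Xeon Phi chips, almost every contemporary supercomputer can trace its lineage back to Beowulf.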

--------------------------------------------------------------------------------

via: http://www.computerworld.com/article/2960701/linux/for-linux-supercomputers-r-us.html

Author: [Steven J. Vaughan-Nichols][a]
Translator: [xiaoyu33](https://github.com/xiaoyu33)
Proofreader: [wxy](https://github.com/wxy)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux China](https://linux.cn/)


[a]:http://www.computerworld.com/author/Steven-J.-Vaughan_Nichols/
[1]:http://www.top500.org/
[2]:http://www.zdnet.com/article/build-your-own-supercomputer-out-of-raspberry-pi-boards/
[3]:https://www.raspberrypi.org/products/model-b/
[4]:http://www.zdnet.com/article/linux-still-rules-supercomputing/
[5]:http://www.nytimes.com/2014/12/23/science/an-economical-way-to-save-progress.html?smid=fb-nytimes&smtyp=cur&bicmp=AD&bicmlukp=WT.mc_id&bicmst=1409232722000&bicmet=1419773522000&_r=4
[6]:http://www.computerworld.com/article/2529932/cybercrime-hacking/researchers-hack-verisign-s-ssl-scheme-for-securing-web-sites.html
[7]:http://phys.org/news/2010-12-air-playstation-3s-supercomputer.html
[8]:http://www.zdnet.com/article/parallella-the-99-linux-supercomputer/
[9]:http://blogs.nvidia.com/blog/2014/03/25/titan-z/
[10]:http://www.amd.com/en-us/press-releases/Pages/amd-flagship-professional-2014apr7.aspx
[11]:http://en.intelextrememasters.com/news/check-out-the-intel-extreme-masters-katowice-prize-money-distribution/
[13]:http://www.beowulf.org/overview/history.html
[14]:http://yclept.ucdavis.edu/Beowulf/aboutbeowulf.html
[15]:http://www.beowulf.org/

@ -1,100 +0,0 @@

|

||||

Translating by ZTinoZ

|

||||

5 heroes of the Linux world

|

||||

================================================================================

|

||||

Who are these people, seen and unseen, whose work affects all of us every day?

|

||||

|

||||

|

||||

Image courtesy [Christopher Michel/Flickr][1]

|

||||

|

||||

### High-flying penguins ###

|

||||

|

||||

Linux and open source are driven by passionate people who write best-of-breed software and then release the code to the public so anyone can use it, without any strings attached. (Well, there is one string attached, and that’s the license.)

|

||||

|

||||

Who are these people? These heroes of the Linux world, whose work affects all of us every day. Allow me to introduce you.

|

||||

|

||||

|

||||

Image courtesy Swapnil Bhartiya

|

||||

|

||||

### Klaus Knopper ###

|

||||

|

||||

Klaus Knopper, an Austrian developer who lives in Germany, is the founder of Knoppix and Adriana Linux, which he developed for his blind wife.

|

||||

|

||||

Knoppix holds a very special place in the hearts of those Linux users who started using Linux before Ubuntu came along. What makes Knoppix so special is that it popularized the concept of the Live CD. Unlike Windows or Mac OS X, you could run the entire operating system from the CD without installing anything on the system. It allowed new users to test Linux on their systems without formatting the hard drive. The live feature of Linux alone contributed heavily to its popularity.

|

||||

|

||||

|

||||

Image courtesy [Fórum Internacional Software Live/Flickr][2]

|

||||

|

||||

### Lennart Poettering ###

Lennart Poettering is yet another genius from Germany. He has written so many core components of a Linux (as well as BSD) system that it’s hard to keep track. Most of his work aims to replace aging or broken components of Linux systems.

Poettering wrote the modern init system systemd, which shook the Linux world and created a [rift in the Debian community][3].

While Linus Torvalds has no problems with systemd, and praises it, he is not a huge fan of the way systemd developers (including the co-author Kay Sievers) respond to bug reports and criticism. At one point Linus said on the LKML (Linux Kernel Mailing List) that he would [never work with Sievers][4].

Lennart is also the author of PulseAudio, a sound server for Linux, and Avahi, a zero-configuration networking (zeroconf) implementation.

|

||||

|

||||

|

||||

Image courtesy [Meego Com/Flickr][5]

|

||||

|

||||

### Jim Zemlin ###

|

||||

|

||||

Jim Zemlin isn't a developer, but as founder of The Linux Foundation he is certainly one of the most important figures of the Linux world.

|

||||

|

||||

In 2007, The Linux Foundation was formed as the result of a merger between two open source bodies: the Free Standards Group and the Open Source Development Labs. Zemlin was the executive director of the Free Standards Group. After the merger, Zemlin became the executive director of The Linux Foundation and has held that position since.

|

||||

|

||||

Under his leadership, The Linux Foundation has become the central figure in the modern IT world and plays a very critical role for the Linux ecosystem. In order to ensure that key developers like Torvalds and Kroah-Hartman can focus on Linux, the foundation sponsors them as fellows.

|

||||

|

||||

Zemlin also made the foundation a bridge between companies so they can collaborate on Linux while at the same time competing in the market. The foundation also organizes many conferences around the world and [offers many courses for Linux developers][6].

|

||||

|

||||

People may think of Zemlin as Linus Torvalds' boss, but he refers to himself as "Linus Torvalds' janitor."

|

||||

|

||||

|

||||

Image courtesy [Coscup/Flickr][7]

|

||||

|

||||

### Greg Kroah-Hartman ###

|

||||

|

||||