mirror of

https://github.com/LCTT/TranslateProject.git

synced 2025-03-12 01:40:10 +08:00

Merge branch 'master' of https://github.com/LCTT/TranslateProject

This commit is contained in:

commit

56179567d8

84

published/20090127 Anatomy of a Program in Memory.md

Normal file

84

published/20090127 Anatomy of a Program in Memory.md

Normal file

@ -0,0 +1,84 @@

|

||||

剖析内存中的程序之秘

|

||||

============================================================

|

||||

|

||||

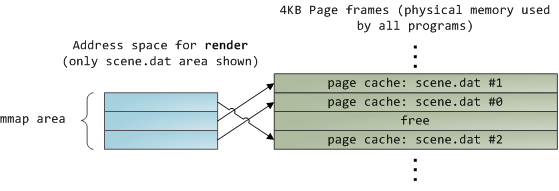

内存管理是操作系统的核心任务;它对程序员和系统管理员来说也是至关重要的。在接下来的几篇文章中,我将从实践出发着眼于内存管理,并深入到它的内部结构。虽然这些概念很通用,但示例大都来自于 32 位 x86 架构的 Linux 和 Windows 上。这第一篇文章描述了在内存中程序如何分布。

|

||||

|

||||

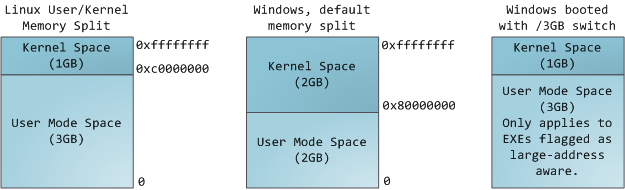

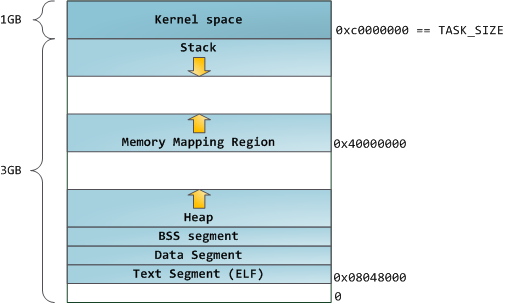

在一个多任务操作系统中的每个进程都运行在它自己的内存“沙箱”中。这个沙箱是一个<ruby>虚拟地址空间<rt>virtual address space</rt></ruby>,在 32 位的模式中它总共有 4GB 的内存地址块。这些虚拟地址是通过内核<ruby>页表<rt>page table</rt></ruby>映射到物理地址的,并且这些虚拟地址是由操作系统内核来维护,进而被进程所消费的。每个进程都有它自己的一组页表,但是这里有点玄机。一旦虚拟地址被启用,这些虚拟地址将被应用到这台电脑上的 _所有软件_,_包括内核本身_。因此,一部分虚拟地址空间必须保留给内核使用:

|

||||

|

||||

|

||||

|

||||

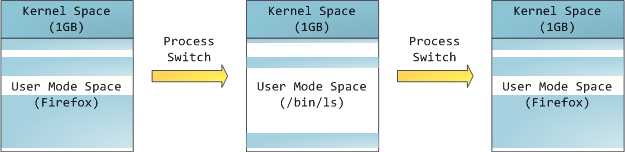

但是,这并**不是**说内核就使用了很多的物理内存,恰恰相反,它只使用了很少一部分可用的地址空间映射到其所需要的物理内存。内核空间在内核页表中被标记为独占使用于 [特权代码][1] (ring 2 或更低),因此,如果一个用户模式的程序尝试去访问它,将触发一个页面故障错误。在 Linux 中,内核空间是始终存在的,并且在所有进程中都映射相同的物理内存。内核代码和数据总是可寻址的,准备随时去处理中断或者系统调用。相比之下,用户模式中的地址空间,在每次进程切换时都会发生变化:

|

||||

|

||||

|

||||

|

||||

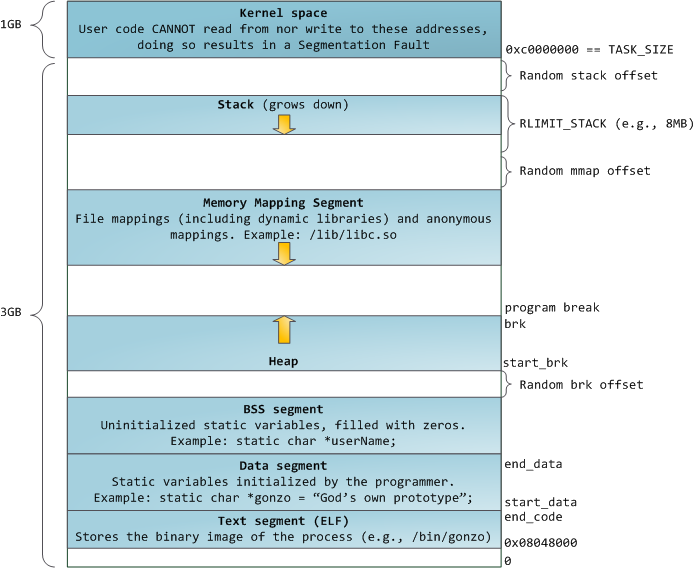

蓝色的区域代表映射到物理地址的虚拟地址空间,白色的区域是尚未映射的部分。在上面的示例中,众所周知的内存“饕餮” Firefox 使用了大量的虚拟内存空间。在地址空间中不同的条带对应了不同的内存段,像<ruby>堆<rt>heap</rt></ruby>、<ruby>栈<rt>stack</rt></ruby>等等。请注意,这些段只是一系列内存地址的简化表示,它与 [Intel 类型的段][2] _并没有任何关系_ 。不过,这是一个在 Linux 进程的标准段布局:

|

||||

|

||||

|

||||

|

||||

当计算机还是快乐、安全的时代时,在机器中的几乎每个进程上,那些段的起始虚拟地址都是**完全相同**的。这将使远程挖掘安全漏洞变得容易。漏洞利用经常需要去引用绝对内存位置:比如在栈中的一个地址,一个库函数的地址,等等。远程攻击可以闭着眼睛选择这个地址,因为地址空间都是相同的。当攻击者们这样做的时候,人们就会受到伤害。因此,地址空间随机化开始流行起来。Linux 会通过在其起始地址上增加偏移量来随机化[栈][3]、[内存映射段][4]、以及[堆][5]。不幸的是,32 位的地址空间是非常拥挤的,为地址空间随机化留下的空间不多,因此 [妨碍了地址空间随机化的效果][6]。

|

||||

|

||||

在进程地址空间中最高的段是栈,在大多数编程语言中它存储本地变量和函数参数。调用一个方法或者函数将推送一个新的<ruby>栈帧<rt>stack frame</rt></ruby>到这个栈。当函数返回时这个栈帧被删除。这个简单的设计,可能是因为数据严格遵循 [后进先出(LIFO)][7] 的次序,这意味着跟踪栈内容时不需要复杂的数据结构 —— 一个指向栈顶的简单指针就可以做到。推入和弹出也因此而非常快且准确。也可能是,持续的栈区重用往往会在 [CPU 缓存][8] 中保持活跃的栈内存,这样可以加快访问速度。进程中的每个线程都有它自己的栈。

|

||||

|

||||

向栈中推送更多的而不是刚合适的数据可能会耗尽栈的映射区域。这将触发一个页面故障,在 Linux 中它是通过 [`expand_stack()`][9] 来处理的,它会去调用 [`acct_stack_growth()`][10] 来检查栈的增长是否正常。如果栈的大小低于 `RLIMIT_STACK` 的值(一般是 8MB 大小),那么这是一个正常的栈增长和程序的合理使用,否则可能是发生了未知问题。这是一个栈大小按需调节的常见机制。但是,栈的大小达到了上述限制,将会发生一个栈溢出,并且,程序将会收到一个<ruby>段故障<rt>Segmentation Fault</rt></ruby>错误。当映射的栈区为满足需要而扩展后,在栈缩小时,映射区域并不会收缩。就像美国联邦政府的预算一样,它只会扩张。

|

||||

|

||||

动态栈增长是 [唯一例外的情况][11] ,当它去访问一个未映射的内存区域,如上图中白色部分,是允许的。除此之外的任何其它访问未映射的内存区域将触发一个页面故障,导致段故障。一些映射区域是只读的,因此,尝试去写入到这些区域也将触发一个段故障。

|

||||

|

||||

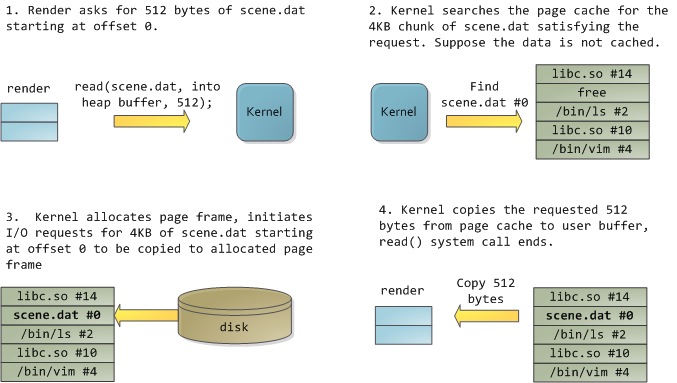

在栈的下面,有内存映射段。在这里,内核将文件内容直接映射到内存。任何应用程序都可以通过 Linux 的 [`mmap()`][12] 系统调用( [代码实现][13])或者 Windows 的 [`CreateFileMapping()`][14] / [`MapViewOfFile()`][15] 来请求一个映射。内存映射是实现文件 I/O 的方便高效的方式。因此,它经常被用于加载动态库。有时候,也被用于去创建一个并不匹配任何文件的匿名内存映射,这种映射经常被用做程序数据的替代。在 Linux 中,如果你通过 [`malloc()`][16] 去请求一个大的内存块,C 库将会创建这样一个匿名映射而不是使用堆内存。这里所谓的“大”表示是超过了`MMAP_THRESHOLD` 设置的字节数,它的缺省值是 128 kB,可以通过 [`mallopt()`][17] 去调整这个设置值。

|

||||

|

||||

接下来讲的是“堆”,就在我们接下来的地址空间中,堆提供运行时内存分配,像栈一样,但又不同于栈的是,它分配的数据生存期要长于分配它的函数。大多数编程语言都为程序提供了堆管理支持。因此,满足内存需要是编程语言运行时和内核共同来做的事情。在 C 中,堆分配的接口是 [`malloc()`][18] 一族,然而在支持垃圾回收的编程语言中,像 C#,这个接口使用 `new` 关键字。

|

||||

|

||||

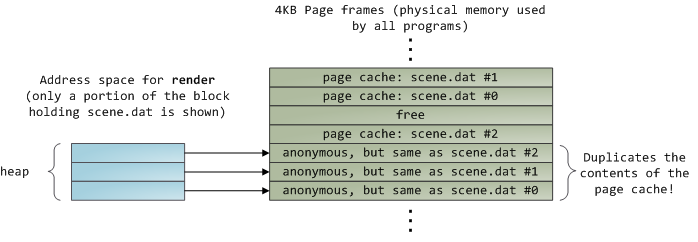

如果在堆中有足够的空间可以满足内存请求,它可以由编程语言运行时来处理内存分配请求,而无需内核参与。否则将通过 [`brk()`][19] 系统调用([代码实现][20])来扩大堆以满足内存请求所需的大小。堆管理是比较 [复杂的][21],在面对我们程序的混乱分配模式时,它通过复杂的算法,努力在速度和内存使用效率之间取得一种平衡。服务一个堆请求所需要的时间可能是非常可观的。实时系统有一个 [特定用途的分配器][22] 去处理这个问题。堆也会出现 _碎片化_ ,如下图所示:

|

||||

|

||||

|

||||

|

||||

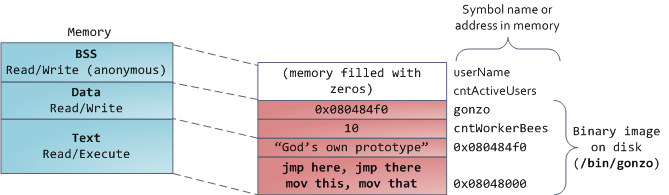

最后,我们抵达了内存的低位段:BSS、数据、以及程序文本。在 C 中,静态(全局)变量的内容都保存在 BSS 和数据中。它们之间的不同之处在于,BSS 保存 _未初始化的_ 静态变量的内容,它的值在源代码中并没有被程序员设置。BSS 内存区域是 _匿名_ 的:它没有映射到任何文件上。如果你在程序中写这样的语句 `static int cntActiveUsers`,`cntActiveUsers` 的内容就保存在 BSS 中。

|

||||

|

||||

反过来,数据段,用于保存在源代码中静态变量 _初始化后_ 的内容。这个内存区域是 _非匿名_ 的。它映射了程序的二进值镜像上的一部分,包含了在源代码中给定初始化值的静态变量内容。因此,如果你在程序中写这样的语句 `static int cntWorkerBees = 10`,那么,`cntWorkerBees` 的内容就保存在数据段中,并且初始值为 `10`。尽管可以通过数据段映射到一个文件,但是这是一个私有内存映射,意味着,如果改变内存,它并不会将这种变化反映到底层的文件上。必须是这样的,否则,分配的全局变量将会改变你磁盘上的二进制文件镜像,这种做法就太不可思议了!

|

||||

|

||||

用图去展示一个数据段是很困难的,因为它使用一个指针。在那种情况下,指针 `gonzo` 的_内容_(一个 4 字节的内存地址)保存在数据段上。然而,它并没有指向一个真实的字符串。而这个字符串存在于文本段中,文本段是只读的,它用于保存你的代码中的类似于字符串常量这样的内容。文本段也会在内存中映射你的二进制文件,但是,如果你的程序写入到这个区域,将会触发一个段故障错误。尽管在 C 中,它比不上从一开始就避免这种指针错误那么有效,但是,这种机制也有助于避免指针错误。这里有一个展示这些段和示例变量的图:

|

||||

|

||||

|

||||

|

||||

你可以通过读取 `/proc/pid_of_process/maps` 文件来检查 Linux 进程中的内存区域。请记住,一个段可以包含很多的区域。例如,每个内存映射的文件一般都在 mmap 段中的它自己的区域中,而动态库有类似于 BSS 和数据一样的额外的区域。下一篇文章中我们将详细说明“<ruby>区域<rt>area</rt></ruby>”的真正含义是什么。此外,有时候人们所说的“<ruby>数据段<rt>data segment</rt></ruby>”是指“<ruby>数据<rt>data</rt></ruby> + BSS + 堆”。

|

||||

|

||||

你可以使用 [nm][23] 和 [objdump][24] 命令去检查二进制镜像,去显示它们的符号、地址、段等等。最终,在 Linux 中上面描述的虚拟地址布局是一个“弹性的”布局,这就是这几年来的缺省情况。它假设 `RLIMIT_STACK` 有一个值。如果没有值的话,Linux 将恢复到如下所示的“经典” 布局:

|

||||

|

||||

|

||||

|

||||

这就是虚拟地址空间布局。接下来的文章将讨论内核如何对这些内存区域保持跟踪、内存映射、文件如何读取和写入、以及内存使用数据的意义。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://duartes.org/gustavo/blog/post/anatomy-of-a-program-in-memory/

|

||||

|

||||

作者:[Gustavo Duarte][a]

|

||||

译者:[qhwdw](https://github.com/qhwdw)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:http://duartes.org/gustavo/blog/about/

|

||||

[1]:http://duartes.org/gustavo/blog/post/cpu-rings-privilege-and-protection

|

||||

[2]:http://duartes.org/gustavo/blog/post/memory-translation-and-segmentation

|

||||

[3]:http://lxr.linux.no/linux+v2.6.28.1/fs/binfmt_elf.c#L542

|

||||

[4]:http://lxr.linux.no/linux+v2.6.28.1/arch/x86/mm/mmap.c#L84

|

||||

[5]:http://lxr.linux.no/linux+v2.6.28.1/arch/x86/kernel/process_32.c#L729

|

||||

[6]:http://www.stanford.edu/~blp/papers/asrandom.pdf

|

||||

[7]:http://en.wikipedia.org/wiki/Lifo

|

||||

[8]:http://duartes.org/gustavo/blog/post/intel-cpu-caches

|

||||

[9]:http://lxr.linux.no/linux+v2.6.28/mm/mmap.c#L1716

|

||||

[10]:http://lxr.linux.no/linux+v2.6.28/mm/mmap.c#L1544

|

||||

[11]:http://lxr.linux.no/linux+v2.6.28.1/arch/x86/mm/fault.c#L692

|

||||

[12]:http://www.kernel.org/doc/man-pages/online/pages/man2/mmap.2.html

|

||||

[13]:http://lxr.linux.no/linux+v2.6.28.1/arch/x86/kernel/sys_i386_32.c#L27

|

||||

[14]:http://msdn.microsoft.com/en-us/library/aa366537(VS.85).aspx

|

||||

[15]:http://msdn.microsoft.com/en-us/library/aa366761(VS.85).aspx

|

||||

[16]:http://www.kernel.org/doc/man-pages/online/pages/man3/malloc.3.html

|

||||

[17]:http://www.kernel.org/doc/man-pages/online/pages/man3/undocumented.3.html

|

||||

[18]:http://www.kernel.org/doc/man-pages/online/pages/man3/malloc.3.html

|

||||

[19]:http://www.kernel.org/doc/man-pages/online/pages/man2/brk.2.html

|

||||

[20]:http://lxr.linux.no/linux+v2.6.28.1/mm/mmap.c#L248

|

||||

[21]:http://g.oswego.edu/dl/html/malloc.html

|

||||

[22]:http://rtportal.upv.es/rtmalloc/

|

||||

[23]:http://manpages.ubuntu.com/manpages/intrepid/en/man1/nm.1.html

|

||||

[24]:http://manpages.ubuntu.com/manpages/intrepid/en/man1/objdump.1.html

|

||||

@ -1,35 +1,43 @@

|

||||

Linux / Unix / Mac OS X 中的 30 个方便的 Bash shell 别名

|

||||

30 个方便的 Bash shell 别名

|

||||

======

|

||||

bash 别名不是把别的,只不过是指向命令的快捷方式而已。`alias` 命令允许用户只输入一个单词就运行任意一个命令或一组命令(包括命令选项和文件名)。执行 `alias` 命令会显示一个所有已定义别名的列表。你可以在 [~/.bashrc][1] 文件中自定义别名。使用别名可以在命令行中减少输入的时间,使工作更流畅,同时增加生产率。

|

||||

|

||||

bash <ruby>别名<rt>alias</rt></ruby>只不过是指向命令的快捷方式而已。`alias` 命令允许用户只输入一个单词就运行任意一个命令或一组命令(包括命令选项和文件名)。执行 `alias` 命令会显示一个所有已定义别名的列表。你可以在 [~/.bashrc][1] 文件中自定义别名。使用别名可以在命令行中减少输入的时间,使工作更流畅,同时增加生产率。

|

||||

|

||||

本文通过 30 个 bash shell 别名的实际案例演示了如何创建和使用别名。

|

||||

|

||||

![30 Useful Bash Shell Aliase For Linux/Unix Users][2]

|

||||

|

||||

## bash alias 的那些事

|

||||

### bash alias 的那些事

|

||||

|

||||

bash shell 中的 alias 命令的语法是这样的:

|

||||

|

||||

### 如何列出 bash 别名

|

||||

```

|

||||

alias [alias-name[=string]...]

|

||||

```

|

||||

|

||||

#### 如何列出 bash 别名

|

||||

|

||||

输入下面的 [alias 命令][3]:

|

||||

|

||||

输入下面的 [alias 命令 ][3]:

|

||||

```

|

||||

alias

|

||||

```

|

||||

|

||||

结果为:

|

||||

|

||||

```

|

||||

alias ..='cd ..'

|

||||

alias amazonbackup='s3backup'

|

||||

alias apt-get='sudo apt-get'

|

||||

...

|

||||

|

||||

```

|

||||

|

||||

默认 alias 命令会列出当前用户定义好的别名。

|

||||

`alias` 命令默认会列出当前用户定义好的别名。

|

||||

|

||||

### 如何定义或者说创建一个 bash shell 别名

|

||||

#### 如何定义或者创建一个 bash shell 别名

|

||||

|

||||

使用下面语法 [创建别名][4]:

|

||||

|

||||

使用下面语法 [创建别名 ][4]:

|

||||

```

|

||||

alias name =value

|

||||

alias name = 'command'

|

||||

@ -38,19 +46,22 @@ alias name = '/path/to/script'

|

||||

alias name = '/path/to/script.pl arg1'

|

||||

```

|

||||

|

||||

举个例子,输入下面命令并回车就会为常用的 `clear`( 清除屏幕)命令创建一个别名 **c**:

|

||||

举个例子,输入下面命令并回车就会为常用的 `clear`(清除屏幕)命令创建一个别名 `c`:

|

||||

|

||||

```

|

||||

alias c = 'clear'

|

||||

```

|

||||

|

||||

然后输入字母 `c` 而不是 `clear` 后回车就会清除屏幕了:

|

||||

|

||||

```

|

||||

c

|

||||

```

|

||||

|

||||

### 如何临时性地禁用 bash 别名

|

||||

#### 如何临时性地禁用 bash 别名

|

||||

|

||||

下面语法可以[临时性地禁用别名][5]:

|

||||

|

||||

下面语法可以[临时性地禁用别名 ][5]:

|

||||

```

|

||||

## path/to/full/command

|

||||

/usr/bin/clear

|

||||

@ -60,37 +71,43 @@ c

|

||||

command ls

|

||||

```

|

||||

|

||||

### 如何删除 bash 别名

|

||||

#### 如何删除 bash 别名

|

||||

|

||||

使用 [unalias 命令来删除别名][6]。其语法为:

|

||||

|

||||

使用 [unalias 命令来删除别名 ][6]。其语法为:

|

||||

```

|

||||

unalias aliasname

|

||||

unalias foo

|

||||

```

|

||||

|

||||

例如,删除我们之前创建的别名 `c`:

|

||||

|

||||

```

|

||||

unalias c

|

||||

```

|

||||

|

||||

你还需要用文本编辑器删掉 [~/.bashrc 文件 ][1] 中的别名定义(参见下一部分内容)。

|

||||

你还需要用文本编辑器删掉 [~/.bashrc 文件][1] 中的别名定义(参见下一部分内容)。

|

||||

|

||||

### 如何让 bash shell 别名永久生效

|

||||

#### 如何让 bash shell 别名永久生效

|

||||

|

||||

别名 `c` 在当前登录会话中依然有效。但当你登出或重启系统后,别名 `c` 就没有了。为了防止出现这个问题,将别名定义写入 [~/.bashrc file][1] 中,输入:

|

||||

|

||||

```

|

||||

vi ~/.bashrc

|

||||

```

|

||||

|

||||

输入下行内容让别名 `c` 对当前用户永久有效:

|

||||

|

||||

```

|

||||

alias c = 'clear'

|

||||

```

|

||||

|

||||

保存并关闭文件就行了。系统级的别名(也就是对所有用户都生效的别名) 可以放在 `/etc/bashrc` 文件中。请注意,alias 命令内建于各种 shell 中,包括 ksh,tcsh/csh,ash,bash 以及其他 shell。

|

||||

保存并关闭文件就行了。系统级的别名(也就是对所有用户都生效的别名)可以放在 `/etc/bashrc` 文件中。请注意,`alias` 命令内建于各种 shell 中,包括 ksh,tcsh/csh,ash,bash 以及其他 shell。

|

||||

|

||||

### 关于特权权限判断

|

||||

#### 关于特权权限判断

|

||||

|

||||

可以将下面代码加入 `~/.bashrc`:

|

||||

|

||||

```

|

||||

# if user is not root, pass all commands via sudo #

|

||||

if [ $UID -ne 0 ]; then

|

||||

@ -99,9 +116,10 @@ if [ $UID -ne 0 ]; then

|

||||

fi

|

||||

```

|

||||

|

||||

### 定义与操作系统类型相关的别名

|

||||

#### 定义与操作系统类型相关的别名

|

||||

|

||||

可以将下面代码加入 `~/.bashrc` [使用 case 语句][7]:

|

||||

|

||||

可以将下面代码加入 `~/.bashrc` [使用 case 语句 ][7]:

|

||||

```

|

||||

### Get os name via uname ###

|

||||

_myos="$(uname)"

|

||||

@ -115,13 +133,14 @@ case $_myos in

|

||||

esac

|

||||

```

|

||||

|

||||

## 30 个 bash shell 别名的案例

|

||||

### 30 个 bash shell 别名的案例

|

||||

|

||||

你可以定义各种类型的别名来节省时间并提高生产率。

|

||||

|

||||

### #1:控制 ls 命令的输出

|

||||

#### #1:控制 ls 命令的输出

|

||||

|

||||

[ls 命令列出目录中的内容][8] 而你可以对输出进行着色:

|

||||

|

||||

[ls 命令列出目录中的内容 ][8] 而你可以对输出进行着色:

|

||||

```

|

||||

## Colorize the ls output ##

|

||||

alias ls = 'ls --color=auto'

|

||||

@ -133,7 +152,8 @@ alias ll = 'ls -la'

|

||||

alias l.= 'ls -d . .. .git .gitignore .gitmodules .travis.yml --color=auto'

|

||||

```

|

||||

|

||||

### #2:控制 cd 命令的行为

|

||||

#### #2:控制 cd 命令的行为

|

||||

|

||||

```

|

||||

## get rid of command not found ##

|

||||

alias cd..= 'cd ..'

|

||||

@ -147,9 +167,10 @@ alias .4= 'cd ../../../../'

|

||||

alias .5= 'cd ../../../../..'

|

||||

```

|

||||

|

||||

### #3:控制 grep 命令的输出

|

||||

#### #3:控制 grep 命令的输出

|

||||

|

||||

[grep 命令是一个用于在纯文本文件中搜索匹配正则表达式的行的命令行工具][9]:

|

||||

|

||||

[grep 命令是一个用于在纯文本文件中搜索匹配正则表达式的行的命令行工具 ][9]:

|

||||

```

|

||||

## Colorize the grep command output for ease of use (good for log files)##

|

||||

alias grep = 'grep --color=auto'

|

||||

@ -157,44 +178,51 @@ alias egrep = 'egrep --color=auto'

|

||||

alias fgrep = 'fgrep --color=auto'

|

||||

```

|

||||

|

||||

### #4:让计算器默认开启 math 库

|

||||

#### #4:让计算器默认开启 math 库

|

||||

|

||||

```

|

||||

alias bc = 'bc -l'

|

||||

```

|

||||

|

||||

### #4:生成 sha1 数字签名

|

||||

#### #4:生成 sha1 数字签名

|

||||

|

||||

```

|

||||

alias sha1 = 'openssl sha1'

|

||||

```

|

||||

|

||||

### #5:自动创建父目录

|

||||

#### #5:自动创建父目录

|

||||

|

||||

[mkdir 命令][10] 用于创建目录:

|

||||

|

||||

[mkdir 命令 ][10] 用于创建目录:

|

||||

```

|

||||

alias mkdir = 'mkdir -pv'

|

||||

```

|

||||

|

||||

### #6:为 diff 输出着色

|

||||

#### #6:为 diff 输出着色

|

||||

|

||||

你可以[使用 diff 来一行行第比较文件][11] 而一个名为 `colordiff` 的工具可以为 diff 输出着色:

|

||||

|

||||

你可以[使用 diff 来一行行第比较文件 ][11] 而一个名为 colordiff 的工具可以为 diff 输出着色:

|

||||

```

|

||||

# install colordiff package :)

|

||||

alias diff = 'colordiff'

|

||||

```

|

||||

|

||||

### #7:让 mount 命令的输出更漂亮,更方便人类阅读

|

||||

#### #7:让 mount 命令的输出更漂亮,更方便人类阅读

|

||||

|

||||

```

|

||||

alias mount = 'mount |column -t'

|

||||

```

|

||||

|

||||

### #8:简化命令以节省时间

|

||||

#### #8:简化命令以节省时间

|

||||

|

||||

```

|

||||

# handy short cuts #

|

||||

alias h = 'history'

|

||||

alias j = 'jobs -l'

|

||||

```

|

||||

|

||||

### #9:创建一系列新命令

|

||||

#### #9:创建一系列新命令

|

||||

|

||||

```

|

||||

alias path = 'echo -e ${PATH//:/\\n}'

|

||||

alias now = 'date +"%T"'

|

||||

@ -202,7 +230,8 @@ alias nowtime =now

|

||||

alias nowdate = 'date +"%d-%m-%Y"'

|

||||

```

|

||||

|

||||

### #10:设置 vim 为默认编辑器

|

||||

#### #10:设置 vim 为默认编辑器

|

||||

|

||||

```

|

||||

alias vi = vim

|

||||

alias svi = 'sudo vi'

|

||||

@ -210,7 +239,8 @@ alias vis = 'vim "+set si"'

|

||||

alias edit = 'vim'

|

||||

```

|

||||

|

||||

### #11:控制网络工具 ping 的输出

|

||||

#### #11:控制网络工具 ping 的输出

|

||||

|

||||

```

|

||||

# Stop after sending count ECHO_REQUEST packets #

|

||||

alias ping = 'ping -c 5'

|

||||

@ -219,16 +249,18 @@ alias ping = 'ping -c 5'

|

||||

alias fastping = 'ping -c 100 -s.2'

|

||||

```

|

||||

|

||||

### #12:显示打开的端口

|

||||

#### #12:显示打开的端口

|

||||

|

||||

使用 [netstat 命令][12] 可以快速列出服务区中所有的 TCP/UDP 端口:

|

||||

|

||||

使用 [netstat 命令 ][12] 可以快速列出服务区中所有的 TCP/UDP 端口:

|

||||

```

|

||||

alias ports = 'netstat -tulanp'

|

||||

```

|

||||

|

||||

### #13:唤醒休眠额服务器

|

||||

#### #13:唤醒休眠的服务器

|

||||

|

||||

[Wake-on-LAN (WOL) 是一个以太网标准][13],可以通过网络消息来开启服务器。你可以使用下面别名来[快速激活 nas 设备][14] 以及服务器:

|

||||

|

||||

[Wake-on-LAN (WOL) 是一个以太网标准 ][13],可以通过网络消息来开启服务器。你可以使用下面别名来[快速激活 nas 设备 ][14] 以及服务器:

|

||||

```

|

||||

## replace mac with your actual server mac address #

|

||||

alias wakeupnas01 = '/usr/bin/wakeonlan 00:11:32:11:15:FC'

|

||||

@ -236,9 +268,10 @@ alias wakeupnas02 = '/usr/bin/wakeonlan 00:11:32:11:15:FD'

|

||||

alias wakeupnas03 = '/usr/bin/wakeonlan 00:11:32:11:15:FE'

|

||||

```

|

||||

|

||||

### #14:控制防火墙 (iptables) 的输出

|

||||

#### #14:控制防火墙 (iptables) 的输出

|

||||

|

||||

[Netfilter 是一款 Linux 操作系统上的主机防火墙][15]。它是 Linux 发行版中的一部分,且默认情况下是激活状态。[这里列出了大多数 Liux 新手防护入侵者最常用的 iptables 方法][16]。

|

||||

|

||||

[Netfilter 是一款 Linux 操作系统上的主机防火墙 ][15]。它是 Linux 发行版中的一部分,且默认情况下是激活状态。[这里列出了大多数 Liux 新手防护入侵者最常用的 iptables 方法 ][16]。

|

||||

```

|

||||

## shortcut for iptables and pass it via sudo#

|

||||

alias ipt = 'sudo /sbin/iptables'

|

||||

@ -251,7 +284,8 @@ alias iptlistfw = 'sudo /sbin/iptables -L FORWARD -n -v --line-numbers'

|

||||

alias firewall =iptlist

|

||||

```

|

||||

|

||||

### #15:使用 curl 调试 web 服务器 /cdn 上的问题

|

||||

#### #15:使用 curl 调试 web 服务器 / CDN 上的问题

|

||||

|

||||

```

|

||||

# get web server headers #

|

||||

alias header = 'curl -I'

|

||||

@ -260,7 +294,8 @@ alias header = 'curl -I'

|

||||

alias headerc = 'curl -I --compress'

|

||||

```

|

||||

|

||||

### #16:增加安全性

|

||||

#### #16:增加安全性

|

||||

|

||||

```

|

||||

# do not delete / or prompt if deleting more than 3 files at a time #

|

||||

alias rm = 'rm -I --preserve-root'

|

||||

@ -276,9 +311,10 @@ alias chmod = 'chmod --preserve-root'

|

||||

alias chgrp = 'chgrp --preserve-root'

|

||||

```

|

||||

|

||||

### #17:更新 Debian Linux 服务器

|

||||

#### #17:更新 Debian Linux 服务器

|

||||

|

||||

[apt-get 命令][17] 用于通过因特网安装软件包 (ftp 或 http)。你也可以一次性升级所有软件包:

|

||||

|

||||

[apt-get 命令 ][17] 用于通过因特网安装软件包 (ftp 或 http)。你也可以一次性升级所有软件包:

|

||||

```

|

||||

# distro specific - Debian / Ubuntu and friends #

|

||||

# install with apt-get

|

||||

@ -289,25 +325,27 @@ alias updatey = "sudo apt-get --yes"

|

||||

alias update = 'sudo apt-get update && sudo apt-get upgrade'

|

||||

```

|

||||

|

||||

### #18:更新 RHEL / CentOS / Fedora Linux 服务器

|

||||

#### #18:更新 RHEL / CentOS / Fedora Linux 服务器

|

||||

|

||||

[yum 命令][18] 是 RHEL / CentOS / Fedora Linux 以及其他基于这些发行版的 Linux 上的软件包管理工具:

|

||||

|

||||

[yum 命令 ][18] 是 RHEL / CentOS / Fedora Linux 以及其他基于这些发行版的 Linux 上的软件包管理工具:

|

||||

```

|

||||

## distrp specifc RHEL/CentOS ##

|

||||

alias update = 'yum update'

|

||||

alias updatey = 'yum -y update'

|

||||

```

|

||||

|

||||

### #19:优化 sudo 和 su 命令

|

||||

#### #19:优化 sudo 和 su 命令

|

||||

|

||||

```

|

||||

# become root #

|

||||

alias root = 'sudo -i'

|

||||

alias su = 'sudo -i'

|

||||

```

|

||||

|

||||

### #20:使用 sudo 执行 halt/reboot 命令

|

||||

#### #20:使用 sudo 执行 halt/reboot 命令

|

||||

|

||||

[shutdown 命令 ][19] 会让 Linux / Unix 系统关机:

|

||||

[shutdown 命令][19] 会让 Linux / Unix 系统关机:

|

||||

```

|

||||

# reboot / halt / poweroff

|

||||

alias reboot = 'sudo /sbin/reboot'

|

||||

@ -316,7 +354,8 @@ alias halt = 'sudo /sbin/halt'

|

||||

alias shutdown = 'sudo /sbin/shutdown'

|

||||

```

|

||||

|

||||

### #21:控制 web 服务器

|

||||

#### #21:控制 web 服务器

|

||||

|

||||

```

|

||||

# also pass it via sudo so whoever is admin can reload it without calling you #

|

||||

alias nginxreload = 'sudo /usr/local/nginx/sbin/nginx -s reload'

|

||||

@ -327,7 +366,8 @@ alias httpdreload = 'sudo /usr/sbin/apachectl -k graceful'

|

||||

alias httpdtest = 'sudo /usr/sbin/apachectl -t && /usr/sbin/apachectl -t -D DUMP_VHOSTS'

|

||||

```

|

||||

|

||||

### #22:与备份相关的别名

|

||||

#### #22:与备份相关的别名

|

||||

|

||||

```

|

||||

# if cron fails or if you want backup on demand just run these commands #

|

||||

# again pass it via sudo so whoever is in admin group can start the job #

|

||||

@ -342,7 +382,8 @@ alias rsnapshotmonthly = 'sudo /home/scripts/admin/scripts/backup/wrapper.rsnaps

|

||||

alias amazonbackup =s3backup

|

||||

```

|

||||

|

||||

### #23:桌面应用相关的别名 - 按需播放的 avi/mp3 文件

|

||||

#### #23:桌面应用相关的别名 - 按需播放的 avi/mp3 文件

|

||||

|

||||

```

|

||||

## play video files in a current directory ##

|

||||

# cd ~/Download/movie-name

|

||||

@ -364,10 +405,10 @@ alias nplaymp3 = 'for i in /nas/multimedia/mp3/*.mp3; do mplayer "$i"; done'

|

||||

alias music = 'mplayer --shuffle *'

|

||||

```

|

||||

|

||||

#### #24:设置系统管理相关命令的默认网卡

|

||||

|

||||

### #24:设置系统管理相关命令的默认网卡

|

||||

[vnstat 一款基于终端的网络流量检测器][20]。[dnstop 是一款分析 DNS 流量的终端工具][21]。[tcptrack 和 iftop 命令显示][22] TCP/UDP 连接方面的信息,它监控网卡并显示其消耗的带宽。

|

||||

|

||||

[vnstat 一款基于终端的网络流量检测器 ][20]。[dnstop 是一款分析 DNS 流量的终端工具 ][21]。[tcptrack 和 iftop 命令显示 ][22] TCP/UDP 连接方面的信息,它监控网卡并显示其消耗的带宽。

|

||||

```

|

||||

## All of our servers eth1 is connected to the Internets via vlan / router etc ##

|

||||

alias dnstop = 'dnstop -l 5 eth1'

|

||||

@ -381,7 +422,8 @@ alias ethtool = 'ethtool eth1'

|

||||

alias iwconfig = 'iwconfig wlan0'

|

||||

```

|

||||

|

||||

### #25:快速获取系统内存,cpu 使用,和 gpu 内存相关信息

|

||||

#### #25:快速获取系统内存,cpu 使用,和 gpu 内存相关信息

|

||||

|

||||

```

|

||||

## pass options to free ##

|

||||

alias meminfo = 'free -m -l -t'

|

||||

@ -404,9 +446,10 @@ alias cpuinfo = 'lscpu'

|

||||

alias gpumeminfo = 'grep -i --color memory /var/log/Xorg.0.log'

|

||||

```

|

||||

|

||||

### #26:控制家用路由器

|

||||

#### #26:控制家用路由器

|

||||

|

||||

`curl` 命令可以用来 [重启 Linksys 路由器][23]。

|

||||

|

||||

curl 命令可以用来 [重启 Linksys 路由器 ][23]。

|

||||

```

|

||||

# Reboot my home Linksys WAG160N / WAG54 / WAG320 / WAG120N Router / Gateway from *nix.

|

||||

alias rebootlinksys = "curl -u 'admin:my-super-password' 'http://192.168.1.2/setup.cgi?todo=reboot'"

|

||||

@ -415,15 +458,17 @@ alias rebootlinksys = "curl -u 'admin:my-super-password' 'http://192.168.1.2/set

|

||||

alias reboottomato = "ssh admin@192.168.1.1 /sbin/reboot"

|

||||

```

|

||||

|

||||

### #27 wget 默认断点续传

|

||||

#### #27 wget 默认断点续传

|

||||

|

||||

[GNU wget 是一款用来从 web 下载文件的自由软件][25]。它支持 HTTP,HTTPS,以及 FTP 协议,而且它也支持断点续传:

|

||||

|

||||

[GNU Wget 是一款用来从 web 下载文件的自由软件 ][25]。它支持 HTTP,HTTPS,以及 FTP 协议,而且它页支持断点续传:

|

||||

```

|

||||

## this one saved by butt so many times ##

|

||||

alias wget = 'wget -c'

|

||||

```

|

||||

|

||||

### #28 使用不同浏览器来测试网站

|

||||

#### #28 使用不同浏览器来测试网站

|

||||

|

||||

```

|

||||

## this one saved by butt so many times ##

|

||||

alias ff4 = '/opt/firefox4/firefox'

|

||||

@ -438,9 +483,10 @@ alias ff =ff13

|

||||

alias browser =chrome

|

||||

```

|

||||

|

||||

### #29:关于 ssh 别名的注意事项

|

||||

#### #29:关于 ssh 别名的注意事项

|

||||

|

||||

不要创建 ssh 别名,代之以 `~/.ssh/config` 这个 OpenSSH SSH 客户端配置文件。它的选项更加丰富。下面是一个例子:

|

||||

|

||||

```

|

||||

Host server10

|

||||

Hostname 1.2.3.4

|

||||

@ -451,12 +497,13 @@ Host server10

|

||||

TCPKeepAlive yes

|

||||

```

|

||||

|

||||

然后你就可以使用下面语句连接 peer1 了:

|

||||

然后你就可以使用下面语句连接 server10 了:

|

||||

|

||||

```

|

||||

$ ssh server10

|

||||

```

|

||||

|

||||

### #30:现在该分享你的别名了

|

||||

#### #30:现在该分享你的别名了

|

||||

|

||||

```

|

||||

## set some other defaults ##

|

||||

@ -486,27 +533,26 @@ alias cdnmdel = '/home/scripts/admin/cdn/purge_cdn_cache --profile akamai --stdi

|

||||

alias amzcdnmdel = '/home/scripts/admin/cdn/purge_cdn_cache --profile amazon --stdin'

|

||||

```

|

||||

|

||||

## 结论

|

||||

### 总结

|

||||

|

||||

本文总结了 *nix bash 别名的多种用法:

|

||||

|

||||

1。为命令设置默认的参数(例如通过 `alias ethtool='ethtool eth0'` 设置 ethtool 命令的默认参数为 eth0)。

|

||||

2。修正错误的拼写(通过 `alias cd。.='cd .。'`让 `cd。.` 变成 `cd .。`)。

|

||||

3。缩减输入。

|

||||

4。设置系统中多版本命令的默认路径(例如 GNU/grep 位于 /usr/local/bin/grep 中而 Unix grep 位于 /bin/grep 中。若想默认使用 GNU grep 则设置别名 `grep='/usr/local/bin/grep'` )。

|

||||

5。通过默认开启命令(例如 rm,mv 等其他命令)的交互参数来增加 Unix 的安全性。

|

||||

6。为老旧的操作系统(比如 MS-DOS 或者其他类似 Unix 的操作系统)创建命令以增加兼容性(比如 `alias del=rm` )。

|

||||

1. 为命令设置默认的参数(例如通过 `alias ethtool='ethtool eth0'` 设置 ethtool 命令的默认参数为 eth0)。

|

||||

2. 修正错误的拼写(通过 `alias cd..='cd ..'`让 `cd..` 变成 `cd ..`)。

|

||||

3. 缩减输入。

|

||||

4. 设置系统中多版本命令的默认路径(例如 GNU/grep 位于 `/usr/local/bin/grep` 中而 Unix grep 位于 `/bin/grep` 中。若想默认使用 GNU grep 则设置别名 `grep='/usr/local/bin/grep'` )。

|

||||

5. 通过默认开启命令(例如 `rm`,`mv` 等其他命令)的交互参数来增加 Unix 的安全性。

|

||||

6. 为老旧的操作系统(比如 MS-DOS 或者其他类似 Unix 的操作系统)创建命令以增加兼容性(比如 `alias del=rm`)。

|

||||

|

||||

我已经分享了多年来为了减少重复输入命令而使用的别名。若你知道或使用的哪些 bash/ksh/csh 别名能够减少输入,请在留言框中分享。

|

||||

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: https://www.cyberciti.biz/tips/bash-aliases-mac-centos-linux-unix.html

|

||||

|

||||

作者:[nixCraft][a]

|

||||

译者:[lujun9972](https://github.com/lujun9972)

|

||||

校对:[校对者ID](https://github.com/校对者ID)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

113

published/20140210 Three steps to learning GDB.md

Normal file

113

published/20140210 Three steps to learning GDB.md

Normal file

@ -0,0 +1,113 @@

|

||||

三步上手 GDB

|

||||

===============

|

||||

|

||||

调试 C 程序,曾让我很困扰。然而当我之前在写我的[操作系统][2]时,我有很多的 Bug 需要调试。我很幸运的使用上了 qemu 模拟器,它允许我将调试器附加到我的操作系统。这个调试器就是 `gdb`。

|

||||

|

||||

我得解释一下,你可以使用 `gdb` 先做一些小事情,因为我发现初学它的时候真的很混乱。我们接下来会在一个小程序中,设置断点,查看内存。.

|

||||

|

||||

### 1、 设断点

|

||||

|

||||

如果你曾经使用过调试器,那你可能已经会设置断点了。

|

||||

|

||||

下面是一个我们要调试的程序(虽然没有任何 Bug):

|

||||

|

||||

```

|

||||

#include <stdio.h>

|

||||

void do_thing() {

|

||||

printf("Hi!\n");

|

||||

}

|

||||

int main() {

|

||||

do_thing();

|

||||

}

|

||||

```

|

||||

|

||||

另存为 `hello.c`. 我们可以使用 `dbg` 调试它,像这样:

|

||||

|

||||

```

|

||||

bork@kiwi ~> gcc -g hello.c -o hello

|

||||

bork@kiwi ~> gdb ./hello

|

||||

```

|

||||

|

||||

以上是带调试信息编译 `hello.c`(为了 `gdb` 可以更好工作),并且它会给我们醒目的提示符,就像这样:

|

||||

|

||||

```

|

||||

(gdb)

|

||||

```

|

||||

|

||||

我们可以使用 `break` 命令设置断点,然后使用 `run` 开始调试程序。

|

||||

|

||||

```

|

||||

(gdb) break do_thing

|

||||

Breakpoint 1 at 0x4004f8

|

||||

(gdb) run

|

||||

Starting program: /home/bork/hello

|

||||

|

||||

Breakpoint 1, 0x00000000004004f8 in do_thing ()

|

||||

```

|

||||

|

||||

程序暂停在了 `do_thing` 开始的地方。

|

||||

|

||||

我们可以通过 `where` 查看我们所在的调用栈。

|

||||

|

||||

```

|

||||

(gdb) where

|

||||

#0 do_thing () at hello.c:3

|

||||

#1 0x08050cdb in main () at hello.c:6

|

||||

(gdb)

|

||||

```

|

||||

|

||||

### 2、 阅读汇编代码

|

||||

|

||||

使用 `disassemble` 命令,我们可以看到这个函数的汇编代码。棒级了,这是 x86 汇编代码。虽然我不是很懂它,但是 `callq` 这一行是 `printf` 函数调用。

|

||||

|

||||

```

|

||||

(gdb) disassemble do_thing

|

||||

Dump of assembler code for function do_thing:

|

||||

0x00000000004004f4 <+0>: push %rbp

|

||||

0x00000000004004f5 <+1>: mov %rsp,%rbp

|

||||

=> 0x00000000004004f8 <+4>: mov $0x40060c,%edi

|

||||

0x00000000004004fd <+9>: callq 0x4003f0

|

||||

0x0000000000400502 <+14>: pop %rbp

|

||||

0x0000000000400503 <+15>: retq

|

||||

```

|

||||

|

||||

你也可以使用 `disassemble` 的缩写 `disas`。

|

||||

|

||||

### 3、 查看内存

|

||||

|

||||

当调试我的内核时,我使用 `gdb` 的主要原因是,以确保内存布局是如我所想的那样。检查内存的命令是 `examine`,或者使用缩写 `x`。我们将使用`x`。

|

||||

|

||||

通过阅读上面的汇编代码,似乎 `0x40060c` 可能是我们所要打印的字符串地址。我们来试一下。

|

||||

|

||||

```

|

||||

(gdb) x/s 0x40060c

|

||||

0x40060c: "Hi!"

|

||||

```

|

||||

|

||||

的确是这样。`x/s` 中 `/s` 部分,意思是“把它作为字符串展示”。我也可以“展示 10 个字符”,像这样:

|

||||

|

||||

```

|

||||

(gdb) x/10c 0x40060c

|

||||

0x40060c: 72 'H' 105 'i' 33 '!' 0 '\000' 1 '\001' 27 '\033' 3 '\003' 59 ';'

|

||||

0x400614: 52 '4' 0 '\000'

|

||||

```

|

||||

|

||||

你可以看到前四个字符是 `H`、`i`、`!` 和 `\0`,并且它们之后的是一些不相关的东西。

|

||||

|

||||

我知道 `gdb` 很多其他的东西,但是我仍然不是很了解它,其中 `x` 和 `break` 让我获得很多。你还可以阅读 [do umentation for examining memory][4]。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: https://jvns.ca/blog/2014/02/10/three-steps-to-learning-gdb/

|

||||

|

||||

作者:[Julia Evans][a]

|

||||

译者:[Torival](https://github.com/Torival)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:https://jvns.ca

|

||||

[1]:https://jvns.ca/categories/spytools

|

||||

[2]:https://jvns.ca/blog/categories/kernel

|

||||

[3]:https://twitter.com/mgedmin

|

||||

[4]:https://ftp.gnu.org/old-gnu/Manuals/gdb-5.1.1/html_chapter/gdb_9.html#SEC56

|

||||

239

published/20160625 Trying out LXD containers on our Ubuntu.md

Normal file

239

published/20160625 Trying out LXD containers on our Ubuntu.md

Normal file

@ -0,0 +1,239 @@

|

||||

在 Ubuntu 上体验 LXD 容器

|

||||

======

|

||||

|

||||

本文的主角是容器,一种类似虚拟机但更轻量级的构造。你可以轻易地在你的 Ubuntu 桌面系统中创建一堆容器!

|

||||

|

||||

虚拟机会虚拟出整个电脑让你来安装客户机操作系统。**相比之下**,容器**复用**了主机的 Linux 内核,只是简单地 **包容** 了我们选择的根文件系统(也就是运行时环境)。Linux 内核有很多功能可以将运行的 Linux 容器与我们的主机分割开(也就是我们的 Ubuntu 桌面)。

|

||||

|

||||

Linux 本身需要一些手工操作来直接管理他们。好在,有 LXD(读音为 Lex-deeh),这是一款为我们管理 Linux 容器的服务。

|

||||

|

||||

我们将会看到如何:

|

||||

|

||||

1. 在我们的 Ubuntu 桌面上配置容器,

|

||||

2. 创建容器,

|

||||

3. 安装一台 web 服务器,

|

||||

4. 测试一下这台 web 服务器,以及

|

||||

5. 清理所有的东西。

|

||||

|

||||

### 设置 Ubuntu 容器

|

||||

|

||||

如果你安装的是 Ubuntu 16.04,那么你什么都不用做。只要安装下面所列出的一些额外的包就行了。若你安装的是 Ubuntu 14.04.x 或 Ubuntu 15.10,那么按照 [LXD 2.0 系列(二):安装与配置][1] 来进行一些操作,然后再回来。

|

||||

|

||||

确保已经更新了包列表:

|

||||

|

||||

```

|

||||

sudo apt update

|

||||

sudo apt upgrade

|

||||

```

|

||||

|

||||

安装 `lxd` 包:

|

||||

|

||||

```

|

||||

sudo apt install lxd

|

||||

```

|

||||

|

||||

若你安装的是 Ubuntu 16.04,那么还可以让你的容器文件以 ZFS 文件系统的格式进行存储。Ubuntu 16.04 的 Linux kernel 包含了支持 ZFS 必要的内核模块。若要让 LXD 使用 ZFS 进行存储,我们只需要安装 ZFS 工具包。没有 ZFS,容器会在主机文件系统中以单独的文件形式进行存储。通过 ZFS,我们就有了写入时拷贝等功能,可以让任务完成更快一些。

|

||||

|

||||

安装 `zfsutils-linux` 包(若你安装的是 Ubuntu 16.04.x):

|

||||

|

||||

```

|

||||

sudo apt install zfsutils-linux

|

||||

```

|

||||

|

||||

安装好 LXD 后,包安装脚本应该会将你加入 `lxd` 组。该组成员可以使你无需通过 `sudo` 就能直接使用 LXD 管理容器。根据 Linux 的习惯,**你需要先登出桌面会话然后再登录** 才能应用 `lxd` 的组成员关系。(若你是高手,也可以通过在当前 shell 中执行 `newgrp lxd` 命令,就不用重登录了)。

|

||||

|

||||

在开始使用前,LXD 需要初始化存储和网络参数。

|

||||

|

||||

运行下面命令:

|

||||

|

||||

```

|

||||

$ sudo lxd init

|

||||

Name of the storage backend to use (dir or zfs): zfs

|

||||

Create a new ZFS pool (yes/no)? yes

|

||||

Name of the new ZFS pool: lxd-pool

|

||||

Would you like to use an existing block device (yes/no)? no

|

||||

Size in GB of the new loop device (1GB minimum): 30

|

||||

Would you like LXD to be available over the network (yes/no)? no

|

||||

Do you want to configure the LXD bridge (yes/no)? yes

|

||||

> You will be asked about the network bridge configuration. Accept all defaults and continue.

|

||||

Warning: Stopping lxd.service, but it can still be activated by:

|

||||

lxd.socket

|

||||

LXD has been successfully configured.

|

||||

$ _

|

||||

```

|

||||

|

||||

我们在一个(单独)的文件而不是块设备(即分区)中构建了一个文件系统来作为 ZFS 池,因此我们无需进行额外的分区操作。在本例中我指定了 30GB 大小,这个空间取之于根(`/`) 文件系统中。这个文件就是 `/var/lib/lxd/zfs.img`。

|

||||

|

||||

行了!最初的配置完成了。若有问题,或者想了解其他信息,请阅读 https://www.stgraber.org/2016/03/15/lxd-2-0-installing-and-configuring-lxd-212/ 。

|

||||

|

||||

### 创建第一个容器

|

||||

|

||||

所有 LXD 的管理操作都可以通过 `lxc` 命令来进行。我们通过给 `lxc` 不同参数来管理容器。

|

||||

|

||||

```

|

||||

lxc list

|

||||

```

|

||||

|

||||

可以列出所有已经安装的容器。很明显,这个列表现在是空的,但这表示我们的安装是没问题的。

|

||||

|

||||

```

|

||||

lxc image list

|

||||

```

|

||||

|

||||

列出可以用来启动容器的(已经缓存的)镜像列表。很明显这个列表也是空的,但这也说明我们的安装是没问题的。

|

||||

|

||||

```

|

||||

lxc image list ubuntu:

|

||||

```

|

||||

|

||||

列出可以下载并启动容器的远程镜像。而且指定了显示 Ubuntu 镜像。

|

||||

|

||||

```

|

||||

lxc image list images:

|

||||

```

|

||||

|

||||

列出可以用来启动容器的(已经缓存的)各种发行版的镜像列表。这会列出各种发行版的镜像比如 Alpine、Debian、Gentoo、Opensuse 以及 Fedora。

|

||||

|

||||

让我们启动一个 Ubuntu 16.04 容器,并称之为 `c1`:

|

||||

|

||||

```

|

||||

$ lxc launch ubuntu:x c1

|

||||

Creating c1

|

||||

Starting c1

|

||||

$

|

||||

```

|

||||

|

||||

我们使用 `launch` 动作,然后选择镜像 `ubuntu:x` (`x` 表示 Xenial/16.04 镜像),最后我们使用名字 `c1` 作为容器的名称。

|

||||

|

||||

让我们来看看安装好的首个容器,

|

||||

|

||||

```

|

||||

$ lxc list

|

||||

|

||||

+---------|---------|----------------------|------|------------|-----------+

|

||||

| NAME | STATE | IPV4 | IPV6 | TYPE | SNAPSHOTS |

|

||||

+---------|---------|----------------------|------|------------|-----------+

|

||||

| c1 | RUNNING | 10.173.82.158 (eth0) | | PERSISTENT | 0 |

|

||||

+---------|---------|----------------------|------|------------|-----------+

|

||||

```

|

||||

|

||||

我们的首个容器 c1 已经运行起来了,它还有自己的 IP 地址(可以本地访问)。我们可以开始用它了!

|

||||

|

||||

### 安装 web 服务器

|

||||

|

||||

我们可以在容器中运行命令。运行命令的动作为 `exec`。

|

||||

|

||||

```

|

||||

$ lxc exec c1 -- uptime

|

||||

11:47:25 up 2 min,0 users,load average:0.07,0.05,0.04

|

||||

$ _

|

||||

```

|

||||

|

||||

在 `exec` 后面,我们指定容器、最后输入要在容器中运行的命令。该容器的运行时间只有 2 分钟,这是个新出炉的容器:-)。

|

||||

|

||||

命令行中的 `--` 跟我们 shell 的参数处理过程有关。若我们的命令没有任何参数,则完全可以省略 `-`。

|

||||

|

||||

```

|

||||

$ lxc exec c1 -- df -h

|

||||

```

|

||||

|

||||

这是一个必须要 `-` 的例子,由于我们的命令使用了参数 `-h`。若省略了 `-`,会报错。

|

||||

|

||||

然后我们运行容器中的 shell 来更新包列表。

|

||||

|

||||

```

|

||||

$ lxc exec c1 bash

|

||||

root@c1:~# apt update

|

||||

Ign http://archive.ubuntu.com trusty InRelease

|

||||

Get:1 http://archive.ubuntu.com trusty-updates InRelease [65.9 kB]

|

||||

Get:2 http://security.ubuntu.com trusty-security InRelease [65.9 kB]

|

||||

...

|

||||

Hit http://archive.ubuntu.com trusty/universe Translation-en

|

||||

Fetched 11.2 MB in 9s (1228 kB/s)

|

||||

Reading package lists... Done

|

||||

root@c1:~# apt upgrade

|

||||

Reading package lists... Done

|

||||

Building dependency tree

|

||||

...

|

||||

Processing triggers for man-db (2.6.7.1-1ubuntu1) ...

|

||||

Setting up dpkg (1.17.5ubuntu5.7) ...

|

||||

root@c1:~# _

|

||||

```

|

||||

|

||||

我们使用 nginx 来做 web 服务器。nginx 在某些方面要比 Apache web 服务器更酷一些。

|

||||

|

||||

```

|

||||

root@c1:~# apt install nginx

|

||||

Reading package lists... Done

|

||||

Building dependency tree

|

||||

...

|

||||

Setting up nginx-core (1.4.6-1ubuntu3.5) ...

|

||||

Setting up nginx (1.4.6-1ubuntu3.5) ...

|

||||

Processing triggers for libc-bin (2.19-0ubuntu6.9) ...

|

||||

root@c1:~# _

|

||||

```

|

||||

|

||||

让我们用浏览器访问一下这个 web 服务器。记住 IP 地址为 10.173.82.158,因此你需要在浏览器中输入这个 IP。

|

||||

|

||||

[![lxd-nginx][2]][3]

|

||||

|

||||

让我们对页面文字做一些小改动。回到容器中,进入默认 HTML 页面的目录中。

|

||||

|

||||

```

|

||||

root@c1:~# cd /var/www/html/

|

||||

root@c1:/var/www/html# ls -l

|

||||

total 2

|

||||

-rw-r--r-- 1 root root 612 Jun 25 12:15 index.nginx-debian.html

|

||||

root@c1:/var/www/html#

|

||||

```

|

||||

|

||||

使用 nano 编辑文件,然后保存:

|

||||

|

||||

[![lxd-nginx-nano][4]][5]

|

||||

|

||||

之后,再刷一下页面看看,

|

||||

|

||||

[![lxd-nginx-modified][6]][7]

|

||||

|

||||

### 清理

|

||||

|

||||

让我们清理一下这个容器,也就是删掉它。当需要的时候我们可以很方便地创建一个新容器出来。

|

||||

|

||||

```

|

||||

$ lxc list

|

||||

+---------+---------+----------------------+------+------------+-----------+

|

||||

| NAME | STATE | IPV4 | IPV6 | TYPE | SNAPSHOTS |

|

||||

+---------+---------+----------------------+------+------------+-----------+

|

||||

| c1 | RUNNING | 10.173.82.169 (eth0) | | PERSISTENT | 0 |

|

||||

+---------+---------+----------------------+------+------------+-----------+

|

||||

$ lxc stop c1

|

||||

$ lxc delete c1

|

||||

$ lxc list

|

||||

+---------+---------+----------------------+------+------------+-----------+

|

||||

| NAME | STATE | IPV4 | IPV6 | TYPE | SNAPSHOTS |

|

||||

+---------+---------+----------------------+------+------------+-----------+

|

||||

+---------+---------+----------------------+------+------------+-----------+

|

||||

```

|

||||

|

||||

我们停止(关闭)这个容器,然后删掉它了。

|

||||

|

||||

本文至此就结束了。关于容器有很多玩法。而这只是配置 Ubuntu 并尝试使用容器的第一步而已。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: https://blog.simos.info/trying-out-lxd-containers-on-our-ubuntu/

|

||||

|

||||

作者:[Simos Xenitellis][a]

|

||||

译者:[lujun9972](https://github.com/lujun9972)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:https://blog.simos.info/author/simos/

|

||||

[1]:https://linux.cn/article-7687-1.html

|

||||

[2]:https://i2.wp.com/blog.simos.info/wp-content/uploads/2016/06/lxd-nginx.png?resize=564%2C269&ssl=1

|

||||

[3]:https://i2.wp.com/blog.simos.info/wp-content/uploads/2016/06/lxd-nginx.png?ssl=1

|

||||

[4]:https://i2.wp.com/blog.simos.info/wp-content/uploads/2016/06/lxd-nginx-nano.png?resize=750%2C424&ssl=1

|

||||

[5]:https://i2.wp.com/blog.simos.info/wp-content/uploads/2016/06/lxd-nginx-nano.png?ssl=1

|

||||

[6]:https://i1.wp.com/blog.simos.info/wp-content/uploads/2016/06/lxd-nginx-modified.png?resize=595%2C317&ssl=1

|

||||

[7]:https://i1.wp.com/blog.simos.info/wp-content/uploads/2016/06/lxd-nginx-modified.png?ssl=1

|

||||

255

published/20160808 Top 10 Command Line Games For Linux.md

Normal file

255

published/20160808 Top 10 Command Line Games For Linux.md

Normal file

@ -0,0 +1,255 @@

|

||||

十大 Linux 命令行游戏

|

||||

======

|

||||

|

||||

概要: 本文列举了 Linux 中最好的命令行游戏。

|

||||

|

||||

Linux 从来都不是游戏的首选操作系统,尽管近日来 [Linux 的游戏][1]提供了很多,你也可以从许多资源[下载到 Linux 游戏][2]。

|

||||

|

||||

也有专门的 [游戏版 Linux][3]。没错,确实有。但是今天,我们并不是要欣赏游戏版 Linux。

|

||||

|

||||

Linux 有一个超过 Windows 的优势。它拥有一个强大的 Linux 终端。在 Linux 终端上,你可以做很多事情,包括玩 **命令行游戏**。

|

||||

|

||||

当然,我们都是 Linux 终端的骨灰粉。终端游戏轻便、快速、有地狱般的魔力。而这最有意思的事情是,你可以在 Linux 终端上重温大量经典游戏。

|

||||

|

||||

### 最好的 Linux 终端游戏

|

||||

|

||||

来揭秘这张榜单,找出 Linux 终端最好的游戏。

|

||||

|

||||

#### 1. Bastet

|

||||

|

||||

谁还没花上几个小时玩[俄罗斯方块][4]?它简单而且容易上瘾。 Bastet 就是 Linux 版的俄罗斯方块。

|

||||

|

||||

![Linux 终端游戏 Bastet][5]

|

||||

|

||||

使用下面的命令获取 Bastet:

|

||||

|

||||

```

|

||||

sudo apt install bastet

|

||||

```

|

||||

|

||||

运行下列命令,在终端上开始这个游戏:

|

||||

|

||||

```

|

||||

bastet

|

||||

```

|

||||

|

||||

使用空格键旋转方块,方向键控制方块移动。

|

||||

|

||||

#### 2. Ninvaders

|

||||

|

||||

Space Invaders(太空侵略者)。我仍记得这个游戏里,和我兄弟为了最高分而比拼。这是最好的街机游戏之一。

|

||||

|

||||

![Linux 终端游戏 nInvaders][6]

|

||||

|

||||

复制粘贴这段代码安装 Ninvaders。

|

||||

|

||||

```

|

||||

sudo apt-get install ninvaders

|

||||

```

|

||||

|

||||

使用下面的命令开始游戏:

|

||||

|

||||

```

|

||||

ninvaders

|

||||

```

|

||||

|

||||

方向键移动太空飞船。空格键射击外星人。

|

||||

|

||||

[推荐阅读:2016 你可以开始的 Linux 游戏 Top 10][21]

|

||||

|

||||

#### 3. Pacman4console

|

||||

|

||||

是的,这个就是街机之王。Pacman4console 是最受欢迎的街机游戏 Pacman(吃豆人)的终端版。

|

||||

|

||||

![Linux 命令行吃豆豆游戏 Pacman4console][7]

|

||||

|

||||

使用以下命令获取 pacman4console:

|

||||

|

||||

```

|

||||

sudo apt-get install pacman4console

|

||||

```

|

||||

|

||||

打开终端,建议使用最大的终端界面。键入以下命令启动游戏:

|

||||

|

||||

```

|

||||

pacman4console

|

||||

```

|

||||

|

||||

使用方向键控制移动。

|

||||

|

||||

#### 4. nSnake

|

||||

|

||||

记得在老式诺基亚手机里玩的贪吃蛇游戏吗?

|

||||

|

||||

这个游戏让我在很长时间内着迷于手机。我曾经设计过各种姿态去获得更长的蛇身。

|

||||

|

||||

![nsnake : Linux 终端上的贪吃蛇游戏][8]

|

||||

|

||||

我们拥有 [Linux 终端上的贪吃蛇游戏][9] 得感谢 [nSnake][9]。使用下面的命令安装它:

|

||||

|

||||

```

|

||||

sudo apt-get install nsnake

|

||||

```

|

||||

|

||||

键入下面的命令开始游戏:

|

||||

|

||||

```

|

||||

nsnake

|

||||

```

|

||||

|

||||

使用方向键控制蛇身并喂它。

|

||||

|

||||

#### 5. Greed

|

||||

|

||||

Greed 有点像 Tron(类似贪吃蛇的进化版),但是减少了速度,也没那么刺激。

|

||||

|

||||

你当前的位置由闪烁的 ‘@’ 表示。你被数字所环绕,你可以在四个方向任意移动。

|

||||

|

||||

你选择的移动方向上标识的数字,就是你能移动的步数。你将重复这个步骤。走过的路不能再走,如果你无路可走,游戏结束。

|

||||

|

||||

似乎我让它听起来变得更复杂了。

|

||||

|

||||

![Greed : 命令行上的 Tron][10]

|

||||

|

||||

通过下列命令获取 Greed:

|

||||

|

||||

```

|

||||

sudo apt-get install greed

|

||||

```

|

||||

|

||||

通过下列命令启动游戏,使用方向键控制游戏。

|

||||

|

||||

```

|

||||

greed

|

||||

```

|

||||

|

||||

#### 6. Air Traffic Controller

|

||||

|

||||

还有什么比做飞行员更有意思的?那就是空中交通管制员。在你的终端中,你可以模拟一个空中交通系统。说实话,在终端里管理空中交通蛮有意思的。

|

||||

|

||||

![Linux 空中交通管理员][11]

|

||||

|

||||

使用下列命令安装游戏:

|

||||

|

||||

```

|

||||

sudo apt-get install bsdgames

|

||||

```

|

||||

|

||||

键入下列命令启动游戏:

|

||||

|

||||

```

|

||||

atc

|

||||

```

|

||||

|

||||

ATC 不是孩子玩的游戏。建议查看官方文档。

|

||||

|

||||

#### 7. Backgammon(双陆棋)

|

||||

|

||||

无论之前你有没有玩过 [双陆棋][12],你都应该看看这个。 它的说明书和控制手册都非常友好。如果你喜欢,可以挑战你的电脑或者你的朋友。

|

||||

|

||||

![Linux 终端上的双陆棋][13]

|

||||

|

||||

使用下列命令安装双陆棋:

|

||||

|

||||

```

|

||||

sudo apt-get install bsdgames

|

||||

```

|

||||

|

||||

键入下列命令启动游戏:

|

||||

|

||||

```

|

||||

backgammon

|

||||

```

|

||||

|

||||

当你提示游戏规则时,回复 ‘y’ 即可。

|

||||

|

||||

#### 8. Moon Buggy

|

||||

|

||||

跳跃、开火。欢乐时光不必多言。

|

||||

|

||||

![Moon buggy][14]

|

||||

|

||||

使用下列命令安装游戏:

|

||||

|

||||

```

|

||||

sudo apt-get install moon-buggy

|

||||

```

|

||||

|

||||

使用下列命令启动游戏:

|

||||

|

||||

```

|

||||

moon-buggy

|

||||

```

|

||||

|

||||

空格跳跃,‘a’ 或者 ‘l’射击。尽情享受吧。

|

||||

|

||||

#### 9. 2048

|

||||

|

||||

2048 可以活跃你的大脑。[2048][15] 是一个策咯游戏,很容易上瘾。以获取 2048 分为目标。

|

||||

|

||||

![Linux 终端上的 2048][16]

|

||||

|

||||

复制粘贴下面的命令安装游戏:

|

||||

|

||||

```

|

||||

wget https://raw.githubusercontent.com/mevdschee/2048.c/master/2048.c

|

||||

|

||||

gcc -o 2048 2048.c

|

||||

```

|

||||

|

||||

键入下列命令启动游戏:

|

||||

|

||||

```

|

||||

./2048

|

||||

```

|

||||

|

||||

#### 10. Tron

|

||||

|

||||

没有动作类游戏,这张榜单怎么可能结束?

|

||||

|

||||

![Linux 终端游戏 Tron][17]

|

||||

|

||||

是的,Linux 终端可以实现这种精力充沛的游戏 Tron。为接下来迅捷的反应做准备吧。无需被下载和安装困扰。一个命令即可启动游戏,你只需要一个网络连接:

|

||||

|

||||

```

|

||||

ssh sshtron.zachlatta.com

|

||||

```

|

||||

|

||||

如果有别的在线游戏者,你可以多人游戏。了解更多:[Linux 终端游戏 Tron][18]。

|

||||

|

||||

### 你看上了哪一款?

|

||||

|

||||

伙计,十大 Linux 终端游戏都分享给你了。我猜你现在正准备键入 `ctrl+alt+T`(终端快捷键) 了。榜单中那个是你最喜欢的游戏?或者你有其它的终端游戏么?尽情分享吧!

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: https://itsfoss.com/best-command-line-games-linux/

|

||||

|

||||

作者:[Aquil Roshan][a]

|

||||

译者:[CYLeft](https://github.com/CYleft)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:https://itsfoss.com/author/aquil/

|

||||

[1]:https://linux.cn/article-7316-1.html

|

||||

[2]:https://itsfoss.com/download-linux-games/

|

||||

[3]:https://itsfoss.com/manjaro-gaming-linux/

|

||||

[4]:https://en.wikipedia.org/wiki/Tetris

|

||||

[5]:https://4bds6hergc-flywheel.netdna-ssl.com/wp-content/uploads/2016/08/bastet.jpg

|

||||

[6]:https://4bds6hergc-flywheel.netdna-ssl.com/wp-content/uploads/2016/08/ninvaders.jpg

|

||||

[7]:https://4bds6hergc-flywheel.netdna-ssl.com/wp-content/uploads/2016/08/pacman.jpg

|

||||

[8]:https://4bds6hergc-flywheel.netdna-ssl.com/wp-content/uploads/2016/08/nsnake.jpg

|

||||

[9]:https://itsfoss.com/nsnake-play-classic-snake-game-linux-terminal/

|

||||

[10]:https://4bds6hergc-flywheel.netdna-ssl.com/wp-content/uploads/2016/08/greed.jpg

|

||||

[11]:https://4bds6hergc-flywheel.netdna-ssl.com/wp-content/uploads/2016/08/atc.jpg

|

||||

[12]:https://en.wikipedia.org/wiki/Backgammon

|

||||

[13]:https://4bds6hergc-flywheel.netdna-ssl.com/wp-content/uploads/2016/08/backgammon.jpg

|

||||

[14]:https://4bds6hergc-flywheel.netdna-ssl.com/wp-content/uploads/2016/08/moon-buggy.jpg

|

||||

[15]:https://itsfoss.com/2048-offline-play-ubuntu/

|

||||

[16]:https://4bds6hergc-flywheel.netdna-ssl.com/wp-content/uploads/2016/08/2048.jpg

|

||||

[17]:https://4bds6hergc-flywheel.netdna-ssl.com/wp-content/uploads/2016/08/tron.jpg

|

||||

[18]:https://itsfoss.com/play-tron-game-linux-terminal/

|

||||

[19]:https://twitter.com/abhishek_pc

|

||||

[20]:https://itsfoss.com/linux-gaming-guide/

|

||||

[21]:https://itsfoss.com/best-linux-games/

|

||||

@ -0,0 +1,135 @@

|

||||

当你在 Linux 上启动一个进程时会发生什么?

|

||||

===========================================================

|

||||

|

||||

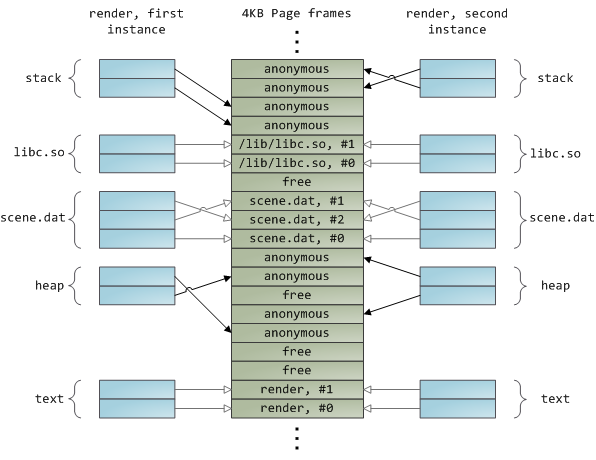

本文是关于 fork 和 exec 是如何在 Unix 上工作的。你或许已经知道,也有人还不知道。几年前当我了解到这些时,我惊叹不已。

|

||||

|

||||

我们要做的是启动一个进程。我们已经在博客上讨论了很多关于**系统调用**的问题,每当你启动一个进程或者打开一个文件,这都是一个系统调用。所以你可能会认为有这样的系统调用:

|

||||

|

||||

```

|

||||

start_process(["ls", "-l", "my_cool_directory"])

|

||||

```

|

||||

|

||||

这是一个合理的想法,显然这是它在 DOS 或 Windows 中的工作原理。我想说的是,这并不是 Linux 上的工作原理。但是,我查阅了文档,确实有一个 [posix_spawn][2] 的系统调用基本上是这样做的,不过这不在本文的讨论范围内。

|

||||

|

||||

### fork 和 exec

|

||||

|

||||

Linux 上的 `posix_spawn` 是通过两个系统调用实现的,分别是 `fork` 和 `exec`(实际上是 `execve`),这些都是人们常常使用的。尽管在 OS X 上,人们使用 `posix_spawn`,而 `fork` 和 `exec` 是不提倡的,但我们将讨论的是 Linux。

|

||||

|

||||

Linux 中的每个进程都存在于“进程树”中。你可以通过运行 `pstree` 命令查看进程树。树的根是 `init`,进程号是 1。每个进程(`init` 除外)都有一个父进程,一个进程都可以有很多子进程。

|

||||

|

||||

所以,假设我要启动一个名为 `ls` 的进程来列出一个目录。我是不是只要发起一个进程 `ls` 就好了呢?不是的。

|

||||

|

||||

我要做的是,创建一个子进程,这个子进程是我(`me`)本身的一个克隆,然后这个子进程的“脑子”被吃掉了,变成 `ls`。

|

||||

|

||||

开始是这样的:

|

||||

|

||||

```

|

||||

my parent

|

||||

|- me

|

||||

```

|

||||

|

||||

然后运行 `fork()`,生成一个子进程,是我(`me`)自己的一份克隆:

|

||||

|

||||

```

|

||||

my parent

|

||||

|- me

|

||||

|-- clone of me

|

||||

```

|

||||

|

||||

然后我让该子进程运行 `exec("ls")`,变成这样:

|

||||

|

||||

```

|

||||

my parent

|

||||

|- me

|

||||

|-- ls

|

||||

```

|

||||

|

||||

当 ls 命令结束后,我几乎又变回了我自己:

|

||||

|

||||

```

|

||||

my parent

|

||||

|- me

|

||||

|-- ls (zombie)

|

||||

```

|

||||

|

||||

在这时 `ls` 其实是一个僵尸进程。这意味着它已经死了,但它还在等我,以防我需要检查它的返回值(使用 `wait` 系统调用)。一旦我获得了它的返回值,我将再次恢复独自一人的状态。

|

||||

|

||||

```

|

||||

my parent

|

||||

|- me

|

||||

```

|

||||

|

||||

### fork 和 exec 的代码实现

|

||||

|

||||

如果你要编写一个 shell,这是你必须做的一个练习(这是一个非常有趣和有启发性的项目。Kamal 在 Github 上有一个很棒的研讨会:[https://github.com/kamalmarhubi/shell-workshop][3])。

|

||||

|

||||

事实证明,有了 C 或 Python 的技能,你可以在几个小时内编写一个非常简单的 shell,像 bash 一样。(至少如果你旁边能有个人多少懂一点,如果没有的话用时会久一点。)我已经完成啦,真的很棒。

|

||||

|

||||

这就是 `fork` 和 `exec` 在程序中的实现。我写了一段 C 的伪代码。请记住,[fork 也可能会失败哦。][4]

|

||||

|

||||

```

|

||||

int pid = fork();

|

||||

// 我要分身啦

|

||||

// “我”是谁呢?可能是子进程也可能是父进程

|

||||

if (pid == 0) {

|

||||

// 我现在是子进程

|

||||

// “ls” 吃掉了我脑子,然后变成一个完全不一样的进程

|

||||

exec(["ls"])

|

||||

} else if (pid == -1) {

|

||||

// 天啊,fork 失败了,简直是灾难!

|

||||

} else {

|

||||

// 我是父进程耶

|

||||

// 继续做一个酷酷的美男子吧

|

||||

// 需要的话,我可以等待子进程结束

|

||||

}

|

||||

```

|

||||

|

||||

### 上文提到的“脑子被吃掉”是什么意思呢?

|

||||

|

||||

进程有很多属性:

|

||||

|

||||

* 打开的文件(包括打开的网络连接)

|

||||

* 环境变量

|

||||

* 信号处理程序(在程序上运行 Ctrl + C 时会发生什么?)

|

||||

* 内存(你的“地址空间”)

|

||||

* 寄存器

|

||||

* 可执行文件(`/proc/$pid/exe`)

|

||||

* cgroups 和命名空间(与 Linux 容器相关)

|

||||

* 当前的工作目录

|

||||

* 运行程序的用户

|

||||

* 其他我还没想到的

|

||||

|

||||

当你运行 `execve` 并让另一个程序吃掉你的脑子的时候,实际上几乎所有东西都是相同的! 你们有相同的环境变量、信号处理程序和打开的文件等等。

|

||||

|

||||

唯一改变的是,内存、寄存器以及正在运行的程序,这可是件大事。

|

||||

|

||||

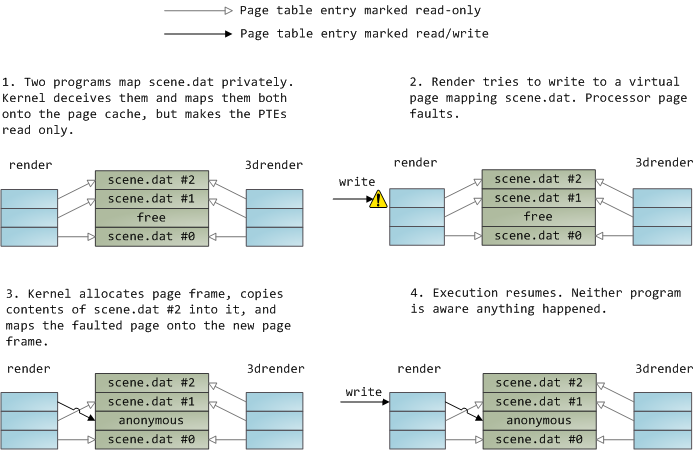

### 为何 fork 并非那么耗费资源(写入时复制)

|

||||

|

||||

你可能会问:“如果我有一个使用了 2GB 内存的进程,这是否意味着每次我启动一个子进程,所有 2 GB 的内存都要被复制一次?这听起来要耗费很多资源!”

|

||||

|

||||

事实上,Linux 为 `fork()` 调用实现了<ruby>写时复制<rt>copy on write</rt></ruby>,对于新进程的 2GB 内存来说,就像是“看看旧的进程就好了,是一样的!”。然后,当如果任一进程试图写入内存,此时系统才真正地复制一个内存的副本给该进程。如果两个进程的内存是相同的,就不需要复制了。

|

||||

|

||||

### 为什么你需要知道这么多

|

||||

|

||||

你可能会说,好吧,这些细节听起来很厉害,但为什么这么重要?关于信号处理程序或环境变量的细节会被继承吗?这对我的日常编程有什么实际影响呢?

|

||||

|

||||

有可能哦!比如说,在 Kamal 的博客上有一个很有意思的 [bug][5]。它讨论了 Python 如何使信号处理程序忽略了 `SIGPIPE`。也就是说,如果你从 Python 里运行一个程序,默认情况下它会忽略 `SIGPIPE`!这意味着,程序从 Python 脚本和从 shell 启动的表现会**有所不同**。在这种情况下,它会造成一个奇怪的问题。

|

||||

|

||||

所以,你的程序的环境(环境变量、信号处理程序等)可能很重要,都是从父进程继承来的。知道这些,在调试时是很有用的。

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: https://jvns.ca/blog/2016/10/04/exec-will-eat-your-brain/

|

||||

|

||||

作者:[Julia Evans][a]

|

||||

译者:[jessie-pang](https://github.com/jessie-pang)

|

||||

校对:[wxy](https://github.com/wxy)

|

||||

|

||||

本文由 [LCTT](https://github.com/LCTT/TranslateProject) 原创编译,[Linux中国](https://linux.cn/) 荣誉推出

|

||||

|

||||

[a]:https://jvns.ca

|

||||

[1]:https://jvns.ca/categories/favorite

|

||||

[2]:http://man7.org/linux/man-pages/man3/posix_spawn.3.html

|

||||

[3]:https://github.com/kamalmarhubi/shell-workshop

|

||||

[4]:https://rachelbythebay.com/w/2014/08/19/fork/

|

||||

[5]:http://kamalmarhubi.com/blog/2015/06/30/my-favourite-bug-so-far-at-the-recurse-center/

|

||||

268

published/20170319 ftrace trace your kernel functions.md

Normal file

268

published/20170319 ftrace trace your kernel functions.md

Normal file

@ -0,0 +1,268 @@

|

||||

ftrace:跟踪你的内核函数!

|

||||

============================================================

|

||||

|

||||

大家好!今天我们将去讨论一个调试工具:ftrace,之前我的博客上还没有讨论过它。还有什么能比一个新的调试工具更让人激动呢?

|

||||

|

||||

这个非常棒的 ftrace 并不是个新的工具!它大约在 Linux 的 2.6 内核版本中就有了,时间大约是在 2008 年。[这一篇是我用谷歌能找到的最早的文档][10]。因此,如果你是一个调试系统的“老手”,可能早就已经使用它了!

|

||||

|

||||

我知道,ftrace 已经存在了大约 2.5 年了(LCTT 译注:距本文初次写作时),但是还没有真正的去学习它。假设我明天要召开一个专题研究会,那么,关于 ftrace 应该讨论些什么?因此,今天是时间去讨论一下它了!

|

||||

|

||||

### 什么是 ftrace?

|

||||

|

||||

ftrace 是一个 Linux 内核特性,它可以让你去跟踪 Linux 内核的函数调用。为什么要这么做呢?好吧,假设你调试一个奇怪的问题,而你已经得到了你的内核版本中这个问题在源代码中的开始的位置,而你想知道这里到底发生了什么?

|

||||

|

||||

每次在调试的时候,我并不会经常去读内核源代码,但是,极个别的情况下会去读它!例如,本周在工作中,我有一个程序在内核中卡死了。查看到底是调用了什么函数,能够帮我更好的理解在内核中发生了什么,哪些系统涉及其中!(在我的那个案例中,它是虚拟内存系统)。

|

||||

|

||||

我认为 ftrace 是一个十分好用的工具(它肯定没有 `strace` 那样使用广泛,也比它难以使用),但是它还是值得你去学习。因此,让我们开始吧!

|

||||

|

||||

### 使用 ftrace 的第一步

|

||||

|

||||

不像 `strace` 和 `perf`,ftrace 并不是真正的 **程序** – 你不能只运行 `ftrace my_cool_function`。那样太容易了!

|

||||

|

||||

如果你去读 [使用 ftrace 调试内核][11],它会告诉你从 `cd /sys/kernel/debug/tracing` 开始,然后做很多文件系统的操作。

|

||||

|

||||

对于我来说,这种办法太麻烦——一个使用 ftrace 的简单例子像是这样:

|

||||

|

||||

```

|

||||

cd /sys/kernel/debug/tracing

|

||||

echo function > current_tracer

|

||||

echo do_page_fault > set_ftrace_filter

|

||||

cat trace

|

||||

```

|

||||

|

||||

这个文件系统是跟踪系统的接口(“给这些神奇的文件赋值,然后该发生的事情就会发生”)理论上看起来似乎可用,但是它不是我的首选方式。

|

||||

|

||||

幸运的是,ftrace 团队也考虑到这个并不友好的用户界面,因此,它有了一个更易于使用的界面,它就是 `trace-cmd`!!!`trace-cmd` 是一个带命令行参数的普通程序。我们后面将使用它!我在 LWN 上找到了一个 `trace-cmd` 的使用介绍:[trace-cmd: Ftrace 的一个前端][12]。

|

||||

|

||||

### 开始使用 trace-cmd:让我们仅跟踪一个函数

|

||||

|

||||

首先,我需要去使用 `sudo apt-get install trace-cmd` 安装 `trace-cmd`,这一步很容易。

|

||||

|

||||

对于第一个 ftrace 的演示,我决定去了解我的内核如何去处理一个页面故障。当 Linux 分配内存时,它经常偷懒,(“你并不是_真的_计划去使用内存,对吗?”)。这意味着,当一个应用程序尝试去对分配给它的内存进行写入时,就会发生一个页面故障,而这个时候,内核才会真正的为应用程序去分配物理内存。

|

||||

|

||||

我们开始使用 `trace-cmd` 并让它跟踪 `do_page_fault` 函数!

|

||||

|

||||

```

|

||||

$ sudo trace-cmd record -p function -l do_page_fault

|

||||

plugin 'function'

|

||||

Hit Ctrl^C to stop recording

|

||||

```

|

||||

|

||||

我将它运行了几秒钟,然后按下了 `Ctrl+C`。 让我大吃一惊的是,它竟然产生了一个 2.5MB 大小的名为 `trace.dat` 的跟踪文件。我们来看一下这个文件的内容!

|

||||

|

||||

```

|

||||

$ sudo trace-cmd report

|

||||

chrome-15144 [000] 11446.466121: function: do_page_fault

|

||||

chrome-15144 [000] 11446.467910: function: do_page_fault

|

||||

chrome-15144 [000] 11446.469174: function: do_page_fault

|

||||

chrome-15144 [000] 11446.474225: function: do_page_fault

|

||||

chrome-15144 [000] 11446.474386: function: do_page_fault

|

||||

chrome-15144 [000] 11446.478768: function: do_page_fault

|

||||

CompositorTileW-15154 [001] 11446.480172: function: do_page_fault

|

||||

chrome-1830 [003] 11446.486696: function: do_page_fault

|

||||

CompositorTileW-15154 [001] 11446.488983: function: do_page_fault

|

||||

CompositorTileW-15154 [001] 11446.489034: function: do_page_fault

|

||||

CompositorTileW-15154 [001] 11446.489045: function: do_page_fault

|

||||

|

||||

```

|

||||

|

||||

看起来很整洁 – 它展示了进程名(chrome)、进程 ID(15144)、CPU ID(000),以及它跟踪的函数。

|

||||

|

||||

通过察看整个文件,(`sudo trace-cmd report | grep chrome`)可以看到,我们跟踪了大约 1.5 秒,在这 1.5 秒的时间段内,Chrome 发生了大约 500 个页面故障。真是太酷了!这就是我们做的第一个 ftrace!

|

||||

|

||||

### 下一个 ftrace 技巧:我们来跟踪一个进程!

|

||||

|

||||

好吧,只看一个函数是有点无聊!假如我想知道一个程序中都发生了什么事情。我使用一个名为 Hugo 的静态站点生成器。看看内核为 Hugo 都做了些什么事情?

|

||||

|

||||

在我的电脑上 Hugo 的 PID 现在是 25314,因此,我使用如下的命令去记录所有的内核函数:

|

||||

|

||||

```

|

||||

sudo trace-cmd record --help # I read the help!

|

||||

sudo trace-cmd record -p function -P 25314 # record for PID 25314

|

||||

```

|

||||

|

||||

`sudo trace-cmd report` 输出了 18,000 行。如果你对这些感兴趣,你可以看 [这里是所有的 18,000 行的输出][13]。

|

||||

|

||||

18,000 行太多了,因此,在这里仅摘录其中几行。

|

||||

|

||||

当系统调用 `clock_gettime` 运行的时候,都发生了什么:

|

||||

|

||||

```

|

||||

compat_SyS_clock_gettime

|

||||

SyS_clock_gettime

|

||||

clockid_to_kclock

|

||||

posix_clock_realtime_get

|

||||

getnstimeofday64

|

||||

__getnstimeofday64

|

||||

arch_counter_read

|

||||

__compat_put_timespec

|

||||

```

|

||||

|

||||

这是与进程调试相关的一些东西:

|

||||

|

||||

```

|

||||

cpufreq_sched_irq_work

|

||||

wake_up_process

|

||||

try_to_wake_up

|

||||

_raw_spin_lock_irqsave

|

||||

do_raw_spin_lock

|

||||

_raw_spin_lock

|

||||

do_raw_spin_lock

|

||||

walt_ktime_clock

|

||||

ktime_get

|

||||

arch_counter_read

|

||||

walt_update_task_ravg

|

||||

exiting_task

|

||||

|

||||

```

|

||||

|

||||

虽然你可能还不理解它们是做什么的,但是,能够看到所有的这些函数调用也是件很酷的事情。

|

||||

|

||||

### “function graph” 跟踪

|

||||

|

||||

这里有另外一个模式,称为 `function_graph`。除了它既可以进入也可以退出一个函数外,其它的功能和函数跟踪器是一样的。[这里是那个跟踪器的输出][14]

|

||||

|

||||

```

|

||||

sudo trace-cmd record -p function_graph -P 25314

|

||||

```

|

||||

|

||||

同样,这里只是一个片断(这次来自 futex 代码):

|

||||

|

||||

```

|

||||

| futex_wake() {

|

||||

| get_futex_key() {

|

||||

| get_user_pages_fast() {

|

||||

1.458 us | __get_user_pages_fast();

|

||||

4.375 us | }

|

||||

| __might_sleep() {

|

||||

0.292 us | ___might_sleep();

|

||||

2.333 us | }

|

||||

0.584 us | get_futex_key_refs();

|

||||

| unlock_page() {

|

||||

0.291 us | page_waitqueue();

|

||||

0.583 us | __wake_up_bit();

|

||||

5.250 us | }

|

||||

0.583 us | put_page();

|

||||

+ 24.208 us | }

|

||||

```

|

||||

|

||||

我们看到在这个示例中,在 `futex_wake` 后面调用了 `get_futex_key`。这是在源代码中真实发生的事情吗?我们可以检查一下!![这里是在 Linux 4.4 中 futex_wake 的定义][15] (我的内核版本是 4.4)。

|

||||

|

||||

为节省时间我直接贴出来,它的内容如下:

|

||||

|

||||

```

|

||||

static int

|

||||

futex_wake(u32 __user *uaddr, unsigned int flags, int nr_wake, u32 bitset)

|

||||

{

|

||||

struct futex_hash_bucket *hb;

|

||||

struct futex_q *this, *next;

|

||||

union futex_key key = FUTEX_KEY_INIT;

|

||||

int ret;

|

||||

WAKE_Q(wake_q);

|

||||

|

||||

if (!bitset)

|

||||

return -EINVAL;

|

||||

|

||||

ret = get_futex_key(uaddr, flags & FLAGS_SHARED, &key, VERIFY_READ);

|

||||

```

|

||||

|

||||

如你所见,在 `futex_wake` 中的第一个函数调用真的是 `get_futex_key`! 太棒了!相比阅读内核代码,阅读函数跟踪肯定是更容易的找到结果的办法,并且让人高兴的是,还能看到所有的函数用了多长时间。

|

||||

|

||||

### 如何知道哪些函数可以被跟踪

|

||||

|

||||

如果你去运行 `sudo trace-cmd list -f`,你将得到一个你可以跟踪的函数的列表。它很简单但是也很重要。

|

||||

|

||||

### 最后一件事:事件!

|

||||

|

||||

现在,我们已经知道了怎么去跟踪内核中的函数,真是太酷了!

|

||||

|

||||

还有一类我们可以跟踪的东西!有些事件与我们的函数调用并不相符。例如,你可能想知道当一个程序被调度进入或者离开 CPU 时,都发生了什么事件!你可能想通过“盯着”函数调用计算出来,但是,我告诉你,不可行!

|

||||

|

||||

由于函数也为你提供了几种事件,因此,你可以看到当重要的事件发生时,都发生了什么事情。你可以使用 `sudo cat /sys/kernel/debug/tracing/available_events` 来查看这些事件的一个列表。

|

||||

|

||||

我查看了全部的 sched_switch 事件。我并不完全知道 sched_switch 是什么,但是,我猜测它与调度有关。

|

||||

|

||||

```

|

||||

sudo cat /sys/kernel/debug/tracing/available_events

|

||||

sudo trace-cmd record -e sched:sched_switch

|

||||

sudo trace-cmd report

|

||||

```

|

||||

|

||||

输出如下:

|

||||

|

||||

```

|

||||

16169.624862: Chrome_ChildIOT:24817 [112] S ==> chrome:15144 [120]

|

||||

16169.624992: chrome:15144 [120] S ==> swapper/3:0 [120]

|

||||

16169.625202: swapper/3:0 [120] R ==> Chrome_ChildIOT:24817 [112]

|

||||