mirror of

https://github.com/LCTT/TranslateProject.git

synced 2025-01-28 23:20:10 +08:00

commit

305a81c338

@ -0,0 +1,120 @@

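How to Update the Linux Kernel for Improved System Performance
================================================================================

The [Linux kernel][1] is currently being developed at an unprecedented pace, with a major release roughly every two to three months. Each release brings several new features and improvements that can make many people's computing experience faster, more efficient, or better in other ways.

The problem is that you usually cannot use these kernels as soon as they are released; you have to wait until your distribution ships a release that includes the new kernel. We have previously described [the benefits of regularly updating your kernel][2], and you do not have to wait. We will show you how.

> Disclaimer: As some of our earlier articles have mentioned, upgrading the kernel carries a (small) risk of breaking your system. If that happens, you can usually keep the system working by booting an older kernel, but sometimes even that does not help. We therefore take no responsibility for any damage to your system; you proceed at your own risk!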

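### Preparation ###

To update your kernel, you first need to determine whether you are running a 32-bit or a 64-bit system. Open a terminal and run:

    uname -a

Check whether the output contains x86\_64 or i686. If it says x86\_64, you are running the 64-bit version; otherwise you are running the 32-bit version. Remember this, because it matters later.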
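As a rough sketch of the check above, the machine name that `uname -m` reports can be mapped to the architecture label used in Debian and Ubuntu package file names. The function name `deb_arch_for` is invented for this illustration, and the mapping covers only the two x86 cases discussed here.

```shell
#!/bin/sh
# Hypothetical helper: map a `uname -m` machine name to the architecture
# label used in Debian/Ubuntu package file names.
deb_arch_for() {
    case "$1" in
        x86_64)              echo "amd64" ;;   # 64-bit x86
        i386|i486|i586|i686) echo "i386" ;;    # 32-bit x86
        *)                   echo "unknown" ;; # anything else is out of scope here
    esac
}

# Usage: inspect the machine this script runs on.
echo "Package architecture: $(deb_arch_for "$(uname -m)")"
```

Keeping the mapping in one function makes it easy to reuse the result when picking files to download later.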

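Next, visit the [official Linux kernel website][3], which tells you the current stable kernel version. If you like, you can try a release candidate (RC), but those have had far less testing than stable releases. Unless you are sure you need a release candidate, stick with the stable kernel.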

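### Ubuntu Instructions ###

Upgrading the kernel is very easy for users of Ubuntu and its derivatives, thanks to the Ubuntu mainline kernel PPA. Although it is officially called a PPA, you cannot add it to your software sources like other PPAs and expect it to upgrade your kernel automatically. It is really just a simple web page that you browse to download the kernel you want.

Now visit the [kernel PPA web page][4] and scroll to the bottom. The very end of the list contains the latest release candidates (you can see "rc" in their names), and just above them is the latest stable release (to be clear, the latest stable version at the time of writing was 4.1.2; LCTT translator's note: although 4.1.2 was the stable version at the time, it had not yet entered an Ubuntu release, so its folder name ends in "-unstable"). Click the folder name and you will see several options. You need to download three files and save them in a folder of their own (inside your Downloads folder, if you like) so that they are kept apart from other files:

1. The header file containing "generic" for your architecture (64-bit, that is "amd64", in my case)
2. The header file in the middle of the list that has "all" at the end of its file name
3. The kernel file containing "generic" for your architecture (again, I use "amd64"; on a 32-bit system you need the "i386" file)

You will also see files containing "lowlatency" further down, but it is best to ignore them. They are relatively unstable and are meant only for people who need low latency for tasks, such as audio recording, that the generic files cannot handle. Again, prefer the generic version unless you have a specific task that it does not serve well. Ordinary gaming and web browsing are not reasons to use the low-latency version.

You put them in their own folder, right? Now open a terminal and use the `cd` command to switch to the newly created folder, for example:

    cd /home/user/Downloads/Kernel

Then run:

    sudo dpkg -i *.deb

This command marks every ".deb" file in the folder as "to be installed" and then performs the installation. This is the recommended method, because installing the files one at a time almost always produces dependency errors; installing them together avoids the problem. If you are not sure what `cd` and `sudo` do, take a quick look at this article on [essential Linux commands][5].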
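Before running the install command, it can help to confirm that all three packages are actually in the folder. The following is a minimal sketch; the function name `check_kernel_debs` is hypothetical, and the glob patterns are assumptions about how the mainline packages are named, so adjust them if your file names differ.

```shell
#!/bin/sh
# Hypothetical pre-flight check: report whether a folder holds the three
# kernel packages listed above. The glob patterns are assumptions about
# how the mainline packages are named.
check_kernel_debs() {
    dir=$1
    missing=0
    for pattern in 'linux-headers-*_all.deb' \
                   'linux-headers-*-generic_*.deb' \
                   'linux-image-*-generic_*.deb'; do
        found=0
        for f in "$dir"/$pattern; do
            # An unmatched glob stays literal, so test that the file exists.
            if [ -e "$f" ]; then found=1; fi
        done
        if [ "$found" -eq 0 ]; then
            echo "missing: $pattern"
            missing=1
        fi
    done
    return "$missing"
}

# Usage:
#   check_kernel_debs /home/user/Downloads/Kernel && echo "ready to install"
```

The function only reads the directory; it never installs anything, so it is safe to run repeatedly.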

After the installation finishes, **restart** your system, and it should now be running the kernel you just installed! You can check by running `uname -a` on the command line and reading the output.

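### Fedora Instructions ###

If you use Fedora or one of its derivatives, the process is very similar to Ubuntu's. Only the place you get the files from and the command used to install them differ.

Look at the list of the [latest Fedora kernel builds][6]. Pick the latest stable version from the list and scroll down to choose either the i686 or the x86_64 build, depending on your system architecture. Then download the following files and save them in a folder of their own (for example, "Kernel" inside your Downloads folder):

- kernel
- kernel-core
- kernel-headers
- kernel-modules
- kernel-modules-extra
- kernel-tools
- perf and python-perf (optional)

If your system is i686 (32-bit) and has 4GB of RAM or more, you need to download the PAE version of all of these files. PAE (Physical Address Extension) is an address extension technique for 32-bit systems that lets you use more than 3GB of RAM.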
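The PAE rule above is a two-condition decision, sketched below as a shell function. The function name `fedora_kernel_flavor` is made up for this example, and the package prefix `kernel-PAE` is an assumption about how the PAE builds are named in the build list.

```shell
#!/bin/sh
# Hypothetical helper: decide which Fedora kernel flavor to download.
# $1 is the machine name from `uname -m`, $2 is the installed RAM in whole GB.
fedora_kernel_flavor() {
    if [ "$1" = "i686" ] && [ "$2" -ge 4 ]; then
        echo "kernel-PAE"   # 32-bit system with 4GB or more: use the PAE build
    else
        echo "kernel"       # 64-bit, or 32-bit with less RAM: regular build
    fi
}

fedora_kernel_flavor i686 8      # prints kernel-PAE
fedora_kernel_flavor x86_64 16   # prints kernel
```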

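Now use the `cd` command to go into the folder, like this:

    cd /home/user/Downloads/Kernel

Then run the following command as root to install all of the files:

    yum --nogpgcheck localinstall *.rpm

Finally, **restart** your system so that it runs the new kernel!

#### Using Rawhide ####

Alternatively, Fedora users can [switch to Rawhide][7], which automatically updates every package to its latest version, including the kernel. However, Rawhide frequently breaks systems (especially early in the development cycle) and should **not** be used on a system you rely on every day.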

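### Arch Instructions ###

[Arch users][8] should always have the latest and greatest stable release available (or something very close to it). If you want to get even closer to the latest stable release, you can enable the testing repository to receive major updates two to three weeks early.

To do this, open the following file with `sudo` privileges in [your favorite editor][9]:

    /etc/pacman.conf

Then uncomment the three lines associated with testing (delete the # at the start of each line). If you have the multilib repository enabled, do the same for multilib-testing. See [this Arch wiki page][10] for more information.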
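The uncommenting step can also be previewed with a small filter instead of an editor. This is only a sketch: the function name `enable_testing_repo` is hypothetical, and it assumes the section appears as a `#[testing]` line followed by a `#Include` line, which may not match the layout or repository names of your pacman.conf.

```shell
#!/bin/sh
# Hypothetical filter: uncomment the [testing] section of a pacman.conf read
# from standard input and write the result to standard output.
enable_testing_repo() {
    # From the "#[testing]" line through the next "#Include" line,
    # strip the leading "#".
    sed -e '/^#\[testing\]/,/^#Include/ s/^#//'
}

# Usage (writes a preview instead of editing the real file):
#   enable_testing_repo < /etc/pacman.conf > /tmp/pacman.conf.preview
```

Writing to a preview file first lets you compare the result with the original before replacing anything under /etc.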

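Upgrading the kernel is not trivial (deliberately so), but it can bring you many benefits. As long as the new kernel does not break anything, you can enjoy improved performance, better efficiency, broader hardware support, and potentially new features. Upgrading the kernel helps especially if you are running relatively new hardware.

**Did this article on upgrading your kernel help you? What do you think your favorite distribution's policy on kernel releases should be?** Let us know in the comments!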

--------------------------------------------------------------------------------

via: http://www.makeuseof.com/tag/update-linux-kernel-improved-system-performance/

Author: [Danny Stieben][a]
Translator: [geekpi](https://github.com/geekpi)
Proofreader: [wxy](https://github.com/wxy)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](https://linux.cn/)

[a]:http://www.makeuseof.com/tag/author/danny/
[1]:http://www.makeuseof.com/tag/linux-kernel-explanation-laymans-terms/
[2]:http://www.makeuseof.com/tag/5-reasons-update-kernel-linux/
[3]:http://www.kernel.org/
[4]:http://kernel.ubuntu.com/~kernel-ppa/mainline/
[5]:http://www.makeuseof.com/tag/an-a-z-of-linux-40-essential-commands-you-should-know/
[6]:http://koji.fedoraproject.org/koji/packageinfo?packageID=8
[7]:http://www.makeuseof.com/tag/bleeding-edge-linux-fedora-rawhide/
[8]:http://www.makeuseof.com/tag/arch-linux-letting-you-build-your-linux-system-from-scratch/
[9]:http://www.makeuseof.com/tag/nano-vs-vim-terminal-text-editors-compared/
[10]:https://wiki.archlinux.org/index.php/Pacman#Repositories

@ -1,14 +1,15 @@

|

||||

如何在 Linux 中从 Google Play 商店里下载 apk 文件

|

||||

如何在 Linux 上从 Google Play 商店里下载 apk 文件

|

||||

================================================================================

|

||||

假设你想在你的 Android 设备中安装一个 Android 应用,然而由于某些原因,你不能在 Andor 设备上访问 Google Play 商店。接着你该怎么做呢?在不访问 Google Play 商店的前提下安装应用的一种可能的方法是使用其他的手段下载该应用的 APK 文件,然后手动地在 Android 设备上 [安装 APK 文件][1]。

|

||||

|

||||

在非 Android 设备如常规的电脑和笔记本电脑上,有着几种方式来从 Google Play 商店下载到官方的 APK 文件。例如,使用浏览器插件(例如, 针对 [Chrome][2] 或针对 [Firefox][3] 的插件) 或利用允许你使用浏览器下载 APK 文件的在线的 APK 存档等。假如你不信任这些闭源的插件或第三方的 APK 仓库,这里有另一种手动下载官方 APK 文件的方法,它使用一个名为 [GooglePlayDownloader][4] 的开源 Linux 应用。

|

||||

假设你想在你的 Android 设备中安装一个 Android 应用,然而由于某些原因,你不能在 Andord 设备上访问 Google Play 商店(LCTT 译注:显然这对于我们来说是常态)。接着你该怎么做呢?在不访问 Google Play 商店的前提下安装应用的一种可能的方法是,使用其他的手段下载该应用的 APK 文件,然后手动地在 Android 设备上 [安装 APK 文件][1]。

|

||||

|

||||

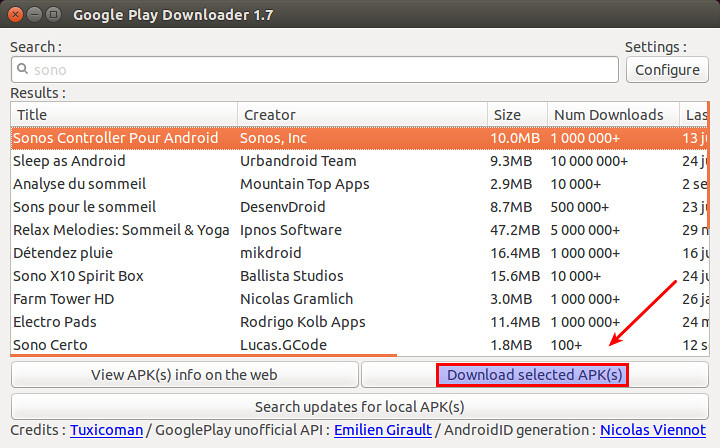

GooglePlayDownloader 是一个基于 Python 的 GUI 应用,使得你可以从 Google Play 商店上搜索和下载 APK 文件。由于它是完全开源的,你可以放心地使用它。在本篇教程中,我将展示如何在 Linux 环境下,使用 GooglePlayDownloader 来从 Google Play 商店下载 APK 文件。

|

||||

在非 Android 设备如常规的电脑和笔记本电脑上,有着几种方式来从 Google Play 商店下载到官方的 APK 文件。例如,使用浏览器插件(例如,针对 [Chrome][2] 或针对 [Firefox][3] 的插件) 或利用允许你使用浏览器下载 APK 文件的在线的 APK 存档等。假如你不信任这些闭源的插件或第三方的 APK 仓库,这里有另一种手动下载官方 APK 文件的方法,它使用一个名为 [GooglePlayDownloader][4] 的开源 Linux 应用。

|

||||

|

||||

GooglePlayDownloader 是一个基于 Python 的 GUI 应用,它可以让你从 Google Play 商店上搜索和下载 APK 文件。由于它是完全开源的,你可以放心地使用它。在本篇教程中,我将展示如何在 Linux 环境下,使用 GooglePlayDownloader 来从 Google Play 商店下载 APK 文件。

|

||||

|

||||

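### Python Requirements ###

GooglePlayDownloader needs the SNI (Server Name Indication) extension of Python's SSL module to support SSL/TLS communication, a feature that arrived with Python 2.7.9. As a result, older distributions such as Debian 7 Wheezy and earlier, Ubuntu 14.04 and earlier, or CentOS/RHEL 7 and earlier do not meet this requirement. Assuming you already have a distribution with Python 2.7.9 or later, you can go on to install GooglePlayDownloader as follows.

GooglePlayDownloader requires a Python with SNI (Server Name Indication) support for SSL/TLS communication, a feature introduced in Python 2.7.9. As a result, older distributions such as Debian 7 Wheezy and earlier, Ubuntu 14.04 and earlier, or CentOS/RHEL 7 and earlier do not meet this requirement. This tutorial assumes that you already have a distribution with Python 2.7.9 or later, so you can go on to install GooglePlayDownloader as follows.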
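The version requirement above can be checked with a short comparison. The sketch below is only an illustration: `version_at_least` is a hypothetical helper name, and it assumes both versions have exactly three numeric fields, such as 2.7.9.

```shell
#!/bin/sh
# Hypothetical helper: succeed when version $1 is at least version $2.
# Both arguments must have exactly three numeric fields, such as 2.7.9.
version_at_least() {
    old_ifs=$IFS
    IFS=.
    set -- $1 $2          # split into $1 $2 $3 (have) and $4 $5 $6 (want)
    IFS=$old_ifs
    if [ "$1" -gt "$4" ]; then return 0; fi
    if [ "$1" -lt "$4" ]; then return 1; fi
    if [ "$2" -gt "$5" ]; then return 0; fi
    if [ "$2" -lt "$5" ]; then return 1; fi
    [ "$3" -ge "$6" ]
}

# Usage: compare a version string against the 2.7.9 requirement.
if version_at_least "2.7.6" "2.7.9"; then
    echo "Python 2.7.6 meets the requirement"
else
    echo "Python 2.7.6 is too old"
fi
```

Comparing field by field avoids the trap of a plain string comparison, where "2.7.10" would sort before "2.7.9".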

### Installing GooglePlayDownloader on Ubuntu ###

@ -16,7 +17,7 @@ GooglePlayDownloader 需要使用 Python 中 SSL 模块的扩展 SNI(服务器

#### On Ubuntu 14.10 ####

Download the [python-ndg-httpsclient][5] deb package, which is a missing dependency on slightly older Ubuntu releases. Also download the official deb package of GooglePlayDownloader.

Download the [python-ndg-httpsclient][5] deb package, a dependency that is missing from older Ubuntu releases. Also download the official deb package of GooglePlayDownloader.

    $ wget http://mirrors.kernel.org/ubuntu/pool/main/n/ndg-httpsclient/python-ndg-httpsclient_0.3.2-1ubuntu4_all.deb
    $ wget http://codingteam.net/project/googleplaydownloader/download/file/googleplaydownloader_1.7-1_all.deb

@ -64,7 +65,7 @@ GooglePlayDownloader 需要使用 Python 中 SSL 模块的扩展 SNI(服务器

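### Downloading APK Files from the Google Play Store with GooglePlayDownloader ###

Once you have installed GooglePlayDownloader, you can download APK files from the Google Play Store as follows.

Once you have installed GooglePlayDownloader, you can download APK files from the Google Play Store as follows. (LCTT translator's note: obviously, your Linux machine needs a way past the firewall)

First, launch the application by typing the following command: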

@ -76,7 +77,7 @@ GooglePlayDownloader 需要使用 Python 中 SSL 模块的扩展 SNI(服务器

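Once you find the app in the search list, select it and then click the "Download selected APK files" button. Finally, you will find the downloaded APK file in your home directory. You can now transfer the downloaded APK file to the Android device of your choice and install it manually.

Once you find the app in the search list, select it and then click the “Download selected APK files” button. Finally, you will find the downloaded APK file in your home directory. You can now transfer the downloaded APK file to the Android device of your choice and install it manually.

I hope this tutorial helps you.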

@ -86,7 +87,7 @@ via: http://xmodulo.com/download-apk-files-google-play-store.html

Author: [Dan Nanni][a]
Translator: [FSSlc](https://github.com/FSSlc)
Proofreader: [校对者ID](https://github.com/校对者ID)
Proofreader: [wxy](https://github.com/wxy)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](https://linux.cn/)

@ -0,0 +1,63 @@

|

||||

Ubuntu 有望让你安装最新 Nvidia Linux 驱动更简单

|

||||

================================================================================

|

||||

|

||||

|

||||

*Ubuntu 上的游戏玩家在增长——因而需要最新版驱动*

|

||||

|

||||

**在 Ubuntu 上安装上游的 NVIDIA 图形驱动即将变得更加容易。**

|

||||

|

||||

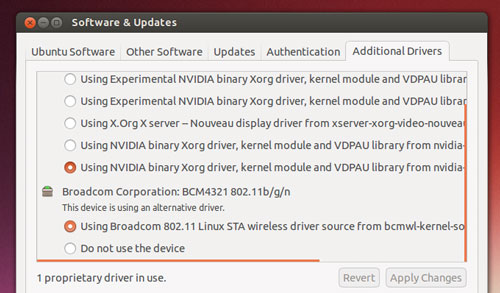

Ubuntu 开发者正在考虑构建一个全新的'官方' PPA,以便为桌面用户分发最新的闭源 NVIDIA 二进制驱动。

|

||||

|

||||

该项改变会让 Ubuntu 游戏玩家收益,并且*不会*给其它人造成 OS 稳定性方面的风险。

|

||||

|

||||

**仅**当用户明确选择它时,新的上游驱动将通过这个新 PPA 安装并更新。其他人将继续得到并使用更近的包含在 Ubuntu 归档中的稳定版 NVIDIA Linux 驱动快照。

|

||||

|

||||

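### Why Is This Project Needed? ###

*Ubuntu provides drivers, but they are not the latest*

The closed-source NVIDIA graphics drivers that can be installed on Ubuntu from the archive (using the command line, Synaptic, or the Additional Drivers tool) work well in most cases and easily handle the compositing of the Unity desktop shell.

For gaming, though, it is another matter entirely.

If you want to squeeze the highest frame rates and HD textures out of the latest popular Steam games, you need the latest binary driver.

The newer the driver, the more likely it is to support the latest features and technologies, or to come pre-packaged with game-specific optimizations and bug fixes.

The trouble is that installing the latest Nvidia Linux driver on Ubuntu is not easy and is not guaranteed to be safe.

To fill the gap, many third-party PPAs maintained by enthusiasts have appeared. Because many of these PPAs also ship other experimental or bleeding-edge software, using them is **not without risk**. Adding a bleeding-edge PPA is often the fastest way to wreck an entire system!

One solution is to let Ubuntu users install the latest proprietary graphics drivers, meeting the need that third-party PPAs currently serve, **but** with a safety mechanism that lets you roll back to the stable version if necessary.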

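### 'The Demand for Fresh Drivers Is Hard to Ignore' ###

> 'A solution that lets Ubuntu users get the latest hardware drivers safely is coming.'

'The demand for fresh drivers in a fast-developing market is becoming hard to ignore; users are going to want the latest upstream software,' Castro explained in an e-mail to the Ubuntu Desktop mailing list.

'[NVIDIA] can deliver a great experience to [Windows 10] users with almost no effort. Until we can convince NVIDIA to do the same for Ubuntu, we will have to sort all of this out ourselves.'

Castro's plan for an "official" NVIDIA PPA is the easiest way to achieve this.

Gamers would be able to opt in to receive new drivers from the PPA through Ubuntu's default proprietary driver tool, with no more need to copy and paste terminal commands from websites or wiki pages.

The drivers in the PPA would be packaged and maintained by a select team of community members, and would benefit from a semi-official perk: **automated testing**.

As Castro himself puts it: 'People want the latest shiny thing, no matter what they are trying to do. We might as well put a framework around it so that people can get what they want without breaking their computers.'

**Would you use this PPA? How do you rate the performance of the default Nvidia drivers on Ubuntu? Share your thoughts in the comments, folks!**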

--------------------------------------------------------------------------------

via: http://www.omgubuntu.co.uk/2015/08/ubuntu-easy-install-latest-nvidia-linux-drivers

Author: [Joey-Elijah Sneddon][a]
Translator: [GOLinux](https://github.com/GOLinux)
Proofreader: [wxy](https://github.com/wxy)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](https://linux.cn/)

[a]:https://plus.google.com/117485690627814051450/?rel=author

@ -1,95 +0,0 @@

|

||||

Tickr Is An Open-Source RSS News Ticker for Linux Desktops

|

||||

================================================================================

|

||||

|

||||

|

||||

**Latest! Latest! Read all about it!**

|

||||

|

||||

Alright, so the app we’re highlighting today isn’t quite the binary version of an old newspaper seller — but it is a great way to have the latest news brought to you, on your desktop.

|

||||

|

||||

Tickr is a GTK-based news ticker for the Linux desktop that scrolls the latest headlines and article titles from your favourite RSS feeds in a horizontal strip that you can place anywhere on your desktop.

|

||||

|

||||

Call me Joey Calamezzo; I put mine on the bottom TV news station style.

|

||||

|

||||

“Over to you, sub-heading.”

|

||||

|

||||

### RSS — Remember That? ###

|

||||

|

||||

“Thanks paragraph ending.”

|

||||

|

||||

In an era of push notifications, social media, and clickbait, cajoling us into reading the latest mind-blowing, humanity saving listicle ASAP, RSS can seem a bit old hat.

|

||||

|

||||

For me? Well, RSS lives up to its name of Really Simple Syndication. It’s the easiest, most manageable way to have news come to me. I can manage and read stuff when I want; there’s no urgency to view lest the tweet vanish into the stream or the push notification vanish.

|

||||

|

||||

The beauty of Tickr is in its utility. You can have a constant stream of news trundling along the bottom of your screen, which you can passively glance at from time to time.

|

||||

|

||||

|

||||

|

||||

There’s no pressure to ‘read’ or ‘mark all read’ or any of that. When you see something you want to read you just click it to open it in a web browser.

|

||||

|

||||

### Setting it Up ###

|

||||

|

||||

|

||||

|

||||

Although Tickr is available to install from the Ubuntu Software Centre it hasn’t been updated for a long time. Nowhere is this sense of abandonment more keenly felt than when opening the unwieldy and unintuitive configuration panel.

|

||||

|

||||

To open it:

|

||||

|

||||

1. Right click on the Tickr bar
2. Go to Edit > Preferences
3. Adjust the various settings

|

||||

|

||||

Row after row of options and settings, few of which seem to make sense at first. But poke and prod around and you’ll find controls for pretty much everything, including:

|

||||

|

||||

- Set scrolling speed

|

||||

- Choose behaviour when mousing over

|

||||

- Feed update frequency

|

||||

- Font, including font sizes and color

|

||||

- Separator character (‘delineator’)

|

||||

- Position of Tickr on screen

|

||||

- Color and opacity of Tickr bar

|

||||

- Choose how many articles each feed displays

|

||||

|

||||

One ‘quirk’ worth mentioning is that pressing ‘Apply’ only updates the on-screen Tickr to preview changes. For changes to take effect when you exit the Preferences window you need to click ‘OK’.

|

||||

|

||||

Getting the bar to sit flush on your display can also take a fair bit of tweaking, especially on Unity.

|

||||

|

||||

Press the “full width button” to have the app auto-detect your screen width. By default when placed at the top or bottom it leaves a 25px gap (the app was created back in the days of GNOME 2.x desktops). After hitting the top or bottom buttons just add an extra 25 pixels to the input box to compensate for this.

|

||||

|

||||

Other options available include: choosing which browser articles open in; whether Tickr appears within a regular window frame; whether a clock is shown; and how often the app checks feeds for articles.

|

||||

|

||||

#### Adding Feeds ####

|

||||

|

||||

Tickr comes with a built-in list of over 30 different feeds, ranging from technology blogs to mainstream news services.

|

||||

|

||||

|

||||

|

||||

You can select as many of these as you like to show headlines in the on-screen ticker. If you want to add your own feeds you can:

|

||||

|

||||

1. Right click on the Tickr bar
2. Go to File > Open Feed
3. Enter Feed URL
4. Click ‘Add/Upd’ button
5. Click ‘OK (select)’

|

||||

|

||||

To set how many items from each feed show in the ticker, change the “Read N items max per feed” setting in the other preferences window.

|

||||

|

||||

### Install Tickr in Ubuntu 14.04 LTS and Up ###

|

||||

|

||||

So that’s Tickr. It’s not going to change the world but it will keep you abreast of what’s happening in it.

|

||||

|

||||

To install it in Ubuntu 14.04 LTS or later, head to the Ubuntu Software Centre by clicking the button below.

|

||||

|

||||

- [Click to install Tickr from the Ubuntu Software Center][1]

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.omgubuntu.co.uk/2015/06/tickr-open-source-desktop-rss-news-ticker

|

||||

|

||||

Author: [Joey-Elijah Sneddon][a]
Translator: [译者ID](https://github.com/译者ID)
Proofreader: [校对者ID](https://github.com/校对者ID)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](https://linux.cn/)

|

||||

|

||||

[a]:https://plus.google.com/117485690627814051450/?rel=author

|

||||

[1]:apt://tickr

|

||||

@ -1,151 +0,0 @@

|

||||

translation by strugglingyouth

|

||||

How to monitor NGINX with Datadog - Part 3

|

||||

================================================================================

|

||||

|

||||

|

||||

If you’ve already read [our post on monitoring NGINX][1], you know how much information you can gain about your web environment from just a handful of metrics. And you’ve also seen just how easy it is to start collecting metrics from NGINX on an ad hoc basis. But to implement comprehensive, ongoing NGINX monitoring, you will need a robust monitoring system to store and visualize your metrics, and to alert you when anomalies happen. In this post, we’ll show you how to set up NGINX monitoring in Datadog so that you can view your metrics on customizable dashboards like this:

|

||||

|

||||

|

||||

|

||||

Datadog allows you to build graphs and alerts around individual hosts, services, processes, metrics—or virtually any combination thereof. For instance, you can monitor all of your NGINX hosts, or all hosts in a certain availability zone, or you can monitor a single key metric being reported by all hosts with a certain tag. This post will show you how to:

|

||||

|

||||

- Monitor NGINX metrics on Datadog dashboards, alongside all your other systems

|

||||

- Set up automated alerts to notify you when a key metric changes dramatically

|

||||

|

||||

### Configuring NGINX ###

|

||||

|

||||

To collect metrics from NGINX, you first need to ensure that NGINX has an enabled status module and a URL for reporting its status metrics. Step-by-step instructions [for configuring open-source NGINX][2] and [NGINX Plus][3] are available in our companion post on metric collection.
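For open-source NGINX, the status page served by the stub_status module is plain text, so a quick manual check of the status URL is possible before any agent is involved. The helper below is a minimal sketch with a made-up name, `active_connections`; it assumes the page contains the usual `Active connections:` line and is not part of Datadog or NGINX.

```shell
#!/bin/sh
# Hypothetical helper: read an NGINX stub_status page on standard input and
# print the number of active connections.
active_connections() {
    awk '/^Active connections:/ { print $3 }'
}

# Usage, assuming the status URL from your own configuration:
#   curl -s http://localhost/nginx_status/ | active_connections
```

If the command prints nothing, the status URL is probably not enabled or not reachable from the host where you ran it.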

|

||||

|

||||

### Integrating Datadog and NGINX ###

|

||||

|

||||

#### Install the Datadog Agent ####

|

||||

|

||||

The Datadog Agent is [the open-source software][4] that collects and reports metrics from your hosts so that you can view and monitor them in Datadog. Installing the agent usually takes [just a single command][5].

|

||||

|

||||

As soon as your Agent is up and running, you should see your host reporting metrics [in your Datadog account][6].

|

||||

|

||||

|

||||

|

||||

#### Configure the Agent ####

|

||||

|

||||

Next you’ll need to create a simple NGINX configuration file for the Agent. The location of the Agent’s configuration directory for your OS can be found [here][7].

|

||||

|

||||

Inside that directory, at conf.d/nginx.yaml.example, you will find [a sample NGINX config file][8] that you can edit to provide the status URL and optional tags for each of your NGINX instances:

|

||||

|

||||

    init_config:

    instances:
      - nginx_status_url: http://localhost/nginx_status/
        tags:
          - instance:foo

|

||||

|

||||

Once you have supplied the status URLs and any tags, save the config file as conf.d/nginx.yaml.

|

||||

|

||||

#### Restart the Agent ####

|

||||

|

||||

You must restart the Agent to load your new configuration file. The restart command varies somewhat by platform—see the specific commands for your platform [here][9].

|

||||

|

||||

#### Verify the configuration settings ####

|

||||

|

||||

To check that Datadog and NGINX are properly integrated, run the Datadog info command. The command for each platform is available [here][10].

|

||||

|

||||

If the configuration is correct, you will see a section like this in the output:

|

||||

|

||||

    Checks
    ======

      [...]

      nginx
      -----
          - instance #0 [OK]
          - Collected 8 metrics & 0 events

|

||||

|

||||

#### Install the integration ####

|

||||

|

||||

Finally, switch on the NGINX integration inside your Datadog account. It’s as simple as clicking the “Install Integration” button under the Configuration tab in the [NGINX integration settings][11].

|

||||

|

||||

|

||||

|

||||

### Metrics! ###

|

||||

|

||||

Once the Agent begins reporting NGINX metrics, you will see [an NGINX dashboard][12] among your list of available dashboards in Datadog.

|

||||

|

||||

The basic NGINX dashboard displays a handful of graphs encapsulating most of the key metrics highlighted [in our introduction to NGINX monitoring][13]. (Some metrics, notably request processing time, require log analysis and are not available in Datadog.)

|

||||

|

||||

You can easily create a comprehensive dashboard for monitoring your entire web stack by adding additional graphs with important metrics from outside NGINX. For example, you might want to monitor host-level metrics on your NGINX hosts, such as system load. To start building a custom dashboard, simply clone the default NGINX dashboard by clicking on the gear near the upper right of the dashboard and selecting “Clone Dash”.

|

||||

|

||||

|

||||

|

||||

You can also monitor your NGINX instances at a higher level using Datadog’s [Host Maps][14]—for instance, color-coding all your NGINX hosts by CPU usage to identify potential hotspots.

|

||||

|

||||

|

||||

|

||||

### Alerting on NGINX metrics ###

|

||||

|

||||

Once Datadog is capturing and visualizing your metrics, you will likely want to set up some monitors to automatically keep tabs on your metrics—and to alert you when there are problems. Below we’ll walk through a representative example: a metric monitor that alerts on sudden drops in NGINX throughput.

|

||||

|

||||

#### Monitor your NGINX throughput ####

|

||||

|

||||

Datadog metric alerts can be threshold-based (alert when the metric exceeds a set value) or change-based (alert when the metric changes by a certain amount). In this case we’ll take the latter approach, alerting when our incoming requests per second drop precipitously. Such drops are often indicative of problems.

|

||||

|

||||

1. **Create a new metric monitor**. Select “New Monitor” from the “Monitors” dropdown in Datadog. Select “Metric” as the monitor type.

|

||||

|

||||

|

||||

|

||||

2. **Define your metric monitor**. We want to know when our total NGINX requests per second drop by a certain amount. So we define the metric of interest to be the sum of nginx.net.request_per_s across our infrastructure.

|

||||

|

||||

|

||||

|

||||

3. **Set metric alert conditions**. Since we want to alert on a change, rather than on a fixed threshold, we select “Change Alert.” We’ll set the monitor to alert us whenever the request volume drops by 30 percent or more. Here we use a one-minute window of data to represent the metric’s value “now” and alert on the average change across that interval, as compared to the metric’s value 10 minutes prior.

|

||||

|

||||

|

||||

|

||||

4. **Customize the notification**. If our NGINX request volume drops, we want to notify our team. In this case we will post a notification in the ops team’s chat room and page the engineer on call. In “Say what’s happening”, we name the monitor and add a short message that will accompany the notification to suggest a first step for investigation. We @mention the Slack channel that we use for ops and use @pagerduty to [route the alert to PagerDuty][15].

|

||||

|

||||

|

||||

|

||||

5. **Save the integration monitor**. Click the “Save” button at the bottom of the page. You’re now monitoring a key NGINX [work metric][16], and your on-call engineer will be paged anytime it drops rapidly.
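The change-alert condition in the steps above comes down to simple arithmetic: compare the current request rate with the rate 10 minutes earlier and alert when the drop is 30 percent or more. The sketch below only illustrates that arithmetic; the function names are hypothetical, it uses integer math, and it does not describe how Datadog evaluates monitors internally.

```shell
#!/bin/sh
# Hypothetical helper: integer percent change from an earlier value ($1)
# to the current value ($2). The earlier value must not be zero.
percent_change() {
    echo $(( ($2 - $1) * 100 / $1 ))
}

# Succeed when the drop from $1 to $2 is 30 percent or more.
is_sharp_drop() {
    [ "$(percent_change "$1" "$2")" -le -30 ]
}

percent_change 200 140    # prints -30
```

For example, a fall from 200 to 140 requests per second is a change of -30 percent and would trip the alert, while a fall from 200 to 190 (-5 percent) would not.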

|

||||

|

||||

### Conclusion ###

|

||||

|

||||

In this post we’ve walked you through integrating NGINX with Datadog to visualize your key metrics and notify your team when your web infrastructure shows signs of trouble.

|

||||

|

||||

If you’ve followed along using your own Datadog account, you should now have greatly improved visibility into what’s happening in your web environment, as well as the ability to create automated monitors tailored to your environment, your usage patterns, and the metrics that are most valuable to your organization.

|

||||

|

||||

If you don’t yet have a Datadog account, you can sign up for [a free trial][17] and start monitoring your infrastructure, your applications, and your services today.

|

||||

|

||||

----------

|

||||

|

||||

Source Markdown for this post is available [on GitHub][18]. Questions, corrections, additions, etc.? Please [let us know][19].

|

||||

|

||||

------------------------------------------------------------

|

||||

|

||||

via: https://www.datadoghq.com/blog/how-to-monitor-nginx-with-datadog/

|

||||

|

||||

Author: K Young
Translator: [译者ID](https://github.com/译者ID)
Proofreader: [校对者ID](https://github.com/校对者ID)

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](https://linux.cn/)

|

||||

|

||||

[1]:https://www.datadoghq.com/blog/how-to-monitor-nginx/

|

||||

[2]:https://www.datadoghq.com/blog/how-to-collect-nginx-metrics/#open-source

|

||||

[3]:https://www.datadoghq.com/blog/how-to-collect-nginx-metrics/#plus

|

||||

[4]:https://github.com/DataDog/dd-agent

|

||||

[5]:https://app.datadoghq.com/account/settings#agent

|

||||

[6]:https://app.datadoghq.com/infrastructure

|

||||

[7]:http://docs.datadoghq.com/guides/basic_agent_usage/

|

||||

[8]:https://github.com/DataDog/dd-agent/blob/master/conf.d/nginx.yaml.example

|

||||

[9]:http://docs.datadoghq.com/guides/basic_agent_usage/

|

||||

[10]:http://docs.datadoghq.com/guides/basic_agent_usage/

|

||||

[11]:https://app.datadoghq.com/account/settings#integrations/nginx

|

||||

[12]:https://app.datadoghq.com/dash/integration/nginx

|

||||

[13]:https://www.datadoghq.com/blog/how-to-monitor-nginx/

|

||||

[14]:https://www.datadoghq.com/blog/introducing-host-maps-know-thy-infrastructure/

|

||||

[15]:https://www.datadoghq.com/blog/pagerduty/

|

||||

[16]:https://www.datadoghq.com/blog/monitoring-101-collecting-data/#metrics

|

||||

[17]:https://www.datadoghq.com/blog/how-to-monitor-nginx-with-datadog/#sign-up

|

||||

[18]:https://github.com/DataDog/the-monitor/blob/master/nginx/how_to_monitor_nginx_with_datadog.md

|

||||

[19]:https://github.com/DataDog/the-monitor/issues

|

||||

@ -1,419 +0,0 @@

|

||||

wyangsun translating

|

||||

Managing Linux Logs

|

||||

================================================================================

|

||||

A key best practice for logging is to centralize or aggregate your logs in one place, especially if you have multiple servers or tiers in your architecture. We’ll tell you why this is a good idea and give tips on how to do it easily.

|

||||

|

||||

### Benefits of Centralizing Logs ###

|

||||

|

||||

It can be cumbersome to look at individual log files if you have many servers. Modern web sites and services often include multiple tiers of servers, distributed with load balancers, and more. It’d take a long time to hunt down the right file, and even longer to correlate problems across servers. There’s nothing more frustrating than finding the information you are looking for hasn’t been captured, or the log file that could have held the answer has just been lost after a restart.

|

||||

|

||||

Centralizing your logs makes them faster to search, which can help you solve production issues faster. You don’t have to guess which server had the issue because all the logs are in one place. Additionally, you can use more powerful tools to analyze them, including log management solutions. These solutions can [transform plain text logs][1] into fields that can be easily searched and analyzed.

|

||||

|

||||

Centralizing your logs also makes them easier to manage:

|

||||

|

||||

- They are safer from accidental or intentional loss when they are backed up and archived in a separate location. If your servers go down or are unresponsive, you can use the centralized logs to debug the problem.

|

||||

- You don’t have to worry about ssh or inefficient grep commands requiring more resources on troubled systems.

|

||||

- You don’t have to worry about full disks, which can crash your servers.

|

||||

- You can keep your production servers secure without giving your entire team access just to look at logs. It’s much safer to give your team access to logs from the central location.

|

||||

|

||||

With centralized log management, you still must deal with the risk of being unable to transfer logs to the centralized location due to poor network connectivity or of using up a lot of network bandwidth. We’ll discuss how to intelligently address these issues in the sections below.

|

||||

|

||||

### Popular Tools for Centralizing Logs ###

|

||||

|

||||

The most common way of centralizing logs on Linux is by using syslog daemons or agents. The syslog daemon supports local log collection, then transports logs through the syslog protocol to a central server. There are several popular daemons that you can use to centralize your log files:

|

||||

|

||||

- [rsyslog][2] is a light-weight daemon installed on most common Linux distributions.

|

||||

- [syslog-ng][3] is the second most popular syslog daemon for Linux.

|

||||

- [logstash][4] is a heavier-weight agent that can do more advanced processing and parsing.

|

||||

- [fluentd][5] is another agent with advanced processing capabilities.

|

||||

|

||||

Rsyslog is the most popular daemon for centralizing your log data because it’s installed by default in most common distributions of Linux. You don’t need to download it or install it, and it’s lightweight so it won’t take up much of your system resources.

|

||||

|

||||

If you need more advanced filtering or custom parsing capabilities, Logstash is the next most popular choice if you don’t mind the extra system footprint.

|

||||

|

||||

### Configure Rsyslog.conf ###

|

||||

|

||||

Since rsyslog is the most widely used syslog daemon, we’ll show how to configure it to centralize logs. The global configuration file is located at /etc/rsyslog.conf. It loads modules, sets global directives, and has an include for application-specific files located in the directory /etc/rsyslog.d/. This directory contains /etc/rsyslog.d/50-default.conf which instructs rsyslog to write the system logs to file. You can read more about the configuration files in the [rsyslog documentation][6].

|

||||

|

||||

The configuration language for rsyslog is [RainerScript][7]. You set up specific inputs for logs as well as actions to output them to another destination. Rsyslog already configures standard defaults for syslog input, so you usually just need to add an output to your log server. Here is an example configuration for rsyslog to output logs to an external server. In this example, **BEBOP** is the hostname of the server, so you should replace it with your own server name.

|

||||

|

||||

action(type="omfwd" protocol="tcp" target="BEBOP" port="514")
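If you manage several machines, the same forwarding line can be generated rather than typed by hand. The following is a minimal sketch: `rsyslog_forward_action` is a made-up function name, and it only prints text in the form shown above, leaving it to you to place the result in your rsyslog configuration.

```shell
#!/bin/sh
# Hypothetical generator: print an rsyslog forwarding action.
# $1 = target host, $2 = port, $3 = protocol (tcp or udp; defaults to tcp).
rsyslog_forward_action() {
    printf 'action(type="omfwd" protocol="%s" target="%s" port="%s")\n' \
        "${3:-tcp}" "$1" "$2"
}

rsyslog_forward_action BEBOP 514
```

Passing `udp` as the third argument produces the UDP variant of the same action.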

|

||||

|

||||

You could send your logs to a log server with ample storage to keep a copy for search, backup, and analysis. If you’re storing logs in the file system, then you should set up [log rotation][8] to keep your disk from getting full.

|

||||

|

||||

Alternatively, you can send these logs to a log management solution. If your solution is installed locally you can send it to your local host and port as specified in your system documentation. If you use a cloud-based provider, you will send them to the hostname and port specified by your provider.

|

||||

|

||||

### Log Directories ###

|

||||

|

||||

You can centralize all the files in a directory or matching a wildcard pattern. The nxlog and syslog-ng daemons support both directories and wildcards (*).

|

||||

|

||||

Common versions of rsyslog can’t monitor directories directly. As a workaround, you can set up a cron job to monitor the directory for new files, then configure rsyslog to send those files to a destination, such as your log management system. As an example, the log management vendor Loggly has an open source version of a [script to monitor directories][9].
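
The cron-based workaround can be sketched in a few lines of Python. This is only an illustration of the idea, not the Loggly script linked above; the function name `find_new_files` and the wildcard pattern are assumptions.

```python
import glob


def find_new_files(pattern, seen):
    """Return files matching `pattern` that were not present on earlier calls.

    `seen` is a set owned by the caller; it is updated in place so that the
    next poll only reports files created since this one.
    """
    current = set(glob.glob(pattern))
    new_files = sorted(current - seen)
    seen.update(current)
    return new_files
```

A periodic job could call `find_new_files("/var/log/myapp/*.log", seen)` (a hypothetical path) and generate one rsyslog file input for each newly reported file.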

|

||||

|

||||

### Which Protocol: UDP, TCP, or RELP? ###

|

||||

|

||||

There are three main protocols that you can choose from when you transmit log data. UDP is the most common choice on your local network, and TCP is the most common over the Internet. If you cannot afford to lose logs, then use the more advanced RELP protocol.

|

||||

|

||||

[UDP][10] sends a datagram packet, which is a single packet of information. It’s an outbound-only protocol, so it doesn’t send you an acknowledgement of receipt (ACK). It makes only one attempt to send the packet. UDP can be used to smartly degrade or drop logs when the network gets congested. It’s most commonly used on reliable networks like localhost.

|

||||

|

||||

[TCP][11] sends streaming information in multiple packets and returns an ACK. TCP makes multiple attempts to send the packet, but is limited by the size of the [TCP buffer][12]. This is the most common protocol for sending logs over the Internet.

|

||||

|

||||

[RELP][13] is the most reliable of these three protocols but was created for rsyslog and has less industry adoption. It acknowledges receipt of data in the application layer and will resend if there is an error. Make sure your destination also supports this protocol.
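
To make the difference between the first two transports concrete, here is a minimal Python sketch that builds a simplified syslog line and sends it either as a single UDP datagram or over a TCP stream. It is not part of rsyslog. The helper names, the `myapp` tag used in the usage note, the omission of the timestamp and host fields, and the newline framing for TCP are assumptions made for illustration, so check what your receiver expects.

```python
import socket


def syslog_pri(facility: int, severity: int) -> int:
    """Syslog priority value: facility * 8 + severity."""
    return facility * 8 + severity


def build_message(facility: int, severity: int, tag: str, text: str) -> bytes:
    """Build a simplified '<PRI>tag: text' line (timestamp and host omitted)."""
    return f"<{syslog_pri(facility, severity)}>{tag}: {text}".encode("utf-8")


def send_udp(message: bytes, host: str, port: int) -> None:
    """Fire and forget: one datagram, no acknowledgement, no retry."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(message, (host, port))


def send_tcp(message: bytes, host: str, port: int, timeout: float = 5.0) -> None:
    """Stream delivery: the connect call fails if nobody is listening."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        # Assumed framing: one message per line, terminated by a newline.
        sock.sendall(message + b"\n")
```

For example, `build_message(1, 6, "myapp", "hello")` uses facility 1 (user) and severity 6 (info), which gives the priority value 14.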

|

||||

|

||||

### Reliably Send with Disk Assisted Queues ###

|

||||

|

||||

If rsyslog encounters a problem when storing logs, such as an unavailable network connection, it can queue the logs until the connection is restored. The queued logs are stored in memory by default. However, memory is limited and if the problem persists, the logs can exceed memory capacity.

|

||||

|

||||

**Warning: You can lose data if you store logs only in memory.**

|

||||

|

||||

Rsyslog can queue your logs to disk when memory is full. [Disk-assisted queues][14] make transport of logs more reliable. Here is an example of how to configure rsyslog with a disk-assisted queue:

|

||||

|

||||

$WorkDirectory /var/spool/rsyslog # where to place spool files

|

||||

$ActionQueueFileName fwdRule1 # unique name prefix for spool files

|

||||

$ActionQueueMaxDiskSpace 1g # 1gb space limit (use as much as possible)

|

||||

$ActionQueueSaveOnShutdown on # save messages to disk on shutdown

|

||||

$ActionQueueType LinkedList # run asynchronously

|

||||

$ActionResumeRetryCount -1 # infinite retries if host is down
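
The idea behind a disk-assisted queue can be illustrated with a toy sketch in Python. This is not how rsyslog implements its queues; the class name, the spool file layout, and the tiny in-memory limit are assumptions for illustration only. It also assumes every log entry is a single line.

```python
class DiskAssistedQueue:
    """Toy FIFO queue: keeps a few items in memory and spills the rest to disk."""

    def __init__(self, spool_path, max_in_memory=3):
        self.spool_path = spool_path
        self.max_in_memory = max_in_memory
        self.memory = []        # oldest item first
        self.spooled = 0        # lines on disk that have not been read back yet
        self.read_offset = 0    # byte offset of the next unread spool line

    def put(self, line):
        # Once anything is on disk, new items also go to disk to preserve order.
        if self.spooled == 0 and len(self.memory) < self.max_in_memory:
            self.memory.append(line)
            return
        with open(self.spool_path, "ab") as spool:
            spool.write(line.encode("utf-8") + b"\n")
        self.spooled += 1

    def get(self):
        """Return the oldest queued line, or None when the queue is empty."""
        if self.memory:
            return self.memory.pop(0)
        if self.spooled:
            with open(self.spool_path, "rb") as spool:
                spool.seek(self.read_offset)
                raw = spool.readline()
                self.read_offset = spool.tell()
            self.spooled -= 1
            return raw.rstrip(b"\n").decode("utf-8")
        return None
```

Because the overflow lives in a file, entries that were spilled survive even if the process holding the in-memory part is restarted, which is the property the directives above are meant to provide.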

|

||||

|

||||

### Encrypt Logs Using TLS ###

|

||||

|

||||

When the security and privacy of your data is a concern, you should consider encrypting your logs. Sniffers and middlemen could read your log data if you transmit it over the Internet in clear text. You should encrypt your logs if they contain private information, sensitive identification data, or government-regulated data. The rsyslog daemon can encrypt your logs using the TLS protocol and keep your data safer.

|

||||

|

||||

To set up TLS encryption, you need to do the following tasks:

|

||||

|

||||

1. Generate a [certificate authority][15] (CA). There are sample certificates in /contrib/gnutls, which are good only for testing, but you need to create your own for production. If you’re using a log management service, it will have one ready for you.

|

||||

2. Generate a [digital certificate][16] for your server to enable SSL operation, or use one from your log management service provider.

|

||||

3. Configure your rsyslog daemon to send TLS-encrypted data to your log management system.

|

||||

|

||||

Here’s an example rsyslog configuration with TLS encryption. Replace CERT and DOMAIN_NAME with your own server settings.

|

||||

|

||||

$DefaultNetstreamDriverCAFile /etc/rsyslog.d/keys/ca.d/CERT.crt

|

||||

$ActionSendStreamDriver gtls

|

||||

$ActionSendStreamDriverMode 1

|

||||

$ActionSendStreamDriverAuthMode x509/name

|

||||

$ActionSendStreamDriverPermittedPeer *.DOMAIN_NAME.com
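
For comparison, the same two checks (trusting a specific CA and verifying the peer name) look like this from a client written in Python. This is a sketch using the standard `ssl` module, not an rsyslog feature; the function names and the newline framing are assumptions, and the host and port come from your own log server or provider.

```python
import socket
import ssl
from typing import Optional


def make_tls_context(ca_file: Optional[str] = None) -> ssl.SSLContext:
    """Client context that verifies the server certificate and its host name.

    With ca_file=None the system's default CA store is used; pass the path of
    your own CA certificate to trust only that authority's chain.
    """
    return ssl.create_default_context(cafile=ca_file)


def send_tls(message: bytes, host: str, port: int, ca_file: Optional[str] = None) -> None:
    """Send one newline-terminated message over a verified TLS connection."""
    context = make_tls_context(ca_file)
    with socket.create_connection((host, port), timeout=5.0) as raw:
        # server_hostname is what the certificate is checked against.
        with context.wrap_socket(raw, server_hostname=host) as tls:
            tls.sendall(message + b"\n")
```

If the certificate does not chain to the given CA or does not match the host name, the handshake raises an error instead of sending the log line in the clear.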

|

||||

|

||||

### Best Practices for Application Logging ###

|

||||

|

||||

In addition to the logs that Linux creates by default, it’s also a good idea to centralize logs from important applications. Almost all Linux-based server class applications write their status information in separate, dedicated log files. This includes database products like PostgreSQL or MySQL, web servers like Nginx or Apache, firewalls, print and file sharing services, directory and DNS servers and so on.

|

||||

|

||||

The first thing administrators do after installing an application is to configure it. Linux applications typically have a .conf file somewhere within the /etc directory. It can be somewhere else too, but that’s the first place where people look for configuration files.

|

||||

|

||||

Depending on how complex or large the application is, the number of settable parameters can range from a few to hundreds. As mentioned before, most applications write their status to some sort of log file, and the configuration file is where the log settings are defined, among other things.

|

||||

|

||||

If you’re not sure where it is, you can use the locate command to find it:

|

||||

|

||||

[root@localhost ~]# locate postgresql.conf

|

||||

/usr/pgsql-9.4/share/postgresql.conf.sample

|

||||

/var/lib/pgsql/9.4/data/postgresql.conf

|

||||

|

||||

#### Set a Standard Location for Log Files ####

|

||||

|

||||

Linux systems typically save their log files under the /var/log directory. This works fine, but check whether the application saves its logs in its own directory under /var/log. If it does, great. If not, you may want to create a dedicated directory for the app under /var/log. Why? Other applications save their log files under /var/log too, and if your app saves more than one log file (perhaps one every day or one after each service restart), it may be difficult to trawl through a large directory to find the file you want.

|

||||

|

||||

If you have more than one instance of the application running in your network, this approach also comes in handy. Think about a situation where you may have a dozen web servers running in your network. When troubleshooting any one of the boxes, you would know exactly where to go.

|

||||

|

||||

#### Use A Standard Filename ####

|

||||

|

||||

Use a standard filename for the latest logs from your application. This makes things easy because you can monitor and tail a single file. Many applications add some sort of date or time stamp to the name of the current log file. This makes it much more difficult to find the latest file and to set up file monitoring by rsyslog. A better approach is to add timestamps to older log files using logrotate. This makes them easier to archive and search historically.

|

||||

|

||||

#### Append the Log File ####

|

||||

|

||||

Is the log file going to be overwritten after each application restart? If so, we recommend turning that off. After each restart the app should append to the log file. That way, you can always go back to the last log line before the restart.

|

||||

|

||||

#### Appending vs. Rotation of Log File ####

|

||||

|

||||

Even if the application writes a new log file after each restart, how is it saving in the current log? Is it appending to one single, massive file? Linux systems are not known for frequent reboots or crashes: applications can run for very long periods without even blinking, but that can also make the log file very large. If you are trying to analyze the root cause of a connection failure that happened last week, you could easily be searching through tens of thousands of lines.

|

||||

|

||||

We recommend you configure the application to rotate its log file once every day, say at midnight.

|

||||

|

||||

Why? Well, it becomes manageable, for starters. It’s much easier to find a file name with a specific date and time pattern than to search through one file for that date’s entries. Files are also much smaller, so you don’t think vi has frozen when you open a log file. Secondly, if you are sending the log file over the wire to a different location (perhaps a nightly backup job copying to a centralized log server), it doesn’t chew up your network’s bandwidth. Third and finally, it helps with your log retention. If you want to cull old log entries, it’s easier to delete files older than a particular date than to have an application parse one single large file.
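
If the application happens to be written in Python, daily rotation at midnight is available in the standard library. The sketch below uses `logging.handlers.TimedRotatingFileHandler`; the function name, the format string, and the 30-file default are illustrative assumptions.

```python
import logging
import logging.handlers


def make_daily_handler(path, keep_days=30):
    """Return a file handler that rotates `path` at midnight.

    Only the newest `keep_days` rotated files are kept; older ones are
    deleted by the handler when it rotates.
    """
    handler = logging.handlers.TimedRotatingFileHandler(
        path, when="midnight", backupCount=keep_days, encoding="utf-8"
    )
    handler.setFormatter(logging.Formatter("%(asctime)s %(levelname)s %(message)s"))
    return handler
```

The current log keeps its plain name, and rotated files get a date suffix appended, so the "standard filename" advice above still holds.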

|

||||

|

||||

#### Retention of Log File ####

|

||||

|

||||

How long do you keep a log file? That definitely comes down to business requirements. You could be asked to keep one week’s worth of logging information, or it may be a regulatory requirement to keep ten years’ worth of data. Whatever it is, logs need to leave the server at some point.

|

||||

|

||||

In our opinion, unless otherwise required, keep at least a month’s worth of log files online, plus copy them to a secondary location like a logging server. Anything older than that can be offloaded to separate media. For example, if you are on AWS, your older logs can be copied to Glacier.
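
A retention check like this can be sketched in Python. The function below only lists files whose modification time is older than a cutoff; what happens to them (delete, compress, copy to archive storage) is left to the caller. The function name and the 30-day figure in the usage note are assumptions.

```python
import os
import time


def find_expired(directory, max_age_days, now=None):
    """Return paths of regular files in `directory` older than `max_age_days`.

    Age is judged by modification time. `now` is a Unix timestamp and
    defaults to the current time.
    """
    reference = time.time() if now is None else now
    cutoff = reference - max_age_days * 86400  # 86400 seconds per day
    expired = []
    for entry in os.scandir(directory):
        if entry.is_file() and entry.stat().st_mtime < cutoff:
            expired.append(entry.path)
    return sorted(expired)
```

Calling `find_expired("/var/log/myapp", 30)` (a hypothetical directory) would return the candidates for offloading under the one-month guideline.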

|

||||

|

||||

#### Separate Disk Location for Log Files ####

|

||||

|

||||

Linux best practice usually suggests mounting the /var directory on a separate file system. This is because of the high number of I/Os associated with this directory. We would recommend mounting the /var/log directory on a separate disk system. This can avoid I/O contention with the main application’s data. Also, if the number of log files becomes too large or a single log file becomes too big, it doesn’t fill up the entire disk.

|

||||

|

||||

#### Log Entries ####

|

||||

|

||||

What information should be captured in each log entry?

|

||||

|

||||

That depends on what you want to use the log for. Do you want to use it only for troubleshooting purposes, or do you want to capture everything that’s happening? Is it a legal requirement to capture what each user is running or viewing?

|

||||

|

||||

If you are using logs for troubleshooting purposes, save only errors, warnings or fatal messages. There’s no reason to capture debug messages, for example. The app may log debug messages by default or another administrator might have turned this on for another troubleshooting exercise, but you need to turn this off because it can definitely fill up the space quickly. At a minimum, capture the date, time, client application name, source IP or client host name, action performed and the message itself.
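
As an illustration, here is how those minimum fields and the "no debug messages" rule could look in an application that uses Python's standard `logging` module. The helper names are assumptions, and the host name recorded here is that of the machine running the app, not a remote client.

```python
import logging
import socket


class HostFilter(logging.Filter):
    """Attach the local host name to every record so the formatter can print it."""

    def filter(self, record):
        record.hostname = socket.gethostname()
        return True


def make_logger(name, stream):
    """Logger that drops debug/info messages and writes timestamped entries."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.WARNING)   # warnings, errors and fatal messages only
    logger.propagate = False
    handler = logging.StreamHandler(stream)
    handler.addFilter(HostFilter())
    handler.setFormatter(
        logging.Formatter("%(asctime)s %(hostname)s %(name)s %(levelname)s %(message)s")
    )
    logger.addHandler(handler)
    return logger
```

With this setup a call such as `logger.debug(...)` produces no output, while `logger.error("payment failed")` writes one line containing the date, time, host, application name, level, and message.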

|

||||

|

||||

#### A Practical Example for PostgreSQL ####

|

||||

|

||||

As an example, let’s look at the main configuration file for a vanilla PostgreSQL 9.4 installation. It’s called postgresql.conf and, unlike most other config files on Linux systems, it’s not saved under the /etc directory. In the code snippet below, we can see it’s in the /var/lib/pgsql directory of our CentOS 7 server:

|

||||

|

||||

[root@localhost ~]# vi /var/lib/pgsql/9.4/data/postgresql.conf

|

||||

...

|

||||

#------------------------------------------------------------------------------

|

||||

# ERROR REPORTING AND LOGGING

|

||||

#------------------------------------------------------------------------------

|

||||

# - Where to Log -

|

||||

log_destination = 'stderr'

|

||||

# Valid values are combinations of

|

||||

# stderr, csvlog, syslog, and eventlog,

|

||||

# depending on platform. csvlog

|

||||

# requires logging_collector to be on.

|

||||

# This is used when logging to stderr:

|

||||

logging_collector = on

|

||||

# Enable capturing of stderr and csvlog

|

||||

# into log files. Required to be on for

|

||||

# csvlogs.

|

||||

# (change requires restart)

|

||||

# These are only used if logging_collector is on:

|

||||

log_directory = 'pg_log'

|

||||

# directory where log files are written,

|

||||

# can be absolute or relative to PGDATA

|

||||

log_filename = 'postgresql-%a.log' # log file name pattern,

|

||||

# can include strftime() escapes

|

||||

#log_file_mode = 0600

|

||||

# creation mode for log files,

|

||||

# begin with 0 to use octal notation

|

||||

log_truncate_on_rotation = on # If on, an existing log file with the

|

||||

# same name as the new log file will be

|

||||

# truncated rather than appended to.

|

||||

# But such truncation only occurs on

|

||||

# time-driven rotation, not on restarts

|

||||

# or size-driven rotation. Default is

|

||||

# off, meaning append to existing files

|

||||

# in all cases.

|

||||

log_rotation_age = 1d

|

||||

# Automatic rotation of logfiles will happen after that time. 0 disables.

|

||||

log_rotation_size = 0 # Automatic rotation of logfiles will happen after that much log output. 0 disables.

|

||||

# These are relevant when logging to syslog:

|

||||

#syslog_facility = 'LOCAL0'

|

||||

#syslog_ident = 'postgres'

|

||||

# This is only relevant when logging to eventlog (win32):

|

||||

#event_source = 'PostgreSQL'

|

||||

# - When to Log -

|

||||

#client_min_messages = notice # values in order of decreasing detail:

|

||||

# debug5

|

||||

# debug4

|

||||

# debug3

|

||||

# debug2

|

||||

# debug1

|

||||

# log

|

||||

# notice

|

||||

# warning

|

||||

# error

|

||||

#log_min_messages = warning # values in order of decreasing detail:

|

||||

# debug5

|

||||

# debug4

|

||||

# debug3

|

||||

# debug2

|

||||

# debug1

|

||||

# info

|

||||

# notice

|

||||

# warning

|

||||

# error

|

||||

# log

|

||||

# fatal

|

||||

# panic

|

||||

#log_min_error_statement = error # values in order of decreasing detail:

|

||||

# debug5

|

||||

# debug4

|

||||

# debug3

|

||||

# debug2

|

||||

# debug1

|

||||

# info

|

||||

# notice

|

||||

# warning

|

||||

# error

|

||||

# log

|

||||

# fatal

|

||||

# panic (effectively off)

|

||||

#log_min_duration_statement = -1 # -1 is disabled, 0 logs all statements

|

||||

# and their durations, > 0 logs only

|

||||

# statements running at least this number

|

||||

# of milliseconds

|

||||

# - What to Log

|

||||

#debug_print_parse = off

|

||||

#debug_print_rewritten = off

|

||||

#debug_print_plan = off

|

||||

#debug_pretty_print = on

|

||||

#log_checkpoints = off

|

||||

#log_connections = off

|

||||

#log_disconnections = off

|

||||

#log_duration = off

|

||||

#log_error_verbosity = default

|

||||

# terse, default, or verbose messages

|

||||

#log_hostname = off

|

||||

log_line_prefix = '< %m >' # special values:

|

||||

# %a = application name

|

||||

# %u = user name

|

||||

# %d = database name

|

||||

# %r = remote host and port

|

||||

# %h = remote host

|

||||

# %p = process ID

|

||||

# %t = timestamp without milliseconds

|

||||

# %m = timestamp with milliseconds

|

||||

# %i = command tag

|

||||

# %e = SQL state

|

||||

# %c = session ID

|

||||

# %l = session line number

|

||||

# %s = session start timestamp

|

||||

# %v = virtual transaction ID

|

||||

# %x = transaction ID (0 if none)

|

||||

# %q = stop here in non-session

|

||||

# processes

|

||||

# %% = '%'

|

||||

# e.g. '<%u%%%d> '

|

||||

#log_lock_waits = off # log lock waits >= deadlock_timeout

|

||||

#log_statement = 'none' # none, ddl, mod, all

|

||||

#log_temp_files = -1 # log temporary files equal or larger

|

||||

# than the specified size in kilobytes; -1 disables, 0 logs all temp files

|

||||

log_timezone = 'Australia/ACT'

|

||||

|

||||

Although most parameters are commented out, they assume default values. We can see the log file directory is pg_log (log_directory parameter), the file names should start with postgresql (log_filename parameter), the files are rotated once every day (log_rotation_age parameter) and the log entries start with a timestamp (log_line_prefix parameter). Of particular interest is the log_line_prefix parameter: there is a whole gamut of information you can include there.

|

||||

|

||||

Looking under /var/lib/pgsql/9.4/data/pg_log directory shows us these files:

|

||||

|

||||

[root@localhost ~]# ls -l /var/lib/pgsql/9.4/data/pg_log

|

||||

total 20

|

||||

-rw-------. 1 postgres postgres 1212 May 1 20:11 postgresql-Fri.log

|

||||

-rw-------. 1 postgres postgres 243 Feb 9 21:49 postgresql-Mon.log

|

||||

-rw-------. 1 postgres postgres 1138 Feb 7 11:08 postgresql-Sat.log

|

||||

-rw-------. 1 postgres postgres 1203 Feb 26 21:32 postgresql-Thu.log

|

||||

-rw-------. 1 postgres postgres 326 Feb 10 01:20 postgresql-Tue.log

|

||||

|

||||

So the log files only have the name of the weekday stamped in the file name. We can change it. How? Configure the log_filename parameter in postgresql.conf.
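
The comments in the configuration file say that log_filename can include strftime() escapes. Python's `datetime.strftime` understands the same escapes, so it is a quick way to preview what a pattern expands to. The second pattern in the usage note is just an example, not a recommendation from the PostgreSQL documentation.

```python
from datetime import datetime


def expand_log_filename(pattern, when):
    """Expand strftime() escapes in a log file name pattern for a given time."""
    return when.strftime(pattern)
```

For Friday 27 February 2015, the pattern `postgresql-%a.log` expands to `postgresql-Fri.log`, while `postgresql-%Y-%m-%d.log` expands to `postgresql-2015-02-27.log`. Weekday names repeat every week, so with the settings shown above each file is reused after seven days, whereas a full date in the name gives every day its own file.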

|

||||

|

||||

Looking inside one log file shows that its entries start with only a timestamp:

|

||||

|

||||

[root@localhost ~]# cat /var/lib/pgsql/9.4/data/pg_log/postgresql-Fri.log

|

||||

...

|

||||

< 2015-02-27 01:21:27.020 EST >LOG: received fast shutdown request

|

||||

< 2015-02-27 01:21:27.025 EST >LOG: aborting any active transactions

|

||||

< 2015-02-27 01:21:27.026 EST >LOG: autovacuum launcher shutting down

|

||||

< 2015-02-27 01:21:27.036 EST >LOG: shutting down

|

||||

< 2015-02-27 01:21:27.211 EST >LOG: database system is shut down

|
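
Entries in the format shown above, produced by the log_line_prefix value '< %m >', are easy to split into fields. The regular expression below is a sketch written against these sample lines only; a different prefix setting would need a different pattern, and the field names are assumptions.

```python
import re

# Matches lines such as:
# < 2015-02-27 01:21:27.020 EST >LOG: received fast shutdown request
LINE_PATTERN = re.compile(
    r"^< (?P<timestamp>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2}\.\d{3}) "
    r"(?P<timezone>\S+) >(?P<level>[A-Z0-9]+):\s+(?P<message>.*)$"
)


def parse_line(line):
    """Return a dict of fields for a matching log line, or None otherwise."""
    match = LINE_PATTERN.match(line.rstrip("\n"))
    return match.groupdict() if match else None
```

Adding more escapes to log_line_prefix, such as the user or database name, gives a parser like this more fields to extract.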

||||

|

||||

### Centralizing Application Logs ###

|

||||

|

||||

#### Log File Monitoring with Imfile ####

|

||||

|

||||

Traditionally, the most common way for applications to log their data is with files. Files are easy to search on a single machine but don’t scale well with more servers. You can set up log file monitoring and send the events to a centralized server when new logs are appended to the bottom. Create a new configuration file in /etc/rsyslog.d/ then add a file input like this:

|

||||

|

||||

$ModLoad imfile

|

||||

$InputFilePollInterval 10

|

||||

$PrivDropToGroup adm

|

||||

|

||||


|

||||

|

||||

# Input for FILE1

|

||||

$InputFileName /FILE1

|

||||

$InputFileTag APPNAME1

|

||||

$InputFileStateFile stat-APPNAME1 #this must be unique for each file being polled

|

||||

$InputFileSeverity info

|

||||

$InputFilePersistStateInterval 20000

|

||||

$InputRunFileMonitor

|

||||

|

||||

Replace FILE1 and APPNAME1 with your own file and application names. Rsyslog will send it to the outputs you have configured.
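
Conceptually, file monitoring means polling a file and remembering how far you have read, which is the role the state file plays in the configuration above. The Python sketch below shows that idea only; it is not rsyslog's implementation, and the function name and return shape are assumptions.

```python
import os


def read_new_lines(path, offset=0):
    """Return (lines, new_offset) for data appended to `path` since `offset`.

    Only complete lines are returned; a partial last line is left for the
    next poll. If the file is now shorter than `offset`, it is assumed to
    have been truncated or replaced and is read again from the start.
    """
    with open(path, "rb") as handle:
        handle.seek(0, os.SEEK_END)
        if handle.tell() < offset:
            offset = 0
        handle.seek(offset)
        data = handle.read()
    end = data.rfind(b"\n") + 1   # 0 when no complete line is available
    lines = data[:end].decode("utf-8", errors="replace").splitlines()
    return lines, offset + end
```

A poller would call this at a fixed interval, forward the returned lines, and persist `new_offset` so that a restart does not resend or skip entries.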

|

||||

|

||||

#### Local Socket Logs with Imuxsock ####

|

||||

|

||||

A socket is similar to a UNIX file handle except that the socket is read into memory by your syslog daemon and then sent to the destination. No file needs to be written. As an example, the logger command sends its logs to this UNIX socket.

|

||||

|

||||

This approach makes efficient use of system resources if your server is constrained by disk I/O or you have no need for local file logs. The disadvantage of this approach is that the socket has a limited queue size. If your syslog daemon goes down or can’t keep up, then you could lose log data.

|

||||

|

||||

The rsyslog daemon will read from the /dev/log socket by default, but you can specifically enable it with the [imuxsock input module][17] using the following command:

|

||||

|

||||

$ModLoad imuxsock

|

||||

|

||||

#### UDP Logs with Imudp ####

|

||||

|

||||

Some applications send log data over UDP, which is the standard transport for syslog messages on a network or on your localhost. Your syslog daemon receives these logs and can process them or transmit them in a different format. Alternatively, you can send the logs to your log server or to a log management solution.

|

||||

|

||||

Use the following command to configure rsyslog to accept syslog data over UDP on the standard port 514:

|

||||

|

||||

$ModLoad imudp

|

||||

|

||||


|

||||

|

||||

$UDPServerRun 514
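
On the sending side, an application can emit syslog messages over UDP without any agent. The sketch below uses Python's standard `logging.handlers.SysLogHandler`, which sends UDP datagrams when given a host and port; the function name, the logger name in the usage note, and the message format are illustrative assumptions.

```python
import logging
import logging.handlers


def make_udp_syslog_logger(name, host="localhost", port=514):
    """Logger whose records are sent as syslog datagrams to host:port."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.propagate = False
    # A (host, port) address makes the handler use UDP by default.
    handler = logging.handlers.SysLogHandler(address=(host, port))
    handler.setFormatter(logging.Formatter("%(name)s: %(message)s"))
    logger.addHandler(handler)
    return logger
```

With rsyslog listening on port 514 as configured above, `make_udp_syslog_logger("myapp").info("hello")` should arrive as a user.info message tagged `myapp`. Because the transport is UDP, the call succeeds even when nothing is listening.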

|

||||

|

||||

### Manage Logs with Logrotate ###

|

||||

|

||||

Log rotation is a process that archives log files automatically when they reach a specified age. Without intervention, log files keep growing, using up disk space. Eventually they can fill the disk and bring down your machine.

|

||||

|

||||

The logrotate utility can truncate your logs as they age, freeing up space. Your new log file retains the filename. Your old log file is renamed with a number appended to the end of it. Each time the logrotate utility runs, a new file is created and the existing file is renamed in sequence. You determine the threshold when old files are deleted or archived.

|

||||

|

||||

When logrotate renames a log file and creates a new one in its place, the new file has a new inode, which can interfere with rsyslog’s ability to monitor the new file. You can alleviate this issue by adding the copytruncate parameter to your logrotate configuration. This parameter copies the existing log file contents to a new file and then truncates the existing file. The inode never changes because the log file itself remains the same; its old contents are in a new file.
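
The inode behaviour can be checked with a small Python experiment. The two functions imitate the two rotation styles; they are simplified stand-ins for what logrotate does, and the `.1` suffix and function names are assumptions.

```python
import os
import shutil


def rotate_by_rename(path):
    """Move the log aside, then create a fresh empty file under the old name."""
    os.rename(path, path + ".1")
    open(path, "w").close()


def rotate_by_copytruncate(path):
    """Copy the contents to a new file, then empty the original in place."""
    shutil.copyfile(path, path + ".1")
    os.truncate(path, 0)


def inode(path):
    """Return the inode number of `path`."""
    return os.stat(path).st_ino
```

After `rotate_by_rename`, `inode(path)` differs from its earlier value because the old inode now belongs to the renamed file. After `rotate_by_copytruncate` it is unchanged. The trade-off is that lines written between the copy and the truncate can be lost.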

|

||||

|

||||

The logrotate utility uses the main configuration file at /etc/logrotate.conf and application-specific settings in the directory /etc/logrotate.d/. DigitalOcean has a detailed [tutorial on logrotate][18].

|

||||

|

||||

### Manage Configuration on Many Servers ###

|

||||

|

||||

When you have just a few servers, you can manually configure logging on them. Once you have a few dozen or more servers, you can take advantage of tools that make this easier and more scalable. At a basic level, all of these copy your rsyslog configuration to each server, and then restart rsyslog so the changes take effect.

|

||||

|

||||

#### Pssh ####

|

||||

|

||||

This tool lets you run an ssh command on several servers in parallel. Use a pssh deployment for only a small number of servers. If one of your servers fails, then you have to ssh into the failed server and do the deployment manually. If you have several failed servers, then the manual deployment on them can take a long time.
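
The same fan-out idea, together with the bookkeeping for failed servers, can be sketched in Python. This is not pssh itself; the function names are assumptions, and the default runner simply shells out to the system `ssh` client, so it relies on key-based logins already being set up.

```python
import subprocess
from concurrent.futures import ThreadPoolExecutor


def ssh_runner(host, command):
    """Run `command` on `host` with the system ssh client; return its exit code."""
    return subprocess.run(["ssh", host, command], capture_output=True).returncode


def run_on_hosts(hosts, command, runner=ssh_runner, max_workers=10):
    """Run the command on every host in parallel; return {host: exit_code}."""
    hosts = list(hosts)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        codes = pool.map(lambda host: runner(host, command), hosts)
        return dict(zip(hosts, codes))


def failed_hosts(results):
    """Hosts whose command did not exit with status 0."""
    return sorted(host for host, code in results.items() if code != 0)
```

For example, `failed_hosts(run_on_hosts(hosts, "sudo systemctl restart rsyslog"))` (a hypothetical restart command) returns the servers that still need manual attention.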

|

||||

|

||||

#### Puppet/Chef ####

|

||||

|

||||

Puppet and Chef are two different tools that can automatically configure all of the servers in your network to a specified standard. Their reporting tools let you know about failures and can resync periodically. Both Puppet and Chef have enthusiastic supporters. If you aren’t sure which one is more suitable for your deployment configuration management, you might appreciate [InfoWorld’s comparison of the two tools][19].

|

||||

|

||||

Some vendors also offer modules or recipes for configuring rsyslog. Here is an example from Loggly’s Puppet module. It offers a class for rsyslog to which you can add an identifying token:

|

||||

|

||||

node 'my_server_node.example.net' {

|

||||

# Send syslog events to Loggly

|

||||

class { 'loggly::rsyslog':

|

||||

customer_token => 'de7b5ccd-04de-4dc4-fbc9-501393600000',

|

||||

}

|

||||

}

|

||||

|

||||

#### Docker ####

|

||||

|

||||

Docker uses containers to run applications independent of the underlying server. Everything runs from inside a container, which you can think of as a unit of functionality. ZDNet has an in-depth article about [using Docker][20] in your data center.

|

||||

|

||||

There are several ways to log from Docker containers including linking to a logging container, logging to a shared volume, or adding a syslog agent directly inside the container. One of the most popular logging containers is called [logspout][21].

|

||||

|

||||

#### Vendor Scripts or Agents ####

|

||||

|

||||

Most log management solutions offer scripts or agents to make sending data from one or more servers relatively easy. Heavyweight agents can use up extra system resources. Some vendors like Loggly offer configuration scripts to make using existing syslog daemons easier. Here is an example [script][22] from Loggly which can run on any number of servers.

|

||||

|

||||

--------------------------------------------------------------------------------

|

||||

|

||||

via: http://www.loggly.com/ultimate-guide/logging/managing-linux-logs/

|

||||

|

||||

Author: [Jason Skowronski][a1]

|

||||

Author: [Amy Echeverri][a2]

|

||||

Author: [Sadequl Hussain][a3]

|

||||

Translator: [Translator ID](https://github.com/译者ID)

|

||||

Proofreader: [Proofreader ID](https://github.com/校对者ID)

|

||||

|

||||

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](https://linux.cn/)

|

||||

|

||||

[a1]:https://www.linkedin.com/in/jasonskowronski

|

||||

[a2]:https://www.linkedin.com/in/amyecheverri

|

||||

[a3]:https://www.linkedin.com/pub/sadequl-hussain/14/711/1a7

|

||||

[1]:https://docs.google.com/document/d/11LXZxWlkNSHkcrCWTUdnLRf_CiZz9kK0cr3yGM_BU_0/edit#heading=h.esrreycnpnbl

|

||||

[2]:http://www.rsyslog.com/

|

||||

[3]:http://www.balabit.com/network-security/syslog-ng/opensource-logging-system

|

||||

[4]:http://logstash.net/

|

||||

[5]:http://www.fluentd.org/

|

||||

[6]:http://www.rsyslog.com/doc/rsyslog_conf.html

|

||||

[7]:http://www.rsyslog.com/doc/master/rainerscript/index.html

|

||||

[8]:https://docs.google.com/document/d/11LXZxWlkNSHkcrCWTUdnLRf_CiZz9kK0cr3yGM_BU_0/edit#heading=h.eck7acdxin87

|

||||

[9]:https://www.loggly.com/docs/file-monitoring/

|

||||

[10]:http://www.networksorcery.com/enp/protocol/udp.htm

|

||||

[11]:http://www.networksorcery.com/enp/protocol/tcp.htm

|

||||

[12]:http://blog.gerhards.net/2008/04/on-unreliability-of-plain-tcp-syslog.html

|

||||

[13]:http://www.rsyslog.com/doc/relp.html

|

||||

[14]:http://www.rsyslog.com/doc/queues.html

|

||||

[15]:http://www.rsyslog.com/doc/tls_cert_ca.html

|

||||

[16]:http://www.rsyslog.com/doc/tls_cert_machine.html

|

||||

[17]:http://www.rsyslog.com/doc/v8-stable/configuration/modules/imuxsock.html

|

||||

[18]:https://www.digitalocean.com/community/tutorials/how-to-manage-log-files-with-logrotate-on-ubuntu-12-10

|

||||

[19]:http://www.infoworld.com/article/2614204/data-center/puppet-or-chef--the-configuration-management-dilemma.html

|

||||

[20]:http://www.zdnet.com/article/what-is-docker-and-why-is-it-so-darn-popular/

|

||||

[21]:https://github.com/progrium/logspout

|

||||

[22]:https://www.loggly.com/docs/sending-logs-unixlinux-system-setup/

|

||||

@ -1,3 +1,5 @@

|

||||

Vic020

|

||||

|

||||

Linux Tricks: Play Game in Chrome, Text-to-Speech, Schedule a Job and Watch Commands in Linux

|

||||

================================================================================

|

||||

Here again, I have compiled a list of four things under [Linux Tips and Tricks][1] series you may do to remain more productive and entertained with Linux Environment.

|

||||

@ -140,4 +142,4 @@ via: http://www.tecmint.com/text-to-speech-in-terminal-schedule-a-job-and-watch-

|

||||

|

||||

[a]:http://www.tecmint.com/author/avishek/

|

||||

[1]:http://www.tecmint.com/tag/linux-tricks/

|

||||

[2]:http://www.tecmint.com/11-cron-scheduling-task-examples-in-linux/

|

||||

[2]:http://www.tecmint.com/11-cron-scheduling-task-examples-in-linux/

|

||||

|

||||

@ -1,3 +1,4 @@

|

||||

KevinSJ Translating

|

||||

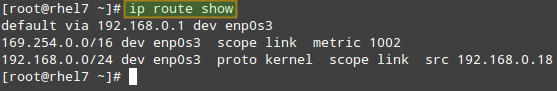

How to get Public IP from Linux Terminal?

|

||||

================================================================================

|

||||

|

||||

@ -65,4 +66,4 @@ via: http://www.blackmoreops.com/2015/06/14/how-to-get-public-ip-from-linux-term

|

||||

Translator: [Translator ID](https://github.com/译者ID)

|

||||

Proofreader: [Proofreader ID](https://github.com/校对者ID)

|

||||

|

||||

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](https://linux.cn/)

|

||||

This article was originally translated by [LCTT](https://github.com/LCTT/TranslateProject) and is proudly presented by [Linux中国](https://linux.cn/)

|

||||

|

||||

@ -1,3 +1,4 @@

|

||||

translating wi-cuckoo

|

||||

Howto Run JBoss Data Virtualization GA with OData in Docker Container

|

||||

================================================================================

|

||||

Hi everyone, today we'll learn how to run JBoss Data Virtualization 6.0.0.GA with OData in a Docker Container. JBoss Data Virtualization is a data supply and integration platform that takes data scattered across multiple sources, treats it as a single source, and delivers the required data as actionable information at business speed to any application or user. JBoss Data Virtualization can help us easily combine and transform data into reusable, business-friendly data models and make unified data easily consumable through open standard interfaces. It offers comprehensive data abstraction, federation, integration, transformation, and delivery capabilities to combine data from one or multiple sources into reusable models for agile data utilization and sharing. For more information about JBoss Data Virtualization, we can check out [its official page][1]. Docker is an open source platform to pack, ship and run any application as a lightweight container. Running JBoss Data Virtualization with OData in a Docker Container makes it easy to handle and launch.

|

||||

@ -99,4 +100,4 @@ via: http://linoxide.com/linux-how-to/run-jboss-data-virtualization-ga-odata-doc

|

||||

[a]:http://linoxide.com/author/arunp/

|

||||

[1]:http://www.redhat.com/en/technologies/jboss-middleware/data-virtualization

|

||||

[2]:https://github.com/jbossdemocentral/dv-odata-docker-integration-demo

|

||||

[3]:http://www.jboss.org/products/datavirt/download/

|

||||

[3]:http://www.jboss.org/products/datavirt/download/

|

||||

|

||||

@ -1,63 +0,0 @@

|

||||

Ubuntu Wants To Make It Easier For You To Install The Latest Nvidia Linux Driver

|

||||

================================================================================

|

||||

|

||||

Ubuntu gamers are on the rise, and so is demand for the latest drivers

|

||||

|

||||

**Installing the latest upstream NVIDIA graphics driver on Ubuntu could be about to get much easier.**

|

||||

|

||||

Ubuntu developers are considering the creation of a brand new ‘official’ PPA to distribute the latest closed-source NVIDIA binary drivers to desktop users.

|

||||

|

||||

The move would benefit Ubuntu gamers **without** risking the stability of the OS for everyone else.

|

||||

|

||||

New upstream drivers would be installed and updated from this new PPA **only** when a user explicitly opts in to it. Everyone else would continue to receive and use the most recent stable NVIDIA Linux driver snapshot included in the Ubuntu archive.

|

||||

|

||||

### Why Is This Needed? ###

|

||||

|

||||

|

||||

Ubuntu provides drivers – but they’re not the latest

|

||||

|

||||

The closed-source NVIDIA graphics drivers that are available to install on Ubuntu from the archive (using the command line, synaptic or through the additional drivers tool) work fine for most and can handle the composited Unity desktop shell with ease.

|

||||

|

||||

For gaming needs it’s a different story.

|

||||

|

||||

If you want to squeeze every last frame and HD texture out of the latest big-name Steam game you’ll need the latest binary drivers blob.

|

||||

|

||||

> ‘Installing the very latest Nvidia Linux driver on Ubuntu is not easy and not always safe.’

|

||||

|

||||

The more recent the driver the more likely it is to support the latest features and technologies, or come pre-packed with game-specific tweaks and bug fixes too.

|

||||

|

||||

The problem is that installing the very latest Nvidia Linux driver on Ubuntu is not easy and not always safe.

|

||||

|

||||

To fill the void many third-party PPAs maintained by enthusiasts have emerged. Since many of these PPAs also distribute other experimental or bleeding-edge software their use is **not without risk**. Adding a bleeding edge PPA is often the fastest way to entirely hose a system!

|

||||

|

||||

A solution that lets Ubuntu users install the latest proprietary graphics drivers as offered in third-party PPAs is needed, **but** with the safety catch of being able to roll back to the stable archive version if needed.

|

||||

|

||||

### ‘Demand for fresh drivers is hard to ignore’ ###

|

||||

|

||||

> ‘A solution that lets Ubuntu users get the latest hardware drivers safely is coming.’

|

||||

|

||||

‘The demand for fresh drivers in a fast developing market is becoming hard to ignore, users are going to want the latest upstream has to offer,’ Castro explains in an e-mail to the Ubuntu Desktop mailing list.

|

||||

|

||||